Predict Home Prices via Machine Learning in Python

The skills demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Links: Github | Kaggle Challenge | Presentation

Introduction

If you are a real estate investor or potential buyer of a home, how do you determine if the asking price is above or below that home’s true market value? Most of us would simply predict by looking at other homes with similar features: location, size, and age, the number of bedrooms and bathrooms, etc.

This process is relatively easy and straightforward for one home. But what if you're looking to purchase many homes, or you want to predict prices for homes with very different features? Or perhaps you can't find a similar house on which to base your comparison. Worse, accurately predicting true value becomes increasingly complex as you add more homes and features. To address this problem, we used machine learning in Python.

The Data

The data we used to develop our machine learning model comes from the Kaggle: Advanced Regression Techniques challenge. This data focuses strictly on residential homes in Ames, Iowa, and was split into two sections - a “train” dataset, which included houses with price data attached, and a “test” dataset, made up of a separate group of houses without pricing information.

The Challenge

We were tasked with predicting the final price of the 1,459 homes in the “test” dataset by training a machine learning model on the “train” dataset. The overall accuracy of our predictions was measured by Kaggle, which had the true prices for the homes in the “test” dataset. Below, we lay out how we processed the data, how we selected our best machine learning model, and how accurate our predictions were when compared to the actual home values.

Our Project Workflow

- Exploratory Data Analysis

- Data Preprocessing

- Modeling & Results

- Final Takeaways

Exploratory Data Analysis

As with any data-driven project, it was crucial that we examine the provided data before making any adjustments, since the results of exploratory analysis inform how the datasets should be processed before applying machine learning techniques. The “train” set contained 1,460 observations with 79 predictor variables (excluding the ‘SalePrice’ and ‘ID’ variables), of which 28 were numeric and 51 were categorical. The “test” set contained 1,459 observations and the same 79 predictor variables. Below is a summary of the data’s key characteristics:

Checking the Target Variable: SalePrice

- After visualizing the histogram and probability plot of the SalePrice variable from the train set, it became clear that home prices were positively (right) skewed and exhibited a non-linear trend (see below). Therefore, we knew that we would need to apply a transformation to this variable to increase the effectiveness of the regression models we intended to employ.
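As a rough illustration of the kind of transformation this points to, the sketch below applies a log transform to a synthetic, right-skewed price column. The post does not say which transformation was used, so `np.log1p` is an assumption here, as are the generated data and the `LogSalePrice` column name.

```python
import numpy as np
import pandas as pd

# Synthetic, right-skewed prices standing in for the real SalePrice column.
rng = np.random.default_rng(0)
train = pd.DataFrame({"SalePrice": rng.lognormal(mean=12.0, sigma=0.4, size=1000)})

skew_before = train["SalePrice"].skew()

# Assumed transformation: log1p(x) = log(1 + x).
# np.expm1 inverts it when converting predictions back to dollars.
train["LogSalePrice"] = np.log1p(train["SalePrice"])
skew_after = train["LogSalePrice"].skew()

print(f"skew before: {skew_before:.2f}, after: {skew_after:.2f}")
```

Models are then fit on the transformed target, and predictions are mapped back with `np.expm1` before submission.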

Examining Variable Distribution

- We also checked for skewness among our input variables, to see if there were any variables that might be unevenly represented within the data. Below, we demonstrate the variables that exhibited the most skewness.
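One way to run this kind of check is to compute the sample skewness of every numeric column and rank the results. The sketch below does that on made-up data; the column values are synthetic and only the column names echo the Ames dataset.

```python
import numpy as np
import pandas as pd

# Made-up data: one heavily skewed column, one roughly symmetric, one categorical.
rng = np.random.default_rng(1)
df = pd.DataFrame({
    "LotArea": rng.lognormal(9.0, 0.8, 500),
    "YearBuilt": rng.integers(1900, 2010, 500),
    "Street": rng.choice(["Pave", "Grvl"], 500),
})

# Skewness is only defined for numeric columns, so drop the rest first,
# then rank by absolute skewness (largest first).
skewness = (
    df.select_dtypes(include="number")
      .skew()
      .sort_values(key=abs, ascending=False)
)
print(skewness)
```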

Looking for Missingness

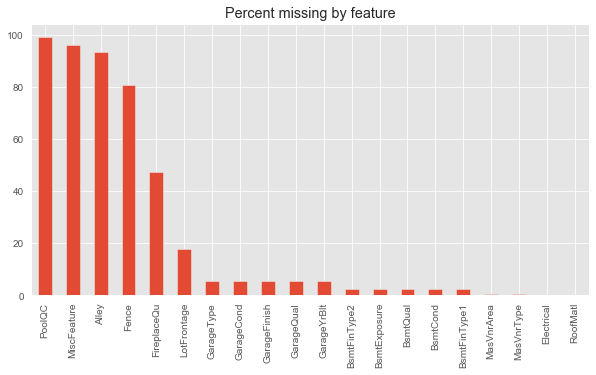

- Both the train and test datasets were also incomplete, in the sense that many observations had missing values for one or more feature variables. In fact, four variables had over 80% of their data missing, as can be seen from the graph of variables with missing values. We also found that more variables had missing values in the test set than in the train set. Further below, we discuss our approach to imputing missing data.
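The share of missing values per variable can be computed directly with pandas. The following is a minimal sketch on a five-row toy frame (the values are invented), not the analysis code behind the graph.

```python
import numpy as np
import pandas as pd

# Toy frame: PoolQC is mostly missing, LotFrontage has one gap, LotArea is complete.
df = pd.DataFrame({
    "PoolQC": [np.nan, np.nan, np.nan, np.nan, "Gd"],
    "LotFrontage": [65.0, np.nan, 80.0, 70.0, 60.0],
    "LotArea": [8450, 9600, 11250, 9550, 14260],
})

# isna() gives a boolean frame; the column mean is the fraction of missing rows.
missing_share = df.isna().mean()

# Keep only columns that actually have gaps, worst first.
missing_share = missing_share[missing_share > 0].sort_values(ascending=False)
print(missing_share)
```

Running the same computation on the train and test frames separately makes the difference in missingness between the two sets easy to compare.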

Identifying Outliers

- To check for linearity, a condition of linear regression techniques, we looked at scatterplots of various variables against the target variable SalePrice. When we did so, we identified two outliers in the plot of SalePrice against GrLivArea (living area), as seen below. Because these outliers deviate substantially from the general trend and drastically change the estimated linear relationship between these two variables, we knew we needed to address these two points.

Examining Multicollinearity

- Lastly, we checked for variables with high correlation with each other (multicollinearity). We did so because one of the required assumptions for linear regression models involving multiple variables is that there is little-to-no multicollinearity between feature variables. However, our analysis revealed that a handful of our feature variables could pose a multicollinearity problem, including:

- First Floor (Sq.Ft.) & Total Basement (Sq.Ft.)

- Total Rooms Above Ground & General Living Area

- Garage Area (Sq.Ft.) & Garage Cars (One vs. Two)

- House Year Built & Garage Year Built
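A common way to surface pairs like these is to scan the absolute correlation matrix for values above a cutoff. The sketch below uses synthetic data and a cutoff of 0.8; both are assumptions for illustration, since the post does not state the threshold that was applied.

```python
import numpy as np
import pandas as pd

# Synthetic data in which GarageArea is driven almost entirely by GarageCars.
rng = np.random.default_rng(2)
n = 300
garage_cars = rng.integers(0, 4, n)
df = pd.DataFrame({
    "GarageCars": garage_cars,
    "GarageArea": garage_cars * 250 + rng.normal(0, 40, n),
    "LotArea": rng.normal(10000, 2000, n),
})

corr = df.corr().abs()

# Keep only the strict upper triangle so each pair appears once
# and the diagonal (always 1.0) is excluded.
upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))

pairs = upper.stack()
high_pairs = pairs[pairs > 0.8].sort_values(ascending=False)  # assumed cutoff
print(high_pairs)
```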

Data Preprocessing

Overall, we implemented the following pipeline for our data processing:

- Import raw data

- Remove outliers in training data

- Impute data:

  - Impute pseudo-missing values

  - Impute true-missing values

- Re-engineer categorical features as necessary

- Add new feature variables

- Dummify categorical feature variables

- Remove unnecessary feature variables

One quality of the data that we had to account for is that many more feature variables had missing values in the test dataset than in the training dataset. As a result, our group decided on a function-based data processing pipeline, so that the end result would be a single function that correctly processes either the training or the test dataset.

Therefore, the detailed explanation below is organized first by type of variable (pseudo-missing, true-missing, categorical, etc.) and then by specific variable, rather than by individual dataset.

Outliers

- An examination of the distribution of our feature space revealed that there were two clear outliers in the relation between SalePrice and GrLivArea (a representation of the overall living area of the house). While the overall relational trend between these two variables is positive and linear, there were two observations with a living area greater than 4000 square feet but a sale price less than $300,000, which had negative effects on the overall trend between the two variables.

- Because only two of the 1,460 observations in our training data were affected, we judged it more efficient to remove those observations than to attempt to transform the GrLivArea variable to reduce the outlier-induced skew.
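The rule described above (living area above 4,000 square feet with a sale price below $300,000) translates into a simple boolean filter. The rows in this sketch are illustrative stand-ins for the training data, and `train_clean` is a hypothetical name.

```python
import pandas as pd

# Illustrative rows; two of them match the outlier rule described in the text.
train = pd.DataFrame({
    "GrLivArea": [1710, 1262, 4676, 5642, 2198],
    "SalePrice": [208500, 181500, 184750, 160000, 250000],
})

# Very large living area combined with an unusually low sale price.
is_outlier = (train["GrLivArea"] > 4000) & (train["SalePrice"] < 300000)

# Drop the flagged rows from the training data only.
train_clean = train.loc[~is_outlier].reset_index(drop=True)
print(len(train), "->", len(train_clean))
```

The filter depends on SalePrice, so it can only be applied to the training set; the test set has no prices to filter on.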

Data Imputation

Pseudo-Missingness

- There were a number of feature variables that contained missing values that did not, in fact, represent missing data. Instead of an incomplete observation, missing values in these features represented a lack of the element in question on the property. Our group termed those features with this quality “pseudo-missing.”

- The general philosophy for a given variable X (where X represents a housing feature such as a pool, a fireplace, etc.) was to impute missing values as “No X”.

- The fourteen feature variables with pseudo-missingness were: Alley, BsmtCond, BsmtQual, BsmtFinType1, Fence, Fireplace, GarageCond, GarageFinish, GarageQual, GarageType, GarageYrBlt, MasVnrType, MiscFeature, and PoolQC.
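The "No X" rule can be sketched as a loop over the affected columns. Only three of the fourteen columns are shown, the rows are made up, and using the column name itself as X is an assumption about the labels. A numeric column such as GarageYrBlt would need a numeric placeholder instead, which this sketch does not cover.

```python
import numpy as np
import pandas as pd

# Made-up rows; NaN here means the house does not have the feature.
df = pd.DataFrame({
    "Alley": [np.nan, "Grvl", np.nan],
    "Fence": ["MnPrv", np.nan, np.nan],
    "PoolQC": [np.nan, np.nan, "Ex"],
})

# Subset of the pseudo-missing categorical columns, for illustration.
pseudo_missing = ["Alley", "Fence", "PoolQC"]

for col in pseudo_missing:
    # Replace NaN with an explicit category, e.g. "No Alley".
    df[col] = df[col].fillna(f"No {col}")

print(df)
```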

Actual Missingness

- In addition to the feature variables with pseudo-missingness, there were five features where missing values indicated true missing data. Those variables were: Electrical, MasVnrArea, LotFrontage, BsmtExposure, and BsmtFinType2. Because many of our machine learning models are unable to handle missing data, it was necessary for us to impute the missing values for these feature variables from the data to which we did have access.

- By and large, the variables were imputed using mode imputation. When doing so, feature variables were grouped by other, relevant features (for example, MasVnrArea was grouped by Neighborhood and YearBuilt) to provide a more accurate and granular imputation.

- Additionally, a handful of variables related to garages and basements exhibited both pseudo-missingness and true missingness. Those variables were first processed to fill any pseudo-missingness. This was achieved by imputing rows in which all features related to the relevant housing amenity (garage or basement) were missing, using the same methodology described in the pseudo-missingness section above. Once that was taken care of, the remaining missing values were imputed using the appropriate mode imputation.
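Grouped mode imputation can be sketched with `groupby(...).transform(...)`. The example below fills Electrical by Neighborhood on invented rows; that grouping, the `mode_or_nan` helper, and the fallback to the overall mode when a group has no observed values are all assumptions for illustration.

```python
import numpy as np
import pandas as pd

# Invented rows; the Veenker group has no observed Electrical value at all.
df = pd.DataFrame({
    "Neighborhood": ["NAmes", "NAmes", "NAmes", "OldTown", "OldTown", "Veenker"],
    "Electrical": ["SBrkr", "SBrkr", np.nan, "FuseA", np.nan, np.nan],
})

def mode_or_nan(s):
    """Most frequent non-missing value in the group, or NaN if there is none."""
    m = s.mode()  # mode() ignores NaN by default
    return m.iloc[0] if not m.empty else np.nan

# Per-group mode, broadcast back to the original row order.
group_mode = df.groupby("Neighborhood")["Electrical"].transform(mode_or_nan)

# Assumed fallback for groups with no observed values.
overall_mode = df["Electrical"].mode().iloc[0]

df["Electrical"] = df["Electrical"].fillna(group_mode).fillna(overall_mode)
print(df)
```

To avoid leaking information, the group modes would normally be learned from the training data and then reused when processing the test data.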

Categorical Variables

- The distribution of a large number of categorical variables was such that many of the features were dominated by a single value, with the remaining values being more sparsely populated. Because this sparsity resulted in a lack of representative data, and in an attempt to reduce the feature space expansion resulting from the need to dummify categorical features, we consolidated those categorical variables to follow the general pattern of “Dominant Class(es), Other.”

- Feature variables engineered in this way were: Exterior1st, RoofMatl, RoofStyle, Condition1, LotShape, Functional, Electrical, Heating, Foundation, and SaleType.

- Additionally, some categorical variables represented an ordinal ranking - for instance, there were numerous variables related to the quality or condition of some housing feature, which were represented by categorical strings (such as “Excellent,” “Fair,” etc.). Because quality and condition are inherently ranked, we converted these variables to ordinal variables, to reduce the need for dummification.
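Both re-engineering steps can be sketched in a few lines. The 20% share used to decide what counts as a dominant class and the 1-to-5 quality scale are assumptions for illustration; the post does not give the exact cutoffs or encodings.

```python
import pandas as pd

# Made-up rows: RoofMatl is dominated by one class, ExterQual is a quality rating.
df = pd.DataFrame({
    "RoofMatl": ["CompShg"] * 8 + ["Tar&Grv", "WdShake"],
    "ExterQual": ["TA", "Gd", "TA", "Ex", "Fa", "TA", "Gd", "TA", "Po", "Gd"],
})

# 1) Consolidate sparse classes into "Other".
share = df["RoofMatl"].value_counts(normalize=True)
dominant = share[share >= 0.20].index  # assumed cutoff
df["RoofMatl"] = df["RoofMatl"].where(df["RoofMatl"].isin(dominant), "Other")

# 2) Map ranked quality labels to integers (assumed scale, Poor=1 ... Excellent=5).
quality_scale = {"Po": 1, "Fa": 2, "TA": 3, "Gd": 4, "Ex": 5}
df["ExterQual"] = df["ExterQual"].map(quality_scale)

print(df)
```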

New Feature Variables

- To reduce the size of the feature space without losing important data, the following feature variables were combined to create new feature variables:

- 1stFlrSF + 2ndFlrSF + TotalBsmtSF -> TotalSF

- BsmtFullBath + FullBath -> TotalFullBath

- BsmtHalfBath + HalfBath -> TotalHalfBath

- Additionally, new categorical feature variables were created to indicate whether a given home had a garage (IsGarage) or a pool (IsPool).
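The combinations listed above are plain column sums. In this sketch the two rows are invented, and deriving IsGarage and IsPool from GarageArea and PoolArea being greater than zero is an assumption, since the post does not say how the indicators were built.

```python
import pandas as pd

# Two invented houses with the source columns needed for the new features.
df = pd.DataFrame({
    "1stFlrSF": [856, 1262], "2ndFlrSF": [854, 0], "TotalBsmtSF": [856, 1262],
    "BsmtFullBath": [1, 0], "FullBath": [2, 2],
    "BsmtHalfBath": [0, 1], "HalfBath": [1, 0],
    "GarageArea": [548, 0], "PoolArea": [0, 512],
})

# Combined features, as listed in the text.
df["TotalSF"] = df["1stFlrSF"] + df["2ndFlrSF"] + df["TotalBsmtSF"]
df["TotalFullBath"] = df["BsmtFullBath"] + df["FullBath"]
df["TotalHalfBath"] = df["BsmtHalfBath"] + df["HalfBath"]

# Indicator features (assumed definition: area greater than zero).
df["IsGarage"] = (df["GarageArea"] > 0).astype(int)
df["IsPool"] = (df["PoolArea"] > 0).astype(int)

print(df[["TotalSF", "TotalFullBath", "TotalHalfBath", "IsGarage", "IsPool"]])
```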

Dummify Categorical Features

- All categorical feature variables were dummified using One Hot Encoding for use in linear regression-based machine learning models.
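One practical detail when one-hot encoding train and test separately is that a category may appear in only one of them, which leaves the two frames with different columns. The sketch below uses `pd.get_dummies` (an assumed choice of encoder) on toy frames and realigns the test columns to the training columns.

```python
import pandas as pd

# Toy frames: the "IR1" category appears only in the training data.
train = pd.DataFrame({"LotShape": ["Reg", "IR1", "Reg"], "TotalSF": [2566, 2524, 2706]})
test = pd.DataFrame({"LotShape": ["Reg", "Reg"], "TotalSF": [1778, 2658]})

train_d = pd.get_dummies(train, columns=["LotShape"], dtype=int)
test_d = pd.get_dummies(test, columns=["LotShape"], dtype=int)

# Give the test frame exactly the training columns, in the same order.
# Dummy columns absent from the test data are filled with 0.
test_d = test_d.reindex(columns=train_d.columns, fill_value=0)

print(list(test_d.columns))
```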

Removing Unnecessary Features

- Some feature variables were rendered unnecessary by the engineering above. Others were so highly correlated with other variables that including them violated core assumptions of linear models, and yet others had too few observations to be statistically useful.

Target Variable: SalePrice

- Dropped due to no useful information: Id

- Dropped due to re-engineering: TotalBsmtSF, 1stFlrSF, 2ndFlrSF, BsmtFullBath, BsmtHalfBath, FullBath, HalfBath

- Dropped for correlation reasons: BsmtFinSF1, BsmtFinSF2, BsmtUnfSF, GrLivArea, GarageYrBlt, GarageCars

- Dropped for lack of observations: LowQualFinSF, PoolArea

Modeling & Results

After handling all missingness and generating our desired features, we moved on to machine learning-driven modeling. Based on our research, we decided to approach modeling as follows:

- Train various baseline models (or Level 1 models) to predict the SalePrice on the training set.

- Predict the SalePrice on the training set using baseline models.

- Train a meta model (or a Level 2 model) to predict the SalePrice using the baseline models' predictions from the previous step.

Modeling Methodology Visually Explained

You may notice that this approach essentially represents a stacked model. On a technical level, we used sklearn’s StackingRegressor to achieve our modeling objective.
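For readers unfamiliar with `StackingRegressor`, here is a minimal sketch of how such a model is assembled. The synthetic data, the choice of two base models, and every hyperparameter value are placeholders, not the configuration used in this project.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor, StackingRegressor
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import cross_val_score

# Synthetic regression data standing in for the processed housing features.
X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)

stack = StackingRegressor(
    # Level 1: baseline models (placeholder hyperparameters).
    estimators=[
        ("ridge", Ridge(alpha=1.0)),
        ("gbr", GradientBoostingRegressor(n_estimators=50, random_state=0)),
    ],
    # Level 2: meta model trained on the Level 1 predictions.
    final_estimator=Lasso(alpha=0.01),
    cv=5,
)

# Cross-validated RMSE of the whole stack (sklearn reports it negated).
scores = cross_val_score(stack, X, y, cv=3, scoring="neg_root_mean_squared_error")
rmse = -scores.mean()
print(f"stacked CV RMSE: {rmse:.3f}")

stack.fit(X, y)
```

Note that `StackingRegressor` trains the meta model on out-of-fold predictions from the base models (controlled by `cv`) rather than on predictions for rows the base models have already seen, which limits leakage from Level 1 into Level 2.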

Level 1 Models

The various Level 1 Models we generated can be grouped into two categories: linear models and tree-based models. Each model was first tuned using sklearn’s GridSearchCV to find the optimal hyperparameters on the train dataset, and then trained on the overall train dataset. Below is a summary of the cross-validated root mean squared errors (RMSE, our evaluation metric):

Linear Models:

- Ridge: Cross Validation Score = 0.11513

- Lasso: Cross Validation Score = 0.11355

- Elastic Net: Cross Validation Score = 0.11355

Tree Based Models:

- CatBoost: Cross Validation Score = 0.11291

- Gradient Boost: Cross Validation Score = 0.11211

- LightGBM: Cross Validation Score = 0.11573

Of particular note is that when tuning our Elastic Net model, we found that the optimal rho (the parameter that controls the weighted balance of the Ridge and Lasso penalties in the Elastic Net, exposed as l1_ratio in sklearn) was 1, meaning that the optimal Elastic Net model was effectively the Lasso model. This is why the two models share the same cross-validation score.
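The tuning step and the Elastic Net observation can both be illustrated with a small grid search. The data here are synthetic and the parameter grid is a placeholder, so the values it selects say nothing about the housing data; the second half simply checks that an Elastic Net with `l1_ratio=1` fits the same coefficients as a Lasso with the same `alpha`.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet, Lasso
from sklearn.model_selection import GridSearchCV

# Synthetic data with only a few informative features.
X, y = make_regression(
    n_samples=200, n_features=20, n_informative=5, noise=5.0, random_state=0
)

# Placeholder grid; the grid used in the project is not given in the post.
grid = GridSearchCV(
    ElasticNet(max_iter=10000),
    param_grid={"alpha": [0.01, 0.1, 1.0], "l1_ratio": [0.2, 0.5, 0.8, 1.0]},
    scoring="neg_root_mean_squared_error",
    cv=5,
)
grid.fit(X, y)
best_rmse = -grid.best_score_
print(grid.best_params_, f"CV RMSE: {best_rmse:.3f}")

# With l1_ratio=1 the penalty is pure L1, so Elastic Net reduces to Lasso.
enet = ElasticNet(alpha=0.1, l1_ratio=1.0, max_iter=10000).fit(X, y)
lasso = Lasso(alpha=0.1, max_iter=10000).fit(X, y)
print(np.allclose(enet.coef_, lasso.coef_))
```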

After training the Level 1 models, we visualized the models’ predicted prices against the actual prices provided by the dataset, as well as the most important feature variables that contributed to each model’s predictions. As can be seen, although the two different classes of models (linear and tree) had different important features, within each class the models had similar important features.

Additionally, it is important to recognize that the plots for predicted price vs. actual price visually demonstrate that the tree-based models are likely overfitting the training data, a general weakness of tree-based models.

Model 1: Ridge

Model 2: Lasso

Model 3: Elastic Net

Model 4: CatBoost

Model 5: Gradient Boost

Model 6: LightGBM

Level 2 Models

For the Level 2 Model, we first tried simply averaging all the predictions from the Level 1 models. We then tried a more complicated model for our meta model. We elected to use a ‘Lasso’ model as our meta model for our second stacking regressor. The table below showcases the cross validated RMSE scores for our Level 1 models as well as both our stacked regressor Level 2 models. Additionally, the table includes the Kaggle scores (the errors between our final predicted prices and the actual prices of the test dataset) for each model.

As can be seen, even the simple average stacked model performed better than any single Level 1 model, with an RMSE score of 0.10921 (the best Level 1 model, Gradient Boost, scored 0.11211). Moreover, our final model, the Lasso stacked regressor, achieved the best cross-validation score of all: 0.10895.
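The simple-average variant needs no meta model at all: each Level 1 model predicts, and the predictions are averaged row by row. The sketch below shows the mechanics on synthetic data with three placeholder models and a hypothetical `rmse` helper; it is not meant to reproduce the scores above.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import train_test_split

def rmse(y_true, y_pred):
    """Root mean squared error between two arrays."""
    return float(np.sqrt(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2)))

# Synthetic data and a hold-out split standing in for the real train data.
X, y = make_regression(n_samples=300, n_features=10, noise=10.0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

# Placeholder Level 1 models.
models = [Ridge(alpha=1.0), Lasso(alpha=0.1), GradientBoostingRegressor(random_state=0)]

# One column of hold-out predictions per model.
preds = np.column_stack([m.fit(X_tr, y_tr).predict(X_te) for m in models])

# Level 2 by simple averaging: the mean across models for each row.
avg_pred = preds.mean(axis=1)

for model, col in zip(models, preds.T):
    print(type(model).__name__, round(rmse(y_te, col), 3))
print("Average", round(rmse(y_te, avg_pred), 3))
```

If the models were trained on a log-transformed price, the RMSE computed this way is already on the log scale, which matches how Kaggle scores this competition.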

Final Takeaways

To conclude, our approach, from data exploration through modeling, resulted in a highly accurate predictive model. Overall, our model placed in the Top 14% of Kaggle submissions and we were happy with our result.