Efficient Supply Allocation for a Ride-Hailing App

Introduction:

In the competitive world of ride-hailing services, efficiently allocating supply (drivers) to meet the ever-changing demand (riders) is crucial for the success of the platform. This blog post explores data-driven solutions that actively guide drivers towards areas with higher expected demand, optimizing both rider experience and driver earnings. We will discuss the problem statement, propose a solution, build a baseline model, design the deployment process, outline communication strategies for driver recommendations, and suggest an experiment to validate our solution in live operations.

Problem Statement:

Our primary challenge is to allocate supply efficiently so that rider demand is met while drivers receive stable earnings. Understanding how demand fluctuates over time and space is the first step towards understanding supply dynamics.

- Explore the Data and Suggest a Solution: We analyze historical data to identify patterns and suggest a solution that guides drivers towards areas with higher expected demand at a given time and location. This involves examining rider pick-up/drop-off locations, timestamps, and other relevant factors. With this information, we build a predictive model that estimates future demand and informs where drivers should be positioned.

- Build a Baseline Model: We develop a baseline model as an initial version of the predictive model and a starting point for further improvements. The model can consider features such as time of day, day of the week, weather conditions, local events, and historical demand patterns. Candidate algorithms include regression, time series analysis, and deep learning models trained on the collected data.

- Design and Deploy the Model: We design and deploy the model in a scalable and efficient manner. This involves setting up a data pipeline that continuously collects and preprocesses new data, retraining the model regularly so it adapts to changing patterns, and integrating it into the ride-hailing app's backend infrastructure. Monitoring and evaluation mechanisms track the model's performance so that adjustments can be made when needed.

- Communicate Model Recommendations to Drivers: To guide drivers towards areas with high demand, clear and concise communication is vital. The ride-hailing app can display real-time heat maps or zones indicating areas with expected high demand. Drivers can receive notifications, in-app messages, or alerts suggesting where to position themselves. Additionally, the app can offer incentives or bonuses to encourage drivers to move to high-demand areas.

- Experiment Design for Validation: Validating the solution in live operations requires a well-designed experiment. A possible approach is to divide the city or region into control and experimental groups. The control group follows the existing allocation strategy, while the experimental group uses the new predictive model for driver deployment. Key metrics to monitor include rider waiting times, driver earnings, and overall user satisfaction. Statistical analysis then compares the experimental group against the control group to determine whether the proposed solution is effective.
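The comparison in the experiment step could be sketched as a two-sample test on a per-zone metric. This is only an illustration: the function name `compare_groups`, the choice of Welch's t-test from SciPy, and the sample numbers are assumptions, not results from the project.

```python
from statistics import mean

from scipy import stats


def compare_groups(control, treatment, alpha=0.05):
    """Compare one metric (e.g. mean rider wait in minutes per zone) between groups.

    Uses Welch's t-test, which does not assume equal variances.
    """
    result = stats.ttest_ind(treatment, control, equal_var=False)
    return {
        "control_mean": mean(control),
        "treatment_mean": mean(treatment),
        "difference": mean(treatment) - mean(control),
        "p_value": float(result.pvalue),
        "significant": bool(result.pvalue < alpha),
    }


# Illustrative numbers only, not real measurements.
control_wait = [6.1, 5.8, 6.4, 6.0, 6.3, 5.9]
treatment_wait = [5.2, 5.0, 5.5, 5.1, 5.4, 4.9]
summary = compare_groups(control_wait, treatment_wait)
```

A negative `difference` with a small `p_value` would suggest shorter waits in the experimental zones. Because zones differ in baseline demand, a real analysis would likely also need to account for pre-experiment differences between the groups.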

Exploratory Data Analysis (EDA):

The preprocessing function is applied to the dataframe: it sorts the rows chronologically and drops rows with missing values. Date and time components are extracted from the "start_time" column, and orders are filtered so that only same-city rides remain. Distance and cost per kilometer are then calculated for each ride. Locations are approximated to a coarser grid so that nearby points fall into the same group. Finally, the data is aggregated by location, day of the week, and time, giving the number of orders and the average ride value for each group. The resulting dataframe is ready for further analysis of ride-hailing dynamics.
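A minimal sketch of such a preprocessing step is shown here. Only "start_time" comes from the description above. The other column names ("start_lat", "start_lng", "end_lat", "end_lng", "ride_value"), the haversine distance, and rounding coordinates to two decimals are assumptions made for illustration. The same-city filter is left out because its criterion is not specified.

```python
import numpy as np
import pandas as pd


def haversine_km(lat1, lng1, lat2, lng2):
    """Great-circle distance in kilometres between coordinate columns."""
    lat1, lng1, lat2, lng2 = map(np.radians, (lat1, lng1, lat2, lng2))
    a = (np.sin((lat2 - lat1) / 2) ** 2
         + np.cos(lat1) * np.cos(lat2) * np.sin((lng2 - lng1) / 2) ** 2)
    return 6371.0 * 2 * np.arcsin(np.sqrt(a))


def preprocess(df, decimals=2):
    """Clean ride records and aggregate them per location, weekday and hour."""
    df = df.dropna().copy()
    df["start_time"] = pd.to_datetime(df["start_time"])
    df = df.sort_values("start_time")

    # Date and time components.
    df["day_of_week"] = df["start_time"].dt.dayofweek  # 0 = Monday
    df["time"] = df["start_time"].dt.hour

    # Distance and cost per kilometre (zero-length rides are dropped).
    df["distance_km"] = haversine_km(
        df["start_lat"], df["start_lng"], df["end_lat"], df["end_lng"]
    )
    df = df[df["distance_km"] > 0].copy()
    df["cost_per_km"] = df["ride_value"] / df["distance_km"]

    # Approximate start locations by rounding (assumed grid size).
    df["lat_bin"] = df["start_lat"].round(decimals)
    df["lng_bin"] = df["start_lng"].round(decimals)

    grouped = df.groupby(["lat_bin", "lng_bin", "day_of_week", "time"])
    return grouped.agg(
        n_orders=("ride_value", "size"),
        avg_ride_value=("ride_value", "mean"),
        avg_cost_per_km=("cost_per_km", "mean"),
    ).reset_index()
```

Rounding to two decimals gives cells of roughly one kilometre in latitude. The right cell size depends on the city and on how many rides each cell needs for a stable average.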

Visualization:

Our objective is to visually depict the starting and terminal locations of drivers and riders for each weekday.

To accomplish this, we can create separate plots or maps for each weekday, where the starting and terminal locations are represented as points on the map. By assigning different colors or markers to drivers and riders, we can easily differentiate between them.

These visualizations will enable us to observe any spatial patterns or clustering of starting and terminal locations for drivers and riders on different weekdays. This information can be valuable for understanding the demand-supply dynamics and optimizing driver allocation based on the specific patterns observed on each weekday.
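One way to draw such per-weekday maps is a row of scatter panels, as in the sketch below. It colours start and end points rather than drivers and riders, since separate driver positions may not be available. That substitution, the function name, and the coordinate column names are assumptions.

```python
import matplotlib.pyplot as plt

WEEKDAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def plot_locations_by_weekday(df):
    """Draw one scatter panel per weekday with start and end points."""
    fig, axes = plt.subplots(1, 7, figsize=(21, 3), sharex=True, sharey=True)
    for day, ax in enumerate(axes):
        day_df = df[df["day_of_week"] == day]  # 0 = Monday
        ax.scatter(day_df["start_lng"], day_df["start_lat"],
                   s=4, alpha=0.5, label="start")
        ax.scatter(day_df["end_lng"], day_df["end_lat"],
                   s=4, alpha=0.5, label="end")
        ax.set_title(WEEKDAYS[day])
    axes[0].set_ylabel("latitude")
    axes[0].legend()
    return fig
```

Shared axes keep the panels comparable, so a cluster that appears only on weekends stands out at a glance.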

Time Series Visualization:

To visualize the time series data, we can utilize the provided "plotTimeSeries1" function. This function utilizes the Pandas and Matplotlib libraries for plotting.

The function first calculates the average ride value per hour by grouping the data based on the "day_and_hour" column. This average value is merged back into the dataframe.

Next, the function calculates the average ride value per weekday and hour by grouping the data based on the "day_of_week" and "time" columns. This average value is also merged back into the dataframe.

Using the Matplotlib library, a line plot is created with the x-axis representing the "day_and_hour" and the y-axis representing the average and individual ride values. Two lines are plotted: one for the average value and one for the individual value.

The plot is displayed with a title, an x-axis label ("day_and_hour"), a y-axis label ("Value"), and a legend that distinguishes the two lines.

Comparing the average value with the individual value over time makes trends, recurring patterns, and fluctuations in ride values easy to spot.
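The steps described for the plotting function could look roughly like the sketch below. It is a reconstruction from the description, not the original "plotTimeSeries1" code. The value column name "ride_value" is an assumption, and `transform("mean")` is used as a shortcut equivalent to grouping and merging the averages back.

```python
import matplotlib.pyplot as plt


def plot_time_series(df, value_col="ride_value"):
    """Plot per-hour ride values against the weekday/hour average.

    Expects the columns "day_and_hour", "day_of_week" and "time".
    """
    df = df.copy()
    # Average ride value for each concrete hour, attached to every row.
    df["hourly_value"] = df.groupby("day_and_hour")[value_col].transform("mean")
    # Average ride value for each (weekday, hour) slot across all weeks.
    df["slot_average"] = (
        df.groupby(["day_of_week", "time"])[value_col].transform("mean")
    )

    # One point per hour, in chronological order.
    hourly = df.drop_duplicates("day_and_hour").sort_values("day_and_hour")
    fig, ax = plt.subplots(figsize=(12, 4))
    ax.plot(hourly["day_and_hour"], hourly["slot_average"], label="average value")
    ax.plot(hourly["day_and_hour"], hourly["hourly_value"], label="individual value")
    ax.set_title("Ride value over time")
    ax.set_xlabel("day_and_hour")
    ax.set_ylabel("Value")
    ax.legend()
    return fig, df
```

Returning the figure and the enriched dataframe, rather than calling `plt.show()` inside the function, leaves it to the caller to display or save the plot.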

The time series analysis reveals clear seasonality by time of day and by weekday. Averaging over these time slots also smooths out much of the random fluctuation in the individual values.

This indicates that we can make reasonable predictions about the number of orders or ride values at a given time. We can utilize the average number of orders per time or the average ride value per time to make these predictions.

By leveraging the patterns observed in the time series data, we can make informed forecasts and optimize decision-making regarding driver allocation, supply management, and resource planning.
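Predicting from the average number of orders per time slot can be sketched as a simple lookup table. The column names ("location_id", "day_of_week", "time", "n_orders"), the function names, and the sample rows are hypothetical. The input is assumed to hold one row per location and observed hour.

```python
import pandas as pd


def fit_seasonal_average(history):
    """Average orders per (location, weekday, hour) over the history."""
    return (
        history.groupby(["location_id", "day_of_week", "time"])["n_orders"]
        .mean()
        .to_dict()
    )


def predict_orders(profile, location_id, day_of_week, time, default=0.0):
    """Look up the expected orders for a slot; unseen slots get `default`."""
    return float(profile.get((location_id, day_of_week, time), default))


# Illustrative history: two Mondays and one Tuesday at 08:00 for location "A".
history = pd.DataFrame({
    "location_id": ["A", "A", "A", "B"],
    "day_of_week": [0, 0, 1, 0],
    "time": [8, 8, 8, 8],
    "n_orders": [10, 14, 6, 3],
})
profile = fit_seasonal_average(history)
monday_8am = predict_orders(profile, "A", 0, 8)  # mean of 10 and 14
```

A table like this is also a useful yardstick: a more complex model should beat it on held-out weeks before it is worth deploying.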

Exploring data and optimizing driver allocation plan:

Our plan is to develop a machine learning model that predicts the demand for drivers at specific times and locations. We will explore two approaches: the first frames the task as supervised demand forecasting, and the second uses unsupervised clustering.

Approach 1: Modeling the ride-hailing service state - We will create a model that considers the time of day, day of the week, and location to recommend drivers to move to areas with a higher expected demand compared to the available number of drivers. This approach aims to optimize driver allocation based on the expected number of riders at each location.
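The core of Approach 1 can be sketched as ranking locations by the gap between expected riders and available drivers. The function name and the inputs are hypothetical. In particular, `available_drivers` assumes a live count of idle drivers per location, which the historical data may not provide.

```python
import pandas as pd


def recommend_zones(expected_riders, available_drivers, top_n=3):
    """Rank locations by expected riders minus available drivers.

    Both inputs are Series indexed by location id for one time slot.
    Locations missing from either input are treated as zero.
    """
    gap = expected_riders.sub(available_drivers, fill_value=0)
    shortage = gap[gap > 0].sort_values(ascending=False)
    return shortage.head(top_n)


# Illustrative values for a single weekday/hour slot.
expected = pd.Series({"A": 12, "B": 5, "C": 9})
drivers = pd.Series({"A": 4, "B": 7, "C": 8})
recommendations = recommend_zones(expected, drivers)
```

Here location "A" has the largest shortage and "B" is oversupplied, so only "A" and "C" are returned.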

Approach 2: Clustering mobility patterns - We will utilize clustering techniques to identify patterns of mobility within the ride-hailing service. This will help us forecast demand for each cluster and guide drivers accordingly. By understanding and leveraging these patterns, we can make more accurate predictions about demand in different areas.

To achieve this, we need to establish a stable average number of riders at each location, per time, and per weekday. We will approximate the starting locations and times, grouping nearby points and time slots so that each group contains enough rides for meaningful analysis. However, limitations exist, such as the absence of driver location data at specific times and the need to incorporate road networks to determine the actual shortest distance for drivers.

By implementing these approaches, we aim to improve the efficiency of driver allocation, optimize resource utilization, and enhance the overall ride-hailing experience for both drivers and riders.

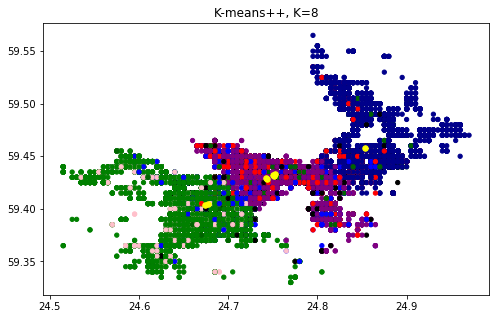

(1) Exploring clustering methods and dimensionality reduction:

At the macro level, we can employ dummy encoding of categorical variables and Principal Component Analysis (PCA) to reduce dimensionality. This will enable us to identify unique clusters using clustering algorithms such as K-means, DBSCAN, or Hierarchical Clustering Analysis (HCA). A Multi-Layer Perceptron (MLP) is a supervised neural network rather than a clustering algorithm, so it is better considered as a candidate for demand prediction. Each algorithm will be evaluated for its strengths and weaknesses before we select the most suitable approach for our objective.
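A minimal sketch of the dummy encoding, PCA, and K-means combination with scikit-learn follows. The column names ("day_of_week", "time", "lat_bin", "lng_bin"), the number of components, and the number of clusters are assumptions chosen for illustration, and the data is synthetic.

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler


def cluster_mobility(df, n_components=2, n_clusters=3, random_state=0):
    """Dummy-encode categorical columns, reduce with PCA, cluster with K-means."""
    features = pd.get_dummies(
        df[["day_of_week", "time", "lat_bin", "lng_bin"]],
        columns=["day_of_week", "time"],
        dtype=float,
    )
    scaled = StandardScaler().fit_transform(features)
    reduced = PCA(n_components=n_components,
                  random_state=random_state).fit_transform(scaled)
    labels = KMeans(n_clusters=n_clusters, n_init=10,
                    random_state=random_state).fit_predict(reduced)
    return reduced, labels


# Synthetic rides, for illustration only.
rng = np.random.default_rng(0)
rides = pd.DataFrame({
    "day_of_week": rng.integers(0, 7, 60),
    "time": rng.integers(0, 24, 60),
    "lat_bin": 59.4 + rng.normal(0, 0.02, 60),
    "lng_bin": 24.7 + rng.normal(0, 0.02, 60),
})
reduced, labels = cluster_mobility(rides)
```

In practice the number of clusters would be chosen with a diagnostic such as the elbow method or the silhouette score rather than fixed in advance.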

(2) Building and documenting the baseline model:

Our baseline model will utilize standard sklearn tools to compare the number of riders and drivers in each location. Its purpose is to establish a straightforward model that serves as a benchmark for evaluating the performance of more complex models. This enables us to determine whether the advanced models genuinely enhance the solution's performance or introduce unnecessary complexity.

To construct and document our baseline model, we have already outlined the problem statement and objectives. We will identify suitable evaluation metrics and select an appropriate model type. Data preprocessing will involve handling missing values, outlier removal, feature selection, and data scaling to ensure feature consistency.

Once the data is prepared, we will train the baseline model using the chosen algorithm. This entails fitting the model to the training data, adjusting its parameters to minimize training data error.

We partitioned the map into 1366 unique starting locations and 1590 unique terminating locations. Using a decision tree model, we forecast the number of riders per hour at each location. If the actual number of riders surpasses this prediction, drivers are directed to that location.
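A decision tree baseline of this kind could be trained as sketched below. The feature names, the tree depth, the train/test split, and the synthetic data are assumptions. The real model would be fitted on the aggregated order counts.

```python
import pandas as pd
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor


def train_baseline(df, max_depth=6, random_state=0):
    """Fit a decision tree that predicts orders per location, weekday and hour."""
    X = df[["location_id", "day_of_week", "time"]]
    y = df["n_orders"]
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=random_state
    )
    model = DecisionTreeRegressor(max_depth=max_depth, random_state=random_state)
    model.fit(X_train, y_train)
    mae = mean_absolute_error(y_test, model.predict(X_test))
    return model, mae


# Synthetic data: a morning peak between 07:00 and 09:00 at every location.
rows = [
    {"location_id": loc, "day_of_week": day, "time": hour,
     "n_orders": 20 if 7 <= hour <= 9 else 5}
    for loc in range(2) for day in range(7) for hour in range(24)
]
model, mae = train_baseline(pd.DataFrame(rows))
```

A random split is the simplest option. For a real forecast, splitting by date, so that later weeks are held out, gives a more honest error estimate.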

Additionally, we performed Principal Component Analysis (PCA) and applied K-means clustering to identify distinct clusters that exist for each weekday. This approach allows us to better understand the spatial and temporal patterns of demand and guide drivers towards areas with higher expected demand.

Model Deployment:

For efficient model deployment, it is crucial to ensure reliability and minimize the chance of failure in real-time production. To achieve this, the model and system should be able to revert to the initial state and automatically reproduce the entire modeling output stack if necessary.

To catch any potential issues before they reach production, continuous integration should be implemented as part of the deployment process. This involves conducting automated tests on the main pipeline to verify that the data and prediction metrics are within acceptable bounds.
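An automated check of this kind might look like the sketch below, written so that a test runner such as pytest could pick it up. The function names and the thresholds (`max_mae`, `max_orders`) are hypothetical. Real bounds would come from the model's historical performance.

```python
import math


def check_pipeline_outputs(predictions, mae, max_mae=5.0, max_orders=500.0):
    """Return a list of problems found; an empty list means the check passed."""
    problems = []
    if not predictions:
        problems.append("no predictions produced")
    for i, value in enumerate(predictions):
        if not math.isfinite(value):
            problems.append(f"prediction {i} is not finite")
        elif value < 0 or value > max_orders:
            problems.append(f"prediction {i} out of range: {value}")
    if not math.isfinite(mae) or mae > max_mae:
        problems.append(f"MAE {mae} exceeds limit {max_mae}")
    return problems


def test_pipeline_outputs_within_bounds():
    # In CI these values would come from running the pipeline on a fixed sample.
    assert check_pipeline_outputs([3.0, 12.5, 0.0], mae=1.8) == []
```

Returning a list of problems instead of failing on the first one makes the CI log more useful when several things break at once.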

Once the model is trained, it can be serialized using the pickle module and, if it needs to run on mobile devices, optimized for them to ensure smooth performance. Additionally, the model's output can be served in JSON format through a Flask API or rendered in an interactive dashboard built with a framework such as Dash.

When the API is invoked, the relevant data is parsed, and the output is sent to the front end of the application. Utilizing the GPS live location, the driver's current location can be obtained and forwarded to the model for further processing.
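The serving path described above could be sketched with pickle and a small Flask endpoint. To keep the example self-contained, the "model" is just a dictionary of average orders per slot. The route name, the payload fields, and the response key are assumptions.

```python
import pickle

from flask import Flask, jsonify, request

# Stand-in model: average orders keyed by (location_id, day_of_week, hour).
trained = {("A", 0, 8): 12.0}

# Serialise and restore, as would happen between training and serving.
blob = pickle.dumps(trained)
model = pickle.loads(blob)

app = Flask(__name__)


@app.route("/predict", methods=["POST"])
def predict():
    """Parse the request, look up expected demand and return it as JSON."""
    payload = request.get_json(silent=True) or {}
    try:
        key = (
            str(payload["location_id"]),
            int(payload["day_of_week"]),
            int(payload["hour"]),
        )
    except (KeyError, TypeError, ValueError):
        return jsonify(error="location_id, day_of_week and hour are required"), 400
    return jsonify(expected_orders=model.get(key, 0.0))
```

In a fuller version the app would map the driver's GPS coordinates to a `location_id` before calling the model. Pickle files should only be loaded from trusted sources, since unpickling can execute arbitrary code.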

Having a well-defined rollback plan is essential in case the model fails to meet the required accuracy in production. Instead of deploying the model to the entire user base, it is prudent to expose it first to a selected subset of users, typically 10% of the total user base, to gauge its performance before a full rollout. This approach ensures that potential issues can be identified and addressed promptly while minimizing the impact on the overall user experience.
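A partial rollout of this kind is often implemented by hashing a stable identifier into buckets. The sketch below assumes a string `driver_id` and a hypothetical `salt` naming the experiment. Neither comes from the project itself.

```python
import hashlib


def in_rollout(driver_id, percent=10, salt="demand-model-v1"):
    """Deterministically assign a driver to the rollout group.

    The same driver always gets the same answer for a given salt, and
    roughly `percent` out of every 100 drivers are included.
    """
    digest = hashlib.sha256(f"{salt}:{driver_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


# Share of 10,000 hypothetical driver ids that fall into a 10% rollout.
share = sum(in_rollout(f"driver-{i}") for i in range(10_000)) / 10_000
```

Raising `percent` keeps the drivers already included and adds new ones, which makes a gradual ramp-up straightforward. Setting it to 0 acts as an immediate rollback switch.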

Conclusion:

Efficient supply allocation allows ride-hailing apps to meet rider demand and provide drivers with stable earnings. By combining data analysis, predictive modeling, and effective communication strategies, ride-hailing platforms can guide drivers towards areas with higher expected demand. This improves the overall user experience and optimizes driver utilization and earnings. Through careful experimentation and validation, these solutions can be fine-tuned and implemented for live operations, supporting sustained success in the dynamic ride-hailing marketplace.