Optimizing Machine Learning Algorithms to Model Allstate Loss Claims

Introduction

A viable business model for an insurance company requires some way to estimate the payout it can expect for a given customer. If the payout exceeds what the company has collected from the customer’s payments, the company incurs a loss. Allstate Insurance wants to determine whether it can predict the loss to expect from a customer. To explore how this can be done, Allstate posted a Kaggle challenge in which participants tried to predict the loss incurred for a customer from a set of features describing that customer.

Purpose

We participated in the Kaggle challenge to learn how best to optimize machine learning algorithms and to practice feature selection, exploratory data analysis, and a wide range of machine learning techniques. By accomplishing these goals, we could then earn a competitive score.

Exploratory Data Analysis

Through Kaggle, Allstate provided a training data set and a test data set with 188,318 and 125,546 customers, respectively. Customer features consisted of 116 categorical variables and 14 continuous variables. The data left little room for domain knowledge: the categorical levels were anonymized as A, B, C, etc., and the continuous variables were scaled to range from 0 to 1.

As a pre-processing step for our models, we created dummy variables from the categorical variables and tested the data for near-zero variance. We noted that the same 54 factors were removed every time, so we eliminated these factors in advance to cut down on pre-processing time.
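The two pre-processing steps above can be sketched in a few lines. This is a plain-Python illustration rather than our R code: the column names `cat1` and `cat2` and the toy rows are hypothetical, and the filter is only loosely modeled on the frequency-ratio and percent-unique rule behind caret's `nearZeroVar`, with cutoffs assumed to be 95/5 and 10.

```python
from collections import Counter


def one_hot(rows, column):
    """Return one 0/1 dummy column per level of a categorical column."""
    levels = sorted({row[column] for row in rows})
    return {
        f"{column}_{level}": [1 if row[column] == level else 0 for row in rows]
        for level in levels
    }


def is_near_zero_variance(values, freq_cut=95 / 5, unique_cut=10.0):
    """Flag a column dominated by a single value (simplified, assumed rule)."""
    counts = Counter(values).most_common()
    if len(counts) == 1:
        return True  # constant column: zero variance
    # Ratio of the most common value's count to the second most common's.
    freq_ratio = counts[0][1] / counts[1][1]
    percent_unique = 100.0 * len(counts) / len(values)
    return freq_ratio > freq_cut and percent_unique <= unique_cut


# Hypothetical toy data: cat1 is almost always "A", cat2 is roughly balanced.
rows = [{"cat1": "A", "cat2": "A" if i % 2 else "B"} for i in range(99)]
rows.append({"cat1": "B", "cat2": "B"})

dummies = {}
for column in ("cat1", "cat2"):
    dummies.update(one_hot(rows, column))

# Keep only the dummy columns that carry enough variation.
kept = {name: col for name, col in dummies.items()
        if not is_near_zero_variance(col)}
print(sorted(kept))
```

In this toy case the dummies built from `cat1` are dropped because one level covers 99 of 100 rows, which is the kind of factor we removed in advance.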

The loss variable in the training data set was strongly skewed to the right, with a long tail of extremely large loss values. To bring loss closer to a normal distribution, we applied a log transformation. This can help improve the accuracy of methods that are not robust to outliers. Figure 1 presents the response variable before and after the transformation.

Figure 1

The left density plot shows the original, untransformed response and the right density plot shows the log-transformed response. We chose to use the log response because it comes much closer to the normality assumed in regression.

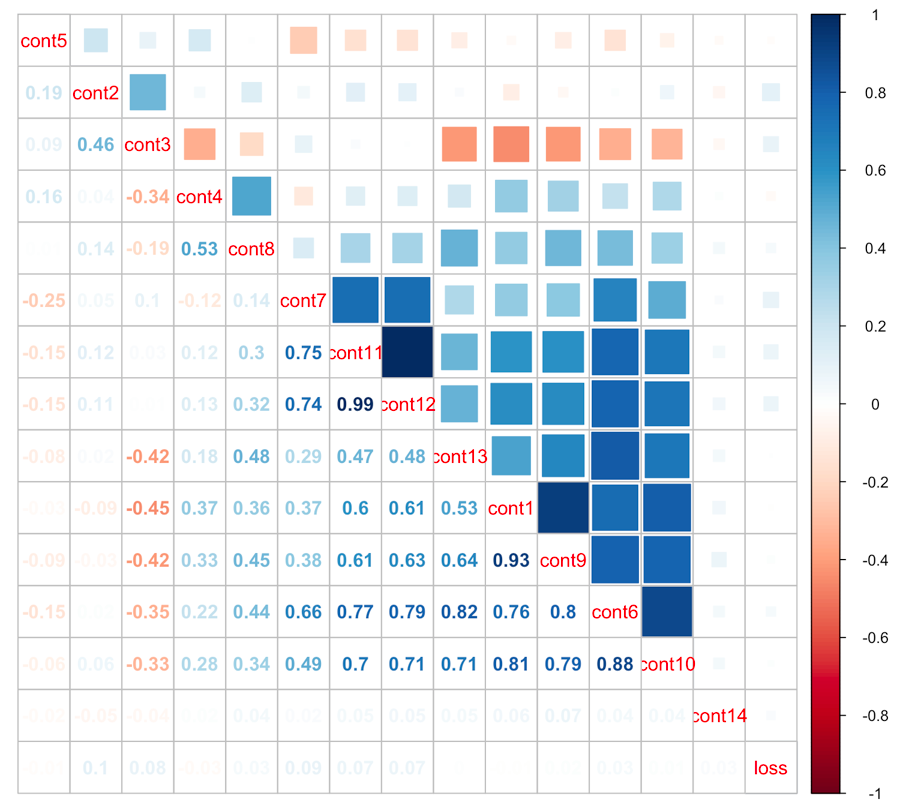
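As a minimal sketch of the transformation, the snippet below takes the log of a handful of hypothetical loss values, compares the skewness before and after, and shows the back-transform that has to be applied to predictions made on the log scale. The numbers are invented for illustration and are not taken from the Allstate data.

```python
import math


def to_log(losses):
    """Map strictly positive losses to the log scale."""
    return [math.log(value) for value in losses]


def from_log(log_values):
    """Map log-scale values (for example, predictions) back to loss units."""
    return [math.exp(value) for value in log_values]


def skewness(values):
    """Population skewness: third central moment over variance^(3/2)."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5


# Hypothetical right-skewed losses with one very large claim.
losses = [500.0, 800.0, 1200.0, 2000.0, 3500.0, 40000.0]
log_losses = to_log(losses)

print("skewness before:", skewness(losses))
print("skewness after: ", skewness(log_losses))

# Predictions made on the log scale must be exponentiated before scoring.
restored = from_log(log_losses)
```

Because the competition metric is computed on the original scale, any model trained on the log response needs the `from_log` step before its predictions are submitted.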

A look at the linear correlations among the continuous variables, and between each of them and the loss in the training data set, reveals two important results, shown in Figure 2. First, none of the continuous variables has a strong linear correlation with the log loss. This suggests that a linear model is not likely to perform well. Second, there is a high degree of collinearity among some of the continuous variables. While one could remove these collinearities (for example, by performing Principal Component Analysis), we did not need to do so because the non-linear models we employed do not assume uncorrelated predictors.

Figure 2

The correlation plot we used. The response variable, loss, has been transformed to the log scale. We can see that variables cont11 and cont12 are highly collinear. Loss has only a very slight correlation with continuous variables cont2, cont3 (negative), cont4, cont6, cont7, cont8, cont11, and cont12.
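The quantity behind such a plot is the pairwise Pearson correlation. The sketch below computes it in plain Python for three hypothetical columns: `cont_a` and `cont_b` are made nearly identical to mimic a collinear pair, and `log_loss` is an invented response. None of the values come from the real data.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length columns."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return covariance / (spread_x * spread_y)


# Hypothetical columns: cont_b is almost a copy of cont_a.
columns = {
    "cont_a": [0.10, 0.20, 0.30, 0.40, 0.50, 0.60],
    "cont_b": [0.12, 0.19, 0.33, 0.41, 0.48, 0.62],
    "log_loss": [7.9, 7.1, 8.3, 7.0, 8.0, 7.4],
}

# Print the upper triangle of the correlation matrix.
names = list(columns)
for i, first in enumerate(names):
    for second in names[i + 1:]:
        r = pearson(columns[first], columns[second])
        print(f"{first} vs {second}: {r:+.3f}")
```

A pair with a coefficient near 1 plays the role of cont11 and cont12 in Figure 2, while a coefficient near 0 against the response is the pattern that made us doubt a linear model.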

The Model Maker

In order to streamline our team’s workflow, we wanted to make the process of trying out different models as easy and efficient as possible. Therefore we created a model maker pipeline, which takes in parameters specific to a model and outputs performance results, as shown in the diagram below.

Diagram

The model maker employs the caret package, which provides a unified interface to many different machine-learning algorithms implemented in R. It also provides convenience functions for performing feature selection, cross-validation and parameter tuning. Utilizing caret allowed us to automate these steps as a streamlined workflow.

For example, suppose we want to test gradient boosting. We then simply specify in a parameters file the method name “gbm”, a grid of parameters to test, cross-validation settings (the partition split sizes, number of folds etc.), as well as miscellaneous parameters for that run, such as whether to take the log transform. An example model parameters file is given below.

Given these parameters, the model maker performs pre-processing and cross-validation on the grid of parameters, builds the optimal model, and outputs a time-stamped folder of results and a Kaggle submission file. The results include the estimated MAE from cross-validation and the optimal model parameters. Allstate defined the score as the Mean Absolute Error (MAE) between the predicted losses and the true losses, so a lower MAE means a better score.
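The core loop of such a pipeline, scoring every setting in a grid by cross-validated MAE and keeping the best, can be sketched as follows. This is a plain-Python illustration and not the model maker itself: `constant_model` is a deliberately trivial stand-in for a real learner, the one-entry grid is hypothetical, and the losses are simulated.

```python
import random
import statistics


def mean_absolute_error(actual, predicted):
    """MAE: the average absolute gap between truth and prediction."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def k_fold_indices(n_rows, n_folds, seed=0):
    """Shuffle the row indices and deal them into n_folds disjoint folds."""
    indices = list(range(n_rows))
    random.Random(seed).shuffle(indices)
    return [indices[i::n_folds] for i in range(n_folds)]


def cross_validated_mae(targets, fit_predict, params, n_folds=5):
    """Average held-out MAE of one parameter setting across the folds."""
    scores = []
    for held_out in k_fold_indices(len(targets), n_folds):
        held = set(held_out)
        train = [t for i, t in enumerate(targets) if i not in held]
        test = [targets[i] for i in held_out]
        predictions = fit_predict(train, len(test), **params)
        scores.append(mean_absolute_error(test, predictions))
    return sum(scores) / len(scores)


def constant_model(train_targets, n_predictions, statistic):
    """Toy stand-in for a learner: predict one summary value everywhere."""
    if statistic == "mean":
        value = statistics.mean(train_targets)
    else:
        value = statistics.median(train_targets)
    return [value] * n_predictions


# Simulated right-skewed losses and a hypothetical one-parameter grid.
rng = random.Random(42)
losses = [rng.lognormvariate(7.7, 0.8) for _ in range(200)]
grid = [{"statistic": "mean"}, {"statistic": "median"}]

results = {p["statistic"]: cross_validated_mae(losses, constant_model, p)
           for p in grid}
best = min(results, key=results.get)
print(results, "best:", best)
```

Swapping `constant_model` for a function that fits a real model on the training fold would give the same structure that caret automates for us through its cross-validation and tuning helpers.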

We parallelized the runs in R and ran the model tests on the Brandeis University HPC Cluster. The cluster had multiple servers available, with each server providing 32 cores of computational power and several hundred gigabytes of RAM. This allowed us to attempt more complex models, including kNN, which requires a large amount of RAM.

Results

We trained and tested four non-linear models with the R caret package: k-Nearest Neighbors (kNN), Gradient Boosting Machines (GBM), XGBoost, and Neural Networks.

The advantage of kNN is that there is only one parameter to consider, the number of neighbors. The disadvantages are that it takes a long time to run on large data sets (it runs in O(n^2), where n is the number of observations), it requires 100 GB of RAM on this dataset, and it is not as accurate as other methods we tried. The final Kaggle score that we got with kNN was 1507.53360 MAE.
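To make the cost concrete, here is a minimal brute-force kNN regressor in plain Python. It is an illustrative sketch with hypothetical data, not the caret implementation we ran. Each prediction measures the distance to every training row, so predicting for a set of rows comparable in size to the training set leads to the quadratic behavior described above.

```python
import math


def knn_predict(train_x, train_y, query, k):
    """Average the targets of the k training rows closest to the query."""
    # One distance per training row: this full scan is the expensive part.
    distances = [
        (math.dist(row, query), target)
        for row, target in zip(train_x, train_y)
    ]
    distances.sort(key=lambda pair: pair[0])
    nearest = distances[:k]
    return sum(target for _, target in nearest) / k


# Hypothetical training rows (two scaled features) and their losses.
train_x = [(0.1, 0.2), (0.2, 0.1), (0.8, 0.9), (0.9, 0.8)]
train_y = [1000.0, 1200.0, 5000.0, 5400.0]

prediction = knn_predict(train_x, train_y, (0.15, 0.15), k=2)
print(prediction)
```

The only tuning parameter is `k`, which matches the single parameter we had to choose for kNN.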

The advantages of GBM are that it is robust and generally performs well out of the box. We took our final set of parameters from tweaking suggestions on the Kaggle Forum and got a score of 1163.47311 MAE. The final model used 5000 trees, with an interaction depth of 5, a learning rate of 0.03 and 20 minimum observations per terminal node.
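The interplay between the number of trees and the learning rate can be seen in a stripped-down version of gradient boosting. The sketch below is an assumption-laden illustration and not the gbm package: it uses squared-error loss, a single feature, and depth-one trees (stumps), and the data are invented.

```python
def fit_stump(x, residuals):
    """Return (threshold, left_mean, right_mean) minimizing squared error."""
    best = None
    # Skip the largest value so that both sides of the split are non-empty.
    for threshold in sorted(set(x))[:-1]:
        left = [r for v, r in zip(x, residuals) if v <= threshold]
        right = [r for v, r in zip(x, residuals) if v > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    return best[1:]


def boost(x, y, n_trees, learning_rate):
    """Fit n_trees stumps in sequence, each on the current residuals."""
    predictions = [sum(y) / len(y)] * len(y)  # start from the mean
    for _ in range(n_trees):
        residuals = [t - p for t, p in zip(y, predictions)]
        threshold, left_mean, right_mean = fit_stump(x, residuals)
        # Shrink each tree's contribution by the learning rate.
        predictions = [
            p + learning_rate * (left_mean if v <= threshold else right_mean)
            for v, p in zip(x, predictions)
        ]
    return predictions


def mean_squared_error(y, predictions):
    return sum((t - p) ** 2 for t, p in zip(y, predictions)) / len(y)


# Hypothetical one-feature training data.
x = [i / 10 for i in range(10)]
y = [3.0, 2.5, 2.8, 4.0, 6.5, 7.0, 6.8, 9.5, 12.0, 15.0]

for n_trees, learning_rate in [(10, 0.03), (10, 0.3), (300, 0.03)]:
    fitted = boost(x, y, n_trees, learning_rate)
    print(n_trees, learning_rate, mean_squared_error(y, fitted))
```

A small learning rate needs many trees before the training error comes down, which is consistent with pairing a rate of 0.03 with several thousand trees. This sketch reports training error only, so it says nothing about overfitting; that is what cross-validation is for.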

The advantages of XGBoost are that it is well parallelized in R and can yield results significantly faster than GBM; the drawback is that there are many parameters to tune (seven in total). XGBoost is often the most competitive method in Kaggle competitions, and some variant is often utilized in the winning solutions. We looked to the Kaggle Forum for suggestions on the parameters and we cross-validated among four parameters (Figure 3). It is illustrative to observe some trends in this figure. For higher learning rates (eta), deeper trees perform worse than shallower trees. Conversely, for lower learning rates the higher depth of 12 can outperform the shallower tree models. In other words, if the learning rate is high, our trees should be simple so as not to overfit. If the learning rate is low, more complex trees can improve performance. Our Kaggle score for XGBoost was 1148.65697 MAE, and our final parameters included 3000 trees, a learning rate of 0.01, a max depth of 12, and a regularization parameter (gamma) of 2.

Figure 3

Neural Networks have the advantage that they are very flexible and are robust to outliers. The disadvantage is that it is difficult to find parameters that yield the best global solution. We decided to use the nnet R package and to utilize one hidden layer, which simplified the possible network topologies to consider. Apart from the number of nodes in the hidden layer, the nnet package allows a second parameter to tune called the decay. The purpose of this decay parameter is to prevent individual large values of weights from dominating the network and to prevent over-fitting. The use of the decay parameter in nnet can be compared to the shrinkage parameter in Ridge regression. We performed cross-validation on the entire training data set and found ideal values for the decay as well as the number of nodes. We decided to use a decay parameter of 0.1 and 25 nodes in the final network model. The Kaggle score for this neural network model was 1206.69697 MAE.
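The comparison with Ridge regression can be made concrete with a single weight. The sketch below assumes the penalty takes the form of the decay times the squared weight added to a squared-error loss, which is our reading of how weight decay works and not a statement about nnet's internals. The data are hypothetical.

```python
def ridge_weight(x, y, decay):
    """Closed-form minimizer of sum((y - w * x)^2) + decay * w^2 over w."""
    # Setting the derivative to zero gives w = sum(x*y) / (sum(x^2) + decay).
    return sum(a * b for a, b in zip(x, y)) / (sum(a * a for a in x) + decay)


# Hypothetical data that roughly follow y = 2 * x.
x = [1.0, 2.0, 3.0, 4.0]
y = [2.1, 3.9, 6.2, 7.8]

for decay in (0.0, 0.1, 10.0):
    print(decay, ridge_weight(x, y, decay))
```

A larger decay pulls the fitted weight toward zero, which is the mechanism that keeps individual weights from dominating the network.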

Table 1

A summary of model results is given by the following table.

| Methods | Best Kaggle Score |
| --- | --- |
| KNN | 1533.743 [Not Submitted] |
| Gradient Boosting Machines | 1163.47311 |
| XGBoost | 1148.65697 |
| Neural Networks | 1206.69697 |

Conclusion

To summarize, we found that for this problem XGBoost performed best, followed by GBM. In third place after the tree methods was the neural network model, and kNN performed the worst. Even the best submitted Kaggle result still had a mean absolute error of over 1000, which means that predictions from our best model miss the true loss by more than 1000 on average. Clearly, forecasting claim losses accurately with a model can be difficult.