Predicting Diabetic Patient Readmissions with Machine Learning

Introduction

The objective of this project is to develop machine learning models that predict whether diabetic hospital patients will be readmitted within 30 days. For context, the Affordable Care Act created the Hospital Readmissions Reduction Program to improve the quality of healthcare for Americans by tying hospital payments to patient readmission rates. In other words, the program incentivizes hospitals to provide high-quality healthcare by financially penalizing those with higher readmission rates.

This project endeavours to support the goals of the Hospital Readmissions Reduction Program by positively impacting two key stakeholders in the American healthcare system:

- Patients: Correctly identifying patients who are likely to be readmitted will enable hospitals to take preventative measures so that patients receive the best possible healthcare and avoid adverse health consequences

- Hospitals: Tying lower readmission rates to higher hospital revenues will foster efficiency from a financial perspective, allowing hospitals to reallocate a larger pool of resources to important expenses such as personal protective equipment

In short, lower readmission rates lead to better health for patients and better fiscal health for hospitals.

Dataset

The dataset analyzed in this project covers 10 years (1999-2008) of clinical care at 130 hospitals and was originally assembled to analyze the impact of HbA1c measurement (HbA1c reflects average blood sugar level) on hospital readmissions. It is suitable for developing a predictive model of hospital readmissions because it contains over 50 features, with each instance describing a patient encounter and its outcome (whether the patient was readmitted to the hospital).

To qualify as an instance in this dataset, each observation has met the following criteria:

- It is an inpatient encounter (a hospital admission)

- It is a diabetic encounter, that is, one during which any kind of diabetes was entered into the system as a diagnosis

- The length of stay was at least 1 day and at most 14 days

- Laboratory tests were performed during the encounter

- Medications were administered during the encounter

Citation: Beata Strack, Jonathan P. DeShazo, Chris Gennings, Juan L. Olmo, Sebastian Ventura, Krzysztof J. Cios, and John N. Clore, “Impact of HbA1c Measurement on Hospital Readmission Rates: Analysis of 70,000 Clinical Database Patient Records,” BioMed Research International, vol. 2014, Article ID 781670, 11 pages, 2014.

Process

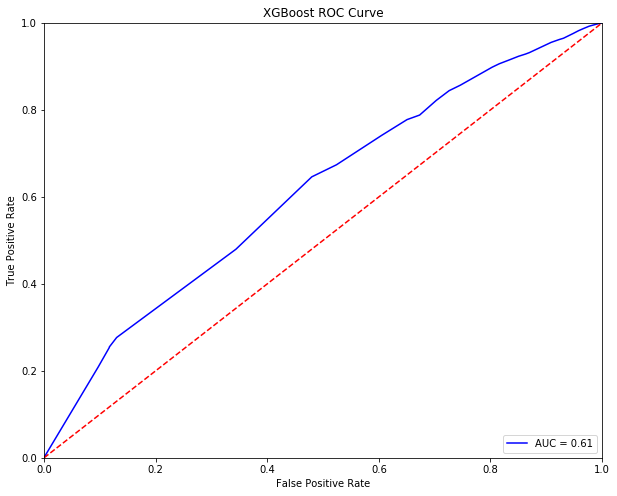

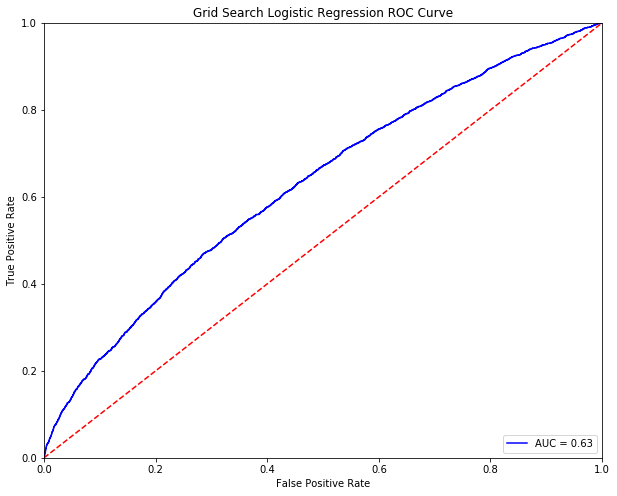

The process for this project entails training Decision Tree, Logistic Regression, Random Forest, and XGBoost models. The models will then be evaluated on AUC (area under the ROC curve), which measures model performance across all possible classification thresholds. After plotting and determining each model's AUC, feature importances will be plotted for each model, whenever possible, to discern which factors are most likely to contribute to a patient's readmission.

Once each of the algorithms is evaluated at a base level (default parameters), we will try to optimize each one through hyperparameter tuning and other machine learning techniques (bootstrap aggregating and stacking) to see whether more robust models can be created.

The basic outline of what is described above can be summarized as follows:

- Data Processing

- Training Base Machine Learning Algorithms

- Evaluating Base Machine Learning Algorithms (AUC / Feature Importance)

- Hyperparameter Tuning with GridSearchCV + Other Machine Learning Techniques (Bagging / Stacking)

- Evaluating Optimized Machine Learning Algorithms (AUC / Feature Importance)

Model Evaluation

In order to best measure how well models predict hospital readmissions, it is important to understand the ROC Curve and AUC (Area Under the Curve).

An ROC (receiver operating characteristic) curve visualizes the performance of a classification model across all classification thresholds by plotting the true positive rate (true positives / all actual positives) against the false positive rate (false positives / all actual negatives) as seen below:

Source: https://developers.google.com/machine-learning/crash-course/classification/roc-and-auc

AUC (area under the ROC curve) measures the 2-dimensional area underneath the ROC curve and ranges in value from 0 to 1 as shown below.

Source: https://developers.google.com/machine-learning/crash-course/classification/roc-and-auc

In an extreme example, a perfect classifier would maximize true positives while minimizing false positives, thus bringing the ROC Curve all the way to the top left (simultaneously making the AUC cover the entire plot). Alternatively, a model that is always wrong would bring the ROC curve all the way to the bottom right, thus minimizing the AUC.
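To make the definitions above concrete, here is a minimal sketch using scikit-learn's `roc_curve` and `roc_auc_score`. The labels and scores are made-up toy values (an assumption for illustration), not results from this project.

```python
import numpy as np
from sklearn.metrics import roc_auc_score, roc_curve

# Hypothetical toy data: 1 = readmitted, 0 = not readmitted.
y_true = np.array([0, 0, 1, 1])
# Hypothetical predicted probabilities of readmission.
y_score = np.array([0.1, 0.4, 0.35, 0.8])

# roc_curve sweeps the classification threshold and returns the false
# positive rate and true positive rate observed at each threshold.
fpr, tpr, thresholds = roc_curve(y_true, y_score)

# AUC is the area under that curve. Here 3 of the 4 (positive, negative)
# pairs are ranked correctly, so the AUC is 0.75.
auc = roc_auc_score(y_true, y_score)
print(f"AUC = {auc:.2f}")

# The two extremes described above: a perfect ranking and an inverted one.
perfect_auc = roc_auc_score(y_true, np.array([0.1, 0.2, 0.8, 0.9]))
inverted_auc = roc_auc_score(y_true, np.array([0.9, 0.8, 0.2, 0.1]))
print(perfect_auc, inverted_auc)
```

Because AUC only depends on how the scores rank positives relative to negatives, it can be compared across models without first choosing a classification threshold.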

Data Processing

Before training our base machine learning models, it is crucial to examine the data and process it so that it is suitable for machine learning. In this particular dataset, the two prevalent issues are missingness and an abundance of categorical features.

Missingness

With regard to missingness, features with missing values must either be imputed or dropped (if most of the data is missing or if the variance is low).
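A drop-or-impute step of this kind could be sketched with pandas roughly as follows. The toy frame, the column names, the `"?"` missing-value marker, and the 50% threshold are all assumptions for illustration; the project's actual choices are not shown here.

```python
import numpy as np
import pandas as pd

# Hypothetical toy frame; names and the "?" marker are illustrative only.
df = pd.DataFrame({
    "weight": ["?", "?", "?", "[75-100)"],   # mostly missing
    "race": ["Caucasian", "?", "AfricanAmerican", "Caucasian"],
    "examide": ["No", "No", "No", "No"],     # no variance
    "time_in_hospital": [3, 5, 2, 7],
})

# Treat the placeholder marker as a real missing value.
df = df.replace("?", np.nan)

# Drop columns where more than half of the values are missing
# (the 0.5 threshold is an assumption).
missing_share = df.isna().mean()
df = df.drop(columns=missing_share[missing_share > 0.5].index)

# Drop columns that carry a single value and therefore no signal.
constant_cols = [c for c in df.columns if df[c].nunique(dropna=True) <= 1]
df = df.drop(columns=constant_cols)

# Impute the remaining categorical gaps with the most frequent value.
df["race"] = df["race"].fillna(df["race"].mode()[0])

print(df)
```

Most-frequent imputation is only one option; a dedicated "Unknown" category is a common alternative for categorical features.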

Numeric and Categorical Features

Once the issue of missingness is resolved, the next step is to transform numeric and categorical features. A closer look at this dataset makes it clear that a few key categorical features, such as the A1C result (which measures recent blood sugar levels), are actually quantifiable. Thus, as part of the data processing, the categories of such features have been mapped to proxy numeric values.
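A category-to-proxy mapping like this can be sketched with `Series.map`. The category labels and the proxy numbers below are hypothetical placeholders, not the values used in this project.

```python
import pandas as pd

# Hypothetical labels and proxy values (assumptions for illustration).
a1c_proxy = {"Norm": 5.5, ">7": 7.5, ">8": 8.5}

df = pd.DataFrame({"A1Cresult": ["Not measured", ">8", "Norm", ">7"]})

# Categories without a proxy (here "Not measured") become NaN and would
# still need to be imputed or flagged before training.
df["A1Cresult_num"] = df["A1Cresult"].map(a1c_proxy)

print(df)
```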

Once numeric features and categorical features are properly delineated, numeric features are scaled and categorical features are encoded so that all data are numeric in line with what is suitable for training machine learning models.
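One way to scale and encode in a single step is scikit-learn's `ColumnTransformer`. This is a sketch under assumptions: the toy frame and column names are hypothetical, and `StandardScaler` plus `OneHotEncoder` are one reasonable pairing rather than necessarily the one used here.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical toy frame with two numeric and one categorical feature.
df = pd.DataFrame({
    "time_in_hospital": [1, 3, 5, 7],
    "num_medications": [10, 12, 8, 20],
    "gender": ["Female", "Male", "Female", "Male"],
})

numeric_cols = ["time_in_hospital", "num_medications"]
categorical_cols = ["gender"]

preprocessor = ColumnTransformer([
    # Scale numeric features to zero mean and unit variance.
    ("num", StandardScaler(), numeric_cols),
    # One-hot encode categories; ignore categories unseen during fitting.
    ("cat", OneHotEncoder(handle_unknown="ignore"), categorical_cols),
])

# Two scaled numeric columns followed by one column per gender category.
X = preprocessor.fit_transform(df)
print(X.shape)
```

Fitting the transformer on the training split only, and then applying it to the test split, keeps test-set statistics from leaking into training.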

Imbalanced Dataset

One issue that is noticed when examining the output variable is that the dataset is heavily imbalanced towards the majority class (not readmitted). If we do not address this issue, our models will have poor predictive power for the minority (readmitted) class.

To remedy this problem, we can implement the Synthetic Minority Oversampling Technique (SMOTE) to upsample the minority class in our training data set.
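The core idea behind SMOTE can be sketched in a few lines: each synthetic sample is placed at a random point on the segment between a minority sample and one of its nearest minority-class neighbours. The function below (`smote_sketch`, a hypothetical name) is a simplified illustration on made-up data, not the implementation used in this project; in practice the `imbalanced-learn` package provides a `SMOTE` class with a `fit_resample` method.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors


def smote_sketch(X_min, n_new, k=3, seed=0):
    """Create n_new synthetic minority samples by interpolating between a
    minority sample and one of its k nearest minority neighbours."""
    rng = np.random.default_rng(seed)
    # Ask for k + 1 neighbours because each point is its own nearest one.
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    _, neighbour_idx = nn.kneighbors(X_min)

    synthetic = np.empty((n_new, X_min.shape[1]))
    for i in range(n_new):
        base = rng.integers(len(X_min))
        neighbour = rng.choice(neighbour_idx[base, 1:])
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic[i] = X_min[base] + gap * (X_min[neighbour] - X_min[base])
    return synthetic


# Hypothetical imbalanced training data: 90 majority vs 10 minority rows.
rng = np.random.default_rng(42)
X_majority = rng.normal(0.0, 1.0, size=(90, 2))
X_minority = rng.normal(2.0, 1.0, size=(10, 2))

# Generate enough synthetic rows to match the majority class size.
X_new = smote_sketch(X_minority, n_new=len(X_majority) - len(X_minority))
X_minority_balanced = np.vstack([X_minority, X_new])
print(X_majority.shape, X_minority_balanced.shape)
```

Oversampling is applied to the training data only, so that the test set keeps the original class balance and the reported AUC reflects real-world conditions.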

Base Machine Learning Models

Now that the data has been prepared, the next step is to train our base machine learning models and evaluate their performance (AUC) to establish a baseline, which can then be improved upon through hyperparameter tuning and other machine learning techniques.
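A baseline loop of this kind might look like the sketch below. It uses a synthetic stand-in dataset from `make_classification` (an assumption, since the processed readmission data is not reproduced here) and leaves out XGBoost to stay within scikit-learn; `xgboost.XGBClassifier` follows the same `fit` / `predict_proba` interface and could be added to the dictionary.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic, imbalanced stand-in for the processed data (assumption).
X, y = make_classification(n_samples=1000, n_features=10, n_informative=4,
                           weights=[0.9, 0.1], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# Near-default models; max_iter is raised only so the solver converges.
base_models = {
    "Decision Tree": DecisionTreeClassifier(random_state=0),
    "Logistic Regression": LogisticRegression(max_iter=1000),
    "Random Forest": RandomForestClassifier(random_state=0),
}

base_auc = {}
for name, model in base_models.items():
    model.fit(X_train, y_train)
    # AUC is computed from the predicted probability of the positive class.
    proba = model.predict_proba(X_test)[:, 1]
    base_auc[name] = roc_auc_score(y_test, proba)
    print(f"{name}: AUC = {base_auc[name]:.3f}")
```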

The best performing base model appears to be Logistic Regression with an AUC of 0.63. The features the model deems important suggest that a few factors, such as the number of inpatient visits, whether a patient is on diabetes medication, and the number of other conditions diagnosed, could be useful indicators of whether a patient will be readmitted in the near future.
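As a rough sketch of how such rankings can be obtained: for a linear model on scaled features, the absolute coefficients give a simple ranking, while tree ensembles expose `feature_importances_`. The data and feature names below are synthetic placeholders, not the project's features.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data with hypothetical feature names.
feature_names = [f"feature_{i}" for i in range(6)]
X, y = make_classification(n_samples=500, n_features=6, n_informative=3,
                           random_state=0)
# Scaling puts coefficients on a comparable footing across features.
X = StandardScaler().fit_transform(X)

log_reg = LogisticRegression(max_iter=1000).fit(X, y)
forest = RandomForestClassifier(random_state=0).fit(X, y)

# Linear model: rank features by absolute coefficient size.
coef_rank = sorted(zip(feature_names, np.abs(log_reg.coef_[0])),
                   key=lambda pair: pair[1], reverse=True)

# Tree ensemble: impurity-based importances, which sum to 1.
forest_rank = sorted(zip(feature_names, forest.feature_importances_),
                     key=lambda pair: pair[1], reverse=True)

for name, score in coef_rank:
    print(f"{name}: |coef| = {score:.3f}")
```

The two rankings need not agree, which is one reason to read feature importance as a hint for further investigation rather than as evidence of cause.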

Now that a performance baseline has been established, the next step in this process is to optimize our models and discern which factors could be useful in predicting patient readmissions.

Optimized Machine Learning Models

With an idea of baseline model performance in mind, the next step is to improve the machine learning models through hyperparameter tuning. Additionally, the bootstrap aggregation technique will be applied to the Logistic Regression classifier to determine whether it yields a significant improvement in performance. Furthermore, all of the refined models will be stacked to create a voting classifier.

Hyperparameter Tuning

The first avenue through which we can improve upon base algorithms is hyperparameter tuning. Different parameters for the base models can be tweaked to determine the ideal parameters for predicting diabetic patient readmission.

For tree-based models, such as Random Forest or Decision Trees, parameters such as the maximum depth of a tree or the maximum number of features to consider when searching for the best split can be tweaked to maximize model performance.

Similarly, for Logistic Regression, which is a linear model, the risk of overfitting the training data can be reduced by adjusting parameters such as the regularization strength or penalty method, so that the model relies mainly on the key features when classifying new patients as readmitted or not readmitted.
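A grid search over the parameters mentioned above could be sketched with `GridSearchCV` as follows. The grids, the 3-fold setting, and the synthetic data are illustrative assumptions rather than the settings used in this project.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for the processed training data (assumption).
X, y = make_classification(n_samples=400, n_features=10, random_state=0)

# Tree-based model: tune tree depth and features considered per split.
forest_search = GridSearchCV(
    RandomForestClassifier(n_estimators=50, random_state=0),
    param_grid={"max_depth": [3, 5, None], "max_features": ["sqrt", 0.5]},
    scoring="roc_auc",  # select parameters by cross-validated AUC
    cv=3,
)
forest_search.fit(X, y)

# Linear model: tune regularization strength (C) and penalty type.
# The liblinear solver supports both l1 and l2 penalties.
logreg_search = GridSearchCV(
    LogisticRegression(solver="liblinear"),
    param_grid={"C": [0.01, 0.1, 1.0, 10.0], "penalty": ["l1", "l2"]},
    scoring="roc_auc",
    cv=3,
)
logreg_search.fit(X, y)

print(forest_search.best_params_, round(forest_search.best_score_, 3))
print(logreg_search.best_params_, round(logreg_search.best_score_, 3))
```

Smaller values of `C` mean stronger regularization, and an l1 penalty can shrink some coefficients to exactly zero, which is what lets the model lean on a smaller set of features.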

Indeed, when comparing the performance of tuned models to that of base models, it is clear that there is improvement in AUC, particularly in tree-based models such as the Decision Tree Classifier and the Random Forest Classifier.

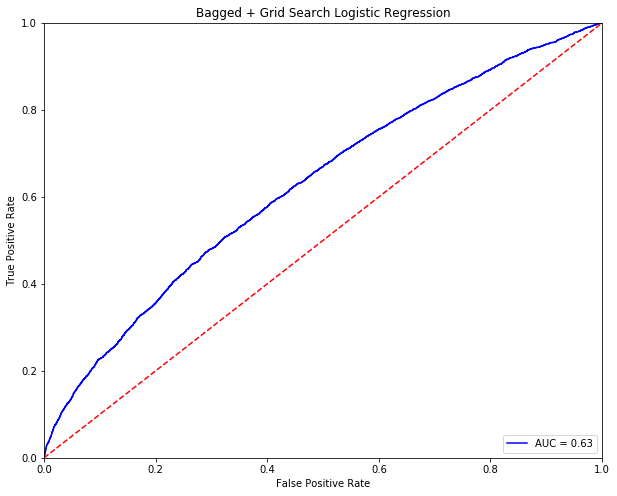

Bootstrap Aggregation

Beyond hyperparameter tuning, another useful technique for improving models is bootstrap aggregation (bagging). Bagging is effectively a two-step process:

- Bootstrapping: a sampling technique where out of n samples, k samples are chosen with replacement for training instances of a machine learning algorithm

- Aggregating: combining the predictions of multiple model instances, each trained on a separate bootstrapped sample

The intention here is that bagging will take advantage of ensemble learning with randomly sampled datasets to combine multiple weak learners into a single strong learner, resulting in reduced variance and less overfitting.
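The two steps above map directly onto scikit-learn's `BaggingClassifier`. The sketch below wraps Logistic Regression, again on synthetic stand-in data; the number of estimators is an arbitrary assumption.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import BaggingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the processed data (assumption).
X, y = make_classification(n_samples=600, n_features=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, stratify=y, random_state=0)

bagged = BaggingClassifier(
    LogisticRegression(max_iter=1000),  # model fitted on each bootstrap sample
    n_estimators=25,   # number of bootstrapped training sets / models
    max_samples=1.0,   # each sample is as large as the training set
    bootstrap=True,    # bootstrapping: draw rows with replacement
    random_state=0,
)
bagged.fit(X_train, y_train)

# Aggregating: predict_proba averages the 25 models' probabilities.
bagged_auc = roc_auc_score(y_test, bagged.predict_proba(X_test)[:, 1])
print(f"Bagged Logistic Regression AUC = {bagged_auc:.3f}")
```

Bagging tends to help most with high-variance models such as deep decision trees, so a stable linear model may see little change.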

Source: https://www.researchgate.net/figure/The-Bagging-Bootstrap-Aggregation-scheme_fig2_284156704

When combining bagging with Logistic Regression, AUC remains flat at 0.63. Perhaps a different machine learning technique will yield better results.

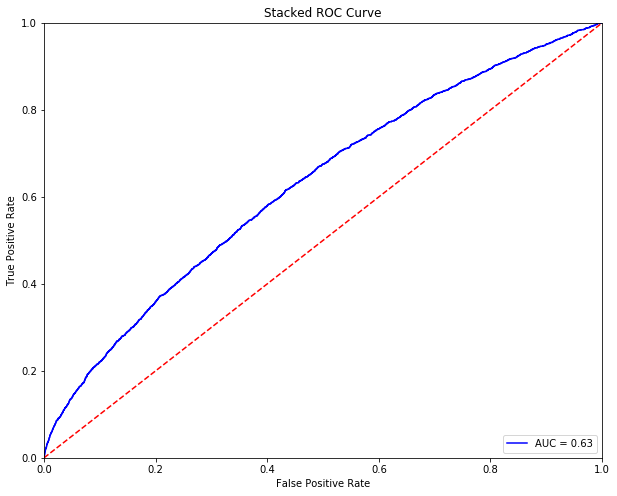

Model Stacking

Another machine learning technique that will be considered is stacking, which combines the predictions from two or more base machine learning algorithms. The benefit of stacking is the combined predictive power of a range of robust machine learning algorithms, which should yield predictions that are better than those of any individual algorithm in the ensemble.
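One way to sketch this is scikit-learn's `StackingClassifier`, which trains a final model on the cross-validated predictions of the base models. The choice of base models, their settings, and the synthetic data are assumptions. Since a voting classifier is also mentioned in this write-up, `VotingClassifier` with `voting="soft"` would be the simpler alternative that averages probabilities instead of learning how to combine them.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the processed data (assumption).
X, y = make_classification(n_samples=600, n_features=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, stratify=y, random_state=0)

stacked = StackingClassifier(
    estimators=[
        ("tree", DecisionTreeClassifier(max_depth=4, random_state=0)),
        ("forest", RandomForestClassifier(n_estimators=50, random_state=0)),
        ("logreg", LogisticRegression(max_iter=1000)),
    ],
    # The final estimator learns how to weigh the base models' outputs.
    final_estimator=LogisticRegression(max_iter=1000),
    stack_method="predict_proba",
    cv=3,  # base-model predictions for the final estimator come from CV
)
stacked.fit(X_train, y_train)

stacked_auc = roc_auc_score(y_test, stacked.predict_proba(X_test)[:, 1])
print(f"Stacked classifier AUC = {stacked_auc:.3f}")
```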

Source: https://www.researchgate.net/figure/The-Stacking-scheme_fig1_284156704

From stacking multiple base models, an AUC of 0.63 was achieved.

Conclusion

After evaluating the machine learning models that were trained in this project, a maximum AUC of 0.63 was achieved with the following models:

- Logistic Regression Classifier

- Tuned Logistic Regression Classifier

- Bagged Logistic Regression Classifier

- Tuned Random Forest Classifier

- Stacked Classifier

From these models, we can see that certain features can be instrumental in predicting diabetic readmissions.

To translate these insights into preemptive actions that can reduce patient readmissions, hospitals can leverage a few of the features deemed important by the highest performing machine learning models trained in this project:

- Number of Inpatient visits: patient intervention teams should pay close attention to frequent visitors to the hospital and appropriately log the reason for each visit to address the root cause of admission

- Diabetes Medication: more medications could indicate the potential for more complications - as such, key barometers of diabetic health such as A1C (blood sugar levels) should be tracked

- Number of Diagnoses: diabetes in conjunction with other health conditions can lead to severely adverse complications - hospitals should ensure that all health conditions are addressed

- Age: patient intervention teams should maintain regular and routine communication with older patients to anticipate any reason for readmission

All things being said, thank you very much for taking the time to go through my analysis of diabetic readmissions. In this project, I implemented the Decision Tree, Logistic Regression, Random Forest, and XGBoost algorithms. Additionally, I tried to improve the performance of our models through hyperparameter tuning as well as useful machine learning techniques such as bootstrap aggregation and stacking. For models where applicable and interpretable, leveraging feature importance proved useful in discerning which aspects of a patient encounter could serve as a flag for hospitals to take action to prevent a patient from being readmitted.

Future Work

In terms of future work, the feature engineering space would be one lever that can be used to improve model performance. The way to best do so is to conduct some qualitative research to strengthen domain expertise in areas such as medications and other health conditions that could have a big impact on diabetic patients.

Being able to better contextualize all of these features with domain knowledge would, no doubt, lead to a better patient experience!

Contact

If you have any questions or comments, please feel free to reach out to me on LinkedIn or GitHub.