LendingClub Loan Default Predictions with Machine Learning

Link to Dash App | Link to Github

Introduction to LendingClub

Nowadays consumers can invest in consumer loans through peer-to-peer financing platforms such as LendingClub. LendingClub enables investors to browse consumer loan applications containing the applicant’s credit history, loan details, employment status, and other self-reported personal information, in order to make determinations as to which loans to fund.

Loans from applicants deemed at higher risk of default, such as those with lower FICO credit scores, will typically carry higher interest rates and therefore the potential to yield a higher return on investment (ROI) for the investor. Conversely, lower risk-of-default loans will usually carry lower interest rates. Therefore, a machine learning model that could accurately predict the default risk of a loan using the available data on the LendingClub platform could help investors to maximize their investment returns by identifying the loans worth investing in.

The dataset used for this project was provided by LendingClub and contains 2,260,701 observations of individual loan applications submitted between 2007 and 2018. There are 151 quantitative and qualitative features in the dataset capturing information on the loan amount, loan interest rate, loan status (i.e. whether the loan is current or in default), loan tenor (either 36 or 60 months), applicant FICO credit score, applicant employment history, and other personal information. Some of these features are only relevant after loan issuance and are therefore not available to investors at the moment of investing. To better understand the features available to investors, I used the data dictionary that LendingClub makes available to investors on its website.

Research Goals About LendingClub

The goal of this project is to predict default probabilities of 2018 loans in the LendingClub portfolio by training our models on pre-2018 loan data in order to uncover the best investment opportunity set for an investor looking to maximize his or her returns on the 2018 loan set.

To achieve this, our model’s predicted loan default probabilities for a given loan are combined with that loan’s term (36 or 60 months), monthly installment notional (the amount the debtor pays every month) and funded amount (the initial amount of the loan) in order to produce an expected internal rate of return (IRR) for the loan. Using these predictions, our model then allocates capital to all loans it predicted as good in the 2018 test dataset and does not fund any loans it predicted as bad in order to arrive at an IRR-optimized portfolio.

The loan_status dependent variable, which captures loan defaults as 0's and loan non-defaults as 1's, is heavily imbalanced, with non-defaults outnumbering defaults 6.7:1 in this dataset. This class imbalance will be important later on when training our machine learning models.
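As a minimal sketch of how such an imbalance can be quantified and compensated for, the snippet below computes the class ratio and "balanced" class weights on a toy target vector. The weighting formula is the common n_samples / (n_classes * class_count) heuristic; whether the models in this project used class weights, resampling, or another technique is not stated here, so treat this as an illustrative assumption.

```python
import numpy as np

def balanced_class_weights(y):
    """Weight each class by n_samples / (n_classes * class_count)."""
    y = np.asarray(y)
    classes, counts = np.unique(y, return_counts=True)
    weights = len(y) / (len(classes) * counts)
    return {int(c): float(w) for c, w in zip(classes, weights)}

# Toy target with the same 6.7:1 ratio: 1 = non-default, 0 = default.
y = np.array([1] * 67 + [0] * 10)
imbalance_ratio = (y == 1).sum() / (y == 0).sum()
weights = balanced_class_weights(y)  # the rarer default class gets the larger weight
```

A dictionary like this can be passed to estimators that accept a class_weight argument, so that errors on the minority (default) class count more heavily during training.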

For this project I will be using Python libraries for data manipulation (pandas, numpy), regular expressions (re), data visualization (plotly, matplotlib, seaborn), machine learning (scikit-learn, catboost), deep learning (keras, tensorflow), API implementation (flask), web frameworks (dash), and statistics and utilities (scipy.stats, statsmodels, itertools). Amazon Web Services’ (AWS) Elastic Compute Cloud (EC2) platform was also used to train our neural network model in the cloud.

The following steps were taken to create a highly predictive model:

- Data exploration
- Data preprocessing
- Feature engineering
- Feature selection
- Training Machine Learning Models & hyperparameter tuning
- Implementing real-time ML predictions using the Flask API
- Portfolio Optimization

LendingClub Data Exploration - Pt. 1

Several interactive exploratory data analysis plots were created in the following Dash app using plotly’s Python library in order to build some high-level intuition of some of the relationships between different variables. Of note, the Dash app allows the user to interactively update the top-right graph and two bottom graphs with data for a different state by hovering their cursor over a state in the top-left map graph. The user can also reactively update the map graph to display data for different years by using the provided DateRange slider.

This choropleth plot shows that certain states exhibit significantly greater average default rates than others, suggesting that a loan applicant’s state may be an important predictor of loan default. There appears to be regional clustering in default patterns: southern states such as Oklahoma, Arkansas, Louisiana, Mississippi, and Alabama exhibit higher average default rates (>21%), while northwestern states such as Oregon, Washington, Idaho, and Wyoming exhibit lower average default rates (<17%), versus the average 14.97% default rate across all states. Interestingly, despite these apparent differences in loan default rates by state, the interest rates charged for each loan grade are nearly identical across states, likely due to federal and state anti-discrimination and usury regulations.

LendingClub Data Exploration - Pt. 2

While one might reason that these state-level differences in default rates are due to different average loan quality distributions by state, the below scatterplot shows this not to be the case, as all states display a similar distribution of loan grades, concentrated in grades B and C with a thin tail toward grade G. Indeed, all states exhibit 15%-20% of loans graded as ‘A’, 25%-30% graded as ‘B’, 25%-33% graded as ‘C’, 15%-20% graded as ‘D’, 5%-10% graded as ‘E’, 0%-5% graded as ‘F’, and 0%-1% graded as ‘G’. Based on these similar loan quality distributions between states, we would expect state variables not to be a strong predictor of loan defaults, though other unobserved macro variables operating at the state level may also impact loan default rates. Further investigation is needed to ascertain this.

I was interested in visualizing how repayment rate trended with FICO scores in our dataset. As expected, FICO scores exhibit a strong positive correlation with repayment rate, with a logarithmic flattening at the tail end of the curve as the repayment rate essentially plateaus for FICO scores above 700. On the other hand, interest rates display an expected strong negative correlation with FICO scores, with added negative convexity.

LendingClub Data Exploration - Pt. 3

These two observations taken together offer a potentially interesting insight: applicants with FICO scores in the 700-750 range have a repayment risk profile similar to that of applicants in the 800-850 range while being charged 3% higher interest rates on average. An investor may therefore be able to unlock added alpha by funding more loans to applicants in this 700-750 FICO score subgroup.

I was also interested in looking at default rates by employment length, as my original hypothesis was that applicants with less work experience would exhibit higher default rates on average. As shown in these interactive bar graphs in the Dash app, this is not the case across almost all states, as applicants with <5 years of work experience (bars colored in green) actually display higher loan repayment rates on average than applicants with >5 years of experience. Work experience may therefore not be as predictive a variable as originally thought, though additional investigation would be needed to confirm this.

LendingClub Data Preprocessing

The first preprocessing step was to drop the 42 variables with >50% missing data. Variables that would introduce data leakage into our models, such as collection_recovery_fee, which captures the collection recovery fee earned by LendingClub once a loan has been charged off, were dropped as well. Next, variables that contained only one unique value, such as policy_code, were dropped, as were features like zip_code, which could potentially cause our models to overfit noise.
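The column-dropping rules above can be sketched in pandas as follows. This is a minimal illustration on a toy frame, not the project's actual code: the helper name drop_uninformative, the mostly_missing column and the toy values are assumptions, while the 50% threshold and the policy_code, collection_recovery_fee and zip_code examples come from the text.

```python
import numpy as np
import pandas as pd

def drop_uninformative(df, max_missing=0.5, leakage_cols=(), noisy_cols=()):
    """Drop sparse, constant, leakage-prone and noise-prone columns."""
    missing_share = df.isna().mean()
    too_sparse = set(missing_share[missing_share > max_missing].index)
    constant = {c for c in df.columns if df[c].nunique(dropna=True) <= 1}
    to_drop = too_sparse | constant | set(leakage_cols) | set(noisy_cols)
    return df.drop(columns=[c for c in df.columns if c in to_drop])

# Toy frame (hypothetical values) with one column of each problem type.
toy = pd.DataFrame({
    "loan_amnt": [1000, 2000, 3000, 4000],
    "policy_code": [1, 1, 1, 1],                     # single unique value
    "mostly_missing": [np.nan, np.nan, np.nan, 1.0],  # 75% missing
    "collection_recovery_fee": [0.0, 12.5, 0.0, 0.0],  # known only after charge-off
    "zip_code": ["100xx", "945xx", "606xx", "331xx"],  # high-cardinality noise
})
cleaned = drop_uninformative(
    toy,
    leakage_cols=["collection_recovery_fee"],
    noisy_cols=["zip_code"],
)
```

Leakage and noise columns are passed in explicitly because, unlike sparsity or constancy, they cannot be detected from the data alone and have to be identified from the data dictionary.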

Based on a careful read of LendingClub’s provided data dictionary, certain other numeric features were imputed using the mean, such as annual_inc (variable capturing self-reported annual income provided by the borrower during registration), bc_util (variable capturing ratio of total current balance to high credit/credit limit for all bankcard accounts), or delinq_2yrs (variable capturing number of 30+ days past-due incidences of delinquency in the borrower's credit file for the past 2 years), while others were imputed to zero depending on what NaN values were interpreted as most likely representing.

Similarly, missing values in certain other numeric and categorical variables were imputed with the most frequent value or class for those variables, as appropriate. After preprocessing, our dataset contained 94 features vs the 149 we started with.
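The three imputation strategies described above (mean, zero, and most frequent value) can be sketched with a small pandas helper. The helper name impute and the columns some_count and some_category are hypothetical placeholders, since the text only names the mean-imputed columns; in a real pipeline the fill values would normally be computed on the training split only.

```python
import numpy as np
import pandas as pd

def impute(df, mean_cols=(), zero_cols=(), mode_cols=()):
    """Fill NaNs per column using the strategy assigned to that column."""
    out = df.copy()
    for col in mean_cols:
        out[col] = out[col].fillna(out[col].mean())
    for col in zero_cols:
        out[col] = out[col].fillna(0)
    for col in mode_cols:
        out[col] = out[col].fillna(out[col].mode(dropna=True).iloc[0])
    return out

# Toy frame: annual_inc is named in the text, the other two are hypothetical.
toy = pd.DataFrame({
    "annual_inc": [50_000.0, np.nan, 70_000.0],
    "some_count": [1.0, np.nan, 3.0],
    "some_category": ["RENT", None, "RENT"],
})
filled = impute(
    toy,
    mean_cols=["annual_inc"],
    zero_cols=["some_count"],
    mode_cols=["some_category"],
)
```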

Independent variables were scaled only in cases where the models being trained on this data required feature normalization in order to ensure better convergence. Feature normalization was therefore used to scale the training data used in our multilayer perceptron, while our linear and tree-ensembling models were trained using unscaled data. As the y target variable was already in the set {0, 1} once our qualitative loan default descriptions were mapped to 0’s (bad loan) and 1’s (good loan), feature normalization was not required for our target variable.
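A minimal sketch of scaling features for the neural network only is shown below. It assumes scikit-learn's StandardScaler, which is one common choice; the text does not say which scaler was used. The key point is that the scaler is fitted on the training split and then reused unchanged on the test split, and the synthetic income and FICO columns are illustrative only.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Synthetic features on very different scales (income-like and FICO-like).
rng = np.random.default_rng(0)
X_train = rng.normal(loc=[50_000, 700], scale=[20_000, 40], size=(200, 2))
X_test = rng.normal(loc=[50_000, 700], scale=[20_000, 40], size=(50, 2))

# Fit on the training data only, then apply the same transform to the test data.
scaler = StandardScaler().fit(X_train)
X_train_scaled = scaler.transform(X_train)
X_test_scaled = scaler.transform(X_test)
```

The linear and tree-based models would simply be trained on X_train and X_test as they are.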

Feature Engineering

In order to calculate Internal Rate of Return (IRR) values for each of our loans, a variable cashflows was created to model the cash flow schedule of each loan based on its funded amount, term, funded date, interest rate, and the default probability output by our CatBoost Classifier model for that loan. Based on the cashflows variable, another variable, xirr, was created by expressing the net present value of this series of cash flows as a function of the discount rate and solving for the rate at which it equals zero (the IRR) using SciPy's optimize.brentq() root-finding function. These IRR values will later be used to calculate the final IRR of our selected portfolio subset of loans based on our machine learning predictions.
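The IRR calculation can be sketched as a root-finding problem with scipy.optimize.brentq. The version below is a simplified illustration under stated assumptions: cash flows are evenly spaced monthly (rather than dated, as the xirr name suggests), the installment follows the standard annuity formula, and the default probability enters by scaling every installment by (1 - p_default). The function names are hypothetical, and the project's exact cash flow model may differ.

```python
from scipy.optimize import brentq

def monthly_installment(principal, annual_rate, n_months):
    """Standard annuity payment for a fully amortizing loan."""
    r = annual_rate / 12
    return principal * r / (1 - (1 + r) ** -n_months)

def expected_cashflows(funded_amnt, annual_rate, n_months, p_default):
    """Outflow at t=0, then installments scaled by survival probability (assumption)."""
    payment = monthly_installment(funded_amnt, annual_rate, n_months)
    return [-funded_amnt] + [payment * (1 - p_default)] * n_months

def npv(monthly_rate, cashflows):
    """Net present value of monthly cash flows at a given monthly rate."""
    return sum(cf / (1 + monthly_rate) ** t for t, cf in enumerate(cashflows))

def annualized_irr(cashflows):
    """Find the monthly rate where NPV = 0, then compound it to an annual rate."""
    monthly = brentq(npv, -0.99, 1.0, args=(cashflows,))
    return (1 + monthly) ** 12 - 1

irr_safe = annualized_irr(expected_cashflows(10_000, 0.12, 36, p_default=0.0))
irr_risky = annualized_irr(expected_cashflows(10_000, 0.12, 36, p_default=0.10))
```

With no default risk, the recovered monthly rate equals the loan's own monthly rate (1% here), which is a useful sanity check before applying the function to model-adjusted cash flows.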

Similarly, in order to later optimize our loan prediction cutoff values, a variable False_Negative_Cost was created to quantify the opportunity cost of predicting loan x as bad when it was in fact a good loan. This variable would later be used to further compare our different models as well.

Lastly, as date variables in the LendingClub dataset were passed in string format, different date variables were created by transforming these variables to datetime format and extracting specific date attributes such as loan issuance month and year, which were passed as standalone features in the final dataset used to train our models.
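As a short sketch of this date handling, the snippet below parses a string date column and extracts month and year features. The column name issue_d and the "Mon-YYYY" string format are assumptions about how the raw data stores issuance dates, and the output column names are illustrative.

```python
import pandas as pd

# Toy data: issuance dates stored as strings (assumed "Mon-YYYY" format).
toy = pd.DataFrame({"issue_d": ["Dec-2015", "Jan-2018", "Mar-2017"]})

# Convert to datetime, then expose month and year as standalone features.
issue_dt = pd.to_datetime(toy["issue_d"], format="%b-%Y")
toy["issue_month"] = issue_dt.dt.month
toy["issue_year"] = issue_dt.dt.year
```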

Feature Selection

To avoid multicollinearity in the data, both numeric and categorical variables exhibiting pairwise correlations above 0.85 were dropped from the dataset. For categorical variables, this resulted in sub_grade, which had a 1.00 correlation value with grade, being dropped.

Similarly, for numeric variables, this method flagged (funded_amnt, installment), (fico_range_low, fico_range_high), (open_acc, num_sats), among others, as highly correlated pairs, of which the first variable of each pair was dropped.
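For the numeric case, the pairwise filter can be sketched as below: compute the absolute correlation matrix, list the pairs above the 0.85 threshold, and drop the first variable of each pair. The helper names and the synthetic data are assumptions; the column names and threshold come from the text.

```python
import numpy as np
import pandas as pd

def correlated_pairs(df, threshold=0.85):
    """Return (first, second) column pairs whose |correlation| exceeds threshold."""
    corr = df.select_dtypes("number").corr().abs()
    cols = list(corr.columns)
    return [
        (cols[i], cols[j])
        for i in range(len(cols))
        for j in range(i + 1, len(cols))
        if corr.iloc[i, j] > threshold
    ]

def drop_first_of_each_pair(df, threshold=0.85):
    """Drop the first variable of every highly correlated pair."""
    to_drop = {first for first, _ in correlated_pairs(df, threshold)}
    return df.drop(columns=sorted(to_drop))

# Synthetic example: the two FICO bounds move together, dti is independent.
rng = np.random.default_rng(0)
fico_low = rng.integers(660, 850, size=200)
toy = pd.DataFrame({
    "fico_range_low": fico_low,
    "fico_range_high": fico_low + 4,
    "dti": rng.uniform(0, 40, size=200),
})
pairs = correlated_pairs(toy)
reduced = drop_first_of_each_pair(toy)
```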

For linear models, Recursive Feature Elimination (“RFE”) was employed to select the most important half of features in the dataset. The precision, recall and accuracy scores of these RFE-selected models were then compared to those of the linear models with no feature selection. Additional features were added / dropped from that point as appropriate. Conversely, RFE was not employed for tree-ensembling models that implicitly perform feature selection when outputting predictions.
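A minimal sketch of RFE with a linear model, using scikit-learn's RFE wrapper around LogisticRegression on synthetic data, is shown below. The dataset, the choice of estimator and the hyperparameters are illustrative assumptions; only the idea of keeping half of the features comes from the text.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression

# Synthetic stand-in for the loan features (10 columns, 4 informative).
X, y = make_classification(
    n_samples=500, n_features=10, n_informative=4, random_state=0
)

# Recursively drop the weakest feature until half of them remain.
selector = RFE(
    LogisticRegression(max_iter=1000),
    n_features_to_select=X.shape[1] // 2,
)
selector.fit(X, y)
X_selected = selector.transform(X)
kept_mask = selector.support_  # boolean mask of retained features
```

The retained subset can then be scored against the full-feature model on precision, recall and accuracy, as described above.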

Machine Learning Results on LendingClub - Pt. 1

The eight models I was interested in testing for this problem were Logistic Regression, Linear Discriminant Analysis, Quadratic Discriminant Analysis, Multinomial Naïve Bayes, Gaussian Naïve Bayes, Gradient Boosting Classifier, Random Forest Classifier, and CatBoost Classifier.

I also built a Multilayer Perceptron (MLP) neural network with eight Dense layers and five dropout layers, trained on AWS EC2, in order to investigate how a neural net would perform on this tabular data with a large number of features.

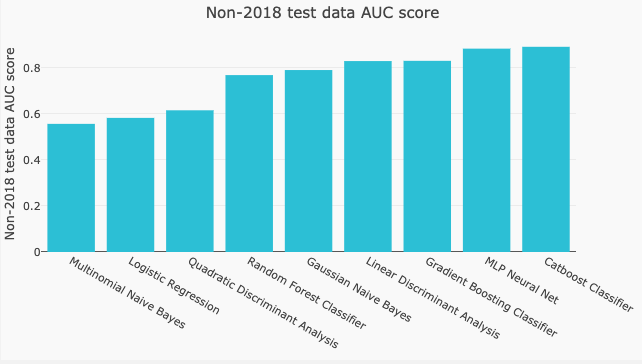

ROC_AUC score was used to measure the performance of each model, as ROC_AUC offers a holistic view of the impact of both false negatives and false positives in the data. With good loans as the positive class (1), this gives a sense for how imperfect sensitivity and specificity would later translate into lost interest income and loan principal loss respectively.

ROC_AUC scores were computed on predictions for both the non-2018 test data and the 2018 test data in order to see if any of our models were overfitting the non-2018 training data. The majority of models reported ROC_AUC scores 1-6 percentage points higher on the non-2018 test data than on the 2018 test data, which is likely the result of slight overfitting that should nonetheless not be a cause for concern given the relatively small AUC score deltas.
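The two-test-set comparison can be sketched as follows with scikit-learn. The data here is synthetic and the split into a "pre-2018" block and a "2018" block is purely positional, so the resulting gap says nothing about the real dataset; the variable names and the logistic regression model are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

# Synthetic imbalanced data: roughly 13% class 0 (default), 87% class 1.
X, y = make_classification(
    n_samples=3000, n_features=12, n_informative=5,
    weights=[0.13, 0.87], random_state=0,
)
X_pre, y_pre = X[:2400], y[:2400]      # stand-in for pre-2018 loans
X_2018, y_2018 = X[2400:], y[2400:]    # stand-in for 2018 loans

# Hold out part of the pre-2018 data as the in-period test set.
X_train, X_test, y_train, y_test = train_test_split(
    X_pre, y_pre, test_size=0.25, stratify=y_pre, random_state=0
)
model = LogisticRegression(max_iter=1000).fit(X_train, y_train)

auc_pre = roc_auc_score(y_test, model.predict_proba(X_test)[:, 1])
auc_2018 = roc_auc_score(y_2018, model.predict_proba(X_2018)[:, 1])
auc_gap = auc_pre - auc_2018  # positive gap hints at in-period overfitting
```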

Machine Learning Results on LendingClub - Pt. 2

Notably, however, our Quadratic Discriminant Analysis model scores 2.5 percentage points higher in ROC_AUC on the 2018 data than on the non-2018 data, which may indicate slight underfitting, while our Linear Discriminant Analysis and Random Forest Classifier models exhibit overfitting behavior, scoring more than 7 percentage points higher on the non-2018 data than on the 2018 data.

As can be seen from the above interactive table built in Dash, the models with the highest ROC_AUC scores are the CatBoost Classifier and the MLP Neural Net. One surprising result was our Random Forest Classifier not reaching the 0.80 ROC_AUC benchmark achieved by the other tree-based models, namely the Gradient Boosting Classifier and the CatBoost Classifier.

These ROC_AUC scores by model are visualized in the below bar graphs for both the non-2018 test data and 2018 test data. Of note, the Dash app also allows the user to interactively sort the models by ROC_AUC score by clicking the arrows at the top of each column in the datatable, which automatically triggers a Dash callback that reactively updates the bar graphs below. The user can also select different models in the datatable, which will also reactively highlight in a different color the corresponding columns in the bar graphs.

Tuning Cutoff Threshold in CatBoost Classifier

In order to optimize the prediction cutoff values in our CatBoost Classifier model, I created a scatter plot of a new variable cf_diff capturing the cost of both types of mis-classifications for different prediction cutoff values. If a loan was classified by our model as good when it was in fact bad, the principal loss of that loan would be added to cf_diff. Conversely, if a loan was classified as bad when it was in fact good, the sum of the interest payments we missed out on times the default probability of that loan would be added to cf_diff.

Summing cf_diff for different prediction cutoff values produced the following graph, showing that a cutoff value of 0.73737 minimized cf_diff. This high cutoff value makes intuitive sense as the principal loss of defaulted loans (=0) that were predicted as good (=1) should be expected to outweigh the lost interest income of non-defaulted loans (=1) that were predicted as bad (=0) in the aggregate. The user can hover over each point in this graph to view the total dollar cost for different prediction cutoff points.
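The cutoff search described above can be sketched as a simple grid scan. The cost rule follows the text (principal lost on bad loans predicted as good, plus missed interest times default probability on good loans predicted as bad), but the data is synthetic and the function and variable names are hypothetical. A 100-point grid on [0, 1] is assumed; such a grid contains 73/99 ≈ 0.73737, which would be consistent with the reported optimum.

```python
import numpy as np

def total_cost(p_good, is_good, principal, interest, cutoff):
    """Summed misclassification cost (cf_diff) at one prediction cutoff."""
    pred_good = p_good >= cutoff
    false_pos = pred_good & ~is_good   # funded, but the loan defaulted
    false_neg = ~pred_good & is_good   # skipped, but the loan repaid
    p_default = 1 - p_good
    principal_loss = principal[false_pos].sum()
    missed_interest = (interest[false_neg] * p_default[false_neg]).sum()
    return principal_loss + missed_interest

# Synthetic loans: good loans tend to receive higher predicted probabilities.
rng = np.random.default_rng(0)
n = 2000
is_good = rng.random(n) < 0.87
p_good = np.clip(
    np.where(is_good, rng.normal(0.85, 0.10, n), rng.normal(0.65, 0.15, n)),
    0, 1,
)
principal = rng.uniform(1_000, 35_000, n)
interest = principal * rng.uniform(0.05, 0.30, n)

# Scan the grid and keep the cutoff with the lowest total cost.
cutoffs = np.linspace(0, 1, 100)
costs = np.array(
    [total_cost(p_good, is_good, principal, interest, c) for c in cutoffs]
)
best_cutoff = cutoffs[costs.argmin()]
```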

Portfolio Results on LendingClub

The predictions from our CatBoost Classifier were used to allocate capital to loans predicted as ‘good’ and to deny investment for loans predicted as ‘bad’. This optimized portfolio produces a net realized IRR of 7.40% for 36-month loans and 10.63% for 60-month loans, assuming 0% loan recoveries in the case of default, versus LendingClub's self-reported 2018 rates of return of 6.30% for 36-month loans and 8.11% for 60-month loans, which additionally include actual loan recoveries post-default.

These results also compare favorably to a baseline model predicting all loans as ‘good’ loans, which yields a 5.89% IRR for 36-month loans and a 9.67% IRR for 60-month loans. A two-sample t-test of our model portfolio against the baseline portfolio further shows these results are statistically significant at the 1% level, and that our model produces 1.51 and 0.96 percentage points of alpha for 36-month and 60-month loans respectively versus the baseline model portfolio.
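A two-sample t-test of this kind can be sketched with scipy.stats.ttest_ind on per-loan IRRs. The arrays below are synthetic draws whose means loosely echo the 36-month figures, so the resulting statistics are illustrative only; using Welch's unequal-variance variant (equal_var=False) is also an assumption, as the text does not say which variant was run.

```python
import numpy as np
from scipy import stats

# Synthetic per-loan IRRs (not the project's data).
rng = np.random.default_rng(0)
baseline_irr = rng.normal(0.059, 0.15, 20_000)  # fund every loan
model_irr = rng.normal(0.074, 0.12, 16_000)     # fund only predicted-good loans

# Welch's t-test: does the model portfolio's mean IRR differ from the baseline's?
t_stat, p_value = stats.ttest_ind(model_irr, baseline_irr, equal_var=False)
alpha = model_irr.mean() - baseline_irr.mean()
significant_at_1pct = p_value < 0.01
```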

Real-time Machine Learning Predictions on LendingClub Data using Flask

In order to output real-time loan default predictions for each of the models, I created a Flask app that allows the user to select (i) a model of interest and (ii) a loan applicant subset of the data in order to output real-time default or no default predictions for that applicant. Pickle (.pkl) files of our data and each of our models are fed into the Flask back end to reactively output predictions for loan applicant subsets of our data.
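The request flow can be sketched as a single Flask endpoint that looks up a model and an applicant and returns a prediction. Everything named here is hypothetical: the /predict route, the JSON field names, and the ThresholdModel stand-in, which replaces the pickled models so the example stays self-contained. In the real app the registry would be filled by loading the .pkl files.

```python
from flask import Flask, jsonify, request

class ThresholdModel:
    """Hypothetical stand-in for an unpickled classifier (1 = good, 0 = bad)."""
    def __init__(self, min_fico):
        self.min_fico = min_fico

    def predict(self, rows):
        return [int(row["fico"] >= self.min_fico) for row in rows]

# In the real app these would come from pickle.load() on the saved files.
MODELS = {"strict": ThresholdModel(720), "lenient": ThresholdModel(660)}
APPLICANTS = {"a1": {"fico": 700}, "a2": {"fico": 640}}

app = Flask(__name__)

@app.route("/predict", methods=["POST"])
def predict():
    payload = request.get_json(force=True)
    model = MODELS.get(payload.get("model"))
    applicant = APPLICANTS.get(payload.get("applicant_id"))
    if model is None or applicant is None:
        return jsonify(error="unknown model or applicant"), 404
    label = model.predict([applicant])[0]
    return jsonify(prediction="no default" if label == 1 else "default")
```

Loading the models once at startup, rather than inside the request handler, keeps each prediction request fast.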

Furthermore, the ‘Model Description’ section reactively provides a description of the model once the user has made their model selection, while the ‘Applicant Overview’ section provides descriptive statistics on the selected applicant in order to contextualize each real-time machine learning prediction.

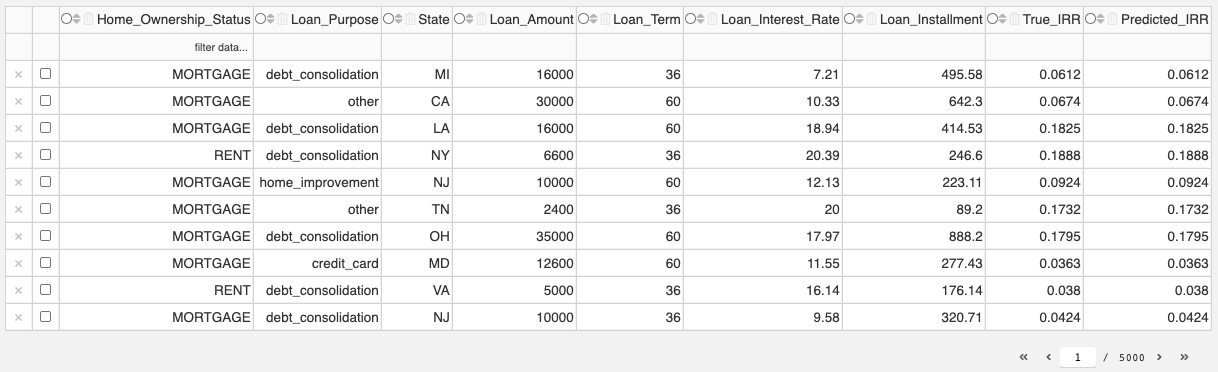

Portfolio Optimization & Customization

The below interactive datatable allows the user to optimize a portfolio as measured by IRR by specifying certain portfolio characteristics, such as exposure to a certain state, to a certain loan purpose class, or a certain loan applicant homeownership class. The datatable automatically sorts these loans by our model's predicted IRR to return the optimal loan subset based on the specified Home_Ownership_Status, Loan_Purpose and State parameters. This allows the user to quickly return a loan portfolio tailored to their investment criteria.
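The filter-then-rank logic behind such a table can be sketched in pandas as below. The filter column names follow the parameters mentioned above, while the predicted_irr column, the top_loans helper and the toy rows are hypothetical.

```python
import pandas as pd

def top_loans(loans, n=10, **filters):
    """Keep loans matching every filter, ranked by predicted IRR (descending)."""
    subset = loans
    for column, value in filters.items():
        subset = subset[subset[column] == value]
    return subset.sort_values("predicted_irr", ascending=False).head(n)

# Toy loan table (hypothetical values).
loans = pd.DataFrame({
    "State": ["NY", "NY", "CA", "NY"],
    "Loan_Purpose": ["car", "car", "car", "house"],
    "Home_Ownership_Status": ["RENT", "OWN", "RENT", "RENT"],
    "predicted_irr": [0.05, 0.09, 0.12, 0.07],
})
ny_car_loans = top_loans(loans, n=2, State="NY", Loan_Purpose="car")
```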

Thanks for Reading!

If you enjoyed this Dash app or have any questions about the methodology, please feel free to contact me at phil062497@gmail.com.