Data Building: Portfolio Recommendation System

The skills I demoed here can be learned through taking Data Science with Machine Learning bootcamp with NYC Data Science Academy.

Brief Introduction of Lending Club

Lending Club is a peer-to-peer lending platform where borrowers request loans and investors choose which loans to invest in. Lending Club is the intermediary that processes and evaluates the loans and sets the interest rate for each of them.

The Data Set

The data set consists of over 2 million observations and 150 features, which is enough to paralyze my 16GB RAM laptop. The data set can be found on kaggle.com. To work around its size, we tackled the data set in a couple of different ways. We initially stratified and sampled from the pooled data to establish a baseline reference. Eventually we moved to a time series model that mimicked online training.

The Goal

Admittedly, I had not heard of Lending Club before. Yes, say what you want. I have been living in a manhole for a long time. I knew nothing about finance, let alone why anyone would ask for money that has to be paid back at an interest rate of 40%. One borrower was asking for $5,000 for a vacation at an interest rate of almost 20%, and another had an annual income of well over one million dollars but was asking for a loan of $2,000... Anyway, the goal here is not to unravel Lending Club.

I will not analyze in depth the features of the Lending Club loan data set (though a big portion of our time went into understanding the composition of many features). The features in the data set are numerous and complicated, and many of them are intertwined with one another in a highly complex manner. A very deep understanding of each feature is required to wrap one's head around the data set.

Without the finance domain expertise and given the limited time window we had (2 weeks), there was no way we could have analyzed the data set in a way that it deserved to be analyzed. Instead, what I hope to demonstrate here is how my teammates (Ashish, Brian, Gabriel, Tomas) and I tackled this data set in a progressively more and more sophisticated manner.

The ultimate goal was to build a portfolio recommendation application that takes the user's risk appetite into account. Naturally, our final target variable would be the total return for each loan. To get there, however, we had to predict separately the outcome of the loan (default vs non-default) and the return rate (or loan duration) for each outcome. This meant that our work split into a classification problem and a regression problem.

Trimming the Data Set

The very quick and lazy method would've been to randomly sample the data set. We did this and quickly performed a logistic regression to predict the target variable (of whether the loan would default or not). We got an instant 80+% accuracy. However, we knew beforehand that the result was not reliable.

An accuracy of about 80% is the floor for this data set, not an achievement. Non-default loans (our target class) make up on average about 80% of all the loans, so a model that always predicts "non-default" already reaches that score without learning anything. Perhaps accuracy was not the right metric. The point here though is that we had to think through carefully what exactly it means for a model to be good.
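To make the baseline argument concrete, here is a minimal sketch in plain Python. The 80/20 label split is a made-up toy sample that mirrors the proportion quoted above, not our actual data.

```python
from collections import Counter


def majority_baseline_accuracy(labels):
    """Accuracy of a 'model' that always predicts the most frequent class."""
    counts = Counter(labels)
    return max(counts.values()) / len(labels)


# Hypothetical toy sample: 80 non-default loans (0) and 20 defaults (1).
labels = [0] * 80 + [1] * 20
baseline = majority_baseline_accuracy(labels)
print(f"majority-class baseline accuracy: {baseline:.2f}")
```

Any classifier has to beat this number before its accuracy means anything.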

Making the data realistic

We removed all information that was not available on or before the loan issuance date and kept only loans that had run to completion. This roughly halved the entire data set. For details on what features were removed and why, the reader can refer to the notes in my GitHub.

Dealing with missingness

We removed features with over 95% missingness. After that, there still remained many features with significant amount of missingness. Note that 1% of missingness means tens of thousands of missing entries. This number is significant. We saw that a big part of missingness comes from the fact that many features were simply not recorded in the earlier years. Take a look below:

The top plot shows many missing features, those that were not part of the record in the early years (shown is data for 2007). The bottom plot shows that all features had recorded information (shown data is for 2018). The newer features were included gradually starting around 2012 where more and more observations began to fill up the features.

Recorded features in 2007 and 2018 differed significantly. To simplify the matter at hand, we chose to impute with -999. This was a justifiable choice because all entries had a positive value, so -999 cannot be confused with a real entry, and because our main model for development was going to be random forest, which can split such a sentinel value off into its own branch. Note that models such as logistic regression would not work with -999 imputation because the imputed values would skew the fitted regression line.
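The two missingness steps (drop features that are more than 95% empty, then fill what remains with -999) can be sketched as follows. This is a dependency-free illustration: the column names and toy values are hypothetical, and in practice the same logic would run on a data frame.

```python
SENTINEL = -999  # fill value from the text; safe only because real entries are positive


def drop_sparse_and_impute(columns, max_missing=0.95, fill=SENTINEL):
    """columns maps feature name -> list of values, with None marking a missing entry."""
    cleaned = {}
    for name, values in columns.items():
        missing_share = sum(v is None for v in values) / len(values)
        if missing_share > max_missing:
            continue  # feature is almost entirely empty: drop it
        cleaned[name] = [fill if v is None else v for v in values]
    return cleaned


# Hypothetical toy columns, for illustration only.
toy = {
    "annual_inc": [50000, None, 72000, 61000],
    "rarely_recorded": [None, None, None, None],
}
cleaned = drop_sparse_and_impute(toy)
print(cleaned)
```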

Outlier Removal

We removed outliers that were over five standard deviations away from the mean.
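A minimal sketch of the five-sigma rule for a single numeric feature, assuming the filter is applied one feature at a time (the text does not spell this out). The income values are invented.

```python
from statistics import mean, pstdev


def within_k_sigma(values, k=5.0):
    """Keep only the values that lie within k standard deviations of the mean."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return list(values)  # constant feature: nothing to remove
    return [v for v in values if abs(v - mu) <= k * sigma]


# Hypothetical incomes: 100 ordinary values plus one extreme entry.
incomes = [50_000 + 100 * i for i in range(100)] + [10_000_000]
kept = within_k_sigma(incomes)
print(len(incomes), "->", len(kept))
```

Note that the extreme entry itself inflates the mean and the standard deviation, so a rule like this only catches values that are far out relative to the sample size.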

Stratified Sampling

To maintain the distribution of loans over time as well as across grades, we stratified the data set by month and by grade and sampled randomly within each stratum. We then pooled all the samples together.
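The stratified sampling step can be sketched like this. The field names `issue_month` and `grade`, the sampling fraction, and the toy loans are assumptions for illustration.

```python
import random
from collections import defaultdict


def stratified_sample(loans, frac, seed=0):
    """Draw the same fraction from every (issue month, grade) stratum, then pool."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for loan in loans:
        strata[(loan["issue_month"], loan["grade"])].append(loan)
    sample = []
    for members in strata.values():
        k = max(1, round(frac * len(members)))  # keep at least one loan per stratum
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical toy data: 2 months x 2 grades x 10 loans each.
loans = [
    {"issue_month": month, "grade": grade, "id": f"{month}-{grade}-{i}"}
    for month in ("2015-01", "2015-02")
    for grade in ("A", "B")
    for i in range(10)
]
sample = stratified_sample(loans, frac=0.3)
print(len(sample))
```

Because every stratum contributes in proportion to its size, the pooled sample keeps the month-by-grade mix of the full data set.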

Down Sampling

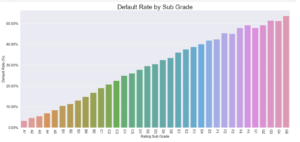

The default rate for each subgrade. It ranges from about 2% for high grade loans to above 50% for low grade loans.

Classifiers do not work well if there is huge class imbalance, such as the case for some of the grades (high grade loans in particular). Seeing that the data set is so big, down sampling to treat the imbalance was the logical move. We made it such that default loans and non-default loans were split exactly 50-50 across all grades. We do note that although down-sampling does not affect the classification labeling (i.e. the label prediction), the predicted probability must be adjusted (see ref for equation).[1]
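Here is a sketch of the down-sampling step together with one common probability correction. The correction formula below is the standard prior-shift adjustment for under-sampling the negative class; I am assuming it matches the equation in the reference, which is not reproduced here. The sketch also assumes defaults are the minority class; for grades where defaults are the majority the roles would swap.

```python
import random


def downsample_non_defaults(labels, seed=0):
    """Return indices of a 50-50 sample and beta, the share of non-defaults kept."""
    rng = random.Random(seed)
    defaults = [i for i, y in enumerate(labels) if y == 1]
    non_defaults = [i for i, y in enumerate(labels) if y == 0]
    if not defaults or len(defaults) > len(non_defaults):
        raise ValueError("sketch assumes defaults are the non-empty minority class")
    kept = rng.sample(non_defaults, len(defaults))
    beta = len(defaults) / len(non_defaults)
    return sorted(defaults + kept), beta


def correct_probability(p_sampled, beta):
    """Map a default probability learned on the balanced sample back to the original mix."""
    return beta * p_sampled / (beta * p_sampled - p_sampled + 1)


# Hypothetical grade with a 20% default rate.
labels = [1] * 20 + [0] * 80
indices, beta = downsample_non_defaults(labels)
print(len(indices), beta, correct_probability(0.5, beta))
```

A prediction of 0.5 on the balanced sample maps back to the original default rate of the grade, which is the behavior one would expect from an uninformative model.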

The Basic Pipeline

The data set is fed into two models simultaneously. One is the classifier (on the left), the other is the regressor (on the right). Random forest was chosen for both types of modeling. The predicted target variables are then combined together to generate the final target variable: total return.

The pipeline consists of two models. We first needed to classify the loans into ones that will default and ones that will not (yielding a probability of default, PD). With the classification, the loans are separated into their respective groups and a regression is performed for each. The regression estimates the return rate (or the loan duration, since the two are directly related) for each type of loan (rD for default, rND for non-default). These target variables are combined through:

Total Return = PD · rD + (1-PD)· rND

Note that the return rate (rD and rND) of each type of loan is directly tied to the loan duration (we have all the information for a given loan to link the two). Predicting the return rate is therefore equivalent to predicting the loan duration. Because we suspected that the problem was non-linear, we stuck with random forest as our model for development.
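The way the three predictions are combined can be sketched as below. The lambdas are stand-ins for fitted models (in our case random forests); their outputs and the loan fields are invented for illustration.

```python
def total_return(p_default, r_default, r_non_default):
    """Total Return = PD * rD + (1 - PD) * rND for a single loan."""
    return p_default * r_default + (1.0 - p_default) * r_non_default


def score_loans(loans, classifier, reg_default, reg_non_default):
    """Apply a classifier and two regressors (any callables) and combine their outputs."""
    return [
        total_return(classifier(x), reg_default(x), reg_non_default(x))
        for x in loans
    ]


# Hypothetical stand-in models, not trained on anything.
classifier = lambda loan: 0.5 if loan["grade"] == "G" else 0.05
reg_default = lambda loan: -0.8
reg_non_default = lambda loan: loan["int_rate"]

loans = [{"grade": "A", "int_rate": 0.07}, {"grade": "G", "int_rate": 0.28}]
returns = score_loans(loans, classifier, reg_default, reg_non_default)
print(returns)
```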

We believe that this methodology was sound, at least from a mathematical perspective. When we got our predicted results back, however, we panicked. The total returns for all loans were negative and our accuracy (for classifying the loans) was at around 50%, which on the balanced, down-sampled data is no better than a coin flip.

We double triple quadruple checked all our steps. Everything made sense. The only culprit would be the data set but we doubted this. Our EDA showed somewhat distinguishable patterns. But of course, we were always just looking from a collapsed point of view, examining one variable at a time.

We quickly realized and accepted the insufficiency of blindly applying machine learning models. Even with a high complexity model such as the random forest, the model is unable to maneuver through the cracks. Human intervention is clearly needed.

Our Time Series Model

The unpredictability of any financial object is, speaking from my personal opinion, always associated with time. To make things predictable for a computer we needed to simplify the behavior: allow the machine to learn locally in time first where behaviors are generally much more predictable, have it remember these past observations, make the rigid machine become an adaptive one, and make it become smarter over time.

The Time Window

Our time series model begins by first determining the window size of the time increment. We do this by putting data sets of different window sizes through our basic pipeline (mentioned above) and checking their error scores. Not to our surprise, we found that as we shrunk the time window size, the error scores improved drastically (from ~50% to ~80% for classification accuracy for the down sampled data set, though the regression R-squared only improved from <0 to ~0.2).

Though the best error is not consistent across all time frames, on average the best performing time window size was 3 months, which makes sense due to the quarterly behavior of the economy. We do note that 3 months did not always contain all representations of all types of loans.
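Cutting the data into non-overlapping 3-month windows by issuance date can be sketched as follows. The `"YYYY-MM"` string format and the field name `issue_month` are assumptions for this illustration.

```python
from collections import defaultdict


def window_index(issue_month, start_month, window_months=3):
    """Index of the non-overlapping window that a 'YYYY-MM' issue month falls into."""
    year, month = map(int, issue_month.split("-"))
    start_year, start_mon = map(int, start_month.split("-"))
    months_since_start = (year - start_year) * 12 + (month - start_mon)
    return months_since_start // window_months


def split_into_windows(loans, start_month, window_months=3):
    """Group loans by window and return the non-empty windows in chronological order."""
    windows = defaultdict(list)
    for loan in loans:
        idx = window_index(loan["issue_month"], start_month, window_months)
        windows[idx].append(loan)
    return [windows[k] for k in sorted(windows)]


# Hypothetical loans, one per month for half a year.
loans = [{"issue_month": f"2015-{m:02d}"} for m in range(1, 7)]
windows = split_into_windows(loans, start_month="2015-01")
print([len(w) for w in windows])
```

Changing `window_months` is all it takes to compare different window sizes against each other.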

Each green box indicates a month worth of data. Three months make up the window size of a time increment. We train on each time increment individually and the models are combined by giving each a weight. We assign heavier weights to newer samples.

With the time window chosen, we proceed with the implementation of our time series model. We split the entire data set into non-overlapping sets based on the issuance date of the loans (indicated by an individual box in the schematic above). We then model the first time frame with the basic pipeline and save the best parameters for the models in a pickle file. Next we model the second time frame with the basic pipeline and perform a weighted average of the two models, where a heavier weight is given to the more recent model.

This composes the ensemble of two models. A third data set for the third time frame is then introduced. We train a model on this third data set and with the resulting model, we perform a weighted average with the previous ensemble of two models where again we give the more recent model a heavier weight. Mathematically, the approach translates to the following:

$$f = \sum_{i=1}^{N} w_{N-i} \cdot (f_i - f_{i+1}),$$

where $f$ is the most recent model, $f_i$ is the model at time frame $i$, $w$ is the weight coefficient for the different models, and $N$ is the number of time frames incorporated to date.
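The recursive weighted averaging described in the prose can be sketched as below. This follows the verbal description (blend the newest model with the running ensemble, favoring the newest), not necessarily the exact formula above, and the weight of 0.6 is an arbitrary illustrative value. For simplicity the sketch averages predictions rather than model parameters.

```python
def blend_predictions(window_predictions, recent_weight=0.6):
    """Fold per-window model predictions into one ensemble, favoring recent windows.

    window_predictions[t][j] is the prediction of the model trained on
    window t for loan j; windows are ordered from oldest to newest.
    """
    if not 0.5 < recent_weight <= 1.0:
        raise ValueError("recent_weight must favor the newer model")
    ensemble = list(window_predictions[0])
    for newer in window_predictions[1:]:
        ensemble = [
            recent_weight * new + (1.0 - recent_weight) * old
            for old, new in zip(ensemble, newer)
        ]
    return ensemble


# Hypothetical default probabilities from three window models for two loans.
predictions = [[0.2, 0.8], [0.4, 0.6], [0.6, 0.4]]
blended = blend_predictions(predictions)
print(blended)
```

With this scheme the influence of a window decays geometrically as newer windows are folded in.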

Incorporating this type of model meant that the list of most important features could change between time frames. As we progressed along time and gradually incorporated newer data sets, we updated the aggregated feature importance list. Features were constantly compared with a random dummy variable, and the models would only account for features that were more important than the random variable in the aggregated list.
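A sketch of the dummy-variable filter, assuming the aggregation is a plain mean of importances across windows (the text does not say how the list was aggregated). The feature names and scores are made up.

```python
from collections import defaultdict


def aggregate_importance(per_window):
    """Average each feature's importance over all time windows seen so far."""
    totals = defaultdict(float)
    for importances in per_window:
        for name, score in importances.items():
            totals[name] += score
    return {name: total / len(per_window) for name, total in totals.items()}


def select_features(aggregate, dummy="random_dummy"):
    """Keep the features whose aggregated importance beats the random dummy column."""
    threshold = aggregate[dummy]
    return sorted(
        name for name, score in aggregate.items()
        if name != dummy and score > threshold
    )


# Hypothetical importances from two window models.
per_window = [
    {"int_rate": 0.50, "dti": 0.30, "zip_code": 0.05, "random_dummy": 0.15},
    {"int_rate": 0.60, "dti": 0.20, "zip_code": 0.12, "random_dummy": 0.08},
]
selected = select_features(aggregate_importance(per_window))
print(selected)
```

In this toy case `zip_code` beats the dummy in the second window alone but not in the aggregated list, so it is left out.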

Time Series Results

With our time series model, we saw a drastic improvement in default classification accuracy (from ~50% to ~80% for our down sampled data set) and some respectable improvement in the regression R-squared score for return rate (<0 to ~0.2). Clearly the regression still needed some work, but overall we felt that we were going in the right direction in terms of modeling and we thought that we would get at least some sensible results... The predicted total returns for our loans were, like the results for the pooled data, mostly negative.

The predicted average default probability is about 40% (strikingly high...). The loan duration varied by subgrade, with an average of 1.9 years for 3-year loans and 2.6 years for 5-year loans. The return rate for default loans averages about -0.85, whereas the return rate for non-default loans is on the order of 0.1. If the predicted values were indeed true, it makes sense that the total return per loan is negative. The loss induced by defaults (together with their high probability) is too large to be outweighed by the marginal gains of non-default loans.
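As a rough check, plugging these rounded averages into the total return formula (treating them as if they described a single representative loan, which is a simplification) gives:

$$\text{Total Return} \approx 0.4 \times (-0.85) + (1 - 0.4) \times 0.1 = -0.34 + 0.06 = -0.28$$

So with these averages an investor would expect to lose roughly 28% on a typical loan.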

Left: the predicted loan duration for 3-year (blue) and 5-year (orange) loans. Right: the distribution of the predicted default probability.

The predicted return rate of the loans. It is clearly bimodal here. The investor would either lose big or win by a much smaller margin.

The Portfolio Recommendation App

The portfolio recommendation app can be found here. To make the app work online, we had to truncate the data set significantly. The idea is that as we gather newer data on completed Lending Club loans, we update our adaptive time series model with the new data, then use the model to score newly posted loans and post the best performing loans onto the app.

For this recommendation system, we made it such that it can account for the user's risk appetite. The user can choose whether he or she wants to follow a risky, neutral or safe objective. To account for the different types of objectives, we grouped the loans into three distinct categories based on the loan variance. Looking at the plot above for loan variance, we see that the behavior is bimodal. We designated safe to be the left shoulder of the left peak, neutral to be the right of the same peak, and risky to be the wide peak on the right.

Once the user selects their objective, a specific set of loans is loaded into the app. The recommendation then simultaneously maximizes the total return and minimizes the loans' summed variance while obeying all the constraints imposed by the user, such as budget and loan type. Minimizing the loans' variance is what pushes the portfolio toward diversification. The recommended portfolio is the solution to this constrained optimization problem.
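To give a feel for the optimization, here is a toy sketch, not the app's actual solver. It assumes the two objectives are folded into one score (expected return minus a `risk_aversion` weight times variance, with both already expressed in comparable units), uses budget as the only constraint, and brute-forces all subsets, which only works for a handful of loans. All loan values are invented.

```python
from itertools import combinations


def recommend_portfolio(loans, budget, risk_aversion=1.0):
    """Pick the subset of loans with the best return-minus-variance score within budget."""
    best_score, best_combo = 0.0, ()  # the empty portfolio is the fallback
    for size in range(1, len(loans) + 1):
        for combo in combinations(loans, size):
            if sum(loan["amount"] for loan in combo) > budget:
                continue  # violates the user's budget constraint
            score = sum(
                loan["expected_return"] - risk_aversion * loan["variance"]
                for loan in combo
            )
            if score > best_score:
                best_score, best_combo = score, combo
    return list(best_combo), best_score


# Hypothetical candidate loans.
candidates = [
    {"id": "A", "amount": 1000, "expected_return": 60, "variance": 10},
    {"id": "B", "amount": 1000, "expected_return": 150, "variance": 120},
    {"id": "C", "amount": 500, "expected_return": 40, "variance": 5},
    {"id": "D", "amount": 1500, "expected_return": 90, "variance": 200},
]
portfolio, score = recommend_portfolio(candidates, budget=2000)
print([loan["id"] for loan in portfolio], score)
```

Raising `risk_aversion` steers the selection toward low-variance loans, which is one way a "safe" objective could be expressed.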

Conclusion

A glaring problem for now is that the predicted total returns per loan are mostly negative. I would expect the returns to be at least slightly positive on average. However, looking at the performance of Lending Club as a business, I see that its stock price has been plummeting. It may very well be that Lending Club loans are not worth investing in. The high default rates are too punishing.

Regardless of what is actually happening though, it is inarguable that our machine learning models suffer from our lack of understanding of the domain. The boost in our model performance by introducing our simple time series model is a testament to this fact. In fact, after brief consultation with a finance expert (thanks Mike), we learnt that our data set most definitely needed an overhaul.

The convention in finance is to look at annualized rates, and this is for good reason. The information needs to be compared on a "standardized" scale where values do not get inflated by the loans' lifetimes. It is highly possible that our modeling was affected by this. So moving forward, what we would need to do is to break down each loan with survival analysis. This will allow us to see the loans as a function of rates, which may lead us to more accurate modeling.
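To illustrate why annualizing matters, here is a small sketch that converts a total return earned over a loan's lifetime into a compound annual rate. This is the textbook compounding formula, not something we used in the project, and the example numbers are invented.

```python
def annualized_return(total_return, years):
    """Convert a return earned over `years` into an equivalent compound annual rate."""
    if years <= 0:
        raise ValueError("years must be positive")
    if total_return <= -1.0:
        raise ValueError("a total loss or worse has no compound annual rate")
    return (1.0 + total_return) ** (1.0 / years) - 1.0


# The same hypothetical 10% total return over two different loan lifetimes.
short_loan = annualized_return(0.10, years=1.9)
long_loan = annualized_return(0.10, years=2.6)
print(f"{short_loan:.4f} vs {long_loan:.4f}")
```

Two loans with the same total return are not equally good: the one that earns it over a shorter lifetime has the higher annualized rate, and this is exactly the distinction our raw return rate hides.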

P.S. In my previous blog post I saw the insufficiency of human intuition and the need for machine learning. In contrast, this time I saw the insufficiency of machine learning alone. Machine learning is not omnipotent and needs human intervention. Neither one can excel on its own.