Using Data to Analyze Ames, Iowa Housing Prices

The skills the author demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Background info

- The data set comes from Kaggle

- Ames, Iowa is a city 30 miles north of Des Moines, the capital of Iowa.

- 79 explanatory variables to explain the dependent variable, Sale Price

- 1460 houses used to train the models

- 1460 houses for price prediction

- Sales recorded from 2006 to 2010

Project Objective

- Find the key features that influenced the sale price of homes in Ames, Iowa between 2006 and 2010

- Develop machine learning algorithms to predict the prices.

- Draw conclusions from the best model

What to Expect

- Exploratory Data Analysis

- Feature Engineering

- Linear Modeling

- Model Selection

- Conclusion

Exploratory Data Analysis

Exploratory Data Analysis - Checking NAs

First, I checked how many missing values there are in the training and prediction sets, as displayed below.

Training Set: 5568 Missing Values

Prediction Set: 7000 Missing Values
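
A count like the ones above can be reproduced with pandas. The sketch below is only an illustration: the tiny `toy` table stands in for the Kaggle files (which would normally be loaded with `pd.read_csv`), and the helper name `count_missing` is hypothetical.

```python
import numpy as np
import pandas as pd


def count_missing(df: pd.DataFrame) -> int:
    """Total number of missing cells in a DataFrame."""
    return int(df.isna().sum().sum())


# Toy stand-in for the real training set (values are made up).
toy = pd.DataFrame({
    "Alley": [np.nan, "Grvl", np.nan],
    "LotFrontage": [65.0, np.nan, 80.0],
    "SalePrice": [208500, 181500, 223500],
})

total_missing = count_missing(toy)
# Per-column counts show which variables need attention first.
missing_by_column = toy.isna().sum().sort_values(ascending=False)
```

Looking at `missing_by_column` as well as the total helps decide, variable by variable, how each gap should be handled later.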

Exploratory Data Analysis - Visualization of Sale Price

Here we visualize the dependent variable, Sale Price, to see what kind of distribution we're working with. As we see below, the distribution is right-skewed, with a skewness of 1.88 and a kurtosis of 6.53. From the initial visuals, a few very expensive houses may be outliers that pull the mean upward.
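
Skewness and kurtosis can be computed directly from a pandas Series. This is a minimal sketch on made-up prices, not the Ames data; note that `Series.kurt()` reports excess kurtosis, so a normal distribution scores about 0.

```python
import pandas as pd

# Made-up prices with one very expensive house creating a long right tail.
prices = pd.Series(
    [100_000, 120_000, 130_000, 150_000, 160_000, 180_000, 200_000, 750_000]
)

skewness = prices.skew()   # positive for a right-skewed distribution
kurtosis = prices.kurt()   # excess kurtosis (normal distribution is about 0)

# In a right-skewed sample the mean sits above the median.
mean_price, median_price = prices.mean(), prices.median()
```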

Exploratory Data Analysis - Checking Linear Regression Assumptions

Checking Linearity

Kaggle tells us that each independent variable has an effect on Sale Price. Linearity holds when the relationship between each independent variable and the dependent variable is approximately linear. It is best checked with scatterplots for continuous variables and box plots for categorical variables. The visualizations below cover GrLivArea (above-ground living area in square feet), MasVnrArea (masonry veneer area in square feet), and OverallQual (overall material and finish quality). Linearity is satisfied.

Checking for Homoscedasticity (Constant Variance)

This assumption states that the variance of the error terms is similar across the values of all the independent variables. A plot of residuals versus a predictor can check for constant variance among the residuals, as displayed below.

In the training set we have a case of heteroscedasticity: the variance of the error terms gets larger as the independent variables get larger. So homoscedasticity is not satisfied.

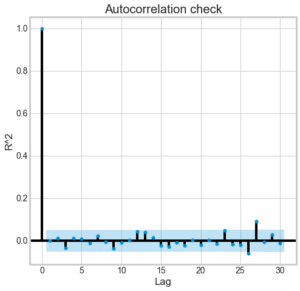
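
As a rough numeric companion to the residual plot, one can compare the spread of the residuals for small and large values of a predictor. The sketch below uses synthetic data in which the noise is deliberately made to grow with living area; the variable names and numbers are assumptions for illustration, not the Ames data or the author's code.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic predictor and response: the noise standard deviation grows with area.
area = np.linspace(500, 4000, 400)
price = 100 * area + rng.normal(0, 1, area.size) * (10 * area)

# Fit a straight line and compute residuals.
slope, intercept = np.polyfit(area, price, 1)
residuals = price - (slope * area + intercept)

# Compare residual spread for the smaller and larger houses.
half = area.size // 2
spread_small = residuals[:half].std()
spread_large = residuals[half:].std()
```

A clearly larger `spread_large` than `spread_small` is the same funnel shape that a residual plot shows under heteroscedasticity.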

Checking for Independence of Errors

Linear regression requires little or no autocorrelation in the residuals. Autocorrelation occurs when the residuals are not independent of each other. As we see below, the residuals show no such dependence, because each y value does not depend on the previous y value, so Independence of Errors is satisfied.
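
The post checks independence visually. A common numeric alternative, not necessarily what the author used, is the Durbin-Watson statistic, which is close to 2 when consecutive residuals are uncorrelated and falls toward 0 under positive autocorrelation. A minimal sketch on synthetic residuals:

```python
import numpy as np


def durbin_watson(residuals):
    """Durbin-Watson statistic: sum of squared successive differences over sum of squares."""
    e = np.asarray(residuals, dtype=float)
    return float(np.sum(np.diff(e) ** 2) / np.sum(e ** 2))


rng = np.random.default_rng(0)
independent = rng.normal(0, 1, 1000)   # no dependence between neighbours
trending = np.cumsum(independent)      # each value depends on the previous one

dw_independent = durbin_watson(independent)
dw_trending = durbin_watson(trending)
```

Cross-sectional data such as house sales has no natural ordering, so this statistic is only meaningful if the rows are sorted in a way that could carry dependence (for example by sale date).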

Checking for Multivariate Normality (Normality of Errors)

The residuals should be normally distributed. This assumption can be checked with a Q-Q plot, in which the blue dots should lie close to the red line. In this case the blue dots follow a curved pattern and do not line up with the red line, so Multivariate Normality is violated here.
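
How closely the dots follow the line in a Q-Q plot can be summarised as the correlation between the sorted residuals and the matching normal quantiles. The sketch below uses synthetic residuals and a hypothetical helper `qq_correlation`; it illustrates the idea and is not the plot from the post.

```python
from statistics import NormalDist

import numpy as np


def qq_correlation(sample):
    """Correlation between sorted data and matching standard-normal quantiles."""
    ordered = np.sort(np.asarray(sample, dtype=float))
    n = ordered.size
    # Plotting positions (i - 0.5) / n stay strictly inside (0, 1).
    probs = (np.arange(1, n + 1) - 0.5) / n
    theoretical = np.array([NormalDist().inv_cdf(p) for p in probs])
    return float(np.corrcoef(theoretical, ordered)[0, 1])


rng = np.random.default_rng(0)
normal_resid = rng.normal(0, 1, 500)      # should hug the reference line
skewed_resid = rng.lognormal(0, 1, 500)   # right-skewed, bends away from it

r_normal = qq_correlation(normal_resid)
r_skewed = qq_correlation(skewed_resid)
```

A value very close to 1 corresponds to dots lying on the line; a curved pattern lowers it.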

Checking for Multicollinearity

Multicollinearity occurs when the independent variables are too highly correlated with each other. When that happens, the coefficient estimates of the related variables become unreliable and can be very sensitive to minor changes in the model, so it's important to check for it. We use the Pearson correlation coefficient to look for multicollinearity in our data, and treat any pairwise correlation above 0.8 or below -0.8 as a sign of it.

- 0.83 correlation between GarageYrBlt and YearBuilt

- Makes sense because most garages are built the same year the house was built

- 0.88 correlation between GarageCars and GarageArea.

- Makes sense because the number of cars a garage can hold increases with the garage area

- 0.83 correlation between 1stFlrSF and TotalBsmtSF

- Makes sense because in many houses the first floor covers roughly the same footprint as the basement.

So multicollinearity is present.
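
The 0.8 rule described above can be applied to every pair of numeric columns at once. The sketch below is an illustration on synthetic data; the helper name `high_correlation_pairs` and the generated values are assumptions.

```python
import numpy as np
import pandas as pd


def high_correlation_pairs(df, threshold=0.8):
    """Return (col_a, col_b, r) for numeric column pairs with |Pearson r| > threshold."""
    corr = df.select_dtypes(include="number").corr()
    cols = corr.columns
    pairs = []
    for i in range(len(cols)):
        for j in range(i + 1, len(cols)):   # upper triangle only, skip the diagonal
            r = corr.iloc[i, j]
            if abs(r) > threshold:
                pairs.append((cols[i], cols[j], round(float(r), 2)))
    return pairs


rng = np.random.default_rng(0)
n = 200
cars = rng.integers(0, 4, n)
toy = pd.DataFrame({
    "GarageCars": cars,
    "GarageArea": cars * 250 + rng.normal(0, 40, n),   # tied to GarageCars
    "LotArea": rng.normal(10_000, 2_000, n),           # unrelated to the garage
})

flagged = high_correlation_pairs(toy)
```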

Feature Engineering

- Imputing missing values(NAs)

- Handling violated assumption of low multicollinearity through feature creation

- Outlier Detection and removal

- Handling other violated assumptions of Multivariate Normality and Homoscedasticity

Feature Engineering - Handling NAs

- Examine each variable with missing values to decide what kind of solution we should follow for each of them

- Some NAs reveal the absence of a feature such as for Alley or Fireplace. For features like these, replace NA with “none”

- Impute the remaining NAs in numeric variables with zero (0), the median, or the mode, as appropriate

- These actions resulted in having zero NAs in training and prediction set.
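
The imputation rules above could look roughly like this in pandas. Which column receives which strategy is an assumption made for the example (the post does not list them), and the helper `impute` is hypothetical.

```python
import numpy as np
import pandas as pd


def impute(df, none_cols, zero_cols, median_cols, mode_cols):
    """Fill NAs per column group: 'none' label, zero, median, or most frequent value."""
    out = df.copy()
    out[none_cols] = out[none_cols].fillna("none")   # NA means the feature is absent
    out[zero_cols] = out[zero_cols].fillna(0)
    for col in median_cols:
        out[col] = out[col].fillna(out[col].median())
    for col in mode_cols:
        out[col] = out[col].fillna(out[col].mode().iloc[0])
    return out


# Toy rows; the column-to-strategy mapping below is illustrative only.
toy = pd.DataFrame({
    "Alley": [np.nan, "Grvl", np.nan],
    "FireplaceQu": ["Gd", np.nan, "TA"],
    "GarageArea": [np.nan, 480.0, 520.0],
    "LotFrontage": [60.0, np.nan, 80.0],
    "Electrical": ["SBrkr", np.nan, "SBrkr"],
})

clean = impute(
    toy,
    none_cols=["Alley", "FireplaceQu"],
    zero_cols=["GarageArea"],
    median_cols=["LotFrontage"],
    mode_cols=["Electrical"],
)
remaining_nas = int(clean.isna().sum().sum())
```

In practice the medians and modes are usually computed on the training set and reused for the prediction set, so both are filled consistently.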

Feature Engineering - Handling High Multicollinearity

- Create New Features to tackle multicollinearity:

- TotalSF = TotalBsmtSF + 1stFlrSF + 2ndFlrSF

- By adding the square footage of every floor, we get rid of the possibility of TotalBsmtSF being explained by 1stFlrSF and vice versa

- hasgarage: a binary categorical variable to check whether there is a garage. Developed from the GarageArea attribute.

- YrBltAndRemod = YearBuilt + YearRemodAdd

- Developed to get rid of correlation between YearBuilt and GarageYrBlt

- Drop all features through which new features were created

- After these changes, all pairwise correlations fell between -0.8 and 0.8, so multicollinearity is no longer a concern.
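
The new features listed above can be sketched in pandas as follows. The toy rows are made up, the helper name is hypothetical, and the list of dropped columns is a reading of "drop all features through which new features were created"; GarageYrBlt is left alone here because the post does not say how it was handled.

```python
import pandas as pd


def add_combined_features(df):
    """Create TotalSF, hasgarage and YrBltAndRemod, then drop their source columns."""
    out = df.copy()
    out["TotalSF"] = out["TotalBsmtSF"] + out["1stFlrSF"] + out["2ndFlrSF"]
    out["hasgarage"] = (out["GarageArea"] > 0).astype(int)   # 1 if any garage area
    out["YrBltAndRemod"] = out["YearBuilt"] + out["YearRemodAdd"]
    source_cols = [
        "TotalBsmtSF", "1stFlrSF", "2ndFlrSF",
        "GarageArea", "YearBuilt", "YearRemodAdd",
    ]
    return out.drop(columns=source_cols)


toy = pd.DataFrame({
    "TotalBsmtSF": [850, 0],
    "1stFlrSF": [850, 1200],
    "2ndFlrSF": [800, 0],
    "GarageArea": [480, 0],
    "YearBuilt": [2003, 1950],
    "YearRemodAdd": [2003, 1995],
})

engineered = add_combined_features(toy)
```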

Feature Engineering - Outlier Detection

- Cook's distance is used to flag influential observations.

- To identify outliers, I also checked whether observations fall outside the interquartile range (IQR).

- After outliers were found through each method, I dropped only those flagged by both methods.

- The reason for this approach is that Cook's distance assumes an ordinary least squares regression in order to identify outliers.

- Because I also planned to fit other linear models (lasso and ridge in particular), I decided to use the IQR as well.

- 5 outliers removed in total
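
The two-method approach above can be sketched with NumPy. Several details are assumptions not stated in the post: the 4/n cutoff for Cook's distance, the 1.5 x IQR fences, and applying the IQR rule to a single predictor (living area). The data is synthetic, with one very large house sold cheaply placed at index 0.

```python
import numpy as np


def cooks_distance(X, y):
    """Cook's distance for each observation of an OLS fit with intercept."""
    X1 = np.column_stack([np.ones(len(y)), X])
    n, p = X1.shape
    beta, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ beta
    leverage = np.einsum("ij,ji->i", X1, np.linalg.pinv(X1))   # hat-matrix diagonal
    s2 = resid @ resid / (n - p)                               # residual variance
    return resid**2 / (p * s2) * leverage / (1 - leverage) ** 2


def iqr_outliers(values, k=1.5):
    """Boolean mask of values beyond k * IQR from the quartiles."""
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return (values < q1 - k * iqr) | (values > q3 + k * iqr)


rng = np.random.default_rng(0)
area = rng.uniform(500, 3000, 100)
price = 100 * area + rng.normal(0, 10_000, 100)
area[0], price[0] = 5600, 160_000   # huge house, unusually low price

flag_cook = cooks_distance(area, price) > 4 / len(price)   # assumed rule of thumb
flag_iqr = iqr_outliers(area)
flag_both = flag_cook & flag_iqr                           # drop only if both agree

kept_area, kept_price = area[~flag_both], price[~flag_both]
```

Requiring agreement between the two methods keeps the removal conservative: Cook's distance alone typically flags a handful of ordinary points under the 4/n cutoff.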

Feature Engineering - Satisfying other linear regression assumptions

One way to deal with skewed data is to apply a log transformation to Sale Price. This pulls in the long right tail, so the distribution is closer to normal and the most expensive houses have less influence on the fit. Below we can see SalePrice before and after the transformation. Now we can apply our linear models.

The Multivariate Normality assumption is now satisfied.

We no longer have a case of heteroscedasticity so homoscedasticity is now satisfied.
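
The effect of the log transformation on skewness can be illustrated as below. The prices are synthetic draws from a right-skewed distribution, not the Ames data, and the natural log is assumed as the transformation.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)

# Synthetic right-skewed prices (lognormal), standing in for SalePrice.
prices = pd.Series(rng.lognormal(mean=12, sigma=0.4, size=1000))

log_prices = np.log(prices)

skew_before = prices.skew()
skew_after = log_prices.skew()

# Predictions made on the log scale are mapped back to dollars with exp.
restored = np.exp(log_prices)
```

Because the models are fit on the log scale, their predictions need the inverse transformation (`np.exp`) before being read as prices.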

Model Selection

- Dummify non-binary categorical variables

- Fit and cross-validate four regression models:

- Multiple Linear Regression

- Ridge Regression

- Lasso Regression

- Elastic Net Regression
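
The steps above can be sketched with scikit-learn. Everything specific here is an assumption for illustration: the synthetic data, the penalty strengths, the 5-fold split, the use of MAE as the score, and the standardization step in front of the penalized models.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import ElasticNet, Lasso, LinearRegression, Ridge
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 200
houses = pd.DataFrame({
    "TotalSF": rng.uniform(800, 4000, n),
    "OverallQual": rng.integers(1, 11, n),
    "Neighborhood": rng.choice(["Crawfor", "Edwards", "MeadowV"], n),
})
# Synthetic log-price with a known linear structure plus noise.
log_price = (
    11.0
    + 0.0003 * houses["TotalSF"]
    + 0.08 * houses["OverallQual"]
    + 0.10 * (houses["Neighborhood"] == "Crawfor")
    + rng.normal(0, 0.05, n)
)

# Dummify the categorical column; drop_first avoids a redundant dummy.
X = pd.get_dummies(houses, columns=["Neighborhood"], drop_first=True, dtype=float)

models = {
    "linear": LinearRegression(),
    "ridge": make_pipeline(StandardScaler(), Ridge(alpha=1.0)),
    "lasso": make_pipeline(StandardScaler(), Lasso(alpha=0.001)),
    "elastic_net": make_pipeline(StandardScaler(), ElasticNet(alpha=0.001, l1_ratio=0.5)),
}

# scikit-learn reports negated MAE, so flip the sign back.
mae_by_model = {
    name: float(
        -cross_val_score(model, X, log_price, cv=5,
                         scoring="neg_mean_absolute_error").mean()
    )
    for name, model in models.items()
}
best_model = min(mae_by_model, key=mae_by_model.get)
```

In a real run the penalty strengths would be tuned (for example with a grid search) rather than fixed as they are here.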

Conclusion

- Ridge Regression was chosen because it has a high adjusted R^2 (0.933) and the lowest MAE (0.069).

- Originally there was heteroscedasticity before the log transformation.

- I wanted to use MAE because errors got worse as continuous variables got larger

- The top 4 features with the highest positive impact on price:

- Neighborhood_Crawford

- TotalSF

- OverallQual

- GarageCars

- Crawford appears to be the neighborhood with the largest positive effect on price. A house located there gains value relative to a comparable house elsewhere.

- This doesn't mean the neighborhood has the highest house prices

- The bottom 4 features, with the most negative impact on price:

- Neighborhood_Edwards = houses located in neighborhood Edwards

- LotShape_IR3 = irregular lot shape

- Condition2_PosN = near a positive off-site feature such as a park or greenbelt (second proximity condition)

- Neighborhood_MeadowV = houses located in neighborhood Meadow Village

- Prediction Error
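
The two metrics used to choose the model can be written out as below. The numbers in the toy arrays are made up. The last line rests on an assumption the post does not state explicitly, namely that the reported MAE of 0.069 is measured on the log of Sale Price; under that assumption it corresponds to a typical error of roughly 7% of the price.

```python
import numpy as np


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted values."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.mean(np.abs(y_true - y_pred)))


def adjusted_r2(y_true, y_pred, n_features):
    """R^2 penalised for the number of predictors in the model."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    r2 = 1 - ss_res / ss_tot
    n = y_true.size
    return float(1 - (1 - r2) * (n - 1) / (n - n_features - 1))


# Toy log-prices and predictions (illustrative values only).
y_true = [12.0, 12.2, 11.8, 12.5, 12.1]
y_pred = [12.1, 12.1, 11.9, 12.4, 12.1]

mae = mean_absolute_error(y_true, y_pred)
adj_r2 = adjusted_r2(y_true, y_pred, n_features=1)

# Assumed interpretation: an MAE of 0.069 in log units as a relative price error.
relative_error = float(np.expm1(0.069))
```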