Data Analysis Web-Scraping Project: Reuters News Articles

The skills I demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Background

As an avid investor with a master’s in financial engineering and more than seven years of front-office experience in the financial industry, I follow the financial news closely every single day to manage my own personal investments in stocks, fixed income, and commodities. I actively digest developments through Barron’s, Bloomberg, CNBC, the Federal Reserve, Reuters, and the Wall Street Journal. In this web-scraping project, we will focus on Reuters.

Reuters employs over 2,600 journalists in almost 200 locations around the world to deliver more than 2 million news stories annually. Topics covered include developments in the financial markets, business, geopolitics, and technology, among others. Additionally, every single day, more than one billion people worldwide digest Reuters news in 16 different languages.

In this largely exploratory web-scraping project, we gathered, processed, visualized, and analyzed half a year’s worth of Reuters news articles (6,531 articles in total between July 2019 and Jan 2020), to address the following questions:

- Which topics (keywords) have been actively discussed? What insights can we derive from these?

- Can we classify the category of articles better? Can we make it easier for Reuters (and other news sources) to tag their articles, and also for readers to find the topics that they really care about?

- Can we measure the sentiment of the articles? What are some other projects that we can undertake in the future via sentiment analysis and related NLP techniques?

Visualization of Trends in the Data

As a first glance, we counted 54,000 unique words that appeared in these 6,531 articles. In this “word cloud”, larger words represent a higher frequency of occurrence.

The 20 most common words and their number of appearances in these 6 months’ worth of articles are illustrated here:

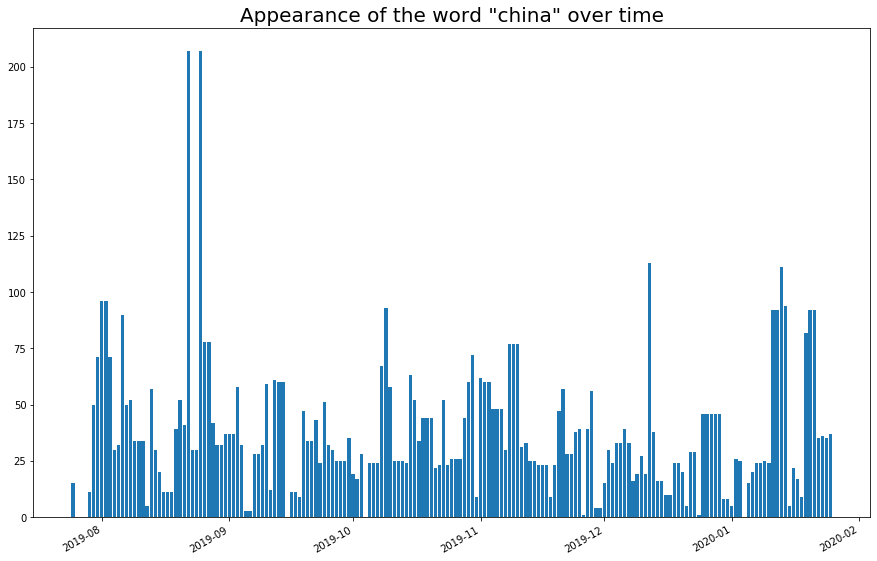
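The counting behind a chart like this can be sketched in a few lines. This is a minimal illustration rather than the project's actual code: the `tokenize` and `top_words` helpers, the abbreviated stop-word set, and the sample headlines are all assumptions made up for the example.

```python
import re
from collections import Counter

# Hypothetical, abbreviated stop-word list; a real run would use a fuller one
# (for example, the English list that ships with NLTK).
STOP_WORDS = {"the", "a", "an", "of", "to", "in", "on", "and", "is", "as", "for", "after"}


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def top_words(articles, n=20):
    """Return the n most frequent non-stop-words across all articles."""
    counts = Counter()
    for article in articles:
        counts.update(tok for tok in tokenize(article) if tok not in STOP_WORDS)
    return counts.most_common(n)


# Made-up sample text, not taken from the scraped data set.
sample_articles = [
    "Oil prices rise after the attack on Saudi oil facilities",
    "Boeing halts 737 MAX production as the crisis deepens",
    "Oil edges up as trade talks continue",
]
print(top_words(sample_articles, n=3))
```

Using `Counter.most_common` keeps the ranking step to one call; the number of unique words is simply the number of keys in the counter.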

A time series analysis also shows how certain keywords spike in occurrence when geopolitical developments intensify:

The 4x increase on Sep 17th, 2019 shows the impact of the drone strikes on Saudi Arabia’s oil refineries.

Similarly, the 2x increase on Aug 25th, 2019 came when President Trump said that he regretted not raising tariffs on China higher. Subsequent spikes were related to progress in trade negotiations.

The spike on Dec 19th, 2019 was due to Boeing “temporarily halting” its 737 Max production.
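One way to find spikes like these is to count a keyword per publication date and compare each day with a baseline. The sketch below assumes the median daily count as that baseline, which is my choice for illustration and not necessarily how the multiples above were measured. The function names, dates, and sample text are hypothetical.

```python
import re
from collections import Counter
from datetime import date
from statistics import median


def daily_keyword_counts(articles, keyword):
    """Count occurrences of `keyword` per publication date.

    `articles` is an iterable of (date, text) pairs.
    """
    pattern = re.compile(rf"\b{re.escape(keyword)}\b", flags=re.IGNORECASE)
    counts = Counter()
    for published, text in articles:
        counts[published] += len(pattern.findall(text))
    return counts


def spikes(counts, multiple=2.0):
    """Return {date: ratio} for days at or above `multiple` times the median daily count."""
    baseline = median(counts.values())
    if baseline == 0:
        return {}
    return {
        day: n / baseline
        for day, n in sorted(counts.items())
        if n >= multiple * baseline
    }


# Made-up articles on made-up dates, for illustration only.
articles = [
    (date(2000, 1, 1), "Oil steady. Oil traders wait."),
    (date(2000, 1, 2), "Oil inventories fall; oil demand firm."),
    (date(2000, 1, 3), "Oil surges. Oil supply hit. Oil, oil, oil, oil everywhere; oil and more oil."),
]
counts = daily_keyword_counts(articles, "oil")
print(spikes(counts))
```

The median is less sensitive to the spike days themselves than the mean would be, which is why it is used as the baseline here.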

Trends

Trends in which topics were being discussed and how long certain topics stay hot can serve as useful guidelines to investors, business reporters, as well as business managers. For instance, from this data, we already noticed that:

- Macro-economic developments (on the trade war / tariff front, spikes in the price of crude oil, and interest rate policies of the Fed) take center stage. The U.S. and China are the two largest economies in the world, and how they work out disagreements on trade policies and supply chain arrangements impacts the global economy significantly. Crude oil is a crucial supply-side input to the economy, affecting the prices of gasoline, fertilizer, and plastics. And interest rates, particularly those in the dollar-denominated space, affect the prices of all sectors of the stock market and even other asset classes.

- The saying that "No news is good news" rings true here. Boeing's name appeared in the news far more frequently than that of any other company (with 2,136 appearances), and its stock price declined from almost $400 per share to almost $300 over the past year as a result of fallout from the 737 Max crisis. In contrast, Tesla (with 836 appearances), with epic earnings results, rallied aggressively from $230 to $560 (more than doubling in price) in just the past 6 months, and its news coverage was quiet relative to that of Boeing.

- News coverage of sudden (and usually negative) news events stays elevated for just 2 days at most. In most of these cases, immediate and quick news coverage is more important than in-depth analysis of the causes and implications.

Can We Assign Better Labels to the Articles?

By default, Reuters assigned 21 different classifications:

- 'Business News'

- 'Technology News'

- 'Big Story 15'

- 'Japan'

- 'World News'

- 'Foreign Exchange Analysis'

- 'Cyber Risk'

- 'Sustainable Business'

- 'Environment'

- 'Fintech'

- 'Wealth'

- 'Entertainment News'

- 'U.S.'

- 'Politics'

- 'Deals'

- 'Supreme Court'

- 'U.S. Legal News'

- 'Brexit'

- 'Asia'

- 'Hedge Funds - Asia'

- 'Top News'

Some of these appeared redundant. Yet, others appeared incomplete.

For example, we see separate labels for “Supreme Court” and “U.S. Legal News”, which could be merged. We also see overlapping labels for “Japan”, “Asia”, and “Hedge Funds – Asia.” Additionally, there is a category specifically for foreign exchange, but none for stocks, fixed income, or commodities (even though we know that oil was a big topic during the past 6 months). Can we improve on this?

We used k-means clustering to find 10 new categories for these articles. Here are the most common keywords and some sample headlines from each of the 10 categories:

- Category #1. Most common words: 737, boeing, max, U.S., airlines

  “Boeing's 777X jetliner successfully completes…”

  “S&P Global places Boeing's rating on credit watch”

  “Airbus hits record highs, 737 MAX buyers fall…”

- Category #2. Most common words: profit, shares, sales, estimates, forecast

  “Intel's blockbuster results lift shares…”

  “American Airlines beats quarterly profit estimates…”

  “Tesla's furious rally pushes market value past…”

- Category #3. Most common words: Fed / Fed’s, economy, rate / rates, cut, inflation

  “U.S. data point to moderate economic growth…”

  “Fed on hold, but will financial risks matter?”

  “The yield curve's still weird…”

- Category #4. Most common words: oil, U.S., Saudi, crude, OPEC

  “U.S., Canadian oil company bankruptcies surge”

  “Oil edges up after five days of losses ahead of…”

  “Divergent paths: Oil, natural gas going different directions…”

- Category #5. Most common words: billion, CEO, sources, says, deal

  “JPMorgan board raises CEO Dimon's pay...”

  “Morgan Stanley executive Rich Portogallo…”

  “Volkswagen CEO: Seat's de Meo probably in talks…”

- Category #6. Most common words: trade, stocks, wall, street, S&P, rally

  “S&P 500 gains, Nasdaq hits new high as investors...”

  “Risk assets fall as Chinese virus triggers anxiety...”

  “Wall Street slips from records after jobs data...”

- Category #7. Most common words: sources, exclusive, billion, IPO

  “Exclusive: Eurazeo hires banks for $2.2 billion...”

  “Air France-KLM proposes buying 49% of Malaysia...”

  “As Aramco hails record IPO...”

- Category #8. Most common words: U.S., new, probe, court, antitrust

  “U.S. Justice Dept. plans to hold meeting to discuss...”

  “Insys founder Kapoor sentenced to 66 months in...”

  “Apple lawsuit tests if an employee can plan...”

- Category #9. Most common words: U.S., trade, China, deal, Trump, tariffs

  “U.S. to unveil crackdown on counterfeit, pirating...”

  “China will increase imports from U.S....”

  “China, U.S. sign initial trade pact but doubts...”

- Category #10. Most common words: U.S., says, trade, talks

  “Brazil investors pour record $5.4 billion into...”

  “Italy tax police search offices of Eni manager...”

  “Amazon asks court to pause Microsoft's work on...”

Overall, k-means did a good job of grouping these articles into 10 categories based simply on word frequency, without being given any labels. The resulting clusters map onto some clear themes:

- K-means clustered all of the articles related to Boeing's 737 fallout into category #1

- Corporate earnings releases into category #2

- Federal Reserve interest rate policy into category #3

- News about the crude oil market into category #4

- CEO / corporate management actions into category #5

- Stock market price movements into category #6

- IPOs and acquisitions into category #7

- Legal news into category #8

- The ongoing trade war between the U.S. and China into category #9

- Category #10 can be roughly labeled "miscellaneous" (articles that did not fit neatly into any of the prior categories)

This was a demonstration of how even basic data science techniques can save journalists time (from having to label articles) and readers time (from searching for articles in the wrong section). The use of supervised learning techniques could add even more value in this area.

Analyzing Sentiment via Basic NLP

Python provides an easy-to-use and popular NLP library called "NLTK" that allows us to tokenize (split articles / sentences into words), remove stop words (non-essential words such as "the", "is", and "in"), and evaluate sentiment (assign a number between -1 and +1, where -1 is very negative and +1 is very positive).

For instance, NLTK produces a sentiment of 0.34 for this short article:

- “SEATTLE (Reuters) - Boeing Co on Saturday successfully completed the maiden flight of the world's largest twin-engined jetliner, the 777X. The 252-foot-long passenger jet landed at Boeing Field near downtown Seattle at 2:00 p.m. local time”
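To make the three steps concrete, here is a toy version of the pipeline: tokenize, drop stop words, then score. It deliberately does not call NLTK. The tiny word lists and the scoring formula are hypothetical stand-ins, so it will not reproduce the 0.34 above. It only shows the shape of the computation.

```python
import re

# Hypothetical mini-lexicons for illustration only; real sentiment tools
# ship much larger, weighted lexicons.
STOP_WORDS = {"the", "is", "in", "of", "on", "a", "an", "and", "to", "at"}
POSITIVE = {"successfully", "gains", "record", "beats", "rally"}
NEGATIVE = {"crisis", "falls", "losses", "halts", "bankruptcies"}


def tokenize(text):
    """Split text into lowercase word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def remove_stop_words(tokens):
    """Drop non-essential words such as 'the', 'is', and 'in'."""
    return [tok for tok in tokens if tok not in STOP_WORDS]


def polarity(text):
    """Score in [-1, 1]: (positive hits - negative hits) / total hits, or 0.0 if none."""
    tokens = remove_stop_words(tokenize(text))
    pos = sum(tok in POSITIVE for tok in tokens)
    neg = sum(tok in NEGATIVE for tok in tokens)
    if pos + neg == 0:
        return 0.0
    return (pos - neg) / (pos + neg)


print(polarity("Boeing successfully completed the maiden flight"))
print(polarity("Oil falls after five days of losses"))
```

A generic lexicon like this has no notion of financial jargon, which is one reason a purpose-built model may be needed for market news.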

Running through our half year's worth of data, we see that NLTK assigned:

- The lowest sentiment to Sep 17, 2019. China's industrial production declined to a 17.5-year low, the oil market was still recovering from the drone attacks, and the S&P 500 declined slightly, by 0.3%, that day.

- The highest sentiment to July 28, 2019 (a Sunday). When stocks opened on July 29 (the Monday after), they were up slightly, by 0.1%.
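Finding the lowest and highest days amounts to averaging the per-article scores by publication date. A minimal sketch, assuming each article has already been given a score; the `daily_sentiment` helper, the dates, and the scores are made up for illustration:

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def daily_sentiment(scored_articles):
    """Average per-article sentiment scores by publication date.

    `scored_articles` is an iterable of (date, score) pairs.
    """
    by_day = defaultdict(list)
    for published, score in scored_articles:
        by_day[published].append(score)
    return {day: mean(scores) for day, scores in sorted(by_day.items())}


# Made-up scores on made-up dates.
scored = [
    (date(2000, 1, 3), 0.5),
    (date(2000, 1, 3), 0.25),
    (date(2000, 1, 4), -0.5),
    (date(2000, 1, 4), 0.0),
    (date(2000, 1, 5), 0.125),
]
daily = daily_sentiment(scored)
lowest_day = min(daily, key=daily.get)
highest_day = max(daily, key=daily.get)
print(lowest_day, highest_day)
```

Days with very few articles (weekends, for example) produce noisier averages, which is worth keeping in mind when an extreme value lands on a Sunday.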

One question that came to mind was: is the news article sentiment for a given day correlated with the stock market return that day? And if so, could we monetize it in some way?

The answer was (perhaps) predictable:

Correlation

With a correlation of 0.02, there was no meaningful relationship between the S&P 500's daily return and the overall sentiment of that day's Reuters articles. Some plausible explanations:

- NLTK is not well-trained for financial news sentiment. Assessing the sentiment of something common such as "reviews were overwhelmingly positive for this new movie" is very different from assessing the sentiment of something heavy in financial jargon such as "the yield curve bear-flattened post-FOMC meeting as a hawkish tone weighed on the market."

- The financial markets are already very efficient in the developed world. It would surely take more than a simple sentiment analyzer to predict stock market returns.

- Stocks move based on where reality materializes vs. expectations. So, even if the sentiment were very negative, if the earnings release or trade deal negotiations came in less negative than expected (but still negative overall), the stock market could actually rise instead of fall.
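For reference, the correlation check itself can be sketched as a Pearson correlation over the days that have both a sentiment score and a market return (weekends have sentiment but no return). The helper names and the numbers below are hypothetical, and the article does not state which correlation measure was used, so Pearson is an assumption.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


def sentiment_return_correlation(sentiment_by_day, return_by_day):
    """Correlate sentiment and returns over days present in both mappings.

    Returns (correlation, number of days used).
    """
    days = sorted(set(sentiment_by_day) & set(return_by_day))
    r = pearson(
        [sentiment_by_day[d] for d in days],
        [return_by_day[d] for d in days],
    )
    return r, len(days)


# Made-up values keyed by day number; day 4 stands in for a weekend
# that has news sentiment but no market return.
sentiment = {1: 0.1, 2: 0.2, 3: 0.3, 4: 0.9}
returns = {1: 0.01, 2: 0.02, 3: 0.03}
r, n_days = sentiment_return_correlation(sentiment, returns)
print(round(r, 3), n_days)
```

A natural variation is to shift the returns by one day, which would test whether today's sentiment says anything about tomorrow's market rather than today's.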

Regardless, NLP has much potential in many other areas. It is already used in auto-complete and auto-correction, customer satisfaction analysis, translation between languages, spam filters, and chatbots. In the arena of news articles, it is used to assess political bias and to judge whether an article is fake or real. Artificial intelligence is also already used to produce financial reports automatically (Narrative Science Inc.) and to alert reporters to developing stories before they become hot topics (such as Heliograf).

An optimistic viewpoint is that data science and machine learning will complement human reporters and financial analysts, freeing their time up to allocate towards tasks that are not easily automated, as opposed to taking jobs away from humans.

Appendix

Some technical notes

- I used the 250 most common words as my feature space (m = 250), with 6,500 observations (n = 6,500) to feed into the k-means algorithm

- Choosing the number of clusters in k-means is somewhat of an art (although techniques like the "elbow method" give us a guideline). Although I chose k = 10 in this article, we obtained decent results for values up to k = 15 as well

- Because k-means can get stuck at a local optimum, running it from several random initializations and keeping the best result helps
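The elbow method and the role of repeated random initialization can be sketched with scikit-learn. This is an illustration on synthetic data, not the article features: the `inertia_by_k` helper and the three 2-D blobs are assumptions. `n_init=10` asks `KMeans` to run from 10 initializations and keep the run with the lowest inertia (within-cluster sum of squared distances).

```python
import numpy as np
from sklearn.cluster import KMeans


def inertia_by_k(features, k_values, seed=0):
    """Fit k-means for each k and return {k: inertia}."""
    return {
        k: KMeans(n_clusters=k, n_init=10, random_state=seed).fit(features).inertia_
        for k in k_values
    }


# Synthetic stand-in data: three well-separated 2-D blobs of 50 points each.
rng = np.random.default_rng(0)
centers = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
features = np.vstack([c + rng.normal(scale=0.5, size=(50, 2)) for c in centers])

inertias = inertia_by_k(features, range(1, 7))
for k, inertia in inertias.items():
    print(k, round(inertia, 1))
```

On data like this, inertia drops sharply up to the true number of groups and only marginally afterwards; that bend is the "elbow". On real article features the bend is usually much less pronounced, which is why the choice of k remains partly a judgment call.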

Tools used for this analysis

- Python tools: Scrapy (web-scraping spider), Jupyter notebook

- Python libraries: NumPy, Pandas, Matplotlib, Seaborn, WordCloud, Sklearn, NLTK, TextBlob

Contact:

- GitHub: https://github.com/jzl4/Web_Scraping_Project

- LinkedIn: https://www.linkedin.com/in/joe-lu-44945114/

- Gmail: Joe.Zhou.Lu@gmail.com

Sources

- liaison.reuters.com/

- www.wsj.com/articles/robots-on-wall-street-firms-try-out-automated-analyst-reports-1436434381

- www.forbes.com/sites/nicolemartin1/2019/02/08/did-a-robot-write-this-how-ai-is-impacting-journalism/#51cd24267795

- link.springer.com/article/10.1007/s00799-018-0261-y

- www.forbes.com/sites/enriquedans/2019/02/06/meet-bertie-heliograf-and-cyborg-the-new-journalists-on-the-block/#66acc5bf138d

- narrativescience.com/