Funding Goals: Which Kickstarter Projects Reached It?


Summary of Kickstarter Project Success Article

- I looked at the latest data from the crowdfunding site Kickstarter to analyze trends in project success, using a mixture of quantitative analysis and natural language processing.

- Around 40% of the projects meet their funding targets - this varies significantly across time and project type. I built models to predict which projects succeed, focusing on technology projects which typically have high funding goals and low success rates.

- I explored a variety of models on the project and text data, using tree-based methods, deep learning and AutoML, improving the f1 score from 57% to 75%.

- Tools used: Python, Google Colab, sklearn, shap, pdpbox, autosklearn, FastAI libraries, Bokeh; code on GitHub

Introduction to Kickstarter

Kickstarter is one of the leading global platforms for crowdfunding creative projects, with diverse campaigns ranging from $1000 craft experiences to $5 million tech product releases. Creators pitch new projects to “backers” who can contribute towards a project for various levels of rewards, typically including the product or service itself. The funding model differs from many other crowdfunding sites as it does not involve any transfer of equity and the pledges are only collected if the entire funding goal is reached.

The website is a great source of information on interesting ideas and public responses, and can be readily scraped to explore several interesting questions:

1. What are the patterns of project success across time and categories?

2. How likely is a given project to succeed? How would you design a project to maximize the chance of success?

3. How much is likely to be raised? What goal should be set?

NLP

For now, I’ll focus on questions (1) and (2) - these fit neatly with NLP approaches explored in my ML research project and the risk modelling principles from my previous job.

Unsurprisingly, there are plenty of data science blogs, papers and repos that examine Kickstarter data already – it should be noted that I am looking at the pure prediction problem which makes good results harder to obtain. My aim is to model whether a new project is likely to succeed using a subset of the information available at the launch - so I try to be strict about maintaining temporal structure.

In the future, I would like to add more information about each project like the risks and challenges or specific pledge details. Examining the “trajectory” of a project and comparing to data from Indiegogo (a similar tech-focused crowdfunding site) would also be interesting.

Applications

Predictions could be useful for a few different audiences:

- Project creators – how should project pitches be designed to maximize the chance of success?

- Backers – could potential backers filter projects to focus their attention on new projects with a reasonable chance of success? Backers may also hold a "portfolio" of project pledges - they can offer no support, small support or significant support across a wide range of live projects, and the best action could depend on the likelihood of success for each project.

- Kickstarter – could recommendation engines help promote projects which are likely to be near the success threshold?

Dataset

I use data scraped from the Kickstarter website in mid-December – the dataset is fairly large, capturing around 200,000 individual projects which span back to the inception of the organization in 2009. This contains a mix of quantitative data on funding goals and timings, categorical information on the project types and raw text like the project description. Quite a bit of pre-processing is needed to build a clean dataset with useful features:

- Language – since I’ll be using NLP techniques, I focus on the projects described in English, cutting out around 6,000 using the langdetect library

- Project organizer - projects can be created and run by individuals or organizations and it seems likely this could interact with success rates. However, the dataset only contains the name of the creator – so I used a combination of the NameDataset library and entity detection to flag names which are likely to be organizations. I also added a metric to capture the number of historic projects run by each organizer

- Funding window – I added variables to capture the time gap between project creation, launch and funding deadlines, as well as the funding rate ($/day requirement)

- Sentiment – I used the textblob library to label the subjectivity and polarity of the project pitch – perhaps enthusiasm and devotion matter to project success?

- Duplicates – I found there were approximately 2700 duplicate blurbs in the dataset, often arising where a project spans categories or is relaunched. To remove this distortion, I cut all duplicate rows

- Currencies - I convert goal amounts to USD equivalents for a consistent size metric. I also take natural logs of these variables to help capture the broad range in project scale - this does not directly affect tree-based logic but does aid visualization

- Encoding – standard dummification approaches for categorical variables

- Dropping variables - all information which would only be available after the project launch is removed, e.g. staff favorite flags.
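The funding window and currency steps above can be sketched in a few lines of plain Python. This is only an illustration under assumed field names (`created_at`, `launched_at`, `deadline`, `goal_usd`); the real pipeline may differ, and the goal is assumed to be already converted to USD.

```python
import math
from datetime import datetime

SECONDS_PER_DAY = 86400

def funding_window_features(created_at, launched_at, deadline, goal_usd):
    """Derive timing and scale features that are known at launch.

    All argument names are hypothetical; assumes deadline > launched_at
    and goal_usd > 0.
    """
    prep_days = (launched_at - created_at).total_seconds() / SECONDS_PER_DAY
    funding_days = (deadline - launched_at).total_seconds() / SECONDS_PER_DAY
    return {
        "prep_days": prep_days,                  # creation -> launch gap
        "funding_days": funding_days,            # launch -> deadline gap
        "usd_per_day": goal_usd / funding_days,  # funding rate requirement
        "log_goal_usd": math.log(goal_usd),      # natural log of the goal
    }

example = funding_window_features(
    created_at=datetime(2019, 1, 1),
    launched_at=datetime(2019, 1, 11),
    deadline=datetime(2019, 2, 10),
    goal_usd=30_000,
)
print(example)
```

Only information available at launch is used here, which keeps the features consistent with the pure prediction setup.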

Point in Time Nature

There are some issues relating to the point-in-time nature of the data – the dataset is scraped at a specific time, but posts may be removed or edited after launch. You might expect this to positively skew success rates as unsuccessful campaigns could be hidden or removed from the site. To explore this, I looked at the projects listed by year against a historic dataset scraped through a similar process in 2018 – this analysis indicates that many of the pre-2015 projects do not appear in the latest dataset.

Despite this, the success rate profile does not look materially different between these two cuts, which may mean the skew is not systematic. Inaccuracies in the historic project database could still be an issue – in an ideal world I would aggregate a set of unique projects across scraped data at all available points. For now, I’ll proceed with 2019 data on the assumption that historic projects are missing completely at random.

Exploratory Analysis on Data on Kickstarter Project Success Rate

Simple analysis of the dataset is revealing - the most popular categories to launch a Kickstarter project relate to music, film & video and art.

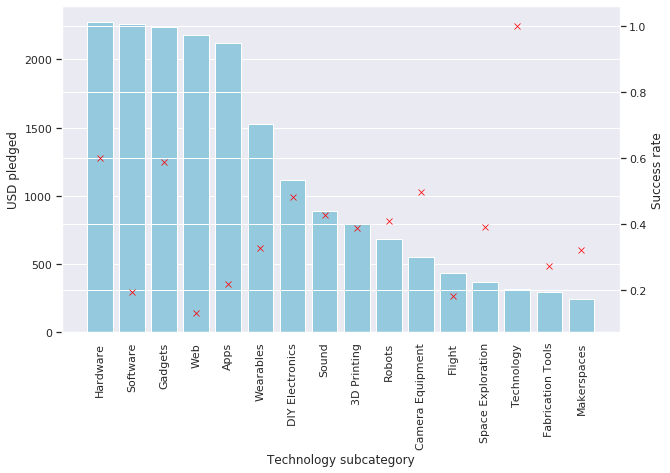

Focusing instead on the flow of funding offers a different view - significantly more money is pledged towards technology, design and games with an average of $30k+ per project, compared to <$12k in all other categories. There is significant variation in success rates by category: more than 75% of comics/dance projects succeed, while fewer than 30% of food/journalism projects are successful.

Modelling Technology Project Success

My aim is to build a classification model to predict which projects succeed – I will focus on the f1-score to judge the results, as this is the harmonic mean of precision and recall and so balances the two. To deal with the small class imbalance I undersample the majority class (failure), which makes results across approaches more comparable.
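As a minimal sketch of these two ideas, the snippet below computes an f1-score from scratch and randomly undersamples the majority class. It is hand-rolled for illustration; the `success` label key and the fixed seed are assumptions, and library implementations (e.g. in sklearn) would normally be used instead.

```python
import random

def f1_score(y_true, y_pred):
    """Harmonic mean of precision and recall for binary labels (1 = success)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0  # no true positives: precision/recall are 0 or undefined
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def undersample_majority(rows, label_key="success", seed=0):
    """Randomly drop majority-class rows until both classes are the same size."""
    positives = [r for r in rows if r[label_key] == 1]
    negatives = [r for r in rows if r[label_key] == 0]
    minority, majority = sorted((positives, negatives), key=len)
    rng = random.Random(seed)
    balanced = minority + rng.sample(majority, len(minority))
    rng.shuffle(balanced)
    return balanced

score = f1_score([1, 1, 1, 0, 0], [1, 1, 0, 1, 0])
rows = [{"success": 1}] * 4 + [{"success": 0}] * 6
balanced = undersample_majority(rows)
print(score, len(balanced))
```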

To partition the dataset for modelling purposes, I want to set this up as a pure prediction problem – so for model tuning/selection I use data up to 2017 for training with validation on 2018 data. For the final performance test, data up to 2018 is used with testing on 2019 information. This does create challenges with setting appropriate thresholds since the aggregate success rate drops from around 75% before 2013 to below 50% in 2014-16.
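The year-based partition described above might look like the following sketch, where `launch_year` is an assumed field name and each project is a plain dictionary.

```python
def temporal_split(rows, last_train_year, year_key="launch_year"):
    """Train on everything up to last_train_year, hold out the following year."""
    train = [r for r in rows if r[year_key] <= last_train_year]
    holdout = [r for r in rows if r[year_key] == last_train_year + 1]
    return train, holdout

# Toy records standing in for the scraped projects
projects = [{"id": i, "launch_year": y}
            for i, y in enumerate([2015, 2016, 2017, 2018, 2018, 2019])]

# Model tuning/selection: train to 2017, validate on 2018
tune_train, validation = temporal_split(projects, last_train_year=2017)

# Final performance test: train to 2018, test on 2019
final_train, test = temporal_split(projects, last_train_year=2018)
print(len(tune_train), len(validation), len(final_train), len(test))
```

Splitting on time rather than at random means the model is never evaluated on projects that launched before the ones it was trained on.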

For efficiency I focus on the technology category – this contains about 18,000 projects across 16 subcategories with an average success rate of around 40% over the period 2009-2017.

Results across a set of Models

My approach considers results across a set of models:

1. Baseline heuristic model which uses aggregate success rates by category - this simple approach predicts that all ‘Technology’, ‘Hardware’ and ‘Gadgets’ projects will succeed, and others fail

2. Random forest using all project parameters excluding the project description

3. Neural net based on tabular project data with two layers of 500 and 50 nodes with dropout, batchnorm and ReLU activations

4. NLP model trained on the descriptions only, using an RNN language model and encoder trained on wikitext

5. A combined random forest model using (2) and (4)

6. A combined neural net using (3) and (4)

7. An AutoML pipeline using the autosklearn package using the same data as (4)
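To make the baseline heuristic concrete, here is one way it could be written: learn which subcategories have a high historic success rate, then predict success for every project in those subcategories. The 50% cut-off and the `category`/`success` field names are assumptions for illustration, not details confirmed by the write-up.

```python
from collections import defaultdict

def fit_category_baseline(train_rows, threshold=0.5):
    """Return the set of categories whose training success rate exceeds threshold."""
    counts = defaultdict(lambda: [0, 0])  # category -> [successes, total]
    for row in train_rows:
        counts[row["category"]][0] += row["success"]
        counts[row["category"]][1] += 1
    return {cat for cat, (wins, total) in counts.items() if wins / total > threshold}

def predict_baseline(rows, winning_categories):
    """Predict 1 (success) for projects in a winning category, else 0."""
    return [1 if row["category"] in winning_categories else 0 for row in rows]

train = [
    {"category": "Hardware", "success": 1},
    {"category": "Hardware", "success": 1},
    {"category": "Hardware", "success": 0},
    {"category": "Web", "success": 0},
    {"category": "Web", "success": 0},
    {"category": "Web", "success": 1},
]
winners = fit_category_baseline(train)
predictions = predict_baseline(
    [{"category": "Hardware"}, {"category": "Web"}, {"category": "Apps"}], winners
)
print(winners, predictions)
```

Categories unseen in training (like the toy "Apps" row) default to a failure prediction in this sketch.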

Insights

These results show that the NLP encoder, neural net and random forest models offer similar improvements over the baseline model; these are complementary approaches based on different inputs, so the combination brings a significant jump in performance. AutoML does offer a further marginal improvement, although the complex pipeline means this is at the expense of interpretability.

The final f1 score is a significant improvement on the baseline model. Whilst this is not a particularly accurate model, this result is probably unsurprising - assessing the creativity, appeal and viability of a new project pitch is surely a very difficult task. But it does demonstrate that there are patterns in the dataset which can help differentiate likely winners.

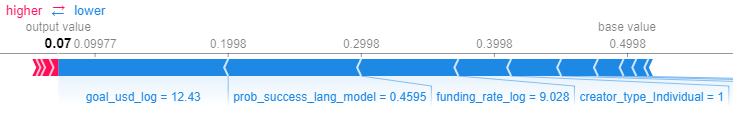

To understand the model output, I focus on the combined NLP and random forest model and use explainability tools from the shap and pdpbox libraries. The top features are visualized in order of importance below showing:

- The NLP model output ("prob_success_lang_model") is most important.

- Year of launch is important with early years adding to the likelihood of success (since aggregate rates were higher).

- If the creator is an organization rather than an individual, this generally improves the modelled odds of success.

- High funding rate requirements reduce the chance of success.

- Gadgets and hardware projects are more likely to succeed while web projects are penalized.

PDP

Partial dependence plots (PDPs) better demonstrate some of these trends - the below chart shows how the modelled likelihood of success drops with increasing (logged) funding requirements, assuming all other variables are held equal. So, if you want to design a successful project, picking the funding target and window is critical.

The most useful output of my work is probably the modelled probability of success, rather than the predicted label. This could help stakeholders assess the likelihood of success and act accordingly. The shap library offers useful visuals to show the key factors behind individual predictions: for instance, the below 2019 project was predicted to have an 8% chance of success, mostly driven by the comparatively high goal/funding rate and unfavorable project description.
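The idea behind a partial dependence curve can be shown without any plotting library: fix one feature at each grid value for every row, leave the other features untouched, and average the model's predictions. The snippet below is a hand-rolled sketch with a made-up `toy_model` standing in for the trained classifier; it is not the pdpbox API, and the coefficients are invented purely for illustration.

```python
import math

def partial_dependence(predict, rows, feature, grid):
    """Average prediction over all rows with `feature` forced to each grid value."""
    curve = []
    for value in grid:
        modified = [{**row, feature: value} for row in rows]
        preds = [predict(row) for row in modified]
        curve.append((value, sum(preds) / len(preds)))
    return curve

def toy_model(row):
    """Hypothetical stand-in model: success odds fall as the logged goal rises."""
    score = 4.0 - 0.5 * row["log_goal"] + 0.8 * row["is_org"]
    return 1.0 / (1.0 + math.exp(-score))

rows = [{"log_goal": 9.0, "is_org": 0}, {"log_goal": 11.0, "is_org": 1}]
curve = partial_dependence(toy_model, rows, "log_goal", [6.0, 8.0, 10.0, 12.0])
for value, avg_prob in curve:
    print(f"log_goal={value:.0f} -> mean predicted success {avg_prob:.2f}")
```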

Whereas another 2019 project generated a 70% likelihood of success as a result of the favourable project description, gadget categorisation and long creation window.

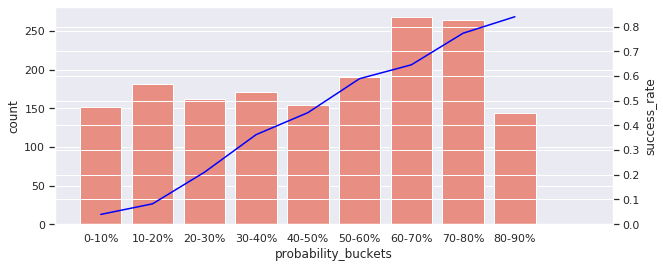

The utility of probabilistic predictions is best viewed across populations. In the bar chart below, you can see the predicted distribution of success % for the 2018 validation set – this demonstrates how the model produces a good distribution of success bands across the full range from 0 to 100%, although very high likelihood predictions are rare. The overlaying line shows the actual realized success % within each bucket which has the expected linear trend. As an example, there are around 310 projects which were modelled to have a 60-70% chance of success; of these, 65% actually raised their target.

A similar pattern is seen on the 2019 test dataset, indicating that the model does offer predictive power for future projects.
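A bucketed comparison of predicted and realized success rates like the one described can be computed as below. This is a sketch with ten equal-width bands assumed and toy inputs; it returns, for each non-empty band, the project count and the share that actually succeeded.

```python
def calibration_table(probs, outcomes, n_bins=10):
    """Group predictions into equal-width probability bands.

    Returns (band_low, band_high, count, realized_success_rate) per non-empty band.
    """
    buckets = [[0, 0] for _ in range(n_bins)]  # [count, successes] per band
    for prob, outcome in zip(probs, outcomes):
        index = min(int(prob * n_bins), n_bins - 1)  # prob == 1.0 goes in top band
        buckets[index][0] += 1
        buckets[index][1] += outcome
    return [
        (i / n_bins, (i + 1) / n_bins, count, wins / count)
        for i, (count, wins) in enumerate(buckets)
        if count
    ]

# Toy predictions and outcomes (1 = project reached its goal)
table = calibration_table([0.05, 0.65, 0.62, 0.68, 0.95], [0, 1, 1, 0, 1])
for low, high, count, rate in table:
    print(f"{low:.0%}-{high:.0%}: {count} projects, {rate:.0%} succeeded")
```

For a well-calibrated model, the realized rate in each band should sit close to the middle of that band.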

Application

To allow users to experiment with the dataset and predictions, I created a dynamic interface using the Bokeh library. This has three tabs showing the project success over time, the key prediction variables and the model outcome.

The app can be run locally by downloading the Bokeh folder from my GitHub repository. Then navigate to the directory in a terminal and run the following command: `bokeh serve --show main.py`