A POS Tag Approach to Predict Drug Interactions & User Score Rating


This blog post explores the utility of a methodology based on (NLP) Part-Of-Speech (POS) tag counts to predict Drug Interactions and User Score Rating.

MOTIVATION: In this blog I address a key question about Drug Interactions, a matter of general public-health concern. Herein I present a model that predicts the number of drug interactions (and hence potential side effects) that a patient on a given medication may incur. I also present a model that predicts the Consumer Score Rating of a Drug Product from user feedback. Arguably, the Consumer Score Rating model can offer business intelligence and insight to help streamline marketing strategies and improve sales. The model is expected to be especially useful soon after a product is launched, once user reviews become available.

WORKFLOW & QUESTIONS OF INTEREST: Much information about various drug products is made freely available to the public at drugs.com. This information can be extracted and used to draw insights and build data-driven applications. To address the specific questions of interest, i.e., to predict (1) the number of Drug Interactions and (2) the Consumer Score Rating, the relevant information was extracted from drugs.com. To this end, I developed and implemented a spider using Python's Scrapy. The spider extracted the pertinent user demographic/profile information and user experiences, inter alia, for analysis. This raw data set was used to perform NLP sentiment analysis with TextBlob. The data set was then expanded with predictor variables corresponding to the Part-Of-Speech Tag counts of each user review. This final data set served as the starting point for building the predictive models of interest, all of which use regression algorithms. Besides the standard regression algorithms (Linear, Lasso, Elastic Net, kNN, Decision Trees), ensemble algorithms were also investigated: boosting (Adaptive Boosting, Gradient Boosting) and bagging-style ensembles (Random Forests, Extra Trees).

METHODOLOGY:

- DATA EXTRACTION: Several popular drug products from drugs.com were included in the model pipeline for predictive analytics and visualization. The spider crawled all web pages (~6500) to collect the user reviews for each drug product. Specifically, the following attributes were extracted from each user post: (1) the user Comment (text data), (2) the Time Duration for which the consumer used the drug product, (3) the number of FDA Alerts, (4) the Class of the Drug, (5) the Name of the Drug, (6) the user Rating, (7) the number of Useful Reviews for a specific user Comment, (8) the Date the Comment was posted, (9) the Drug Interactions (Major, Moderate and Minor), and (10) the Pathological Condition for which the Drug was taken. (The code for the spider can be found at the following GitHub link: https://github.com/uppulury/DrugReview). The following table summarizes the meaning of each variable:

| Variable | Type | Permissible Value |
|---|---|---|
| User Comment | Text | User's comment |
| Time Duration | Categorical | 0,1,2,3,4,5,6,7 |
| FDA Alerts | Numerical | >=0 (integer) |
| Class | Categorical | Text |
| Name | Categorical | Text |
| User Rating | Numerical | (Integer) 1-10 |
| Useful Reviews | Numerical | (Integer) >=0 |
| Date | String | String |
| Drug Interactions | Numerical | (Integer) >=0 |
| Pathological Condition | Categorical | Any text |
| POSTag Count (normalized) | Numerical | (Rational) 0 to 1 |
| Subjectivity | Numerical | (Rational) 0 to 1 |
| Polarity | Numerical | (Rational) -1 to 1 |
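The variable table above can be encoded as a typed record so that scraped rows violating the permissible values are flagged before modeling. The following is a minimal sketch only: the class name `ReviewRecord`, its field names, the `validate` method and the `pos_tag_fractions` dictionary are hypothetical and are not taken from the author's repository. Free-text fields and the raw Date string are deliberately left unchecked.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ReviewRecord:
    """Hypothetical record mirroring the variable table; field names are illustrative."""
    user_comment: str
    time_duration: int          # categorical code 0..7
    fda_alerts: int             # integer >= 0
    drug_class: str
    drug_name: str
    user_rating: int            # integer 1..10
    useful_reviews: int         # integer >= 0
    date: str                   # raw date string, not parsed here
    drug_interactions: int      # integer >= 0
    condition: str              # pathological condition, free text
    polarity: float = 0.0       # -1..1
    subjectivity: float = 0.0   # 0..1
    pos_tag_fractions: Dict[str, float] = field(default_factory=dict)  # each 0..1

    def validate(self) -> List[str]:
        """Return one message per value that falls outside its permissible range."""
        problems = []
        if not 0 <= self.time_duration <= 7:
            problems.append("time_duration must be in 0..7")
        for name in ("fda_alerts", "useful_reviews", "drug_interactions"):
            if getattr(self, name) < 0:
                problems.append(f"{name} must be >= 0")
        if not 1 <= self.user_rating <= 10:
            problems.append("user_rating must be in 1..10")
        if not -1.0 <= self.polarity <= 1.0:
            problems.append("polarity must be in [-1, 1]")
        if not 0.0 <= self.subjectivity <= 1.0:
            problems.append("subjectivity must be in [0, 1]")
        for tag, share in self.pos_tag_fractions.items():
            if not 0.0 <= share <= 1.0:
                problems.append(f"POS tag fraction for {tag} must be in [0, 1]")
        return problems
```

An empty list from `validate()` means the row is within the ranges listed in the table.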

- In total, 163,000 user reviews covering 880 distinct drug products were scraped, so the sample size of the data set is 163,000.

- DATA PREPROCESSING: (1) The number of Major, Moderate and Minor Interactions for each Drug Product was extracted from the raw data. (2) To quantify how the Time Duration for which a user took a particular product influences the User Rating, the Time Duration attribute was encoded as a categorical (numeric) variable with values 0-7.

- Using the Python library TextBlob, sentiment analysis and part-of-speech tagging were performed on each user comment/review. More specifically, the subjectivity and polarity scores of each review were calculated, and the part-of-speech tag counts were normalized. These POS Tag counts, together with the subjectivity and polarity scores, were used as attributes/predictor variables in the data set. It is posited that the POS Tag counts, subjectivity and polarity scores improve the predictive ability for 'User Rating'. The tag details are given in the following table:

- The attribute "Useful_Reviews" (i.e., the number of users who found a review useful) is extracted as a string by the spider and is then cast to a floating-point variable.

- This preprocessed data set serves as the final data set for developing ML models to predict the User Rating and the number of Major Drug Interactions.
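The preprocessing steps above can be sketched with the Python standard library. The raw string formats are not shown in this post, so the interaction-count pattern, the duration labels and their ordering onto the codes 0-7 are all hypothetical, as is the choice to normalize each POS tag count by the total number of tagged tokens in the review (one plausible way to obtain values between 0 and 1). The `tagged_words` input has the same shape as the list of `(word, tag)` pairs returned by TextBlob's `tags` property.

```python
import re
from collections import Counter
from typing import Dict, Iterable, Tuple

# Hypothetical raw format, e.g. "310 major drug interactions, 1250 moderate, 57 minor".
INTERACTION_PATTERN = re.compile(r"(\d+)\s+(major|moderate|minor)", re.IGNORECASE)

# Hypothetical duration labels and their assumed ordering onto the codes 0..7.
DURATION_CODES = {
    "unknown": 0,
    "less than 1 month": 1,
    "1 to 6 months": 2,
    "6 months to less than 1 year": 3,
    "1 to 2 years": 4,
    "2 to 5 years": 5,
    "5 to 10 years": 6,
    "10 years or more": 7,
}


def parse_interactions(raw: str) -> Dict[str, int]:
    """Extract Major/Moderate/Minor interaction counts; missing severities default to 0."""
    counts = {"major": 0, "moderate": 0, "minor": 0}
    for number, severity in INTERACTION_PATTERN.findall(raw):
        counts[severity.lower()] = int(number)
    return counts


def encode_duration(label: str) -> int:
    """Map a Time Duration label to its categorical code; unseen labels map to 0."""
    return DURATION_CODES.get(label.strip().lower(), 0)


def parse_useful_reviews(raw: str) -> float:
    """Cast the scraped Useful_Reviews string to float, tolerating thousands separators."""
    cleaned = raw.replace(",", "").strip()
    return float(cleaned) if cleaned else 0.0


def normalized_pos_counts(tagged_words: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Convert (word, tag) pairs into each tag's share of all tagged tokens (0..1)."""
    tag_counts = Counter(tag for _, tag in tagged_words)
    total = sum(tag_counts.values())
    return {tag: n / total for tag, n in tag_counts.items()} if total else {}
```

In a full pipeline, `normalized_pos_counts(TextBlob(comment).tags)` would yield one row of POS Tag features, while `TextBlob(comment).sentiment` supplies the polarity and subjectivity scores.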

FEATURE SELECTION:

DESCRIPTIVE STATISTICS:

- DISTRIBUTION OF ATTRIBUTES: Each feature is plotted to understand its distribution.

- CORRELATION MATRIX: The correlation matrix reveals interesting correlations between the POS Tag counts of user reviews and the features extracted by the Python spider, such as user rating and time duration. These correlations suggest that the features are worth carrying into the ML modeling tasks.
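A correlation analysis like the one described above can be sketched with pandas. This assumes the preprocessed data sits in a DataFrame with a numeric target column (the name `user_rating` and the POS tag column names here are hypothetical) and uses `DataFrame.corr()`, whose default is the Pearson coefficient; the post does not state which coefficient was used. The helper ranks features by the absolute strength of their correlation with the target.

```python
import numpy as np
import pandas as pd


def rank_correlations(df: pd.DataFrame, target: str, top_n: int = 10) -> pd.Series:
    """Correlations of each numeric column with `target`, ordered by absolute strength."""
    corr_matrix = df.select_dtypes(include="number").corr()  # Pearson by default
    with_target = corr_matrix[target].drop(target)           # drop the trivial self-correlation
    order = with_target.abs().sort_values(ascending=False).index
    return with_target.loc[order].head(top_n)


# Synthetic demo (illustration only): column names are hypothetical.
rng = np.random.default_rng(0)
n = 500
rating = rng.integers(1, 11, n).astype(float)
demo = pd.DataFrame({
    "user_rating": rating,
    "JJ": rating / 10 + rng.normal(0, 0.05, n),  # built to correlate with the rating
    "NN": rng.uniform(0, 1, n),                  # independent of the rating
    "drug_name": ["DrugA"] * n,                  # non-numeric, ignored by select_dtypes
})
print(rank_correlations(demo, "user_rating", top_n=2))
```

The full matrix (`corr_matrix` inside the function) is what a heat-map plot would display; the ranked Series is a quick way to read off the strongest relationships.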

(The code for the descriptive statistics and the two models is available from the following git repository: https://github.com/uppulury/DrugReview)

USER-RATING MODEL:

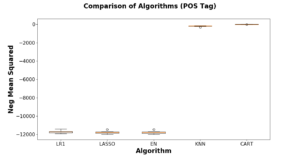

- Spot Check of Algorithms: The preprocessed data set was split into 80/20 train/validation sets using Scikit-learn's "train_test_split" function. Using 10-fold cross-validation on the training set, the following regression algorithms were spot-checked: Linear Regression, Lasso, ElasticNet, kNN and CART (decision tree). Each algorithm was scored with the 'neg_mean_squared_error' metric.

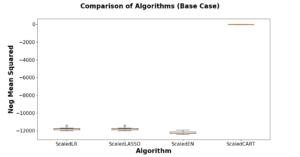
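The spot-check step can be sketched with scikit-learn as follows. The random seed, the shuffled `KFold`, the model labels and the default hyperparameters of each model are assumptions; the post specifies only the 80/20 split, 10-fold CV, the five algorithms and the `neg_mean_squared_error` scoring. The demo uses synthetic data, not the drugs.com data set.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso, LinearRegression
from sklearn.model_selection import KFold, cross_val_score, train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.tree import DecisionTreeRegressor


def spot_check(X, y, seed=7, n_splits=10):
    """Hold out 20% for validation, then run k-fold CV for each model on the remaining 80%."""
    X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.20, random_state=seed)
    models = {
        "LR": LinearRegression(),
        "LASSO": Lasso(),
        "EN": ElasticNet(),
        "KNN": KNeighborsRegressor(),
        "CART": DecisionTreeRegressor(random_state=seed),
    }
    kfold = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    results = {}
    for name, model in models.items():
        scores = cross_val_score(model, X_train, y_train, cv=kfold,
                                 scoring="neg_mean_squared_error")
        results[name] = (scores.mean(), scores.std())
    # The validation split is returned untouched, reserved for the final model evaluation.
    return results, (X_val, y_val)


# Synthetic demo (illustration only): y depends linearly on three of five features.
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(300, 5))
y_demo = X_demo @ np.array([1.0, -2.0, 0.5, 0.0, 0.0]) + rng.normal(0, 0.1, 300)
spot_results, _ = spot_check(X_demo, y_demo)
for name, (mean, std) in spot_results.items():
    print(f"{name}: {mean:.3f} ({std:.3f})")
```

Because the metric is a negated MSE, values closer to zero are better, which is how the box plots of such comparisons are usually read.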

- Testing Algorithms on Standardized Data: Because several features (especially polarity, subjectivity and FDA alerts) follow near-Gaussian and/or single-exponential-like distributions, the training data set was standardized using Scikit-learn's StandardScaler. The algorithms were then re-tested on this standardized data using 10-fold CV (results are shown in the plot below).

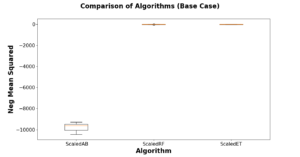
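A sketch of the standardized comparison, assuming the same five algorithms, seed and fold setup as in a basic spot check. Here `StandardScaler` is wrapped in a scikit-learn `Pipeline`, so within each CV fold the scaler is fit only on that fold's training part; the post does not say whether scaling was done this way or once on the whole training set. The synthetic demo is constructed so that one feature carries the signal and another is noise on a much larger scale.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso, LinearRegression
from sklearn.model_selection import KFold, cross_val_score
from sklearn.neighbors import KNeighborsRegressor
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeRegressor


def standardized_spot_check(X_train, y_train, seed=7, n_splits=10):
    """Mean k-fold CV neg MSE for each algorithm placed behind a StandardScaler."""
    models = {
        "ScaledLR": LinearRegression(),
        "ScaledLASSO": Lasso(),
        "ScaledEN": ElasticNet(),
        "ScaledKNN": KNeighborsRegressor(),
        "ScaledCART": DecisionTreeRegressor(random_state=seed),
    }
    kfold = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    results = {}
    for name, model in models.items():
        # The scaler is refit inside each training fold, so held-out folds never influence it.
        pipeline = Pipeline([("scaler", StandardScaler()), ("model", model)])
        results[name] = cross_val_score(pipeline, X_train, y_train, cv=kfold,
                                        scoring="neg_mean_squared_error").mean()
    return results


# Synthetic demo (illustration only): feature 0 drives y, feature 1 is large-scale noise.
rng = np.random.default_rng(0)
X_demo = np.column_stack([rng.normal(0, 1, 400), rng.normal(0, 1000, 400)])
y_demo = 3 * X_demo[:, 0] + rng.normal(0, 0.1, 400)
demo_results = standardized_spot_check(X_demo, y_demo)
print(demo_results)
```

On this toy data kNN benefits most from scaling, since its distance computation is otherwise dominated by the large-scale noise feature; tree-based models such as CART are insensitive to feature scaling.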

- Boosting/Bagging Algorithms on Standardized Data: Adaptive Boosting, Random Forests and Extra Trees were tested on the standardized data sets (shown below); the Extra Trees algorithm achieved a better score than Random Forests and Adaptive Boosting. An interesting trend is observed for the boosting/bagging algorithms: the trend lines across algorithms are similar for the POS Tag model and the Base Case model (i.e., without POS Tag features). However, for the Random Forests and Extra Trees regressors, the POS Tag count features improve the scoring metric by 4-5 units, with the larger improvement (5 units) for the Extra Trees Regressor.

- The ML model was fine-tuned for optimal hyperparameters using the Extra Trees Regressor and the Grid Search CV approach. The score improved up to 100 tree estimators and flattened out as the number of trees increased further. Hence, 100 trees were used to finalize the ML model for predicting the User-Rating.

- The finalized model was fitted on the training data set and scored on the validation set using the 'mean_squared_error' metric. The POS Tag model achieved a mean squared error of 4.58, versus 9.87 for a model without POS Tag count features. Hence, the predictive model combining POS Tag count features with the Extra Trees Regressor (and NLP tools) is proposed for predicting the User-Rating from user feedback.
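The ensemble comparison, the grid search over the number of trees and the final validation score can be sketched together with scikit-learn. The seed, the candidate tree counts, the model labels and the synthetic demo data are assumptions. Note that `GridSearchCV` simply selects the best-scoring value, whereas the post chose 100 trees as the point beyond which the score flattened; the per-setting scores in the search's `cv_results_` attribute would show that curve.

```python
import numpy as np
from sklearn.ensemble import AdaBoostRegressor, ExtraTreesRegressor, RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler


def scaled(model):
    """Wrap a regressor so that standardization is part of every fit."""
    return Pipeline([("scaler", StandardScaler()), ("model", model)])


def compare_ensembles(X_train, y_train, seed=7, n_splits=10):
    """Mean k-fold CV neg MSE for AdaBoost, Random Forests and Extra Trees."""
    kfold = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    models = {
        "ScaledAB": AdaBoostRegressor(random_state=seed),
        "ScaledRF": RandomForestRegressor(random_state=seed),
        "ScaledET": ExtraTreesRegressor(random_state=seed),
    }
    return {
        name: cross_val_score(scaled(m), X_train, y_train, cv=kfold,
                              scoring="neg_mean_squared_error").mean()
        for name, m in models.items()
    }


def tune_and_score_extra_trees(X_train, y_train, X_val, y_val,
                               n_trees=(50, 100, 150, 200), seed=7, n_splits=10):
    """Grid-search n_estimators, then report the refit model's MSE on the validation set."""
    search = GridSearchCV(
        scaled(ExtraTreesRegressor(random_state=seed)),
        param_grid={"model__n_estimators": list(n_trees)},
        scoring="neg_mean_squared_error",
        cv=KFold(n_splits=n_splits, shuffle=True, random_state=seed),
    )
    search.fit(X_train, y_train)  # refit=True (default) retrains the best setting on all training data
    best_n = search.best_params_["model__n_estimators"]
    val_mse = mean_squared_error(y_val, search.best_estimator_.predict(X_val))
    return best_n, val_mse


# Synthetic demo (illustration only); 3 folds and a small grid keep it fast.
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(200, 4))
y_demo = 3 * np.sin(X_demo[:, 0]) + X_demo[:, 1] ** 2 + rng.normal(0, 0.1, 200)
X_tr, X_va, y_tr, y_va = train_test_split(X_demo, y_demo, test_size=0.20, random_state=7)
ensemble_scores = compare_ensembles(X_tr, y_tr, n_splits=3)
best_n, val_mse = tune_and_score_extra_trees(X_tr, y_tr, X_va, y_va, n_trees=(10, 50), n_splits=3)
print(ensemble_scores, best_n, round(val_mse, 3))
```

Running `tune_and_score_extra_trees` once on the feature set with POS Tag counts and once on the feature set without them would give the kind of with/without comparison reported above.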

MAJOR-INTERACTIONS MODEL:

The above approach was applied to predict 'Major-Interactions', i.e., the number of major interactions a drug has with other drugs that a user may take simultaneously. The figures below summarize the results. The POS Tag approach offered only modest improvements over a model without POS Tag count features.

(1) Spot Check Algorithms:

(2) Test Algorithms on Standardized Data:

(3) Boosting/Bagging Algorithms on Standardized Data:

(4) FINE-TUNING:

The finalized model with 100 trees was fitted and scored on the Major-Interactions variable, yielding a mean squared error of 1.76 for the POS Tag model versus 1.38 for the base case model (without POS Tag features); on the validation set, the POS Tag features therefore did not lower the error for this target. Owing to the large variation in the target variable, more advanced feature selection methods will be needed to build useful predictive models in this scenario.

- SUMMARY: The target variables of the two models (i.e., 'Major-Interactions' and 'User-Rating') have variances of ~17370 and ~11, respectively. Possibly because of the high variance of the Major-Interactions target, the POS Tag count approach yielded little or no gain there. Nevertheless, engineering additional features from POS Tag counts for a raw data set of 163,000 reviews is interesting in its own right for a select class of regression problems, such as the User Rating model here. Hence, depending on the nature of the data set, POS Tag counts can offer useful predictive power for the target variable.