Feed The Beast: A Roadmap To A Spark-Based Cryptocurrency Analyzer

"Give me a place to stand, and I will move the world." A strong statement from the Greek mathematician, Archimedes, when demonstrating the principle of the lever. Shift this lofty sentiment to today's exploding computational tools, and you'll get a range of wonderment at what has been accomplished with machine learning to a somewhat unsettling possibility expressed by the 3rd option in the Simulation Hypothesis (please don't unplug the machine). But, either way, feeding vast amounts of data to every more powerful machines continues to shake the world.

Of the many solutions to those big data demands in the machine learning community, one of the more popular ones is the marriage between Hadoop and Spark. These technologies provide an exploratory vehicle for this project, and cryptocurrencies, the fuel.

The Goal

Although the primary goal of this project is, selfishly, to explore the intricacies and subtleties of the technologies involved, it is further motivated by an interest in cryptocurrencies. Specifically, a fairly big data solution should allow us to ingest the fast-growing data associated with these virtual currencies, analyze its structure (similar to this paper's constructed bitcoin transaction graph analysis), and make some kind of prediction of interest. In this case, the aim is to predict price movements for a cryptocurrency via Bayesian regression to produce a buy/sell/hold signal (an effective and fairly straightforward strategy that has already been achieved). Of course, this also puts portions of the system under realtime constraints.
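As a rough illustration of the last step only, turning a predicted price change into a buy/sell/hold signal could look something like the sketch below. The function name and the 1% threshold are assumptions made for this example, and the prediction itself (the output of the Bayesian regression) is simply taken as an input.

```python
def trade_signal(predicted_change: float, threshold: float = 0.01) -> str:
    """Map a predicted fractional price change to a trading signal.

    predicted_change: model output, e.g. 0.02 means a predicted +2% move.
    threshold: hypothetical dead band; smaller predicted moves are ignored.
    """
    if predicted_change > threshold:
        return "buy"
    if predicted_change < -threshold:
        return "sell"
    return "hold"


# Three example predictions: a clear rise, a clear drop, and noise.
for change in (0.025, -0.03, 0.004):
    print(change, trade_signal(change))
```

The dead band around zero is a design choice: without it, every tiny predicted move would trigger a trade.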

The Data

The fuel for the machine is cryptocurrency, which means we are talking about the blockchain, arguably the most significant part of the bitcoin/blockchain invention (and a concept leveraged by all other cryptocurrencies). Since the blockchain is itself a distributed database, some individuals newly introduced to the topic may wonder why you need to put a database into another datastore such as the components in Hadoop (HBase/HDFS). The reason is that the duplicated and distributed blockchain is really about data integrity, not processing speed. Hacking is proving that all data is vulnerable to theft and ransom, but in most cases undetected manipulation is the worst scenario (currency transactions and balances, voting tallies, etc.). The distributed ledger properties of the blockchain help solve that problem. In fact, many databases have introduced, or are in the process of introducing, blockchain properties into their structure.
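To make the integrity argument concrete, here is a minimal sketch of a hash chain, the idea underlying a blockchain's tamper evidence. It is a deliberate simplification and not Bitcoin's actual block format: the field names, the string payloads, and the all-zero starting hash are assumptions for illustration.

```python
import hashlib

GENESIS_HASH = "0" * 64  # hypothetical starting value for the first block


def block_hash(previous_hash: str, payload: str) -> str:
    """Hash a block's payload together with the previous block's hash."""
    return hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()


def build_chain(payloads):
    """Link each payload to its predecessor through the predecessor's hash."""
    chain = []
    previous = GENESIS_HASH
    for payload in payloads:
        current = block_hash(previous, payload)
        chain.append({"previous_hash": previous, "payload": payload, "hash": current})
        previous = current
    return chain


def is_valid(chain) -> bool:
    """Recompute every hash; any edited payload breaks the chain."""
    previous = GENESIS_HASH
    for block in chain:
        if block["previous_hash"] != previous:
            return False
        if block_hash(block["previous_hash"], block["payload"]) != block["hash"]:
            return False
        previous = block["hash"]
    return True
```

Building a chain from a few payloads, checking `is_valid`, then editing an early payload and checking again shows the effect: the silent change is detected because the stored hash no longer matches.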

But we want all the details of the blockchain itself ingested into our Hadoop/Spark cluster. These details can take up substantial space. As of the date of this blog post, the bitcoin blockchain takes up almost 145 GB. Ethereum, younger but the second-largest cryptocurrency by market capitalization, is growing even faster and may even hit 1 TB before 2018 (Ethereum also includes a concept called Smart Contracts that provides distributed computation, which certainly adds weight to its blockchain and should open up other promising analyses at some point). And these are just 2 of at least 609 actively traded cryptocurrencies with some market capitalization value.

All this means our processing cluster needs to be initially seeded with the entire current blockchain of any selected cryptocurrencies, and then be able to ingest realtime blockchain updates as well (taking ethereum and bitcoin as the two ends of the range, a new block arrives anywhere from every ~17 seconds to every 10 minutes).
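For a feel of what those intervals mean for ingestion, the quick calculation below converts them into blocks per day. The intervals are the approximate figures quoted above; the function name is just for this example.

```python
SECONDS_PER_DAY = 24 * 60 * 60

# Approximate block intervals quoted in the text.
BLOCK_INTERVAL_SECONDS = {
    "ethereum": 17,      # ~17 seconds
    "bitcoin": 10 * 60,  # ~10 minutes
}


def blocks_per_day(interval_seconds: float) -> float:
    """Number of new blocks expected per day at a given block interval."""
    return SECONDS_PER_DAY / interval_seconds


for name, interval in BLOCK_INTERVAL_SECONDS.items():
    print(name, round(blocks_per_day(interval)))
# ethereum 5082
# bitcoin 144
```

So the realtime path has to absorb roughly 35 times more block updates per day for ethereum than for bitcoin.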

The blockchain data itself can be acquired in a multitude of ways. Since I wanted all original files for later use/testing, I ran the Bitcoin Core software on a cheap EC2 instance and saved the synced data files to an S3 bucket. I then used another free program (rusty-blockparser) to parse and dump the original data files into CSV files. I could then do an HDFS -put operation directly from S3 to initialize the cluster.

System Design

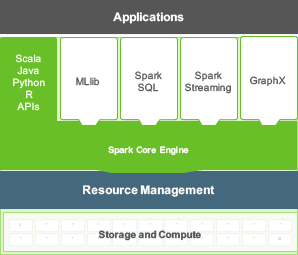

For pure convenience and custom configurability, we are going to take advantage of Amazon's Elastic MapReduce (EMR) service. We can choose pretty much any assortment of big data software and virtual hardware configurations. In fact, there are so many options available that you could spend months tweaking configurations for different priorities. The image above gives an overview of the generic Spark architecture.

Using this image as a reference, we'll work our way up the stack. And since we can always scale the system up once everything is in place, we'll start off supporting a single cryptocurrency, bitcoin, to keep things simple.

- Storage and Compute

We want all the data in memory so Spark can be leveraged to its full potential. It's worth noting, however, that this is not absolutely necessary, since Spark writes data to and pulls data from disk quickly and efficiently when it needs to. But since we are just starting with one currency, it doesn't hurt. And again, there are a million ways we could configure things, but we will go with 3 c3.8xlarge EC2 instances as our main worker nodes. That gives us compute optimized machines, each with 32 CPU cores, 60 GB of RAM, and 640 GB of SSD storage. With 180 GB of RAM across the three workers, this should be sufficient for our 145 GB+ dataset. Our controller master node doesn't have to be as powerful, so we can downscale that instance to an m3.2xlarge, which gives the machine 8 CPU cores, 30 GB of RAM, and whatever EBS storage you want to add. Hadoop's HDFS is the primary data replication system here.
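The sizing above is simple arithmetic, sketched here using only the figures from the text (the variable and function names are made up for the example).

```python
# Figures quoted in the text above.
WORKER_COUNT = 3
RAM_PER_WORKER_GB = 60
SSD_PER_WORKER_GB = 640
DATASET_GB = 145


def cluster_totals(workers: int, ram_gb: int, ssd_gb: int) -> dict:
    """Return combined RAM and SSD capacity across all worker nodes."""
    return {"ram_gb": workers * ram_gb, "ssd_gb": workers * ssd_gb}


totals = cluster_totals(WORKER_COUNT, RAM_PER_WORKER_GB, SSD_PER_WORKER_GB)
ram_headroom_gb = totals["ram_gb"] - DATASET_GB

print(totals)           # {'ram_gb': 180, 'ssd_gb': 1920}
print(ram_headroom_gb)  # 35
```

Treat the 35 GB of headroom as an upper bound: the operating system, YARN, and Spark's own execution memory all claim a share of each node's RAM, so less than the full 180 GB is available for caching data.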

- Resource Management

The cluster resource management system is YARN (Yet Another Resource Negotiator). The name kind of says it all, but it's a pivotal component that you fortunately don't really have to touch.

- Spark Core Engine

Our main area of play. Here, our RStudio/Shiny frontend will invoke all the heavy lifting that Spark can offer. We'll use MLlib (Spark's machine learning library) for blockchain queries, but not for price predictions, since the only Bayesian methods Spark offers are the multinomial and Bernoulli naive Bayes algorithms. These are suggested as good document classifiers, not price signals.

Spark Streaming is an abstraction over numerous methods of streaming data into the cluster so that it can be processed in discrete batches. For example, a Twitter stream could be classified for sentiment before being ingested and updating an application dashboard. For us, a cryptocurrency exchange (Bitfinex's WebSocket API) provides book trades via a websocket that can be consumed through a Spark Streaming TCP connection.
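The "discrete" part is worth a small illustration. The sketch below is plain Python, not the Spark Streaming API: it only mimics the idea of chopping a continuous feed of trades into fixed-interval micro-batches and computing something per batch. The trade tuple layout, the batch length, and the function names are assumptions for the example, not Bitfinex's message format.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical trade record: (timestamp_seconds, price, amount)
Trade = Tuple[float, float, float]


def micro_batches(trades: Iterable[Trade], batch_seconds: float) -> Dict[int, List[Trade]]:
    """Group trades into consecutive time windows of batch_seconds each."""
    batches: Dict[int, List[Trade]] = defaultdict(list)
    for trade in trades:
        batch_index = int(trade[0] // batch_seconds)
        batches[batch_index].append(trade)
    return dict(batches)


def volume_weighted_price(batch: List[Trade]) -> float:
    """Average price of a batch, weighted by traded amount."""
    total_amount = sum(amount for _, _, amount in batch)
    if total_amount == 0:
        raise ValueError("batch has no traded volume")
    return sum(price * amount for _, price, amount in batch) / total_amount


trades = [(0.5, 100.0, 1.0), (1.2, 102.0, 1.0), (2.1, 101.0, 2.0), (5.0, 99.0, 1.0)]
for index, batch in sorted(micro_batches(trades, batch_seconds=2).items()):
    print(index, len(batch), volume_weighted_price(batch))
```

In a real deployment the batching would be handled by Spark Streaming itself; only the per-batch logic would be ours to write.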

And lastly we have Spark's GraphX, the graphs and graph-parallel computation component. This is ideal for our graph analytics goal. Blockchains are ultimately immense graphs that require scalable resources for modeling.
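To show why a blockchain reads naturally as a graph, here is a toy sketch in plain Python (not GraphX). It assumes each transfer has already been flattened to a single sender, receiver, and amount, which glosses over the multiple inputs and outputs of real bitcoin transactions; the addresses and function names are invented for the example.

```python
from collections import defaultdict


def build_graph(transfers):
    """Build a directed graph: sender -> {receiver: total amount sent}."""
    graph = defaultdict(lambda: defaultdict(float))
    for sender, receiver, amount in transfers:
        graph[sender][receiver] += amount
    return graph


def in_degrees(graph):
    """Count how many distinct senders paid each receiver."""
    counts = defaultdict(int)
    for receivers in graph.values():
        for receiver in receivers:
            counts[receiver] += 1
    return dict(counts)


# Hypothetical transfers between made-up addresses.
transfers = [("a", "b", 1.0), ("a", "c", 2.0), ("b", "c", 0.5), ("a", "b", 0.5)]
graph = build_graph(transfers)
print(in_degrees(graph))  # {'b': 1, 'c': 2}
```

At blockchain scale the same structure has far too many vertices and edges for one machine, which is where a graph-parallel engine earns its keep.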

- Applications

This is currently our RStudio/Shiny connection to Spark, but obviously you have the capability to wire up numerous other frontends.

The Beast Choked Too Soon

You can spend a lot of money too quickly if you begin your testing with high-end machine instances. I started with much cheaper instances to probe different characteristics of the system, such as network latency and computational load. The system can quickly bog down if you throw too much data in before figuring some of these characteristics out.

That includes the format the data ends up in after any translation step or as it arrives on an incoming stream. You really have to know your data semantically and structurally.

Things To Be Aware Of

There are always road bumps on any journey. Perhaps a little surprisingly, scaling up to more powerful EC2 instances is one of them. Creating clusters requires that you investigate your maximum allowable number of running instances for a specific machine type. Getting approval for an increase can actually take more than a week, and a request can easily be denied if Amazon engineers feel it could "destabilize" other parts of their system. This is probably the most significant item to be aware of when designing a powerful interactive cluster.

Next On The List

Since Spark can easily scale to thousands of nodes and petabytes of data, there are several enhancements worth investigating. One of Spark's advantages is the number of technologies that can be incorporated with it, such as the open source deep learning software H2O.ai. Able to leverage Spark's scalability, H2O provides a very usable interface for application developers to create machine learning apps for continuous data ingestion and learning.