Anomaly Detection with Fraudulent Healthcare Providers

Introduction

Healthcare insurance fraud is uncommon, but it does exist. According to the National Health Care Anti-Fraud Association, health care fraud costs around $68 billion annually in the US alone. Because this is only a small fraction of the industry’s total revenue, identifying fraudulent activity in healthcare is an exercise in anomaly detection.

In this project, we were given a set of datasets containing patient data along with inpatient (IP) and outpatient (OP) claims data, where each record represents a claim submitted by a healthcare provider to an insurance company. We also had a list of providers with a column indicating whether each provider should be flagged as fraudulent.

Our task was to study the datasets we were presented with and to identify providers who were submitting potentially fraudulent claims.

A major challenge in our case was that our task was to identify potentially fraudulent providers, but our data was at the patient and claims level. Therefore, we needed to use this patient and claims data to aggregate features by provider. In other words, we had to make a new dataset with information for each individual provider, using the information from all of that provider’s claims and the patients they served. With this new provider-based dataset, we could analyze the providers and detect the potentially fraudulent anomalies.
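As a rough sketch of this aggregation step (not the project's actual code), the pandas snippet below collapses claim-level rows into one row per provider. The column names (`Provider`, `ClaimID`, `BeneID`, `Reimbursed`) and the toy values are assumptions made purely for illustration.

```python
import pandas as pd

# Toy claim-level table; column names and values are hypothetical.
claims = pd.DataFrame({
    "Provider": ["P1", "P1", "P1", "P2", "P2"],
    "ClaimID": ["C1", "C2", "C3", "C4", "C5"],
    "BeneID": ["B1", "B1", "B2", "B3", "B4"],
    "Reimbursed": [100.0, 300.0, 200.0, 1000.0, 3000.0],
})

# Collapse claim-level rows into one row per provider.
provider_features = (
    claims.groupby("Provider")
    .agg(
        n_claims=("ClaimID", "count"),          # number of claims submitted
        n_patients=("BeneID", "nunique"),       # distinct patients served
        reimbursed_mean=("Reimbursed", "mean"), # average reimbursed amount
    )
    .reset_index()
)

# Derived ratio features can be built from the aggregated columns.
provider_features["claims_per_patient"] = (
    provider_features["n_claims"] / provider_features["n_patients"]
)
print(provider_features)
```

Each generated feature then becomes one more column in this provider-level table, which is what the models are trained on.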

Feature Generation

Our provider-based table ended up with 45 generated features which were created during the data analysis phase of our project, based on what seemed to be important for identifying fraudulent providers. If we noticed a difference in distribution between fraudulent and non-fraudulent providers, we’d come up with a way to create a feature to capture that information.

We’ll take you through a small sample of our features to give an idea of the kind of information we were looking for.

1. Claim Duration

One simple, yet effective feature that we generated was Duration Mean. For each provider, this is the average of the difference between each Claim Start Date and Claim End Date. In the healthcare industry, this is often referred to as Length of Stay.
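A minimal sketch of how such a feature could be computed with pandas is shown below. The column names `ClaimStartDt` and `ClaimEndDt` and the sample dates are assumptions for the example, not taken from the project's data.

```python
import pandas as pd

# Toy claims; column names and dates are hypothetical.
claims = pd.DataFrame({
    "Provider": ["P1", "P1", "P2"],
    "ClaimStartDt": ["2009-01-01", "2009-02-10", "2009-03-05"],
    "ClaimEndDt": ["2009-01-04", "2009-02-15", "2009-03-05"],
})

# Duration of each claim in whole days (end date minus start date).
start = pd.to_datetime(claims["ClaimStartDt"])
end = pd.to_datetime(claims["ClaimEndDt"])
claims["Duration"] = (end - start).dt.days

# Duration Mean: the average claim duration for each provider.
duration_mean = claims.groupby("Provider")["Duration"].mean()
print(duration_mean)
```

In this toy data the same-day claim of `P2` gets a duration of zero, which is the pattern described for OP claims.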

While Duration on OP claims was mostly zero (which makes sense because OP interactions by definition take place within one day), the Duration Mean on IP claims showed much more variance between providers, and even showed different distributions for clean and potentially fraudulent providers. The plot below shows the distributions for IP Duration Mean, with the orange line being potentially fraudulent providers and the blue line being non-fraudulent providers.

2. Number of Claims & Average Reimbursement

Another interesting pattern in the data, which we also turned into features, involved the Number of Claims and the Average Reimbursed Claim amount per provider. When looking at each group of providers (OP and IP) independently, it appeared that potentially fraudulent providers had a large number of claims with a low Average Reimbursed Amount, as illustrated in the two figures below.

3. Duplication of Claims

One common type of fraud is to submit duplicated claims, where one claim duplicates the key features (diagnosis and procedure codes) of another claim.

We found that the following is the smallest combination of codes needed to define duplicated claims: admit diagnosis code + diagnosis codes 1-4 + procedure code 1.

More restrictive code combinations did not yield fewer duplicated claims, while less restrictive combinations yielded more. Under this definition, only 3.5% of IP claims duplicated at least one other claim, versus 44.3% of OP claims.

This drastic difference is likely explained by the distinct nature of IP and OP claims. OP claims usually have 0 procedure codes and 1-2 diagnosis codes, while IP claims usually have 1 procedure code and 9 diagnosis codes. An OP claim is therefore much more likely to “duplicate” another OP claim. The features we generated included the number and ratio of duplicated claims and a yes-no flag for whether the provider has any duplicated claims at all.
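The duplication features described here could be derived roughly as in the sketch below. It assumes hypothetical column names for the six codes and treats a missing code as a value in its own right, so two claims that both lack a code still match on it. The project's exact handling may have differed.

```python
import pandas as pd

# Hypothetical column names for the code combination that defines a duplicate.
key_cols = ["AdmitDiagCode", "DiagCode1", "DiagCode2",
            "DiagCode3", "DiagCode4", "ProcCode1"]

# Toy claims; None marks a code that was not recorded on the claim.
claims = pd.DataFrame(
    [
        ["P1", "A1", "D1", "D2", None, None, "X1"],
        ["P1", "A1", "D1", "D2", None, None, "X1"],
        ["P1", "A2", "D3", None, None, None, None],
        ["P2", "A1", "D1", "D2", None, None, "X1"],
        ["P3", "A9", "D7", "D8", None, None, None],
    ],
    columns=["Provider"] + key_cols,
)

# Replace missing codes with a placeholder so absent codes compare as equal.
keys = claims[key_cols].fillna("NONE")

# keep=False flags every claim that matches at least one other claim,
# whether that other claim is from the same provider or a different one.
claims["is_dup"] = keys.duplicated(keep=False)

# Per-provider features: count, ratio, and yes-no flag of duplicated claims.
dup_features = claims.groupby("Provider")["is_dup"].agg(
    dup_count="sum", dup_ratio="mean", has_dup="any"
)
print(dup_features)
```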

Next, we found that the duplication features showed different distribution patterns for potentially fraudulent and clean providers, which justified including them in the provider feature table and using them in machine learning. For IP claims, potentially fraudulent providers showed a much higher and more spread-out duplication ratio than clean providers. For OP claims, however, the two groups were indistinguishable, suggesting that IP duplication features would contribute much more to fraud detection than OP duplication features.

Machine Learning

1. Methods to deal with class imbalance

One of the major issues with anomaly detection is that the target variable is highly imbalanced, which must be addressed before training a machine learning model.

To handle the class imbalance, we employed different methods at different stages of the workflow. During the train-test split, we set the stratify argument so that the class proportions are maintained in both the train and test data. When training our Logistic Regression and Random Forest models, we set the class_weight argument to “balanced”. For Gradient Boosting and Support Vector Classifier, we used the synthetic minority oversampling technique (SMOTE) to balance the training data.
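The first two of these steps can be sketched with scikit-learn as follows. The data here is synthetic (generated with `make_classification`), and the 90/10 class ratio, split size, and random seeds are arbitrary assumptions for the example. SMOTE is not shown because it comes from the separate imbalanced-learn package.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic imbalanced data standing in for the provider table (assumption).
X, y = make_classification(
    n_samples=1000, n_features=10, weights=[0.9, 0.1], random_state=0
)

# stratify=y keeps the share of positives the same in train and test.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)
train_rate = y_train.mean()
test_rate = y_test.mean()
print(f"positive rate: train={train_rate:.3f}, test={test_rate:.3f}")

# class_weight="balanced" reweights each class inversely to its frequency,
# so errors on the rare class cost more during training.
clf = LogisticRegression(class_weight="balanced", max_iter=1000)
clf.fit(X_train, y_train)
```

Any oversampling should be applied to the training split only, so that the test data keeps the original class distribution.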

Another approach we used to address class imbalance was to evaluate model performance with recall instead of accuracy. Recall measures the true positive rate: the proportion of actual positives that the model identifies. By maximizing recall, we cast the widest net possible, which gives us confidence in the model’s ability to catch as many fraudulent providers as possible.
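To make the difference between the two metrics concrete, here is a small self-contained illustration with made-up labels (95 clean providers and 5 fraudulent ones). A model that never flags anyone looks excellent on accuracy and useless on recall.

```python
def recall(y_true, y_pred):
    """Recall = TP / (TP + FN): share of actual positives that were caught."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp / (tp + fn) if (tp + fn) else 0.0


def accuracy(y_true, y_pred):
    """Accuracy: share of all predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Made-up labels: 95 clean providers (0) and 5 fraudulent ones (1).
y_true = [0] * 95 + [1] * 5

# A trivial "model" that labels every provider as clean.
always_clean = [0] * 100

acc = accuracy(y_true, always_clean)
rec = recall(y_true, always_clean)
print(f"accuracy={acc:.2f}, recall={rec:.2f}")
```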

2. Logistic Regression (LR)

Our first machine learning model was a penalized logistic regression. This allowed us to accomplish two tasks at once: make predictions on our binary target variable and interpret the results, while also performing empirical feature selection with a lasso penalty term.

After standardizing our features with StandardScaler, we used GridSearchCV with our scoring metric set to recall to find the optimal parameter C. Our best estimator from GridSearchCV gave us a fairly good score and did not show signs of overfitting. The recall score with training data was .90, and the recall score with test data was .89.

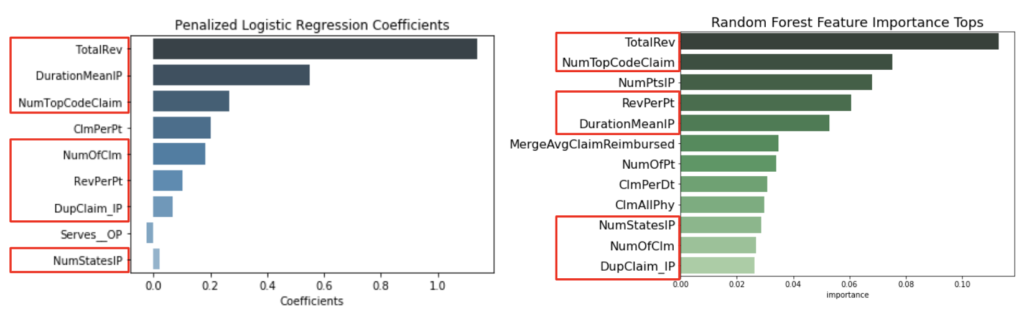

For our secondary purpose of using penalized logistic regression (empirical feature selection), our model performed well again. It reduced 45 features down to 9, giving us insight on the most important features when determining whether a provider should be flagged as potentially fraudulent. As we’ll see later, many of our 9 remaining features were also selected by Random Forest as the most important features, which made us more confident in reporting those as the most valuable features to answer our research question.
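The workflow described in this section (standardize, grid-search C with recall as the score, then read off the features the lasso penalty kept) might look roughly like the sketch below. The synthetic data, the candidate values of C, the `liblinear` solver, and the 5-fold setting are all assumptions for illustration, so the number of surviving features will not match the project's 9.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic imbalanced data standing in for the provider table (assumption).
X, y = make_classification(
    n_samples=600, n_features=20, n_informative=4,
    weights=[0.9, 0.1], random_state=0,
)

# Scaling inside the pipeline means it is refit on each CV training fold.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("lr", LogisticRegression(penalty="l1", solver="liblinear",
                              class_weight="balanced")),
])

# Smaller C means a stronger lasso penalty and more coefficients at zero.
grid = GridSearchCV(pipe, param_grid={"lr__C": [0.01, 0.1, 1.0]},
                    scoring="recall", cv=5)
grid.fit(X, y)

# Features whose coefficient is non-zero are the ones the lasso kept.
coefs = grid.best_estimator_.named_steps["lr"].coef_.ravel()
selected = np.flatnonzero(coefs)
print("best C:", grid.best_params_["lr__C"])
print("kept", len(selected), "of", len(coefs), "features")
```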

3. Random Forest (RF)

We chose to try a Random Forest Classifier on this dataset because, by design, it behaves somewhat like lasso logistic regression in that it yields both a model and a ranking of the most important features. Its results let us compare important features between the two models.

Once fitted, our best model from the grid search scored 0.94 on the train dataset and 0.70 on the test dataset. Even though it was more overfit than Logistic Regression, both models reported almost the same set of important features, which we will discuss later on.
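As an illustration of how the train-test comparison and the feature ranking can be read from a fitted forest, here is a short sketch on synthetic data. The hyperparameters are arbitrary assumptions and no grid search is run, so the scores it prints are unrelated to the ones reported above.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Synthetic imbalanced data standing in for the provider table (assumption).
X, y = make_classification(
    n_samples=800, n_features=15, n_informative=5,
    weights=[0.9, 0.1], random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

rf = RandomForestClassifier(
    n_estimators=100, class_weight="balanced", random_state=0
)
rf.fit(X_train, y_train)

# A large gap between train and test recall is a sign of overfitting.
train_recall = recall_score(y_train, rf.predict(X_train))
test_recall = recall_score(y_test, rf.predict(X_test))
print(f"train recall={train_recall:.2f}, test recall={test_recall:.2f}")

# Impurity-based importances sum to 1; sort to get the top-ranked features.
importances = rf.feature_importances_
top5 = np.argsort(importances)[::-1][:5]
print("top 5 feature indices:", top5.tolist())
```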

4. Gradient Boosting Classifier (GBC)

Next, we explored gradient boosting classifiers, as they often perform better than Random Forest on imbalanced data. Each new tree is fit to the residuals of the trees before it, which reduces model bias. Hyperparameters tuned included max_features, min_samples_split, and n_estimators (as in Random Forest), as well as the learning rate.

After the first round of grid search, we chose the least overfit parameter combination as the center of the grid for a second round. Train and test scores both trended upward as the hyperparameters changed. There was a gap between train and test scores, but it was small (< 0.03) and narrowly distributed (std 0.006). Overall, the Gradient Boosting Classifier fit the training data very well (0.99) and performed well on the test data (0.93).
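One way to implement this two-round idea is sketched below: run a coarse grid with `return_train_score=True`, pick the combination with the smallest train-validation gap, and build a finer grid around it. The grid values, the way the second grid is spread around the center, and the synthetic data are all assumptions. SMOTE is omitted to keep the example dependent on scikit-learn only.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import GridSearchCV

# Synthetic imbalanced data standing in for the provider table (assumption).
X, y = make_classification(
    n_samples=400, n_features=10, weights=[0.85, 0.15], random_state=0
)

# Round 1: a coarse grid, keeping train scores so the gap can be measured.
first_grid = {"learning_rate": [0.05, 0.2], "n_estimators": [25, 50]}
search = GridSearchCV(
    GradientBoostingClassifier(random_state=0),
    first_grid, scoring="recall", cv=3, return_train_score=True,
)
search.fit(X, y)

# Gap between mean train and mean validation recall for each combination.
res = search.cv_results_
gap = res["mean_train_score"] - res["mean_test_score"]
center = res["params"][int(np.argmin(gap))]  # least overfit combination
print("least overfit params:", center)

# Round 2: a finer grid centered on the least overfit combination.
second_grid = {
    "learning_rate": [center["learning_rate"] * f for f in (0.5, 1.0, 2.0)],
    "n_estimators": [max(1, center["n_estimators"] + d) for d in (-10, 0, 10)],
}
print("second-round grid:", second_grid)
```

Note that `mean_test_score` in `cv_results_` refers to the held-out validation folds of the cross-validation, not to a separate test set.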

5. Support Vector Classifier (SVC)

Finally, we tried a Support Vector Classifier using linear and nonlinear kernels. With the nonlinear kernels (poly, sigmoid, rbf), unlike with the Gradient Boosting Classifier, the scores of the grid search models mostly oscillated between 0.8 and 0.9. The best model had a train score of 0.85 and a test score of 0.83. With the linear kernel, which was very computationally expensive and time-consuming, the final train and test scores were 0.88 and 0.84, not much higher than those of the nonlinear kernels.

6. Model Comparison & Feature Selection

Based on test recall scores, the performance of models is as follows:

GBC (.93) > LR (.89) > SVC (.84) > RF (.70).

Gradient Boosting Classifier performed the best, followed closely by simple Logistic Regression. Except for Random Forest, the models did not show much overfitting: their train and test scores were close.

Bringing our focus back to the most important features for detecting fraudulent healthcare providers, we focused on Logistic Regression and Random Forest. The 9 features that were selected by Logistic Regression turned out to be highly consistent with the most important features selected by Random Forest. 7 features overlapped.

Many of those features correlate with the size of a provider. In general, bigger providers handle more patients and claims, take in more revenue (deductible + reimbursement), have more claims per patient, see more inpatients from different states, and so on. Our model suggested that such providers are more likely to be fraudulent. Intuitively, bigger providers have more resources with which to conduct fraud, and their high volume of claims gives them a place to bury fraudulent claims and avoid detection.

Our model also highlighted certain features that not only are red flags of fraudulence but also indications of the type of fraud that may have been conducted. Two major types of fraud are fabrication (creating a claim to represent services that never happened) and misrepresentation (exaggerating or distorting rendered services).

For example, Revenue per Patient and Average of Duration IP are strongly correlated with a high probability of fraud. These features, if associated with a truly fraudulent claim, would point to a misrepresentation of actual services. On the other hand, Number of Claims per Patient and Duplicate IP Claims could represent fabricated claims, likely based on popular or existing information from the same or another provider.

Conclusion

At the onset of the project, we were uncertain how our strategy of creating a new dataset of provider-based generated features would play out, but in the end we were not only impressed with the results of our models but also the interpretations that they provided. With in-depth data analysis and a comprehensive approach to machine learning applications, we created models that did well to predict fraudulent providers on unseen data, and identified the features to look out for when investigating potentially fraudulent providers.