Key Learnings from Predicting Housing Prices

Project Overview

We set out to predict housing prices in Ames, Iowa, utilizing over eighty features ranging from square footage to number of rooms to kitchen quality. To effectively optimize our predictions, we established a pipeline to easily preprocess the data, store auxiliary functions, run models, and auto-generate output files. We iteratively implemented model improvements to reduce our prediction error, measured here as root mean squared logarithmic error (RMSLE).

Through this process, we learned a lot about effective pipelines, the nuances of regression, the value of stacking, and the implications for real estate markets. Here are our top five takeaways.

Takeaways

Making a well-designed pipeline can significantly improve efficiency.

We decided to build a pipeline at the outset. This approach has two major advantages: at the individual level, it greatly reduces repeated code; at the team level, each member’s results can be easily reproduced in a standardized way by all other members, which gives the team common ground for validating and improving our methods.

Our pipeline contains the following major units:

- A preprocessing unit: this unit handles data preprocessing, including imputing missing values, enriching the data, and removing outliers. We control which steps run by passing a set of boolean flags. Cross-validating the different choices also helps us identify which preprocessing steps are crucial and which are ineffective.

- A collection of utility functions to help us handle repetitive and common actions.

- A training and evaluation unit: this unit is the core of the project. It trains and cross-validates the models we use. It also produces predictions on the test set that are ready to be submitted, and returns metrics and feature importances for further analysis.

- A stacking function. Besides producing predictions, it can save trained results to a local file, so we don’t need to retrain a model again and again when it is a stable cornerstone of many different stacking configurations.
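As a rough illustration of the flag-driven preprocessing unit described above, here is a minimal sketch. The function name `preprocess`, the flag names, the median imputation, the 4000 sq ft cutoff, and the definition of `TotalSF` are all assumptions made for the example, not the project's actual code.

```python
import pandas as pd


def preprocess(df, impute_missing=True, remove_outliers=True, add_features=True):
    """Hypothetical preprocessing entry point; each flag toggles one step."""
    out = df.copy()
    if impute_missing:
        # Fill numeric gaps with the column median (placeholder strategy).
        numeric_cols = out.select_dtypes(include="number").columns
        out[numeric_cols] = out[numeric_cols].fillna(out[numeric_cols].median())
    if remove_outliers:
        # Drop very large homes; the 4000 sq ft cutoff is illustrative only.
        out = out[out["GrLivArea"] < 4000].copy()
    if add_features:
        # Example enrichment: living area plus basement area (assumed definition).
        out["TotalSF"] = out["GrLivArea"] + out["TotalBsmtSF"]
    return out.reset_index(drop=True)


toy = pd.DataFrame({
    "GrLivArea": [1500, 5600, 2000],
    "TotalBsmtSF": [800.0, None, 1000.0],
})
full = preprocess(toy)
no_outlier_step = preprocess(toy, remove_outliers=False)
```

Because every step sits behind a flag, the same call can be repeated with different flag combinations inside a cross-validation loop to see which steps actually help.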

Carefully going through all the features and understanding the data are crucial.

After taking time to understand the feature space, we started addressing missingness. The data set uses ‘NA’ ambiguously: in some cases an ‘NA’ entry means the feature is unknown, and in others it means the feature is not applicable (e.g., the house has no fireplace). The latter case was easily handled by replacing missing items with a new category signaling that the feature does not exist in the house. The former was treated on a case-by-case basis, using mode imputation or simple regressions. Lastly, we cross-referenced features that relate to one another (e.g., Basement Finish, Basement Height, Basement Condition) to ensure consistency. We found inconsistencies across feature groups and imputed accordingly.
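The two ‘NA’ cases can be sketched with pandas as follows. The column names `FireplaceQu` and `Electrical` and the toy values are assumptions chosen for illustration; which columns fall into which case in the real data has to be decided feature by feature.

```python
import pandas as pd

df = pd.DataFrame({
    "FireplaceQu": ["Gd", None, "TA", None],          # missing means "no fireplace"
    "Electrical": ["SBrkr", "SBrkr", None, "FuseA"],  # missing means unknown
})

# "Not applicable" case: make absence an explicit category.
df["FireplaceQu"] = df["FireplaceQu"].fillna("None")

# "Unknown" case: fall back to the most frequent value (mode imputation).
most_common = df["Electrical"].mode()[0]
df["Electrical"] = df["Electrical"].fillna(most_common)
```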

Our target variable, sale price, has a positive skew, and its relationships with our most predictive features were often non-linear. Additionally, the Kaggle competition evaluates models on root mean squared logarithmic error, which penalizes relative rather than absolute errors. Therefore, predicting an $800,000 home to be $600,000 incurs the same penalty as predicting a $30,000 home to be $40,000. Transforming our target from sale price to the natural log of sale price remedied these problems, and our model results improved materially.

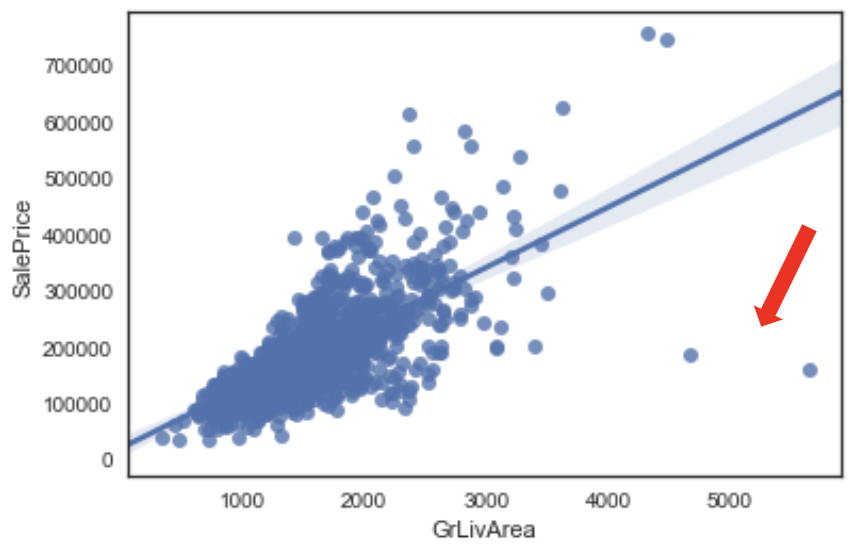
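The penalty claim above can be checked numerically. The sketch below assumes the usual RMSLE definition based on log(1 + y); the helper name `rmsle` is ours.

```python
import numpy as np


def rmsle(y_true, y_pred):
    """Root mean squared logarithmic error, using log(1 + y)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.sqrt(np.mean((np.log1p(y_pred) - np.log1p(y_true)) ** 2))


expensive = rmsle([800_000], [600_000])
cheap = rmsle([30_000], [40_000])
# Both errors are close to log(4/3), even though the dollar gaps differ by 20x.

# Training on the log target: transform before fitting, invert before submitting.
y = np.array([30_000.0, 200_000.0, 800_000.0])
y_log = np.log(y)
y_back = np.exp(y_log)
```

With a log-transformed target, minimizing ordinary squared error during training lines up closely with the competition metric.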

Outliers

In examining the relationships between the features and the target (sale price), a few outliers stood out. For instance, two homes with very large living areas had very low sale prices. After examining these records further, we decided to omit them, which improved model accuracy and generalizability.

Feature Engineering

Next, we performed feature engineering, producing additional predictors. Some of these, especially the feature interactions, proved quite helpful in our modeling. For example, multiplying the various quality ratings (kitchen quality, garage quality, etc.) together got us an aggregate quality for the property and further distinguished high quality from middling quality.
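A minimal sketch of the quality interaction described above. The numeric mapping of the quality labels, the particular columns multiplied, and the new column name `AggQuality` are assumptions for illustration.

```python
import pandas as pd

# Ordinal encoding for the quality scale; this numeric mapping is an assumption.
QUALITY_SCALE = {"Po": 1, "Fa": 2, "TA": 3, "Gd": 4, "Ex": 5}

df = pd.DataFrame({
    "KitchenQual": ["Ex", "TA", "Gd"],
    "GarageQual": ["Gd", "TA", "Fa"],
    "ExterQual": ["Ex", "TA", "TA"],
})

quality_cols = ["KitchenQual", "GarageQual", "ExterQual"]
encoded = df[quality_cols].apply(lambda col: col.map(QUALITY_SCALE))

# Multiplying the ratings widens the gap between uniformly good and mixed homes.
df["AggQuality"] = encoded.prod(axis=1)
```

In this toy data, the uniformly high-quality home scores 100, while the average home (27) and the mixed home (24) land close together.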

Different models are good at solving different problems. Try different models and compare!

Given the linear relationships in the data, we hypothesized that an elastic net regression would be predictive of sale price. Regularization allowed us to include numerous features without worrying about multicollinearity or manual feature selection. We used 10-fold cross-validation to tune our parameters and examined residuals closely to identify and address residual patterns. After noticing that the cross-validated lambda tended to score very well in sample and poorly out of sample, we manually increased lambda until the two metrics converged.
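One way to set up a 10-fold cross-validated elastic net is scikit-learn's `ElasticNetCV`. This is a sketch on synthetic data, not the configuration we used; the `l1_ratio` grid and the scaling step are assumptions.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 20))
# Synthetic target: only the first three features matter.
y = X[:, 0] * 3.0 + X[:, 1] * 2.0 - X[:, 2] + rng.normal(scale=0.5, size=200)

model = make_pipeline(
    StandardScaler(),
    ElasticNetCV(l1_ratio=[0.1, 0.5, 0.9], cv=10, random_state=0),
)
model.fit(X, y)

enet = model.named_steps["elasticnetcv"]
chosen_penalty = enet.alpha_           # sklearn calls the lambda parameter "alpha"
n_kept = int(np.sum(enet.coef_ != 0))  # features that survived regularization
r2_in_sample = model.score(X, y)
```

To mimic the manual adjustment described above, one could refit a plain `ElasticNet` with an `alpha` larger than `chosen_penalty` and compare in-sample and held-out scores.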

Aside from the linear models, we also tried two tree-based models: random forests and gradient boosting models. Here are our observations:

- Tree-based models took longer to train than linear models, and using tree-based models alone did not generate good results for us.

- It is very easy to overfit the training set using either random forests or gradient boosting models, and it is hard to strike a balance. You can see the learning curves for random forest models below: the out-of-bag prediction errors plateaued, and the gaps between training error and out-of-bag error are huge.

- Gradient boosting provided better results than random forests. We used the xgboost package, whose efficient implementation trained much faster than the random forest implemented in sklearn.
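The gap between training error and out-of-bag error mentioned above can be measured directly in scikit-learn. The sketch below uses synthetic data and default tree depth, so the numbers only illustrate the pattern.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 10))
y = X[:, 0] * 2.0 + np.sin(X[:, 1]) + rng.normal(scale=1.0, size=300)

forest = RandomForestRegressor(n_estimators=200, oob_score=True, random_state=0)
forest.fit(X, y)

train_rmse = np.sqrt(mean_squared_error(y, forest.predict(X)))
# oob_prediction_ averages, for each row, only the trees that did not train on it.
oob_rmse = np.sqrt(mean_squared_error(y, forest.oob_prediction_))
gap = oob_rmse - train_rmse
```

A large positive `gap` is the overfitting signature: the forest reproduces rows it has seen far better than rows it has not.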

Finally, to further improve our score, we used a common Kaggle practice called stacking. As a guide to stacking on Kaggle puts it: “Stacking (also called meta ensembling) is a model ensembling technique used to combine information from multiple predictive models to generate a new model. Often times the stacked model (also called 2nd-level model) will outperform each of the individual models due its smoothing nature and ability to highlight each base model where it performs best and discredit each base model where it performs poorly. For this reason, stacking is most effective when the base models are significantly different.”

We trained several stacking models. For these, we generally chose:

- a lasso regression with very small alpha

- a bagging trees model: that is, we allow all features to be used in choosing the split

- a ‘moderate’ random forest: usually this means a random forest with max depth around 3 and max features around 5 (we call it moderate because it is still able to fit the training set decently)

- a ‘complicated’ gradient boosting model: one that performs best on its own compared to all other gradient boosting models

- one or two gradient boosting models with very small learning rates and relatively few training rounds.

Note that the gradient boosting models in the last item are far from converged: their learning rates and numbers of training rounds are so small that they cannot even fit the training set to a decent level. We chose these worse-performing models deliberately, after experimenting with many different settings, and the choice aligns with the philosophy that stacking benefits from more diverse models.
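A stack along these lines can be sketched with scikit-learn's `StackingRegressor`. This is not our stacking function: sklearn's `GradientBoostingRegressor` stands in for xgboost, the `RidgeCV` meta-model and all hyperparameter values are assumptions, and the data is synthetic.

```python
import numpy as np
from sklearn.ensemble import (
    BaggingRegressor,
    GradientBoostingRegressor,
    RandomForestRegressor,
    StackingRegressor,
)
from sklearn.linear_model import Lasso, RidgeCV
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(400, 8))
y = 2.0 * X[:, 0] - X[:, 1] + X[:, 2] * X[:, 3] + rng.normal(scale=0.3, size=400)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

base_models = [
    ("lasso", Lasso(alpha=1e-3)),
    # Bagged trees: every feature is a split candidate at each node.
    ("bagging", BaggingRegressor(n_estimators=50, random_state=0)),
    ("moderate_rf", RandomForestRegressor(
        n_estimators=100, max_depth=3, max_features=5, random_state=0)),
    ("strong_gbm", GradientBoostingRegressor(random_state=0)),
    # Deliberately under-trained: tiny learning rate, few rounds.
    ("weak_gbm", GradientBoostingRegressor(
        learning_rate=0.01, n_estimators=20, random_state=0)),
]

# The meta-model is fit on out-of-fold predictions from the base models.
stack = StackingRegressor(estimators=base_models, final_estimator=RidgeCV(), cv=5)
stack.fit(X_train, y_train)
stack_r2 = stack.score(X_test, y_test)
```

Swapping base models in and out of `base_models` and re-scoring is one simple way to test whether a deliberately weak model adds useful diversity.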

Here is another direction we could explore to improve further, given more time: experiment with more tree-based models to get a sense of what ‘simple’, ‘moderate’ and ‘complicated’ mean, then stack different combinations of ‘simple’, ‘moderate’ and ‘complicated’ models and compare them by cross-validation.

One final note on boosting our score: we took an average of our best stacking models. This step was simple yet effective.

Try to understand your model in real life context.

We have built machine learning models with good predictive power, but our job is not done yet: we still need to explore and understand them. The graphs below show the feature importance for both linear regression and random forest. At first sight they look very different, but on closer inspection they share many similarities. The features underlined in red (add_OverallGrade, add_GrLivArea*OvQual, YearsOld, etc.) mostly relate to overall quality or overall condition, and the features underlined in blue (TotalSF, SF_score, BsmtFinSF1, 1stFlrSF, etc.) mostly relate to the size of the house. Viewed this way, both the random forest and the linear regression models place great emphasis on overall quality and house size when predicting sale price.
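A comparison of this kind can be set up by placing the absolute coefficients of a linear model (fit on standardized features) next to a random forest's impurity-based importances. The sketch below uses synthetic data with hypothetical feature names; it shows the mechanics only, not our actual results.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
names = ["quality", "size", "noise_a", "noise_b"]  # hypothetical features
X = pd.DataFrame(rng.normal(size=(500, 4)), columns=names)
y = 3.0 * X["quality"] + 2.0 * X["size"] + rng.normal(scale=0.5, size=500)

# Standardize so linear coefficients are comparable across features.
X_scaled = StandardScaler().fit_transform(X)
linear = LinearRegression().fit(X_scaled, y)
forest = RandomForestRegressor(n_estimators=100, random_state=0).fit(X, y)

importance = pd.DataFrame({
    "linear_abs_coef": np.abs(linear.coef_),
    "forest_importance": forest.feature_importances_,
}, index=names)

top_linear = set(importance["linear_abs_coef"].nlargest(2).index)
top_forest = set(importance["forest_importance"].nlargest(2).index)
```

The two columns are on different scales, so it is the rankings, not the raw values, that should be compared across models.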

How should we understand this finding in a real-life context? Imagine the normal procedure of buying a house. We usually go to the open house to take a look. Unlike what we did in this project, most people decide within about half an hour whether they want to buy and what price range they are willing to pay. It is impossible to go through a checklist of all 100 features. What are the most important factors for them to consider? First impressions trump the checklist. If you really like the house, you will likely consider a higher price justified. What makes a good first impression? The overall quality, just as the machine learning models showed. Other concerns also carry a great deal of weight, such as whether the house meets the buyer’s particular requirements. Questions people ask include: How many people do we have in the family (now and in the future)? How many cars do I have? Do I need a basement? All these considerations relate to needs, and needs largely determine the size of the target house, which the models also reveal. Therefore, the result of the feature importance analysis is consistent with our real-life experience.

Conclusion

In summary, we gained a lot of practical experience with the machine learning workflow from this housing price prediction project. In our opinion, the key to a successful machine learning project is to keep trying: employing different feature engineering strategies, various models with different parameters, and so on. The algorithm will fail in many cases, but we can still learn from the failures. Throughout these experiments, a well-designed pipeline is extremely helpful for reducing heavy repetitive work. One last note: do not stop once you can make accurate predictions. Try to make sense of your model and draw actionable insights from it; this is how machine learning helps us in real life.

See our GitHub for more details.