Accurately Predicting House Prices and Improving Client Experience with Machine Learning

Introduction

Initial meetings with real estate clients often involve a reality check around price expectations. For example, a buyer comes in expecting they can get much more for their money than is reasonable given the market. Perhaps a seller assumes they can get a price much higher than is typical due to anecdotal evidence that the market is much hotter than it is. Depending on the severity of the disconnect, these meetings can be tense and may be less likely to end in a transaction, resulting in lost time. We will demonstrate how house price prediction using Machine Learning can be leveraged to engage with the public at scale by utilizing a web application.

Using a pared-down, user-friendly version of the model, this application allows prospective clients to explore the estimated market value of their property of interest. This lets them level-set price expectations before scheduling time with an agent, and it lets the firm increase the share of client meetings that end in a transaction, saving both the client and the firm time.

In addition to building credibility, a pared-down version can also be used as an example while advertising the comprehensive model offered to signed clients. These cutting-edge techniques that are used and trusted across various sectors can set a realty group apart from the competition. Demonstrating a reliance on data-based insights adds to a firm's credibility and allows agents to answer common consumer questions with less manual effort. The result is a higher proportion of leads with realistic expectations who are more likely to choose the realtor to represent them in their real estate transaction.

Research Questions

- What factors affect house prices?

- Can we train a model to accurately predict house price?

Project Goals

- Improve price prediction accuracy using ML

- Develop an intuitive application (Shiny App) to improve client experience

Potential Benefits

- Create realistic expectations during client onboarding

- Increase the proportion of new clients that move forward with a transaction

Data Exploration

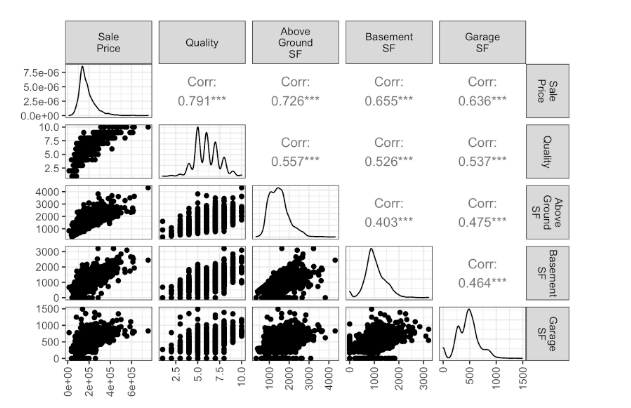

Exploratory data analysis examined the distributions of the continuous variables, looking specifically for normality or interesting patterns. Each variable was plotted against the target variable, sale price, in a scatter plot to assess any relationship. Categorical variables were checked for completeness and for how their levels were distributed across the data set.

An example of the correlation matrices examined during exploratory data analysis can be seen below.

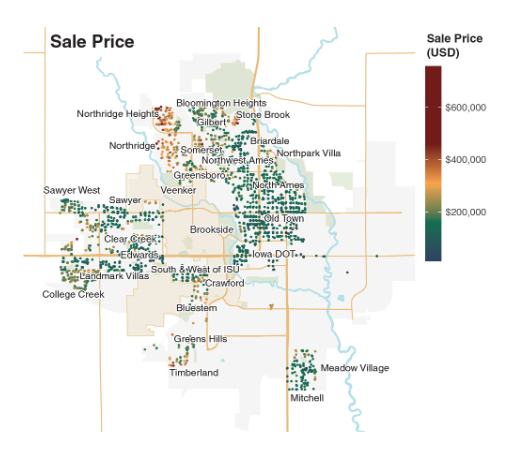

There is an old adage that emphasizes the three most important factors for real estate properties: “location, location, location!”. In the Ames market, this old saying holds true. Mapping the geo-coordinates of each house against its sale price reveals a pattern.

Houses located in the northernmost region of the city commanded the highest prices, being situated between the creek and the largest local green space, whilst still maintaining distance from the busier town centre where Iowa State University is located. Some more expensive homes are also seen in the western and southern parts of the city.

When examining the location of houses based on age, it is no surprise that the oldest homes are found closer to the city centre. Newer developments appear to have been built progressively farther from the town centre, toward the northern, western, and southern edges, as the city expanded over time.

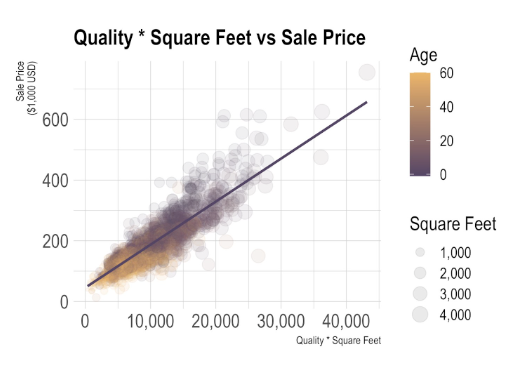

House size is a key feature that can be expected to play a large role in predicting house prices. The graph below displays the expected trend: as house size increases, price also increases. The vast majority of homes in Ames are single-family detached homes, with most falling between ~750 ft² and ~2,000 ft².

As house sizes increase beyond 2,000 ft², the data fan out more, suggesting that traits other than size may influence house prices at this point: two houses of 3,600 ft² can have wildly different prices, with differences of up to $200,000.

The overall quality and condition of a house are important features, and high scores in both are expected to be universally desirable. To examine the relationship between these two features with respect to price, a Pearson’s correlation matrix was created. Overall quality and overall condition appear to be correlated, as is evident from the gradual increase in average price per ft² for homes with higher scores for both.

In contrast, if a house has a poor overall quality score, it is also likely to have a poor overall condition rating and a lower square foot price.

To see how basement properties relate to price, houses were first grouped into age categories and faceted by the proportion of the basement that is finished. Houses less than 20 years old had the highest proportion of finished basements and were also of the highest build quality.

In contrast, new to middle-aged homes (< 20 years old) tended to have a greater proportion of finished basements and were typically of average build quality. The contrast sharpens as house age increases: among houses more than 100 years old, the majority have 0% finished basement and are of poor build quality.

Segmenting the data by foundation material with respect to age and price shows an interesting shift in the primary building materials used over time. The oldest, pre-war homes (more than 70 years old) were typically built with a brick and tile foundation.

In the post-war era, the primary material shifted towards cinder block in middle-aged homes (20-70 years old). The newest homes (less than 20 years old) are built almost exclusively on poured concrete, which has higher raw material costs and results in stronger foundations; these homes command the highest prices.

This is an interesting observation, as Ames happens to be situated in Iowa’s tornado alley, seeing an average of 337 tornadoes a year. Buyers looking to make a wise investment and to protect their homes from frequent severe weather may gravitate towards houses with higher basement quality and stronger foundations.

Model Preparation

Data cleaning

The majority of the missing values were imputed with zeroes or “NA” (not applicable), depending on the standard outlined in the data dictionary. For example, the area of the exterior covered by masonry was set to zero when the value was missing, and the masonry type was set to “None”, indicating that no masonry area was present. As a second example, blanks in variables related to basement quality were set to “NA” when the total basement square footage was zero.

Missing values for lot frontage (i.e. linear feet of street connected to the lot) were imputed from the lot area. We assumed a relationship between lot area and lot frontage, and the simplest way to estimate it was to assume that all lots are rectangular.

For houses with lot frontage present in the data set, the length of the lot was calculated by dividing lot area by lot frontage (assumed to represent width). The median length was then computed for each lot configuration type (e.g. inside lot, corner lot, etc.). This median was used to estimate the lot frontage for houses with missing values: lot frontage (width) = lot area / median lot length.
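The imputation rule above can be sketched in a few lines of plain Python. This is only an illustration under the rectangular-lot assumption stated in the text; the dictionary keys (`lot_area`, `lot_frontage`, `lot_config`) and the sample values are hypothetical, not the column names or data used in the project.

```python
from statistics import median

def impute_lot_frontage(houses):
    """Fill missing 'lot_frontage' from lot area and the median lot length
    of the same lot configuration (lots assumed rectangular)."""
    # Lot length = area / frontage, collected per configuration type.
    lengths = {}
    for h in houses:
        if h["lot_frontage"] is not None:
            lengths.setdefault(h["lot_config"], []).append(
                h["lot_area"] / h["lot_frontage"]
            )
    median_length = {cfg: median(vals) for cfg, vals in lengths.items()}

    imputed = []
    for h in houses:
        h = dict(h)  # copy so the input records are left untouched
        if h["lot_frontage"] is None and h["lot_config"] in median_length:
            h["lot_frontage"] = h["lot_area"] / median_length[h["lot_config"]]
        imputed.append(h)
    return imputed

# Hypothetical records: two inside lots with known frontage, one without.
houses = [
    {"lot_area": 8000, "lot_frontage": 80, "lot_config": "Inside"},     # length 100
    {"lot_area": 9600, "lot_frontage": 80, "lot_config": "Inside"},     # length 120
    {"lot_area": 11000, "lot_frontage": None, "lot_config": "Inside"},  # to impute
]
filled = impute_lot_frontage(houses)
print(filled[2]["lot_frontage"])  # 11000 / median(100, 120) = 100.0
```

Using the median rather than the mean keeps a few unusually deep or shallow lots from dominating the estimate for each configuration type.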

Basement square feet were broken into categories based on how finished each portion of the basement was. We transformed these data from their original form into one more in line with the rest of the data. Specifically, we created a new column for each type of basement finish and populated it with the number of square feet of that finish type in each house.
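A rough sketch of this reshaping, for a single house, is shown here. It assumes the raw data follow the public Ames data dictionary layout, with two finish-type/area pairs (`BsmtFinType1`/`BsmtFinSF1`, `BsmtFinType2`/`BsmtFinSF2`) plus `BsmtUnfSF`; the output column names (`bsmt_sf_*`) are made up for illustration.

```python
# Finish-type codes as listed in the Ames data dictionary (assumed here).
FINISH_TYPES = ["GLQ", "ALQ", "BLQ", "Rec", "LwQ", "Unf"]

def basement_finish_columns(house):
    """Return one square-foot column per basement finish type for one house."""
    out = {f"bsmt_sf_{t}": 0 for t in FINISH_TYPES}
    pairs = (("BsmtFinType1", "BsmtFinSF1"), ("BsmtFinType2", "BsmtFinSF2"))
    for type_key, sf_key in pairs:
        fin_type = house.get(type_key)
        if fin_type in FINISH_TYPES:  # skips "NA" / missing finish types
            out[f"bsmt_sf_{fin_type}"] += house.get(sf_key) or 0
    # Unfinished area is stored in its own column in the raw data.
    out["bsmt_sf_Unf"] += house.get("BsmtUnfSF") or 0
    return out

# Hypothetical house: 700 sq ft good living quarters, 150 sq ft rec room,
# 200 sq ft unfinished.
example = {
    "BsmtFinType1": "GLQ", "BsmtFinSF1": 700,
    "BsmtFinType2": "Rec", "BsmtFinSF2": 150,
    "BsmtUnfSF": 200,
}
cols = basement_finish_columns(example)
print(cols)
```

A house with no basement simply gets zeros in every new column, which matches the zero-imputation convention described earlier.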

Feature engineering

Some features were engineered by combining or replacing data in meaningful ways to allow for a more understandable or powerful predictive model. The features we created from the data included the following:

- Outdoor Space: the sum of the square feet of all types of outdoor structures meant to extend living space (e.g. wooden decks, screened porches, etc.)

- House Footprint: the percent of the lot area consumed by the house and garage; this can also be regarded as a proxy for privacy or housing concentration (i.e. the larger the portion of the lot consumed by the house and garage, the less space between the house and the property line and therefore the less privacy)

- Age of House: year sold minus year built, or minus year remodeled if applicable

- First Floor Percentage: an informational interview indicated that master bedrooms on the first floor are more desirable, so the ground floor may matter more to house price than other floors

- Approximate Room Size: square feet above grade / total rooms above grade; given the semi-recent popularity of open floor plans, this measure was meant to capture whether that trend had an impact on house price

- Area with Good Quality: gives square feet more weight/value in houses of higher quality

- Area in Good Condition: gives square feet more weight/value in houses with a higher condition score
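As a rough sketch, the engineered features listed above could be computed per house as follows. The exact formulas used in the project are not given in the text, so several choices here are assumptions: the footprint uses first-floor area plus garage area, first-floor percentage is first-floor area over above-grade living area, room size is taken as square feet per room, and the quality and condition features multiply the score by above-grade living area. All dictionary keys and sample values are hypothetical.

```python
def engineer_features(h):
    """Compute illustrative engineered features for one house (a dict)."""
    living_area = h["gr_liv_area"]  # square feet above grade
    outdoor = (h["wood_deck_sf"] + h["open_porch_sf"]
               + h["enclosed_porch_sf"] + h["screen_porch_sf"])
    return {
        "outdoor_space": outdoor,
        # Percent of the lot covered by house and garage (assumed definition).
        "house_footprint_pct": 100 * (h["first_flr_sf"] + h["garage_area"]) / h["lot_area"],
        # Years since the later of construction and remodel.
        "age": h["yr_sold"] - max(h["year_built"], h["year_remod"]),
        "first_floor_pct": 100 * h["first_flr_sf"] / living_area,
        "room_size": living_area / h["tot_rooms"],
        # Interaction terms: area weighted by quality / condition score.
        "quality_area": h["overall_qual"] * living_area,
        "condition_area": h["overall_cond"] * living_area,
    }

house = {
    "lot_area": 10000, "first_flr_sf": 1200, "gr_liv_area": 2000,
    "garage_area": 400, "wood_deck_sf": 100, "open_porch_sf": 50,
    "enclosed_porch_sf": 0, "screen_porch_sf": 0,
    "year_built": 1990, "year_remod": 2000, "yr_sold": 2008,
    "tot_rooms": 8, "overall_qual": 7, "overall_cond": 5,
}
features = engineer_features(house)
print(features)
```

For this hypothetical house the footprint is 16% of the lot, the age is 8 years (measured from the remodel), and the average room is 250 square feet.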

Multiplying quality by square feet conveys more value to higher quality properties per square foot than to those of lower quality. This interaction of quality and size also revealed the cleanest relationship in the data. When compared to square feet versus price, the variance around the relationship line was more homogeneous in the quality * square feet graph.

It also makes sense that a smaller, higher-quality house may be equivalent in price to a larger, lower-quality house. Note that newer houses tend to be rated higher in quality, and newer houses tend to be larger than older houses.

The box plot below shows a trend for newer houses to be rated as higher quality than older houses. This relationship may be worth exploring further, specifically to determine whether the quality rating reflects inherent quality or is linked to stylistic trends that consumers associate with quality because they are more desirable. The middle quality ratings span the full range of house ages, but the low ratings contain only the oldest houses and the high ratings only the newest.

The slight relationship between house age and size, somewhat visible in the quality * square feet graph above, is shown more clearly below. Essentially, newer houses tend to be larger than older houses.

Geographic Value

Neighborhoods were labeled inconsistently, and their sizes (i.e., the number of houses in each neighborhood) varied. Some neighborhoods had fewer than ten sales, which could result in overfitting. Instead of using a categorical feature, we engineered a new numerical feature that captures geographic value. The geographic value is determined by a superposition of Gaussian surfaces. Here is an example of the geographic value fitted after the ridge regression model.

The geographic value indicates how much value is added to the house for being at that location. The same house with the same features will have a higher price if the geographic value is higher. Using this feature, we can estimate the house price without assigning the house to a particular neighborhood. Interestingly, the geographic value correlated with the crime rate: regions with higher crime rates have lower geographic values. This supports the view that the geographic value developed here reflects real variation in Ames and not just arbitrary fluctuation.
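One way to read "superposition of Gaussian surfaces" is as a sum of smooth bumps over the map, each centred on a location with its own height and width. The sketch below shows only that idea. The text does not say how the surfaces were centred, scaled, or fitted, so the centres, amplitudes, and widths here are hypothetical placeholders, not values from the project.

```python
import math

def geographic_value(lat, lon, bumps):
    """Sum of isotropic Gaussian surfaces evaluated at (lat, lon).

    `bumps` is a list of (centre_lat, centre_lon, amplitude, sigma) tuples.
    A positive amplitude raises the value near the centre, a negative one
    lowers it; sigma controls how far the effect spreads.
    """
    total = 0.0
    for c_lat, c_lon, amplitude, sigma in bumps:
        dist_sq = (lat - c_lat) ** 2 + (lon - c_lon) ** 2
        total += amplitude * math.exp(-dist_sq / (2 * sigma ** 2))
    return total

# Hypothetical surfaces: a premium area in the north, a discount area downtown.
bumps = [
    (42.06, -93.64, 1.0, 0.01),
    (42.02, -93.61, -0.5, 0.01),
]
at_north_centre = geographic_value(42.06, -93.64, bumps)
far_away = geographic_value(42.50, -93.00, bumps)
print(at_north_centre, far_away)
```

Because the result is a single continuous number, a house can be scored from its coordinates alone, which is what removes the need for a neighborhood label.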

Machine Learning Algorithms

We began with 145 features, including the original data, one-hot encoded categorical features, and engineered features. Exploratory data analysis first reduced these to 45, and Lasso regression then reduced them to 32. The data were then split into train (70%) and test (30%) groups without stratification.

Multiple Linear Regression

First, Lasso regression was used to simplify the model, reducing redundancy and the risk of multicollinearity. The graph below shows the coefficients of some variables at different lambda values (log scale). The features that “survive” up to a higher lambda value make a larger contribution (either positive or negative) to the price. For example, quality and condition have a large impact on the house price compared to the number of bedrooms and bathrooms.
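A minimal sketch of Lasso-based feature selection followed by a 70/30 split is given here. It assumes scikit-learn, which the text does not name, and uses synthetic data in place of the Ames features. In scikit-learn the penalty strength called lambda in the text is the `alpha` parameter; the value 0.5 is illustrative only.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

def lasso_select(X, y, alpha):
    """Return indices of features whose Lasso coefficient is non-zero."""
    # Standardize so the L1 penalty treats all features on the same scale.
    X_std = StandardScaler().fit_transform(X)
    model = Lasso(alpha=alpha, max_iter=10_000).fit(X_std, y)
    return np.flatnonzero(model.coef_)

# Synthetic stand-in: only the first two of ten features drive the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 10))
y = 5.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(scale=0.1, size=300)

kept = lasso_select(X, y, alpha=0.5)
print("features kept:", kept)

# 70/30 split without stratification, as described in the text.
X_train, X_test, y_train, y_test = train_test_split(
    X[:, kept], y, test_size=0.3, random_state=0
)
```

Raising `alpha` drives more coefficients to exactly zero, which is the "survival" behaviour the coefficient-path graph illustrates.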

Then, the Ridge regression model was trained. Grid search with 3-fold cross-validation identified a lambda of 0.0032 as the best for the Ridge model, which returned an R2 of 0.900. The figures below compare the predictions to the actual sale prices, and the residual map shows which houses the pricing model overestimates (red) and underestimates (blue).
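The tuning step could look roughly like the following, assuming scikit-learn (not named in the text) and synthetic data. The candidate grid of `alpha` values (scikit-learn's name for lambda) is hypothetical; only the 3-fold setting comes from the text.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

def tune_ridge(X_train, y_train, alphas, folds=3):
    """Grid-search the Ridge penalty with k-fold cross-validation."""
    pipeline = make_pipeline(StandardScaler(), Ridge())
    search = GridSearchCV(
        pipeline, {"ridge__alpha": list(alphas)}, cv=folds, scoring="r2"
    )
    return search.fit(X_train, y_train)

# Synthetic stand-in for the selected Ames features.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 8))
true_coef = np.array([4.0, -2.0, 1.0, 0.0, 0.0, 0.5, 0.0, 3.0])
y = X @ true_coef + rng.normal(scale=0.5, size=300)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

alphas = np.logspace(-4, 1, 11)  # hypothetical search grid
search = tune_ridge(X_train, y_train, alphas)
best_alpha = search.best_params_["ridge__alpha"]
test_r2 = search.score(X_test, y_test)  # R2 on the held-out 30%
print(best_alpha, round(test_r2, 3))
```

Keeping the scaler inside the pipeline means it is refit on each training fold, so the cross-validation scores are not inflated by information from the validation fold.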

Using the set of features selected by the Lasso regression, we implemented another regularized model, Elastic Net regression, which uses a weighted combination of the L1 and L2 penalties. Through grid search with 5-fold cross-validation, the model returned an R2 score of 0.898 with an optimal lambda of 9.326 × 10⁻⁶. The optimal L1 ratio was 0.0, so the model regularized exclusively with the L2 norm, making it equivalent to a Ridge regression.
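Why an L1 ratio of 0.0 collapses Elastic Net into Ridge can be read off the objective. Assuming the scikit-learn parameterization (an assumption, since the text does not name the library), with λ the penalty strength, ρ the L1 ratio, n the number of training rows, and w the coefficient vector:

```latex
\min_{w}\; \frac{1}{2n}\,\lVert y - Xw \rVert_2^2
  \;+\; \lambda\,\rho\,\lVert w \rVert_1
  \;+\; \frac{\lambda\,(1-\rho)}{2}\,\lVert w \rVert_2^2
\qquad\xrightarrow{\;\rho \,=\, 0\;}\qquad
\min_{w}\; \frac{1}{2n}\,\lVert y - Xw \rVert_2^2
  \;+\; \frac{\lambda}{2}\,\lVert w \rVert_2^2
```

With ρ = 0 the L1 term vanishes and only the squared L2 penalty remains, which is the Ridge objective up to a rescaling of λ. That rescaling is one reason the optimal lambda values reported for the two models need not match numerically.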

Support Vector Regression

A support vector regression model using a radial basis function kernel and epsilon set to 0.1 and gamma set to 0.01 returned an R2 of 0.918. Grid search and cross-validation with 5 folds returned epsilon of 0.01, gamma unchanged, C of 1, and an R2 score of 0.916.

Random Forest Regression

The Random Forest ensemble method was implemented next, since it is a supervised learning method that performs well on both linear and non-linear problems. Random Forest models construct many decision trees during training and average the trees’ outputs to produce the prediction.

The baseline Random Forest regression returned an R2 of 0.898 with 1,000 trees and 7 max features (the number of features considered for each split in each tree). Grid search with 5-fold cross-validation indicated the optimal parameters to be 500 trees, a minimum of 1 sample per leaf, and a minimum of 2 samples per split, yielding an R2 of 0.899.
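The averaging behaviour of a random forest can be checked directly. The sketch below assumes scikit-learn (not named in the text) and synthetic data; it uses 50 trees to keep the example fast, while `max_features=7` mirrors the setting mentioned above.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic stand-in with a non-linear term in the first feature.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))
y = X[:, 0] ** 2 + 2.0 * X[:, 1] + rng.normal(scale=0.1, size=200)

forest = RandomForestRegressor(
    n_estimators=50, max_features=7, random_state=0
).fit(X, y)

# Each fitted tree predicts on its own; the forest reports their mean.
sample = X[:5]
per_tree = np.stack([tree.predict(sample) for tree in forest.estimators_])
manual_mean = per_tree.mean(axis=0)
matches = np.allclose(manual_mean, forest.predict(sample))
print(matches)
```

Limiting each split to a random subset of features decorrelates the trees, which is what makes their average more stable than any single tree.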

Gradient Boosting

Gradient boosting is a method of converting weak learners (performing slightly better than random chance) into strong learners by learning from and reducing the error with every tree that is added. When gradient boosting was implemented with 5,000 trees, a learning rate of 0.01, and 7 max features, an R2 of 0.922 was returned.
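To make "reducing error with every tree" concrete, here is a toy, from-scratch version of gradient boosting with squared-error loss on one feature, where each weak learner is a single-split stump fitted to the current residuals. It is a teaching sketch on made-up data, not the library implementation behind the scores reported here.

```python
def fit_stump(xs, residuals):
    """Best single-split regression stump on a 1-D feature (squared error)."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # keep the right side non-empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean

def gradient_boost(xs, ys, n_trees=100, learning_rate=0.1):
    """Additive model: mean baseline plus shrunken stumps fit to residuals."""
    base = sum(ys) / len(ys)
    stumps = []
    preds = [base] * len(ys)
    for _ in range(n_trees):
        # For squared loss the negative gradient is simply the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for p, x in zip(preds, xs)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)

xs = [i / 10 for i in range(40)]
ys = [x ** 2 for x in xs]

def training_mse(n_trees):
    model = gradient_boost(xs, ys, n_trees=n_trees, learning_rate=0.1)
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

errors = [training_mse(n) for n in (1, 10, 100)]
print(errors)  # training error shrinks as more stumps are added
```

A small learning rate means each tree corrects only a fraction of the remaining error, which is why configurations like the one above pair a low rate with thousands of trees.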

XGBoost, a machine learning library well known for winning Kaggle competitions, is an optimized implementation of gradient boosting. When implemented using 5,000 trees, a learning rate of 0.01, 7 max features, gamma of 0.01, row subsample of 0.8, and column sample of 0.8, an R2 of 0.923 was returned.

K-fold cross-validation and grid search were attempted for this algorithm but were quickly aborted, as the search was too computationally expensive to yield results within a reasonable period of time. Despite this, XGBoost produced the best results, slightly improving upon the gradient boosting score and yielding the highest R2 score of all the models tried.

The Most Important Features

To identify the house features that contributed most to predicting price, we computed the permutation feature importances for the Random Forest CV, Gradient Boost, XGBoost and SVR CV models, then calculated a weighted average importance score. The plot below shows the ten features with the highest weighted importance scores, as well as the individual model importance scores.

Permutation importance is favored over the well-known feature importance calculations built into tree-based models because it is more reliable and can be applied to any model. It determines feature importance by measuring how much the prediction error increases when a feature is randomly shuffled, or permuted. First, a baseline score is taken on a validation data set.

Then, a feature column from the validation set is shuffled, and the model is scored again. If the model does not rely on that feature for its predictions, the score will remain unchanged. Features that do not help the model’s predictive power can therefore be identified by a change in error of zero.

In contrast, if the feature is important for the model predictions, the random shuffling of the feature column will result in a reduced score or accuracy. This is done over the entire feature space to determine relative order of importance.

Although this method is more computationally expensive than the impurity-based calculations behind tree-based feature importances, it is still efficient to run since model retraining is not required. In addition, it is considerably less biased towards continuous variables and high-cardinality categorical variables.
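The procedure described above fits in a short, dependency-free sketch. The "model" here is a hypothetical already-fitted predictor that uses only the first feature, and the data are synthetic, so the expected pattern is a large score drop for feature 0 and none for feature 1.

```python
import random

def r2_score(y_true, y_pred):
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean drop in R2 when each column of X is shuffled (no retraining)."""
    rng = random.Random(seed)
    baseline = r2_score(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and y
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            permuted = r2_score(y, [predict(row) for row in X_perm])
            drops.append(baseline - permuted)
        importances.append(sum(drops) / n_repeats)
    return importances

# Synthetic validation set: y depends on feature 0 only.
data_rng = random.Random(42)
X = [[data_rng.random(), data_rng.random()] for _ in range(100)]
y = [3.0 * row[0] + data_rng.gauss(0, 0.05) for row in X]

def predict(row):
    """Hypothetical fitted model that ignores feature 1."""
    return 3.0 * row[0]

importances = permutation_importance(predict, X, y)
print(importances)
```

Averaging over several shuffles reduces the noise from any single permutation. For fitted scikit-learn estimators, `sklearn.inspection.permutation_importance` provides the same idea as a library call.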

Weighted permutation scores pointed to overall quality as being the most important predictor of house price, followed by first floor square footage, room size, total rooms above ground, and number of garage cars.

Most of the individual models returned top features which were in agreement with the other models, with the exception being the SVR (with cross validation and grid search), which gave total rooms above ground and room size a higher weight than other models.

Model Deployment

The model was deployed via a web-based application built with Shiny in RStudio. The application provides the user with an interactive interface to explore house prices in Ames by adjusting various characteristics of the house, including the number of bedrooms, number of bathrooms, square feet, and neighborhood. Descriptive visualizations on the exploratory tab allow users to examine the distribution of price points given key characteristics.

The prediction tab returns a price prediction along with a visual representation of how that prediction compares with the variation among other houses of similar size and characteristics. This demonstrates the functionality and value provided by a pared-down house price prediction model.

"Map" Tab

The Shiny App developed in RStudio was created with ease of use in mind. The prototype uses historical data from Ames, IA, but can easily be adapted to be used with current listings and in any location.

To use the dashboard, the potential buyer would simply adjust the parameters available under the “Map” tab. The user could select a “Neighborhood”, adjust the “Price Range”, and select “House Properties” such as the number of bedrooms or bathrooms.

After the parameters are adjusted, the houses fitting the criteria would be visible on the map. This would give the potential buyer an idea of where (in which neighborhoods or next to which landmarks) houses meeting the selected criteria are available, and of the approximate density of listings to choose from.

"Analysis" Tab

In the “Analysis” tab the user would select the “Neighborhood” from the dropdown menu. The analysis graphs would show:

- The price distribution in that neighborhood

- Quality by square foot price (which in turn shows what quality of houses is available in the selected neighborhood)

- Square foot price by above ground living area

- Price by building type

"Predictions" Tab

In the “Predictions” tab the user would be able to select “Neighborhood”, “Beds”, “Baths”, “Area” and “Building Type”; the outcome would be an approximate price prediction. This would be a useful tool for buyers and sellers alike. Buyers would be able to see how much the house of their dreams would cost and adjust their expectations accordingly. Sellers would be able to enter their own house’s characteristics and see how much they could list it for or expect to sell it for.

Conclusion

After reviewing the Ames, Iowa house sales data set, we noted several interesting relationships and potential avenues for further research and development, including potential trends in foundation type, house size, and quality related to house age.

We cleaned the data mainly by filling in missing values with 0 or NA. Finally, before applying machine learning techniques, we engineered some novel features, including outdoor space, house footprint, square feet * quality rating, and a geographic value better able to contribute to house price estimates than neighborhood names.

These features, along with some of the original data, were used to train multiple regression models (Ridge and Elastic Net), support vector regression, random forest, gradient boosting, and XGBoost. All of the models produced R2 scores of around 0.9 on the test data set; the lowest was 0.898 for random forest, and the highest was 0.923 for XGBoost (hyperparameters not tuned with grid search due to computation time).

The features which were consistently ranked as most important for predicting house prices were overall quality, first floor square footage, room size, total rooms, and garage cars. Other important features included number of baths, house footprint, age, fireplaces, basement baths and basement quality.

Lastly, we deployed a proof-of-concept web-based application to allow potential clients to explore house prices in Ames, Iowa. This app is based on a pared-down version of the multiple regression model and serves two purposes: 1) to allow potential clients to level-set on dollar figures prior to their initial meeting with the real estate firm, and 2) to attract clients to the firm by highlighting its data-based approach.

Data Source

Sales Data

2,571 house sales in Ames, Iowa between December 2006 and July 2010

https://www.kaggle.com/c/ames-housing-data

Geocode of each house

https://github.com/topepo/AmesHousing/tree/master/data