Data Prediction of Zillow Rentals for Phoenix and Tampa

The skills demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Motivation

US renters paid $512.5 billion in rent in 2019, according to Zillow’s data report. Leading the pack was Phoenix where the rental market had a growth rate of 7.6% and closely trailing was Tampa with a growth rate of 4%.

Figure 1: Zillow Press Release about US rental market

Goal

The goal of this project was to gain insights into the important factors that influence the rental values of homes and apartments in Phoenix and Tampa. This was done by exploring the Zillow Rental Index (ZRI) provided by 7Park Data and American Community Survey (ACS) data obtained from Google BigQuery. The ZRI was the target variable, predicted from the ACS data.

Data Exploration

Figure 2: Average ZRI for Phoenix and Tampa from 2015 to 2017

During exploratory analysis of the provided ZRI data, we found that Tampa not only has a much more competitive rental market than Phoenix but is also comparatively less affected by seasonality. An immediate insight drawn from Phoenix's seller's market is that waiting about four months, until late winter or early spring, should yield a higher ROI on rental property investments.

Figure 3: ZRI Average Growth Rate for Phoenix and Tampa from 2015 to 2017

Seasonality can also be seen in the plot above displaying Phoenix and Tampa ZRI Average Growth rate from 2015 – 2017. In the early autumn months, we can observe a decrease in ZRI average growth rate as opposed to metrics seen in late winter. However, in order to get more of an idea of the granularity of the growth rate we have to delve into the zip code level.
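The text does not spell out how the growth rate was computed. A minimal sketch, assuming it is the month-over-month percentage change in average ZRI; the function name and the sample values are illustrative only, not project data.

```python
def monthly_growth_rates(zri_values):
    """Return month-over-month growth rates (in percent) for a ZRI series."""
    rates = []
    for previous, current in zip(zri_values, zri_values[1:]):
        rates.append((current - previous) / previous * 100.0)
    return rates

# Made-up average ZRI values for four consecutive months (not real data).
example_zri = [1000.0, 1010.0, 1005.0, 1020.0]
growth = monthly_growth_rates(example_zri)
```

A negative entry in `growth` marks a month in which the average ZRI fell, which is the kind of dip the seasonal pattern above describes.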

Figure 4: ZRI of most populous zip code from Phoenix and Tampa

Looking at the most populous zip code in each of Phoenix and Tampa, we found a consistent increase in ZRI from 2015 to 2017. Of the two, Tampa is the more competitive market.

Figure 5: ZRI Growth Rate of most populous zip code from Phoenix and Tampa for 2015 to 2017

At a granular level, when observing the most populous zip codes of Phoenix and Tampa's average growth rate, Zip Code 85225 (located in Phoenix) showcased more of an increase in growth rate compared to its Tampa counterpart. Although both populous zip codes displayed an overall increase in growth rate throughout the years and seasonality trends, exploring factors such as the effect of the School Calendar on the rental index growth rate might be insightful to explain drops and spikes during Autumn and Late Winter/Spring.

After studying the target variable (ZRI), we also went on to compare the ACS features of both cities in the years 2015-2017. With over 50 features to study, we focused on features that seemed to identify the uniqueness of each city.

Ways Phoenix and Tampa are different

The first features we looked at were geographic location and income per capita. Located on the Gulf of Mexico, Tampa has many coastal areas. Phoenix, on the other hand, is a landlocked city in the Southwest. The income per capita in Tampa is highest near coastal areas. In Phoenix, the income per capita is highest in the northeastern suburban area. So the two cities differed significantly in geography, and income per capita was related to the geographic layout.

Figure 6: Phoenix Map

Figure 7: Tampa Map

Age of Population

The next feature we analyzed was the age of the population. As seen in the graphs below, one can surmise that the Phoenix population is significantly younger than that of Tampa. Tampa is a city for retirees and this is indicated by the higher number of people over 60. It is surprising that in addition to having more people of age above 60, Tampa also has a significantly lower population of people under 18.

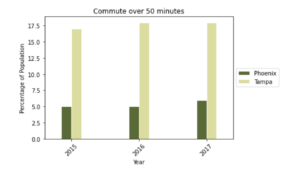

Commute Time

The third feature that set the cities apart was commute time. The data showed that the commute time in Tampa was significantly higher than in Phoenix. Upon investigating further, we found that Tampa had a lot of traffic due to the combination of poor infrastructure and a lack of good public transport. Most people drove and did not carpool. Phoenix has short commute times because of superior infrastructure, many alternative routes to destinations, and a diversity of employment centers.

Ways Phoenix and Tampa are the same

There were three ACS features that the cities had in common. All were related to housing. The first feature was the ratio of single-family to multi-family dwellings. The ratio in both cities is very similar, as shown in the graph below. The second feature was the ratio of vacant dwellings. This ratio was also similar in both cities.

Rent Burden

The third feature that both cities had in common was rent burden. The graph below shows that the majority of renters in both cities had a rent burden of 10%-50%.

Data Workflow

Overview of workflow

We wanted to work with reliable data. For this reason, we decided to keep only those observations for which we had at least 70% of the data. We also used 70% as our minimum for the values on features used in the observations. For the few observations that had only a few missing values, we imputed the missing values using two strategies: i) forward filling, which uses the previous value to fill the present missing one, and ii) median value imputation. We dropped all the observations for which the label, or dependent variable, was missing.
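The filtering and imputation rules above could be sketched as follows. This is a minimal illustration with pandas, not the project's actual code: the function name, the column names, and the toy values are hypothetical, and two details are assumptions because the text does not specify them: features are filtered before observations, and forward filling is tried first with the median as a fallback.

```python
import numpy as np
import pandas as pd

def clean_missing(df, label, min_fraction=0.70):
    """Filter sparse columns and rows, then impute the remaining gaps."""
    # Drop observations whose label (dependent variable) is missing.
    df = df.dropna(subset=[label])
    # Keep features with at least `min_fraction` of their values present.
    min_rows = int(np.ceil(min_fraction * len(df)))
    df = df.dropna(axis="columns", thresh=min_rows)
    # Keep observations with at least `min_fraction` of their values present.
    min_cols = int(np.ceil(min_fraction * df.shape[1]))
    df = df.dropna(axis="index", thresh=min_cols)
    # Assumed order: forward fill (rows sorted by time), then column median.
    df = df.ffill()
    return df.fillna(df.median(numeric_only=True))

# Toy data with hypothetical column names (not real ZRI or ACS values).
raw = pd.DataFrame({
    "zri": [1200.0, 1250.0, np.nan, 1300.0, 1320.0],
    "median_age": [34.0, np.nan, 36.0, 35.0, 37.0],
    "income_per_capita": [30000.0, 32000.0, 31000.0, 35000.0, 36000.0],
    "population": [5000.0, 5200.0, 5100.0, 5300.0, 5400.0],
    "mostly_empty": [np.nan, np.nan, np.nan, 1.0, np.nan],
})
clean = clean_missing(raw, label="zri")
```

In this toy example the row with a missing `zri` and the `mostly_empty` column are dropped, and the single remaining gap in `median_age` is forward filled from the previous row.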

Data on Feature Engineering

Grouping

For feature engineering, our goal was to take the features we gathered from the ACS data and group several of them together. For example, the female_under_18 feature combines the information of several individual features such as females_under_5, females_5_9, and others. These were then grouped further into an age feature.

We also grouped several other features, including rent burden, commute types (public or private), income brackets, and size of dwellings. Redundant features were removed, such as the additional geoid, which was a blank field, and married households, whose information was already captured by family households. Additional features, such as the water to land ratio in each zip code of the two cities, were added. This process took us from over 240 features to just 59 of the most relevant features for the development of our models.
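The grouping step can be illustrated with a short pandas sketch. It assumes the grouped features are plain sums of count columns. Only females_under_5 and females_5_9 are named in the text; the other column names, the helper `group_features`, and all values are hypothetical.

```python
import pandas as pd

# Grouped feature -> the finer-grained columns it sums.
# Only females_under_5 and females_5_9 come from the text; the rest are placeholders.
AGE_GROUPS = {
    "female_under_18": ["females_under_5", "females_5_9", "females_10_14", "females_15_17"],
}

def group_features(df, groups, redundant=()):
    """Sum each group of columns into one feature and drop the originals."""
    out = df.copy()
    for new_name, parts in groups.items():
        out[new_name] = out[parts].sum(axis=1)
        out = out.drop(columns=parts)
    # Drop features whose information is already captured elsewhere.
    return out.drop(columns=list(redundant))

# Toy counts for two zip codes (made-up numbers).
acs = pd.DataFrame({
    "females_under_5": [10, 20],
    "females_5_9": [12, 18],
    "females_10_14": [11, 25],
    "females_15_17": [7, 9],
    "family_households": [100, 150],
    "married_households": [60, 90],
})
grouped = group_features(acs, AGE_GROUPS, redundant=["married_households"])
```

Summing counts keeps the grouped feature interpretable, though other aggregations (for example shares of total population) would work equally well in this structure.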

New features

We reasoned that access to water would have an impact on rental values, especially for Tampa. Thus, we used publicly available geographical data (geo US boundaries) to engineer a new feature that represented the water to land ratio of each Zip Code.
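A minimal sketch of how such a ratio could be computed, assuming each Zip Code record carries a land area and a water area in the same units. The field names `area_land_meters` and `area_water_meters`, the placeholder Zip Codes, and the sample values are hypothetical, not taken from the project's data.

```python
def water_to_land_ratio(area_water, area_land):
    """Return water area divided by land area, or None if there is no land area."""
    if area_land <= 0:
        return None
    return area_water / area_land

# Made-up records for two Zip Codes (placeholder codes and areas).
zip_areas = [
    {"zip_code": "00001", "area_land_meters": 20_000_000, "area_water_meters": 5_000_000},
    {"zip_code": "00002", "area_land_meters": 30_000_000, "area_water_meters": 0},
]

ratios = {
    row["zip_code"]: water_to_land_ratio(row["area_water_meters"], row["area_land_meters"])
    for row in zip_areas
}
```

A landlocked Zip Code with no water area gets a ratio of 0, so the feature carries little signal in a city where most Zip Codes look like that.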

VIF analysis (discarded features)

After grouping the features, we still found a very high level of multicollinearity among them as seen in the below VIF graph:

As seen in the above graph, VIF levels far exceeded the commonly used threshold of 5 to 10. In order to reduce the VIF, we removed the following features:

- married_households

- owner_occupied_housing_units_median_value

- owner_occupied_housing_units_lower_value_quartile

- less_than_college_educated

- amerindian_including_hispanic

After removing the features listed above, the VIF improved significantly with almost all groupings being below 9.
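For reference, the VIF of a feature is 1 / (1 - R²), where R² comes from regressing that feature on all the others. A minimal sketch, using the identity that these values are the diagonal of the inverse correlation matrix. The synthetic columns below are placeholders rather than the project's features, and this is not necessarily the routine the analysis used.

```python
import numpy as np

def variance_inflation_factors(X):
    """Return the VIF of each column of X (rows are observations)."""
    corr = np.corrcoef(X, rowvar=False)
    # The j-th diagonal entry of the inverse correlation matrix is 1 / (1 - R_j^2).
    return np.diag(np.linalg.inv(corr))

# Synthetic data: column c is almost a linear combination of a and b.
rng = np.random.default_rng(0)
a = rng.normal(size=500)
b = rng.normal(size=500)
c = a + b + 0.1 * rng.normal(size=500)
d = rng.normal(size=500)  # independent of the others

vifs = variance_inflation_factors(np.column_stack([a, b, c, d]))
```

Here the nearly redundant column `c` gets a very large VIF while the independent column `d` stays close to 1, which mirrors why dropping redundant features lowers the VIF of those that remain.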

Cluster Analysis

We tried to analyze multicollinearity within the grouped features using cluster analysis but found this to be redundant with the VIF analysis.

Data Modeling

We started by building a battery of linear models (ridge and lasso) and tree-based models (random forest and gradient boosting). The dependent variable was the ZRI values for December 2017. As predictors, we included the following:

- Variables obtained from the census data.

- ZRI values for December 2015, that is, historical data from two years before the values we are trying to predict.

These models are implemented for the cities of Phoenix and Tampa separately. The quality of the predictions is tested against unseen data, using the root mean squared error (hereinafter ‘RMSE’) as the metric. The tree-based models provide the best predictions. Our gradient boosting model produces predictions that deviate only 0.03% from the actual values we are trying to predict.
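The comparison described above might look like the following scikit-learn sketch. Everything in it is an assumption made for illustration: the data is synthetic, the hyperparameters are defaults rather than tuned values, and expressing RMSE as a percentage of the mean true value is one plausible reading of the percentages quoted in the text, not a confirmed definition.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import train_test_split

def relative_rmse(y_true, y_pred):
    """RMSE as a percentage of the mean true value (an assumed definition)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    rmse = np.sqrt(np.mean((y_true - y_pred) ** 2))
    return 100.0 * rmse / np.mean(y_true)

# Synthetic stand-in data: one past-ZRI column plus two census-like columns.
rng = np.random.default_rng(42)
n = 300
past_zri = rng.uniform(900, 2000, size=n)
census = rng.normal(size=(n, 2))
X = np.column_stack([past_zri, census])
y = 1.05 * past_zri + 20 * census[:, 0] + rng.normal(scale=15, size=n)

# Hold out unseen data for evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

models = {
    "ridge": Ridge(alpha=1.0),
    "lasso": Lasso(alpha=1.0),
    "random_forest": RandomForestRegressor(n_estimators=100, random_state=0),
    "gradient_boosting": GradientBoostingRegressor(random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = relative_rmse(y_test, model.predict(X_test))
```

Because the synthetic target is linear by construction, the ranking of models on this toy data says nothing about the ranking reported for Phoenix and Tampa; the sketch only shows the evaluation loop.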

Motivated by the high accuracy of our models, we move on to evaluate how well they generalize to other American cities. If they perform well even in different cities, we may have found a model that can provide important information on cities across the country.

Unfortunately, the accuracy of the models drops significantly when applied to different cities. For example, our gradient boosting model for Phoenix predicts values for Tampa that diverge 12.97% (RMSE) from the true ZRI values. What could be the reason for this? This loss of accuracy could be explained if renters in each city valued real-estate properties differently. In order to test this hypothesis, we sought to identify which particular variables influence our models the most in each city:

Figure 8: Most Important Features according to the models

As we can see in the figure above, there are substantial differences between the variables that better predict rental prices in each city. Median age appears as one of the best predictors in Tampa, though it doesn’t come up among the five most important ones in Phoenix. The fact that Tampa is a popular destination for retirees probably explains this difference.

We can also see how geographic factors are important. Tampa is a coastal city, and the water to land ratio appears as the third most important factor determining the rental price. When a property is located near the coast, the cost of renting it increases. Of course, this variable is irrelevant in Phoenix, a landlocked city.

The next step in our research was to determine to what extent historical data is relevant in predicting ZRI values. To this end, models were fitted using census and historical data separately. We found that census data alone can predict with high accuracy, with only a 1.9% (RMSE) deviation from the actual ZRI values.

However, predictions from historical data alone provide even better results, similar to the ones obtained from our first models. At this point, we questioned whether it would be more efficient to predict using only historical data, as there would be no need to collect census data. In our next section, we explore how effective this strategy would be.

Residual analysis

Our previous models highlight the power of the historical rental values to predict future ones. As relevant as that might be, it is not very informative. To get more insightful results we decided to use a different approach: we modeled the residuals. This was a two-step approach:

- We fit a simple linear regression that predicts 2018 ZRI values from 2016 ZRI values. This model, which we call the historical model, explained 96% of the variance in Phoenix and 94% in Tampa. We then re-trained the historical model on the full dataset and used it to make predictions. Finally, we calculated the residuals by subtracting the predictions from the true values.

- We used the residuals as the dependent variable and ACS features to predict them. Since there were no obvious linear relations between the residuals and the features, we used tree-based models.
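The two steps above can be sketched with scikit-learn as follows. This is an illustration under stated assumptions, not the project's code: the data is synthetic, the three ACS-like columns and the threshold effect are invented, and default hyperparameters stand in for the tuned ones.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score

# Synthetic stand-in data: past ZRI plus three ACS-like features.
rng = np.random.default_rng(0)
n = 400
past_zri = rng.uniform(900, 2000, size=n)
acs = rng.normal(size=(n, 3))
# The future ZRI depends on the past value plus a non-linear ACS effect.
future_zri = 1.05 * past_zri + 40 * (acs[:, 0] > 0.5) + rng.normal(scale=10, size=n)

# Step 1: the historical model, trained on the full dataset.
X_hist = past_zri.reshape(-1, 1)
historical = LinearRegression().fit(X_hist, future_zri)
# Residual = true value minus prediction.
# Negative means the historical model overpredicts; positive means it underpredicts.
residuals = future_zri - historical.predict(X_hist)

# Step 2: a tree-based model that predicts the residuals from ACS features.
residual_model = GradientBoostingRegressor(random_state=0)
# Cross-validated R^2: the share of residual variance explained on held-out folds.
cv_r2 = cross_val_score(residual_model, acs, residuals, cv=5)
mean_r2 = cv_r2.mean()
```

Modeling the residuals isolates what the ACS features explain beyond the past rental value, which is why the feature importances from step 2 are more informative than those from a model that also sees the historical ZRI.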

The residuals from the historical model vary in magnitude and can be positive or negative (Figure 9). Negative residuals indicate that the historical model is overpredicting the ZRI in that specific Zip Code. On the contrary, positive residuals are those Zip Codes for which the historical model underpredicts the ZRI.

After cross-validation and parameter tuning, the Gradient Boosting model was superior to Random Forest at predicting the residuals. Our best model explained 57% of the residuals’ variance in Phoenix and 64% of it in Tampa. The most important features for predicting rental values, in addition to historical data, were different for the two cities that we analyzed (Figure 8).

Figure 9: Variations from the historical data

Insights

Our analysis on the Zillow Rental Index resulted in actionable insights. First of all, we backed our intuitive observations on the importance of historical rental values to predict future ones. If you were to rely only on one feature to predict future rental values, that should be the current value without hesitation. However, those areas in which rental prices deviate from the historical ones (i.e. those that have a large residual from the historical model) proved to be very informative as to what other features impact rental values.

We plotted the residuals and the most important features of each city on maps to aid our understanding of their relationships. In both cities, we observed that most Zip Codes were accurately predicted by the historical model (pink), with a few where the ZRI values were underpredicted (yellow) or overpredicted (purple). Surprisingly, the Zip Codes where ZRI is overpredicted by the historical model turned out to be the ones with the highest income per capita in both cities (Figures 10 and 11).

This observation uncovers an innate weakness of the ZRI as a metric because it fails to accurately describe those sectors of the dataset. In fact, Zillow has updated its metric and replaced the ZRI with ZORI to better capture the US rental market.

Zipcodes

Our analysis highlights those Zip Codes that are good investment opportunities. The Zip Codes that appear in our residuals maps in yellow are places where people are paying more in rent than expected. Thus, we recommend that investors take a good look at the possibility of acquiring properties there. Interestingly, in Phoenix, it seems that the low availability of vacant houses for sale is partially driving this deviation from the historical predictions (Figure 10).

Figure 10: Residual Analysis for most important features for Phoenix-models

In a specific region southwest of Tampa, a group of overpredicted Zip Codes is also the one with the highest median age and water to land ratio (Figure 11).

Most of these overpredicted Zip Codes coincide with the areas of highest income per capita in both cities. However, according to our analysis, renters on average are not willing to pay those higher rates. Consequently, investors who use historical rental values as the target for properties in overpredicted Zip Codes (purple in the residual maps) should prepare for extended vacancy periods.

Figure 11: Residual Analysis for most important features for Tampa

Future Work

There are many directions in which we could take this project further. One immediate step is to explore more cities to validate our models, as well as to develop representative models for those cities and test them at a more granular level to generate insights and compare them across cities.

In addition, it would be interesting to incorporate other data sources to improve the fidelity of our model. We have considered sources such as crime statistics and migration data to refine our models. Furthermore, as discussed in the insights section, we would like to explore the new Zillow index, ZORI, which is an improvement on ZRI, and see how our models change.

We also want to study some features more deeply. As was discussed in the feature engineering section, several features were grouped together to better describe the data as well as reduce multicollinearity but their effect is not clearly visible. So we want to study them in more depth to see what would be a better way to store that information in our models to improve the performance.

Moreover, we would like to perform a time series analysis and investigate the features that affect the seasonality of the rental index. We also want to explore how our models could change to account for the impact of COVID-19 on the rental market. Finally, we could create an interactive app to better display our data insights.