Data Analysis: Predicting Zillow Rent Index Values

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Project Overview

Objective

The real estate market is one of the most lucrative and most attractive markets for a high-yield investment in the United States. Having a tool to accurately predict, based on data, what housing prices are going to be in the future provides a unique opportunity to allocate the investment capital, both for corporate and individual clients.

The goal of this project was to develop a tool that allows the company to make precise predictions of housing rental prices 1-3 years ahead. The rental pricing indicator that serves as the main target of the projections is the Zillow Rent Index (ZRI), an estimated dollar-valued index intended to capture the typical market rate for a given region or housing type.

The Zillow Rent Index is the mean of rent estimates that fall into the 40th to 60th percentile range for all housing types in a given region (usually a zip code).
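To make the definition concrete, here is a minimal sketch of a "mean of the 40th to 60th percentile" calculation. It only illustrates the definition above and is not Zillow's actual methodology; the function name `middle_quintile_mean` and the rent figures are hypothetical.

```python
import statistics

def middle_quintile_mean(rent_estimates):
    """Mean of the estimates lying between the 40th and 60th percentiles (inclusive)."""
    # quantiles(n=5) returns the 20th, 40th, 60th and 80th percentile cut points.
    cuts = statistics.quantiles(rent_estimates, n=5, method="inclusive")
    low, high = cuts[1], cuts[2]
    middle = [r for r in rent_estimates if low <= r <= high]
    return statistics.mean(middle)

# Hypothetical rent estimates (USD per month) for one zip code.
estimates = [1200, 1350, 1400, 1450, 1500, 1550, 1600, 1700, 2100, 4000]
index_value = middle_quintile_mean(estimates)
print(index_value)
```

Because only the middle band is averaged, the single very high estimate in the example has no effect on the result.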

Data

The project included five key sources of data that were used to build a machine learning model.

The first is the historical values of the Zillow Rent Index. The second is demographic data from the US Census Bureau, specifically American Community Survey data, detailed to the zip-code level. The third is data from the Internal Revenue Service, which includes zip-code-level information on tax returns.

The fourth source, also open data, is the Bureau of Labor Statistics information on historical unemployment levels at the county level. The last is private data provided by the capstone sponsor company, mainly focused on income, employment, and workforce information.

Data Preprocessing

Missingness and Imputation

Similar to many other publicly available datasets, as well as to many privately obtained ones, datasets used in this particular project contained a considerable amount of missing values.

Since Zillow Rent Index values are the main prediction target for the project, the first priority was to remove observations (zip codes) with a considerable number of missing values so that an accurate model could be trained.

The Zillow Rent Index dataset contains monthly ZRI values starting in 2010. The first step was to break the dataset down into smaller datasets, each containing values for just one year.

Next, in each of the newly created datasets, zip codes with more than three months of missing values were removed. The reason for such a strict missingness requirement was to avoid imputing a large share of the values and thereby destroying the actual “dynamics” of the ZRI throughout the year.

After cleaning missingness and joining each year back into one extensive dataset, the number of remaining zip codes available for analysis became 9863, down from the original 13181 zip codes.

Missing values in the final dataset were filled using linear extrapolation to preserve the existing relationships within the neighboring ZRI values.
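The filtering and filling steps above could look roughly like the following pandas sketch. The table layout (one row per zip code, one column per month) and the helper names `drop_sparse_zips` and `fill_gaps` are assumptions for illustration, not the team's code. Note also that this sketch fills interior gaps by linear interpolation and pads gaps at the edges with the nearest value, which may differ from the exact fill the team used.

```python
import numpy as np
import pandas as pd

MAX_MISSING_PER_YEAR = 3  # threshold described in the text

def drop_sparse_zips(zri: pd.DataFrame) -> pd.DataFrame:
    """Keep zip codes with at most three missing months in every calendar year.

    `zri` is assumed to have one row per zip code and one column per month,
    with the columns forming a DatetimeIndex.
    """
    # Count missing months per (year, zip), then flip back to zip-by-year.
    missing_per_year = zri.isna().T.groupby(zri.columns.year).sum().T
    keep = (missing_per_year <= MAX_MISSING_PER_YEAR).all(axis=1)
    return zri.loc[keep]

def fill_gaps(zri: pd.DataFrame) -> pd.DataFrame:
    """Fill remaining gaps linearly along each zip code's time axis."""
    return zri.T.interpolate(method="linear", limit_direction="both").T

# Tiny synthetic example (values are made up).
months = pd.date_range("2017-01-01", periods=24, freq="MS")
values = np.tile(np.arange(24, dtype=float) + 1000.0, (2, 1))
zri = pd.DataFrame(values, index=["10006", "99999"], columns=months)
zri.loc["10006", months[5]] = np.nan    # one gap: kept and filled
zri.loc["99999", months[0:4]] = np.nan  # four gaps in 2017: dropped

cleaned = fill_gaps(drop_sparse_zips(zri))
```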

Normalization

The next step in data preprocessing is normalization, which was performed for three main reasons: first, to make the relationships between the explanatory variables and the target variable more linear; second, to bring the data distribution closer to normal; and finally, to reduce the influence of outliers on the distribution.

All of the above steps helped to make sure that the linear models built later would have greater predictive power.

The graph below demonstrates how the skewness of the data and the influence of outliers were partially remedied by performing the log transformation of the data.
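The effect of a log transformation on skewness can be checked numerically. The sketch below uses synthetic, right-skewed "rents" drawn from a log-normal distribution (an assumption made purely for illustration, not the project's data) and compares the sample skewness before and after taking logs.

```python
import numpy as np

def skewness(x):
    """Sample skewness: the third standardized moment."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean()
    return (centered ** 3).mean() / (centered ** 2).mean() ** 1.5

rng = np.random.default_rng(42)
# Synthetic right-skewed values standing in for rents.
rents = rng.lognormal(mean=7.3, sigma=0.5, size=5000)
log_rents = np.log(rents)

skew_before = skewness(rents)
skew_after = skewness(log_rents)
print(f"skewness before: {skew_before:.2f}, after log: {skew_after:.2f}")
```

A skewness close to zero after the transformation indicates a more symmetric distribution, which is the effect described above.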

Outliers

The next step in the data preprocessing journey is the removal of some of the noticeable outliers, which can significantly alter the distribution, relationship between features and the target, and, as a result, reduce model efficiency.

Data Structuring

The final step in the data preparation process is structuring the data so that it captures as many aspects as possible of the relationships between the dependent and independent variables, and lets us observe how the indicators change over several time periods.

For the purpose of analysis, all of the datasets were transformed to contain an average yearly value of each indicator. The main reason for this was the absence of monthly data in most of the datasets used.

To capture a wider array of relationships between features and a longer history of each indicator, each independent variable was expanded into three variables holding the value of that feature in each year of a three-year period.

Another area of concern regarding the datasets used is the recency of the data. At the time of the analysis, the most recent period in the Census Bureau and IRS datasets was 2017, and for the Bureau of Labor Statistics, the most recent year was 2018. Therefore, to keep a consistent gap between the year being predicted and the most recent year of data used for training, lags of three and two years, respectively, were introduced.

The final data structure turned out to resemble a 3-dimensional structure, with each observation being a zip code in a specific year, containing three years of values for each one of the variables.
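One way to build such a structure is sketched below. It assumes a long table with one row per zip code and year, and reads the lag as the distance between the target year and the most recent feature year (so a lag of three uses values from 3, 4, and 5 years before the target). That reading, the column names, and the helper `lagged_window` are assumptions for illustration.

```python
import pandas as pd

def lagged_window(yearly, value_col, lag, window=3):
    """For each (zip, target_year), attach `window` yearly values of `value_col`,
    the most recent of which is `lag` years before the target year."""
    result = None
    for k in range(window):
        shifted = yearly[["zip", "year", value_col]].copy()
        # A value observed in year t becomes a feature for target year t + lag + k.
        shifted["year"] = shifted["year"] + lag + k
        shifted = shifted.rename(
            columns={"year": "target_year", value_col: f"{value_col}_lag{lag + k}"}
        )
        if result is None:
            result = shifted
        else:
            result = result.merge(shifted, on=["zip", "target_year"], how="inner")
    return result

# Hypothetical yearly values for a single zip code (income in $1000s).
yearly = pd.DataFrame({
    "zip": ["10006"] * 6,
    "year": [2012, 2013, 2014, 2015, 2016, 2017],
    "median_income": [52.0, 53.0, 54.0, 55.0, 56.0, 57.0],
})
features = lagged_window(yearly, "median_income", lag=3)
print(features)
```

Each resulting row is one zip code in one target year with three yearly values of the feature, which matches the "3-dimensional" shape described above once several features are joined side by side.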

Machine Learning

Train/Test Data Splitting

First, all the datasets were split into train and test datasets. The training datasets contained the data aimed at predicting the value of the Zillow Rent Index in 2018, and the test datasets contained the data aimed at predicting the value for 2019. Therefore, instead of using a traditional random split such as 70/30, we created an entirely separate dataset for testing, to see how well the model performs when predicting values for the following year. In exactly the same way, we would use this model to predict values for 2020.

Modeling

For machine learning purposes, the team built five models: three linear models (Ordinary Least Squares, Lasso, and Ridge), one tree-based model (Gradient Boosting Regressor), and one time series model.

At the first stage, the models were built for each of the datasets separately, in order to observe how well each type of data predicts future values of the Zillow Rent Index. The one exception was the unemployment dataset, since it includes only one variable: the percentage of the population that is unemployed.

Each of the models also includes the historical values of the Zillow Rent Index, to measure how much better the model will perform if we include any additional data besides just the values of ZRI from previous years.

Adding historical values of ZRI in other datasets allows for a direct evaluation of the necessity and usefulness of the additional data, as opposed to just predicting future values by looking at past values.

The two primary metrics for model performance were the Coefficient of Determination (R-squared) and the Root Mean Squared Error (RMSE). The first shows how well our models describe the relationships between the Zillow Rent Index and the explanatory variables, and the second shows how close we are, in absolute US dollar terms, to the actual rent values.
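For reference, both metrics can be computed in a few lines. The sketch below uses the standard definitions; the rent values are made up for illustration.

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def rmse(y_true, y_pred):
    """Root mean squared error, in the same units as the target (US dollars here)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

# Hypothetical actual and predicted ZRI values for four zip codes.
actual = [1500.0, 2100.0, 950.0, 3200.0]
predicted = [1450.0, 2150.0, 1000.0, 3150.0]

r2_value = r_squared(actual, predicted)
rmse_value = rmse(actual, predicted)
```

Every prediction in this example misses by exactly $50, so the RMSE is $50, while R-squared stays above 99% because rents vary far more across zip codes than the size of the errors. This is why the two metrics can rank models differently.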

Results

First, the team created a “baseline” model - the model that predicts values of the Zillow Rent Index solely based on values of the previous 3 years. The purpose of this model is to serve as a benchmark for comparison with other models that incorporate more data. The performance of the baseline model can be seen below.

It shows the highest Coefficient of Determination and the lowest Root Mean Squared Error obtained among the baseline models trained. The best performing model, in this case, is Ordinary Least Squares, with an R-squared of 99.21% and an error of $48.94.

Next are the best results for different variations of the data from the US Census Bureau. We report the best performance for four types of models: one that includes all the available variables, one with only the important variables, and two Voting Regressors (combining OLS, Lasso, Ridge, and Gradient Boosting), one trained on all the features and one trained on just the most important features.
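A Voting Regressor of this kind can be assembled with scikit-learn as sketched below. The data is synthetic (three lagged ZRI-like columns plus two extra features), the hyperparameters are placeholders, and a simple positional split stands in for the year-based split used in the project, so this shows only the general shape of the approach.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, VotingRegressor
from sklearn.linear_model import Lasso, LinearRegression, Ridge

rng = np.random.default_rng(0)
n = 400
# Synthetic stand-in: three correlated "previous year" ZRI values per row.
level = rng.uniform(800, 4000, size=n)
zri_lags = level[:, None] * np.array([0.94, 0.97, 1.00]) + rng.normal(scale=15, size=(n, 3))
extra = rng.normal(size=(n, 2))  # two made-up additional features
X = np.hstack([zri_lags, extra])
y = 1.03 * zri_lags[:, 2] + 20 * extra[:, 0] + rng.normal(scale=30, size=n)

X_train, X_test = X[:300], X[300:]
y_train, y_test = y[:300], y[300:]

# The ensemble averages the predictions of its four member models.
ensemble = VotingRegressor([
    ("ols", LinearRegression()),
    ("lasso", Lasso(alpha=1.0, max_iter=10000)),
    ("ridge", Ridge(alpha=1.0)),
    ("gbr", GradientBoostingRegressor(random_state=0)),
])
ensemble.fit(X_train, y_train)
test_r2 = ensemble.score(X_test, y_test)
print(f"R-squared on held-out rows: {test_r2:.4f}")
```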

Models

As we can see, the model with the highest coefficient of determination, which shows how well the model describes the actual variation in the data, isn’t necessarily the model that makes the most accurate predictions. While the Voting Regressor with all the variables included “explains” the variation slightly better than the other models, the model that includes just the most important features from the Census Bureau’s demographic data gives the most accurate predictions.

More than that, it is evident that we obtain both a better R-squared score (99.24% vs. 99.21%) and a better RMSE ($47.63 vs. $48.94) by incorporating demographic data in addition to Zillow Rent Index values from previous years. Therefore, we conclude that the US Census Bureau dataset adds value.

Another set of models is based on the data obtained from the Internal Revenue Service. Just like we did with the US Census Bureau dataset, the best possible results are presented in the table below.

As we can see, this type of data also allows us to get results that are more accurate than the baseline model. Unlike the demographic data, in the case of the tax return data the best performing model from both the R-squared and RMSE perspectives is the Voting Regressor trained on just the most important features from the Internal Revenue Service dataset.

IRS Data

The best performance, based on IRS data, is also more accurate than the performance of the baseline model. Therefore, we again conclude that additional data, on top of the historic ZRI values, is beneficial for our modeling.

The last set of independently built models is based on the data provided to the team by the capstone project sponsor. In this case, the situation is not as clear as with the previous two datasets. As we observe below, the best performing variant is the model with just the top features. It gives an R-squared of 99.20%, which is slightly lower than the baseline model's 99.21%.

However, its RMSE is $48.54, which is better than the baseline model’s value of $48.94. Based on that, we can conclude that this dataset is capable of giving us additional information in our quest for the best performing model.

Finally, after evaluating performances of all the models that include various data sources, we now want to assess the performance of the model that will incorporate the information from all the available sources.

Data Combinations

We performed two types of data combinations to evaluate performances. First, we selected the most important features from all of the datasets and combined those features into one single dataset.

The second type of combination is what can be called “second-level” modeling. We made predictions using separate models for each dataset, and then we averaged those predictions and compared them to actual values from a test dataset.

Both of the ways of combining datasets gave us better results than any other model based on just one or two sources of data.

The model that allowed us to get the best possible Coefficient of Determination, as well as the smallest possible Root of Mean Squared Error, is the “second-level” model where we average predictions of the best performing top-feature models from the models trained on the separate datasets.

One of the possible reasons for such an improvement in performance may be the fact that by training models on different bits of data, rather than on all the datasets combined, we were able to capture a higher percentage of variance and examine the importance and the degree of influence of the features independently from one another. Averaging results from those independent models gave us a more accurate prediction than any other combination of data.
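The "second-level" averaging described above amounts to an element-wise mean of the predictions made by the separate per-dataset models. A minimal sketch, with hypothetical source names and made-up prediction values:

```python
import numpy as np

def average_predictions(predictions_by_source):
    """Element-wise mean of per-dataset predictions for the same zip codes."""
    stacked = np.vstack(list(predictions_by_source.values()))
    return stacked.mean(axis=0)

# Hypothetical 2019 predictions for three zip codes from three separate models.
predictions_by_source = {
    "census": np.array([1510.0, 2080.0, 990.0]),
    "irs": np.array([1490.0, 2120.0, 1010.0]),
    "sponsor": np.array([1500.0, 2100.0, 1000.0]),
}
blended = average_predictions(predictions_by_source)
```

When the errors of the individual models are not perfectly correlated, they partly cancel out in the average, which is one plausible reason this combination can outperform each model on its own.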

Feature Importances

In addition to building a high performing model, the team also made an effort to keep the model interpretable. Interpretability is what allows for a more in-depth analysis of the factors that have the greatest influence on rental prices across the country.

On top of that, the team performed a two-stage analysis to discover how feature importance differs across some of the largest cities in the United States.

First, we determined the most important features for the country based on the combined dataset. Then we used those nationally important features to train separate models for various cities and evaluated the importance of different groups of factors for each of them.

The list of the most important features across the country, as well as their proportional importance (adds up to 100%), is shown on the graph below.
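Proportional importance of this kind is obtained by rescaling raw importance scores so that they sum to 100%. The sketch below shows only that rescaling step; the feature names and raw scores are hypothetical, and how the raw scores were produced is not specified here.

```python
def proportional_importance(raw_scores):
    """Rescale non-negative importance scores to percentages, largest first."""
    total = sum(raw_scores.values())
    ranked = sorted(raw_scores.items(), key=lambda item: item[1], reverse=True)
    return {name: 100.0 * score / total for name, score in ranked}

# Hypothetical raw importance scores.
raw_importances = {
    "median_income": 3.0,
    "zri_prev_year": 6.0,
    "unemployment_rate": 1.0,
}
shares = proportional_importance(raw_importances)
for name, share in shares.items():
    print(f"{name}: {share:.1f}%")
```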

Differences

As we can see, there are some noticeable differences between the cities in terms of how much “power” a feature has in determining the Zillow Rent Index in one region or another. Thanks to the color-coding on the graph, we can observe that New York and Phoenix, for example, differ significantly in the top two features that determine the level of rent in the respective city.

Some of the differences in the top 6 most important features for each city can be seen in the table below.

Here we can see that, for example, in Los Angeles and Phoenix it is much more important to know the lowest prices in particular zip codes in order to predict the overall level of prices. We can also see another similarity between Los Angeles and Phoenix in the importance of the size of the Hispanic population in those areas.

Similarities

Similarities between Los Angeles and Phoenix should not come as a surprise, since both cities are situated in the Southwest of the country.

By contrast, New York and Los Angeles share only two features among their respective top ones. This also makes sense, since the contrast between what matters in New York and what matters in Los Angeles is widely recognized.

At the same time, New York and Chicago have a much more prominent degree of similarity, equivalent to that between Los Angeles and Phoenix.

As a conclusion of the feature importance section, it is interesting to take a look at some of the “curious cases” - features that seem to be much more critical in one of the cities, but not in others.

For example, we can see that Baby Boomers’ income levels tend to hold extreme importance in Phoenix, a popular place for people to retire. At the same time, this feature’s prominence is nowhere near as high in other large cities.

The same can be said about the importance of six-figure income employees in New York, as opposed to their significance in any other region.

The age of the house seems to be more critical in Los Angeles and Houston than in other large cities, likely because more land is available in those areas. As a result, there is a greater opportunity to build newer and better housing, in contrast with New York or Chicago, where people don’t seem to care much about a building’s age since most of the best-located buildings were built a long time ago.

Time Series

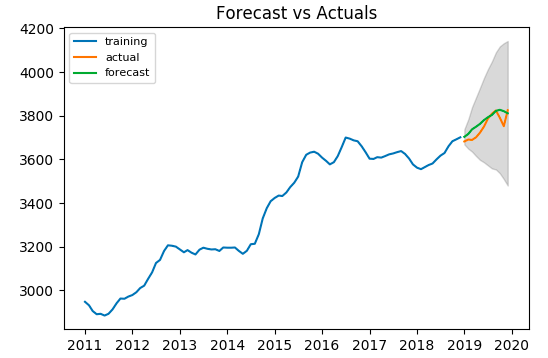

The analysis above gave a one-year-forward forecast for the Zillow Rent Index. In order to make a forecast at the monthly level, we applied time series analysis to the Zillow Rent Index, which contains monthly data since September 2010. A seasonal autoregressive model was built for each zip code based on data from 2011 to 2018, then evaluated by comparing its monthly predictions with the observed values in 2019. To illustrate how the process works, we take one zip code, 10006 in New York, as an example.

The rental market usually experiences seasonal fluctuation, which repeats every year. The time-series data can be decomposed into an overall trend multiplied by a seasonal component.
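A multiplicative decomposition of this kind can be sketched with a centered moving average for the trend and averaged ratios for the seasonal component. The example below is a simplified, NumPy-only illustration on a synthetic series; the function name and the data are assumptions, and a dedicated time series library would normally be used in practice.

```python
import numpy as np

def multiplicative_decompose(series, period=12):
    """Split a monthly series into a trend and `period` seasonal factors."""
    series = np.asarray(series, dtype=float)
    half = period // 2
    # Centered moving average for an even period (half weight on both ends).
    weights = np.r_[0.5, np.ones(period - 1), 0.5] / period
    trend = np.convolve(series, weights, mode="valid")
    # Ratio of observed value to trend, aligned with the trend's time span.
    ratios = series[half:-half] / trend
    positions = np.arange(half, len(series) - half) % period
    seasonal = np.array([ratios[positions == p].mean() for p in range(period)])
    seasonal /= seasonal.mean()  # normalize factors to average 1
    return trend, seasonal

# Synthetic 8-year monthly series: linear trend times a yearly pattern.
months = np.arange(96)
true_seasonal = 1 + 0.05 * np.sin(2 * np.pi * months[:12] / 12)
series = (2000 + 5 * months) * np.tile(true_seasonal, 8)

trend, seasonal = multiplicative_decompose(series)
```

Multiplying the estimated trend by the matching seasonal factor approximately reconstructs the original series, which is the sense in which the data is "decomposed".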

The autocorrelation function shows that first-order differencing is sufficient to make the time-series data stationary. We also examined the partial autocorrelation function of the differenced data and determined that first-order autoregression should be used. Data from 2011 to 2018 was used to train the model, and monthly predictions were made for 2019.

The same procedure is carried out for each Zip Code. To compare the result with the one-year forward prediction previously obtained, we took the average prediction of twelve months in 2019. The overall R^2 and RMSE turn out to be 99.31% and $49.74, comparable to the results of previous models.
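The core of the procedure above (first-order differencing, a first-order autoregression on the differences, twelve monthly forecasts, and their yearly average) can be sketched as follows. This is a deliberately simplified, non-seasonal illustration fitted by least squares on a synthetic series; it omits the seasonal terms of the model described in the text, and all names and values are hypothetical.

```python
import numpy as np

def fit_ar1_on_differences(series):
    """Least-squares fit of d_t = c + phi * d_(t-1), where d is the first difference."""
    d = np.diff(np.asarray(series, dtype=float))
    design = np.column_stack([np.ones(len(d) - 1), d[:-1]])
    (c, phi), *_ = np.linalg.lstsq(design, d[1:], rcond=None)
    return float(c), float(phi)

def forecast(series, c, phi, steps=12):
    """Roll the fitted difference equation forward and rebuild the levels."""
    series = np.asarray(series, dtype=float)
    last_level = series[-1]
    last_diff = series[-1] - series[-2]
    levels = []
    for _ in range(steps):
        last_diff = c + phi * last_diff
        last_level = last_level + last_diff
        levels.append(last_level)
    return np.array(levels)

# Synthetic 96-month history whose differences follow d_t = 2 + 0.5 * d_(t-1).
diffs = [10.0]
for _ in range(94):
    diffs.append(2.0 + 0.5 * diffs[-1])
history = 3000.0 + np.concatenate([[0.0], np.cumsum(diffs)])

c, phi = fit_ar1_on_differences(history)
monthly_forecast = forecast(history, c, phi, steps=12)
yearly_average = monthly_forecast.mean()  # comparable to a one-year-forward prediction
```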

Conclusion

Building a multi-level predictive model is never an easy task. At every stage of the process, there are multiple decisions to be made regarding the way in which to perform a specific task. As experienced data scientists often say: “Data Science is as much a science as it is an art”.

By being strict about cleaning the data, and by not simply filling in the NAs with arbitrary numbers in order to keep observations, we were able to retain the most “real” data and, in this way, ensure the integrity of the model-training procedure.

Train Model

More than that, the decision to train models on the available datasets separately, in order to get a more precise understanding of the importance of features independently of one another, ensured a more holistic approach, which resulted in greater accuracy than models involving all the available data merged.

After that, we went deeper into the analysis and decided to investigate the difference in local rental markets among some of the largest cities in the United States. As a result, we observed some reasonable and sometimes unexpected variations in the importance of explanatory variables for each city. Following this, we used some intuition and domain knowledge to try to explain the key drivers behind certain features being more prominent.

Finally, we incorporated a time series analysis, which allowed for an entirely different perspective on the data. It gave us the ability to capture historical values over a more extended period of time, to see more detailed dynamics based on the monthly values, and, most importantly, to capture the seasonality patterns that play an incredibly significant role in the real estate market.

Thank You

Thank you for taking the time to read our blog post. If you are interested in other blog posts by the project team members or want to learn more about their background, please feel free to follow the links by clicking on the names of the team members provided in the next section.

The Team

The team that worked on the project consists of Alex (Oleksii) Khomov, Ting Yan, Marina Ma, and Lanqing Yang.