Rating the Rankings: A Method for Measuring the Effectiveness of Head-to-Head Ranking Systems

Are chess players ranked more accurately than tennis players? Which professional sport is best at ranking its teams for the playoffs? Is the NCAA getting better or worse at seeding teams for the March Madness basketball tournament? In short, what's the right way to measure the performance of a ranking system?

I started thinking about questions like these as I was working on a project relating to the prevalence of upsets in tennis. An "upset," of course, happens when the winner of a head to head contest is the person or team that was expected to lose. Often, the expected outcome of a contest is formally embodied in some sort of ranking system. For our purposes here, a ranking system is one that assigns an ordinal position in a list to all participants in a competition. In a competition involving n competitors, each competitor is assigned a ranking number of between 1 and n. Lower ranking numbers typically indicate better historical performance, so that the top competitor has a ranking of 1, and so on. It is worth noting that a ranking system is different from a rating system. With a rating system, some sort of scoring mechanism is used to assign points to each competitor, and competitors' historical performance can be compared by comparing their points. Rating scores can be, and often are, used to create rankings, in a way that assigns the top rank to the player with the highest rating score, and so on through the ordered list of rating scores.

The 2016 NCAA Men's Division I College Basketball Tournament Bracket (from cbssports.com)

Ranking systems are used in many different domains. As most everyone knows, they're frequently used in sports. The annual March Madness college basketball tournament, for example, ranks each of its 64 teams from 1 through 16 in each of four separate regions. Indeed, many single elimination playoff systems in sports assign a "seed" -- that is, a rank -- to all or most of the competitors. Among other things, this kind of ranking allows tournament directors to create a match schedule that pairs top competitors against poor competitors in early rounds, increasing the likelihood that the best competitors won't meet until later rounds.

As I was evaluating tennis upsets, I found that in men's tennis, the average severity of upsets (the difference in rank between the winner and loser) has been decreasing over the past 30 years. In wondering why this might be, it occurred to me that perhaps tennis officials changed the rating system during that period, so that tennis rankings got "better" in a way that resulted in fewer matches where players with very low ranks beat players with very high ranks. (Let's get what could be a confusing point out of the way: when I say "low rank", I mean a player whose historical performance has been poor compared to other competitors and whose ranking number is high; the "highest ranked" player has a rank of 1). But how could I evaluate the effectiveness of a ranking system? More precisely, how could I quantify the degree to which a ranking system accurately reflected the differences in the performances of the competitors?

In trying to answer that question, I came up with an approach based fundamentally on simple linear regression. Here's how it works. Before beginning the explanation, however, I should note at the outset that I am most certainly not a professional statistician. I have no doubt that what's here will seem overly simplistic, at best, to anyone with any degree of statistical sophistication. Even though I knew my work would not be at a professional level, I wanted to go through the process of coming up with a method for measuring ranking system effectiveness as a personal learning exercise. So I pressed on.

Figure 1: Expected Winning Percentage of Players vs. Their Opponents in a "Perfect" Ranking System

I started by thinking about how to compare a perfect ranking system to a ranking system's actual performance. The closer the actual performance is to the perfect system, the better the actual system is, right? But what would a perfect ranking system would look like? We'll, that's pretty easy. Because our ranking system should be a good predictor of match outcomes, a perfect system is one where the higher ranked player beats the lower ranked player every time.[1] Figure 1 on the left shows the expected performance of this perfect system in a hypothetical environment. Let's suppose it's showing the performance of a perfect system that's ranking the top five tennis players. Each dot represents a player's winning percentage against each possible opponent. The color of the dot shows the player, and the opponent is on the x axis. The y axis shows the expected winning percentage for each player when playing each other player. So in our perfect ranking system, the player with Rank 1 (red) always wins, meaning Rank 1 would have a 100% winning percentage against all other players. The player with Rank 3 (green) has a zero winning percentage against Rank 1 and Rank 2, but 100% against Rank 4 and Rank 5 (and no entry for 3, since of course Rank 3 can't compete against itself). The result is a step function, as depicted in Figure 1.

Figure 2: Regression Approach to Expected Winning Percentage By Player Rank

The problem with this approach is that it doesn't account for the fact that we expect the difference in player ranks to drive different winning percentages. In other words, even though we know that upsets happen in the real world, we still expect there to be fewer upsets when Rank 5 plays Rank 1 than when Rank 2 plays Rank 1. The step function doesn't incorporate that reality -- it assumes the same winning percentage (zero or 100) regardless of the difference in the players' rankings. To better incorporate a sliding scale of win likelihood, I used a regression approach. Specifically, I ran a regression line (using least squares regression) for the "distribution" of points for each player's expected winning percentage. Figure 2, to the right, shows the results of this regression approach in a 20 player environment. It shows the expected result for five of the 20 players. Rank 1 has no points that are not at 100%, so the Rank 1 regression line is simply a horizontal line at 100%. Rank 5, in contrast, has four points at an expected winning percentage value of zero, and those points pull the left side of the Rank 5 regression line downward. Rank 10, near the midpoint of the player rankings, has a regression line that is balanced between winning and losing (it's not perfectly balanced, since there is no actual "middle" player in a group of 20 players, but of course it's close). The pattern continues through Rank 20, which has a horizontal regression line at zero percent.

The regression lines give us a hypothetical predicted value against which to compare actual results. Suppose that, historically whenever Rank 5 and Rank 16 have played, Rank 5 has won 40% of the time. We can say that this deviates from the expected result by about 50 percentage points, since our regression line tells us that Rank 5 should have a winning percentage of about 90% against Rank 16. As discussed below, there are a lot of things we can do with these residual (actual minus predicted) values.

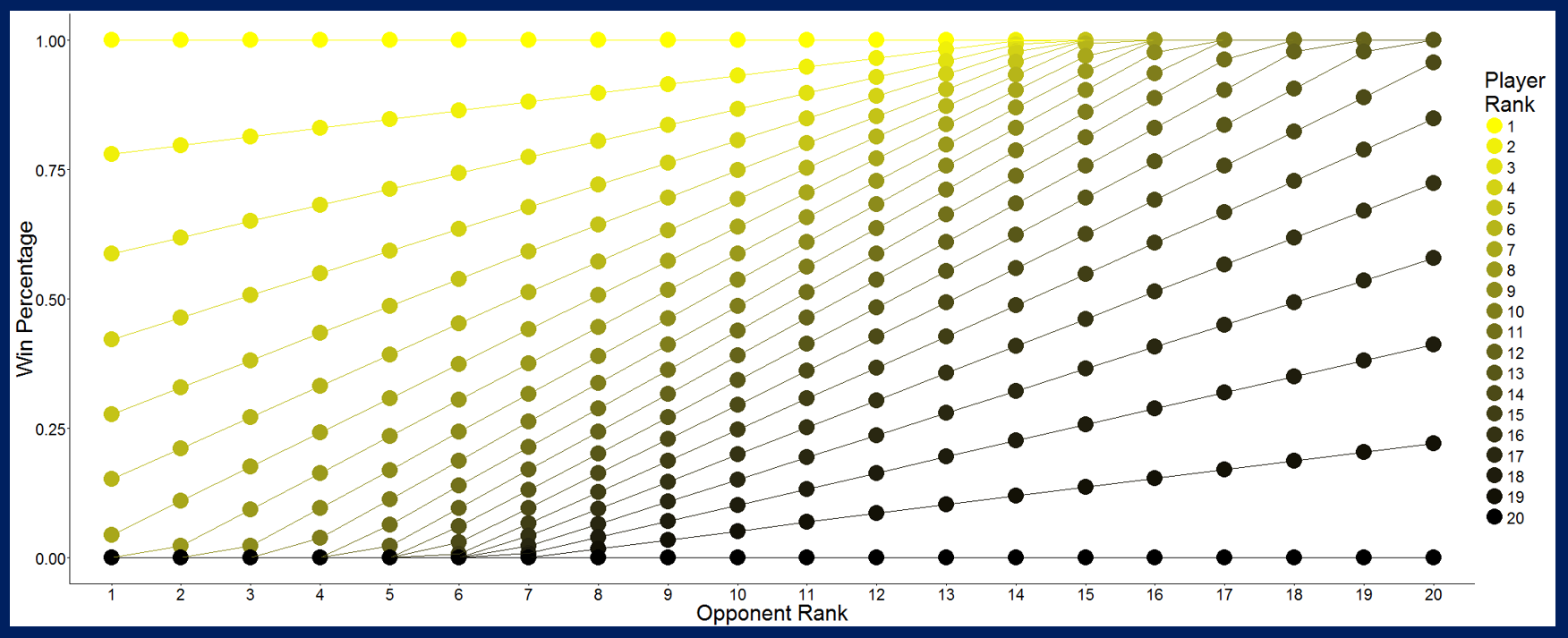

Figure 3: Adjusted Regression Approach Showing Expected Winning Percentage For 20 Player Environment (some points obscured by overlapping points).

Before we get to using the residuals, though, yuou may have noticed a problem. Figure 2 above shows that due to the slope of the regression lines, some expected values for winning percentages are above 100%, and some are below 0%. Obviously, it's impossible for one player's winning percentage against another player to be over 100 or under zero. To fix this, I simply reduced any percentage that was above 100 down to 100, and increased any percentage that was below zero up to zero. Figure 3, left, shows this adjusted regression approach for a 20 player environment. The resulting lines are akin to the lines associated with a sigmoid function. Adjusting the regression lines this way had the effect of reintroducing the problem of an inability to differentiate expected winning percentages for a small subset of all points. To me, this seemed preferable to penalizing a ranking system (i.e. calculating a residual) for failure to demonstrate impossible winning percentages, but an argument could be made the other way, too.

At last we are left with a model of the expected values for winning percentages against which we can compare observed values. The observed values, of course, are the actual winning percentages of different ranks in matches against each other. The result of that comparison is a residual value for each player - opponent pairing. There are many things we can do with those residual values. We can, for example, compare the performance of a ranking system over different sets of matches. Did the tennis ranking system perform better in 2006-2010 than it did in 2011-2015? To answer that question, we'd compare the observed values for each of the two sets of matches to the expected values in our adjusted regression approach. The average residual value (the standard error, if you will) for each set of matches would be an assessment of the performance of the ranking system for that set of matches. Whichever set had a lower average residual value -- let's call it the Ranking System Performance Score or RSPS -- demonstrates better ranking system performance. We can even use RSPS to compare the performance of ranking systems across different sports. Is the tennis ranking system better than, say, the March Madness ranking system? Grab some data for both and compare their RSPS values.

In the next post in this series, we'll put the RSPS to use and analyze some tennis data.

Posts in this series:

- What's Happened to Big Upsets in Professional Tennis?

- Rating the Rankings: A Method for Evaluating the Effectiveness of Head-to-Head Ranking Systems (this post)

- Tennis vs. Chess: Comparing Ranking Systems in Different Domains

Notes:

1A truly "perfect" raking system can't exist, and we shouldn't want one. That's because obviously, at least for sports, a 100% perfect ranking system would be 0% fun -- without the possibility of an upset there would be no reason for fans to watch games. But that doesn't mean we can't use the perfect ranking system as the baseline against which we compare rankings systems, for purposes of evaluating the rankings system's effectiveness.

2My quick review of more sophisticated prior work done specifically on ranking systems didn't reveal much in the way of research. I should admit that my review of prior research was not at all thorough, since I wanted to go through the process of coming up with a method for measuring ranking system effectiveness as a learning exercise. I also note that rating systems get much more attention than ranking systems, presumably because ranking systems typically aren't much more than ordering the ratings. For examples of work in this area, see, e.g. "An overview of some methods for ranking sports teams", and, specific to tennis, Searching for the GOAT of Tennis Win Prediction (abstract). Finally, it is worth noting that one popular rating methodology is the Elo system, which got its start in chess but is often use in many other environments involving match competition. Elo is a rating sytem, though, and not a methodology for measuring the effectiveness of the rankings it can generate. In other words, what I've described here could be used as a metric for assessing how well Elo ratings perform in a given sport. I emphasize again, however, the point I made above about my lack of professional statistician skills.