Restaurant Market Data Analysis of US Cities


*The link to my GitHub repository containing the code for this project can be found here

Introduction

Today, there are many places on the internet where consumers can share reviews and opinions. Restaurant review sites, such as Yelp.com and TripAdvisor.com, are among the most popular sources of review data, as consumers love to share their experiences (good and bad!) after dining out. This is excellent for consumers: it helps them find places to eat and discover new restaurants to try, both in their hometowns and while traveling.

This abundance of compiled data could also provide useful insights for those in the restaurant business, if they have the ability to collect and analyze it. To this end, I designed a web scraping project to obtain bulk market data on restaurants in the top 60 U.S. cities on TripAdvisor.com. For each restaurant in each city, I scraped key parameters including Average Score, Cuisine Type, Price, and Number of Reviews. This data can give insight into a number of important questions, including the following:

- What types of restaurants (grouped by cuisine) have the strongest markets in a given city (measured by metrics including number of restaurants, average review scores, number of reviews per restaurant, etc.)?

- Which restaurant markets are under-performing in each city (small number of restaurants, with generally poor reviews)?

- Which markets may offer potential for growth in a selected city (low number of establishments, but generally well-received, with high review scores)?

- Given a specific type of cuisine, where in the U.S. is this cuisine most and least popular or highly-rated?

In summary, I scraped basic data on approximately 120,000 restaurants in the top 60 U.S. cities (according to TripAdvisor.com). After some data wrangling and pre-processing (described below), I narrowed my analysis to the top 50 U.S. cities.

Web Scraping Process

To scrape information from TripAdvisor.com, I used Scrapy, which required a spider with three parse functions. The overall layout of TripAdvisor is friendly to large-scale data collection, largely because there is a page available (see above) where all U.S. cities are listed in descending order of popularity. The first parse function in the Scrapy spider extracted the link to each city page from this list of top cities.

I then passed each link into a second parse function, which handled the "front restaurant page" of each city. The front page lists the top 30 restaurants, so this function scraped the data for those restaurants. It also gathered the links to all the subsequent pages, where the information for all the other restaurants was found.

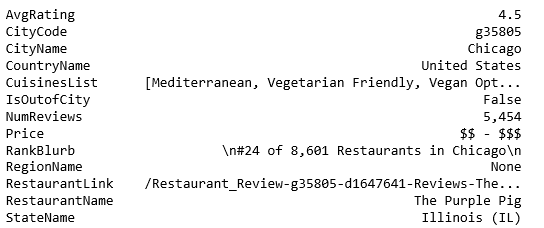

Finally, I wrote the third parse function to scrape restaurant data from these subsequent pages. After the scraping was complete, I wrote the output to a JSONL file, in which each line represented one restaurant. This could then be converted to a pandas DataFrame, with the following entry as an example:

Data Analysis

I then formatted and cleaned this DataFrame. Most importantly, I had to create two different versions: one with the "Cuisines List" column retained as a list (the "Collapsed" DF), and one with the Cuisines List expanded (the "Expanded" DF). In the Collapsed DF, every row represented one unique restaurant, whereas in the Expanded DF, each row represented a specific cuisine in a restaurant, so a restaurant with multiple cuisines occupied multiple rows. Which version I used depended on the analytic question being asked.
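As a minimal sketch of these two steps (loading the JSONL output and deriving both frames), the snippet below uses pandas' `read_json` with `lines=True` and `DataFrame.explode`. The column names (`name`, `city`, `score`, `num_reviews`, `cuisines`) and the two records are made up for illustration and are not taken from the scraped data.

```python
import io

import pandas as pd

# Made-up JSONL content standing in for the scraper output (one restaurant per line).
jsonl_text = """\
{"name": "Restaurant 1", "city": "City A", "score": 4.5, "num_reviews": 320, "cuisines": ["Seafood", "American"]}
{"name": "Restaurant 2", "city": "City A", "score": 4.0, "num_reviews": 85, "cuisines": ["Greek"]}
"""

# "Collapsed" frame: one row per restaurant, cuisines kept as a list.
collapsed = pd.read_json(io.StringIO(jsonl_text), lines=True)

# "Expanded" frame: one row per (restaurant, cuisine) pair.
expanded = (
    collapsed.explode("cuisines")
    .rename(columns={"cuisines": "cuisine"})
    .reset_index(drop=True)
)
```

Counting restaurants is safest on the collapsed frame, since the expanded frame repeats a restaurant once per cuisine; per-cuisine questions are easier on the expanded frame.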

Data Visualization

I first did a simple comparison of aggregate data among the top 50 U.S. cities. I noted, for example, that more "touristy" cities (Orlando, Las Vegas, New Orleans, New York, etc.) tended to have the highest number of reviews per restaurant, which is consistent with the hypothesis that people are more likely to rate and review their experiences while on vacation.

I also compared the frequency of each restaurant cuisine across all 50 cities in aggregate, noting that the top cuisines included American, Asian, and Italian, as well as cuisines catering to specific dietary preferences, such as vegetarian. I used this information to normalize the relative frequency of each cuisine in an individual city.
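The exact normalization is not spelled out here. One plausible reading, sketched below as an assumption, is to divide each cuisine's share of a city's listings by its share across all cities combined, so that values above 1 indicate a cuisine that is over-represented in that city. The toy rows and column names are invented for illustration.

```python
import pandas as pd

# Toy expanded frame (made-up rows): one row per (restaurant, cuisine) pair.
expanded = pd.DataFrame({
    "city": ["City A"] * 4 + ["City B"] * 4,
    "cuisine": [
        "American", "American", "Italian", "Seafood",
        "American", "Seafood", "Seafood", "Seafood",
    ],
})

# Share of each cuisine across all cities combined.
overall_share = expanded["cuisine"].value_counts(normalize=True)

# Share of each cuisine within each city (rows: city, columns: cuisine).
city_share = pd.crosstab(expanded["city"], expanded["cuisine"], normalize="index")

# Ratio > 1: the cuisine is over-represented in that city relative to the aggregate.
relative_freq = city_share.div(overall_share, axis="columns")
```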

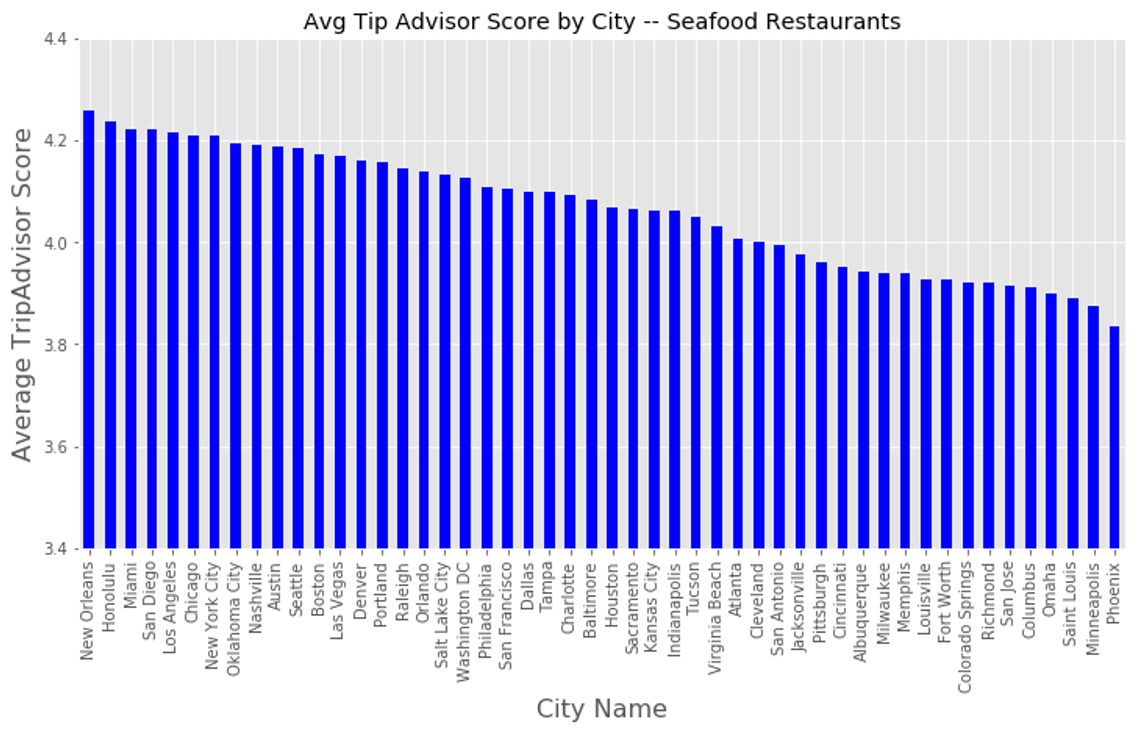

Based on this cleanup and analysis, a number of visualizations were possible. First, I was able to ask, for a given cuisine, which U.S. cities were the most highly rated (based on average score) for that particular cuisine. A high average score would be indicative of a strong market for that cuisine in a given city. An analysis of, for example, Seafood restaurants yielded insights in line with what would be expected (strong reviews in coastal cities and poor reviews in landlocked areas such as Phoenix):
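A ranking of this kind could be computed roughly as follows. This is a sketch under stated assumptions: the function name `rank_cities_for_cuisine`, its `min_restaurants` parameter, the column names, and the toy rows are all hypothetical, and the scores are invented rather than taken from the scraped data.

```python
import pandas as pd

# Toy expanded frame (made-up rows and scores).
expanded = pd.DataFrame({
    "city": ["City A", "City A", "City B", "City B", "City A"],
    "cuisine": ["Seafood", "Seafood", "Seafood", "Seafood", "Italian"],
    "score": [4.5, 4.0, 3.5, 3.0, 4.0],
})


def rank_cities_for_cuisine(df, cuisine, min_restaurants=1):
    """Return mean score and restaurant count per city for one cuisine, best first."""
    subset = df[df["cuisine"] == cuisine]
    summary = subset.groupby("city")["score"].agg(
        mean_score="mean", n_restaurants="count"
    )
    # Drop cities with too few restaurants for the average to be meaningful.
    summary = summary[summary["n_restaurants"] >= min_restaurants]
    return summary.sort_values("mean_score", ascending=False)


seafood_ranking = rank_cities_for_cuisine(expanded, "Seafood")
```

Keeping the restaurant count next to the mean score makes it easier to tell a genuinely strong market from an average based on only a handful of restaurants.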

Furthermore, with this analysis, I was able to select a city and visualize which markets were strong and weak in that city. I chose, as an example, Baltimore, and observed a dearth of Latin restaurants and relative strength of Seafood and Greek restaurants there:
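A per-city view like this one could be built along the following lines. The sketch also flags cuisines with few restaurants but high average scores, which matches the "potential for growth" question from the introduction. The helper names, thresholds, column names, and toy rows are assumptions made for illustration only.

```python
import pandas as pd

# Toy expanded frame (made-up rows and scores).
expanded = pd.DataFrame({
    "city": ["City A"] * 6 + ["City B"],
    "cuisine": ["Seafood", "Seafood", "Seafood", "Greek", "Latin", "Latin", "Seafood"],
    "score": [4.5, 4.0, 4.5, 4.5, 3.0, 3.0, 4.0],
})


def summarize_city(df, city):
    """Per-cuisine restaurant count and mean score for one city."""
    subset = df[df["city"] == city]
    return (
        subset.groupby("cuisine")["score"]
        .agg(n_restaurants="count", mean_score="mean")
        .sort_values("n_restaurants", ascending=False)
    )


def growth_candidates(summary, max_restaurants, min_score):
    """Cuisines with few restaurants but high average scores (thresholds are arbitrary)."""
    few = summary["n_restaurants"] <= max_restaurants
    well_rated = summary["mean_score"] >= min_score
    return summary[few & well_rated]


city_summary = summarize_city(expanded, "City A")
candidates = growth_candidates(city_summary, max_restaurants=1, min_score=4.0)
```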

Conclusions

Using this tool, it is possible to perform these visualizations for any of the top U.S. cities, or for any desired cuisine. This analysis could be a useful starting point for an entrepreneur doing basic market research to determine the potential for growth in a given market.

This method could also be used to scrape data from other geographies, for example in large suburban areas with many potential consumers. The difference would simply be that data would need to be scraped from multiple towns and cities, then combined.

Overall, the availability of information on over 100,000 restaurants in the top 50 American cities provides a promising target for web scraping, and the method I designed was able to collect this data and perform basic market analyses with it. This analysis could be enhanced by obtaining more detailed financial data, and by incorporating further information such as pricing and detailed reviews, to learn more about consumer attitudes in selected cities and to get a better gauge of potential restaurant markets.
