Studying Data to Predict Housing Prices in Ames

As our entry for the Iowa House Pricing Prediction Kaggle Competition, we put together a set of Python code that earned us a score in the 15th percentile. The data set provided by Kaggle contains 1460 houses, each described by 80 characteristics that may or may not contribute to how the house is priced. In this study, we use machine learning methods in Python, combining several regression techniques, to predict house prices.

Data Cleaning

The dataset required initial cleaning and filling of empty or meaningless values. Figure 1 visualizes the data set and its "NA" (missing) values. Our goal was to fill in those empty values sensibly to improve the accuracy of our price predictions.

Figure 1: Initial visualization of dataset and missing or empty values (white voids).

We used three approaches to data cleaning. For most variables containing missing or empty values, we replaced each missing value with the variable's overall mode across all houses. In some cases, we replaced it with the mode within the house's neighborhood instead of the mode of the whole dataset. Figure 2 shows the dataset we created to hold the mode of each variable for each neighborhood, so that the replacement values are specific to each neighborhood. This gave us a data set tailored to the individual characteristics of different neighborhoods.

Figure 2: Data set created from modes of neighborhoods for each variable.
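The two mode-based fills described above can be sketched with pandas as follows. This is only an illustration on made-up rows: the choice of `Electrical` and `MSZoning` as the columns to fill, and the helper name `first_mode`, are assumptions and not taken from our actual code.

```python
import numpy as np
import pandas as pd

# Toy rows; the values are made up for illustration.
df = pd.DataFrame({
    "Neighborhood": ["NAmes", "NAmes", "NAmes", "OldTown", "OldTown", "OldTown"],
    "MSZoning": ["RL", "RL", np.nan, "RM", np.nan, "RM"],
    "Electrical": ["SBrkr", np.nan, "SBrkr", "FuseA", "SBrkr", "SBrkr"],
})

def first_mode(s):
    """Most frequent non-missing value, or NaN if there is none (hypothetical helper)."""
    m = s.mode(dropna=True)
    return m.iloc[0] if not m.empty else np.nan

# Approach 1: fill with the mode of the whole column.
df["Electrical"] = df["Electrical"].fillna(first_mode(df["Electrical"]))

# Approach 2: fill with the mode of the house's own neighborhood.
neighborhood_modes = df.groupby("Neighborhood")["MSZoning"].transform(first_mode)
df["MSZoning"] = df["MSZoning"].fillna(neighborhood_modes)
```

The `transform` call returns one value per row, aligned with the original index, which is why it can be passed straight to `fillna`.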

Some variables required special-case handling because their values were not missing at random. One example is the PoolQC variable, which had the most missing values. To fill in the missing data, we compared it with the PoolArea variable.

Realistically, if PoolArea is recorded for a house but PoolQC is not, then the house does have a pool and its quality simply went unrecorded. We assigned these missing PoolQC values according to the OverallQual of the home: 'Gd' if OverallQual was greater than 5, and 'Fa' if it was less than 5. Figure 3 shows an example of three missing PoolQC values and their new assignments.

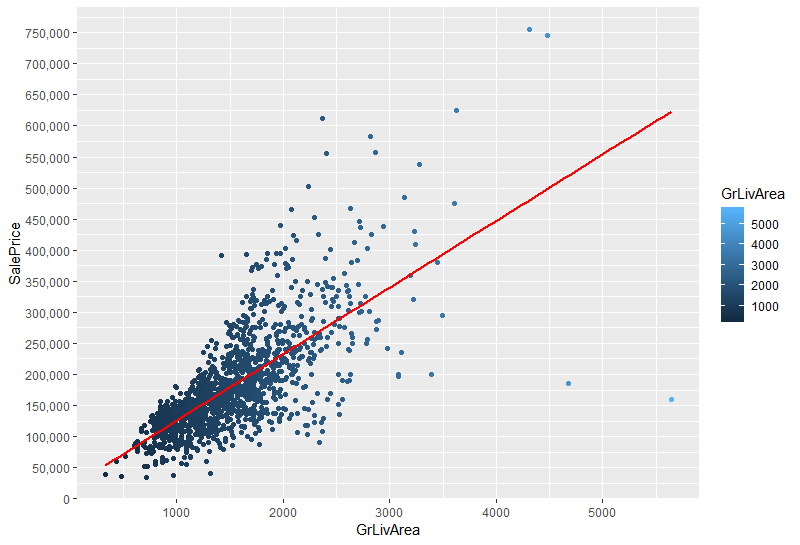
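A rough sketch of the PoolQC rule on made-up rows is shown here. It assumes that "PoolArea exists" means `PoolArea > 0`, and it leaves a house with OverallQual exactly 5 untouched, since the rule above does not cover that case.

```python
import numpy as np
import pandas as pd

# Toy rows; the values are made up for illustration.
df = pd.DataFrame({
    "PoolArea": [0, 368, 444, 561, 512],
    "PoolQC": [np.nan, np.nan, np.nan, np.nan, "Ex"],
    "OverallQual": [7, 4, 6, 3, 8],
})

# Assumption: a positive PoolArea means the house has a pool.
has_pool_no_quality = (df["PoolArea"] > 0) & df["PoolQC"].isna()

# Better-than-average homes get 'Gd', worse-than-average homes get 'Fa'.
df.loc[has_pool_no_quality & (df["OverallQual"] > 5), "PoolQC"] = "Gd"
df.loc[has_pool_no_quality & (df["OverallQual"] < 5), "PoolQC"] = "Fa"
```

The first row has no pool at all, so its PoolQC stays missing here.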

Moving on to an initial look at outliers, we first removed two data points based on GrLivArea. In the following graph, those data points (highlighted in yellow) do not follow the general trend between GrLivArea and SalePrice. We returned to outlier removal later, once we began running models.
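One way such points could be dropped is with a simple mask on living area and price. The living-area column is named `GrLivArea` in the Kaggle file. The cutoff values and the toy rows here are hypothetical, chosen only to show the mechanics, and are not the thresholds we actually used.

```python
import pandas as pd

# Toy rows; the values are made up for illustration.
train = pd.DataFrame({
    "GrLivArea": [1200, 1710, 2200, 4676, 5642],
    "SalePrice": [150000, 208500, 290000, 184750, 160000],
})

# Hypothetical cutoffs: very large houses that sold unusually cheaply.
AREA_CUTOFF = 4000
PRICE_CUTOFF = 300000

is_outlier = (train["GrLivArea"] > AREA_CUTOFF) & (train["SalePrice"] < PRICE_CUTOFF)
train = train.loc[~is_outlier].reset_index(drop=True)
```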

Sale Price Variable

Our goal in this project is to predict the sale price given the features in the test dataset, so we first examined this column in the training dataset.

Figure 5: Sale Price variable is not normally distributed.

The histogram in Figure 5 shows that this variable is skewed, which violates normality, one of the assumptions of multiple linear regression. To bring the variable closer to a normal distribution, we applied a log transformation (Figure 6).

Figure 6: Sale Price variable after the log transformation.
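A small sketch of the log transformation with NumPy, on made-up prices, is given here. It also shows the inverse step, since predictions made on the log scale have to be exponentiated before they are prices again.

```python
import numpy as np
import pandas as pd

# Toy prices with a long right tail; the values are made up for illustration.
prices = pd.Series([34900, 129975, 163000, 214000, 755000], name="SalePrice")

log_prices = np.log(prices)

# Skewness before and after the transformation.
skew_before = prices.skew()
skew_after = log_prices.skew()

# Undo the transformation to get back to dollar amounts.
recovered = np.exp(log_prices)
```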

Feature Engineering

When we analyzed the features, we realized that some of them could be combined. The first combination was the total square footage of the house.

- BsmtFinSF1

- BsmtFinSF2

- 1stFlrSF

- 2ndFlrSF

Another combined variable is the number of bathrooms in the house. We counted each full bath as 1 and each half bath as 0.5.

- FullBath

- HalfBath

- BsmtFullBath

- BsmtHalfBath

We also combined the total porch size.

- OpenPorchSF

- EnclosedPorch

- 3SsnPorch

- ScreenPorch

- WoodDeckSF
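The three combined features listed above can be sketched in pandas as follows. The new column names (`TotalSF`, `TotalBath`, `TotalPorchSF`) and the toy rows are assumptions made for this illustration.

```python
import pandas as pd

# Toy rows; the values are made up for illustration.
df = pd.DataFrame({
    "BsmtFinSF1": [706, 978], "BsmtFinSF2": [0, 0],
    "1stFlrSF": [856, 1262], "2ndFlrSF": [854, 0],
    "FullBath": [2, 2], "HalfBath": [1, 0],
    "BsmtFullBath": [1, 0], "BsmtHalfBath": [0, 1],
    "OpenPorchSF": [61, 0], "EnclosedPorch": [0, 0], "3SsnPorch": [0, 0],
    "ScreenPorch": [0, 0], "WoodDeckSF": [0, 298],
})

# Total square footage: finished basement plus both floors.
df["TotalSF"] = df[["BsmtFinSF1", "BsmtFinSF2", "1stFlrSF", "2ndFlrSF"]].sum(axis=1)

# Bathrooms: a full bath counts as 1, a half bath as 0.5.
df["TotalBath"] = (
    df["FullBath"] + 0.5 * df["HalfBath"]
    + df["BsmtFullBath"] + 0.5 * df["BsmtHalfBath"]
)

# Total porch size across all porch and deck types.
porch_cols = ["OpenPorchSF", "EnclosedPorch", "3SsnPorch", "ScreenPorch", "WoodDeckSF"]
df["TotalPorchSF"] = df[porch_cols].sum(axis=1)
```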

Also, to be able to fit linear regression models, we converted all categorical variables into dummy variables.
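A minimal sketch of this step with `pandas.get_dummies`, on a made-up three-row frame, is shown here. Numeric columns pass through unchanged, and each categorical column becomes one indicator column per category.

```python
import pandas as pd

# Toy rows; the values are made up for illustration.
df = pd.DataFrame({
    "LotArea": [8450, 9600, 11250],
    "MSZoning": ["RL", "RM", "RL"],
    "Street": ["Pave", "Pave", "Grvl"],
})

# One indicator column per category of each categorical variable.
encoded = pd.get_dummies(df)
```

Passing `drop_first=True` would drop one category per variable, which avoids perfectly collinear columns in an unregularized linear model; whether that is needed depends on the model used.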

Data Correlations

To better understand the overall dataset, we analyzed the correlations among the variables of the housing data set with the heatmap in Figure 7. In this chart, darker colors indicate a stronger correlation between two variables, while lighter colors indicate a weaker one.

Figure 7: Correlation among the variables.

The next heatmap (Figure 8) shows the features most correlated with sale price. Variables such as overall quality, size, and age are highly correlated with price.

Figure 8: Features most correlated with the sale price variable.
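Ranking features by their correlation with sale price can be sketched as follows. The data is synthetic, generated so that two columns drive the price and a third is pure noise; only the ranking step reflects the analysis described above.

```python
import numpy as np
import pandas as pd

# Synthetic data: price depends on quality and area; "MiscVal" is noise.
rng = np.random.default_rng(0)
n = 200
quality = rng.integers(1, 11, size=n)
area = rng.normal(1500, 400, size=n)
noise_col = rng.normal(size=n)
price = 20000 * quality + 80 * area + rng.normal(0, 10000, size=n)

df = pd.DataFrame({
    "OverallQual": quality,
    "GrLivArea": area,
    "MiscVal": noise_col,
    "SalePrice": price,
})

# Absolute correlation of every feature with SalePrice, strongest first.
corr_with_price = (
    df.corr()["SalePrice"].drop("SalePrice").abs().sort_values(ascending=False)
)
top_features = corr_with_price.head(2).index.tolist()
```

The full matrix from `df.corr()` is what a heatmap like Figure 7 would be drawn from.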

Another chart that gives a good idea of the relationships between variables is the scatter plot shown in Figure 9.

Outliers in Our Data

First, we created a Ridge linear regression model, which produced good results with default values. The goal here was to use a straightforward model to identify outlying points: properties whose sale prices are far from what their features would suggest.

Then, we defined a function that fits the model and computes the residuals between the model's predicted sale prices and the true sale prices. We calculated the standard deviation of the residuals and removed the 20 or so properties whose residuals exceeded three times this standard deviation from the training data, so that they would not skew the parameters of the fitted models.

We did the same with Elastic Net, which produced similar results. We then plotted the outliers found by these functions in Figure 10 (orange dots), looked up their indices, and removed those rows.
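The residual-based outlier check can be sketched as follows, on synthetic data with three planted outliers. The function name `find_outliers` and its `n_sigma` parameter are hypothetical; the same function accepts any scikit-learn regressor, so an `ElasticNet()` could be passed in place of `Ridge()`.

```python
import numpy as np
from sklearn.linear_model import Ridge

# Synthetic regression data with three planted outliers (rows 0, 1, 2).
rng = np.random.default_rng(42)
X = rng.normal(size=(300, 5))
y = X @ np.array([1.0, -2.0, 0.5, 0.0, 3.0]) + rng.normal(scale=0.1, size=300)
y[:3] += 5.0

def find_outliers(model, X, y, n_sigma=3.0):
    """Fit the model and return row indices whose residual lies more than
    n_sigma standard deviations from the mean residual."""
    model.fit(X, y)
    residuals = y - model.predict(X)
    z = (residuals - residuals.mean()) / residuals.std()
    return np.where(np.abs(z) > n_sigma)[0]

outlier_idx = find_outliers(Ridge(), X, y)

# Drop the flagged rows before fitting the final models.
X_clean = np.delete(X, outlier_idx, axis=0)
y_clean = np.delete(y, outlier_idx)
```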

Feature Selection and Modeling

Regular regression coefficients describe the relationship between each predictor variable and the response.

Our first selection method was to find the coefficients of the predictors using RobustScaler and make_pipeline from sklearn's preprocessing and pipeline packages.

RobustScaler removes the median and scales the data according to the quantile range. We used it because standardizing a dataset is very important. Typically this is done by removing the mean and scaling to unit variance. However, outliers can distort the sample mean and variance. In such cases, the median and the interquartile range often give better results.

In addition, we used tenfold cross-validation. Figure 11 shows the coefficient importances found for our first model.

Figure 11: Coefficient importance for the first model.
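A rough sketch of this setup with scikit-learn follows: `RobustScaler` and a regressor chained with `make_pipeline`, scored with tenfold cross-validation. The data is synthetic, and `LinearRegression` is only a placeholder for the first model, which is an assumption of this example.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import RobustScaler

# Synthetic data with a few extreme rows to mimic outliers in the features.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 4))
X[:5] *= 20
y = X @ np.array([2.0, 0.0, -1.0, 0.5]) + rng.normal(scale=0.1, size=200)

# Scale with median and interquartile range, then fit the regressor.
model = make_pipeline(RobustScaler(), LinearRegression())

# Tenfold cross-validation; scikit-learn reports negated MSE as the score.
cv = KFold(n_splits=10, shuffle=True, random_state=0)
scores = cross_val_score(model, X, y, scoring="neg_mean_squared_error", cv=cv)
rmse_per_fold = np.sqrt(-scores)

# Coefficients (on the scaled features) for a coefficient-importance plot.
model.fit(X, y)
coefs = model.named_steps["linearregression"].coef_
```

Keeping the scaler inside the pipeline means it is refitted on each training fold, so no information from the validation fold leaks into the scaling.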

Second, we used embedded methods of feature selection: Ridge and Lasso. These regularize the model by adding a penalty against complexity, which reduces the degree of overfitting.

We ran both of the original models as well as their variants with built-in cross-validation (RidgeCV and LassoCV), which automatically select the best value of alpha.

We kept only features within two standard deviations.

Figure 12 shows the coefficients after running Lasso.
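A minimal sketch of RidgeCV and LassoCV on synthetic data, where only the first three of ten features carry signal, is shown here. The alpha grid is an assumption chosen for the example.

```python
import numpy as np
from sklearn.linear_model import LassoCV, RidgeCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import RobustScaler

# Synthetic data: features 0, 1 and 2 matter, the other seven do not.
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 10))
true_coef = np.array([3.0, -2.0, 1.5] + [0.0] * 7)
y = X @ true_coef + rng.normal(scale=0.5, size=200)

# Candidate penalty strengths (hypothetical grid).
alphas = np.logspace(-3, 1, 30)

ridge = make_pipeline(RobustScaler(), RidgeCV(alphas=alphas)).fit(X, y)
lasso = make_pipeline(
    RobustScaler(), LassoCV(alphas=alphas, cv=10, max_iter=10000)
).fit(X, y)

# The alpha each model picked by cross-validation.
best_ridge_alpha = ridge.named_steps["ridgecv"].alpha_
best_lasso_alpha = lasso.named_steps["lassocv"].alpha_

# Lasso can shrink coefficients exactly to zero; the rest are "selected".
lasso_coefs = lasso.named_steps["lassocv"].coef_
kept = np.flatnonzero(lasso_coefs)
```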

Next, we used Elastic Net, which combines the L1 and L2 penalties and addresses “over-regularization” by balancing between the lasso and ridge penalties. The alpha value was tuned with cross-validation.
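Tuning Elastic Net with cross-validation can be sketched with scikit-learn's `ElasticNetCV`. The data is synthetic, and the candidate `l1_ratio` values and alpha grid are assumptions; `l1_ratio` sets the balance between the lasso (L1) and ridge (L2) penalties.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV

# Synthetic data: three informative features out of ten.
rng = np.random.default_rng(2)
X = rng.normal(size=(200, 10))
y = X @ np.array([3.0, -2.0, 1.5] + [0.0] * 7) + rng.normal(scale=0.5, size=200)

# l1_ratio near 1 behaves like lasso, near 0 like ridge (hypothetical grid).
enet = ElasticNetCV(
    l1_ratio=[0.1, 0.5, 0.9],
    alphas=np.logspace(-3, 1, 30),
    cv=10,
    max_iter=10000,
)
enet.fit(X, y)

best_alpha = enet.alpha_
best_l1_ratio = enet.l1_ratio_
r2_on_training_data = enet.score(X, y)
```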

More Modeling

We implemented a grid search to find optimal parameters. We also used XGBoost’s cross-validation function with early stopping to obtain the optimal number of boosting rounds.
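The grid search step can be sketched with scikit-learn's `GridSearchCV`. To keep the example free of extra dependencies, scikit-learn's `GradientBoostingRegressor` stands in for XGBoost here, so this is a swapped-in model and not our actual setup; the parameter grid and data are made up, and XGBoost's early stopping is not shown.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic nonlinear data.
rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(150, 3))
y = np.sin(X[:, 0]) + X[:, 1] ** 2 + rng.normal(scale=0.1, size=150)

# Hypothetical grid: every combination is scored with 5-fold CV.
param_grid = {"max_depth": [2, 3], "learning_rate": [0.05, 0.1]}

search = GridSearchCV(
    GradientBoostingRegressor(n_estimators=100, random_state=0),
    param_grid,
    scoring="neg_mean_squared_error",
    cv=5,
)
search.fit(X, y)

best_params = search.best_params_
best_rmse = np.sqrt(-search.best_score_)
```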

Later, we discovered how to implement a meta-regressor through a process called stacked generalization (stacking), which trains a model on part of the training set. StackingCVRegressor and vecstack are two of the available options.

These models first split the training data into a new training set and a holdout set. The algorithm then runs the base models on the holdout set and uses their predictions as input for the meta-model, the meta-regressor that is fitted on the ensemble of regressors. Surprisingly, parameter tuning for this model is fairly easy. However, it took forever to run: around one hour each time. It didn’t give the lowest RMSE score, but it was still immensely useful.
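The idea can be sketched with scikit-learn's built-in `StackingRegressor`, used here as a stand-in for StackingCVRegressor and vecstack so the example needs no extra packages. The base models, their settings, and the data are assumptions for illustration.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, StackingRegressor
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import cross_val_score

# Synthetic data with a mild nonlinearity that linear models cannot capture.
rng = np.random.default_rng(3)
X = rng.normal(size=(200, 5))
y = (
    X @ np.array([1.0, -2.0, 0.5, 0.0, 3.0])
    + 0.5 * X[:, 0] ** 2
    + rng.normal(scale=0.1, size=200)
)

# Base models produce out-of-fold predictions (cv=5); the final estimator
# (the meta-regressor) is trained on those predictions.
stack = StackingRegressor(
    estimators=[
        ("ridge", Ridge(alpha=1.0)),
        ("lasso", Lasso(alpha=0.01)),
        ("gbr", GradientBoostingRegressor(n_estimators=50, random_state=0)),
    ],
    final_estimator=Ridge(alpha=1.0),
    cv=5,
)

scores = cross_val_score(stack, X, y, scoring="neg_mean_squared_error", cv=3)
stack_rmse = np.sqrt(-scores.mean())

stack.fit(X, y)
sample_predictions = stack.predict(X[:3])
```

Each outer fold refits every base model several times, which is one reason stacking is slow on the full data set.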

Lastly, the final averaging weights were mostly trial and error. We gave the last two models a weight of 0.3 each and the other models weights of 0.2 or 0.1.
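Weighted averaging of model predictions can be sketched as follows. The model names, the predictions, and the exact assignment of weights to models are hypothetical; the only constraint carried over is that the weights sum to 1.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error between two arrays."""
    diff = np.asarray(y_true) - np.asarray(y_pred)
    return float(np.sqrt(np.mean(diff ** 2)))

# Made-up log-scale targets and predictions from five hypothetical models.
y_true = np.array([12.10, 11.80, 12.45, 11.95])
predictions = {
    "model_a": np.array([12.30, 11.60, 12.40, 12.10]),
    "model_b": np.array([12.00, 11.90, 12.60, 11.80]),
    "model_c": np.array([12.15, 11.75, 12.50, 12.00]),
    "model_d": np.array([12.05, 11.85, 12.40, 11.90]),
    "model_e": np.array([12.12, 11.78, 12.47, 11.97]),
}

# Hypothetical weights: 0.3 for the last two models, 0.2 or 0.1 for the rest.
weights = {"model_a": 0.1, "model_b": 0.1, "model_c": 0.2,
           "model_d": 0.3, "model_e": 0.3}
assert abs(sum(weights.values()) - 1.0) < 1e-9

# Blend: weighted sum of the individual predictions.
blend = sum(weights[name] * predictions[name] for name in predictions)
blend_rmse = rmse(y_true, blend)
```

Because the weights sum to 1, each blended value stays between the smallest and largest individual prediction for that house.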

Result

Our combined effort resulted in a Kaggle RMSE score of 0.115, which placed us at rank 621.