Studying Data to Predict Housing Prices in Ames

The skills demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

The Ames Housing data set provides sale prices for close to 3,000 homes in Ames, IA, along with some 79 features describing each home. Such a feature-rich dataset provides an excellent opportunity to apply machine learning techniques to predict the sale price of houses. The main goal of this project is therefore to explore various predictive models and gain a better understanding of the mechanisms behind them. In the course of finding the model that gives us the most accurate results, we hope to acquire deeper insight into the different models we used, the dataset itself, and the process as a whole.

Data Exploration and Cleaning

As a first step toward finding a suitable model relating the sale price of houses to the other variables, we explored the data to get a better sense of the different features present and how to handle them. One of the first things we noticed was that the target variable, sale price, is clearly not normally distributed when plotted as a histogram.

The histogram has a distinct rightward skew. Such a skew is not surprising: prices cannot fall below zero, while a relatively small number of very expensive houses stretch the upper tail and pull the mean above the median. Given this, before fitting a linear model to the sale price we should log transform it so that its distribution is much closer to normal.
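To make the effect of the log transform concrete, here is a minimal sketch. It uses synthetic log-normal "prices" rather than the Ames data, and the `skewness` helper and variable names are our own illustrative choices, not part of the original analysis.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic right-skewed "prices" (log-normal); this is NOT the Ames data.
sale_price = rng.lognormal(mean=12.0, sigma=0.4, size=3000)


def skewness(x):
    """Sample skewness: the third standardized moment."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean()
    return (centered ** 3).mean() / (centered ** 2).mean() ** 1.5


# log1p(x) = log(1 + x); np.expm1 inverts it when converting predictions back.
log_price = np.log1p(sale_price)

skew_raw = skewness(sale_price)
skew_log = skewness(log_price)
print(f"skew before: {skew_raw:.2f}, after log1p: {skew_log:.2f}")
print(f"mean > median before transform: {sale_price.mean() > np.median(sale_price)}")
```

A skewness close to zero after the transform is what we would hope to see on the real target as well.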

Correlation Between Various Features and Sale Prices

Next, we investigated the correlation between the various features and sale price. We plotted the top ten features with a correlation of 0.5 or higher with sale price and noticed that many of them were highly correlated not only with sale price but also with each other. This is something to keep in mind when we do feature selection and engineering later on.
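A correlation screen of this kind can be sketched with pandas as follows. The data frame is synthetic and the column names are only illustrative stand-ins; the 0.5 threshold is the one mentioned above.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(1)
n = 500

# Synthetic stand-in for the training table; values are made up.
gr_liv_area = rng.normal(1500, 400, n)
df = pd.DataFrame({
    "GrLivArea": gr_liv_area,
    "TotRmsAbvGrd": gr_liv_area / 250 + rng.normal(0, 0.5, n),  # tracks living area
    "MoSold": rng.integers(1, 13, n).astype(float),             # unrelated to price
})
df["SalePrice"] = 100 * df["GrLivArea"] + rng.normal(0, 20000, n)

corr = df.corr()

# Features whose absolute correlation with the target is at least 0.5.
with_target = corr["SalePrice"].drop("SalePrice")
top = with_target[with_target.abs() >= 0.5].sort_values(ascending=False)
print(top)

# Correlation among the selected predictors themselves (multicollinearity check).
print(corr.loc[top.index, top.index].round(2))
```

The second printout is where predictors that are strongly correlated with each other would show up.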

Continuing our investigation of the dataset, we looked for missing values and decided how to deal with them. The first figure shows the percentage of each column that is filled (not null), and the second shows the actual number of missing values in each column. As we can see, several columns have quite a lot of missing values. In general, we classified them based on what the missing value represents and imputed them accordingly.

Observations

The majority of the missing data correspond to “No such Feature”, and we imputed ‘None’ or 0 for them. With the other missing values we took a more granular approach. For the missing numerical features, we grouped the data by Neighborhood and then imputed the mean or median for the Neighborhood, whichever seemed more appropriate. This is reasonable, as we expect houses in the same neighborhood to share similar features.

For the missing categorical features, we grouped the data similarly but imputed the mode of the Neighborhood instead. With these steps we were able to take care of the majority of the missing values. There were a few special cases which we handled individually, such as “Garage Year Built”, for which we imputed the year the house was built, and kitchen quality, which we scaled according to the overall quality of the house instead of going by the neighborhood average.
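The imputation rules described above can be sketched with pandas `groupby` and `transform`. The table below is a tiny made-up example, and the column names are assumed to follow the Kaggle version of the dataset; which columns received which treatment in the actual project is not shown here.

```python
import numpy as np
import pandas as pd

# Tiny illustrative table; all values are made up.
df = pd.DataFrame({
    "Neighborhood": ["NAmes", "NAmes", "NAmes", "OldTown", "OldTown", "OldTown"],
    "LotFrontage":  [60.0, np.nan, 80.0, 50.0, 54.0, np.nan],
    "MSZoning":     ["RL", "RL", None, "RM", None, "RM"],
    "PoolQC":       [None, None, "Gd", None, None, None],
    "MasVnrArea":   [0.0, np.nan, 120.0, 0.0, 35.0, 0.0],
    "YearBuilt":    [1960, 1975, 1968, 1920, 1935, 1941],
    "GarageYrBlt":  [1960.0, np.nan, 1970.0, np.nan, 1950.0, 1941.0],
})

# 1) Missing means "no such feature": fill with 'None' (categorical) or 0 (numeric).
df["PoolQC"] = df["PoolQC"].fillna("None")
df["MasVnrArea"] = df["MasVnrArea"].fillna(0)

# 2) Numeric gap: median of the house's neighborhood.
df["LotFrontage"] = df["LotFrontage"].fillna(
    df.groupby("Neighborhood")["LotFrontage"].transform("median")
)

# 3) Categorical gap: most frequent value (mode) within the neighborhood.
neighborhood_mode = df.groupby("Neighborhood")["MSZoning"].transform(
    lambda s: s.mode().iloc[0]
)
df["MSZoning"] = df["MSZoning"].fillna(neighborhood_mode)

# 4) Special case: garage year falls back to the year the house was built.
df["GarageYrBlt"] = df["GarageYrBlt"].fillna(df["YearBuilt"])

print(df)
```

Using `transform` keeps the result aligned with the original rows, so it can be passed straight to `fillna`.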

Feature Engineering

For feature engineering, we began by simplifying features. Because many features are related to each other and are highly correlated, as shown in the previous chart, we condensed groups of related columns into single features. Altogether, we built five new features:

- Total Bathrooms: Sum of above-ground full and half baths and basement full and half baths.

- House Age: The difference between Year Sold and Year Remodeled

- Remodeled: Binary value indicating whether the remodel year differs from the year the house was built

- Is New: If Year Sold equals Year Built

- Neighborhood Wealth: A categorical value (1-4) grouping houses based on disparities in their neighborhood's median wealth.
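As a rough illustration, the first four features in the list could be derived as below. The rows are made up, the source column names are assumed to match the Kaggle file, and the new column names are our own. Neighborhood Wealth is left out because the exact grouping rule is not specified here.

```python
import pandas as pd

# Made-up rows; source column names are assumed to match the Kaggle Ames file.
df = pd.DataFrame({
    "FullBath": [2, 1, 2],
    "HalfBath": [1, 0, 0],
    "BsmtFullBath": [1, 0, 0],
    "BsmtHalfBath": [0, 1, 0],
    "YearBuilt": [1995, 1950, 2008],
    "YearRemodAdd": [1995, 2002, 2008],
    "YrSold": [2008, 2009, 2008],
})

# Total Bathrooms: plain sum of the four bathroom counts, as described in the list.
bath_cols = ["FullBath", "HalfBath", "BsmtFullBath", "BsmtHalfBath"]
df["TotalBath"] = df[bath_cols].sum(axis=1)

# House Age: years between the remodel year and the sale year.
df["HouseAge"] = df["YrSold"] - df["YearRemodAdd"]

# Remodeled: 1 if the remodel year differs from the build year, else 0.
df["Remodeled"] = (df["YearRemodAdd"] != df["YearBuilt"]).astype(int)

# Is New: 1 if the house was sold in the year it was built, else 0.
df["IsNew"] = (df["YrSold"] == df["YearBuilt"]).astype(int)

print(df[["TotalBath", "HouseAge", "Remodeled", "IsNew"]])
```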

Data Transforming / Scaling

After noting that several of the variables, such as Ground Living Area, showed a mostly linear relation to sale price, we decided to use linear models to fit the dataset. We were unsure whether this would be the best method, but we wanted to give it a try. Linear models require us to scale our data and to transform our categorical values into dummy variables. For this we used Scikit-Learn's StandardScaler to scale the data (subtract the mean and divide by the standard deviation) and the pandas get_dummies function to one-hot encode our categorical features. As mentioned earlier, we also log transformed the target variable to bring its distribution closer to normal.
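A minimal sketch of these three preprocessing steps is shown below. The four rows are made up and the column selection is only an example; in a real pipeline the scaler would normally be fitted on the training split only and then applied to the test split.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

# Made-up rows for illustration only.
df = pd.DataFrame({
    "GrLivArea": [1500.0, 1200.0, 2000.0, 900.0],
    "OverallQual": [7.0, 6.0, 8.0, 5.0],
    "Neighborhood": ["CollgCr", "Veenker", "CollgCr", "Crawfor"],
    "SalePrice": [200000.0, 150000.0, 300000.0, 100000.0],
})

# Log transform the target; np.expm1 maps predictions back to prices.
y = np.log1p(df["SalePrice"])

X = df.drop(columns="SalePrice")

# Standardize numeric columns: subtract the mean, divide by the standard deviation.
numeric_cols = ["GrLivArea", "OverallQual"]
X[numeric_cols] = StandardScaler().fit_transform(X[numeric_cols])

# One-hot encode the categorical column.
X = pd.get_dummies(X, columns=["Neighborhood"])

print(X)
print(y)
```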

Modeling

Below is a list of methods we used:

- Linear Models

  - Ridge Regression

  - Lasso Regression

  - ElasticNet Regression

- Support Vector Machine

- Tree based

  - Random Forest Regression

The results are summarised in the table below. A detailed discussion of each model follows.

Linear Models: Regularized Linear Regressions

The linear models we tried were regularized models: the Ridge, Lasso, and ElasticNet regressions. Based on the generally linear trend between the target and the predictors mentioned earlier, we expected linear models to work well with the data. Since the data had more than 200 features and we had no exact way to select them according to their importance for predicting the house price, it would have been difficult to use ordinary linear regression. We decided to go directly to regularized regression models so that we could select the meaningful features for the prediction, mitigate overfitting, and overcome multicollinearity problems at the same time.

Two preparation steps were needed. First, because Lasso and Ridge regressions put constraints on the size of the coefficient associated with each variable, and that size depends on the magnitude of the variable, the standardization mentioned before was necessary. Second, we removed the outliers that were significantly far off from the linear relationship between the target and some of the main predictors, as shown in the plots below. Although the outlier removal might have caused some information loss, comparing the results before and after removal showed that it did improve the performance of the models.

Results

The results of the Ridge, Lasso, and ElasticNet models, with the hyperparameters used, are shown below. The hyperparameters, λ and the L1 ratio, were optimized by grid search using the LassoCV/RidgeCV/ElasticNetCV (K=10) classes from the Scikit-Learn package. For model evaluation, 10-fold cross-validation was used for each model.

In the plots comparing the predictions of the Ridge and Lasso models with the actual target values, the predictions agree well with the target. All the models achieved an R² of around 0.92 and an RMSE of less than 0.12, and they got the best Kaggle leaderboard scores of all the models that we ran. As we expected, the target variable, sale price (log-transformed), showed a relatively linear relationship with the predictors. The Ridge model received the best Kaggle leaderboard score, but the other models showed similar performance.
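The tuning and evaluation procedure can be sketched as follows. This is an illustration on synthetic data, not the project's actual code: the candidate `alphas` grid and `l1_ratio` values are assumptions, and note that Scikit-Learn calls the regularization strength λ `alpha`.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV, LassoCV, RidgeCV
from sklearn.model_selection import cross_val_score

# Synthetic regression problem: 3 informative features out of 10.
rng = np.random.default_rng(0)
n, p = 200, 10
X = rng.normal(size=(n, p))
true_coef = np.array([3.0, 2.0, 1.0] + [0.0] * (p - 3))
y = X @ true_coef + rng.normal(scale=0.5, size=n)

# Candidate regularization strengths (an assumed grid).
alphas = np.logspace(-3, 1, 20)

models = {
    "ridge": RidgeCV(alphas=alphas, cv=10),
    "lasso": LassoCV(alphas=alphas, cv=10),
    "elasticnet": ElasticNetCV(alphas=alphas, l1_ratio=[0.1, 0.5, 0.9], cv=10),
}

results = {}
for name, model in models.items():
    # Outer 10-fold CV estimates the error; each *CV model tunes alpha internally.
    mse = -cross_val_score(model, X, y, cv=10, scoring="neg_mean_squared_error")
    model.fit(X, y)  # refit on all data to read off the selected hyperparameters
    results[name] = {"rmse": float(np.sqrt(mse.mean())), "alpha": float(model.alpha_)}

results["elasticnet"]["l1_ratio"] = float(models["elasticnet"].l1_ratio_)

for name, res in results.items():
    print(name, res)
```

When the target is log-transformed, the RMSE computed this way is on the log scale, which is why values around 0.1 are plausible for house prices.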

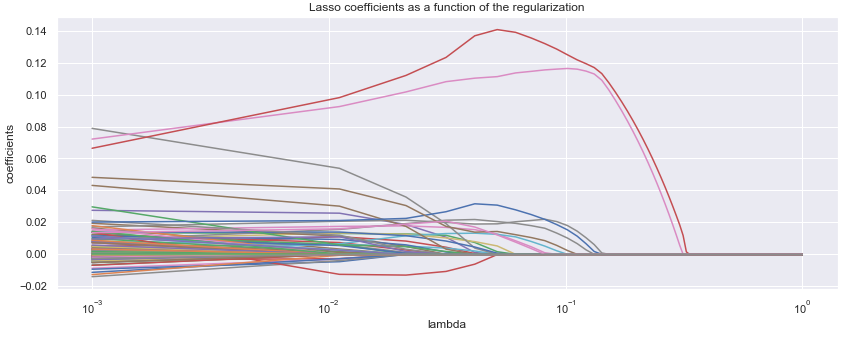

Coefficient Plots

We also used the Lasso method to generate the coefficient plots below, which show the importance of the different variables. As the regularization strength increases, the coefficients shrink to zero one by one; the later a variable's coefficient reaches zero, the more it affects the target. The two variables that most influence the model are Total Square Ft. and Overall Quality. Other important variables are Ground Living Area, Year Built, and Overall Condition.

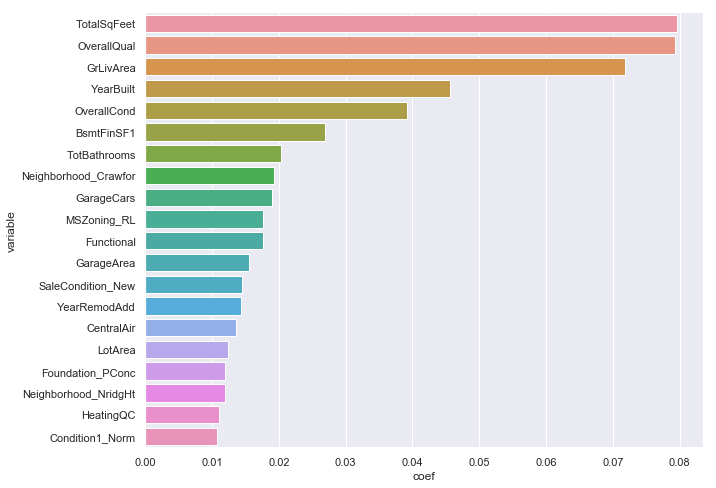
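A coefficient path like the one plotted above can be computed with Scikit-Learn's `lasso_path`. The sketch below uses synthetic, standardized data with generic feature labels (our own, purely illustrative) and ranks features by the largest penalty at which their coefficient is still non-zero.

```python
import numpy as np
from sklearn.linear_model import lasso_path

rng = np.random.default_rng(0)
n = 300
feature_names = ["strong", "medium", "weak", "noise"]  # illustrative labels

# Standardized synthetic predictors and a centered target
# (lasso_path does not fit an intercept).
X = rng.normal(size=(n, 4))
X = (X - X.mean(axis=0)) / X.std(axis=0)
y = 5.0 * X[:, 0] + 2.0 * X[:, 1] + 0.5 * X[:, 2] + rng.normal(scale=0.5, size=n)
y = y - y.mean()

# coefs has shape (n_features, n_alphas); alphas run from largest to smallest.
alphas, coefs, _ = lasso_path(X, y)

# Largest penalty at which each coefficient is still non-zero.
nonzero = np.abs(coefs) > 0
entry_alpha = np.array([alphas[row].max() if row.any() else 0.0 for row in nonzero])

# Features that survive the strongest penalties come first.
ranking = [feature_names[i] for i in np.argsort(-entry_alpha)]
print(ranking)
```

Plotting each row of `coefs` against `alphas` on a log axis would give the familiar path plot.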

Another way to view feature importance is shown below. The top 20 variables, ranked by the magnitude of their coefficients in our best Lasso model, are plotted, showing that the same variables (Total Square Ft., Overall Quality, etc.) affect the house sale price the most. Note that, in general, the size of a coefficient is not a reliable indicator of feature importance, because it depends on the scale of the variable. Since we have scaled all our variables, however, we can use it as a measure of feature importance more readily.

Support Vector Machine Regression

After trying the Ridge and Lasso based linear models, we tried SVM-based regression (SVR) to see whether a different model, tuned with methods such as grid search and cross-validation (CV), could give results that were just as good or better. For experimental purposes, we first tested SVR without parameter tuning and then obtained benchmark results with parameter tuning. For each configuration we recorded the RMSLE from a 5-fold CV on the training set, the Kaggle leaderboard score, and the computation time. The table below summarizes the results:

Results

The results we obtained with SVR showed us several key points. First, the choice of kernel in the SVR model plays a critical role in all three statistics. The linear kernel produced an extremely small RMSLE even before parameter tuning, indicative of severe over-fitting. The Gaussian kernel, by contrast, had a relatively large RMSLE but actually showed an improved Kaggle score over the linear kernel.

The next trend we observed was that SVR training time generally increased by at least one order of magnitude when we used GridSearchCV for parameter tuning. This suggests that, in projects involving larger datasets, it is advisable to first run the model without parameter tuning as a benchmark, since the relative performance of the different kernels before tuning corresponds well with their performance after tuning. For example, the RBF (Gaussian) kernel achieved the best Kaggle leaderboard result both before and after tuning. Conversely, the Poly and Sigmoid kernels performed poorly both before and after tuning.

The last conclusion we can draw is that the 5-fold CV benchmark on the training set for different model kernels is a good indicator for the Kaggle performance of the kernels. If a model performs well under the 5-fold CV benchmark, it is likely to perform well in the test set as well.
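The kernel comparison described in this section could be set up roughly as follows. This is a sketch on synthetic data: the parameter grid is an assumption, and the RMSE computed here plays the role of the RMSLE when the target is log-transformed.

```python
import time

import numpy as np
from sklearn.model_selection import GridSearchCV, cross_val_score
from sklearn.svm import SVR

# Synthetic data with a mild non-linearity.
rng = np.random.default_rng(0)
X = rng.normal(size=(150, 5))
y = X[:, 0] + 0.5 * X[:, 1] ** 2 + rng.normal(scale=0.1, size=150)

# Assumed grid; it contains the SVR defaults (C=1.0, epsilon=0.1).
param_grid = {"C": [0.1, 1.0, 10.0], "epsilon": [0.01, 0.1]}
scoring = "neg_mean_squared_error"

results = {}
for kernel in ["linear", "rbf", "poly", "sigmoid"]:
    # Benchmark without tuning: default hyperparameters, 5-fold CV.
    start = time.perf_counter()
    base_mse = -cross_val_score(SVR(kernel=kernel), X, y, cv=5, scoring=scoring).mean()
    base_time = time.perf_counter() - start

    # Same kernel with a grid search over C and epsilon.
    start = time.perf_counter()
    search = GridSearchCV(SVR(kernel=kernel), param_grid, cv=5, scoring=scoring)
    search.fit(X, y)
    tuned_time = time.perf_counter() - start

    results[kernel] = {
        "base_rmse": float(np.sqrt(base_mse)),
        "tuned_rmse": float(np.sqrt(-search.best_score_)),
        "base_time": base_time,
        "tuned_time": tuned_time,
        "best_params": search.best_params_,
    }

for kernel, res in results.items():
    print(kernel, res)
```

Comparing `base_time` with `tuned_time` per kernel gives a feel for the extra cost of the grid search.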

Random Forest

Due to the high number of categorical features, we felt the next best course of action would be to train a Random Forest model because of its inherent resilience to unscaled and categorical features. Even though it may not be the most time-efficient process, we used a grid search with cross-validation to tune the hyperparameters. We started with a fairly coarse grid search over widely spaced parameter values and ended with a very fine search to home in on the best parameters.

To test the usefulness of these hyperparameters, we also fitted a base random forest estimator using just 10 trees and default settings for everything else. With this base estimator we achieved an accuracy of 99.20% with an average error rate of 0.0954. Our tuned model achieved an accuracy of 99.26% with an average error rate of 0.0888. With an increase of only 0.06 percentage points in model accuracy, in most cases it would not have been worth spending the time on tuning, especially for large datasets. This shows that our hyperparameter optimization process is not as efficient as it could be.
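A coarse-to-fine search of this kind, together with a 10-tree baseline, might look like the sketch below. The data are synthetic, and the grids, the `search` helper, and the choice of tuned parameters are assumptions for illustration, not the settings used in the project.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, cross_val_score

rng = np.random.default_rng(0)
X = rng.normal(size=(120, 6))
y = 2.0 * X[:, 0] + np.sin(3 * X[:, 1]) + rng.normal(scale=0.2, size=120)

scoring = "neg_mean_squared_error"


def search(grid):
    """Grid search with 3-fold CV over the given parameter grid (hypothetical helper)."""
    forest = RandomForestRegressor(random_state=0)
    return GridSearchCV(forest, grid, cv=3, scoring=scoring).fit(X, y)


# Baseline: 10 trees, everything else left at its default.
baseline = RandomForestRegressor(n_estimators=10, random_state=0)
baseline_mse = -cross_val_score(baseline, X, y, cv=3, scoring=scoring).mean()

# Coarse pass: widely spaced values.
coarse = search({"n_estimators": [10, 50], "max_depth": [2, 8]})
best = coarse.best_params_

# Fine pass: neighbors of the coarse optimum, always including the optimum itself.
n_best, d_best = best["n_estimators"], best["max_depth"]
fine_grid = {
    "n_estimators": sorted({max(5, n_best - 10), n_best, n_best + 10}),
    "max_depth": sorted({max(1, d_best - 1), d_best, d_best + 1}),
}
fine = search(fine_grid)

print("baseline RMSE:", np.sqrt(baseline_mse))
print("coarse best:", coarse.best_params_, np.sqrt(-coarse.best_score_))
print("fine best:  ", fine.best_params_, np.sqrt(-fine.best_score_))
```

Because the fine grid contains the coarse optimum and the random seed is fixed, the fine search can only match or improve on the coarse cross-validated score, which makes the marginal gain from the extra search easy to read off.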

Looking Forward / Summary

As we completed our analysis of the dataset, we thought of ways to improve our model. One idea that we discussed but did not have time to implement was to perform some sort of classification before doing the modeling. We could add our own classes or groupings as variables and check feature importance to see if and how our models changed based on these new variables. This classification could even be done with unsupervised methods such as clustering, to discover hidden groupings within the data and use them as new variables. Finally, we could have used ensemble methods to combine our models to obtain the best results.

In conclusion, this is a basic analysis of the dataset using relatively rudimentary modeling techniques. Given the relative simplicity of the data, despite the large number of features, it is not surprising that we obtained the best results with our linear models. With more time, and now a greater understanding of what other modeling processes are out there, we feel that a much more in-depth analysis and subsequent modeling process can be done.