Utilizing Data to Detect the Red Flags of Medicare Fraud

The skills I demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.


Introduction

Medicare is the federal health insurance program of the United States of America for people sixty-five and older or with special conditions. Medicare fraud is a multi-billion-dollar problem not only for the Medicare program and the United States Government but also for the recipients forced to pay higher premiums to offset the fraud. Data shows that improper payments to doctors, hospitals, and networks committing fraud total almost $50 billion each year.

Unlike centralized healthcare systems, Medicare acts as the healthcare insurer rather than the service provider. Consequently, most healthcare providers can accept Medicare patients, but the capacity for fraud oversight is reduced.

Medicare fraud is committed in numerous ways, and fraudsters become more creative and subtle as overt fraud is caught and prosecuted. Several common methods of fraud are upcoding diagnoses, unbundling procedures, paying kickbacks to other providers, and overbilling. These improper payouts not only sap money from Medicare but also increase the premiums paid by all Medicare recipients to cover the fraudulent payments.

We use a Kaggle dataset encompassing a subset of Medicare claims. This dataset covers more than a year of claims between a group of patients and providers. There are inpatient and outpatient claims tables, as well as information regarding the beneficiaries (patients) and a key indicating if a provider is suspected of fraud.

Investigation

Missingness

The first step in understanding our problem is understanding what is missing in our dataset. Luckily, this is a high-quality dataset and almost all missingness is intentional.

This occurs in several ways. The largest sources of missingness are Diagnosis and Procedure codes, which can be seen on the right side of both graphs in Fig. 1. These codes, along with the corresponding physicians, are intentionally missing because not all patients need all 10 diagnosis codes, even fewer need all 6 procedure codes, and many patients lack an Operating Physician because they did not undergo an operation. The only real source of missingness appears to be the deductible amount paid by the patient: several hundred values are missing, which seems to indicate that payment was not collected.
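The audit described above can be sketched in a few lines of plain Python. This is only an illustration: the column names and toy rows below are assumptions, not the dataset's actual schema.

```python
def missing_fraction(rows, columns):
    """Return {column: fraction of rows where the value is None or empty}."""
    total = len(rows)
    return {
        col: sum(1 for row in rows if row.get(col) in (None, "")) / total
        for col in columns
    }

# Toy claims; the column names are hypothetical stand-ins for the real tables.
claims = [
    {"DiagnosisCode_1": "4019", "ProcedureCode_1": None, "OperatingPhysician": None, "DeductibleAmtPaid": 1068.0},
    {"DiagnosisCode_1": "2724", "ProcedureCode_1": "9904", "OperatingPhysician": "PHY1", "DeductibleAmtPaid": 1068.0},
    {"DiagnosisCode_1": "V5869", "ProcedureCode_1": None, "OperatingPhysician": None, "DeductibleAmtPaid": None},
    {"DiagnosisCode_1": "4280", "ProcedureCode_1": None, "OperatingPhysician": None, "DeductibleAmtPaid": 0.0},
]

columns = ["DiagnosisCode_1", "ProcedureCode_1", "OperatingPhysician", "DeductibleAmtPaid"]
report = missing_fraction(claims, columns)
for col, frac in report.items():
    print(f"{col}: {frac:.0%} missing")
```

In this toy data, the procedure code and operating physician are missing together, which is the pattern one would expect when missingness is structural rather than accidental.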

Data on Fraudulence

To predict fraudulent providers, we are given a key indicating if a particular provider (represented by a unique code) is suspected of fraud. In this key, there is a 9% prevalence of fraud. These fraudulent providers make up 36% of outpatient claims, 58% of inpatient claims, and 38% of overall claims (Fig. 2).

This is because fraudulent providers are submitting more claims than non-suspect providers. While most non-suspect providers are submitting 10-200 claims throughout our dataset, suspect providers are submitting 100 to 1000 claims (Fig. 3).
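To make the gap between provider-level prevalence and claim-level share concrete, here is a small sketch on made-up data. The provider IDs and counts are hypothetical; only the calculation mirrors the comparison above.

```python
from collections import Counter

def fraud_summary(claim_providers, suspect_providers):
    """Compare the share of suspect providers with their share of claims.

    claim_providers: one provider ID per claim.
    suspect_providers: set of provider IDs flagged in the fraud key.
    """
    claims_per_provider = Counter(claim_providers)
    n_suspect = sum(1 for p in claims_per_provider if p in suspect_providers)
    suspect_claims = sum(
        n for p, n in claims_per_provider.items() if p in suspect_providers
    )
    return {
        "provider_prevalence": n_suspect / len(claims_per_provider),
        "claim_share": suspect_claims / len(claim_providers),
    }

# Hypothetical example: one suspect provider submitting most of the claims.
claim_providers = ["A"] * 6 + ["B"] * 2 + ["C", "D"]
summary = fraud_summary(claim_providers, suspect_providers={"A"})
print(summary)
```

Here a single suspect provider is 25% of providers but 60% of claims, the same kind of imbalance reported for the real dataset.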

While most analyses would aggregate together information about all claims by the provider, this approach misses an important aspect of our data and the real-world problem. Most aggregation techniques (and models built from them) will change significantly when the timeframe of the aggregation is changed.

Aggregation also requires sufficient time to pass to collect data, which in this case means allowing a provider to commit fraud for a year before rendering a decision on their status. Thus, a better way is to identify the indicators of fraud within each claim and render a decision at that level.

Diagnosis and Procedure Codes Data

Two of the most common forms of fraud are upcoding and unbundling, techniques in which diagnosis and procedure codes are, respectively, inflated in severity or split into multiple codes when they should be reported as a single code. These codes are derived from the ICD-9-CM system, which codifies diagnoses and procedures in the United States.

As we might expect, when we investigate the diagnosis and procedure code distribution across inpatient and outpatient claims, we see that each claim type has its own distinct code distribution (Fig. 4). Inpatients tend to have more diagnosis and procedure codes than outpatients: about 60% of inpatients have 1 or 2 procedure codes, while almost no outpatients have any procedure codes. Interestingly, over 60% of inpatients have 9 diagnosis codes while only 20% have 8 or 10 codes.

One way to visualize this relationship in the context of fraud is using a heatmap (Fig. 5). While a high number of procedure codes per diagnosis code increases the likelihood of fraud, very few patients have more than 2 procedure codes, so this feature only targets a small fraction of fraudulent providers.
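The numbers behind such a heatmap can be sketched as a grid of fraud rates keyed by (diagnosis code count, procedure code count). The field names (`diag_`, `proc_`, `suspect`) and the toy claims are assumptions for illustration only.

```python
from collections import defaultdict

def count_codes(claim, prefix):
    """Count the non-empty code fields whose name starts with prefix."""
    return sum(1 for key, value in claim.items() if key.startswith(prefix) and value)

def fraud_rate_grid(claims):
    """Map (n_diagnosis_codes, n_procedure_codes) -> share of suspect claims."""
    totals = defaultdict(int)
    suspects = defaultdict(int)
    for claim in claims:
        cell = (count_codes(claim, "diag_"), count_codes(claim, "proc_"))
        totals[cell] += 1
        suspects[cell] += claim["suspect"]
    return {cell: suspects[cell] / totals[cell] for cell in totals}

# Hypothetical claims; "suspect" is 1 if the provider is flagged in the key.
claims = [
    {"diag_1": "4019", "diag_2": "2724", "proc_1": None, "suspect": 0},
    {"diag_1": "4280", "diag_2": None, "proc_1": None, "suspect": 1},
    {"diag_1": "4280", "diag_2": None, "proc_1": None, "suspect": 0},
    {"diag_1": "5849", "diag_2": "2762", "proc_1": "3995", "suspect": 1},
]

grid = fraud_rate_grid(claims)
for cell, rate in sorted(grid.items()):
    print(cell, f"{rate:.0%}")
```

Each cell's rate would become one colored square in the heatmap; reporting the cell counts alongside the rates helps show how few claims sit in the high-procedure cells.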

Removed Features and Protected Classes

Some features in our dataset held little information or were sensitive. One example is the beneficiaries table, which provides patient information such as Medicare type (A or B). This was conveyed in two columns denoting the number of months the patient had participated in Medicare A and B. However, this information is not relevant to the fraud analysis, as evidenced by the fact that both Medicare A and B are heavily skewed to 12 months and there was no clear signal across the months of participation indicating an abundance of fraud (Fig. 6).

Furthermore, the beneficiary table also included race and gender, which are protected classes in the United States. Machine learning models tend to use race and gender information in biased ways, and within our context, there isn’t much information to be gained from incorporating these features (Fig. 7). For these reasons, the beneficiary table was not considered in the fraud analysis.

One possible downside to this decision is that predatory providers might target specific groups as one aspect of their fraud, and thus removing these features prevents the model from identifying this trend. However, without information to support the predatory provider hypothesis, it is better to drop these sensitive features.

Data on Feature Cleaning and Engineering

The last step before modeling is feature cleaning and engineering. Several columns needed minor cleaning, such as converting character values to numerical values and filling the missing values of Deductible Amount Paid with 0.

Several types of features were engineered in our dataset, most notably the count of diagnosis, procedure, and chronic conditions. Multiple accumulating features were created, including Time Since Last Claim, Total Claim Days, Cumulative Visits, Cumulative Provider Visits (Fig. 8), and cumulative payments. Another set of features divides the Insurance Claim Payment amongst the physicians, the number of codes, and days of care.
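As a rough sketch of how accumulating features like these could be built, the function below walks through claims in date order and records only what was known at the time of each claim. The field names and the per-patient definitions are assumptions; the original feature definitions may differ.

```python
from collections import defaultdict
from datetime import date

def add_accumulating_features(claims):
    """Return claims sorted by start date with running per-patient features."""
    last_seen = {}                      # patient -> start date of previous claim
    visits = defaultdict(int)           # patient -> claims so far
    provider_visits = defaultdict(int)  # (patient, provider) -> claims so far
    enriched = []
    for claim in sorted(claims, key=lambda c: c["start"]):
        patient, provider = claim["patient"], claim["provider"]
        previous = last_seen.get(patient)
        visits[patient] += 1
        provider_visits[(patient, provider)] += 1
        enriched.append({
            **claim,
            "days_since_last_claim": (
                (claim["start"] - previous).days if previous is not None else None
            ),
            "cumulative_visits": visits[patient],
            "cumulative_provider_visits": provider_visits[(patient, provider)],
        })
        last_seen[patient] = claim["start"]
    return enriched

# Hypothetical claims for one patient, deliberately out of order.
claims = [
    {"patient": "P1", "provider": "A", "start": date(2009, 1, 11)},
    {"patient": "P1", "provider": "A", "start": date(2009, 1, 1)},
    {"patient": "P1", "provider": "B", "start": date(2009, 1, 31)},
]

features = add_accumulating_features(claims)
for row in features:
    print(row["start"], row["days_since_last_claim"],
          row["cumulative_visits"], row["cumulative_provider_visits"])
```

Because each value depends only on earlier claims, features built this way remain available at the moment a new claim arrives, which matters for claim-level prediction.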

A feature interaction of note is the Claim End Date and Discharge Date. For the few claims which have a different Claim End Date vs Discharge Date, all claims are from fraudulent providers. A pure node!

Since the models to be employed are all tree-based, there is no pressing need to transform the data. However, as good practice, the continuous features were transformed using a Power Transformer.
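For readers unfamiliar with power transforms, here is a minimal sketch of the Yeo-Johnson transform, which is the default method of scikit-learn's `PowerTransformer`. The library estimates the parameter λ from the data; the sketch fixes λ by hand, which is an assumption made purely for illustration, and the payment values are made up.

```python
import math

def yeo_johnson(x, lam):
    """Yeo-Johnson power transform of a single value for a fixed lambda."""
    if x >= 0:
        if lam == 0:
            return math.log1p(x)
        return ((x + 1) ** lam - 1) / lam
    if lam == 2:
        return -math.log1p(-x)
    return -(((1 - x) ** (2 - lam) - 1) / (2 - lam))

# Hypothetical, heavily right-skewed payment amounts.
payments = [0.0, 10.0, 100.0, 1000.0, 10000.0]
transformed = [yeo_johnson(p, lam=0.0) for p in payments]
print([round(t, 2) for t in transformed])
```

With λ = 0 the transform reduces to log(1 + x) for non-negative values, compressing the long right tail while preserving order; with λ = 1 it leaves values unchanged.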

Modeling

Upsampling

While using claims instead of providers offers a more balanced dataset, the 40-60 split still affects our model. To remedy this, I employed the Synthetic Minority Oversampling Technique (SMOTE), which creates new data for the minority class (claims from suspect providers) by placing new values ‘between’ two existing values in high-dimensional space. Two variants were also tested: SMOTE-NC, which handles a mixture of nominal and continuous data, and SMOTE-Tomek, which additionally removes Tomek links (pairs of opposite-class points that are each other's nearest neighbors), sharpening the boundary between the classes (Fig. 9).
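The core interpolation step of SMOTE can be sketched in plain Python as below. This is a simplified illustration on made-up 2-D points, not the implementation used in the project; in practice the `imbalanced-learn` package provides `SMOTE`, `SMOTENC`, and `SMOTETomek`.

```python
import random

def smote_sample(minority, k=2, n_new=3, seed=0):
    """Create n_new synthetic points between minority points and their neighbors."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbors of the base point (squared Euclidean).
        neighbors = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
        )[:k]
        neighbor = rng.choice(neighbors)
        gap = rng.random()  # position along the segment from base to neighbor
        synthetic.append(
            tuple(b + gap * (n - b) for b, n in zip(base, neighbor))
        )
    return synthetic

# Hypothetical minority-class points (e.g. two scaled claim features).
minority = [(1.0, 1.0), (2.0, 1.0), (1.0, 2.0), (2.0, 2.0)]
synthetic = smote_sample(minority, k=2, n_new=5, seed=0)
print(synthetic)
```

Every synthetic point lies on a line segment between two real minority points, so it never leaves the region the minority class already occupies. Resampling should be applied to the training split only, so that the test split keeps the original class balance.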

Model Selection

The upsampled data was then fed into the out-of-box Random Forest, Gradient Boost, XGBoost, and CatBoost models. The accuracy of each model was assessed. Across all models, those trained with SMOTE-Tomek performed better than SMOTE alone or the original data (Fig. 10). Between models, XGBoost and CatBoost performed better than Gradient Boost or Random Forest. Consequently, XGBoost and CatBoost were selected for hyperparameter tuning.

Hyperparameter Tuning and Analysis

XGBoost and CatBoost were tuned via Randomized Search with Cross-Validation using Recall as the scoring metric. Scoring by recall ‘pushes’ the model towards parameters that minimize false negatives (fraudulent claims that go undetected), even at the expense of overall accuracy. After tuning, CatBoost outperformed XGBoost, but both models show signs of overfitting (Fig. 11). On the Test split, the tuned CatBoost model achieved 78% accuracy and 61% recall (Table 1).

| Metric | Train Split | Test Split |
|---|---|---|
| Accuracy | 89% | 78% |
| Recall | 84% | 61% |
| Precision | 93% | 77% |
| F1-score | 88% | 68% |
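For reference, the four metrics in the table follow directly from confusion-matrix counts. The sketch below uses made-up counts (not the project's actual confusion matrix) and then checks that the reported F1-scores agree with the reported precision and recall.

```python
def classification_metrics(tp, fp, fn, tn):
    """Standard binary metrics, with the suspect class as the positive class."""
    recall = tp / (tp + fn)        # share of suspect claims that were caught
    precision = tp / (tp + fp)     # share of flagged claims that were suspect
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "recall": recall,
        "precision": precision,
        "f1": f1_score(precision, recall),
    }

def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

# Hypothetical counts, chosen only to illustrate the formulas.
toy = classification_metrics(tp=6, fp=2, fn=4, tn=8)
print({name: round(value, 2) for name, value in toy.items()})

# Consistency check against the precision and recall reported in the table.
print(round(f1_score(0.93, 0.84), 2))  # train split
print(round(f1_score(0.77, 0.61), 2))  # test split
```

Low recall corresponds to many false negatives (missed suspect claims), while low precision corresponds to many false positives (wrongly flagged claims).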

We can see this visually with a confusion matrix (Fig. 12), where nearly a third of claims from suspect providers are mislabeled as non-suspect (bottom left). However, very few claims from innocent providers are improperly flagged (top right). This means that while our model can appropriately classify non-suspect claims, it is under-identifying fraudulent claims.

Feature Importance

Instead of the standard feature importance, which is biased towards features with high cardinality and is more sensitive to outliers, permutation feature importance was implemented. Permutation importance iteratively shuffles a feature and examines the drop in score when the relationship between feature and target is disrupted.
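The shuffling procedure can be sketched in plain Python as follows. The toy model, data, and accuracy scorer are assumptions for illustration; in practice scikit-learn offers `sklearn.inspection.permutation_importance` for fitted estimators.

```python
import random

def accuracy(model, X, y):
    """Share of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)

def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Mean drop in accuracy when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances

# Hypothetical model that only looks at feature 0 and ignores feature 1.
def toy_model(row):
    return 1 if row[0] > 0.5 else 0

X = [[0.1, 5], [0.9, 3], [0.2, 8], [0.8, 1], [0.3, 7], [0.7, 2]]
y = [0, 1, 0, 1, 0, 1]

importances = permutation_importance(toy_model, X, y)
print(importances)
```

Shuffling the ignored feature never changes the predictions, so its importance is exactly zero, while shuffling the feature the model relies on lowers accuracy on average.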

In the tuned CatBoost model, we see that State and County have strong feature importance (Fig. 13). This is likely because each state/county contains a limited number of providers, so narrowing down to a single provider reveals information about all claims submitted by that provider (a form of data leakage). This will be remedied in subsequent models.

Several of the engineered features, such as chronic condition counts, code counts, cost per procedure code, and the number of patient visits to a provider, are among the top-ranked features. However, other engineered features such as the date discrepancy are rated low, likely due to the limited number of samples affected. Additionally, many Boolean features, such as the individual chronic condition flags, had low feature importance.

Aggregation

At this point, we have built a model which performs admirably at predicting the likelihood of fraud for any claim. This is where many presentations traditionally end. However, we were asked to predict whether a provider is suspected of fraud, not a given claim. One way we can do this is by using the predicted probability of fraud for each claim and mapping that back to the provider who originated the claim (Fig. 14).

Using an accumulating average, we can predict the fraudulence of each provider based on the fraud probability of all their submitted claims. While there is some gain in accuracy (Fig. 15), recall and precision suffer (Table 2) due to a high rate of false negatives and false positives.

| Metric | Test Split Aggregated Prediction |
|---|---|
| Accuracy | 87% |
| Recall | 43% |
| Precision | 38% |
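The accumulating average can be sketched as a running mean of claim-level fraud probabilities per provider. The provider IDs, probabilities, and the 0.5 decision threshold below are all assumptions for illustration.

```python
from collections import defaultdict

def running_provider_scores(claim_predictions):
    """Return (provider, running mean probability) after each claim.

    claim_predictions: (provider, fraud_probability) pairs in submission order.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    history = []
    for provider, prob in claim_predictions:
        sums[provider] += prob
        counts[provider] += 1
        history.append((provider, sums[provider] / counts[provider]))
    return history

def classify_providers(claim_predictions, threshold=0.5):
    """Flag a provider if its latest running mean reaches the threshold."""
    latest = dict(running_provider_scores(claim_predictions))
    return {provider: score >= threshold for provider, score in latest.items()}

# Hypothetical claim-level model outputs.
predictions = [("A", 0.9), ("B", 0.2), ("A", 0.7), ("B", 0.4), ("A", 0.8)]
history = running_provider_scores(predictions)
flags = classify_providers(predictions)
print(history)
print(flags)
```

Because the score is updated after every claim, a provider-level decision is available at any point in the year rather than only once all claims have been collected.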

Are All Claims Needed?

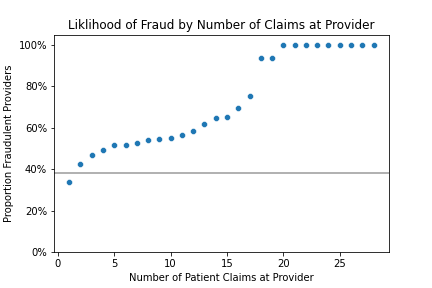

However, not all the claims submitted by providers are needed to make an accurate prediction regarding the provider. AUC rises from 74% with 1 claim to 84% with 25 claims (Fig. 16). The sweet spot appears to be up to 10 claims, which yields nearly identical scores compared to using all claims submitted across the year of our dataset.

While only 75% of providers submit more than 10 claims, 90% of suspect providers submit at least 10 claims. Therefore, we can make a fraud prediction after receiving 10 claims (which are on average submitted within 2 months), instead of waiting to accumulate a year’s worth of claims.

Finally, we want to examine what our model gets wrong. One way to do this is by examining what the distributions of claim predictions look like for innocent and suspect providers. Correctly classified providers have inverse-‘T’ and ‘T’ shaped distributions, respectively, while misclassified providers tend to have more ambiguous distributions (Fig. 17). This may be due to non-suspect claims being mixed with suspect claims, or because our model lacks a feature able to confidently discriminate these claims.

Conclusion

Fighting Medicare fraud is a constant battle, and this model is only one tool in our arsenal. We can predict individual claims with 78% accuracy and aggregate those predictions to classify providers with 87% accuracy (Table 2). This prediction holds up even using a limited number of claims, supplying us with multiple opportunities to identify and prosecute fraudulent providers.

One improvement to the model would be claim-specific labeling as opposed to provider-level labels. Furthermore, the diagnosis and procedure codes contain more information than we currently use, so building an NLP model on the code definitions might prove fruitful. Finally, using the claim predictions to inform a secondary model trained on aggregated predictions shows promise given the results of other projects.

This concludes not only my capstone project but also my time with New York City Data Science Academy. My thanks go out to Gabi Morales, Sam Audino, and the whole NYCDSA team for their support and guidance.