Utilizing Data to Predict Winners of Tennis Matches

The skills I demoed here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

For my capstone project, I built several machine learning models to predict the winners of matches on the professional Women's Tennis Association (WTA) Tour. I also constructed and deployed an elegant, simple-to-use Dash app that allows users to predict the winners of future WTA matches, display betting odds, and compare statistics between players. The question at the heart of this project, and at the heart of all sports predictions, is: What features must be considered to produce a consistently reliable model?

In the case of tennis, a simple, unscientific, and reasonably accurate method is to predict that the higher-ranked player will win every match--in other words, that there will be no upsets. In the data used for this project, this leads to a correct prediction 66.21% of the time. I considered achieving a predictive accuracy that exceeded this baseline of 66.21% a crucial measure of success, as a sophisticated machine-learning model should be able to outperform such a basic predictive method.
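As a minimal sketch of how this baseline can be computed, the function below assumes a pandas DataFrame with `winner_rank` and `loser_rank` columns (the naming used in Jeff Sackmann's match files), where a smaller number means a better ranking. Matches with a missing ranking are simply dropped here, which may differ from how the project handled them.

```python
import pandas as pd

def higher_rank_baseline(matches: pd.DataFrame) -> float:
    """Share of matches won by the better-ranked (numerically smaller rank) player."""
    # Rows with a missing ranking cannot be scored by this rule, so drop them.
    ranked = matches.dropna(subset=["winner_rank", "loser_rank"])
    return float((ranked["winner_rank"] < ranked["loser_rank"]).mean())

# Toy data (not the project's data): the favourite wins 2 of these 3 matches.
toy = pd.DataFrame({"winner_rank": [1, 15, 40], "loser_rank": [20, 3, 90]})
print(higher_rank_baseline(toy))
```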

Due to the nature of the baseline, a successful model would need to be adept at the difficult task of predicting upsets--that is, at correctly predicting when a lower-ranked player will win. Given the unpredictability inherent to all professional sports, I aimed for an improvement of 4% to 5% on the baseline.

Data and Feature Engineering

The datasets used for this project are made available by GitHub user JeffSackmann. These datasets contain detailed information for each match played on the WTA tour dating back to the 1970s, including the winner and loser of the match, the players' rankings, the court surface, the tournament level, date, and location, the score of the match, and serve statistics for the players in the match. I used data from only the past twenty years in my predictive models, as differences in racket technology and court surfaces prior to 2000 would likely bias the results.

The primary challenge that the data presented was the need to engineer features that were cumulative and chronological. I first arranged all matches in the dataset chronologically, and then engineered several new features that summarized aspects of a player's performance prior to their current match. The specific features I engineered were:

- Surface win percent: A player's win percent on the current match's court surface prior to the match.

- Level win percent: A player's win percent at the current match's tournament level prior to the match.

- Head-to-head: The number of matches won by the player against her current opponent prior to the match.

- Recent form: A player's overall win percent prior to the current match, plus a "penalty" of log10(1-(overall win %)+(last 6 months win %)).
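The key constraint behind these features is that each match may only draw on matches played before it. Below is a minimal sketch of that idea for the surface, level, and head-to-head features: it walks through the matches in date order and reads each running tally before updating it. The column names (`tourney_date`, `match_num`, `surface`, `tourney_level`, `winner_name`, `loser_name`) are assumed to follow Sackmann's CSV layout, the ordering of different tournaments that share a start date is arbitrary here, and returning 0.0 for a player with no prior matches on a surface or level is an assumption of the sketch.

```python
from collections import defaultdict
import pandas as pd

def add_prior_features(matches: pd.DataFrame) -> pd.DataFrame:
    """Add surface win %, level win % and head-to-head counts using only earlier matches."""
    df = matches.sort_values(["tourney_date", "match_num"]).reset_index(drop=True)
    wins = defaultdict(int)    # (player, key) -> wins before the current match
    played = defaultdict(int)  # (player, key) -> matches before the current match
    h2h = defaultdict(int)     # (player, opponent) -> prior wins over that opponent

    def pct(player, key):
        n = played[(player, key)]
        return wins[(player, key)] / n if n else 0.0  # no history -> 0.0 (assumption)

    rows = []
    for m in df.itertuples(index=False):
        w, l = m.winner_name, m.loser_name
        s_key, lv_key = ("surface", m.surface), ("level", m.tourney_level)
        # Record features first, so the current result never leaks into them.
        rows.append({
            "winner_surface_pct": pct(w, s_key), "loser_surface_pct": pct(l, s_key),
            "winner_level_pct": pct(w, lv_key), "loser_level_pct": pct(l, lv_key),
            "winner_h2h": h2h[(w, l)], "loser_h2h": h2h[(l, w)],
        })
        # Only now fold this match into the running tallies.
        for key in (s_key, lv_key):
            played[(w, key)] += 1
            played[(l, key)] += 1
            wins[(w, key)] += 1
        h2h[(w, l)] += 1
    return pd.concat([df, pd.DataFrame(rows)], axis=1)
```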

Background Information for Our Analysis

"Surface" refers to the material of which the court is made, and can take on four values: hard, grass, clay, and carpet. Different court surfaces favor different styles of play (defensive players often perform well on clay, for instance, while powerful, aggressive players excel on grass), so court surface is a crucial consideration when predicting the winner of a match. "Tournament level" refers to any of eight tiers of professional tournaments: Grand Slam, Premier, Premier Mandatory, WTA Finals, Olympics, Fed Cup, International, and Challenger.

Certain players, Serena Williams being a prime example, are known for performing exceptionally well at more prestigious events such as Grand Slams, while performing uncharacteristically poorly at smaller events like Premiers and Internationals. Thus, tournament level is also important to consider when making a prediction. "Recent form" quantifies whether a player is on a hot streak, in a slump, or playing close to her usual level. The added "penalty" increases a player's career win percent if she is playing better than average in recent months, and decreases it if she is playing worse than average in recent months.
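The recent-form formula can be written as a small function. This sketch assumes both win percents are fractions between 0 and 1 (the log term only behaves as described on that scale): when the six-month rate equals the career rate the argument is 1 and the penalty is 0, a better recent rate makes it positive, and a worse one makes it negative. The argument reaches 0 only for a career rate of 1 and a recent rate of 0; the sketch raises an error there, since how the project treated that edge case is not stated.

```python
import math

def recent_form(overall_pct: float, recent_pct: float) -> float:
    """Career win fraction plus log10(1 - overall + recent); inputs are fractions in [0, 1]."""
    arg = 1.0 - overall_pct + recent_pct
    if arg <= 0:  # only when overall_pct == 1 and recent_pct == 0, where log10 is undefined
        raise ValueError("recent form is undefined for overall=1, recent=0")
    return overall_pct + math.log10(arg)

print(recent_form(0.60, 0.70))  # hot streak: above 0.60
print(recent_form(0.60, 0.50))  # slump: below 0.60
```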

Zeros

Inherent to these new features was a flaw that needed to be addressed: these features contained a large number of zeros, which could bias the results of any predictive model. Zeros were particularly rampant in rows featuring players who had not played many matches in their careers, so, to combat this issue, I removed all observations containing a player who had played fewer than 100 matches up to that point. I also removed all matches that ended in a "retirement," that is, a player being forced to forfeit due to injury or illness.
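Both filters amount to a boolean mask in pandas. This sketch assumes hypothetical columns `winner_prior_matches` and `loser_prior_matches` holding each player's career match count before the current match, and a Sackmann-style `score` string in which retirements are marked with `RET`; both are assumptions about the data layout rather than the project's actual code.

```python
import pandas as pd

def drop_sparse_and_retirements(df: pd.DataFrame, min_prior: int = 100) -> pd.DataFrame:
    # Hypothetical columns: *_prior_matches = career matches played before this one.
    enough_history = (df["winner_prior_matches"] >= min_prior) & (
        df["loser_prior_matches"] >= min_prior
    )
    # Assumed convention: retirements carry "RET" in the score string.
    retired = df["score"].str.contains("RET", na=False)
    return df[enough_history & ~retired].reset_index(drop=True)
```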

Unfortunately, I found it necessary to remove all matches from the year 2020 from the data set, as player rankings have effectively been frozen due to COVID and may not reflect a player's "true" standing in the sport. For instance, Ashleigh Barty is currently ranked #1 in the world despite not having played a professional match for over 11 months due to safety concerns and stringent travel restrictions in her native Australia. This paring down of the data still left me with ample observations (over 18,000 matches) on which to base my predictive models.

It was also important to obscure the winner and the loser of each match (as this is what I aim to predict!). To this end, I changed all occurrences of the strings "winner" and "loser" among the feature names to "player_1" and "player_2," with player_1 referring to the player in each row whose name comes first alphabetically.
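One way to do this relabelling in pandas is sketched below. It assumes every `winner_<stat>` column has a matching `loser_<stat>` column and that names live in `winner_name`/`loser_name`; the target column `player_1_won` is a hypothetical name. Columns with other prefixes would need the same treatment.

```python
import pandas as pd

def to_player_columns(df: pd.DataFrame) -> pd.DataFrame:
    """Rename winner_*/loser_* to player_1_*/player_2_* (player_1 = alphabetically first
    name) and add a 0/1 target column 'player_1_won' (hypothetical name)."""
    winner_first = df["winner_name"] <= df["loser_name"]
    out = pd.DataFrame(index=df.index)
    stems = [c[len("winner_"):] for c in df.columns if c.startswith("winner_")]
    for stem in stems:
        w, l = df[f"winner_{stem}"], df[f"loser_{stem}"]
        # Series.where keeps the first value where the condition holds, else the other.
        out[f"player_1_{stem}"] = w.where(winner_first, l)
        out[f"player_2_{stem}"] = l.where(winner_first, w)
    # Match-level columns (surface, level, date, ...) are carried over unchanged.
    for c in df.columns:
        if not c.startswith(("winner_", "loser_")):
            out[c] = df[c]
    out["player_1_won"] = winner_first.astype(int)
    return out
```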

Missing Values

Finally, the data contained several missing values, particularly in the "player ranking" features, and this required some creativity to impute. I was able to impute many of these missing values by merging with a separate WTA Rankings data frame. However, many of these values were not missing at all--rather, the "missing" values were indications that the player was unranked at the time of the match, which can occur when a player has not played a match in over 12 months or has come out of retirement.
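A natural tool for this kind of merge is `pandas.merge_asof`, which attaches, for each match, the most recent ranking published on or before the match date. This is a sketch under assumed column names (`tourney_date`, `<side>_id`, `<side>_rank` in the match table; `ranking_date`, `player`, `rank` in the rankings table, with dates already parsed as datetimes), not necessarily the join the project used.

```python
import pandas as pd

def fill_rank_from_table(matches: pd.DataFrame, rankings: pd.DataFrame, side: str) -> pd.DataFrame:
    """Fill missing <side>_rank (side = 'winner' or 'loser') with the latest ranking
    published on or before the match date. Column names are assumptions."""
    left = matches.reset_index().sort_values("tourney_date")  # merge_asof needs sorted keys
    right = rankings.sort_values("ranking_date")[["ranking_date", "player", "rank"]]
    merged = pd.merge_asof(
        left, right,
        left_on="tourney_date", right_on="ranking_date",
        left_by=f"{side}_id", right_by="player",
        direction="backward",  # only rankings dated on or before the match
    )
    merged[f"{side}_rank"] = merged[f"{side}_rank"].fillna(merged["rank"])
    # Restore the original row order and columns.
    return merged.set_index("index").sort_index().rename_axis(None)[matches.columns]
```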

Using the WTA Rankings data frame, I located these players' rankings at the time of their last match before their extended layoffs, added 1 for each month of their layoff, and imputed the missing ranking with the result. The WTA Rankings data frame had some missing values of its own, and in this case, I imputed instead with players' rankings from the week before, as it is quite unusual for a player's ranking to vary greatly in the span of one week.
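Both imputation rules are short to express. In this sketch, "one month of layoff" is assumed to mean one calendar month between the last match and the current one, and the week-before fill is a per-player forward fill limited to a single row, which assumes the rankings table (with assumed columns `player`, `ranking_date`, `rank`) has one row per player per week.

```python
from datetime import date
import pandas as pd

def layoff_rank(last_rank: int, last_match: date, match_date: date) -> int:
    """Rank at the player's last match plus one per calendar month of layoff."""
    months = (match_date.year - last_match.year) * 12 + (match_date.month - last_match.month)
    return last_rank + max(months, 0)

def fill_from_previous_week(rankings: pd.DataFrame) -> pd.DataFrame:
    """Fill a missing weekly rank with the same player's rank one week earlier only."""
    out = rankings.sort_values(["player", "ranking_date"]).copy()
    out["rank"] = out.groupby("player")["rank"].ffill(limit=1)  # one row = one week
    return out

print(layoff_rank(50, date(2018, 3, 10), date(2019, 6, 1)))  # 15 months off -> 65
```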

Data Analysis and Results

Predictive Models

I tested predictive accuracy for a variety of machine learning algorithms: gradient boosting, random forest, logistic regression, Gaussian naive Bayes, and linear discriminant analysis. Before running my predictive models, I divided my data into an 80/20 train-test split. The train and test accuracies for each model are presented below.
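The comparison itself can be sketched with scikit-learn. This version uses a shuffled 80/20 split and default hyperparameters, both assumptions, and synthetic data from `make_classification` stands in for the engineered feature matrix, so the printed numbers say nothing about the tennis results.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB

def compare_models(X, y, seed=0):
    """Fit each classifier on an 80/20 split and return (train_acc, test_acc) per model."""
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=seed)
    models = {
        "gradient boosting": GradientBoostingClassifier(random_state=seed),
        "random forest": RandomForestClassifier(random_state=seed),
        "logistic regression": LogisticRegression(max_iter=1000),
        "gaussian naive bayes": GaussianNB(),
        "lda": LinearDiscriminantAnalysis(),
    }
    results = {}
    for name, model in models.items():
        model.fit(X_train, y_train)
        results[name] = (model.score(X_train, y_train), model.score(X_test, y_test))
    return results

# Synthetic stand-in data, for illustration only.
X, y = make_classification(n_samples=500, n_features=8, random_state=0)
results = compare_models(X, y)
for name, (train_acc, test_acc) in results.items():
    print(f"{name:22s} train={train_acc:.3f} test={test_acc:.3f}")
```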

Each machine learning model exceeded the benchmark of 66.21%, and the tree-based models in particular exceeded it by the margin of 4% to 5% that I hoped to achieve at the outset of this project. I wanted to examine the influence that my engineered features had on the predictions, so for the tree-based models, I calculated feature importances.

For both the random forest and gradient boosting classifiers, level and surface win percent, as well as recent form, factored heavily into the predictions, while my remaining engineered feature, head-to-head, did not appear to hold much sway in predicting winners.
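For tree ensembles in scikit-learn, these importances come from the fitted model's `feature_importances_` attribute (impurity-based and normalized to sum to 1). A minimal sketch of ranking them follows; the feature names are hypothetical labels for difference features and the data is synthetic.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier

def ranked_importances(model, feature_names):
    """Return a fitted tree ensemble's impurity-based importances, largest first."""
    return pd.Series(model.feature_importances_, index=feature_names).sort_values(ascending=False)

# Hypothetical feature names and synthetic data, for illustration only.
names = ["surface_pct_diff", "level_pct_diff", "recent_form_diff", "h2h_diff"]
X, y = make_classification(n_samples=400, n_features=4, n_informative=2,
                           n_redundant=0, random_state=1)
gbc = GradientBoostingClassifier(random_state=1).fit(X, y)
print(ranked_importances(gbc, names))
```

The same helper works unchanged for a fitted `RandomForestClassifier`.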

Gradient Boosting Classifier

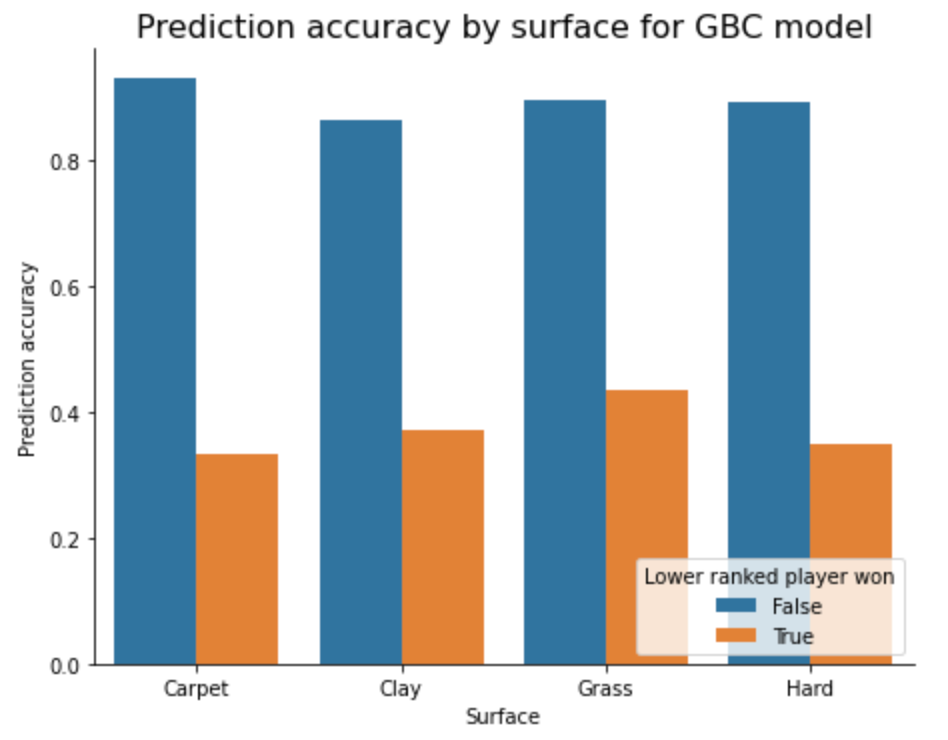

Returning to the sentiment expressed in the introduction--that a successful model must be able to predict upsets--I wanted to look more in-depth into the performance of the most accurate model, the gradient boosting classifier (GBC), in an effort to explain its strengths and its drawbacks. The following charts display the GBC's predictive accuracy for matches in which the higher-ranked player won (non-upsets) and for matches in which the lower-ranked player won (upsets) across each surface and tournament level.
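A breakdown like this can be computed with a single groupby. The sketch assumes a test-set DataFrame with `player_1_rank`, `player_2_rank`, a true 0/1 label `player_1_won`, the model's prediction in a hypothetical `pred` column, and a grouping column such as `surface` or `tourney_level`. A match counts as an upset when the worse-ranked player won; equal rankings are treated as if player_2 were the better-ranked player.

```python
import pandas as pd

def accuracy_by_upset(df: pd.DataFrame, group_col: str) -> pd.DataFrame:
    """Per-group accuracy, split into non-upsets and upsets (column names are assumptions)."""
    p1_better = df["player_1_rank"] < df["player_2_rank"]
    # Upset: the better-ranked player lost (p1 better but lost, or p1 worse but won).
    upset = p1_better != df["player_1_won"].astype(bool)
    outcome = upset.map({True: "upset_acc", False: "non_upset_acc"})
    correct = df["pred"] == df["player_1_won"]
    return (
        df.assign(outcome=outcome, correct=correct)
          .groupby([group_col, "outcome"])["correct"].mean()
          .unstack("outcome")
    )
```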

There is little variation in the model's success at predicting non-upsets across all surfaces and tournament levels. Highly noteworthy, however, is the model's ability to predict upsets at four particular tournament levels: Challenger, Olympics, WTA Finals, and Fed Cup. In fact, the overall predictive accuracy at these events was 77.42%, much higher than the 66.21% baseline.

Tournament Level Win Percents

I was curious as to what sets these particular tournaments apart, so I examined more closely the distributions of two particular variables: the difference between players' tournament level win percents and the difference between the logarithm of players' rankings.

For the four tournament levels in question, D, F, O, and C (corresponding to Fed Cup, WTA Finals, Olympics, and Challenger, respectively), the distributions are slightly right-skewed, while the others are more symmetrical. This indicates that tournament level win percent is a stronger predictor at these particular levels than it is on average. When looking at the difference between players' rankings at various tournament levels, we find a much smaller range at levels F, O, and C, in particular.
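The two quantities compared here can be summarized per level with pandas. This sketch assumes columns `tourney_level`, `player_1_level_pct`, `player_2_level_pct`, `player_1_rank`, and `player_2_rank`, uses the natural log (the base only rescales the range, and does not affect skewness), and reports sample skewness (positive means right-skewed) and the max-minus-min range; groups with fewer than three matches get a NaN skew.

```python
import numpy as np
import pandas as pd

def level_distribution_summary(df: pd.DataFrame) -> pd.DataFrame:
    """Skew of the level-win-% difference and range of the log-rank difference per level."""
    diffs = df.assign(
        level_pct_diff=df["player_1_level_pct"] - df["player_2_level_pct"],
        log_rank_diff=np.log(df["player_1_rank"]) - np.log(df["player_2_rank"]),
    )
    grouped = diffs.groupby("tourney_level")
    return pd.DataFrame({
        "level_pct_diff_skew": grouped["level_pct_diff"].skew(),
        "log_rank_diff_range": grouped["log_rank_diff"].max() - grouped["log_rank_diff"].min(),
    })
```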

Log of Players' Rankings

With less variation in player ranking at these levels, ranking becomes a less important feature, and other features, particularly tournament level win percent, "pick up the slack" in the predictive model. In short, the GBC performed best at tournaments where ranking mattered least. This led me to consider re-running my predictive models without including player ranking as a feature, but, strangely, upon attempting this, the train and test accuracies actually decreased for all models.

Data Results

Finally, I selected eight predictions made by the GBC about particular noteworthy matches from the past 20 years of professional women's tennis. Four of these matches, correctly predicted by the model, were won by the much lower-ranked player, and illustrate the ability of the model to weigh other important features, particularly recent form, surface win percent, and level win percent, against player ranking.

The four other matches illustrate a potential drawback of the model: all of these matches were won by the higher-ranked player, while the model incorrectly predicted an upset. In these cases, the lower-ranked player's past performance on the given court surface and at the given tournament level perhaps weighed too heavily in the prediction.

Dash App

Given the success of the predictive models, I was excited to translate my work into a Dash app that allows users to make predictions about future WTA matches.

Such a platform should display information that is meaningful for the purpose of sports betting or fantasy sports, so I included betting odds as part of the prediction, as well as a detailed visual comparison between player statistics. The logistic regression classifier was ideal for this purpose, as it is simple to extract the probability associated with each classification. While this model is not as successful as the tree-based models, its predictive accuracy exceeds the 66.21% baseline by over 3%, and thus it still provides meaningful insights to users of the app. Below is a picture of the user interface.
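In scikit-learn, those probabilities come from `predict_proba`, whose columns follow `classes_` (so with labels 0/1, column 1 is the probability that player_1 wins). The sketch below converts a probability into fair decimal and American odds with no bookmaker margin; which odds format the app actually shows is not stated, so this conversion, like the synthetic training data, is an assumption.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

def implied_odds(p):
    """Fair decimal and American odds for a win probability p (no bookmaker margin)."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    decimal = 1.0 / p
    # Favourites (p >= 0.5) get negative American odds, underdogs positive ones.
    american = -100.0 * p / (1.0 - p) if p >= 0.5 else 100.0 * (1.0 - p) / p
    return decimal, american

# Toy model on synthetic data, for illustration only.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = (X[:, 0] + 0.5 * rng.normal(size=200) > 0).astype(int)
clf = LogisticRegression().fit(X, y)
p1 = clf.predict_proba(X[:1])[0, 1]  # P(class 1) for the first row
print(p1, implied_odds(p1))
```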

The app allows the user to select two players, the court surface, and the tournament level. It then displays the predicted winner of a hypothetical next match between these players, as well as her odds of winning. The user can visually compare any one of five statistics: recent form, surface win percent, tournament level win percent, the head-to-head between the two selected players, and the rankings of the selected players.

As previously mentioned, the data on which the logistic regression model is based contains only matches in which both players have played at least 100 professional matches, so users are only able to select such players in the app.

My GitHub repository, which contains the code for the Dash app as well as a slide deck, is available here.