Web Scraping Zocdoc in New York and Analyzing Doctor Reviews

Introduction

Zocdoc is an online medical booking service that aims to make it easy to book doctors based on factors you select, such as your insurance, the language you speak, and your location. You can also make an informed choice by looking at each doctor's reviews, which include an overall rating, a bedside manner rating, and a wait time rating. After leaving my employer to start a bootcamp, I suddenly found myself without a partially subsidized, employer-sponsored health plan. Experiencing some of the pain points of being on a drastically different insurance plan sparked my curiosity about Zocdoc, since it hosts information about doctors across many cities.

Data Scraping and Cleaning

Using Scrapy with Splash (a JavaScript rendering service), I gathered information and reviews for all doctors in the New York area listed on Zocdoc. I ran into one main challenge with the site while scraping. I wanted to scrape every doctor category, but no matter which category you land on, the site never shows more than 10 pages of doctors, and the doctors on those pages rotate dynamically. To work around this, I had the scraper iterate through the maximum number of pages (10) for each doctor type.
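The page cap can be handled by generating every page URL up front. Below is a minimal sketch, assuming a hypothetical search URL template (the post does not show Zocdoc's real URLs), that builds one URL per doctor type and page. In an actual Scrapy spider, each URL would be yielded as a `SplashRequest` from the scrapy-splash package. Because results rotate, the same doctor can appear on several pages, so the sketch also drops duplicates using a hypothetical `profile_url` field.

```python
from itertools import product

# Zocdoc never showed more than 10 result pages for any doctor category.
MAX_PAGES = 10

# Hypothetical URL template: the real Zocdoc search URL structure is not given.
SEARCH_URL = "https://www.zocdoc.com/search?specialty={specialty}&page={page}"


def build_search_urls(specialties, max_pages=MAX_PAGES):
    """Return one search URL for every (specialty, page) pair up to the page cap."""
    return [
        SEARCH_URL.format(specialty=specialty, page=page)
        for specialty, page in product(specialties, range(1, max_pages + 1))
    ]


def dedupe_doctors(records, key="profile_url"):
    """Keep the first record seen for each doctor.

    Rotating result pages can repeat a doctor; `profile_url` is a
    hypothetical field assumed to uniquely identify a doctor.
    """
    seen = set()
    unique = []
    for record in records:
        if record[key] not in seen:
            seen.add(record[key])
            unique.append(record)
    return unique


urls = build_search_urls(["dermatologist", "dentist"])
print(len(urls))  # 2 specialties x 10 pages = 20
```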

Once the scraper finished, I cleaned and reorganized the data. First, I parsed the ratings, since the overall, bedside, and wait ratings were grouped together in a single string. I also assigned each doctor's office location to a borough based on its zip code. Finally, because there were over 100 different doctor types, I regrouped them into broader categories, reducing the list to fewer than 30 or so categories.
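The three cleaning steps can be sketched as small helper functions. This is an illustration under stated assumptions, not the code from the linked repository: the rating string format is hypothetical (the post only says the three ratings arrived as one string), the borough lookup uses a simplified three-digit zip prefix rule, and the category keyword map is a hypothetical example.

```python
import re

# Hypothetical format: the post only says the three ratings came as one string.
RATING_PATTERN = re.compile(
    r"Overall Rating\s*(\d+(?:\.\d+)?)"
    r".*?Bedside Manner\s*(\d+(?:\.\d+)?)"
    r".*?Wait Time\s*(\d+(?:\.\d+)?)"
)


def parse_ratings(raw):
    """Split a combined rating string into overall, bedside, and wait floats."""
    match = RATING_PATTERN.search(raw)
    if match is None:
        return None
    overall, bedside, wait = (float(value) for value in match.groups())
    return {"overall": overall, "bedside": bedside, "wait": wait}


# Simplified rule: the first three digits of a NYC zip code identify the borough
# for most addresses. Prefix 110 also covers parts of Nassau County, so it is
# left out; a full zip-code lookup table would be more accurate.
BOROUGH_BY_PREFIX = {
    "100": "Manhattan", "101": "Manhattan", "102": "Manhattan",
    "103": "Staten Island",
    "104": "Bronx",
    "112": "Brooklyn",
    "111": "Queens", "113": "Queens", "114": "Queens", "116": "Queens",
}


def zip_to_borough(zip_code):
    """Map a zip code (str or int) to a borough name, or "Other"."""
    return BOROUGH_BY_PREFIX.get(str(zip_code)[:3], "Other")


# Hypothetical keyword map from specific doctor types to broader categories.
CATEGORY_KEYWORDS = {
    "Dentist": ["dentist", "orthodontist", "endodontist"],
    "Surgeon": ["surgeon"],
    "Eye Doctor": ["ophthalmologist", "optometrist"],
}


def broad_category(doctor_type):
    """Return the first broad category whose keyword appears in the type."""
    lowered = doctor_type.lower()
    for category, keywords in CATEGORY_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return category
    return doctor_type  # keep types that no rule covers
```

For example, `parse_ratings("Overall Rating 4.5 Bedside Manner 5 Wait Time 3")` returns `{"overall": 4.5, "bedside": 5.0, "wait": 3.0}`. A keyword map keeps the regrouping easy to audit; with roughly 100 types, an explicit one-to-one dictionary would also work.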

To review my dataset and code, please see here.

Analysis

After cleaning the data, I began some exploratory analysis. I first looked at the languages spoken across the NY area: Spanish, Russian, and Chinese (Mandarin and Cantonese) were the top languages spoken in doctors' offices. The average number of languages spoken per office was 1.9, so the average office offers nearly one language in addition to English. Broken down by borough, Staten Island had the lowest average at 1.75, with at most 3 languages spoken in any one office. Brooklyn had the highest average at 2.24, with up to 5 languages spoken in a single office.
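The language summary boils down to a count and two group-by aggregates. Here is a minimal standard-library sketch, assuming each office record carries its borough and a list of languages that includes English; the three sample records are made up to show the shape of the data and are not Zocdoc results.

```python
from collections import Counter, defaultdict
from statistics import mean


def language_stats(offices):
    """Summarize languages from records like
    {"borough": "Brooklyn", "languages": ["English", "Spanish"]}.
    """
    # Count how many offices offer each non-English language.
    top_languages = Counter(
        lang
        for office in offices
        for lang in office["languages"]
        if lang != "English"
    )
    # Average number of languages (English included) per office.
    overall_avg = mean(len(office["languages"]) for office in offices)

    # Mean and maximum number of languages per office in each borough.
    counts_by_borough = defaultdict(list)
    for office in offices:
        counts_by_borough[office["borough"]].append(len(office["languages"]))
    by_borough = {
        borough: {"mean": mean(counts), "max": max(counts)}
        for borough, counts in counts_by_borough.items()
    }
    return top_languages, overall_avg, by_borough


# Made-up sample records, for illustration only.
offices = [
    {"borough": "Brooklyn", "languages": ["English", "Spanish", "Russian"]},
    {"borough": "Brooklyn", "languages": ["English"]},
    {"borough": "Staten Island", "languages": ["English", "Spanish"]},
]
top, avg, by_borough = language_stats(offices)
print(top.most_common(2), avg, by_borough["Brooklyn"])
```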

Next, I looked at doctor types by gender. Men far outnumbered women among surgeons, urgent care doctors, and ENT (ear, nose, and throat) doctors, while women dominated among OB-GYNs, dermatologists, and primary care doctors.

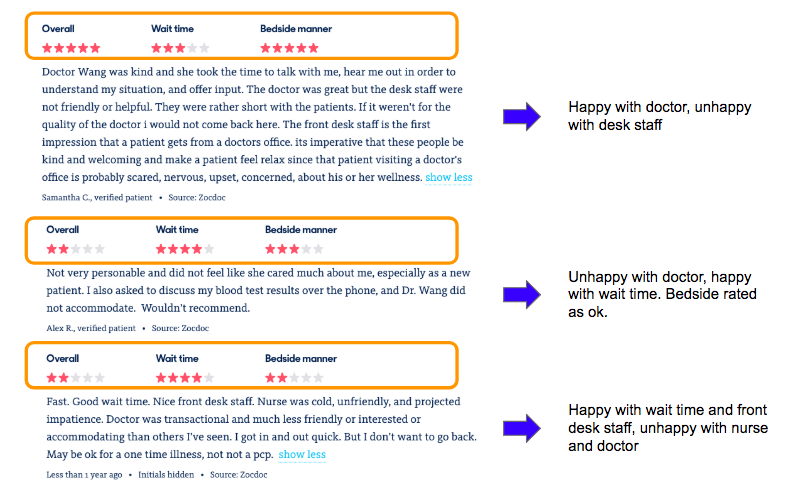
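The chart above compares men and women within each doctor category. A compact way to get the underlying numbers is the share of women per category; the sketch below assumes each scraped record has a `category` and a `gender` field recorded as "male" or "female" (both field names are hypothetical).

```python
from collections import Counter


def female_share_by_category(doctors):
    """Return the fraction of women in each category from records like
    {"category": "Dermatologist", "gender": "female"}.
    """
    counts = Counter((doc["category"], doc["gender"]) for doc in doctors)
    shares = {}
    for category in {category for category, _ in counts}:
        women = counts[(category, "female")]
        men = counts[(category, "male")]
        total = women + men
        if total:  # skip categories with no male/female records
            shares[category] = women / total
    return shares
```

Sorting the result, for example `sorted(shares.items(), key=lambda item: item[1])`, lists the most male-dominated categories first and the most female-dominated last.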

Finally, I took a deeper dive into the review data. After you meet with a doctor for an appointment, Zocdoc asks you to rate three aspects of the visit, as shown in the screenshot below:

Looking more closely at reviews for different doctors, it is clear that patients take every interaction in the office into account, not just their interaction with the doctor. A doctor's rating could therefore be dragged down by poor office staff, or conversely be raised by good staff even when patients are not particularly happy with the doctor's care itself.

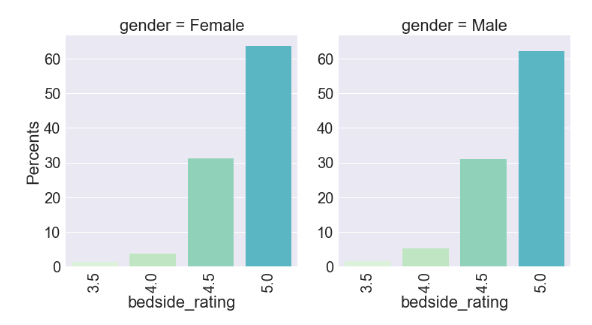

For each rating type, the distributions are similar for both genders and concentrated at the high end of the 1-to-5 scale. The one slightly different distribution is for wait ratings, where ratings for male doctors go as low as 2.5, which could suggest that offices run by male doctors are not always as timely.

Figures: overall, wait, and bedside rating distributions by gender

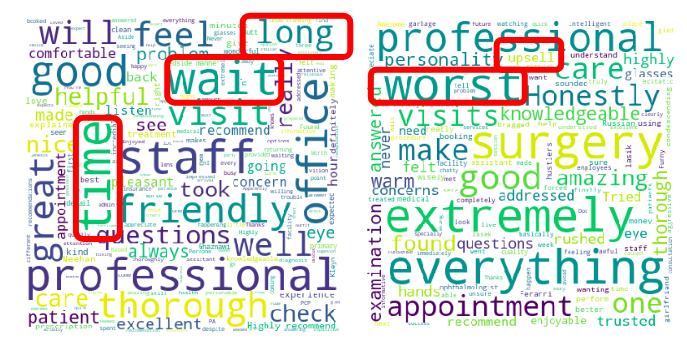
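Histograms make the overall shape clear, but a small numeric summary makes a low tail, such as the 2.5 minimum in wait ratings for male doctors, easy to spot. This sketch assumes each doctor record has a `gender` field and numeric `overall`, `bedside`, and `wait` fields (hypothetical names).

```python
from collections import defaultdict
from statistics import median


def summarize_ratings(doctors, rating="wait"):
    """Return the min, median, and max of one rating type for each gender."""
    by_gender = defaultdict(list)
    for doc in doctors:
        by_gender[doc["gender"]].append(doc[rating])
    return {
        gender: {"min": min(values), "median": median(values), "max": max(values)}
        for gender, values in by_gender.items()
    }
```

Calling it once per rating type (`"overall"`, `"bedside"`, `"wait"`) gives a table that mirrors the three distribution plots.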

I then created word clouds to compare the words that stood out in the best and worst ratings. Below is a word cloud for five-star overall ratings on the left and one for five-star bedside ratings on the right. Here, words like “professional”, “thorough”, “pleasant”, and “friendly” stand out.

In contrast, the word clouds below are for three-star ratings and show the words that stood out in lower ratings: wait ratings on the left and bedside ratings on the right. Unfortunately, there was not enough data to look at ratings below three stars. In the three-star reviews, words such as “time”, “wait”, and “long” stood out for wait ratings, while words such as “worst” and “upsell” stood out for bedside ratings. Despite these few negative words, the three-star reviews still contained mostly positive words.
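A word cloud sizes each word by how often it appears, so the core work is filtering reviews by star rating and counting words. Below is a minimal sketch of that step, assuming review records with a `text` field and per-type ratings (hypothetical names) and using a small illustrative stopword list. The resulting dictionary could then be passed to `WordCloud.generate_from_frequencies` from the `wordcloud` package to draw the image.

```python
import re
from collections import Counter

# Small illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {
    "the", "a", "an", "and", "to", "was", "is", "i", "of", "very",
    "my", "for", "in", "it", "he", "she", "dr",
}


def texts_for_rating(reviews, rating_type, stars):
    """Return review texts whose given rating type equals `stars`."""
    return [review["text"] for review in reviews if review[rating_type] == stars]


def word_frequencies(texts, top_n=20):
    """Count non-stopword tokens across review texts and keep the top_n."""
    tokens = (
        word
        for text in texts
        for word in re.findall(r"[a-z']+", text.lower())
        if word not in STOPWORDS
    )
    return dict(Counter(tokens).most_common(top_n))
```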

I used scikit-learn's TfidfVectorizer to collect the most relevant bigrams (two-word phrases) from the lower-rated reviews. The negative terms in these lists, such as “feel rushed”, “felt stressed”, and “feeling unsure”, point to things doctors should be aware of in order to improve their overall practice.

Three Star Wait Bigrams:

- great bedside

- feel comfortable

- feel rushed

- felt comfortable

- friendly helpful

- friendly professional

- friendly staff

- good experience

Three Star Bedside Bigrams:

- felt stressed

- felt rushed

- felt comfortable

- feeling unsure

- facility filled

- eye tell

- eye surgery

- wrong caused

Analyzing each doctor's reviews with tools like bigrams and word clouds is one way Zocdoc could provide value back to the doctors on its platform, so that they could adjust their practices if there are consistent pain points.

Next Steps

This analysis could be extended with further Natural Language Processing, such as sentiment analysis. Zocdoc allows doctors to pay for sponsorship, which persistently pushes them to the top of search results. However, I noticed that the review featured alongside a sponsored doctor does not seem to be vetted to make sure it is not negative. For example, in the screenshot below, the sponsored doctor has a high rating, but the featured review speaks negatively about the rest of the office.

Sentiment analysis could help avoid this type of issue by making sure that doctors who pay to be sponsored are also featured with one of their positive reviews.
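As a rough illustration of that idea, the sketch below scores each review and only features the most positive one. It uses a toy word-list (lexicon-based) sentiment score built from terms seen in the word clouds and bigrams above; this is a stand-in assumption, and a real system would more likely use a trained model or NLTK's VADER analyzer (`SentimentIntensityAnalyzer.polarity_scores`).

```python
import re

# Toy lexicon standing in for a real sentiment model (an assumption).
POSITIVE = {"great", "friendly", "professional", "thorough", "pleasant", "helpful", "comfortable"}
NEGATIVE = {"rude", "worst", "rushed", "long", "wrong", "upsell", "stressed", "unsure"}


def sentiment_score(text):
    """Return (#positive - #negative) / #matched words, in [-1, 1]; 0.0 if none match."""
    words = re.findall(r"[a-z]+", text.lower())
    pos = sum(word in POSITIVE for word in words)
    neg = sum(word in NEGATIVE for word in words)
    matched = pos + neg
    return 0.0 if matched == 0 else (pos - neg) / matched


def pick_featured_review(reviews):
    """Return the most positive review, or None if no review scores above zero."""
    scored = [(sentiment_score(review), review) for review in reviews]
    best_score, best_review = max(scored, key=lambda pair: pair[0], default=(0.0, None))
    return best_review if best_score > 0 else None
```

Returning `None` when nothing scores positively lets the site show no quote at all rather than a negative one.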