A Closer Look at Tree Boosting Methods

Introduction

In the book The Elements of Statistical Learning (ESL) the authors stated that, “Boosting is one of the most powerful learning ideas introduced in the last twenty years.” This statement was so captivating that we decided to do our capstone project on boosting and learn about the AdaBoost, Gradient Boosting (GB), and XGBoost methods.

This capstone project is unlike others in the NYDSA Bootcamp because it is research-oriented in nature. What we hoped to achieve from this was the ability to locate and understand relevant and reliable literature and be able to understand them. This is important because there are so many sources of information nowadays, ranging from blogs to online discussions, with varying degrees of reliability. Furthermore, modern learning models such as neural networks are becoming increasingly complex. So, it may not hurt to gain some technical exposure. Lastly, we hoped to learn how to explain technical information to a non-technical audience in our final presentation and in this blog.

Boosting is a learning approach that was initially developed over twenty years ago for classification problems. The publication years for the models we consider were 1996 for AdaBoost (Freund and Schapire), 2001 for GB (Friedman), and 2016 for XGBoost (Chen and Guestrin). Boosting turns out to be a general purpose method that can use models other than trees. But what is amazing, as we shall see, is the basic idea behind boosting with AdaBoost, which was to combine a sequence of so-called “weak” learners to create a much better prediction model. This was an innovative idea when it was first introduced in the scientific literature in 1996.

XGBoost appears to be the most popular choice, as it is used in many machine learning challenges. We set out to find the answers to the following questions: Why is XGBoost so popular? How is it different from AdaBoost and GB? What is boosting? Is boosting used exclusively for decision and classification trees? How do we use these boosting models? In this blog post, we will provide answers to some of these questions.

Our blog is organized as follows: First, we illustrate the fundamentals of boosting using AdaBoost.M1 (Freund and Schapire, 1997) in a binary classification task. Second, we describe the basic ideas behind GB and XGBoost. The background knowledge for these two models are somewhat steep so we will focus on an “in a nutshell” type of discussion. Third, we discuss model parameters. We will not provide a step-by-step recipe but outline a general strategy based on what we learned from our research. Finally, we present some results from an analysis of a toxic comments dataset from Kaggle. Our primary aim in the data analysis is to compare the performance of the three boosting models.

What is Boosting?

The main idea behind boosting in AdaBoost is to combine “weak” classifiers to achieve a much better classification performance. A “weak” classifier is defined as a learning algorithm whose error rate is only slightly better than random guessing. In binary classification, the “weak” classifier is better than tossing a fair coin. A model example is a two terminal node decision tree called a “stump”.

In AdaBoost, a weak classifier is sequentially applied to a dataset. In each iteration, the data is modified according to whether observations are correctly classified or not. Observations that are incorrectly classified are assigned higher weights than in the previous classification whereas those that are correctly classified have their weights reduced. This allows previously misclassified observations to have a higher chance of being classified correctly in the next iteration. Initially, the observations are assigned weights of 1/N, where N is the number of observations in the training set.

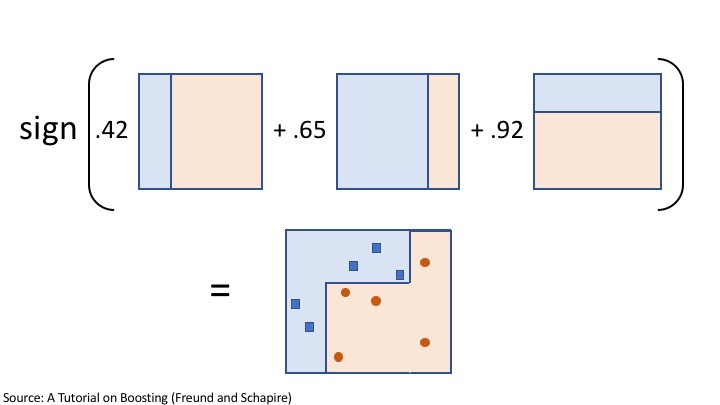

Suppose that the binary outcomes are {-1, 1} and that there are M sequentially produced weak classifiers by AdaBoost. A final prediction can then be obtained by applying the sign function (sign(x) = -1 if x<0, 0 if x =0, and 1 if x>0) on weighted predictions of the M weak classifiers. These weights are determined by the boosting algorithm and are distinct from the weights that are used to modify the data. Higher weights are assigned to the more accurate classifiers which results in a weighted majority vote.

The AdaBoost algorithm process just described is illustrated in the figure below. This illustration was adapted from a toy example in “A Tutorial on Boosting” by Freund and Schapire. We suppose that there are M=3 weak classifiers. After a classifier is applied to the data, the misclassification error and classifier weights are calculated. From these two items, the weights for modifying the data can be calculated and consequently applied to the data. Note that in the figure, the data that are misclassified and hence upweighted are slightly enlarged. The correctly classified data remain the same size in the figure although these should really have reduced weights and sizes. At each iteration, the weights sum up to one.

The sign function applied to the weighted sum of the weak classifiers produces a classifier that is more complex and captures a good decision boundary for classifying the data. We encourage you to determine how this final classifier was obtained by working out the arithmetic inside the sign function in the second figure for each data point.

Surprisingly, a combination of stumps produces a very good classifier. ESL provides a simulated data example (10.2) which we reproduce below using codes from the Scikit-Learn website. Random samples from independent standard Gaussian variables are generated to produce 10 features. The classes are determined based on whether the sum of the squares of the 10 features is larger than 9.34, the median of the chi-square random variable with 10 degrees of freedom. Recall that the sum of the squares of p independent standard normal random variables is chi-square with p degrees of freedom. The simulated data has 2,000 training observations with approximately 1,000 cases in each class and 10,000 test observations. We train and test a stump, a nine-node decision tree, and a boosted classifier of 400 decision tree stumps. The figure below presents a visualization of the simulated data points using the first two principal components. We can see roughly two sets of data points - one set inside (dark color) and another set outside (yellow color) the cloud of points.

The figure below presents the classifier error rate versus the number of boosting iterations (or weak classifiers) of the three classifiers. The green horizontal line on the top corresponds to an error rate of approximately 46% when using only a single stump as classifier. A decision tree with a depth of 9 has an error rate of approximately 31% (orange horizontal line). When boosting stumps (blue lines), the error rates dramatically decrease with the number of iterations, achieving approximately zero training error at around 250 iterations. Note that beyond this, we expect our model to become more complex and hence overfit. Note also that the test error appears to continue decreasing, which suggests that there is no overfitting. This surprising result was empirically observed for boosting, and it contradicts the conventional bias-variance trade-off. But this is not always the case, and there are examples of boosting leading to overfitting.

Gradient Boosting and XGBoost (in a nutshell)

AdaBoost has been actively studied for several years after it first appeared in the literature. It was not until after a few years later that it was discovered to fit an exponential loss function under a general framework called forward stagewise additive modeling (FSAM). We shall omit the discussion about FSAM and describe GB and XGBoost only in the general sense.

A boosted tree model is a function that is formed by a sum of tree models. Basically you initialize a tree and fit it to your data. You then keep adding more trees to it until you obtain a more accurate model. Each added tree is supposed to lead to an improvement of the previous tree-accumulating model, that is, a better fit in the sense of minimizing the loss function. You may recognize this idea of accumulation that leads to an improvement from a numerical optimization procedure called gradient descent used in neural networks. In gradient descent, you initialize the model parameters and move in “steps” that should lead you to the minimum of the loss function. The accumulated “steps” will give you the parameters that yield losses that are closer to the minimum of the loss function. However, whereas in gradient descent we are searching within the parameter space, in gradient boosting we are searching within a “function space.” Thus, GB can be regarded as gradient descent in the function space (ESL; Nielsen, 2016).

The “step” that we just described consists of a gradient which determines the direction of maximal descent. In GB, a tree is fit to the negative gradient using least squares. This will yield an optimal tree partition. Then the weights or values assigned to the partitions are determined via an optimization procedure. Thus for GB, optimization involves finding an optimal tree partition for the fitted tree and then finding the optimal weights for each partition separately. XGBoost also approximates the negative gradient, but the gradient is weighted by the hessian or second order partial derivative of the loss function and the fit is performed using weighted (by the hessian) least squares (Nielsen, 2016). What this ultimately means for XGBoost is that the optimization for the tree partitions and the weights are done simultaneously instead of separately as in GB. This may be a more efficient optimization procedure and could lead to better estimates. We also suspect that this leads to faster computation.

Whew! That was plenty of technical stuff. But we can actually derive a simple result from this. For regression problems that use the squared error loss function, the negative gradient is simply the ordinary residual. Thus, in GB, the boosted tree model is obtained by fitting a tree on the residual from the tree model in the previous iteration. In other words, for squared error loss in regression, GB combines trees that are fit sequentially on the residuals.

GB is a general model because it can accomodate any loss function. When using an exponential loss for binary classification, GB will yield AdaBoost. Note that in Python, GB uses deviance as the default loss function. However, these two loss functions are actually very similar (ESL); so we should expect AdaBoost and GB to yield very similar results.

Model Parameters

We briefly discuss selected model parameters in this section. We will not attempt to provide a specific prescription of how to tune these parameters. Rather, we summarize suggestions or recommendations that we found in the literature and recommend a “to start with” strategy.

The selected parameters are shown in the table below. They are categorized into three. The first three are the main parameters and they are common to all three models. The remaining parameters are grouped according to their purposes, to induce randomness (in yellow) and provide regularization (green).

Note that AdaBoost can be implemented in Python using the AdaBoostClassifier which includes the three main parameters. However, AdaBoost can also be implemented using the GradientBoostingClassifier with an exponential loss as the loss function (the default is deviance). Using this implementation allows AdaBoost to also utilize the additional parameters, subsample and max_features.

(a) Main Parameters

Max_depth controls the depth of the tree. The deeper the tree is, the more complexity is introduced and this will not only increase the variance of the estimator but also decrease the computational speed of the algorithm. Note that adding just one level doubles the complexity of the tree. A recommendation by ESL is to use tree depths of between 4 and 8 inclusive in practice.

The learning_rate controls the contribution of each tree that is added to the ensemble. It can be regarded as controlling the learning rate of the boosting procedure.

N_estimators is the number of boosting iterations (or the number of trees in the boosted model) and it should be set depending on the value for the learning_rate. Low learning rates typically need more iterations and vice-versa. Note that more iterations translates to slower computational speed. ESL states that the best strategy might be to set learning_rate low (<0.1) and then choose n_estimators by early stopping.

When tuning, we found it reasonable to start first with these three main model parameters. We also found it helpful to create a plot of the training and test error rates (for classification) versus the number of iterations (n_estimators). The following plot presents an example showing the results from the simulated data we saw earlier with the boosted stumps for AdaBoost and learning_rate=1.

The figure also includes the error rates in red when using a tree with max_depth=3 instead of a stump. For this more complex tree model, the training error decreases much faster but the test error is worse than the stump’s. It appears that the stump will yield the better estimator for the simulated data. This should come as no surprise because the data was created in an additive fashion with no interaction. In general, stumps capture additive main effects while deeper trees can capture more complex interactions.

We found it useful to plot the learning curves above instead of doing a grid search where we select only a few values for n_estimators e.g. 100, 200, 400, and 1000. A problem with this approach is that the learning curves are not always continually decreasing. In the figure above, it seems that there is a minimum test error rate when n_estimators = 50 which will be missed by our grid search example.

(b) Other Parameters

The parameters that induce randomness are subsample, max_features (in GB) or colsample_bylevel (in XGBoost), and colsample_bytree. When tuning these parameters, only a portion of the data will be used for training as described below. This strategy allows the model to generalize better.

To subsample is to use a portion of the observations or examples for training e.g. 30%. This could produce more accurate models and can also reduce the computing time by roughly the same fraction as the subsample. Note, however, that based on simulations, a subsample can be harmful when learning_rate = 1 (no shrinkage) (ESL).

Max_features and colsample_bylevel is the same strategy used in random forests. Tuning these parameters entails using only a portion of the features or predictors when constructing each tree level in an iteration. Colsample_bytree means that you use only a portion of the features or predictors when constructing a tree in each iteration. Thus, when using both colsample_bylevel and colsample_bytree, the portion of features or predictors when constructing each tree level can be considerably reduced, which may improve computational speed.

The last two parameters, reg_alpha and reg_lambda, perform L1 and L2 regularization of the leaf weights, respectively. Recall that the weights are the function values assigned to the tree partitions. These parameters shrink the leaf weights in an effort to combat variance.

When tuning these other parameters, you may want to first study the effects of each parameter on the learning curves. For example, you can study what happens to the learning curves when subsampling by 0.3, 0.5, and 1. Then you can study what happens when you vary colsample_bylevel. By doing this you will, hopefully, gain some insights as to how the learning curve changes when tuning these parameters. From there, we hope that you can then formulate a grid search or other strategies to find the optimal model parameters.

Toxic Comments Classification

We explore the performance of the three boosting methods using a toxic comments dataset from Kaggle. The task is to build a model that’s capable of classifying comments as toxic, obscene, insult, severe toxic, identity hate, or threat. The dataset contains 159,571 comments from Wikipedia’s talk page edits. The following figure presents some useful information about the data.

The left side of the figure shows that this classification task is not a multi-class but a multi-label problem. In a multi-class problem, each example or observation is assigned to only one class or label. In a multi-label problem, each example or observation can have more than one classes. For example, in the third row of the table above, there are 3,800 comments that can be classified as toxic, insult, and obscene at the same time. The right side of the figure presents the dataset as unbalanced in terms of the labels frequency. Whereas toxic, obscene, and insult are at least 5% frequent, the remaining labels are at most 1% frequent.

The figure above presents the workflow we adopted. For multi-label modeling, we identified at least three approaches in the literature: one versus rest, chain classifier, and power set. For our analysis, we chose to implement one vs rest. This strategy treats each label as an unique target and converts the task into binary classification. As there are six labels, the final model will be a collection of six binary classifiers.

For natural language processing (NLP), we implemented stemming and Tf-Idf. Stemming is a process that chops off the ends of the words but keeps its basic meaning. Tf-Idf is a numerical statistic that is intended to reflect how important a word is to a text.

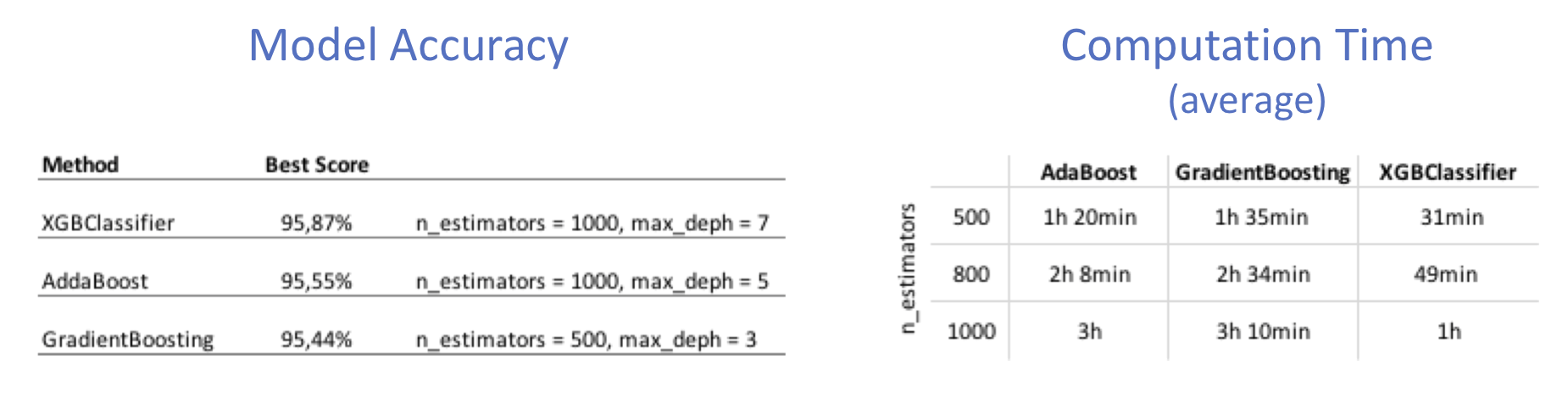

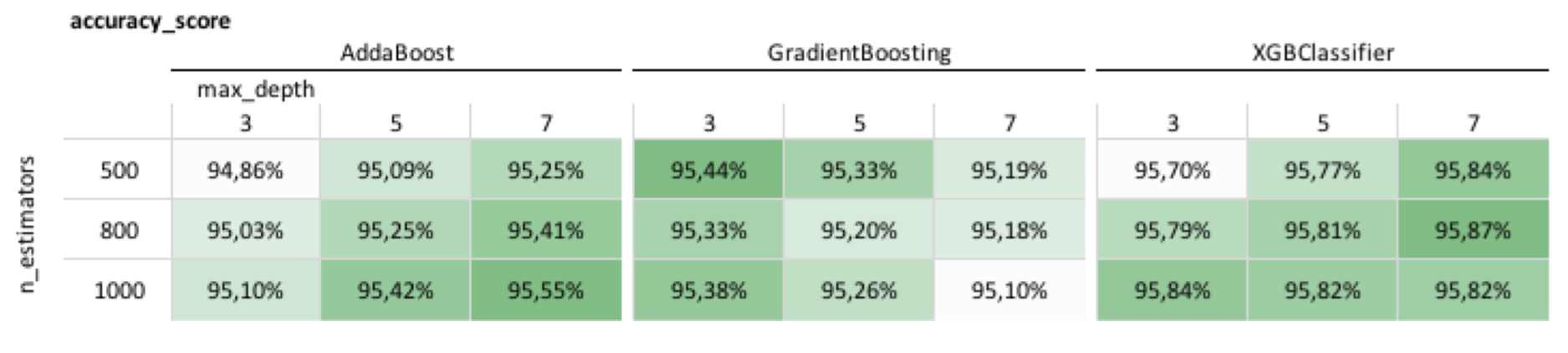

We compare AdaBoost, GB, and XGBoost in terms of test accuracy and computation time. We present only results for the toxic label in the following table.

The performances of AdaBoost and XGBoost appear to get better with increasing model "complexity." By complexity here we mean deeper trees (larger values of max_depth) or more iterations (larger values of n_estimators). The performance of GB appears to get worse with increasing "complexity." The table below summarizes the best test accuracies from the previous table and the computation time for all the calculations in the previous table. The test accuracies are almost the same for all three models but they differ considerably in computational time. The computational time for XGBoost is approximately one-third that of either ADaBoost or GB.

Other Datasets

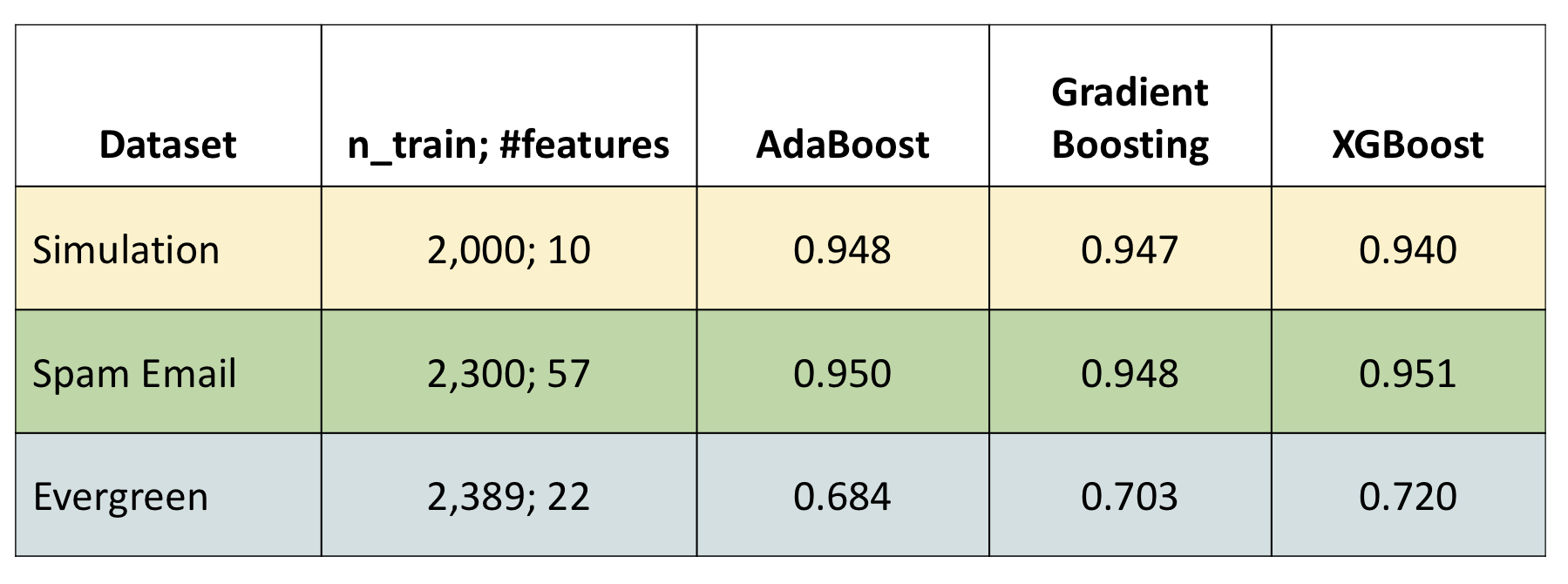

We also compared the three models on three other datasets: the simulated data from ESL, spam email, and Evergreen classification from Kaggle. For the Evergreen dataset, we excluded all observations with at least one missing feature for the time being which reduced the sample size by about a half. Note that our aim is to compare the performances of the three models and not necessarily to obtain the best predictive performance. Note also that the best performance in Kaggle for this dataset was a test accuracy of around 89%.

The results for the simulated and spam email data were very similar across all models. We found differences only for the Evergreen data where there was increasing prediction accuracy from AdaBoost to GB and to XGBoost. The improved performance in XGBoost resulted from tuning the regularization parameters reg_alpha and reg_lambda, and using a tree of max_depth=15.

Conclusion and Further Work

In this capstone project, we learned about the basic ideas that led to the development of the earliest boosting model AdaBoost and how well it performs. We also attempted to describe the general ideas behind GB and XGBoost and learned that GB uses a first order numerical approximation while XGBoost uses a second order approximation. We suspect that this optimization procedure by XGBoost could potentially lead to better results and may be responsible for its efficient computation. We also described the model parameters and learned that XGBoost has more tuning parameters than GB which makes it a more flexible model. However, this also makes tuning the parameters a more difficult process. We outlined some strategies for parameter tuning in this blog post, but this is an area where we need to do more research on.

Although we have not seen clear predictive advantages of XGBoost compared to GB on the datasets that we considered, we suspect that there may be other datasets where XGBoost could potentially beat GB. For example, there may be a dataset with many features in which the model parameter colsample_bytree can be used to sample the features and possibly lead to better predictive performance. We have already seen how tuning reg_alpha and reg_lambda can lead to better performance for the Evergreen dataset, but we would like to see results on other datasets to confirm this advantage.

For the toxic comments dataset, we may consider exploring other multi-label approaches, such as chain classifier and power set, and also other NLP techniques that might help improve model performance such as lemmatization.

References

Our primary references are the following:

- The Elements of Statistical Learning (2017) by Hastie, Tibshirani, and Friedman

- Tree Boosting With XGBoost - Why Does XGBoost Win "Every" Machine Learning Competition? (2016) by Didrik Nielsen

- A Tutorial on Boosting by Freund and Schapire

The first reference includes an entire chapter on boosting but does not include XGBoost. The discussion on GB is technical and statistical and requires some knowledge of basis functions and additive models which are discussed in other parts of the book. The second reference is a very well written master's thesis. It is quite thorough in its discussion of trees and tree boosting, and it makes a comparison of GB and XGBoost. It also goes on further discussion on the advantages of XGBoost. This is one of the best references we found on tree boosting; it is a very good supplement to the original XGBoost paper by Chen and Guestrin (2016). The third listed reference describes the basic ideas of ADaBoost. It was the basis of our discussion of boosting and AdaBoost.

Photo Source: https://stocksnap.io