Sephora Product Success: Capstone and Final Project

Project GitHub | LinkedIn: Niki Moritz Hao-Wei Matthew Oren

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Sephora is to beauty what Home Depot is to tools or Staples is to office supplies: one of the largest beauty retailers in the United States. It carries a wide range of makeup, skincare, haircare, fragrance, and much more. For my final project, I conducted a visual analysis and several machine learning experiments on data obtained from Sephora.com. In total, I collected information on over 7,400 individual products. My overall goal was to predict a product's star rating and recommendation rate.

Below is a screenshot of an example product page. The main variables of interest are in yellow:

Scraping and Variable Explanation

To gather all the variables (product name, brand, loves, category, reviews, star rating, price, etc.), I scraped Sephora.com using Selenium and Python. I reused some scraping code from Gurminder Kaur, a former NYC Data Science Academy student who had previously scraped Sephora. To reach every product, I had to navigate through two levels of pages: the list of brands, and then each brand's product page.
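The two-level navigation can be sketched roughly as follows. This is an illustration only, not the scraper actually used: the URL and CSS selectors passed in are hypothetical placeholders, and applying the 60-products-per-brand cap at this step is an assumption. The function accepts any object that exposes Selenium's `get` and `find_elements` methods.

```python
def collect_product_links(driver, brand_list_url, brand_css, product_css,
                          max_per_brand=60):
    """Visit the brand list, then each brand page, and gather product URLs.

    `driver` is expected to behave like a Selenium WebDriver.
    The URL and both CSS selectors are hypothetical placeholders.
    """
    # "css selector" is the string value of Selenium's By.CSS_SELECTOR.
    by_css = "css selector"

    # Level 1: the list of brands. Copy the hrefs out before navigating away,
    # because element handles go stale once the page changes.
    driver.get(brand_list_url)
    brand_urls = [a.get_attribute("href")
                  for a in driver.find_elements(by_css, brand_css)]

    # Level 2: each brand's product page.
    products_by_brand = {}
    for brand_url in brand_urls:
        driver.get(brand_url)
        links = [a.get_attribute("href")
                 for a in driver.find_elements(by_css, product_css)]
        products_by_brand[brand_url] = links[:max_per_brand]
    return products_by_brand
```

With Selenium installed, `driver` would typically be something like `webdriver.Chrome()`. A real page would also need waits and scrolling for lazily loaded products, which this sketch leaves out.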

From the 'Details' section, I was able to pull the following characteristics of products sold by Sephora:

- Skin Type: a callout used when a product is made for a specific skin type: Oily, Combination, Dry, or Normal.

- Research Results: a callout used when a product has undergone clinical research and can state results such as "88% visibly smoother skin".

- Dermatologist Tested: a callout used when the product has been tested by a dermatologist.

- Formulated Without: a callout used when a product does not contain specific ingredients that consumers find undesirable. Examples include parabens, phthalates, oil, alcohol, silicone, and nuts.

- Vegan: a callout used when the product is not tested on animals or contains no animal-derived ingredients.

- Clean at Sephora: a label used when a product excludes a range of specific ingredients.

After the 'Details' section is the 'Ratings and Reviews' section, which lists the number of reviews at each star level and the average star rating for the majority of products. However, only about 20% of products list a recommendation rate. Brand-new products, as well as best-selling products in all categories, are missing it, for reasons unknown to me.

See below for what the recommendation rate looks like on the product page:

Visual Analysis:

As a frequent shopper of Sephora.com, I had some initial questions in mind:

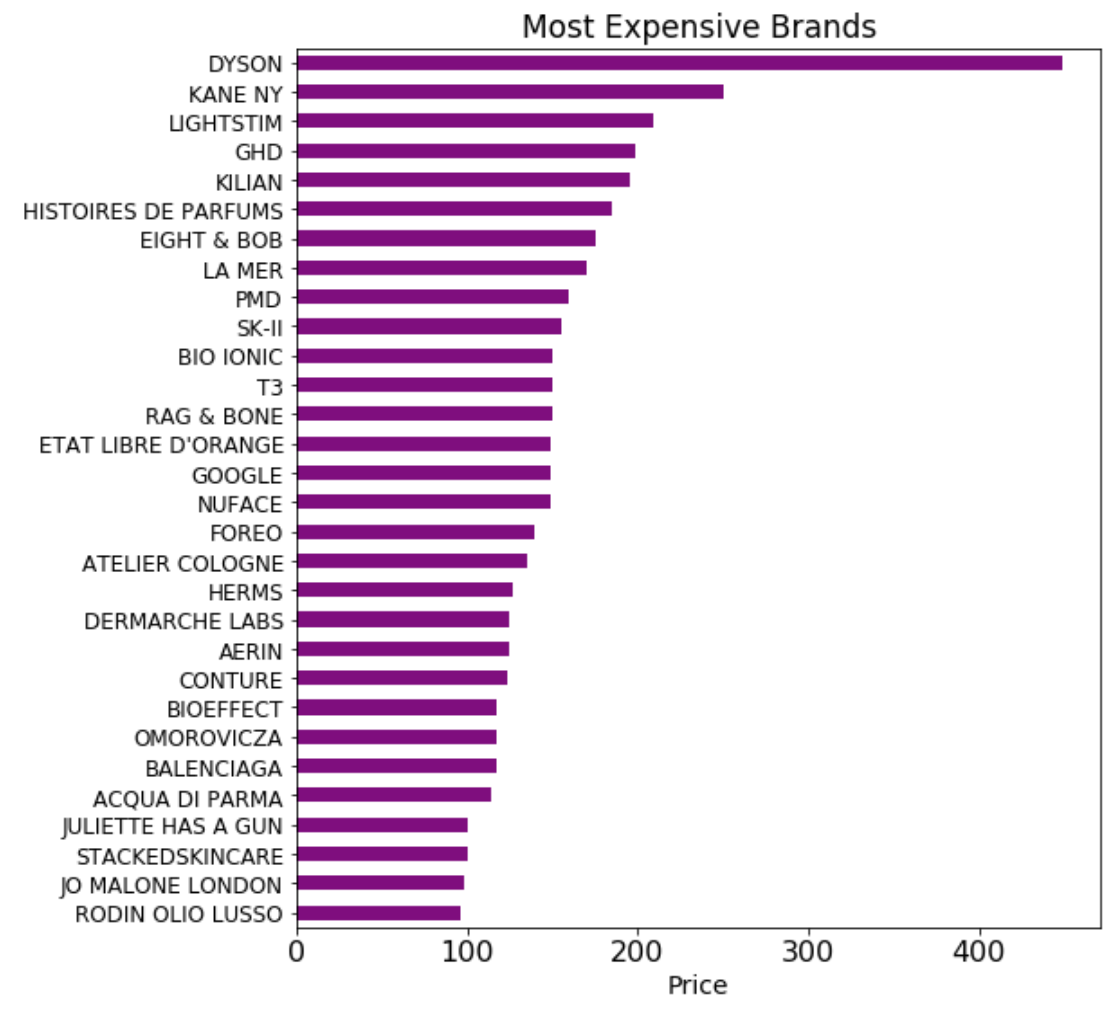

Which brands are the most expensive?

Note: A maximum of 60 products per brand was collected

To start off my analysis, I plotted the 30 most expensive brands by median price. In total, 316 different brands were scraped. Most of the brands in this expensive range top out below $200, with Dyson a big step ahead at a median over $400. Most brands in this graph seem to be in the fragrance and skincare categories. However, Google was a shock. Sephora offers the Google Home; perhaps the company is venturing into new territory?
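The ranking behind this plot can be sketched as below. The `brand` and `price` field names and the sample rows are made up for illustration; the median is used, as in the plot, because it is less sensitive than the mean to a single very expensive product.

```python
from collections import defaultdict
from statistics import median

def top_brands_by_median_price(products, n=30):
    """Return the n brands with the highest median price, highest first."""
    prices = defaultdict(list)
    for p in products:
        prices[p["brand"]].append(p["price"])
    medians = {brand: median(vals) for brand, vals in prices.items()}
    return sorted(medians.items(), key=lambda kv: kv[1], reverse=True)[:n]

# Made-up rows for illustration only (not scraped data).
sample = [
    {"brand": "A", "price": 10.0},
    {"brand": "A", "price": 30.0},
    {"brand": "B", "price": 100.0},
    {"brand": "B", "price": 300.0},
    {"brand": "B", "price": 500.0},
    {"brand": "C", "price": 25.0},
]
print(top_brands_by_median_price(sample, n=2))  # [('B', 300.0), ('C', 25.0)]
```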

Which star rating is the most common?

Note: The star rating percentage is the number of ratings at each star level, summed over all products, divided by the total number of star ratings.
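One way to read that note is as a pooled share per star level. The sketch below follows that reading; the per-product dictionaries of rating counts are an assumed data layout, and the numbers are invented.

```python
def star_rating_shares(products):
    """Share of all ratings at each star level (1-5), pooled over products."""
    totals = {star: 0 for star in range(1, 6)}
    for counts in products:
        for star, n in counts.items():
            totals[star] += n
    grand_total = sum(totals.values())
    return {star: totals[star] / grand_total for star in totals}

# Made-up per-product rating counts, keyed by star level.
sample_counts = [
    {5: 60, 4: 20, 3: 10, 2: 5, 1: 5},
    {5: 40, 4: 30, 3: 20, 2: 5, 1: 5},
]
shares = star_rating_shares(sample_counts)
print(shares[5], shares[4])  # 0.5 0.25
```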

The large majority of ratings are 5 or 4 stars. I cannot imagine a product with an average rating of 1 or 2 stars staying around in the beauty market for long. This concentration at the top of the scale most likely contributed to the low RMSE of my star rating machine learning model.
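For readers unfamiliar with the metric, RMSE (root mean squared error) over $n$ products with actual ratings $y_i$ and predicted ratings $\hat{y}_i$ is defined as below. The symbols are introduced here for illustration only.

$$
\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}
$$

One consequence: a model that always predicts the mean rating has an RMSE equal to the standard deviation of the ratings, so a tightly clustered target gives a small RMSE almost by construction.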

How are product loves (e.g., 80k) and recommendation rates correlated with features like average star rating and number of reviews?

Note: I modified the data before visualization to remove missingness and outliers.

Looking at the correlations between the number of reviews, loves, ratings, and recommendation rates, we can draw the following conclusions (mainly about loves and recommendation rates, because reviews and ratings are fairly self-explanatory):

- Recommendation Rate and Number of Reviews have a slight positive correlation. As the number of reviews increases, the recommendation rate tends to increase.

- Number of Loves and Average Rating have a positive correlation. As the average rating increases, the number of loves tends to increase.

- Number of Loves and Recommendation Rate have a slight negative correlation. As the number of loves increases, the recommendation rate tends to decrease.

- This might be because recommendation rates are high for newer products, which have fewer loves and reviews.
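The relationships in the list above can be quantified with a correlation coefficient. The sketch below assumes Pearson correlation (the write-up does not say which coefficient was used) and runs on invented numbers, not the scraped data.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Made-up values for illustration only.
loves = [1000, 5000, 20000, 80000]
rec_rate = [0.95, 0.93, 0.90, 0.88]
r = pearson(loves, rec_rate)
print(r < 0)  # True: more loves goes with a lower recommendation rate here
```

A coefficient near 0 means a weak linear relationship, which is what "slight" describes in the list above.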

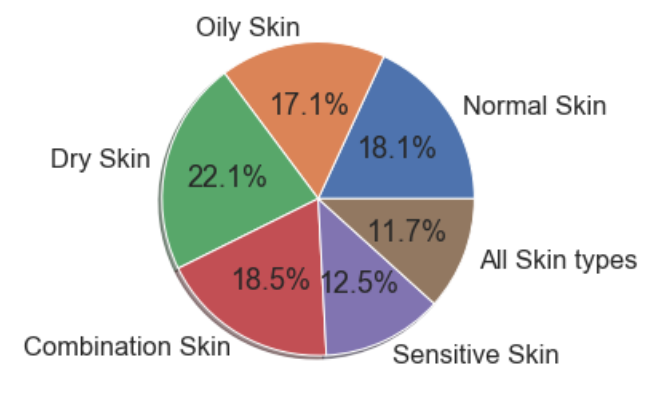

Which skin types are products most commonly advertised for?

It's a fairly even playing field here: every skin type has products aimed at the buyer's needs.

How do product categories compare with ingredient callouts like Vegan, Clean or Formulated-Without?

I had a hunch that skincare and makeup would have the most offerings, but was shocked to see that 2 of the top categories (Men, Nails) had no clean or vegan callout in the details. After quickly glancing through the Tools & Brushes category, it was apparent that the callouts are not applicable to products like hairdryers or tweezers.

It's nice to see that in all product categories (with the exception of fragrance and brushes), products are more likely than not to have a dedicated callout for excluding certain undesirable ingredients. However, the industry still has a bit more work to do before more products meet the Clean and Vegan label standards.

Machine Learning:

For the machine learning portion of the project, I wanted to predict a product's average star rating and recommendation rate. I used a linear model and a tree-based model, both easy to use from Python: ElasticNet ships with scikit-learn, and XGBoost offers a scikit-learn-compatible interface.

For my linear model, I chose ElasticNet, which combines ridge and lasso regression: it shrinks coefficients to control model complexity and multicollinearity while performing sparse feature selection at the same time. I tuned the model by cross-validating over different values of alpha.
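For reference, the objective that scikit-learn's `ElasticNet` minimizes can be written as below. Here $\alpha$ is the overall penalty strength and $\rho$ (scikit-learn's `l1_ratio`) sets the mix between the lasso and ridge penalties. The symbols $X$, $y$, $w$, and $n$ (features, target, coefficients, number of rows) are notation introduced here for illustration.

$$
\min_{w}\; \frac{1}{2n}\lVert y - Xw \rVert_2^2 \;+\; \alpha\,\rho\,\lVert w \rVert_1 \;+\; \frac{\alpha\,(1-\rho)}{2}\,\lVert w \rVert_2^2
$$

The $\lVert w \rVert_1$ term is what pushes some coefficients exactly to zero (feature selection), while the $\lVert w \rVert_2^2$ term shrinks correlated coefficients together.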

For my tree-based model, I chose XGBoost, which stands for eXtreme Gradient Boosting. The XGBoost library implements fast, high-performance gradient-boosted decision tree models. To break that down:

Decision trees generally do a better job of capturing non-linearity in the data by dividing the feature space into smaller sub-spaces. Gradient boosting is an approach in which each new model is trained to predict the residuals (errors) of the prior models, and the models' predictions are then added together to make the final prediction.
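The residual-fitting idea can be shown with a deliberately tiny sketch: one numeric feature, squared-error loss, and one-split trees ("stumps"). This is not XGBoost's actual algorithm, which adds regularization and second-order gradient information; every name and number below is illustrative.

```python
def fit_stump(xs, residuals):
    """Find the single threshold split on x that minimizes squared error.

    Assumes xs contains at least two distinct values.
    """
    best = None
    # Skip the largest x as a threshold: it would leave the right side empty.
    for t in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((r - lm) ** 2 for r in left)
               + sum((r - rm) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    _, t, lm, rm = best
    return lambda x: lm if x <= t else rm

def fit_boosted(xs, ys, n_rounds=50, learning_rate=0.1):
    """Start from the mean, then repeatedly fit a stump to the residuals."""
    base = sum(ys) / len(ys)
    stumps = []
    preds = [base] * len(ys)
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        # Add a shrunken copy of the new stump to the running prediction.
        preds = [p + learning_rate * stump(x) for x, p in zip(xs, preds)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)

# Made-up, non-linear toy data.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1, 1, 1, 1, 5, 5, 9, 9]
model = fit_boosted(xs, ys)

def mse(predict):
    return sum((y - predict(x)) ** 2 for x, y in zip(xs, ys)) / len(ys)

baseline = sum(ys) / len(ys)
print(mse(model) < mse(lambda x: baseline))  # True
```

Each round corrects a little of what the previous rounds got wrong, and the learning rate controls how large each correction is.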

Before running the final model, I cross-validated a variety of hyperparameters, such as the learning rate, minimum child weight, minimum samples per split, minimum samples per leaf, and the number of estimators. This let me pick the settings with the lowest cross-validated error.
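The mechanics of this kind of search can be sketched generically: try every combination in a grid, score each with k-fold cross-validation, and keep the best. To stay self-contained, the sketch below tunes a toy one-feature ridge model as a stand-in for XGBoost. The model, the `lam` parameter, and the data are all illustrative assumptions.

```python
from itertools import product
from math import sqrt

def k_fold_splits(n, k):
    """Yield (train_idx, valid_idx); fold i holds out every k-th row from i."""
    for i in range(k):
        valid = list(range(i, n, k))
        valid_set = set(valid)
        train = [j for j in range(n) if j not in valid_set]
        yield train, valid

def cv_rmse(fit, xs, ys, k, **params):
    """RMSE pooled over the held-out rows of all k folds."""
    sq_errors = []
    for train, valid in k_fold_splits(len(xs), k):
        predict = fit([xs[j] for j in train], [ys[j] for j in train], **params)
        sq_errors += [(ys[j] - predict(xs[j])) ** 2 for j in valid]
    return sqrt(sum(sq_errors) / len(sq_errors))

def grid_search(fit, xs, ys, grid, k=5):
    """Return (best_score, best_params) over all combinations in grid."""
    names = list(grid)
    best = None
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = cv_rmse(fit, xs, ys, k, **params)
        if best is None or score < best[0]:
            best = (score, params)
    return best

def fit_ridge_1d(xs, ys, lam):
    """Toy stand-in model: one-feature ridge regression through the origin."""
    w = sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)
    return lambda x: w * x

# Made-up data with an exact linear relationship, so no penalty is best.
xs = [float(i) for i in range(1, 21)]
ys = [2.0 * x for x in xs]
best_score, best_params = grid_search(
    fit_ridge_1d, xs, ys, {"lam": [0.0, 10.0, 100.0]}, k=5
)
print(best_params)  # {'lam': 0.0}
```

With a real boosted model, the grid would hold the hyperparameters listed above, and the number of combinations grows quickly, so the grid is usually kept small.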

Predicting Product Average Rating:

I found that newer products had abnormally high or low average ratings and recommendation scores. To mitigate this, I dropped any product with fewer than 150 reviews, so that each remaining score reflects a larger pool of purchasers.
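The filter itself is a one-liner. In this sketch the `n_reviews` field name and the sample rows are hypothetical; only the 150-review threshold comes from the text.

```python
MIN_REVIEWS = 150  # threshold stated in the text

def drop_low_review_products(products, min_reviews=MIN_REVIEWS):
    """Keep only products with at least min_reviews reviews."""
    return [p for p in products if p["n_reviews"] >= min_reviews]

# Made-up rows for illustration only.
rows = [
    {"name": "new serum", "n_reviews": 12},
    {"name": "borderline", "n_reviews": 150},
    {"name": "classic mascara", "n_reviews": 2400},
]
kept = drop_low_review_products(rows)
print([p["name"] for p in kept])  # ['borderline', 'classic mascara']
```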

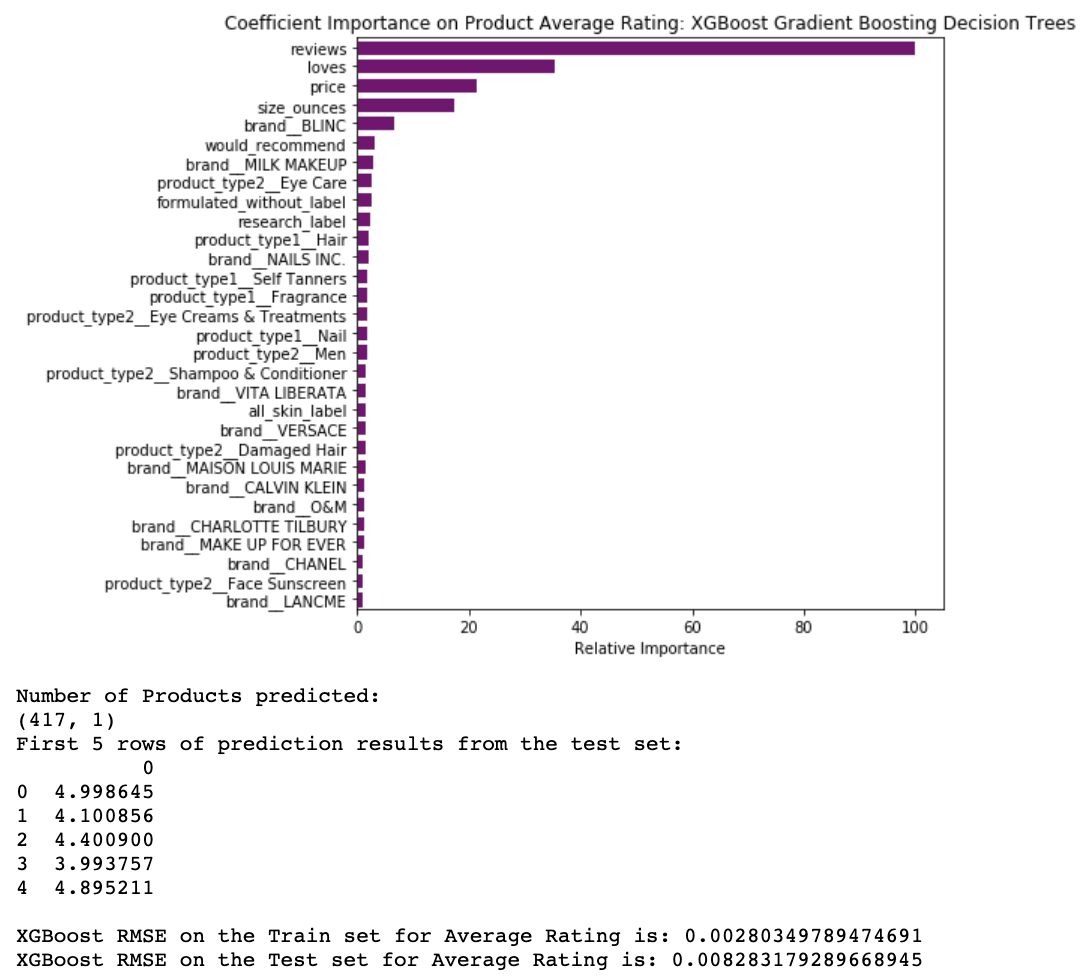

XGBoost:

Unsurprisingly, the most important factor for average star rating is the number of reviews, followed by loves, price, and size. It's also notable which brands and product types had an impact on the rating. From the details section of the product page, the important variables were 'formulated_without', 'research', and 'all_skin_label'.

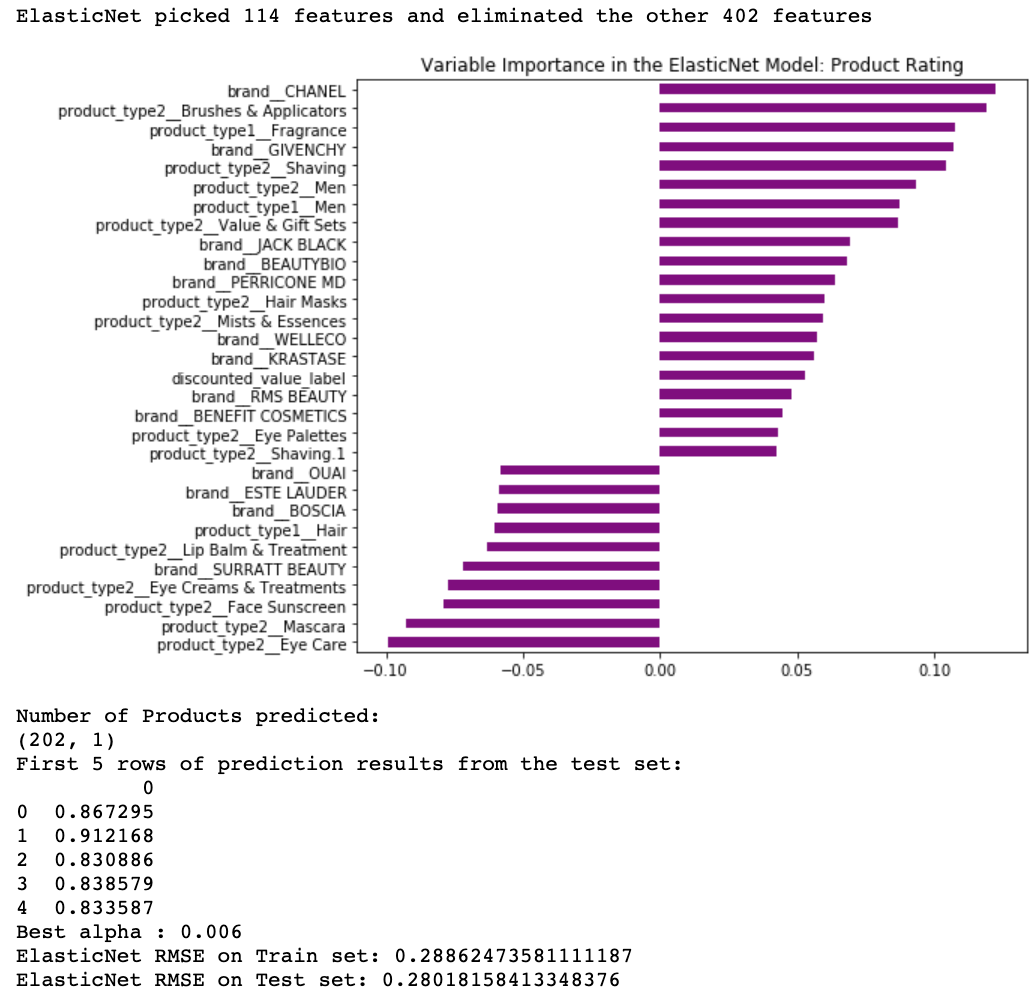

ElasticNet:

For the ElasticNet graphs, the top 15 rows have the highest positive variable importance, while the bottom 10 rows have the highest negative variable importance. The only variable besides a brand or category to make an impact in the ElasticNet model is 'discounted_value', which marks not a product on sale but, usually, a bundle of products sold at a special price. This model is very different from XGBoost in that the number of reviews, loves, and size all lack importance.

Predicting Product Recommendation Rate:

In order to train the model only on products that have recommendation rates, I had to drop almost 6,000 products, leaving me with about 1,500 products to train and predict on.

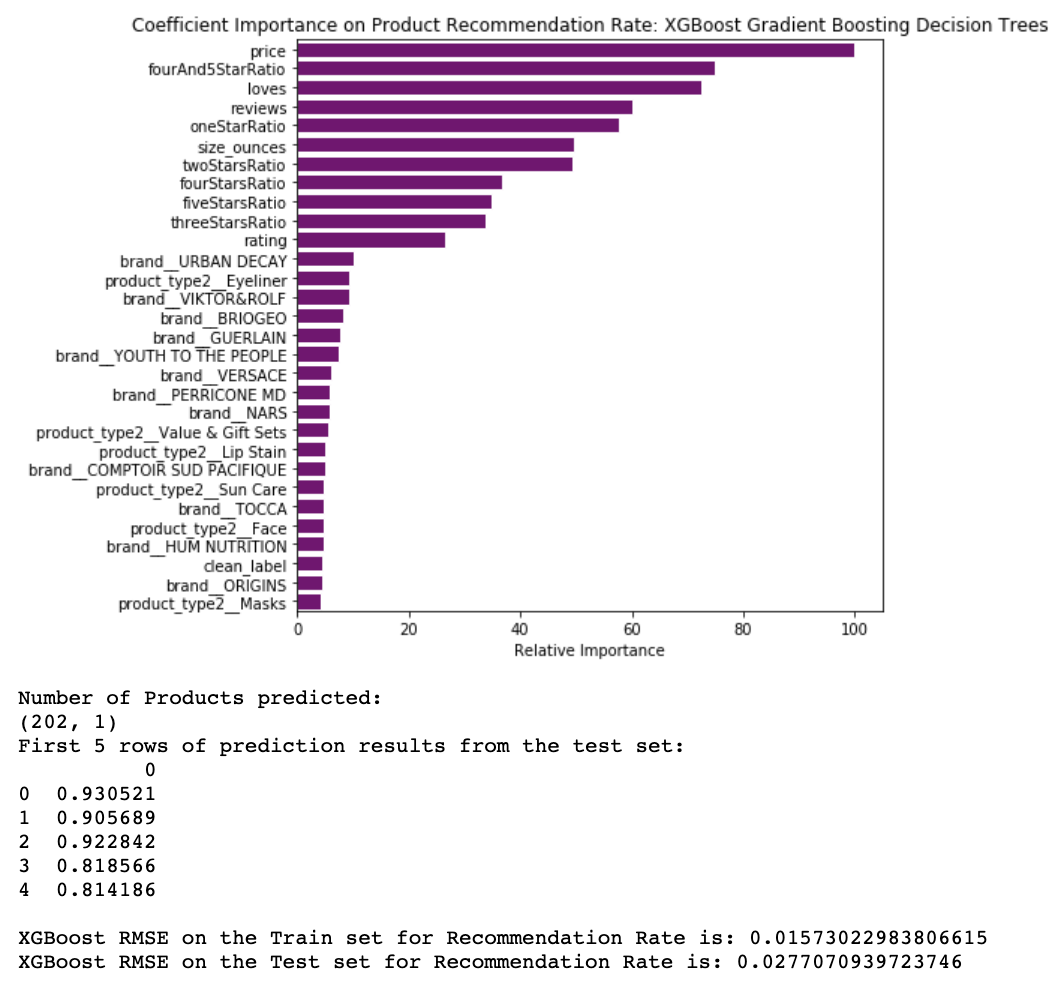

XGBoost:

Similar to the average star rating, the recommendation rate's most important variables include price, loves, and reviews, with the various star ratings also playing an important role. From the details section, the 'vegan' and 'formulated without' labels were the only variables present in the top 30 by importance. The error is still 2-3 percentage points, which could make or break my purchase: for example, if the model predicted 87% and only 85% of reviewers actually recommended the product. Gathering additional variables from the product page would most likely reduce this error.

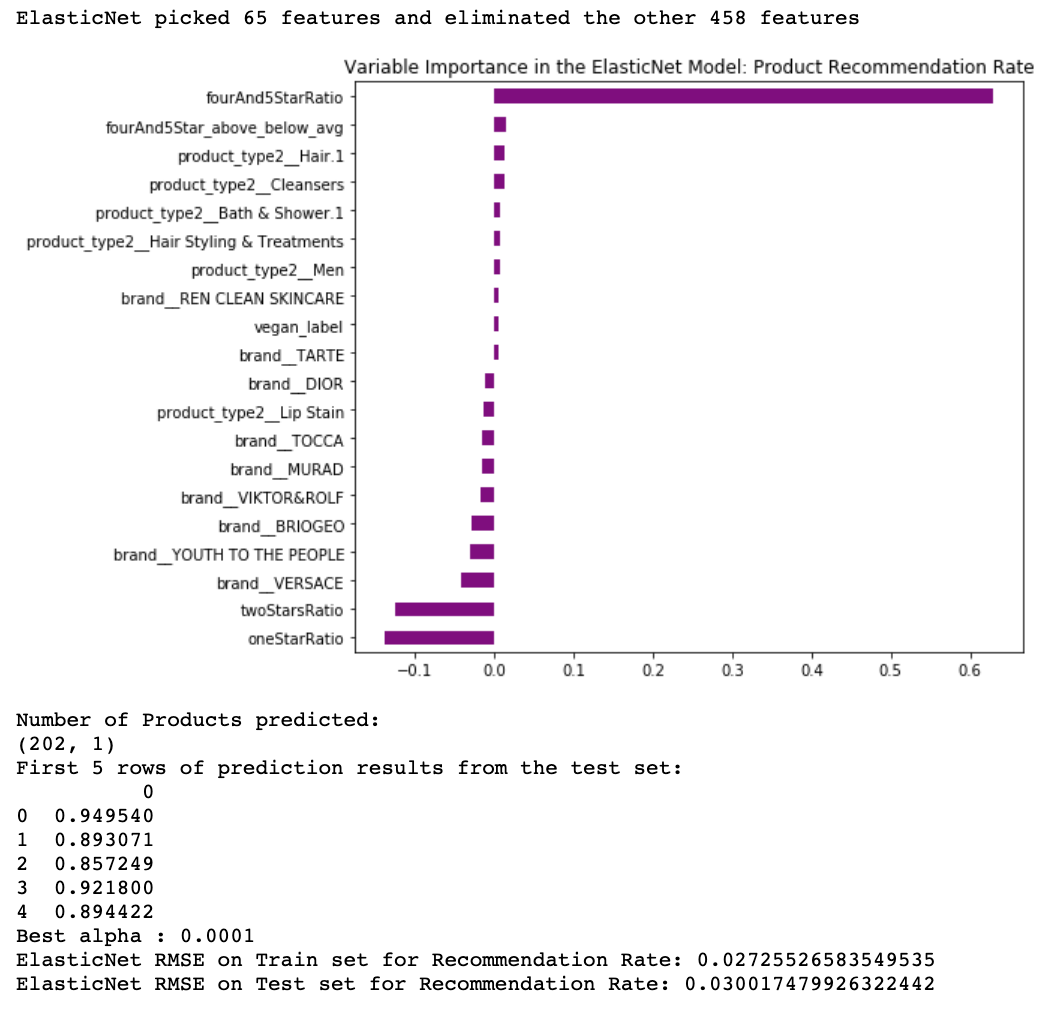

ElasticNet:

'fourand5StarRatio' has by far the most impact on the product recommendation rate in the ElasticNet model. As with XGBoost, the error is still 2-3 percentage points, which could make or break my purchase if the model predicted 87% and only 85% of reviewers actually recommended the product.

Results:

Conclusions and further improvements:

In the future, I would like to collect all of the reviewers' data from an accessible API as additional predictors for my machine learning models. Unfortunately, my sale price predictions had RMSEs too high to report, but adding reviewers' data and profiles would likely improve model accuracy.