Data Driven Ads by Starbucks Customer Segmentation

The skills the authors demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

Starbucks is a multi-billion dollar company with over fifteen thousand stores in the United States, serving tens of millions of customers per year. It is not just a purveyor of coffee, snacks, and light meals, but a brand. To maintain this status, Starbucks offers a multitude of payment options, including branded credit cards, digital payment options (for example, Apple Pay), and a cellphone app. Not only do these alternative payment methods attract younger customers, they also allow the collection of demographic data about their users that cash and ordinary credit cards do not.

This demographic and transactional information can be leveraged to increase revenue and brand loyalty. One of the most direct ways of influencing customer behavior is the use of targeted advertisements and offers. For this project, a subset of approximately 15,000 Starbucks app and credit card users and their purchases over the period of one month was gathered with a goal of segmenting the sample, analyzing each cluster's behavior, and generating targeted advertisements to increase customer engagement and sales.

Data Exploration

Gender

Men are over-represented in this data set, and a small share (less than 1%) of customers identify as other.

Age

This data represents an older clientele than would be typical of the average Starbucks customer population. This is most likely the result of using data from Starbucks credit card holders.

Income

Most of these customers are earning a decent living. There is a small section of people with an income over $100,000 per year. The largest section represents 'middle-class' earners, and there is a sizeable portion of low-income customers.

Total Spending

The large majority of customers spend only around twenty dollars per month, although some patrons spent hundreds of dollars at Starbucks locations over this one-month period.

Offer Descriptions

Over the course of one month multiple offers and advertisements were sent to each customer. These communications fall into three distinct categories:

- Offer: Buy One Get One (BOGO)

- Offer: Discount

- Informational

Each offer has four attributes associated with it:

- Duration : 0 - 7 (Number of days the offer is valid for)

- Difficulty : 0 - 10 (Scaled dollar amount spent required to qualify for the offer)

- Reward : 0 - 10 (Scaled dollar amount of the discount or free item)

- Channels : Mobile, Web, E-mail, Social (How the offer is communicated to the customer; one offer can use several channels)

Data Wrangling

Compiling all of this information into a single dataframe yields detailed information for each customer, including:

- Gender

- Age

- Yearly Income

- Year became an app user

- Number of BOGO offers received

- Number of Discount Offers received

- Number of Informational offers received

- Average Reward value of all received offers

- Average Difficulty of all received offers

- Average Duration of all Received offers

- Number of offers received by each channel (Mobile, Web, E-mail, or Social)

- Number of offers received, viewed, and completed

- Hours since their most recent transaction

- Total dollar amount spent

- Number of purchases

This data reveals the detailed behavior of each customer and how they interact with the Starbucks app.
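
As a rough illustration of this wrangling step, the sketch below collapses an event log into one row per customer with pandas. The log layout (`customer_id`, `event`, `time_hours`, `amount`), the event names, and the helper `build_customer_table` are assumptions made for the example, not the schema or code actually used in this project.

```python
import pandas as pd

# Hypothetical event log: one row per offer event or transaction.
nan = float("nan")
events = pd.DataFrame({
    "customer_id": ["a", "a", "a", "a", "b", "b", "b"],
    "event": ["offer received", "offer viewed", "transaction",
              "transaction", "offer received", "transaction",
              "offer completed"],
    "time_hours": [0, 6, 12, 84, 0, 30, 30],
    "amount": [nan, nan, 4.5, 7.0, nan, 12.0, nan],
})

def build_customer_table(events: pd.DataFrame, period_end_hours: float) -> pd.DataFrame:
    """Collapse an event log into one row of behavioral features per customer."""
    # Count each event type per customer (received, viewed, completed, ...).
    counts = pd.crosstab(events["customer_id"], events["event"])

    purchases = events[events["event"] == "transaction"]
    by_customer = purchases.groupby("customer_id")
    spending = pd.DataFrame({
        "total_spent": by_customer["amount"].sum(),
        "n_purchases": by_customer["amount"].count(),
        # Hours between the last purchase and the end of the observation window.
        "hours_since_last": period_end_hours - by_customer["time_hours"].max(),
    })

    table = counts.join(spending, how="left")
    # Customers with no purchases spent nothing; their recency stays missing.
    table[["total_spent", "n_purchases"]] = table[["total_spent", "n_purchases"]].fillna(0)
    return table

# A 30-day window is 720 hours.
customers = build_customer_table(events, period_end_hours=720)
print(customers)
```

Demographic columns (gender, age, income) and the per-offer averages would be joined onto this table in the same way, keyed on the customer identifier.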

Clustering

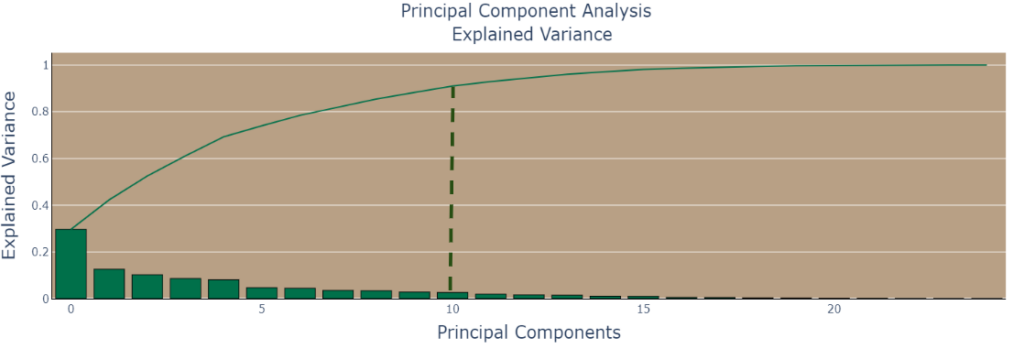

The first step of clustering is dimensionality reduction. Since the primary goal is to cluster our users into distinct categories, interpretability of the reduced features is not a high priority, so principal component analysis is a viable method for simplifying our data.

After numerically encoding our nominal variables and using sklearn's standard scaler we can begin P.C.A.

After projecting our data into lower-dimensional spaces, we can see that ~90% of the variance is explained by ten principal components, even though our original data has 24 features.
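
The scale-then-project step can be sketched as follows. The data here is synthetic (a random 24-feature matrix standing in for the encoded customer table), so the number of components it reports is not the project's result; only `StandardScaler` and `PCA` themselves come from the text.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the 24-feature customer table (assumption for illustration).
rng = np.random.default_rng(0)
latent = rng.normal(size=(500, 6))
X = latent @ rng.normal(size=(6, 24)) + 0.5 * rng.normal(size=(500, 24))

# Standardize so no feature dominates because of its units, then fit a full PCA.
X_scaled = StandardScaler().fit_transform(X)
pca = PCA().fit(X_scaled)

# Smallest number of components whose cumulative explained variance reaches 90%.
cumulative = np.cumsum(pca.explained_variance_ratio_)
n_components = int(np.searchsorted(cumulative, 0.90) + 1)
print(f"{n_components} components explain {cumulative[n_components - 1]:.1%} of the variance")

# Project the data onto the retained components for clustering.
X_reduced = PCA(n_components=n_components).fit_transform(X_scaled)
```

Plotting `cumulative` against the component index gives the usual explained-variance curve from which a cutoff such as 90% can be read.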

The next step is to use the elbow and silhouette tests after performing K-Means Clustering to determine the optimal number of clusters.

From a purely mathematical standpoint, the high silhouette score and the 'elbow' of the distortion graph indicate two as the ideal number of clusters. However, after performing the segmentation into two clusters, there simply is not enough actionable information, from a business standpoint, to justify this segmentation. The two cluster segments are divided primarily along demographic lines: age, gender, and income. Although this is a valid clustering, it is desirable to have the clusters separated by their behavior rather than their physical characteristics.

After some experimentation, four clusters seem to be the optimal number for creating targeted advertisements.
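
A minimal sketch of the elbow and silhouette comparison is shown below. It runs on synthetic blobs rather than the PCA-reduced customer data, and the range of `k` values and the `n_init` and `random_state` settings are assumptions chosen for the example.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

# Synthetic stand-in for the PCA-reduced customer data (assumption for illustration).
X_reduced, _ = make_blobs(n_samples=600, centers=4, n_features=10, random_state=0)

results = {}
for k in range(2, 9):
    model = KMeans(n_clusters=k, n_init=10, random_state=0).fit(X_reduced)
    results[k] = {
        # Within-cluster sum of squared distances, plotted for the elbow test.
        "distortion": model.inertia_,
        # Mean silhouette coefficient; values closer to 1 mean tighter, better-separated clusters.
        "silhouette": silhouette_score(X_reduced, model.labels_),
    }

for k, scores in results.items():
    print(f"k={k}: distortion={scores['distortion']:.0f}, silhouette={scores['silhouette']:.3f}")

# The k with the best silhouette score is only a starting point for the business review.
best_k = max(results, key=lambda k: results[k]["silhouette"])
```

As the discussion above notes, the mathematically best `k` is not necessarily the most useful one, so these scores are best read alongside a look at what actually distinguishes the resulting segments.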

Understanding Our Groups

R.F.M.

A simple way to get an overall view of customer value is RFM analysis. For this data, each customer is given a score of zero through five on three metrics: Recency (how long since the customer last made a purchase), Frequency (how often the customer makes a purchase), and Monetary (the total value of the customer's purchases).

The above graph shows the average RFM score for each cluster.

Clusters 0 and 2 tend to have similar scores, as do clusters 1 and 3. Groups 0 and 2 score lower on the RFM metrics than the other two groups.
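
One way to produce zero-to-five RFM scores is to bin each metric by its rank, as in the sketch below. The rank-based binning, the column names, and the toy values are all assumptions for illustration; the original scoring rule is not specified in this write-up.

```python
import pandas as pd

# Hypothetical per-customer table; column names and values are made up.
customers = pd.DataFrame({
    "hours_since_last": [2, 30, 100, 400, 650, 12],
    "n_purchases": [25, 10, 6, 2, 1, 18],
    "total_spent": [210.0, 90.0, 40.0, 15.0, 5.0, 160.0],
    "cluster": [1, 3, 0, 2, 2, 1],
})

def score_0_to_5(values: pd.Series, higher_is_better: bool = True) -> pd.Series:
    """Map values to integer scores 0..5 by rank (tied values share an average rank)."""
    rank = values.rank(method="average", ascending=higher_is_better)
    # Split the ranks into six equal bands: the worst sixth scores 0, the best sixth scores 5.
    return ((rank - 1) * 6 // len(values)).astype(int)

rfm = pd.DataFrame({
    # Recency: fewer hours since the last purchase is better.
    "R": score_0_to_5(customers["hours_since_last"], higher_is_better=False),
    "F": score_0_to_5(customers["n_purchases"]),
    "M": score_0_to_5(customers["total_spent"]),
    "cluster": customers["cluster"],
})

# Average score per cluster, the quantity shown in the RFM graph.
cluster_means = rfm.groupby("cluster")[["R", "F", "M"]].mean()
print(cluster_means)
```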

Engagement

This graph shows the percentage of offers each cluster viewed and completed.

- Cluster 0 : Views and completes most offers

- Cluster 1 : Views almost all offers but only completes 30%

- Cluster 2 : Views most offers and completes them

- Cluster 3 : Views a little more than half of the offers but only completes a small percentage
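
The view and completion percentages behind a chart like this can be computed with a simple group-by. The sketch below uses made-up counts and assumed column names (`offers_received`, `offers_viewed`, `offers_completed`), and it pools offers within each cluster rather than averaging per-customer ratios, which is one of several reasonable definitions.

```python
import pandas as pd

# Hypothetical per-customer offer counts with cluster labels (values are made up).
customers = pd.DataFrame({
    "cluster": [0, 0, 1, 1, 2, 3],
    "offers_received": [4, 5, 5, 5, 4, 4],
    "offers_viewed": [4, 4, 5, 5, 3, 2],
    "offers_completed": [3, 4, 1, 2, 3, 1],
})

# Pool the counts within each cluster, then express views and completions
# as a percentage of offers received.
totals = customers.groupby("cluster")[
    ["offers_received", "offers_viewed", "offers_completed"]
].sum()
engagement = pd.DataFrame({
    "viewed_pct": 100 * totals["offers_viewed"] / totals["offers_received"],
    "completed_pct": 100 * totals["offers_completed"] / totals["offers_received"],
})
print(engagement.round(1))
```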

From our brief analysis so far, it is apparent that clusters 0 and 2, and clusters 1 and 3 have enough similarities on core business interaction (RFM and offer engagement) that it is useful to group them together in an attempt to understand what separates them into meaningful groups.

Cluster 0 and 2 Analysis

Gender

These two groups have a larger percentage of female representation than the overall sample, with cluster 2 having a slightly higher male percentage.

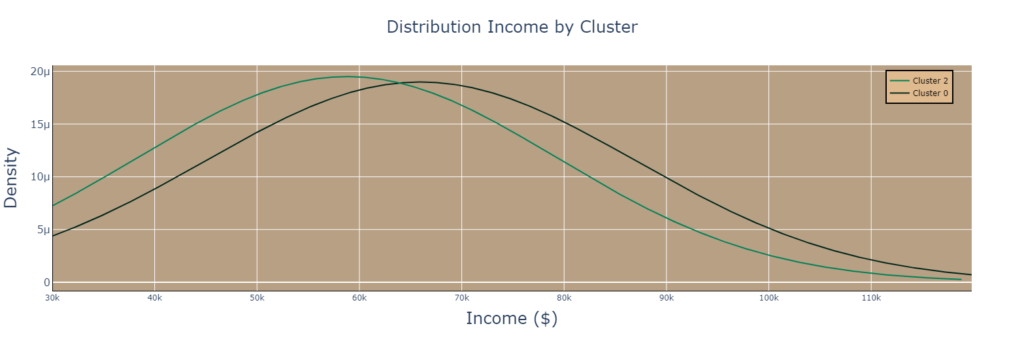

Income

Cluster 0 is slightly wealthier than cluster 2.

Age

Cluster 0 is slightly older than cluster 2.

Revenue

Cluster 0 spends more than cluster 2 but less frequently.

Transaction Frequency

The previous graph shows the number of hours between transactions for each customer in cluster 0 and cluster 2. The most interesting features of the graph are the spikes at 72, 96, and 168 hours (3, 4, and 7 days). These two groups show similar behavior, with a large percentage of customers routinely shopping at a Starbucks location every three, four, or seven days. This sort of routine pattern indicates a high degree of brand loyalty from these two clusters. The most noticeable difference between the two plots is that cluster 0 is far more likely to make a purchase more than once a day. This combination of multiple daily purchases and regular purchasing frequency indicates that cluster 0 is a "captured" demographic, meaning they already have a high degree of brand loyalty.
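
The time-between-transactions values can be derived by differencing each customer's purchase times, as sketched below. The transaction log and its column names (`customer_id`, `time_hours`) are assumptions for the example, and the timestamps are made up to show spikes at 72 and 168 hours.

```python
import pandas as pd

# Hypothetical transaction log: purchase time in hours since the start of the month.
transactions = pd.DataFrame({
    "customer_id": ["a", "a", "a", "b", "b", "b", "b"],
    "time_hours": [0, 72, 144, 6, 10, 178, 346],
})

transactions = transactions.sort_values(["customer_id", "time_hours"])
# Gap to the same customer's previous purchase; NaN for each customer's first purchase.
transactions["gap_hours"] = transactions.groupby("customer_id")["time_hours"].diff()

gaps = transactions["gap_hours"].dropna()
# Frequency of each gap length; spikes here are the routine purchase intervals.
gap_counts = gaps.value_counts().sort_index()
# Share of gaps shorter than a day, i.e. repeat purchases within 24 hours.
same_day_share = (gaps < 24).mean()
print(gap_counts)
print(f"share of gaps under 24 hours: {same_day_share:.0%}")
```

Running this per cluster and plotting `gaps` as a histogram would give a chart comparable to the one described above.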

Recap: Clusters 0 and 2 Descriptions

Similarities

- Low R.F.M.

- Low Spenders

- Females over-represented

- Engaged with the Starbucks App

Differences

| Cluster 0 | Cluster 2 |
| --- | --- |
| Wealthy | Poorer |
| Older | Younger |
| Low Spenders | Lowest Spenders |
| Multiple Daily Purchases | Every 3 and 7 day purchasers |

Cluster 1 and 3 Analysis

Gender

Males are over-represented in these two clusters, and the largest percentage of 'other' gender is in cluster 1.

Income

The income of these two groups is similar; however, cluster 1 has a slightly higher overall income. The income distribution of cluster 1 peaks at approximately $65,000 and that of cluster 3 at approximately $70,000.

Age

As with income, the ages of these two groups are very similar, with a distribution peak at around 55 years old, which is representative of the overall sample.

Revenue

These two clusters have very different spending habits. Both groups spent more than the previous two; however, most of cluster 1 spent approximately $200 during this one-month period, while most of cluster 3 spent approximately $125.

Transaction Frequency

This graph shows behavior similar to that of the previous two clusters. Purchase frequency spikes at 72, 96, and 168 hours, although the spikes are not as pronounced as in the previous groups. The most relevant takeaway is that both of these groups are frequent daily purchasers. Most of these customers have made at least one purchase less than 6 hours after the previous one.

Recap: Clusters 1 and 3 Descriptions

Similarities

- Moderate Income

- Median Age

- High R.F.M.

- Not Engaged with the Starbucks app

- Males over-represented

Differences

| Cluster 1 | Cluster 3 |
| --- | --- |
| Highest Spenders | High Spenders |
| Highest Number of Purchases Per day | Highest Number of Every 3 day purchasers |

Understanding Context

Over this one month period multiple offers were sent to these customers. It is important to understand the customers' behavior and how it relates to the already sent offers before creating targeted advertisements.

The above graph shows the average difficulty, reward, and duration of the offers sent to each cluster. (As a reminder: duration is the number of days the offer is valid, reward is the scaled dollar value of the offer, and difficulty is the scaled dollar amount that must be spent to qualify for the offer.)

The distribution of each metric across the four clusters is relatively even, with a few notable exceptions.

- Cluster 0 : Received offers with higher than average reward, difficulty, and duration.

- Cluster 2 : Received the most difficult and longest duration offers.

- Cluster 1 : Received the lowest reward, difficulty, and duration offers.

Contextual Analysis

Clusters 0 and 2 have received the highest-difficulty offers and have also completed the highest percentage of sent offers. This suggests that offer difficulty is less of a deterrent for these customers. It is therefore reasonable to assume that a targeted offer with a higher initial purchase would not hurt the conversion percentage and would increase revenue.

Cluster 0 takes advantage of most of our offers, even though they receive slightly higher than average difficulty. However, they spend the second least among all of our groups, despite having a large percentage of customers who make multiple purchases a day. These two facts suggest that a targeted offer with a slightly higher initial purchase (difficulty) and a lower reward could generate more revenue from this group.

Cluster 1 has the lowest offer completion ratio of all groups and the highest revenue. Since this group also receives the lowest difficulty offers, in order to increase offer conversion a larger reward would be needed.

Cluster 2 represents our lowest spenders as well as the group most likely to make a purchase once a week. They also complete a high percentage of offers, meaning a shorter duration offer could entice this group to frequent a Starbucks location more often.

Cluster 3 views only a small percentage of offers and completes even fewer. They also represent our second-highest revenue and frequency bracket. To increase sales from cluster 3, it will be necessary to increase the visibility of our communications with them.

A simple adjustment of the offer parameters may be enough to increase revenue from clusters 0 and 2; however, a more nuanced approach may be necessary to increase engagement with the other groups.

Offer Types

Because the completion ratio of cluster 1 is very low, it may be necessary to change the types of offers they are sent.

Right now, the average member of cluster 1 gets 4 BOGO offers, 3 Discount offers, and 1 informational flyer over the course of one month. Since they are not responding to the current configuration, sending more discount offers with a high difficulty (larger initial purchase) may increase spending and completion.

Cluster 3 receives a low and even number of BOGO and discount offers and completes both at a relatively even, but low, rate. This does not reveal anything actionable, so we must look elsewhere. Since the initial viewership of the offers is low, reviewing which methods or channels are used to distribute the offers could produce better results.

Distribution Methods

Interestingly, all four clusters show the same pattern. Each successive cluster received fewer offers per channel than the previous one. There is one notable exception to this pattern: cluster 3 received more web offers than cluster 2.

The end goal of visualizing the distribution channels is to determine how to increase the offer viewership and completion ratio of cluster 3. Currently, they are receiving predominantly e-mail and mobile offers and are not responding well. A new tactic of focusing on web-based and social media outreach to this cluster could produce results.

Proposing New Offers

Justification

Cluster 0: This group has the highest completion ratio of all clusters but spends the second least on average. Difficulty does not seem to be a factor for this group, so an offer with a low reward but high difficulty (initial spend) could increase revenue. They receive equal numbers of BOGO and discount offers and complete them at even rates; if raising revenue is the goal, discounts seem counterproductive. Cluster 0 engages with every method of offer distribution, so keeping the most popular offer type, BOGO, seems prudent.

Cluster 1: This is the highest-spending group, yet it completes a very small percentage of offers, so there is still an opportunity to gain a little more revenue. They already receive the lowest-difficulty offers, so an alternative tactic would be an average-difficulty offer with a higher reward. They already receive more BOGO offers and are not converting, so it could be time to try more discount offers with this group. Since they already view most of the offers, we will keep the same channels of distribution.

Cluster 2: This group represents our lowest spenders and least frequent customers, although they complete a very high percentage of the offers they view. They have already received, and completed, the most difficult offers, so difficulty is not a barrier for this group. Increasing their visit frequency with shorter-duration offers would be an attractive tactic. Keeping the same difficulty and reward they have previously received can help ease the transition to more frequent visits.

Cluster 3: This is the second-highest revenue group but the one that engages least with offers. The new offer would try different channels than the ones they have been receiving. These customers are already in Starbucks locations frequently, so using the average duration for the new offer makes sense. A low-difficulty, low-reward offer would test the waters and could be re-evaluated against next month's data. Choosing an offer type for cluster 3, which already receives an even number of discount and BOGO offers, is more difficult. Over the entire month's data, discount offers have a slightly higher completion percentage, which suggests that a discount offer has a slightly better chance of completion.

Prediction and Verification

Before adding new offers to the Starbucks portfolio it would be prudent to know how effective they are.

Multiple attempts were made to use the new offers and supervised machine learning to predict whether a customer was likely to view and/or complete a proposed offer. However, the relatively small sample size of each cluster (between 3,000 and 4,000 customers) proved to be a hindrance to model training.

Individual behavior in this data set is too random to predict with any degree of accuracy using simple tools like tree-based models, linear regression, and k-nearest neighbors. Some hint of success was found using support vector machines with a polynomial kernel. One cross-validated model achieved an accuracy of 65%; however, the excessive computational time (over 36 hours to train and cross-validate a single model) proved to be an insurmountable barrier to a reliable mathematical prediction.
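
For reference, a cross-validated polynomial-kernel SVM can be set up in a few lines with scikit-learn. The sketch below runs on a small synthetic classification problem; the feature count, the kernel degree, the `C` value, and the five folds are assumptions and do not reproduce the model or the 65% result described above.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in: one row per (customer, offer) pair, label = offer completed.
X, y = make_classification(n_samples=400, n_features=12, n_informative=5, random_state=0)

# Scaling lives inside the pipeline so each fold is scaled using only its training data.
model = make_pipeline(StandardScaler(), SVC(kernel="poly", degree=3, C=1.0))

# Five-fold cross-validated accuracy.
scores = cross_val_score(model, X, y, cv=5, scoring="accuracy")
print(f"mean accuracy: {scores.mean():.2f} (+/- {scores.std():.2f})")
```

Kernel SVM training time grows faster than linearly with the number of samples, which is consistent with the long run times reported here once the full data set and a parameter search are involved.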

A more practical method of validating the effectiveness of the proposed offers is to use A/B testing.

Send a targeted offer to a randomly selected percentage of customers and compare their conversion ratio against the baseline data. A measurable uptick in completion ratio would provide empirical evidence of the offer's efficacy.
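
Whether an uptick is "measurable" can be judged with a two-proportion z-test, sketched below using only the standard library. The function name, the one-sided formulation, and the counts are illustrative assumptions; no such test was run on the Starbucks data.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """One-sided z-test that group B's completion rate exceeds group A's."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Upper-tail probability of the standard normal distribution.
    p_value = 0.5 * (1 - erf(z / sqrt(2)))
    return z, p_value

# Made-up counts: 1,000 customers on the existing offers (A), 1,000 on the new offer (B).
z, p_value = two_proportion_z_test(conv_a=300, n_a=1000, conv_b=345, n_b=1000)
print(f"z = {z:.2f}, one-sided p-value = {p_value:.4f}")
```

A small p-value suggests the higher completion rate in the test group is unlikely to be chance alone. Running the test separately per cluster keeps each targeted offer's result distinct.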

Conclusion and Next Steps

This real-world data set provided an interesting insight into how consumers interact with a major brand. Despite the skewed nature of the demographics (older, credit-card-carrying consumers), it is still fascinating to see the repetitive and habitual behavior of people. The 'Time Between Transactions' graphs were especially satisfying to create. Being able to visualize the recurrent nature of certain customers was eye-opening.

The customer segmentation analysis was time consuming, but worthwhile. It is difficult to predict how clustering algorithms will segment a population, and the differences that emerged were genuinely surprising.

The high positive correlation of income with both offer completion percentage and spending per month was expected (this is very well illustrated in cluster 2), and visualizing it was gratifying.

The hardware limitations that prevented the creation of an accurate predictive model were disappointing, especially considering the confidence I had in my ability to create it. In the future, with more processor cycles at my disposal, I plan to reattempt this model.