House Prices: Advanced Regression Techniques

Project GitHub | LinkedIn: Niki Moritz Hao-Wei Matthew Oren

The skills we demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction:

A house is usually the single largest purchase an individual will make in their lifetime. Such a significant purchase warrants being well-informed about what a house’s selling price should be, whether you are the buyer, the seller, or the real estate broker involved.

Machine learning gives us the tools to take a large data set and produce a predicted value, which was our main goal in this project. Using a dataset containing information on houses in Ames, Iowa, our team applied different machine learning techniques to predict sale prices, guided both by practical intuition and by the patterns we observed through our exploratory data analysis and model fitting processes.

The general outline of the process was:

- Imputing Missing Values

- Exploratory Data Analysis

- Feature Engineering/Dimension Reduction

- Fixing Skewness and Outliers

- Modeling

The Data Set

This Kaggle data set consists of roughly 3,000 property listings (observations), each with 79 property attributes, and our target, sale price. From the initial Kaggle competition, the observations from our data set were split in half: one half for training our model, and the other to test our results for prediction accuracy.

The 79 features (property descriptions) ranged from the presence of certain amenities (e.g. garage, pool, outdoor porch), to the number of rooms and garage car capacity, square footage of all spaces, quality and condition scores of amenities and of the property overall, exterior finish types, basement details, and much more.

Imputation

With multiple linear regression being our likely model candidate, we knew we had to impute missing values. A significant number of columns initially contained missing values, some following a pattern and others missing completely at random. To guide our imputation methods, we used the variable description text file provided with the data. Most null values were changed to ‘None’ or 0 where the null simply meant the feature was absent, as in the case of no garage present.

While imputing these values, some features, such as PoolQuality and PoolArea, showed us that we needed to pay careful attention to values that may be missing because of a data collection error or missing completely at random. In this example, we found observations incorrectly labelled as not having a pool when the ‘PoolArea’ metric shows that there actually is one.

Being mindful of situations like this is key in having meaningful imputed values for the modeling process. It’s the difference between imputing a ‘0’ versus an assumption with an estimated integer value, which would have a significantly different effect on our predictions. For the ‘Electrical’ input variable, we found only one missing value that we imputed with the most common class in this feature, while ruling the other options as logically highly unlikely.
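The imputation rules above can be sketched in a few lines of pandas. This is only an illustration on a made-up four-row frame: the column names `GarageType`, `GarageArea`, `PoolQC`, `PoolArea` and `Electrical` are assumed from the Kaggle data dictionary, and the values are invented.

```python
import numpy as np
import pandas as pd

# Toy frame standing in for the Ames data; every value here is made up.
df = pd.DataFrame({
    "GarageType": ["Attchd", np.nan, "Detchd", np.nan],
    "GarageArea": [480.0, 0.0, 300.0, np.nan],
    "PoolQC": [np.nan, np.nan, "Gd", np.nan],
    "PoolArea": [0, 0, 500, 400],
    "Electrical": ["SBrkr", "SBrkr", np.nan, "FuseA"],
})

# Rows where the pool quality is missing but the area says a pool exists.
# These should not be blindly filled with "None".
pool_conflict = df["PoolQC"].isna() & (df["PoolArea"] > 0)

# A null garage type means "no garage", so fill with a label or zero.
df["GarageType"] = df["GarageType"].fillna("None")
df["GarageArea"] = df["GarageArea"].fillna(0)

# Fill pool quality only where there is no conflicting pool area.
df.loc[~pool_conflict, "PoolQC"] = df.loc[~pool_conflict, "PoolQC"].fillna("None")

# A single missing Electrical value gets the most common class.
df["Electrical"] = df["Electrical"].fillna(df["Electrical"].mode()[0])
```

The conflicting pool row is deliberately left missing so it can be inspected and imputed by hand rather than labelled as having no pool.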

Missing Values

There were five basement features with missing values. We found that a missing value for the Basement Quality feature implied there truly is no basement. This carries over directly to the remaining four basement features, and the pattern was consistent across all five for dozens of observations, so we imputed ‘No_Bsmt’ in all five features wherever basement quality was null.

Still, there were exceptions. In one row, BsmtFinSF2 was greater than 0, so the missing value there could not mean ‘no basement’ and we imputed it with the median value instead. In another row, the basement was recorded as unfinished, so there definitely was a basement, and we imputed BsmtExposure as No Exposure. We had a similar situation with the five garage features, where we imputed all of them wherever garage area was zero, which clearly indicates the absence of a garage.

Lastly, there were a couple hundred values missing for Lot Frontage, the length of the property that borders the street as measured in feet. We knew from the description file that all properties had a lot frontage, but there was no pattern to how or why these values were missing. We decided to base our imputation method on the most correlated and practical variable to lot frontage, ‘Lot Area,’ by using a simple linear regression on all properties with both. We identified a strong linear relationship up until about 200 feet.

We capped the maximum at 200ft for values with a large lot area, as we saw that in our dataset, though there were some very large properties, their lot frontage did not increase indefinitely. This makes intuitive sense because a slightly larger house will have slightly more lot frontage on average (maybe in a linear fashion), but a house that is ten times larger likely does not have ten times more frontage.
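A minimal sketch of this imputation, assuming the columns are named `LotArea` and `LotFrontage` as in the Kaggle data dictionary. The helper `impute_lot_frontage` and the toy rows are hypothetical, and the fit here uses every row with a known frontage, which may differ from what we did in detail.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

MAX_FRONTAGE_FT = 200  # cap described in the text above

def impute_lot_frontage(df):
    """Fill missing LotFrontage from a simple linear fit on LotArea, capped at 200 ft."""
    out = df.copy()
    known = out["LotFrontage"].notna()
    if known.all():
        return out  # nothing to impute
    model = LinearRegression().fit(
        out.loc[known, ["LotArea"]], out.loc[known, "LotFrontage"]
    )
    predicted = model.predict(out.loc[~known, ["LotArea"]])
    # Very large lots would otherwise get unrealistically long frontages.
    out.loc[~known, "LotFrontage"] = np.minimum(predicted, MAX_FRONTAGE_FT)
    return out

# Made-up rows for illustration only.
toy = pd.DataFrame({
    "LotArea": [5000, 8000, 10000, 12000, 9000, 200000],
    "LotFrontage": [50.0, 80.0, 100.0, 120.0, np.nan, np.nan],
})
filled = impute_lot_frontage(toy)
```

In the toy data the mid-sized lot gets a frontage on the fitted line, while the very large lot hits the 200 ft cap.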

Exploratory Data Analysis

After cleaning and imputing the dataset, we began to explore distributions and relationships inherent in the features and target sale price. Firstly, we wanted to establish a baseline for the strength of regression coefficients we might expect in our eventual modeling phase. To do this, we looked at the correlation coefficients of all variables against one another to identify the strength of relationships between variables, and plotted them visually using the Seaborn Python library’s pairplot and correlation heatmap.

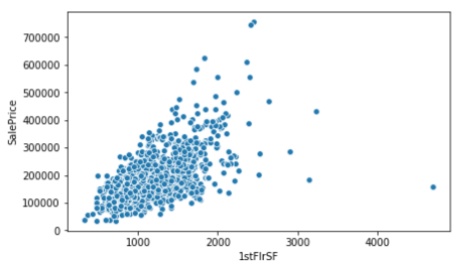

Unsurprisingly, the most correlated features to sale price were the overall quality score (79%), above-ground living area (71%), garage area (64%), and number-of-car garage (62%). These are intuitive, as the first suggests the quality of maintenance and build of the property as a whole, and the latter three suggest the size of key features of most houses.

All of these features rise roughly in step with sale price: as the score or size increases, so does property value. Plotting each variable individually against sale price in a scatter plot confirms the strength of the relationship and also gives an early indication of a few outliers where square footage is large but property value is relatively low.
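Ranking features by their correlation with sale price takes only a couple of lines in pandas. The sketch below uses synthetic data with invented relationships, so the numbers it produces are not the ones reported above. On the real data the `corr` matrix could be passed to Seaborn's `heatmap` to draw the plot.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in for the cleaned training data (not real Ames values).
rng = np.random.default_rng(0)
n = 200
area = rng.normal(1500, 400, n)
toy = pd.DataFrame({
    "GrLivArea": area,
    "GarageArea": 0.3 * area + rng.normal(0, 80, n),
    "Noise": rng.normal(0, 1, n),
})
toy["SalePrice"] = 100 * area + rng.normal(0, 20000, n)

# Pairwise Pearson correlations between all numeric columns.
corr = toy.corr()

# Features ordered by absolute correlation with the target.
ranking = corr["SalePrice"].drop("SalePrice").abs().sort_values(ascending=False)
print(ranking)
```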

First Floor vs Basement

Looking at the correlation of features against one another, we also see some logical linear relationships: first floor square footage vs. total basement square footage, total rooms above ground vs. above-ground living area, garage area vs. number-of-car garage, and garage year built vs. property year built are all strongly correlated relationships. These findings are informative, as we later seek to reduce features and the risk of multicollinearity in our feature engineering and feature selection phases of modeling.

The last notable discovery in our exploratory data analysis is the distribution of our target, sale price. There is a clear right-skew in the distribution of sale price with a vast majority of property values in the $100,000 - $200,000 range, but with a long tail stretching upward towards $800,000 (bottom left image).

Using a Box-Cox transformation, we identified that taking the log of sale price transforms the distribution to approximately normal (bottom right image). This transformation strengthened the linear relationship between most of our variables and sale price, which better satisfies the linearity assumption of regression.
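To illustrate the idea, the sketch below draws a synthetic right-skewed price series (not the Ames prices), lets SciPy estimate the Box-Cox lambda, and compares skewness before and after taking the log. A Box-Cox lambda close to 0 corresponds to a log transform.

```python
import numpy as np
import pandas as pd
from scipy import stats

# Synthetic right-skewed prices, used only for illustration.
rng = np.random.default_rng(42)
sale_price = pd.Series(np.exp(rng.normal(12.0, 0.4, 1000)), name="SalePrice")

# Let Box-Cox pick the power; a value near 0 points to a log transform.
_, lam = stats.boxcox(sale_price.to_numpy())

log_price = np.log(sale_price)
skew_before = sale_price.skew()
skew_after = log_price.skew()
print(f"lambda={lam:.2f}, skew before={skew_before:.2f}, after={skew_after:.2f}")
```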

Feature Engineering

We had 79 features, but we felt that such a large number would be detrimental to our model and would run the risk of too much dimensionality. Combining some of the analysis from our exploration with common knowledge about house attributes, we sought to merge certain features and reduce dimensionality. For instance, we had observed strong correlations between many square footage metrics and sale price.

It also makes sense that larger houses, and especially those with large porch/patio spaces and garages, might draw higher sale prices. For that reason, we summed the square footage metrics of total basement, above-ground living area, garage area, open- and closed-porch area, pool area, and deck area into an aggregated total square footage metric.
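As a rough sketch, the aggregation is a row-wise sum over the area columns. The column names below are assumed from the Kaggle data dictionary, and `TotalSF` and `add_total_sf` are hypothetical names chosen for this example.

```python
import pandas as pd

# Area columns assumed to match the description above.
AREA_COLUMNS = [
    "TotalBsmtSF", "GrLivArea", "GarageArea",
    "OpenPorchSF", "EnclosedPorch", "PoolArea", "WoodDeckSF",
]

def add_total_sf(df):
    """Return a copy of df with an aggregated TotalSF column."""
    out = df.copy()
    out["TotalSF"] = out[AREA_COLUMNS].sum(axis=1)
    return out

# Two made-up houses.
toy = pd.DataFrame(
    [[800, 1200, 400, 50, 0, 0, 100], [0, 900, 0, 0, 30, 0, 0]],
    columns=AREA_COLUMNS,
)
result = add_total_sf(toy)
```

Whether to drop the component columns afterwards is a separate modeling choice, so this sketch leaves them in place.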

In the Ames dataset we identified many ordinal features, mostly quality and condition scores, that were recorded with rankings such as “Excellent,” “Good,” “Average,” “Poor,” etc. We made the reasonable assumption that the differences between adjacent scores are roughly equal. Therefore, we used scikit-learn’s ordinal encoder to preserve these rankings while converting them to a numeric data type that a regression can work with. Notably, for many parts of the house the quality score and the condition score often coincided.

Quality and Condition

The overall, exterior, basement, and garage features all had quality and condition scores that tend to mirror one another more often than not. In these cases, we multiplied the respective quality and condition scores, “flattening” them into a combined “quality-condition” score. With the previous two processes we were able to create six new features and remove over twenty of the component features without sacrificing the variance explained by our predictors.

Lastly, there were numerous categorical variables that did not have any ordinal or numerical value. Some examples of such categorical variables were neighborhood of property, proximity to certain notable landmarks or industry (such as the U.S. departments or Iowa State University present in the city), or the building type (e.g. townhouse, one floor residence, etc.).
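The encoding and the combined score can be sketched with scikit-learn's `OrdinalEncoder`. The level codes (`Po` to `Ex`) and the columns `ExterQual` and `ExterCond` are assumed from the Kaggle data dictionary, and `ExterQualCond` is a hypothetical name. Shifting the codes to start at 1 is an assumption of this sketch, made so that a "Poor" rating does not zero out the product.

```python
import pandas as pd
from sklearn.preprocessing import OrdinalEncoder

# Ordered from worst to best so the encoded integers preserve the ranking.
LEVELS = ["Po", "Fa", "TA", "Gd", "Ex"]

# Made-up ratings for three houses.
toy = pd.DataFrame({
    "ExterQual": ["Gd", "TA", "Ex"],
    "ExterCond": ["TA", "TA", "Gd"],
})

# One category list per column being encoded.
encoder = OrdinalEncoder(categories=[LEVELS, LEVELS])
codes = encoder.fit_transform(toy[["ExterQual", "ExterCond"]]) + 1  # 1..5

scores = pd.DataFrame(codes, columns=["ExterQual", "ExterCond"], index=toy.index)

# Combined quality-condition score as the product of the two ratings.
scores["ExterQualCond"] = scores["ExterQual"] * scores["ExterCond"]
```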

We used scikit-learn’s one-hot-encoding method to dummify these features and represent each category’s existence in a separate column from the others with a ‘1’ if present, or a ‘0’ if absent. We note that this process drastically increased our overall dimensions from the original 79 to 250. We will seek to remove or penalize many of these features in our feature selection process.

Feature Selection

With so many features, we run a high risk of multicollinearity, where features with largely similar relationships to the target artificially inflate the variance explained by our model and create a false impression of accuracy in prediction. One example is garage area and number-of-car garage: these two features are directly tied to one another in magnitude and correlation, and would explain virtually the same variance in sale price.

In this case we would seek to remove one and keep the other. Another risk in modeling with so many features is the curse of dimensionality. Using all 250 features with only about 1,400 observations (houses) in our data set would leave the feature space so sparse that our model would be unlikely to generalize to new houses. We tried two different approaches to resolve the issues of multicollinearity and high dimensionality: Akaike Information Criterion (AIC) and Lasso Regression.

AIC = -2ln(L) + 2p

AIC is a useful statistical estimator for model selection in that it balances the trade-off between goodness of fit and simplicity of a model. In the first term of the equation (-2ln(L)), L is the maximized value of the likelihood function for the model. The higher the value of L, the lower our AIC value and the better our model fits. The second term balances against overfitting by applying a positive penalty: 2 multiplied by p, the number of estimated parameters in our model.

Essentially, the larger 2p becomes, the higher our AIC value, which penalizes overly complex models. With these two terms pulling against each other, we seek the lowest AIC value in two ways. Forward AIC starts with an empty model and adds one variable at a time, as long as the gain in likelihood outweighs the added complexity. Backward AIC starts with the full model and removes parameters one at a time, reducing complexity while sacrificing minimal likelihood.

We use AIC as opposed to Bayesian Information Criterion (BIC) as our primary goal is prediction and not descriptive analysis. Backward AIC gave our best results, which makes sense in dimension reduction, as we start with 250 features and reduce to 43 with only marginal loss in the likelihood function. However, backward AIC cannot tell us the ranked importance of our features because it removes variables one by one from the full model.

Forward AIC, though not the optimal result, is very close and provides ranked feature importance as it adds the strongest and next-strongest coefficient from an empty model one by one while eschewing multicollinear variables. We identified through this process that total square footage, neighborhood, overall quality/condition, roof material, and year built are the most important features. Additionally, we reduced our two main risks of multicollinearity and high dimensionality through this process.
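The forward procedure can be sketched with NumPy alone. This is an illustration rather than our actual implementation: it assumes a least-squares model with Gaussian errors, for which -2ln(L) equals n·ln(RSS/n) up to an additive constant, and the function names and synthetic data are hypothetical.

```python
import numpy as np

def gaussian_aic(X, y):
    """AIC = -2 ln(L) + 2p for least squares with Gaussian errors.

    Additive constants that are the same for every model are dropped.
    """
    n = len(y)
    design = np.column_stack([np.ones(n), X])  # intercept plus features
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    rss = float(np.sum((y - design @ beta) ** 2))
    p = design.shape[1]
    return n * np.log(rss / n) + 2 * p

def forward_aic(X, y):
    """Greedy forward selection: add the feature that lowers AIC the most."""
    n_features = X.shape[1]
    selected = []
    best_aic = gaussian_aic(np.empty((len(y), 0)), y)  # intercept-only model
    while len(selected) < n_features:
        candidates = [j for j in range(n_features) if j not in selected]
        scores = {j: gaussian_aic(X[:, selected + [j]], y) for j in candidates}
        best_j = min(scores, key=scores.get)
        if scores[best_j] >= best_aic:
            break  # no remaining feature improves AIC
        selected.append(best_j)
        best_aic = scores[best_j]
    return selected, best_aic

# Synthetic data: only columns 0 and 2 actually drive the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 6))
y = 3.0 * X[:, 0] - 2.0 * X[:, 2] + rng.normal(size=300)
selected, best_aic = forward_aic(X, y)
print(selected)
```

The order in which features enter `selected` is what gives forward selection its ranked view of feature importance.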

LASSO

Another great method for feature selection is Least Absolute Shrinkage and Selection Operator (LASSO) regularized regression. It adds a penalty, weighted by lambda, to the residual sum of squares that regression normally minimizes (the main goal of most regression predictions, shown in the image below), and this penalty shrinks the coefficients of some variables to 0. We tune the parameter lambda so that features explaining more of the variance in sale price keep non-zero coefficients at our minimum RSS, while unimportant features shrink to 0 and are essentially eliminated from the model.

Where AIC kept categorical features combined, a benefit of LASSO is that it utilizes dummified variables and can define even our key distinct categorical features. In this approach, we identified that specifically the Crawford and Stone Brook neighborhoods impact the model the most, along with house condition (normal), typical functionality, and building type.
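A short sketch of LASSO-based selection using scikit-learn's `LassoCV`, which picks the penalty strength by cross-validation (scikit-learn calls lambda `alpha`). The data are synthetic and the five folds are an assumption of this example, not a record of our exact settings.

```python
import numpy as np
from sklearn.linear_model import LassoCV
from sklearn.preprocessing import StandardScaler

# Synthetic data: only columns 0 and 3 actually matter.
rng = np.random.default_rng(1)
X = rng.normal(size=(300, 20))
y = 2.0 * X[:, 0] - 1.5 * X[:, 3] + rng.normal(scale=0.5, size=300)

# Scale first so the penalty treats every feature on the same footing.
X_scaled = StandardScaler().fit_transform(X)

# Cross-validation chooses the penalty strength.
lasso = LassoCV(cv=5, random_state=0).fit(X_scaled, y)

# Features whose coefficients were not shrunk to exactly zero.
kept = np.flatnonzero(lasso.coef_)
# Features ordered by absolute coefficient size, largest first.
order = np.argsort(-np.abs(lasso.coef_))
print(f"alpha={lasso.alpha_:.4f}, kept {len(kept)} of {X.shape[1]} features")
```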

Correlation, AIC, and LASSO all give us complementary, yet different, approaches to ranking feature importance. AIC and LASSO specifically provide a measured way of reducing high dimensionality and multicollinearity in our model, which will be necessary to find our best predictions.

Removing Outliers

We chose to manually remove certain extreme outliers in our dataset to produce a better fit. By plotting each continuous variable against SalePrice, we identified and removed the 16 most extreme outliers, which totaled a little more than 1% of our training dataset. In particular, ID 1299 was found to be an outlier across five separate features.

Model Fitting & Final Model

We dedicated a large amount of resources towards properly tuning our linear regression model. We chose to focus on a simple GLR model to not sacrifice too much interpretability. It was important for us to understand how our features were impacting price, which would be much harder had we included ensemble techniques.

We performed a grid search over numerous hyperparameters and options. We tested various models with both One_Hot_Encoding and Label_Encoding, finding that One_Hot_Encoding outperformed, as expected. It is not reasonable to expect that, even among the categorical variables that imply order, the steps between levels would be so perfectly linear.

We tested numerous scalers on our dataset and found that normalization performed better than standard scaling. Creating a linear regression targeting Log(y) instead of y itself generally improved the performance of our tests. We also performed another independent process of feature selection via Lasso. After testing this new set of features against the features we obtained through AIC, we concluded that the 195 remaining features derived from Lasso were the best set of features we had. These would end up as the features in our final model.

After confirming all these adjustments on our data, we tuned the alpha parameter of a Ridge regression through five-fold cross-validation, resulting in an optimal alpha of 0.5. After training our final model on the full training dataset, it had an intercept of 10.45 on the log scale, which translates to about $34,500 (e^10.45) as a pseudo “baseline price”. Among the 195 coefficients, the ones that carried the most weight were GrLivArea and OverallQual. Such findings are aligned with expectations, as GrLivArea can be seen as a proxy for size and OverallQual can be seen as a “product rating” for the house.
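The tuning step can be sketched with scikit-learn's `GridSearchCV`. The data below are synthetic, the alpha grid is hypothetical, and `MinMaxScaler` is an assumption standing in for the "normalization" mentioned above. The last line simply checks the arithmetic behind the baseline price.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler

# Synthetic features and a log-scale target, for illustration only.
rng = np.random.default_rng(7)
X = rng.uniform(0, 1, size=(200, 5))
log_price = 10.45 + 1.2 * X[:, 0] + 0.8 * X[:, 1] + rng.normal(scale=0.1, size=200)

# Scaling lives inside the pipeline so each fold is scaled on its own training part.
pipeline = make_pipeline(MinMaxScaler(), Ridge())
param_grid = {"ridge__alpha": [0.01, 0.1, 0.5, 1.0, 5.0, 10.0]}  # hypothetical grid
search = GridSearchCV(pipeline, param_grid, cv=5, scoring="neg_mean_squared_error")
search.fit(X, log_price)

best_alpha = search.best_params_["ridge__alpha"]
intercept_log = search.best_estimator_.named_steps["ridge"].intercept_

# The model predicts log(price), so the intercept is exponentiated to read it in dollars.
baseline_price = float(np.exp(intercept_log))

# Arithmetic check for the intercept reported in the text: e^10.45 is roughly 34,500.
reported_baseline = float(np.exp(10.45))
```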

Obstacles

There were numerous technical challenges during this project that ultimately turned into excellent learning opportunities. We validated the flaw of imposing ordinality upon categorical values through testing. We experienced the difficulty of identifying outliers and tested the impact of their removal as a way of determining whether these data points should be removed. We also made some mistakes early on in interpreting the MSE due to the fact that our Predicted_Y was in the form of Log(SalePrice) instead of the sale price itself.
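The MSE pitfall is easy to reproduce. In this small sketch with made-up prices, the same predictions give an error of a few hundredths on the log scale but thousands of dollars once they are transformed back with `exp`.

```python
import numpy as np

def rmse(actual, predicted):
    """Root mean squared error between two arrays."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return float(np.sqrt(np.mean((actual - predicted) ** 2)))

# Made-up sale prices and model output; the model predicts log(SalePrice).
actual_price = np.array([150000.0, 200000.0, 320000.0])
predicted_log = np.log(np.array([160000.0, 190000.0, 300000.0]))

# Error measured on the scale the model was trained on.
rmse_log = rmse(np.log(actual_price), predicted_log)

# Error measured in dollars after undoing the log transform.
rmse_dollars = rmse(actual_price, np.exp(predicted_log))

print(f"log-scale RMSE: {rmse_log:.4f}, dollar RMSE: {rmse_dollars:,.0f}")
```

Reading the log-scale figure as if it were in dollars, or comparing the two directly, is the kind of mistake described above.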

We were also beset with a couple of overarching difficulties that seemed more macro in nature. Our entire team originally lacked domain expertise in real estate, construction, and development. While we did not see it as a significant detriment, we did acknowledge the added value industry expertise would have brought. Perhaps we would have been more clever with feature engineering or been able to draw greater insights from the coefficient values.

The training dataset had a very low sample size to feature count ratio. The training dataset was under 1500 house sales but started with nearly 80 features. After dummifying the categorical variables and dropping all irrelevant features according to Lasso, we were left with 195 features. This is well over 10% of the total data points we have! The curse of dimensionality was definitely present throughout this project.

Future Work

Regarding further work on this project, we would like to try tree models or use ensemble techniques for a more prediction-focused approach. Comparing this dataset with datasets of other housing markets around the country could reveal interesting parallels as well as differences. A point of particular interest is which features would remain if we performed the same process that we implemented here on a dataset from a different region. Perhaps the number of bathrooms matters more in Los Angeles than it does in Ames.

Thank you for reading! If you'd like to check out our work on GitHub, click here.