Indeed Web Scraping: Data Science Job Market Outlook

The moment I heard the second project would involve web scraping, I knew which website I wanted to use for my first spider crawl: Indeed.com. As one of the most popular websites for job seekers, with detailed requirements for each position and new postings every few seconds, it seemed like a great choice for researching the data scientist job market.

The Scraping Process:

The tool I used for this project is Scrapy, and the search keyword was "Data Science". I ran into the following issues while building my spider:

First, the sponsored job postings are often duplicated. Indeed.com shows 6 sponsored postings on every page, and the same ones reappear at random across pages. Luckily, they are linked with a different URL style from the regular postings, so I built my spider to collect only the 10 regular postings on each page and avoid duplicates.

Second, the limit of 100 pages per search posed a challenge. Although Indeed showed over 120K results for my search, I could only reach 100 pages (around 1K results). To get as many postings as possible, I ran the search separately for 14 top hiring cities, with around 1K postings per city. Although this is only about 11% of the total postings, it should be a reasonable sample of the whole posting population.
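The two workarounds above can be sketched in plain Python, outside of Scrapy. This is only an illustration: the query parameter names (`q`, `l`, `start`), the step of 10 results per page, and the `/rc/clk` link prefix are assumptions made for the example, not details taken from my spider.

```python
from urllib.parse import urlencode

# Assumptions for illustration only: the parameter names, the page step,
# and the link prefix below are hypothetical, not from the original spider.
BASE_URL = "https://www.indeed.com/jobs"
RESULTS_PER_PAGE = 10
MAX_PAGES = 100
REGULAR_LINK_PREFIX = "/rc/clk"  # hypothetical URL style of regular postings


def search_urls(query, cities, max_pages=MAX_PAGES):
    """Yield one search URL per (city, page) pair."""
    for city in cities:
        for page in range(max_pages):
            params = {"q": query, "l": city, "start": page * RESULTS_PER_PAGE}
            yield f"{BASE_URL}?{urlencode(params)}"


def regular_postings(hrefs):
    """Keep links in the regular style only, dropping sponsored links and repeats."""
    seen = set()
    kept = []
    for href in hrefs:
        if href.startswith(REGULAR_LINK_PREFIX) and href not in seen:
            seen.add(href)
            kept.append(href)
    return kept
```

Searching city by city multiplies the reachable results: 14 cities at roughly 1K postings each gives about 14K postings, compared with about 1K from a single search.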

Findings

Tech companies are the obvious top employers by number of job postings. Amazon.com, Google, Facebook, and Microsoft made up about 1% of my total data set. I will explore their specific requirements later.

To extract information from each job posting, I built a list of keywords as regular expressions and checked whether each one appears in a given job description. For these keywords, I also included abbreviations (Artificial Intelligence and AI) and plural forms (Decision Tree and Decision Trees). Below are my findings:

The most popular programming tool is Python, followed closely by SQL. AWS (Amazon Web Services) ranks 6th here, probably because Amazon is the top hiring company in this data set. Excel still appears to be widely used by companies for basic data manipulation. Big data tools like Hadoop and Spark are also in high demand.
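The keyword matching behind these counts might look something like the sketch below. The keyword list is a small illustrative subset, and the pattern construction (whole-word, case-insensitive matching) is an assumption for the example rather than the exact regexes I used.

```python
import re
from collections import Counter

# Illustrative subset: each label maps to the variants (abbreviation,
# plural) that should count as a mention of that keyword.
KEYWORDS = {
    "Artificial Intelligence": [r"artificial intelligence", r"AI"],
    "Decision Tree": [r"decision trees?"],
    "Python": [r"python"],
    "SQL": [r"SQL"],
}


def compile_patterns(keywords):
    """Build one case-insensitive, whole-word pattern per keyword label."""
    return {
        label: re.compile(r"\b(?:" + "|".join(variants) + r")\b", re.IGNORECASE)
        for label, variants in keywords.items()
    }


def keywords_in(description, patterns):
    """Return the set of labels mentioned at least once in a description."""
    return {label for label, p in patterns.items() if p.search(description)}


def mention_counts(descriptions, patterns):
    """Count, for each label, how many descriptions mention it."""
    counts = Counter()
    for description in descriptions:
        counts.update(keywords_in(description, patterns))
    return counts
```

Each posting counts at most once per keyword, so a description that repeats "Python" ten times does not inflate the ranking. The word boundaries keep "SQL" from matching inside "MySQL" and "AI" from matching inside "email".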

Below is a bar graph of the most popular skill sets. Machine learning is the most mentioned buzzword. This is not surprising, since machine learning is the umbrella term that covers most data science topics. Visualization is also mentioned quite often, which shows that, in addition to modeling, the ability to communicate findings to an audience is valued.

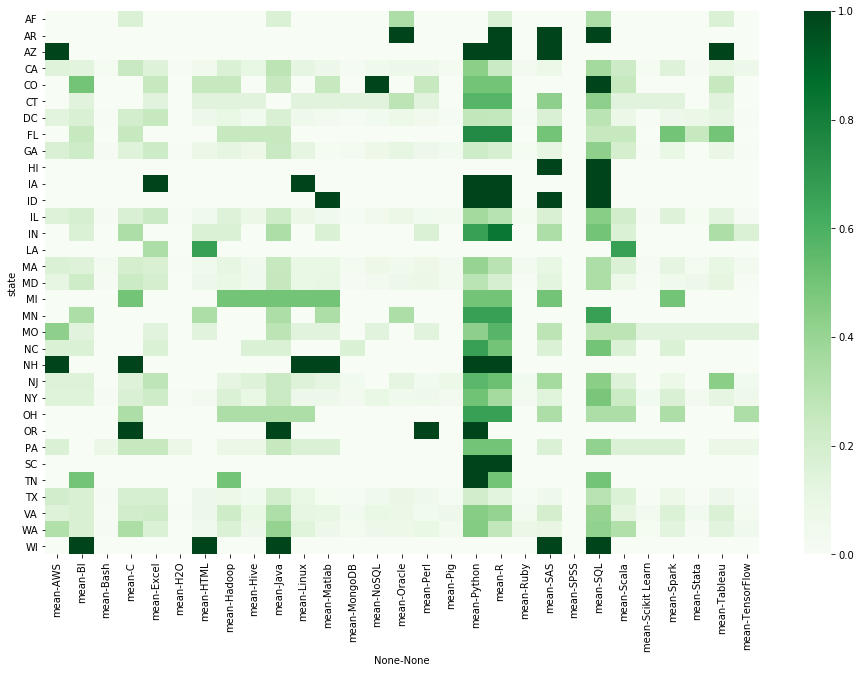

The two graphs below show the popularity rank of toolkits and skill sets within each state. States like NY, CA, and WA show more diversified needs, while some states show more particular needs for specific programming languages and skill sets.

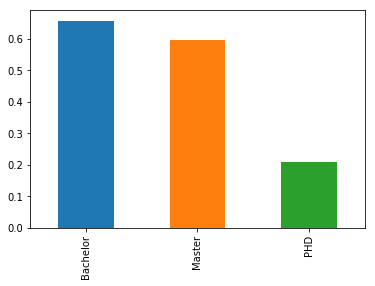
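A per-state ranking like the one above can be computed with a counter per state. The sketch below assumes each posting has already been reduced to a hypothetical `(state, keywords)` pair; that input shape is chosen for the example and is not necessarily how my data was stored.

```python
from collections import Counter, defaultdict


def rank_by_state(postings):
    """Map each state to its keywords, ordered from most to least mentioned.

    `postings` is an iterable of (state, keywords) pairs. Ties are broken
    alphabetically so the result is deterministic.
    """
    counts = defaultdict(Counter)
    for state, keywords in postings:
        # set() ensures a posting counts once per keyword.
        counts[state].update(set(keywords))
    return {
        state: sorted(counter, key=lambda kw: (-counter[kw], kw))
        for state, counter in counts.items()
    }
```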

Do degrees matter when looking for a data scientist job? The answer is yes. Sixty percent of the job postings require a Bachelor's or Master's degree. Twenty percent of them require a Ph.D.

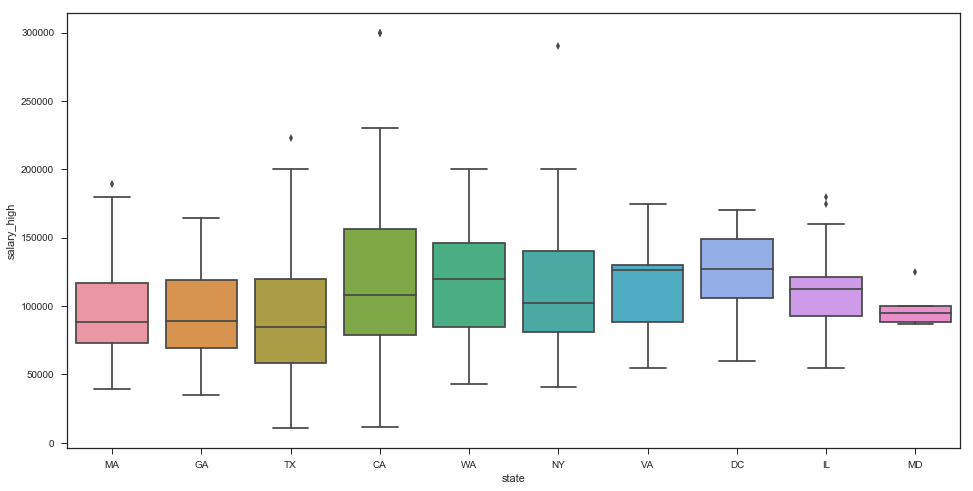

How much are data scientists paid? The box plot below is based on about 500 job postings that included a salary range. I extracted any salary figure with more than 5 digits (which indicates a yearly salary). This is a very small portion of my dataset, but it should give us a rough idea.
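A digit-count filter of this kind could be sketched as follows. The regex is an assumption about how salaries are written in postings, and the default cutoff of five digits is also an assumption (it treats figures such as 95,000 as yearly); the threshold is a parameter so it can be adjusted.

```python
import re

# Matches dollar amounts such as "$95,000" or "$120000". This pattern is an
# assumption for illustration, not the regex used in the original project.
SALARY_PATTERN = re.compile(r"\$\s?(\d{1,3}(?:,\d{3})+|\d+)")


def yearly_salaries(text, min_digits=5):
    """Return dollar amounts with at least `min_digits` digits as integers."""
    amounts = []
    for match in SALARY_PATTERN.finditer(text):
        digits = match.group(1).replace(",", "")
        if len(digits) >= min_digits:
            amounts.append(int(digits))
    return amounts
```

Hourly or weekly figures such as "$45 an hour" or "$1,500 a week" fall below the cutoff and are skipped, which is the point of filtering by digit count.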

Knowing that tech companies are the top employers, I wanted to find out more about their specific job requirements. I exported all job descriptions from Apple, Amazon, Microsoft, Google, and Facebook, and used RStudio to build a comparison word cloud. After removing a lot of stop words, the key words started to rise to the surface. This is my favorite graph so far, since I learned so much about each company just by googling the buzzwords in its word cloud.

Below is a commonality cloud for the same 5 companies. Most of the popular words from the bar graphs appear here again, including machine learning, Python, SQL, and degrees.

Topic Modeling using Gensim and Visualization with pyLDAvis

LDAvis maps topic similarity by calculating a semantic distance between topics. Finding the right parameters for LDA is an art in itself. For this project, I mostly used the default parameters and trained 2 models, one with 10 topics and one with 20. Below is a 2D illustration from LDAvis for the model with 20 topics. Browsing through the topics already yields some interesting readings.

Topic 1 is about marketing. Topic 8 is about company culture. Topic 10 is about internships (actually a big portion of my dataset), and a lot of company names show up in its word ranking. Topic 16 is the biggest topic; it includes most of the programming tools and skill sets related to data science jobs.

The model here is more for exploration and practice. A lot of refinement still needs to be applied, such as lemmatizing tokens, computing bigrams (so that "machine learning" is counted as one word), and optimizing the number of topics.
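As an idea of what the bigram step involves, here is a simplified pure-Python sketch. It joins adjacent token pairs that occur at least `min_count` times, which is a cruder rule than the collocation scoring in Gensim's `Phrases` model; the function name and threshold are hypothetical.

```python
from collections import Counter


def merge_frequent_bigrams(tokenized_docs, min_count=2):
    """Join adjacent token pairs seen at least `min_count` times into one token."""
    pair_counts = Counter(
        pair for doc in tokenized_docs for pair in zip(doc, doc[1:])
    )
    frequent = {pair for pair, n in pair_counts.items() if n >= min_count}

    merged_docs = []
    for doc in tokenized_docs:
        merged, i = [], 0
        while i < len(doc):
            if i + 1 < len(doc) and (doc[i], doc[i + 1]) in frequent:
                # Merge the pair and skip past both tokens.
                merged.append(doc[i] + "_" + doc[i + 1])
                i += 2
            else:
                merged.append(doc[i])
                i += 1
        merged_docs.append(merged)
    return merged_docs
```

After this step, "machine" followed by "learning" becomes the single token `machine_learning`, so the topic model treats it as one word.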