Predicting House Sale Prices - Ames, IA

Introduction

Over the last two years, house sale prices have skyrocketed, and home buyers are paying more than the asking price to grab any available home in this real estate frenzy. A CNN Business article states that the National Association of Realtors said in a report on Wednesday, June 22, 2022, that the median home price in May topped $400,000 for the first time, hitting a record of $407,600. That is up nearly 15% from a year earlier. In such a market, a tool that predicts a house's sale price from its features could prove handy. It could help realtors and mortgage lenders price homes more precisely in a cut-throat sales market.

The objective of this project is to create a machine learning model that can help realtors and mortgage lenders determine a house’s sale price. I wanted the model to use existing information about the home, that is, features such as the number of bedrooms and bathrooms, basement size, and garage size or capacity, to predict the sale price. Through this research, I also made some recommendations on ways that may help homeowners get a higher sale price. Several models were developed, and some were shortlisted to create an optimal price determination tool.

Factors affecting House Sales Price:

Any house's sale price depends upon some universal factors that make the house more desirable among the pool of potential buyers. Some of these factors include:

- Location

- Usable Space

- House Condition (external and internal)

- Remodeling

- The number of bedrooms/bathrooms

For this project, I decided to focus only on the properties of the house and how they influence the sale price. According to an article by Opendoor, a home’s usable space (excluding garages, attics, and unfinished basements) matters when determining its value. Similarly, homes that are newer, possibly remodeled, and in good condition appraise at a higher value.

Dataset Introduction

The house sales data used in this project comes from a Kaggle dataset, House Prices - Advanced Regression Techniques. This dataset includes sale price information for several homes in Ames, Iowa. Along with the sale price, the dataset provides 79 explanatory variables describing (almost) every aspect of the residential homes. Some of these variables give information on lot frontage and shape, neighborhood, house condition and quality, year remodeled, roof material, etc. The aim here is to use these descriptors to create a model that best predicts house prices.

House Sales Price Analysis

In my quest to figure out what factors influence a house's sale price, I first looked at the distribution of all sale prices. The sale prices were right-skewed, so I applied a log transformation to obtain a more Gaussian-like distribution. This step helps the linear models come closer to satisfying the linearity assumption. I then conducted exploratory data analysis to examine relationships between the dataset variables and the sale price.
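As a rough illustration of the log transformation described above, the sketch below uses synthetic right-skewed prices in place of the real SalePrice column. The use of `np.log1p` (rather than a plain logarithm) is an assumption; the post does not say which variant was used.

```python
import numpy as np
import pandas as pd

# Synthetic right-skewed prices standing in for the SalePrice column (assumption).
rng = np.random.default_rng(0)
sale_price = pd.Series(rng.lognormal(mean=12.0, sigma=0.4, size=1000), name="SalePrice")

# log1p computes log(1 + x); expm1 inverts it to recover prices in dollars.
log_price = np.log1p(sale_price)

skew_before = sale_price.skew()
skew_after = log_price.skew()
print(f"skewness before: {skew_before:.2f}, after: {skew_after:.2f}")

# Predictions made on the log scale can be mapped back the same way.
recovered = np.expm1(log_price)
```

A skewness close to zero after the transform suggests the distribution is closer to symmetric, which is what the histogram comparison is meant to show.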

These graphs show a clear relationship between some of the numerical and categorical features and the sale price. The larger the first-floor area and the total above-ground living area, the higher the sale price tends to be. The relationship between the sale price and some of the categorical features is also informative. From the boxplots below, we can see that houses with higher basement quality and overall quality ratings tend to command higher sale prices.

Data Preprocessing

Before going into more detail on the modeling, let us first discuss how the data was preprocessed, which is a crucial step in machine learning (several model types are sensitive to outliers). Upon initial evaluation, I noticed that some of the variables had many missing values, which were imputed according to their meaning. For example, the Pool QC and Fence categorical features had many missing values, most likely because those houses did not have a pool or a fence. In such cases, the values were imputed as "No Pool" and "No Fence". Missing values in numerical area-related features were set to zero.
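A minimal sketch of this imputation with pandas is given below. The rows are made up, and the choice of `GarageArea` and `MasVnrArea` as the area columns is an assumption; the post does not list which area features were zero-filled.

```python
import numpy as np
import pandas as pd

# Toy rows; column names follow the Kaggle dataset, values are made up.
df = pd.DataFrame({
    "PoolQC": ["Gd", np.nan, np.nan],
    "Fence": [np.nan, "MnPrv", np.nan],
    "GarageArea": [480.0, np.nan, 250.0],
    "MasVnrArea": [np.nan, 120.0, 0.0],
})

# A missing category here means the feature is absent, so give it an explicit label.
df = df.fillna({"PoolQC": "No Pool", "Fence": "No Fence"})

# A missing area means there is nothing to measure, so use zero.
area_cols = ["GarageArea", "MasVnrArea"]  # hypothetical selection of area columns
df[area_cols] = df[area_cols].fillna(0)
```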

I also removed extreme outliers (which can distort model predictions) from some of the features, such as a Utilities category that appeared only once. In addition, I changed the data type of some numerical columns holding year- and month-related information to categorical.
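The data type change can be sketched as follows. Which columns were converted is not stated in the post, so `YrSold` and `MoSold` are used here purely as an assumption.

```python
import pandas as pd

# Toy frame; YrSold and MoSold are assumed examples of year/month columns.
df = pd.DataFrame({
    "YrSold": [2008, 2009, 2008],
    "MoSold": [5, 12, 5],
    "LotArea": [8450, 9600, 11250],
})

# Treat calendar values as labels rather than quantities.
for col in ["YrSold", "MoSold"]:
    df[col] = df[col].astype(str).astype("category")

print(df.dtypes)
```

Treating the month as a category avoids implying that December (12) is "more" than May (5) in any numerical sense.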

Feature Engineering

After the basic data preprocessing, I also created some numerical and categorical features and combined some categories into one for some categorical features.

- New Numerical Features:

  - sold_age = Age of the house when it was sold.

  - Usable_space = Sum of basement, above ground, first and second floor areas.

  - yr_since_remod = Years since the remodeling was done.

  - Total_Halfbaths = Sum of all half baths.

  - Total_Fullbaths = Sum of all full baths.

  - Encl_Porch_tot = Sum of all enclosed porch areas.

  - BsmtFinSF = Sum of Type 1 and Type 2 finished basement area.

- New Categorical Features:

  - remod_y_n: Whether the house was remodeled or not.

- Combined values in categorical features:

  - LandContour: replacing values other than Lvl with Notlvl.

  - Heating: replacing values other than GasA with Heat_other.

  - Electrical: replacing values other than SBrkr with Electr_other.

  - PavedDrive: replacing values other than Y with NP.
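A few of the features listed above can be sketched with pandas as follows. The exact formulas are assumptions: ages are measured relative to the sale year, and a house is treated as remodeled when its remodel year differs from its build year. The rows are made up.

```python
import pandas as pd

# Toy rows using Kaggle column names; values are invented for illustration.
df = pd.DataFrame({
    "YrSold": [2008, 2010],
    "YearBuilt": [2003, 1950],
    "YearRemodAdd": [2003, 1995],
    "BsmtFinSF1": [706, 0],
    "BsmtFinSF2": [0, 120],
    "LandContour": ["Lvl", "HLS"],
    "Heating": ["GasA", "Wall"],
})

# New numerical features (assumed definitions).
df["sold_age"] = df["YrSold"] - df["YearBuilt"]
df["yr_since_remod"] = df["YrSold"] - df["YearRemodAdd"]
df["BsmtFinSF"] = df["BsmtFinSF1"] + df["BsmtFinSF2"]

# New categorical feature: assumed remodeled when the two years differ.
df["remod_y_n"] = (df["YearRemodAdd"] != df["YearBuilt"]).map({True: "Y", False: "N"})

# Collapse every category except the dominant one into a single label.
df["LandContour"] = df["LandContour"].where(df["LandContour"] == "Lvl", "Notlvl")
df["Heating"] = df["Heating"].where(df["Heating"] == "GasA", "Heat_other")
```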

House Sales Price Analysis (New Features):

After creating the new features, I studied how some of them relate to the sale price. One clear relationship was between sale price and usable space: houses with more usable space tended to sell for much more than houses with less.

Similarly, I looked at how the sale price related to whether the house was remodeled and to the years since it was remodeled. Homes that were remodeled more recently had a higher median sale price than those remodeled 60 years ago. In the boxplot below, we can see that although the average sale price is lower for remodeled houses, some remodeled houses commanded higher sale prices than houses that were not remodeled.

Data Modeling

Next, we will look at the regression models attempted for this project, which include both linear and tree-based models. All the data was log-transformed, and numerical features were standardized. Models were trained and evaluated on a 70:30 train-test split.
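The split and standardization can be sketched with scikit-learn as below, using synthetic data. Fitting the scaler on the training portion only is a common practice to avoid leakage and is an assumption here, as is log-transforming only the target in this toy example.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic stand-ins for numerical features and sale prices (assumption).
rng = np.random.default_rng(42)
X = rng.normal(loc=1500, scale=400, size=(200, 3))
y = np.log1p(rng.lognormal(mean=12, sigma=0.4, size=200))

# 70:30 train-test split.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.30, random_state=42
)

# Learn the mean and standard deviation from the training data only,
# then apply the same transformation to both sets.
scaler = StandardScaler().fit(X_train)
X_train_std = scaler.transform(X_train)
X_test_std = scaler.transform(X_test)
```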

Linear Models:

For the linear models, categorical features were either label encoded or dummified (see below), depending on the type of feature. Variables with high multicollinearity (based on VIF values), for example Roof Style and Garage Type, were removed. The types of linear models attempted are listed below:
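For readers unfamiliar with VIF, the sketch below computes it directly from its definition, VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing feature j on the remaining features. The helper `vif` and the synthetic columns are hypothetical, and the post does not state which VIF cutoff was applied (5 or 10 are common rules of thumb).

```python
import numpy as np

def vif(X):
    """Return the variance inflation factor for each column of X."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    out = np.empty(p)
    for j in range(p):
        target = X[:, j]
        # Regress column j on all other columns plus an intercept.
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2 = 1.0 - resid.var() / target.var()
        out[j] = 1.0 / (1.0 - r2)
    return out

rng = np.random.default_rng(0)
a = rng.normal(size=500)
b = rng.normal(size=500)
c = a + 0.1 * rng.normal(size=500)  # nearly duplicates a
vifs = vif(np.column_stack([a, b, c]))
print(vifs)
```

Columns `a` and `c` carry almost the same information, so both receive a large VIF, while the independent column `b` stays close to 1.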

- Multiple Linear Regression (MLR)

- Ridge and Lasso Regression

- Support Vector Regression

Multiple Linear Regression (MLR):

For the final MLR model, independent features were selected via Lasso regression (the hyperparameter alpha was chosen by grid search cross-validation). The list of selected features (Lasso alpha=0.01) is shown below.
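The selection step described above might look roughly like the following sketch on synthetic data. The alpha grid, the scoring metric, and the number of folds are all assumptions; only the general approach (grid search over alpha, keep features with non-zero coefficients, refit an ordinary linear regression) follows the text.

```python
import numpy as np
from sklearn.linear_model import Lasso, LinearRegression
from sklearn.model_selection import GridSearchCV

# Synthetic data: only the first two columns drive the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 6))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.1, size=300)

# Choose alpha by cross-validated grid search (grid values are assumptions).
search = GridSearchCV(
    Lasso(max_iter=10_000),
    param_grid={"alpha": [0.001, 0.01, 0.1, 1.0]},
    scoring="neg_root_mean_squared_error",
    cv=5,
)
search.fit(X, y)

# Features whose Lasso coefficient is non-zero are kept.
best_lasso = search.best_estimator_
selected = np.flatnonzero(best_lasso.coef_)

# Refit a plain multiple linear regression on the selected columns.
mlr = LinearRegression().fit(X[:, selected], y)
print("best alpha:", search.best_params_["alpha"], "selected columns:", selected)
```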

Looking at the residuals and prediction error plots for this model, we see that the residuals are fairly random and uniformly distributed, and there is decent linear agreement between the actual and predicted house sale prices.

Penalized Regression (PLR):

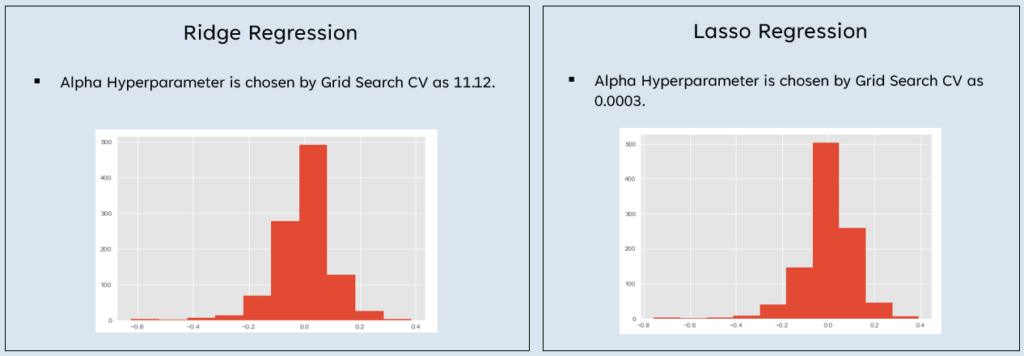

The results for the Ridge and Lasso PLR models are shown below. The only change to note is that, for the Lasso model, the data was normalized beforehand.

For both models, the residuals are uniformly distributed (with lower variation for the Ridge model), and there is good agreement between the actual and predicted sale prices. The accuracies of the Ridge and Lasso regression models (90.6% and 90%, respectively) are decent and an improvement over the MLR model's accuracy (87.6%).

Support Vector Regression Model (SVR):

For the final SVR model, the basic preprocessing steps were the same as for the other linear models, and some features with high multicollinearity were dropped. Additional work went into selecting the model hyperparameters. Two of them were determined by plotting R2 and errors for the train and test data: C, which controls regularization (the strength of the regularization is inversely proportional to C), and epsilon, which defines the epsilon-tube within which the training loss assigns no penalty to points predicted within a distance epsilon of the actual value. The other hyperparameters (the kernel and the kernel coefficient gamma) were selected by Grid Search CV.
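To make the role of epsilon concrete, the sketch below first writes out the epsilon-insensitive loss and then runs a small grid search over kernel and gamma with C and epsilon held fixed. The helper `epsilon_insensitive_loss`, the data, the fixed values of C and epsilon, and the grid are all hypothetical, chosen only for illustration.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.svm import SVR

def epsilon_insensitive_loss(y_true, y_pred, epsilon):
    """Errors smaller than epsilon cost nothing; larger ones cost the excess."""
    return np.maximum(0.0, np.abs(y_true - y_pred) - epsilon)

# The first prediction falls inside the tube, the second falls outside it.
losses = epsilon_insensitive_loss(np.array([1.0, 1.0]), np.array([1.05, 1.5]), 0.1)

# Synthetic, roughly linear data (assumption).
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 2))
y = X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.05, size=200)

# C and epsilon are fixed up front; kernel and gamma are searched.
svr_search = GridSearchCV(
    SVR(C=1.0, epsilon=0.05),
    param_grid={"kernel": ["linear", "rbf"], "gamma": ["scale", 0.01, 0.1]},
    scoring="r2",
    cv=5,
)
svr_search.fit(X, y)
print(svr_search.best_params_, round(svr_search.best_score_, 3))
```

Note that gamma has no effect when the linear kernel is selected; it is included in the grid only because it matters for the RBF kernel.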

In terms of uniform residual distribution, agreement between actual and predicted sale prices, and accuracy, the SVR model seems to fare much better than its linear counterparts.

Tree-based models

For the tree-based models, categorical features were label-encoded only, and features with high multicollinearity were not dropped. The model hyperparameters, which are listed below, were chosen by Grid Search CV. The types of tree-based models attempted are listed below:

- Random Forest

- XGBoost

Random Forest (RF) model:

For the final Random Forest model, the residual and prediction error plots provide us with impressive results. This model is an improvement over the linear models; however, there is a noticeable gap between the training and testing accuracy, indicating overfitting.

Next, I also looked at the feature importances as provided by the final Random Forest Model to gain more information on which features were contributing to the results.
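Both the train/test gap and the feature importances can be read off a fitted scikit-learn random forest, as in this sketch on synthetic data. The feature names and hyperparameters are placeholders, not the values used for the final model.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import train_test_split

# Synthetic data where only the first feature matters (assumption).
rng = np.random.default_rng(0)
X = rng.normal(size=(400, 4))
y = 2.0 * X[:, 0] + rng.normal(scale=0.5, size=400)
names = ["Usable_space", "noise_1", "noise_2", "noise_3"]  # placeholder names

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.30, random_state=0)
rf = RandomForestRegressor(n_estimators=100, random_state=0).fit(X_tr, y_tr)

# A training score well above the test score is a sign of overfitting.
train_r2 = rf.score(X_tr, y_tr)
test_r2 = rf.score(X_te, y_te)

# Importances sum to 1; sort them to see which features dominate.
ranked = sorted(zip(names, rf.feature_importances_), key=lambda t: t[1], reverse=True)
for name, importance in ranked:
    print(f"{name}: {importance:.3f}")
```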

XGBoost Model:

The last model type that I attempted was XGBoost. I ran two versions of this model: one used all features, and the other used features selected by scikit-learn's Recursive Feature Elimination (RFE). RFE is a feature selection algorithm that repeatedly eliminates the least important features to arrive at the set the model will use.

As per scikit-learn's documentation, the estimator is first trained on the initial set of features, and the importance of each feature is obtained either through a specific attribute or through a callable. Then, the least important features are pruned from the current set of features. That procedure is recursively repeated on the pruned set until the desired number of features to select is eventually reached.
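The procedure can be sketched with scikit-learn's `RFE` class. To keep the example dependent on scikit-learn alone, `GradientBoostingRegressor` is swapped in for XGBoost here; the data, the number of features to keep, and the estimator settings are assumptions.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.feature_selection import RFE

# Synthetic data: only columns 0 and 3 influence the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 8))
y = 4.0 * X[:, 0] + 3.0 * X[:, 3] + rng.normal(scale=0.1, size=300)

# Stand-in estimator (not XGBoost); it exposes feature_importances_,
# which RFE uses to decide what to prune.
selector = RFE(
    estimator=GradientBoostingRegressor(n_estimators=50, random_state=0),
    n_features_to_select=2,
    step=1,  # drop one feature per round
)
selector.fit(X, y)

kept = np.flatnonzero(selector.support_)
print("kept columns:", kept)
print("ranking (1 = kept):", selector.ranking_)
```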

Lastly, the performance of the two model versions was compared. For both, the hyperparameter max_depth (the maximum depth of a tree) was determined by plotting R2 and errors for the train and test data. A value for eta (the step size shrinkage used in each update to prevent overfitting) was selected to optimize performance. Other hyperparameters were selected by Grid Search CV.

The results for the XGBoost model with RFE-selected features are displayed below. The first graph compares the predicted and actual house sale prices; for the test dataset, the two are in decent agreement.

The second graph shows the feature importances reported by the XGBoost model.

Final model evaluation metrics:

The table below shows train and test data accuracy scores and errors for all the models attempted, including different versions for each. For all the model types, the final model had the RFE selected features, except for the XGBoost model for which the two different versions were run.

Based on accuracy and RMSE (Root Mean Squared Error) scores, the SVR, XGBoost, and XGBoost (RFE) models were shortlisted for the house sale price determination tool. These models were chosen because they did not overfit and had relatively low error scores.
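For reference, the two kinds of metrics can be computed as in the sketch below. It assumes the accuracy figures reported in this post are R2 scores, which the text does not state explicitly, and it uses made-up log-scale prices.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error: typical size of a prediction error."""
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r2_score(y_true, y_pred):
    """Share of the variance in y_true explained by the predictions."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return float(1.0 - ss_res / ss_tot)

# Made-up log-scale sale prices and predictions.
y_true = np.array([12.0, 12.5, 11.8, 12.2])
y_pred = np.array([12.1, 12.4, 11.9, 12.1])

model_rmse = rmse(y_true, y_pred)
model_r2 = r2_score(y_true, y_pred)
print(f"RMSE: {model_rmse:.3f}, R2: {model_r2:.3f}")
```

Computing both metrics on the training and test sets and comparing them is one simple way to spot the overfitting mentioned above.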

Conclusion

If we look at the evidence provided by exploratory data analysis, we can see that overall house quality and the usable space inside the house matter when determining the final sale price. Some other important characteristics that emerged while studying the data modeling results are listed below:

- Overall condition that the house is in.

- Finished Basement area (in square feet).

- Total above ground living area (in square feet).

- Whether the house has been remodeled or not.

- Years since the house has been remodeled.

Based on what I have uncovered through the research in this project, the few things that I can recommend realtors and mortgage lenders do to possibly get a higher sale price are as follows:

- Make sure the house on sale has more usable living space.

- Realtors and mortgage lenders can check whether the house, basement, and garage are in good condition and whether their evaluation ratings are high. If not, steps should be taken to correct the issue.

- If the house basement is unfinished, recommend the seller consider getting it finished.

- Recommend that the seller renovate the house if it has not been renovated in a long time.

Future Work

Given more time with this data, I would like to go back and do a more thorough data analysis and see whether the models themselves can provide insight not previously uncovered.

References:

- La Monica, P. R., 2022. Could a housing slump threaten the stock market and the entire economy? [online] CNN. Available at: <https://www.cnn.com/2022/06/22/investing/premarket-stocks-trading/index.html> [Accessed 27 June 2022].

- Gomez, J., 2022. 8 critical factors that influence a home's value | Opendoor. [online] Opendoor. Available at: <https://www.opendoor.com/w/blog/factors-that-influence-home-value> [Accessed 27 June 2022].

- Kaggle.com. 2022. House Prices - Advanced Regression Techniques | Kaggle. [online] Available at: <https://www.kaggle.com/c/house-prices-advanced-regression-techniques> [Accessed 27 June 2022].

- scikit-learn. 2022. sklearn.feature_selection.RFE. [online] Available at: <https://scikit-learn.org/stable/modules/generated/sklearn.feature_selection.RFE.html> [Accessed 27 June 2022].