Mass Shootings in America

Contributed by Paul Grech and Chris Neimeth. They attended the NYC Data Science Academy 12-week full-time Data Science Bootcamp from Sept 23 to Dec 18, 2015. This post is based on their second class project (due in the 4th week of the program).

Project By: Paul Grech & Chris Neimeth

Understanding Mass Shootings in America

Few topics are as emotionally charged as gun control. The horror of mass shootings seems to ring in our ears as an everyday occurrence; America has 5% of the world’s population, yet 31% of its mass shooters. Sadly, resolution of mass shootings and alignment on gun control is at a partisan deadlock. Discussions are emotionally charged and avoid facts. To encourage people to find common ground between civil liberty and the social contract, we developed an application to explore relevant data. We also included links to national gun control forums in the hope of creating meaningful dialogue.

The Data

Data was pulled from two separate sources and merged depending on the analysis.

The first dataset is from Stanford University and is a collection of 218 events between 1966 and 2015. This data was collected by faculty and staff along with students beginning in 2012.

The second dataset is the crowd-sourced Mass Shooting Tracker, which began on Reddit at the beginning of 2014. It consists of 995 events from the start of 2013 to the present.

Merging the two datasets was somewhat challenging given their different reporting techniques and definitions of mass shooting.

Data Import & Preprocessing

Data munging was relatively straightforward. As the code below shows, the data was imported, cleaned and labeled to standardize various features used in the layout of the application.

    ##### DATA IMPORT
    Import2015 <- read.csv("Data/2015CURRENT.csv", header=TRUE)
    Import2014 <- read.csv("Data/2014MASTER.csv", header=TRUE)
    Import2013 <- read.csv("Data/2013MASTER.csv", header=TRUE)
    Import1966 <- read.csv("Data/stanfordnew.csv", header = TRUE)

    ##### DATA CLEANING 2013 - 2015
    names(Import2015)
    Data2015 <- Import2015[, c(2, 4:7)]
    names(Data2015)[2] <- "Killed"
    names(Data2015)[3] <- "Wounded"

    names(Import2014)
    Data2014 <- Import2014[, c(2, 4:7)]
    names(Data2014)[2] <- "Killed"
    names(Data2014)[3] <- "Wounded"

    names(Import2013)
    Data2013 <- Import2013[, c(2, 4:7)]
    names(Data2013) <- c("Date", "Killed", "Wounded", "Location", "Article")

    Data13.15 <- rbind(Data2013, Data2014, Data2015)

    ##### DATA CLEANING 1966
    Data1966 <- tbl_df(Import1966)
    names(Data1966) <- toupper((names(Data1966)))
    Data1966 <- Data1966[,c("DAY.OF.WEEK",
                            ### Remaining variable names removed for display purposes
    names(Data1966) <- c("Day",
                         ### Remaining variable names removed for display purposes

    # Cleaning Dates
    Data1966$Date <- as.Date(Data1966$Date, format = "%m/%d/%y")
    Data1966$Date[1:2] <- sub(20, 19, Data1966$Date[1:2])

    # Quantity of Shooters
    Data1966$ShooterAge <- as.character(Data1966$ShooterAge)
    Data1966 = mutate(Data1966, ShooterQuant = round(nchar(Data1966$ShooterAge) / 3))

    # Creating shooter AgeBucket
    Data1966 = mutate(Data1966, ShooterAgeBucket =
                        ifelse(ShooterAvgAge < 18, "<18",
                        ifelse(ShooterAvgAge < 25, "18-24",
                        ifelse(ShooterAvgAge < 35, "25-34",
                        ifelse(ShooterAvgAge < 50, "35-49", "50+")))))

    # Standardizing Race
    Data1966$ShooterRace[grepl("White", Data1966$ShooterRace)] <- "White American or European American"
    Data1966$ShooterRace[grep("Black", Data1966$ShooterRace)] <- "Black American or African American"
    Data1966$ShooterRace[grep("Asian", Data1966$ShooterRace)] <- "Asian American"
    Data1966$ShooterRace[grep("Some other race", Data1966$ShooterRace)] <- "Unknown"
    Data1966$ShooterRace[grep("Two or more races", Data1966$ShooterRace)] <- "Unknown"

    # Creating Total Victims
    Data1966 = mutate(Data1966, VictimsTotal = VictimsFatal + VictimsInjured)
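The nested ifelse() calls used for the age buckets can also be written with base R's cut(). The following is a minimal sketch under that assumption, not the authors' code; the shooter_avg_age values are made up for illustration.

```r
# Toy average ages; these values are illustrative, not from the Stanford data.
shooter_avg_age <- c(15, 18, 24, 25, 34.5, 35, 49, 50, 72, NA)

# right = FALSE closes each interval on the left: [18, 25), [25, 35), ...
# which matches the "< 18", "< 25", "< 35", "< 50" tests in the ifelse() chain.
age_bucket <- cut(
  shooter_avg_age,
  breaks = c(-Inf, 18, 25, 35, 50, Inf),
  labels = c("<18", "18-24", "25-34", "35-49", "50+"),
  right  = FALSE
)

print(data.frame(age = shooter_avg_age, bucket = age_bucket))
```

One difference worth noting: cut() returns an ordered set of factor levels, so plots keep the buckets in age order, whereas ifelse() returns plain character strings that sort alphabetically.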

The Application Overview

The app uses Shiny, a web application framework for the R programming language, which has two components known as the user interface and the server. The ui.r file is responsible for the layout of each tab, while the server.r file is responsible for the content. All ui.r and server.r code is available in the project's GitHub repository.
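To make the split between layout and content concrete, here is a minimal single-file sketch of a Shiny app. It is not the project's code: the tab title, the input id "freq", the output id "victimPlot" and the plotted counts are all hypothetical placeholders.

```r
library(shiny)

# ui: layout only. Tab title and input/output ids are hypothetical.
ui <- navbarPage(
  "Mass Shootings in America",
  tabPanel(
    "Victims",
    selectInput("freq", "Frequency", choices = c("Year", "Month")),
    plotOutput("victimPlot")
  )
)

# server: content only. It fills the outputs declared in the ui.
server <- function(input, output) {
  output$victimPlot <- renderPlot({
    # Placeholder counts, not real data.
    counts <- if (input$freq == "Year") {
      c(`2013` = 3, `2014` = 5)
    } else {
      c(Jan = 2, Feb = 1)
    }
    barplot(counts, main = paste("Victims by", input$freq))
  })
}

# shinyApp(ui, server)  # uncomment to launch in an interactive session
```

In a two-file layout like the one described above, the ui object would live in ui.r and the server function in server.r.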

The application itself consists of five tabs each aimed at allowing the user to explore a different aspect of each event.

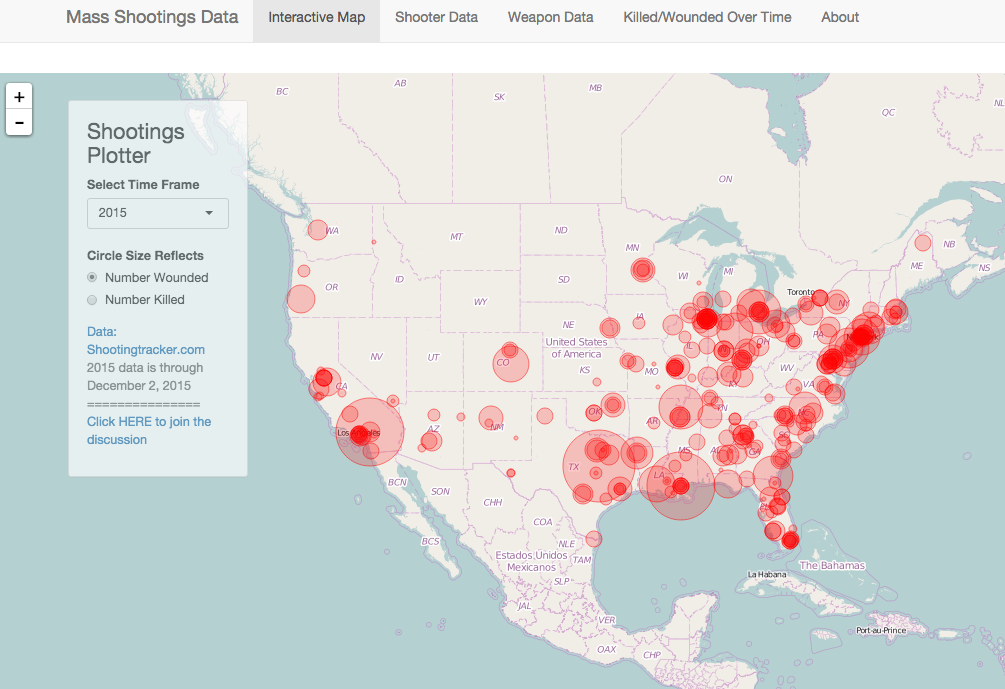

Geo Coding and Mapping

Tab 1 contains a map built with Leaflet, an open-source JavaScript mapping library, which plots the location of each event. Once the map layer was completed, the map object was stored as output$map in the server.r file to be called by ui.r. Layers, such as the circles representing different shooting events, are added to the map using the functions addTiles and addCircleMarkers. A floating window with two pull-down options is layered on top of the map. The first pull-down selects the year of data to view; the second selects whether killed or wounded counts determine the radius of the circle marking each event. Location information from the raw data was translated to longitude and latitude coordinates using the geocode() function from the ggmap library in R. This function calls Google’s map API for each of the "city, state" locations.

(Note, there is a 2,500 call/day limit for non-paid use of this service.)
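The map layering described above can be sketched as follows. This is a hedged illustration rather than the app's actual code: the events data frame, its coordinates and counts, the measure variable and the radius scaling are all invented for the example.

```r
library(leaflet)

# Toy events; coordinates and counts are illustrative only.
events <- data.frame(
  Location = c("City A", "City B"),
  lon      = c(-73.99, -87.63),
  lat      = c(40.73, 41.88),
  Killed   = c(1, 4),
  Wounded  = c(3, 0)
)

# In the app this choice would come from the pull-down,
# e.g. input$measure (a hypothetical input id).
measure <- "Killed"

# Scale the marker radius with the chosen measure.
# The +1 offset and the factor of 3 are arbitrary display choices.
events$radius <- 3 * (events[[measure]] + 1)

shooting_map <- leaflet(events) %>%
  addTiles() %>%                                   # base map tiles
  addCircleMarkers(lng = ~lon, lat = ~lat,
                   radius = ~radius, popup = ~Location)
```

Inside a Shiny server function, a map like this would typically be wrapped in renderLeaflet() and assigned to output$map, with leafletOutput("map") in the ui.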

    ##### Needed for Maps Analysis
    library(rgdal)
    library(sp)
    library(taRifx.geo)
    library(utils)

    # Simple script for geocoding the data from Masshootings website 2013-2015
    # Change dataclass to "character" to be readable by geocode
    # Geocoding data using GGmap and Google
    Data2015$Location <- as.character(Data2015$Location)
    Data2015$geo <- geocode(Data2015$Location)
    Data2015g <- Data2015

    # Parse GeoCoded Data into longitude (lon) and latitude (lat) columns
    # Remove nested dataframe with geodata
    Data2015g$lon <- Data2015g[[6]][[1]]
    Data2015g$lat <- Data2015g[[6]][[2]]
    Data2015g <- Data2015g[-6]

    # Rinse and repeat above for the following data sets
    # 2014 #########################################
    Data2014$Location <- as.character(Data2014$Location)
    Data2014$geo <- geocode(Data2014$Location)
    Data2014g <- Data2014
    Data2014g$lon <- Data2014g[[6]][[1]]
    Data2014g$lat <- Data2014g[[6]][[2]]
    Data2014g <- Data2014g[-6]

    # 2013 #########################################
    Data2013$Location <- as.character(Data2013$Location)
    Data2013$geo <- geocode(Data2013$Location)
    Data2013g <- Data2013
    Data2013g$lon <- Data2013g[[6]][[1]]
    Data2013g$lat <- Data2013g[[6]][[2]]
    Data2013g <- Data2013g[-6]

    # 13.15 #########################################
    Data13.15$Location <- as.character(Data13.15$Location)
    Data13.15$geo <- geocode(Data13.15$Location)
    Data13.15g <- Data13.15
    Data13.15g$lon <- Data13.15g[[6]][[1]]
    Data13.15g$lat <- Data13.15g[[6]][[2]]
    Data13.15g <- Data13.15g[-6]

    # Warning messages:
    # 1: geocode failed with status ZERO_RESULTS, location = "Ottawa, KA"
    # 2: geocode failed with status ZERO_RESULTS, location = "Witchita, KA"
    # 3: geocode failed with status ZERO_RESULTS, location = "Parsons, KA"
    # 4: geocode failed with status ZERO_RESULTS, location = "Topeka, KA"

    # Save geocoded data to rdata file to be used
    save(Data13.15g, Data2013g, Data2014g, Data2015g, file="App/data/shootingsdata.Rdata")
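The four ZERO_RESULTS warnings all involve the state code "KA", which is presumably meant to be Kansas ("KS"), and one city name looks misspelled. A small cleanup step before geocoding might look like the sketch below. The clean_location() helper is hypothetical and was not part of the original script.

```r
# Locations copied from the geocoder warnings above.
failed <- c("Ottawa, KA", "Witchita, KA", "Parsons, KA", "Topeka, KA")

# Hypothetical helper: repair known problems before calling the geocoder.
clean_location <- function(x) {
  x <- sub(", KA$", ", KS", x)            # assumed intent: Kansas, whose USPS code is "KS"
  x <- sub("^Witchita,", "Wichita,", x)   # spelling fix for one city
  x
}

cleaned <- clean_location(failed)
print(cleaned)

# Only distinct strings need to be sent to the geocoder,
# which also helps stay under a daily request quota.
to_geocode <- unique(cleaned)
```

Deduplicating locations before geocoding is a design choice that matters more for the combined 2013-2015 data, where the same city can appear many times.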

Shooter, Weapon and Victim Data

The three main components of a mass shooting discussion are the shooter profile, the weapon profile and the severity of the impact (casualties) across time. Each component was given its own tab, which displays the related analysis.

The shooter tab allows the user to select profile data via a drop-down menu. Age, gender, history of mental illness, military experience, or race of the shooter can be selected from the drop-down. 2014 census demographic data is included with the race plot, allowing the user to visually compare shooter and population data. This tab was created using the following code:

    ##### ANALYSIS 1
    # Adding census data
    TempRace <- group_by_(Data1966,"ShooterRace") %>%
      summarize(., "ShooterTotal" = (n() / nrow(Data1966))*100)
    DataRace <- mutate(TempRace, CensusTotal = c(5.4, 13.2, 1.4, 2.6, 77.4))
    DataRace <- melt(DataRace, id.vars = "ShooterRace")
    names(DataRace) <- c("Race", "variable", "value")
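The code above attaches the census percentages by position, so it relies on the grouped rows coming out in a fixed order. A more defensive variant joins the census figures by race name instead. The sketch below uses base R and toy shooter counts. Pairing the three census values with these labels is an assumption inferred from the alphabetical group order and should be checked against the census source.

```r
# Toy shooter records; the counts are illustrative only.
shooters <- data.frame(
  ShooterRace = c(rep("White American or European American", 6),
                  rep("Black American or African American", 3),
                  "Asian American")
)

# Percentage of events per race.
share <- prop.table(table(shooters$ShooterRace)) * 100
shooter_pct <- data.frame(Race = names(share), ShooterTotal = as.numeric(share))

# Census lookup keyed by name. The label/value pairing is an assumption.
census <- data.frame(
  Race = c("Asian American",
           "Black American or African American",
           "White American or European American"),
  CensusTotal = c(5.4, 13.2, 77.4)
)

# Join on the race label rather than on row position.
race_compare <- merge(shooter_pct, census, by = "Race", all.x = TRUE)
print(race_compare)
```

With all.x = TRUE, a race that is missing from the lookup shows up with an NA census value instead of silently shifting the other rows.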

The weapon tab provides a drop-down menu to select weapon category or weapon type. Category refers to the class of weapon (rifle, shotgun or pistol), while type refers to whether the weapon is semi-automatic or automatic. This analysis was performed using the following code:

    ##### ANALYSIS 2
    WeaponDF <- Data1966[,c("Date", "WeaponShotgun", "WeaponRifle", "WeaponHandgun",
                            "WeaponAuto", "WeaponSemiAuto", "WeaponTotal")]
    colnames(WeaponDF) <- c("Date", "Shotgun", "Rifle", "Handgun",
                            "Automatic", "SemiAutomatic", "Total")
    WeaponDF[,-1] <- lapply(WeaponDF[,-1], function(x) as.numeric(as.character(x)))
    WeaponDF[is.na(WeaponDF)] <- 0

    # Creating percentage by weapon category - Rifle, Handgun, Shotgun
    TotalWeapons <- sum(WeaponDF[,c(2, 3, 4)])
    PercWeapon <- data.frame('Weapon' = c("Rifle", "Handgun", "Shotgun"),
                             'Percent' = c((sum(WeaponDF$Rifle) / TotalWeapons) * 100,
                                           (sum(WeaponDF$Handgun) / TotalWeapons) * 100,
                                           (sum(WeaponDF$Shotgun) / TotalWeapons) * 100)
    )

    # Creating percentage by weapon type - Automatic, Semi-Automatic, Unknown
    PercAuto <- (sum(WeaponDF$Automatic) / TotalWeapons) * 100
    PercSA <- (sum(WeaponDF$SemiAutomatic) / TotalWeapons) * 100
    PercUnk <- 100 - PercAuto - PercSA

    # Creating data frame by weapon type
    PercWeaponType <- data.frame('Weapon' = c("Automatic", "Semi-Automatic", "Unknown"),
                                 'Percent' = c(PercAuto, PercSA, PercUnk))

The victim tab allows the user to select an annual or monthly frequency to display victims killed and wounded. The following code is used:

    ##### ANALYSIS 3
    DataUniv <- Data13.15[,c(1, 2 , 3)]
    DataUniv$Date <- sapply(DataUniv$Date, as.character)
    DataUniv$Date <- as.Date(DataUniv$Date, format = "%m/%d/%Y")
    colnames(DataUniv) <- c("Date", "VictimsFatal", "VictimsInjured")

    Data1966 <- mutate(Data1966, CityState = paste(City, ", ", State))
    DataUniv <- rbind(filter(Data1966[,c("Date", "VictimsFatal", "VictimsInjured")],
                             Date < "2013-01-01"),
                      DataUniv)

    TotalVictims <- sum(DataUniv[,c(2, 3)])
    DataUniv <- mutate(DataUniv, Year = substr(DataUniv$Date, 1, 4))
    DataUniv <- mutate(DataUniv, Month = substr(DataUniv$Date, 6, 7))

    DataYear <- filter(DataUniv, Year > 2012) %>%
      group_by(., Year) %>%
      summarize(., Killed = sum(VictimsFatal), Injured = sum(VictimsInjured))
    DataMonth <- group_by(DataUniv, Month) %>%
      summarize(., Killed = sum(VictimsFatal), Injured = sum(VictimsInjured))

    DataYear <- melt(DataYear, id.vars = "Year")
    DataMonth <- melt(DataMonth, id.vars = "Month")
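The same yearly and monthly roll-up can be sketched in base R, without dplyr or reshape2. This is an illustrative alternative under stated assumptions, not the app's code; the events data frame below is toy data.

```r
# Toy merged events; dates and counts are illustrative only.
events <- data.frame(
  Date           = as.Date(c("2013-01-05", "2013-07-19", "2014-01-22", "2014-07-04")),
  VictimsFatal   = c(1, 2, 0, 4),
  VictimsInjured = c(3, 2, 5, 1)
)

# format() extracts calendar parts directly from the Date class,
# avoiding substr() on the printed date string.
events$Year  <- format(events$Date, "%Y")
events$Month <- format(events$Date, "%m")

# Sum killed and injured per year and per calendar month.
by_year  <- aggregate(cbind(VictimsFatal, VictimsInjured) ~ Year,
                      data = events, FUN = sum)
by_month <- aggregate(cbind(VictimsFatal, VictimsInjured) ~ Month,
                      data = events, FUN = sum)

print(by_year)
print(by_month)
```

Grouping by month alone pools the same calendar month across all years, which is the view needed to look for seasonality.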

Findings

Development for this application, particularly the data munging, was not difficult. The primary challenge was to create an accessible interface that visualized data to encourage interaction and, thereafter, discussion. We wanted to create a product that everyone could use.

The data itself did have some telling features. Of note to the authors were the gun type used, the prevalence of previously diagnosed mental illness among the shooters and the seasonality in the occurrence of mass shooting events, as seen in the image to the left. Our hope is that this type of information will lead to practical and balanced reform, allowing all parties to be satisfied with the outcome.

Future Work

This project only scratches the surface of a huge topic, and future work could go in many directions. Among other ideas, the authors discussed developing a service to retrieve relevant event data through web scraping and natural language processing.