Predicting Food Deserts via Social Media


1. Introduction

Access to nutritious food is imperative to physical well-being and quality of life. Failing to consume healthful food on a regular basis can lead to a series of adverse health outcomes, including obesity, diabetes, and cardiovascular diseases. Food deserts are areas that lack access to affordable fruits, vegetables, whole grains, low-fat milk, and other foods that make up the full range of a healthy diet (CDC, 2016).

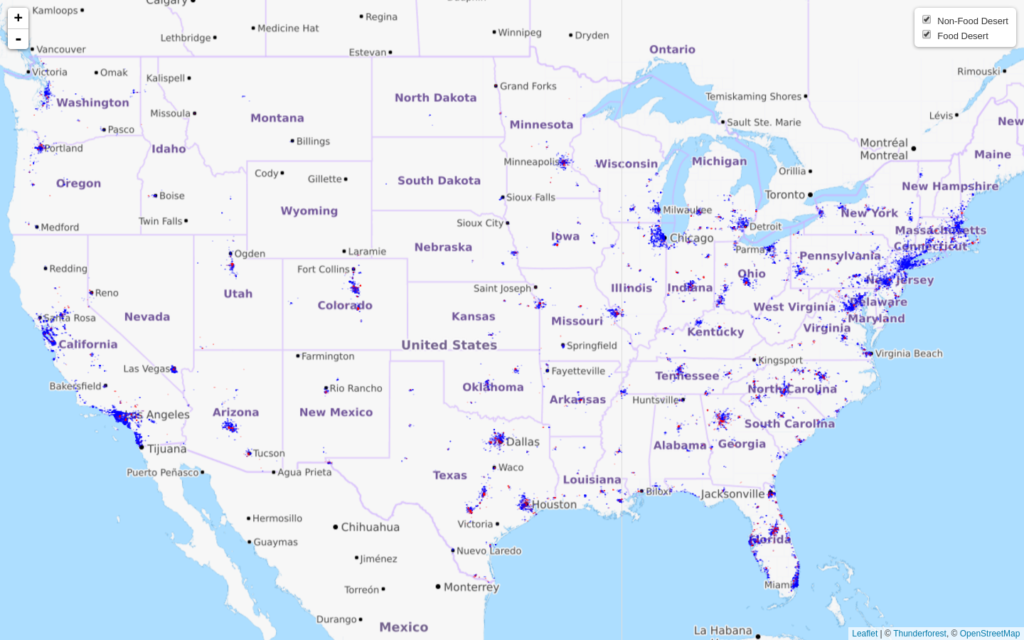

A food desert is defined as a census tract where at least 500 people and/or at least 33 percent of the population reside more than one mile from a supermarket or large grocery store (for rural census tracts, the distance is more than 10 miles) (ANA, 2011). The distribution of food deserts (green) is shown in Figure 1.
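To make the thresholds concrete, here is a minimal sketch of the rule as quoted above. The function and parameter names are hypothetical, and the sketch encodes only the thresholds stated in this post, not the full official methodology.

```python
def distance_cutoff_miles(is_rural):
    """Distance beyond which a resident counts as far from a supermarket."""
    return 10.0 if is_rural else 1.0


def meets_low_access_threshold(population, far_population):
    """Check the '500 people and/or 33 percent' rule quoted above.

    far_population is the number of residents living beyond the
    distance cutoff for the tract (1 mile urban, 10 miles rural).
    """
    if population <= 0:
        return False
    share_far = far_population / population
    return far_population >= 500 or share_far >= 0.33
```

For example, a tract of 4,000 people with 600 residents beyond the cutoff meets the threshold on the count alone, while a tract of 1,000 people with 340 residents beyond the cutoff meets it on the share.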

A rich body of research has found that content and language use on social media platforms reflect the milieu of individuals and populations. Among the many things individuals are known to share on social media, food ingestion and dining experiences constitute a unique category.

Twitter, for instance, captures a number of minute details about our daily lives, including dietary and dining choices, and prior work has indicated it to be a viable resource that can be leveraged to study ingestion and public health related phenomena.

2. Motivation

Given the vast amount of available Twitter data, there is increasing interest in quickly and precisely identifying regions of the country that are likely to be food deserts, and in recognizing their nutritional and dietary challenges.

In this project, I wanted to build a monitoring system that uses social media as a "sensor" to capture people's dining experiences and the nutritional information of the food they consume. The system then predicts the food desert status of different areas using the linguistic constructs of ingestion content, nutritional attributes, and other socio-economic information, such as age, sex, ethnicity or race, education, income, housing status, and population (Figure 2).

3. Data Collection

Twitter Data:

Figure 3 shows the framework that fetches and filters real-time Twitter data (Widener and Li, 2014). Two modules were built to complete the tweet collection task: a retrieval module and a parsing module. The retrieval module is responsible for establishing a connection with the Twitter web server through its streaming API. Two rules are applied here to filter out noisy tweets.

The first rule excludes tweets without location information. The second rule keeps only tweets about food ingestion: a set of food-related words is used to query Twitter. I compiled a list of 233 food names based on an online food vocabulary word list used in previous studies (Sharma and Choudhury, 2015) and the USDA food composition database.

Only food names appearing in both sources were included in this list. Applying these two filtering rules removed many irrelevant tweets, significantly increasing the proportion of stored tweets that are actually about food ingestion.
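The two filtering rules can be sketched roughly as follows. This is an illustration rather than the project's actual retrieval code: the three-word vocabulary is a hypothetical stand-in for the 233-name list, and the sketch assumes each tweet is a dictionary with a `text` field and a GeoJSON-style `coordinates` field.

```python
import re

# Hypothetical stand-in for the 233-name food list described above.
FOOD_WORDS = {"pizza", "salad", "apple"}


def has_location(tweet):
    """Rule 1: keep only tweets that carry point coordinates."""
    coords = tweet.get("coordinates")
    return bool(coords and coords.get("coordinates"))


def mentions_food(text, food_words=FOOD_WORDS):
    """Rule 2: keep only tweets containing at least one food word."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return any(token in food_words for token in tokens)


def keep_tweet(tweet):
    """Apply both rules; a tweet must pass each one to be stored."""
    return has_location(tweet) and mentions_food(tweet.get("text", ""))
```

Checking the cheap location test first avoids tokenizing tweets that would be discarded anyway.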

Once the raw tweets are fetched, they are saved in a database. The parsing module is used to deserialize the raw tweet data into a text-based JSON object and then extract the desired tweet information, including tweet time, tweet text, location, and source.
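A minimal sketch of such a parsing step is shown below. It assumes each raw record is a JSON string whose `created_at`, `text`, `source`, and `coordinates` fields follow the layout of a standard tweet object; the output keys (`time`, `lat`, `lon`) are hypothetical names chosen for this example.

```python
import json


def parse_tweet(raw):
    """Deserialize one raw tweet and keep only the fields of interest."""
    tweet = json.loads(raw)
    # GeoJSON points store longitude first, then latitude.
    point = (tweet.get("coordinates") or {}).get("coordinates")
    lon, lat = point if point else (None, None)
    return {
        "time": tweet.get("created_at"),
        "text": tweet.get("text"),
        "lat": lat,
        "lon": lon,
        "source": tweet.get("source"),
    }
```

Using `.get` throughout means a record with a missing field produces `None` for that field instead of raising an error.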

Tweets were collected from 12/5/2016 to 12/16/2016, yielding a total of over 230,000 tweets with valid latitude-longitude information.

Food Desert Data:

In a parallel data collection task, I downloaded cartographic information on 72,217 census tracts throughout the US from the USDA Food Access Research Atlas (2013), of which 7,436 census tracts are officially identified as food deserts. Census tracts are relatively permanent subdivisions of a county and usually have between 2,500 and 8,000 people.

Socioeconomic Status (SES) Data:

Additionally, for each census tract (both food deserts and non-food deserts), I obtained the most recent socio-economic data from the Federal Financial Institutions Examination Council (FFIEC) online system (2016) by web scraping (Selenium with Python). The SES data consists of four tables: demographic, population, housing, and income (see the table below for the features selected in this project).

| SES Features | |
| --- | --- |
| median house age | # households |
| owner housing units | median family income |
| distressed/underserved tract | # families |
| population | % below poverty line |
| % low access, 0-17 yrs | % low access, low-income |
| % low access, 65+ yrs | urban/rural |
| % minority | vehicle access |
| % non-Hispanic white | % group quarters population |

4. Data Processing and Exploratory Data Analysis

Mapping Tweets to Food Desert Regions:

Because the collected tweets carry valid latitude-longitude information, I used the Federal Communications Commission (FCC) API to look up the US census tract corresponding to each tweet's latitude-longitude pair. The API query actually returns a 15-character Census Block number, whose first 11 digits uniquely identify a census tract. For tweets with lat-long coordinates outside the US, the API returns a null code.
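The step from block number to tract is a simple prefix operation. The sketch below covers only that step and leaves out the API call itself; the function name is hypothetical, and the example block number is made up for illustration.

```python
def tract_from_block(block_fips):
    """Return the 11-digit census tract ID from a 15-digit block number.

    The 15 digits are laid out as state (2) + county (3) + tract (6)
    + block (4), so the tract ID is the first 11 characters.
    Returns None for null or malformed codes, such as those returned
    for coordinates outside the US.
    """
    if not block_fips or len(block_fips) != 15 or not block_fips.isdigit():
        return None
    return block_fips[:11]
```

Keeping the ID as a string rather than an integer preserves leading zeros, which matter for states whose codes begin with 0.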

After this step, I successfully mapped over 40,000 tweets to census tracts in the USDA Food Access Research Atlas: 3,927 tweets (8%) in food deserts and 42,645 tweets (92%) in non-food deserts (Figure 4).

Figure 4. The distributions of tweets in food deserts (red) and non-food deserts (blue)

Extracting Nutritional Information:

Since the goal of this project was to predict food desert status via Twitter, I estimated nutrient intake based on the text of each tweet. First, I developed a regular expression matching framework in which each word in a tweet was compared to the list of 233 food names described above (see the Twitter Data part). Then, I calculated the nutritional intake for each tweet by matching the food item name to the USDA food information description.

For this project, I calculated the intake of five major nutrients: energy (kcal), protein (g), cholesterol (mg), sugar (g), and fat (g). Figure 5 shows the differences in mean nutrient intake between non-food deserts and food deserts.

Figure 5. Nutritional estimations (average) in non-food deserts (red) and food deserts (green) corresponding to the five nutrients

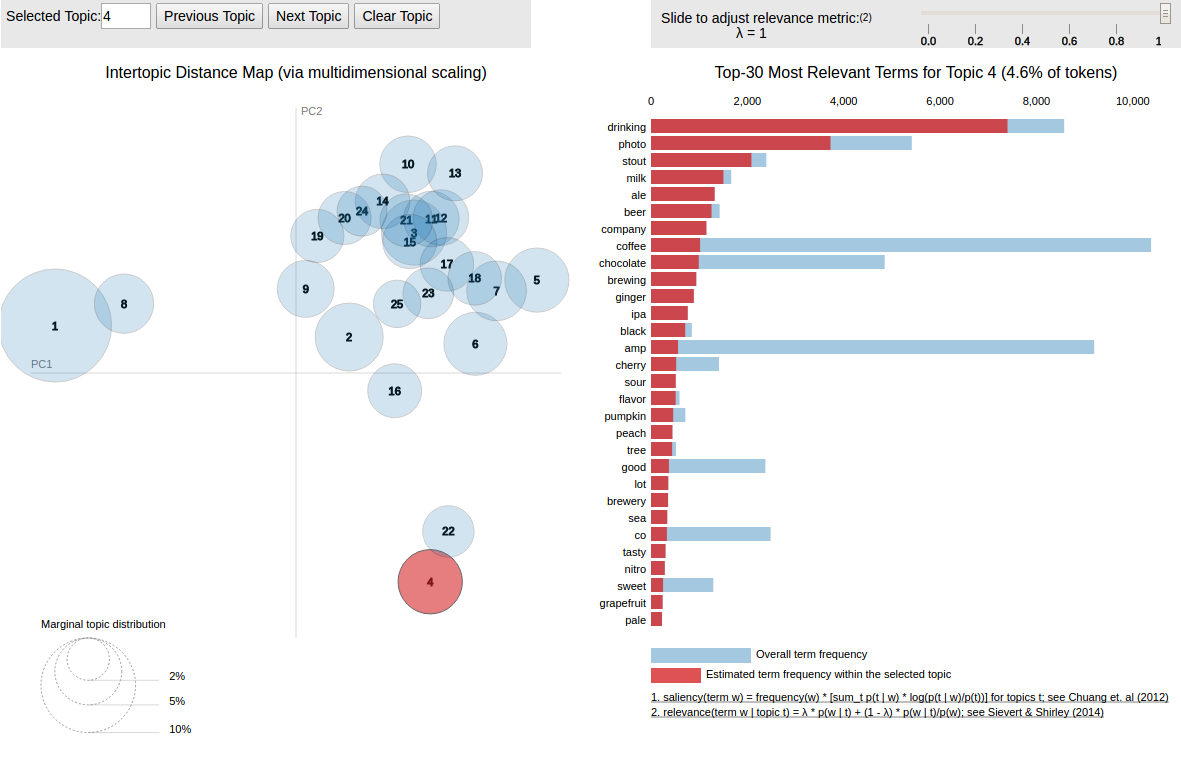
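The matching and lookup steps in this subsection could be sketched as follows. The nutrient values are hypothetical placeholders, not USDA figures, and summing one serving per matched food name is an assumption made for this example, since the aggregation rule is not spelled out above.

```python
import re

# Hypothetical per-serving values for illustration only (NOT USDA data).
NUTRIENTS = {
    "pizza": {"energy_kcal": 300.0, "protein_g": 12.0,
              "cholesterol_mg": 20.0, "sugar_g": 4.0, "fat_g": 10.0},
    "apple": {"energy_kcal": 100.0, "protein_g": 1.0,
              "cholesterol_mg": 0.0, "sugar_g": 19.0, "fat_g": 0.0},
}
FIELDS = ("energy_kcal", "protein_g", "cholesterol_mg", "sugar_g", "fat_g")

# One alternation pattern that matches any known food name as a whole word.
FOOD_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(name) for name in sorted(NUTRIENTS)) + r")\b",
    re.IGNORECASE,
)


def estimate_nutrients(text):
    """Sum the nutrient values of every food name found in the text."""
    totals = dict.fromkeys(FIELDS, 0.0)
    for name in FOOD_PATTERN.findall(text):
        for field, value in NUTRIENTS[name.lower()].items():
            totals[field] += value
    return totals
```

The word boundaries keep "apple" from matching inside "pineapple"; plural forms such as "apples" would need extra handling, for example by adding them to the vocabulary.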

Topic Analysis of Ingestion Language via Latent Dirichlet Allocation (LDA)

To create features related to the ingestion language of tweets, I applied LDA, a topic modeling approach that has been commonly used to analyze health-related social media data as well as to cluster food-related social media posts.

Topic modeling is different from rule-based text mining approaches that use regular expressions or dictionary-based keyword searching techniques. It is an unsupervised method for finding groups of words (called “topics”) in large collections of text. Topics can be defined as “a repeating pattern of co-occurring terms in a corpus” (Figure 6).

For example, a good topic model should produce “health”, “doctor”, “patient”, and “hospital” for a “Healthcare” topic, and “farm”, “crops”, and “wheat” for a “Farming” topic. Topic models are very useful for document clustering, organizing large blocks of textual data, information retrieval from unstructured text, and feature selection.

There are many approaches for obtaining topics from text, such as Term Frequency and Inverse Document Frequency (TF-IDF). LDA is another popular topic modeling technique, which can be viewed as a form of matrix factorization. LDA assumes that documents are produced from a mixture of topics, and that those topics then generate words based on their probability distributions.

Given a dataset of documents, LDA backtracks and tries to figure out what topics would create those documents in the first place. In vector space, any corpus (collection of documents) can be represented as a document-term matrix.

LDA then converts this document-term matrix into two lower-dimensional matrices: a document-topic matrix and a topic-term matrix, with dimensions (N, K) and (K, M) respectively, where N is the number of documents, K is the number of topics, and M is the vocabulary size.
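The shapes are easier to see with a toy example. The numbers below are invented for illustration (N = 3 documents, K = 2 topics, M = 4 terms) and are not output from the project's model.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


# Document-topic matrix (N x K): each row is a document's topic mixture.
doc_topic = [
    [1.0, 0.0],
    [0.5, 0.5],
    [0.25, 0.75],
]

# Topic-term matrix (K x M): each row is a topic's word distribution.
topic_term = [
    [0.5, 0.5, 0.0, 0.0],
    [0.0, 0.0, 0.5, 0.5],
]

# Their product (N x M) gives per-document word probabilities.
doc_term = matmul(doc_topic, topic_term)
```

Because every row of both factors sums to 1, every row of the product also sums to 1, so each document ends up with a valid probability distribution over the vocabulary.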

Figure 7 shows how I applied LDA topic modeling in this project. For the combined set of tweets from all food deserts and non-food deserts, I obtained topics using the LDA implementation in the Python Gensim library. I used the default parameter settings and set the number of topics to 25. Meanwhile, I used Jensen-Shannon (JS) divergence to measure the topic distribution of each tweet.

This means that 25 topic-related features were created for each tweet. The LDA topic results were explored using pyLDAvis, which maps topic similarity by calculating a semantic distance between topics (via JS divergence). For example, topic 1 is about jobs or work; topic 4 is about drinking and beverages; and topic 13 is about food ingestion (Figure 8).

Figure 8. LDA topic results: topics 1, 4, and 13
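For reference, the JS divergence mentioned above can be computed as in the following sketch. It is a plain-Python illustration of the standard definition with base-2 logarithms, not the code used by Gensim or pyLDAvis, and it assumes both inputs are probability distributions of equal length.

```python
import math


def kl_divergence(p, q):
    """Kullback-Leibler divergence KL(p || q) in bits.

    Terms with p_i == 0 contribute nothing, by convention.
    """
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence: a symmetric, bounded variant of KL."""
    # The midpoint m is positive wherever p or q is, so KL stays finite.
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)
```

With base-2 logarithms the value ranges from 0 for identical distributions to 1 for distributions with no overlap, which makes it convenient as a distance-like score between topics.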

5. Model Training (Classification)

I developed four different classification models with the same set of features to predict the USDA-defined food desert status of a tract (using Python's scikit-learn). The features include 5 nutrient intake features, 16 SES attributes of the census tract, and 25 LDA topic features. I also applied an ensemble method that combines the predictions of Logistic Regression, Random Forest, and XGBoost to improve robustness over a single estimator. The modeling results are summarized in the table below.

| Model | AUC | F1 score |
| --- | --- | --- |
| Logistic Regression (L2) | 0.7958 | 0.2935 |
| Random Forest | 0.9648 | 0.6280 |
| XGBoost | 0.9614 | 0.6207 |
| Support Vector Machine | 0.5357 | - |
| Ensemble | 0.9650 | 0.6903 |

Figure 9 shows the receiver operating characteristic (ROC) curves of the different models for predicting food desert status.
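One common way to combine several classifiers is soft voting, which averages their predicted probabilities. The sketch below illustrates that idea only; this post does not specify how the predictions were actually combined, so the equal weights, the 0.5 threshold, and the model names in the usage note are all assumptions.

```python
def soft_vote(model_probs, weights=None):
    """Average positive-class probabilities across models, per sample.

    model_probs maps a model name to its list of predicted probabilities;
    every list must cover the same samples in the same order.
    """
    names = list(model_probs)
    if weights is None:
        weights = {name: 1.0 for name in names}  # equal weights (assumed)
    total = sum(weights[name] for name in names)
    n_samples = len(model_probs[names[0]])
    return [
        sum(weights[name] * model_probs[name][i] for name in names) / total
        for i in range(n_samples)
    ]


def to_labels(probabilities, threshold=0.5):
    """Turn averaged probabilities into 0/1 food desert predictions."""
    return [1 if p >= threshold else 0 for p in probabilities]
```

For instance, `soft_vote({"lr": [0.25, 0.75], "rf": [0.25, 1.0], "xgb": [0.5, 0.5]})` averages the three models' scores for two tracts. In scikit-learn, `VotingClassifier` with `voting="soft"` provides the same kind of averaging.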

6. Summary and Future Plan

In this project, I explored how ingestion and food-related content can be extracted from social media, specifically Twitter. I also built a pipeline to predict areas challenged by healthy food access by combining topic analysis of tweets with nutrient intake estimation and the SES attributes of a tract (Figure 10). Results showed that the model was able to predict the USDA-defined low food access status of a tract ("food desert").

However, this project has some notable limitations. For example, tweets contain a lot of noisy information. Also, I did not apply sentiment analysis to the text or analyze the content of the images shared on Twitter, either of which might lead to more accurate predictions. The next steps for this project are:

- Apply the Alchemy API to filter out noisy tweets

- Create new features: sentiment analysis of tweets

- Parameter tuning and model ensemble/stacking

- Develop a web interactive application to monitor/find food deserts using streaming data from Twitter

You may also explore this project via Chuan's GitHub.

References:

Analytics Vidhya, Beginners Guide to Topic Modeling in Python

Alex Perrier, Learning Machine, Topic Modeling of Twitter Followers