Prediction of Credit Default Risk

The most important task for any lender is to predict the probability of default for a borrower. An accurate prediction helps the lender balance risk and return: charging higher rates for higher risks, or even denying the loan when required.

This also helps genuine borrowers, as they can get loans priced according to their risk profiles, and lower default rates help keep interest rates lower.

So I decided to apply my machine learning skills to predicting the probability of default on loans.

The data-set I used was from a challenge hosted by 'Home Credit' on Kaggle from Jun'18 to Aug'18.

Home Credit Group is an international consumer finance provider with operations in 10 countries. It focuses on responsible lending, primarily to the unbanked population with little or no credit history. Home Credit hosted this challenge on Kaggle so that Kagglers could help it unlock the full potential of its data. The competition was about predicting the future payment behavior of clients from loan application, demographic and historical credit behavior data.

Though the competition is over, the datasets are still available on Kaggle.

This data-set intrigued me because it involved considering a lot of indirect information when giving loans to people with insufficient or non-existent credit histories. Doing this well helps provide a positive and safe borrowing experience to these people, and helps them avoid being taken advantage of by untrustworthy lenders.

Also of interest to me was that it was not plain black-box modelling but involved compiling, short-listing, modifying and aggregating data based on an understanding of the business scenario. The data was in raw form, as the Home Credit R&D team was keen to see not only what modelling approaches the Kaggle community would use but also how the community would work with data in such a form.

Data Files

There were 7 different sources of data, spread over 8 files (the application data was split into train and test files):

| S.No. | File Name | # rows | # columns | Description |
|---|---|---|---|---|
| 1 | application_train.csv | 307,511 | 122 | Main training file, with static data for all applications, including whether there was a default on the loan. |
| 2 | application_test.csv | 48,744 | 121 | Main test file. |
| 3 | bureau.csv | 1,716,428 | 17 | All of the client's previous credits provided by other financial institutions that were reported to the Credit Bureau. For every loan in the sample, there were as many rows as the number of credits the client had in the Credit Bureau before the application date. |
| 4 | bureau_balance.csv | 27,299,925 | 3 | One row for each month of history of every previous credit reported to the Credit Bureau, i.e. the rows spanned # loans in sample, # of relative previous credits, and # of months where there was some history observable for the previous credits. |
| 5 | previous_application.csv | 1,670,214 | 23 | All previous applications for Home Credit loans of clients who had loans in the sample. |
| 6 | POS_CASH_balance.csv | 10,001,358 | 8 | Monthly balance snapshots of previous POS (point of sales) and cash loans that the applicant had with Home Credit. One row for each month of history of every previous credit in Home Credit (consumer credit and cash loans) related to loans in the sample, i.e. the rows spanned # loans in sample, # of relative previous credits, and # of months in which there was some history observable for the previous credits. |
| 7 | credit_card_balance.csv | 3,840,312 | 8 | Monthly balance snapshots of previous credit cards that the applicant had with Home Credit. One row for each month of history of every previous credit in Home Credit (consumer credit and cash loans) related to loans in the sample, i.e. the rows spanned # loans in sample, # of relative previous credit cards, and # of months where there was some history observable for the previous credit card. |
| 8 | installments_payments.csv | 13,605,401 | 37 | Repayment history for the previously disbursed credits in Home Credit related to the loans in the sample. There was a) one row for every payment that was made, plus b) one row for each missed payment. |

Data Linkage

Application train and test were the primary files, corresponding to the loan applications. Predictions were to be made for the test file.

The main tracking ID linking the loan application data in these files to the secondary and tertiary files was SK_ID_CURR. Bureau and previous_application were the secondary files, which had information about other loans and applications of these loan applicants. They were linked through SK_ID_BUREAU and SK_ID_PREV, respectively, to the payment information about these loans in the tertiary files.

Data Description

Home Credit had provided a file, “HomeCredit_columns_description.csv”, with a brief description of all the columns in the different files. These descriptions were useful in feature engineering.

Description of bureau and bureau_balance:

Evaluation Metric

The evaluation metric was the Receiver Operating Characteristic Area Under the Curve (ROC AUC, also sometimes called AUROC). This is useful because the data-set was imbalanced, with a default rate of just 8.07%. With this data, classifying every loan as no-default gives an accuracy of 91.93%, even though such a prediction has zero True-Positive-Rate (sensitivity).

When we measure a classifier according to the ROC AUC, we do not predict default/no-default, but instead predict the probability of default (between 0 and 1). The ROC curve is built by plotting the True-Positive-Rate (TPR) against the False-Positive-Rate (FPR) as the classification threshold is varied from 0 to 1. ROC AUC is the total area under this curve.
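To make the metric concrete, here is a minimal sketch on toy data (the labels and probabilities below are invented for illustration and are not from the Home Credit data). It computes the ROC points with sklearn, integrates the area by the trapezoid rule, and contrasts this with the accuracy of an "always no-default" prediction.

```python
import numpy as np
from sklearn.metrics import roc_auc_score, roc_curve

# Toy labels (1 = default) and predicted probabilities; illustrative only.
y_true = np.array([0, 0, 0, 0, 1, 0, 1, 0, 1, 1])
y_prob = np.array([0.05, 0.10, 0.20, 0.30, 0.35, 0.40, 0.60, 0.65, 0.80, 0.90])

# One (FPR, TPR) point per candidate threshold.
fpr, tpr, thresholds = roc_curve(y_true, y_prob)

# Area under the curve via the trapezoid rule, and via sklearn directly.
auc_trapezoid = float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2))
auc_sklearn = float(roc_auc_score(y_true, y_prob))

# Accuracy of predicting "no default" for everyone: high on imbalanced data,
# yet the true-positive rate of such a prediction is zero.
accuracy_all_negative = float(np.mean(y_true == 0))

print(auc_trapezoid, auc_sklearn, accuracy_all_negative)
```

The AUC equals the fraction of (defaulter, non-defaulter) pairs in which the defaulter receives the higher predicted probability, which is why it does not depend on any single threshold.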

Exploratory Data Analysis

For numeric variables I checked the description and histogram. For example:

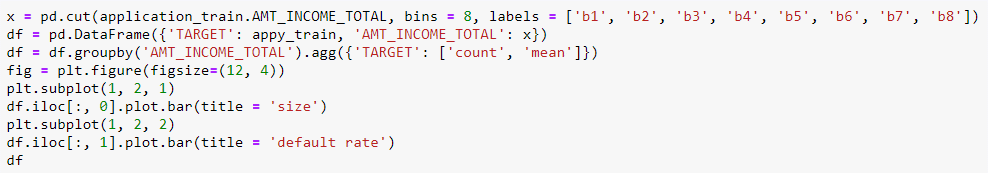

I also divided numerical variables into bins and plotted size and mean-default-rate for each bin.

For categorical variables I checked the size and mean_default_rate for each category. For example:

The exploratory data analysis informed later decisions about missing values, anomalies, feature engineering and aggregation of data.
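The binning check described above can be sketched as follows. This is only an illustration on synthetic data: the choice of `AMT_CREDIT`, the five quantile bins and the `credit_bin` column name are my assumptions here, not the exact settings used in the project.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in for the application data; values are illustrative only.
rng = np.random.default_rng(0)
df = pd.DataFrame({
    "AMT_CREDIT": rng.uniform(50_000, 1_000_000, size=1_000),
    "TARGET": rng.binomial(1, 0.08, size=1_000),  # 1 = default
})

# Split the numeric variable into quantile bins (5 bins is an assumption).
df["credit_bin"] = pd.qcut(df["AMT_CREDIT"], q=5)

# Size and mean default rate per bin.
summary = (
    df.groupby("credit_bin", observed=True)["TARGET"]
      .agg(size="count", mean_default_rate="mean")
)
print(summary)
```

The same `groupby` works unchanged for a categorical variable: group on the category column instead of the bin column.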

Handling Anomalies

Decisions were taken on the basis of the information provided, the variation in default rates across bins, the deviation from the mean (in the case of anomalies) and judgment about why a value might be missing.

For example:

- A few AMT_INCOME_TOTAL values were excessively high, and according to a comment by the host, income information was self-declared and not validated. Values more than 3 sigma above the mean were replaced with the mean.

- There was some skewness in AMT_CREDIT as well, but very few of the extreme values deviated from the overall pattern of default rates, so it was not touched.

- There were 55,374 rows with DAYS_EMPLOYED equal to 365243 (a negative number shows how many days before the application the applicant started the current employment; a positive number shows the applicant is expected to be employed after that many days). Here 365243 was not touched, as this could be Home Credit entering a high value (~1000 years) to reflect that the applicant is not expected to be employed in the near future.
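The checks above can be sketched like this. The numbers are invented, and I am assuming here that the mean and standard deviation are computed over all rows, including the extreme ones; other choices (for example, a mean computed without the outliers) are equally plausible.

```python
import pandas as pd

# Toy income column with one extreme, self-declared value (illustrative only).
income = pd.Series(
    [90_000.0] * 50 + [135_000.0] * 49 + [117_000_000.0],
    name="AMT_INCOME_TOTAL",
)

mean, std = income.mean(), income.std()

# Flag values more than 3 sigma above the mean and replace them with the mean.
is_outlier = income > mean + 3 * std
income_clean = income.mask(is_outlier, mean)

# Count the DAYS_EMPLOYED sentinel instead of altering it.
days_employed = pd.Series([-1200, 365243, -300, 365243], name="DAYS_EMPLOYED")
n_sentinel = int((days_employed == 365243).sum())

print(int(is_outlier.sum()), income_clean.max(), n_sentinel)
```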

Handling Missing Data

Missing categorical values were imputed as a separate category, 'xan'.

For numeric variables, both 'mean' and 'median' imputation were tried and compared using logistic regression. As 'median' imputation gave a better score, it was selected.

For a few numeric variables that had a high percentage of missing values and showed a strong link with the default rate, a separate binary indicator was created.
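A minimal sketch of the three imputation steps, on a tiny invented frame. The two columns are used only as examples, and the `_missing` suffix is a hypothetical naming choice.

```python
import numpy as np
import pandas as pd

# Tiny illustrative frame; values are made up.
df = pd.DataFrame({
    "OCCUPATION_TYPE": ["Laborers", None, "Managers", None],
    "EXT_SOURCE_1": [0.2, np.nan, 0.6, 0.4],
})

# Categorical: treat "missing" as its own category.
df["OCCUPATION_TYPE"] = df["OCCUPATION_TYPE"].fillna("xan")

# Numeric: record missingness as a binary flag first, then impute the median.
df["EXT_SOURCE_1_missing"] = df["EXT_SOURCE_1"].isna().astype(int)
df["EXT_SOURCE_1"] = df["EXT_SOURCE_1"].fillna(df["EXT_SOURCE_1"].median())

print(df)
```

The flag has to be created before the fill, otherwise the information about which rows were missing is lost.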

Dummification of Categorical Variables

The train and test data-sets were concatenated vertically, after adding a 'code' column so they could be split again later, so that both ended up with the same dummy variables.

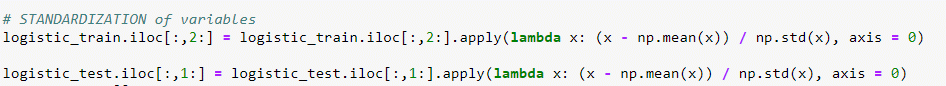
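The idea can be sketched on two toy frames. The column values are invented; the point is that a category present only in train still gets a dummy column in test.

```python
import pandas as pd

# Toy frames: "Revolving loans" appears only in train (illustrative data).
train = pd.DataFrame({
    "NAME_CONTRACT_TYPE": ["Cash loans", "Revolving loans"],
    "TARGET": [0, 1],
})
test = pd.DataFrame({"NAME_CONTRACT_TYPE": ["Cash loans", "Cash loans"]})

# Tag each frame so the rows can be separated again after encoding.
train["code"] = "train"
test["code"] = "test"
combined = pd.concat([train, test], axis=0, ignore_index=True, sort=False)

# One-hot encode on the combined frame so both parts share the same columns.
dummies = pd.get_dummies(combined, columns=["NAME_CONTRACT_TYPE"])

train_d = dummies[dummies["code"] == "train"].drop(columns="code")
test_d = dummies[dummies["code"] == "test"].drop(columns=["code", "TARGET"])

print(list(train_d.columns))
print(list(test_d.columns))
```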

Standardization of Variables

Variables in all files were standardized before modelling.

Collating and Modifying Data

Feature Engineering

New features were created based on my estimate of their impact on the default rate. For example, some of the features created in the application file were:

- Annuity as a proportion of credit

- Credit as a proportion of income

- Of the people in the client's social surroundings who were observed to be possibly late (OBS), the proportion that actually defaulted (DEF)

New features also included a 'missing' indicator category for a few variables.
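The ratio features listed above could look like the sketch below. The source columns are from the application file, but which OBS/DEF pair to use and the new feature names (`ANNUITY_TO_CREDIT`, `CREDIT_TO_INCOME`, `DEF_TO_OBS_30`) are assumptions made for illustration.

```python
import numpy as np
import pandas as pd

# Two invented applications.
app = pd.DataFrame({
    "AMT_ANNUITY": [25_000.0, 30_000.0],
    "AMT_CREDIT": [500_000.0, 300_000.0],
    "AMT_INCOME_TOTAL": [200_000.0, 150_000.0],
    "OBS_30_CNT_SOCIAL_CIRCLE": [4.0, 0.0],
    "DEF_30_CNT_SOCIAL_CIRCLE": [1.0, 0.0],
})

# Annuity as a proportion of credit, and credit as a proportion of income.
app["ANNUITY_TO_CREDIT"] = app["AMT_ANNUITY"] / app["AMT_CREDIT"]
app["CREDIT_TO_INCOME"] = app["AMT_CREDIT"] / app["AMT_INCOME_TOTAL"]

# Share of observed social-circle cases that defaulted; a zero denominator
# is mapped to 0.0 here, which is one possible convention among several.
obs = app["OBS_30_CNT_SOCIAL_CIRCLE"].replace(0, np.nan)
app["DEF_TO_OBS_30"] = (app["DEF_30_CNT_SOCIAL_CIRCLE"] / obs).fillna(0.0)

print(app[["ANNUITY_TO_CREDIT", "CREDIT_TO_INCOME", "DEF_TO_OBS_30"]])
```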

Both the validation and Kaggle scores improved after introducing the new features.

- Kaggle score before new features: 0.68185

- Kaggle score after new features: 0.68637

Dimensionality Reduction

After dummification of categorical variables and the addition of new features, the number of features in the application sets increased to 246. These were reduced to 68 features by applying feature_selection from sklearn.

There was only a very small decline in scores on the test (Kaggle) and validation sets after the reduction of features, so this reduced dataset with 68 features was used for further processing.

- Kaggle score before reduction: 0.68637

- Kaggle score after reduction: 0.68594
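As a rough illustration of feature selection with sklearn, the sketch below uses `SelectKBest` with an ANOVA F-test on synthetic data. This particular selector, the scoring function and `k=5` are assumptions for the example only; the post does not state which `feature_selection` method produced the 68 features.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif

# Synthetic data standing in for the dummified application set.
X, y = make_classification(
    n_samples=500, n_features=20, n_informative=5, random_state=0
)

# Keep the k features with the highest univariate F-scores (k is illustrative).
selector = SelectKBest(score_func=f_classif, k=5)
X_reduced = selector.fit_transform(X, y)

# Indices of the retained columns, so the same columns can be kept in test.
kept = selector.get_support(indices=True)
print(X_reduced.shape, kept)
```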

Feature Engineering and Aggregation of Data from Secondary Files

Instead of merging the secondary files directly with the application file, I created new features by aggregating their information.

Feature Engineering and Aggregation of Data from Tertiary Files

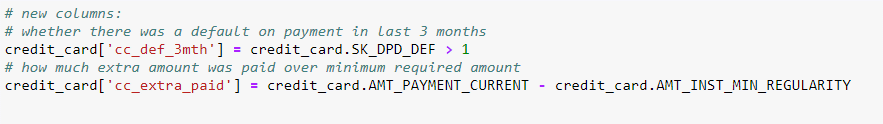

New features were created as per my estimate of their impact on default-rate. For example:

Data was aggregated at two levels (SK_ID_CURR and SK_ID_BUREAU or SK_ID_PREV). For example:
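A minimal sketch of two-level aggregation with bureau and bureau_balance, on invented rows. The aggregated feature names (`months_of_history`, `bureau_credit_count` and so on) are hypothetical, and the aggregations chosen are only examples.

```python
import pandas as pd

# Toy secondary and tertiary tables (illustrative values).
bureau = pd.DataFrame({
    "SK_ID_CURR": [1, 1, 2],
    "SK_ID_BUREAU": [10, 11, 20],
    "AMT_CREDIT_SUM": [1000.0, 3000.0, 500.0],
})
bureau_balance = pd.DataFrame({
    "SK_ID_BUREAU": [10, 10, 11, 20, 20, 20],
    "MONTHS_BALANCE": [-1, -2, -1, -1, -2, -3],
})

# Level 1: tertiary rows -> one row per previous credit (SK_ID_BUREAU).
per_credit = (
    bureau_balance.groupby("SK_ID_BUREAU")
    .agg(months_of_history=("MONTHS_BALANCE", "count"))
    .reset_index()
)
bureau_ext = bureau.merge(per_credit, on="SK_ID_BUREAU", how="left")

# Level 2: previous credits -> one row per application (SK_ID_CURR).
per_client = (
    bureau_ext.groupby("SK_ID_CURR")
    .agg(
        bureau_credit_count=("SK_ID_BUREAU", "count"),
        bureau_credit_sum_mean=("AMT_CREDIT_SUM", "mean"),
        bureau_months_mean=("months_of_history", "mean"),
    )
    .reset_index()
)
print(per_client)
```

The resulting frame has one row per SK_ID_CURR and can be left-joined onto the application data.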

Modelling

Upsampling to Handle Imbalanced Classes

Model predictions can be unreliable on datasets with a disproportionate ratio of observations in each class. As this data-set has only around 8% defaults, I decided to upsample the defaults to address this issue.

30% of the train data was held out for validation. In the remaining 70%, the smaller class (default) was upsampled.
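The split-then-upsample order can be sketched as below on synthetic data. Stratifying the split and upsampling the defaults to a 1:1 ratio are assumptions of this example; the post does not state the exact ratio used.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.utils import resample

# Synthetic imbalanced data (about 8% positives); illustrative only.
rng = np.random.default_rng(0)
df = pd.DataFrame({
    "x": rng.normal(size=1_000),
    "TARGET": (rng.random(1_000) < 0.08).astype(int),
})

# Hold out 30% for validation BEFORE upsampling, so that no duplicated
# default row can leak into the validation set.
train, valid = train_test_split(
    df, test_size=0.3, stratify=df["TARGET"], random_state=0
)

majority = train[train["TARGET"] == 0]
minority = train[train["TARGET"] == 1]

# Sample the defaults with replacement up to the size of the majority class.
minority_up = resample(
    minority, replace=True, n_samples=len(majority), random_state=0
)
train_up = pd.concat([majority, minority_up]).sample(frac=1.0, random_state=0)

print(len(valid), train_up["TARGET"].mean())
```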

Cross Validation

I used GridSearchCV from sklearn with 5-fold cross-validation, with the ‘scoring’ parameter set to ‘roc_auc’.

I tracked the train and test scores within the grid search and stored them in a dataframe for comparison.

I also kept a record of the validation results on the 30% hold-out data.
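A minimal sketch of this setup on synthetic data. The estimator and the `C` grid are placeholders chosen for the example; only the 5-fold cross-validation and the `roc_auc` scoring come from the text above.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV

# Synthetic imbalanced data standing in for the 70% training portion.
X, y = make_classification(
    n_samples=400, n_features=10, weights=[0.9, 0.1], random_state=0
)

grid = GridSearchCV(
    LogisticRegression(max_iter=1000),
    param_grid={"C": [0.01, 0.1, 1.0]},  # illustrative grid
    scoring="roc_auc",
    cv=5,
    return_train_score=True,  # needed to compare train vs. test scores
)
grid.fit(X, y)

# Collect per-candidate train and test (cross-validation) scores.
results = pd.DataFrame(grid.cv_results_)[
    ["param_C", "mean_train_score", "mean_test_score"]
]
print(results)
print(grid.best_params_, grid.best_score_)
```

A large gap between `mean_train_score` and `mean_test_score` for a candidate is a sign of overfitting at that setting.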

Final Model Performances

I used three models on the final train (70%), validation (30%) and test (kaggle) data. The performances were:

The best performance on the Kaggle data came from transforming the features into principal components before LogisticRegression, most probably because the uncorrelated principal components lowered the model variance.

ROC Curve

ROC curve on the validation data for the Stochastic Gradient Boosting model, which performed best on the validation data:

Main Classification Metrics

Classification metrics at the default threshold (0.5) were:

The F1 score is the harmonic mean of precision and recall.

The confusion matrix at the default threshold (0.5) was:

Decision Function

The Kaggle contest was about the ROC AUC score, which does not involve choosing a particular threshold probability for classifying a loan as default. I decided to go further and find the optimal threshold using a loss function based on the real loss due to false-negatives and the opportunity loss due to false-positives.

There is a real-loss to a lender when a borrower does not pay back. There will be a real-loss to Home Credit whenever someone predicted to be no-default, defaults (false-negatives).

At the same time, lenders make money through lending, so there is an opportunity-loss when someone who should have been lent money, being a no-default case, is rejected because of being predicted as default (false-positives).

So the loss on a loan due to misclassification depends on p(false-negative) and p(false-positive). These probabilities depend on the model's predicted probabilities of default and on the threshold for classifying a loan as default.

Total loss is the sum of:

- Expected Real Loss = p(false-negative | threshold, model) x loss-given-default

- Expected Opportunity Loss = p(false-positive | threshold, model) x lost-gain

For example:

As per the confusion matrix above for the SGB model at the default threshold (0.5):

- p(FN) = 2592/92254 = 0.028

- p(FP) = 21296/92254 = 0.231

For a loan of $100,000, if the loss-given-default is 70% and the total gain over the life of an approved loan that doesn't default is 20%, the expected loss due to misclassification will be:

- Real Loss = 0.028 x 100,000 x 70% = $1,960

- Opportunity Loss = 0.231 x 100,000 x 20% = $4,620

- Total Loss = 1,960 + 4,620 = $6,580
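The arithmetic above can be reproduced in a few lines. The counts, loan size and percentages are the ones quoted in this section; the variable names are mine.

```python
# Counts quoted from the confusion matrix at threshold 0.5.
false_negatives = 2592
false_positives = 21296
n_validation = 92254

loan_amount = 100_000
loss_given_default = 0.70  # share of the loan lost when a borrower defaults
gain_over_life = 0.20      # share earned on a good loan that was approved

# Probabilities rounded to three decimals, as in the text.
p_fn = round(false_negatives / n_validation, 3)
p_fp = round(false_positives / n_validation, 3)

real_loss = p_fn * loan_amount * loss_given_default
opportunity_loss = p_fp * loan_amount * gain_over_life
total_loss = real_loss + opportunity_loss

print(f"p(FN)={p_fn}, p(FP)={p_fp}")
print(f"real={real_loss:,.0f} opportunity={opportunity_loss:,.0f} total={total_loss:,.0f}")
```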

This loss will be different at different thresholds for any given model. For any given model, the optimal threshold will be the one that minimizes this loss.

Following are the loss-values for a range of values of threshold:

Plot of total_loss for different threshold values, along with the optimal threshold (for minimum total loss):
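The threshold search can be sketched as a simple scan. The labels and probabilities below are toy values rather than the model's actual predictions, and `expected_loss` is a hypothetical helper written for this illustration using the same loan size, loss-given-default and gain assumptions as above.

```python
import numpy as np

def expected_loss(y_true, y_prob, threshold,
                  loan_amount=100_000, loss_given_default=0.70,
                  gain_over_life=0.20):
    """Expected misclassification loss per loan at a given threshold."""
    y_pred = (y_prob >= threshold).astype(int)
    p_fn = np.mean((y_pred == 0) & (y_true == 1))  # approved, but defaults
    p_fp = np.mean((y_pred == 1) & (y_true == 0))  # rejected, but was good
    return loan_amount * (p_fn * loss_given_default + p_fp * gain_over_life)

# Toy labels (1 = default) and predicted probabilities; illustrative only.
y_true = np.array([0, 0, 0, 0, 1, 0, 1, 0, 1, 1])
y_prob = np.array([0.05, 0.10, 0.20, 0.30, 0.35, 0.40, 0.60, 0.65, 0.80, 0.90])

# Scan a grid of thresholds and keep the one with the lowest total loss.
thresholds = np.linspace(0.025, 0.975, 20)
losses = np.array([expected_loss(y_true, y_prob, t) for t in thresholds])
best_threshold = float(thresholds[np.argmin(losses)])

print(best_threshold, float(losses.min()))
```

Because a false-negative costs more per loan than a false-positive under these assumptions, the scan trades the two error types off directly instead of treating them as equal.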

Conclusion

ROC_AUC is a useful metric especially for imbalanced classes. But for business decisions this needs to be combined with other factors, e.g. loss_given_default.

For this model (StochasticGradientBoosting), with the given assumptions of loss-given-default and gain-over-life-of-loan, optimal threshold for minimizing misclassification-loss is 0.715.

Challenges

- Computing power - For this big data-set computing power better than my laptop would have been helpful.

- Limited time - One week was not enough to explore all the variables completely.

- Lack of complete information about variables - The way some of the data was tracked was not clear. E.g. for credit cards, in some cases the unpaid amount was given as a negative debt, while in other cases the difference between the card limit and the unpaid amount was given as a positive debt.

Future Work

- Develop business understanding to do better feature engineering, imputation of missing values and handling of extreme values

- Use advanced methods like neural networks for classification

- Do more work on the decision function by including business factors in the scoring parameters of models