Value-based PC Part Selection

The final PC build application can be accessed via this link.

For people looking for more power and upgradability than a laptop can provide, desktop computers offer a reasonable alternative. Unfortunately, the pre-built computers that major manufacturers sell are considerably overpriced given the quality of their components. For example, gaming computers often come with high-end processors and graphics cards packaged with the cheapest available power supply and motherboard. Cutting corners does not make much sense when it can lead to your computer bursting into flames.

What's the alternative? Build your own PC!

Without prior experience or guidance, entering the world of desktop computers can be daunting. To begin, how do we know that a given collection of parts will function properly when assembled? There are eight key components that comprise the core of a PC build, as shown in the basic chart below.

In the past, consumers would have to carefully read product pages and spec sheets to be roughly 90% certain that everything would work together. Enter PCPartPicker.com. In mid-2014, PCPartPicker became the go-to site for checking compatibility between all parts of a build. As of January 2017, this site was valued at $1.9 million with roughly 860,000 daily page views.

The other obstacle to PC building is finding "value", i.e. parts that are reliable and deliver the promised specifications (specs). As of now, the only way to find value is to use a build guide that gives suggestions about trusted brands. These guides can be heavily biased by popularity and do not tell the consumer where generics are just as good as name brands.

Motivation: make value-based PC part selection easier via an automated recommendation engine

Scraping PCPartPicker with PhantomJS and BeautifulSoup

To build such a recommendation engine, we need data on each available product. Since PCPartPicker contains specifications and ratings on all relevant components, as well as updated prices from major retailers, it is the logical choice to scrape for information. Combining simple PhantomJS, bash, and BeautifulSoup scripts made this process relatively quick and easy.

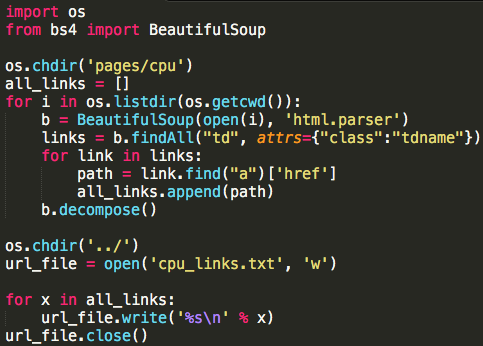

First, gather all of the product URLs from each subcategory page (here for the CPU subcategory):

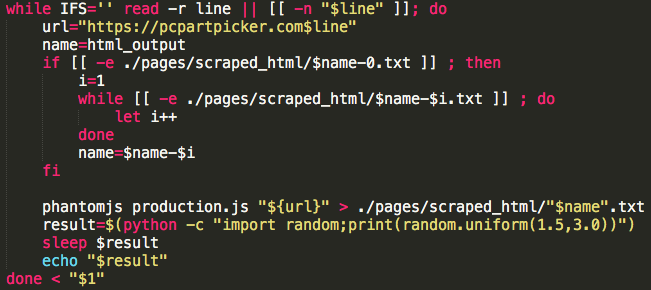

Next, parse each product URL and append it to a compilation file:
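The original script for this step is not reproduced here. As a rough sketch of the idea, the snippet below pulls product links out of a saved subcategory page and appends them to a compilation file. It swaps in Python's standard-library `html.parser` for BeautifulSoup to stay dependency-free, and the `/product/` link prefix, the file name, and the helper names are assumptions made for illustration.

```python
import os
import tempfile
from html.parser import HTMLParser


class ProductLinkParser(HTMLParser):
    """Collect unique hrefs that start with an assumed product-page prefix."""

    def __init__(self, prefix="/product/"):
        super().__init__()
        self.prefix = prefix  # assumption: product links share this prefix
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href and href.startswith(self.prefix) and href not in self.links:
            self.links.append(href)


def append_product_urls(html, out_path, base_url="https://pcpartpicker.com"):
    """Append every product URL found in `html` to the compilation file."""
    parser = ProductLinkParser()
    parser.feed(html)
    with open(out_path, "a", encoding="utf-8") as handle:
        for link in parser.links:
            handle.write(base_url + link + "\n")
    return parser.links


# Toy subcategory page standing in for the saved PhantomJS output.
sample_html = """
<a href="/product/abc123/example-cpu-one">CPU one</a>
<a href="/products/cpu/">Back to list</a>
<a href="/product/def456/example-cpu-two">CPU two</a>
<a href="/product/abc123/example-cpu-one">CPU one again</a>
"""

out_path = os.path.join(tempfile.mkdtemp(), "product_urls.txt")
found = append_product_urls(sample_html, out_path)
```

Opening the file in append mode means each subcategory page can be processed in turn while the list of URLs accumulates in one place.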

Finally, use the scraped URLs to scrape each individual product page:

After all of this scraping the end product is a collection of pages such as the one below (in raw HTML format). Of specific interest for this project:

- Name: the product name, sometimes containing information not found elsewhere on the page

- Ratings: average value and number of ratings on PCPartPicker

- Specifications: a ragged list of features for each component, varying wildly in content

- Price: the lowest value of the Prices table minus one cent and not accounting for shipping

Data preparation and value analysis with Pandas

The data produced from the scraping process was relatively unstructured and extraction of specifications required RegEx and unit conversion. Since this is a standard and well-covered process, I won't delve into the details here.
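For readers who want a flavor of that step anyway, here is a minimal sketch of regex extraction plus unit conversion for a memory size field. The input formats (`"2 x 4GB"`, `"16GB (2 x 8GB)"`) and the function name are assumptions for illustration, not the exact strings or code used in the project.

```python
import re

# Conversion factors to gigabytes.
_UNIT_TO_GB = {"MB": 1 / 1024, "GB": 1, "TB": 1024}

# Optional "<count> x" prefix, then a size and a unit.
_SIZE_PATTERN = re.compile(
    r"(?:(\d+)\s*x\s*)?(\d+(?:\.\d+)?)\s*(MB|GB|TB)", re.IGNORECASE
)


def total_memory_gb(spec):
    """Return total capacity in GB for the first size found, or None."""
    match = _SIZE_PATTERN.search(spec)
    if match is None:
        return None
    count = int(match.group(1) or 1)  # a missing count means a single stick
    size = float(match.group(2))
    return count * size * _UNIT_TO_GB[match.group(3).upper()]
```

For example, `total_memory_gb("2 x 4GB")` multiplies the stick count by the per-stick size, while a string with no recognizable size returns `None` so the row can be flagged instead of silently set to zero.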

More interesting is the determination of the most valuable quantities for each component. A link to the slides containing analysis can be found in the sidebar of the final application.

Example analysis: Memory

Random access memory (RAM, memory) is on-motherboard storage used for quick access of frequently used data. In simple terms, having more RAM means that your computer can run more processes/applications at the same time without slowing down.

For desktop computers, RAM is sold in sticks with capacity between 2 and 32GB. Typically 2 sticks are used for dual-channel mode which increases the overall performance, i.e. 2 sticks of 4GB run faster than 1 stick of 8GB RAM. While other components such as CPU and GPU have only two or three competing companies, RAM seems to have more options. Can the scraped data tell us what to look for in terms of RAM value?

Below is the correlation heatmap for the memory dataframe. The most significant takeaway is the extremely strong correlation (0.94) between the product price ($) and the total memory (GB). The presumption from this chart is that there is limited advantage to buying higher priced RAM, especially considering the marginal correlation (0.19) between price and rating value.

Our suspicions are confirmed when plotting total memory vs. price in a scatterplot. We see that this strong correlation is reinforced by a strong linear relationship. Highlighted in red is the effect of branding: selling a product with similar specifications at a higher price than other manufacturers. In essence, there is limited advantage to paying for a name-brand RAM supplier: it is better to get the cheapest possible RAM.
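The two quantities behind this analysis, the correlation coefficient and the price per gigabyte, are easy to compute by hand. The sketch below uses plain Python in place of Pandas, and the four kits are made-up values chosen only to illustrate the branding effect; they are not the scraped data.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def price_per_gb(kits):
    """Sort kits from cheapest to most expensive per gigabyte."""
    return sorted(kits, key=lambda kit: kit["price"] / kit["total_gb"])


# Made-up kits for illustration only; these are not the scraped values.
kits = [
    {"name": "generic 8GB", "total_gb": 8, "price": 48.0},
    {"name": "branded 8GB", "total_gb": 8, "price": 65.0},
    {"name": "generic 16GB", "total_gb": 16, "price": 92.0},
    {"name": "generic 32GB", "total_gb": 32, "price": 190.0},
]
r = pearson([k["total_gb"] for k in kits], [k["price"] for k in kits])
ranked = price_per_gb(kits)
```

In this toy set the branded kit lands last in the price-per-GB ranking even though its capacity matches a cheaper kit, which is the pattern highlighted in red on the scatterplot.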

As mentioned, there is no "trusted name" in RAM production. Logically, the above relationship makes sense: more competitors should lead to lower prices for the consumer, assuming that production is relatively trivial.

Database formation and querying with SQL

Instead of reading the entirety of our data into memory every time we want to return a build recommendation, we can save our cleaned datasets into a SQL database. This way elements can be queried as needed, i.e. returning one compatible component instead of the entire collection. The SQLAlchemy (create_engine) and Pandas (to_sql) packages make this process straightforward in Python:
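The original snippet is not reproduced here. The sketch below shows the same idea with only the standard-library `sqlite3` module, which is a substitution for the SQLAlchemy and Pandas calls named above. The `save_table` helper, the column names, and the two rows are hypothetical.

```python
import sqlite3


def save_table(conn, table, rows):
    """Write a list of same-keyed dicts to a table, replacing any old copy."""
    columns = list(rows[0])
    col_sql = ", ".join(f'"{c}"' for c in columns)
    placeholders = ", ".join("?" for _ in columns)
    conn.execute(f'DROP TABLE IF EXISTS "{table}"')
    conn.execute(f'CREATE TABLE "{table}" ({col_sql})')
    conn.executemany(
        f'INSERT INTO "{table}" ({col_sql}) VALUES ({placeholders})',
        [tuple(row[c] for c in columns) for row in rows],
    )
    conn.commit()


# In-memory database for the example; the article writes to pcpart.db.
conn = sqlite3.connect(":memory:")
save_table(conn, "memory", [
    {"name": "kit-a", "total_gb": 8, "price": 48.0, "rating": 4.5},
    {"name": "kit-b", "total_gb": 16, "price": 92.0, "rating": 4.7},
])
# With Pandas and SQLAlchemy the equivalent is roughly:
#   engine = create_engine("sqlite:///pcpart.db")
#   df.to_sql("memory", engine, if_exists="replace", index=False)
```

Repeating the call once per cleaned dataset gives one table per component.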

Here we have created eight distinct tables in pcpart.db, which we can query in R using the RSQLite package. Specifically, we can formulate a general SQL query as shown below.

Notes:

- Spec_vec contains the column names we want to get from the table of interest

- Filter_str contains any conditions that need to be placed on the component of interest (e.g. price > 0 by default)
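The app builds this query in R with RSQLite. To keep the examples in one language, here is a Python sketch of the same pattern using `sqlite3`. The `build_query` helper, the `cpu` table layout, and the sample rows are assumptions; only the roles of `spec_vec` and `filter_str` come from the notes above.

```python
import sqlite3


def build_query(table, spec_vec, filter_str="price > 0"):
    """Assemble a SELECT from the wanted columns and a filter condition."""
    columns = ", ".join(spec_vec)
    return f"SELECT {columns} FROM {table} WHERE {filter_str}"


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE cpu (name TEXT, socket TEXT, price REAL, rating REAL)")
conn.executemany("INSERT INTO cpu VALUES (?, ?, ?, ?)", [
    ("cpu-a", "AM4", 120.0, 4.6),
    ("cpu-b", "LGA1151", 0.0, 4.9),  # price 0 assumed to mean no listed price
    ("cpu-c", "AM4", 210.0, 4.8),
])

query = build_query("cpu", ["name", "price"], "price > 0 AND socket = 'AM4'")
rows = conn.execute(query).fetchall()
```

Plain string assembly like this is only reasonable when the column names and filters come from the app's own controls. Free-text user input would call for parameterized queries instead.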

Building a recommendation engine with Shiny

The problem of selecting compatible parts for a PC build can be described theoretically via graph theory. The eight core components and their compatibility form an undirected graph where the motherboard (6 edges) and the CPU (4 edges) are the most significant nodes. The PSU is linked to all other parts by the requirement that it must supply more wattage than the other components draw in total. In practice, the majority of PSUs can handle all possible combinations of other components with ease, and thus the links between the PSU and other components are weak.
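The PSU constraint is the simplest of these links to write down. A minimal sketch, where the function name and the wattage figures are illustrative assumptions and not values from the scraped data:

```python
def psu_is_sufficient(psu_watts, component_watts):
    """True when the PSU output exceeds the summed draw of the other parts."""
    return psu_watts > sum(component_watts)


# Illustrative wattages only; not taken from the scraped data.
draw = {"cpu": 95, "gpu": 150, "motherboard": 50, "memory": 6, "storage": 8}
total_draw = sum(draw.values())
```

With these numbers the total draw is 309 W, so a 450 W unit passes the check and a 300 W unit does not.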

There are now two possible components that can be the starting point of our build selection: the motherboard or CPU. Since the motherboard has few numerical specifications that can be easily translated to value, I selected the CPU as the "pivot point": the component that is selected first which influences the choice of every other component.

Once the CPU is selected, the motherboard is determined via socket compatibility and the remaining components are selected in parallel, ensuring compatibility of the final build. In practice, this means that our parameter space has been greatly reduced (for better or worse) by selecting the CPU from a smaller pool of high value/high rating/high spec components. If we want to map the entire parameter space of builds to find overall build value, we would need to construct an algorithm to loop through all CPUs and record all possible build combinations. Alternatively, we could pivot on a different component (such as the motherboard) and compare build value to see if one is more comprehensive or preferable than the other.
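The pivot idea can be sketched in a few lines: pick the CPU first, then restrict the motherboards to those sharing its socket. The part records, field names, and the choice of rating as the ranking key are all assumptions for illustration, and the app itself performs this step with SQL queries from R.

```python
def select_cpu_and_motherboard(cpus, motherboards, key="rating"):
    """Pick the best CPU by `key`, then the best socket-compatible board."""
    cpu = max(cpus, key=lambda part: part[key])
    compatible = [m for m in motherboards if m["socket"] == cpu["socket"]]
    if not compatible:
        return cpu, None  # no board fits this CPU in the candidate pool
    board = max(compatible, key=lambda part: part[key])
    return cpu, board


# Hypothetical parts for illustration only.
cpus = [
    {"name": "cpu-a", "socket": "AM4", "rating": 4.6},
    {"name": "cpu-b", "socket": "LGA1151", "rating": 4.8},
]
motherboards = [
    {"name": "board-x", "socket": "AM4", "rating": 4.9},
    {"name": "board-y", "socket": "LGA1151", "rating": 4.2},
    {"name": "board-z", "socket": "LGA1151", "rating": 4.4},
]
cpu, board = select_cpu_and_motherboard(cpus, motherboards)
```

Note that the highest rated board overall (board-x) is never considered once the CPU fixes the socket, which is the reduction in parameter space described above.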

There are currently three operational modes for the recommendation engine with various levels of implementation.

- Rating-first

- Spec-first

- Price-first

Rating-first

This is the simplest mode: after compatibility is ensured, the highest rated component is selected. In the default state of the app, this mode can be accessed by sliding each component's 'rating weight' slider to the 1 position.

This option is most useful for components where value is harder to determine: motherboards and cases. By putting price limits on these components, an appropriate choice can be selected.

Spec-first

The default mode of the application is spec-first: select the highest value component after compatibility is ensured.

For the majority of components we can assign a numeric variable which indicates the objective value. At this early stage of development, value is determined by the most significant numeric factors of each component and implemented by SQL "order by" commands. Given more time, a rigorous algorithm for determining the value of each component would be developed, ideally with an overall price filter to generate the best build per dollar.

Price-first

As is implied, price-first selects each component first by compatibility, then price, and finally by rating or spec value. This can be enabled in the app at the bottom of the first filter tab via the "price priority" checkbox.

While most restrictive, this option has the most value to a potential user: rating values and price ranges can be adjusted to generate more realistic builds than can be produced with the first two modes.
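Since the modes are implemented through SQL "order by" commands, the difference between them can be sketched as three ORDER BY clauses over the same filtered table. This is a simplified Python illustration with `sqlite3`: the `recommend` helper, the `memory` table, and the use of `total_gb` as the spec column are assumptions, and the app's rating-weight sliders are not modeled.

```python
import sqlite3

# One ORDER BY clause per mode; column choices are assumptions.
ORDER_BY = {
    "rating": "rating DESC",
    "spec": "total_gb DESC",
    "price": "price ASC, rating DESC",
}


def recommend(conn, table, mode, filter_str="price > 0"):
    """Return the name of the top component for the given mode, or None."""
    query = (
        f"SELECT name FROM {table} WHERE {filter_str} "
        f"ORDER BY {ORDER_BY[mode]} LIMIT 1"
    )
    row = conn.execute(query).fetchone()
    return row[0] if row else None


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE memory (name TEXT, price REAL, rating REAL, total_gb INTEGER)")
conn.executemany("INSERT INTO memory VALUES (?, ?, ?, ?)", [
    ("stick-a", 30.0, 4.8, 4),   # made-up rows for illustration
    ("stick-b", 95.0, 4.2, 16),
    ("stick-c", 25.0, 4.5, 4),
])
picks = {mode: recommend(conn, "memory", mode) for mode in ORDER_BY}
```

Each mode returns a different stick from the same three rows, and tightening `filter_str` (for example a price minimum) changes the pool before any ordering is applied.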

Example Usage

- Enable "price priority"

- Note that 2GB of memory is unacceptable for a modern operating system

  - Increase the price minimum to ~$25 for 4GB

  - Increase the price minimum to ~$50 for 8GB

- Change the PSU to fully modular with Bronze efficiency

  - Produces a good quality, cheap unit for only $35

- Increase the minimum rating on all components slider to get a well reviewed CPU and motherboard

- Remove the overclockable filter on GPUs to reduce the price on that component

Final result: $283 build with an average customer rating of 4.41

In practice, it would be useful to have a couple of dropdown choices for parts of interest such as CPU and GPU so that the user could generate compatible value-based builds with those selections in mind. For now, these users could use this tool to assess the value of other components.

Future development will focus on improving the algorithm for value-based selection and implementing user interaction as mentioned above. Another possible development route would include setting use-based filters such as high-end gaming, web-browsing-only, etc.