Renthop Kaggle Competition: Team Data Jedys

Introduction

This post is about the third of the four projects we are supposed to deliver at the NYC Data Science Academy Data Science Bootcamp program. The requirements were:

For this project, your primary task is to employ machine learning techniques to accurately make predictions given a dataset. The framework will be through the lens of the Two Sigma Connect: Rental Listing Inquiries from Kaggle. While the primary goal of Kaggle competitions is generally focused on predictive accuracy, you will be expected to lead your audience through descriptive insights as well. For the purposes of your project you will aim to not only create a model that predicts well, but also allow yourself to describe data insights drawn from exploration.

Everybody from bootcamp cohort 8 was assigned the same project. In contrast to the first two projects though, this was a team effort. Daniel Epstein, Jessie Gong Zhengxiang, Stefan Heinz, Yvonne Lau, and Ethan Weber contributed to this project.

Source Data

The data at hand were apartment rental listings in New York City from the website renthop.com. The training set for modeling consisted of 49,352 observations and 14 variables:

| bathrooms | bedrooms | bldg_id | created | descr | displ_addr | features | lat | list_id | mng_id |

| photos | price | street_addr | interest_level |

A test set (74,659 records x 13 variables) was also available, along with a ZIP file containing the roughly 700,000 images used in the rental listings of the two datasets.

The target variable was interest_level, which was divided into 3 categories: high, medium and low. The goal in this competition was to "predict how popular an apartment rental listing is based on the listing content like text description, photos, number of bedrooms, price, etc."

Basic Exploratory Data Analysis

Interest Level

The training dataset was heavily imbalanced: listings with a low interest_level outnumbered those with medium interest by 3.1x and those with high interest by 8.9x.

Price x Interest Level

The histogram and density plot show that lower-priced apartments draw more high-level interest than higher-priced ones.


Location

Apartments in this dataset are located all over New York City, though the majority are located in Manhattan. This chart shows all the apartments plotted on a map with the color coding indicating the particular interest_level.

The map shows that most of the apartments that fall into the high interest_level category are not located in Manhattan but in Brooklyn. The majority of the listings for Manhattan only received low interest. Taken together with what we learned from the density plot above, this suggests that prices for apartments in Brooklyn are lower than those in Manhattan.

Feature Engineering

Feature Importance

The feature importance plot below shows the most important features for our best model. We will go on to describe how we created the various features from the columns already present in the dataset.

Price

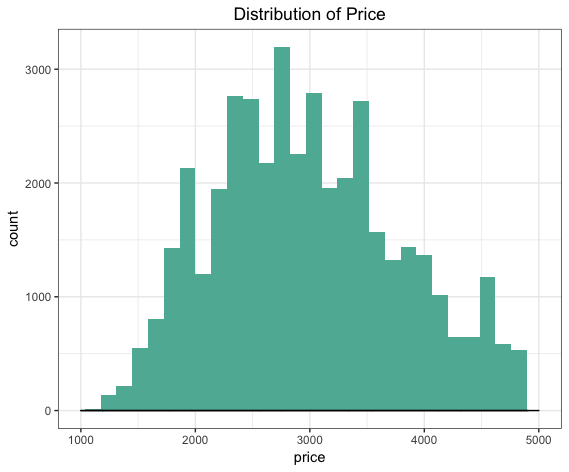

After some initial data cleaning, this histogram shows that the average price for an apartment in New York City, at least for those listed on renthop.com, seems to be $3,032 (median: $2,950).

In the rental market, price heavily influences level of interest. However, a $2,500 1-br won't raise the same level of interest as a 3-br for the same price. For a more "apples-to-apples" comparison, we created price/room and price/bed features. The number of bedrooms here varied from 0 to 4, and the number of bathrooms from 1 to 7.
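As a rough illustration of the price/bed and price/room idea, here is a minimal Python sketch. The function name, the definition of "rooms" as bedrooms plus bathrooms, and the treatment of studios (0 bedrooms) are assumptions made for the example; the post does not spell out how these cases were handled.

```python
def price_per_unit(price, bedrooms, bathrooms):
    """Return (price_per_bed, price_per_room) for one listing.

    Assumptions (not from the original post):
    - a studio (0 bedrooms) counts as one sleeping area, so the
      division is always defined;
    - "rooms" means bedrooms + bathrooms.
    """
    beds = max(bedrooms, 1)
    rooms = max(bedrooms + bathrooms, 1)
    return price / beds, price / rooms


# Hypothetical listings: same price, different sizes, plus a studio.
listings = [
    {"price": 2500, "bedrooms": 1, "bathrooms": 1},
    {"price": 2500, "bedrooms": 3, "bathrooms": 1},
    {"price": 2100, "bedrooms": 0, "bathrooms": 1},
]
for row in listings:
    row["price_per_bed"], row["price_per_room"] = price_per_unit(
        row["price"], row["bedrooms"], row["bathrooms"]
    )
```

With these numbers the 3-br comes out much cheaper per bed than the 1-br at the same price, which is the signal the raw price column hides.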

Timestamps

The timestamp column created came in the standard MySQL format YYYY-MM-DD HH:MM:SS. We converted it from character to POSIXct so that we could apply date arithmetic functions and extract details such as week, weekday and hour.
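We did this step in R, but the same idea can be sketched in Python with the standard library. The function name and the exact set of extracted fields are illustrative assumptions.

```python
from datetime import datetime


def timestamp_features(created):
    """Parse a 'YYYY-MM-DD HH:MM:SS' string and derive calendar features."""
    ts = datetime.strptime(created, "%Y-%m-%d %H:%M:%S")
    return {
        "week": ts.isocalendar()[1],  # ISO week number
        "weekday": ts.weekday(),      # Monday = 0 ... Sunday = 6
        "hour": ts.hour,
        "day_of_month": ts.day,
    }


# Hypothetical example value in the format described above.
features = timestamp_features("2016-06-24 07:54:24")
```

The parsed value carries no time zone, so the hour feature inherits whatever zone the source timestamps were recorded in.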

Although renthop.com is listed as a New York company, the timestamps might not be in Eastern Time but in Pacific Time, because their servers seem to be hosted in San Francisco.

Location Clustering

Level of interest is also dependent on price with respect to location. A $2,000 1-bedroom apartment should lead to a higher level of interest in Hell's Kitchen than in The Bronx. However, the original dataset only has lat/long information. This subway map shows the median rents for 1-bedroom apartments in New York City as identified by their closest subway stop for the years 2015-2016.

Because we were limited to the data set provided by kaggle.com, we could not link the GPS coordinates or addresses back to information about the neighborhood a given apartment is located in. We therefore used the density-based clustering algorithm DBSCAN to create the neighborhoods ourselves. Given a set of points in some space, DBSCAN groups points that are closely packed together (i.e. points with many neighbors nearby), and marks points as outliers if they lie alone in low-density regions. This clustering resulted in 2,487 neighborhoods.
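To make the DBSCAN idea concrete, here is a small, unoptimized pure-Python sketch on a handful of made-up coordinates. It is not the code we ran: the radius of 100 meters, the minimum of 3 points, the haversine distance and all names are assumptions chosen for the example, and a library implementation would be the practical choice for ~50,000 listings.

```python
import math


def haversine_m(a, b):
    """Great-circle distance in meters between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371000 * math.asin(math.sqrt(h))


def dbscan(points, eps_m, min_pts):
    """Label each point with a cluster id (0, 1, ...) or -1 for noise."""
    UNVISITED, NOISE = None, -1
    labels = [UNVISITED] * len(points)

    def neighbors(i):
        # Brute-force neighborhood query; includes the point itself.
        return [j for j, q in enumerate(points)
                if haversine_m(points[i], q) <= eps_m]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not UNVISITED:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = NOISE  # may later be claimed as a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: joins, does not expand
            if labels[j] is not UNVISITED:
                continue
            labels[j] = cluster
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_pts:  # core point: keep expanding
                queue.extend(j_neighbors)
    return labels


# Made-up coordinates: two tight groups and one isolated point.
points = [
    (40.7590, -73.9845), (40.7592, -73.9847), (40.7588, -73.9843),
    (40.6782, -73.9442), (40.6784, -73.9440), (40.6780, -73.9444),
    (40.9000, -73.8000),
]
labels = dbscan(points, eps_m=100, min_pts=3)
```

Each cluster id then serves as a synthetic "neighborhood" label, while points labeled -1 are the outliers in low-density regions.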

Price x Location

Our intuition was that given the average rental price for a neighborhood, level of interest on a listing is expected to be higher for listings priced below market average. Hence, with the new set of neighborhoods obtained from DBSCAN, we generated "above market" and "below market" features as follows:

- price difference: difference between price of a listing and average price of a neighborhood

- price per room difference: difference between price per room of a listing and average price per room of a neighborhood.
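The two differences above could be computed along these lines. This is a hedged sketch: the field names (`neighborhood`, `price_per_room`, `price_diff`, `price_per_room_diff`) and the sample values are hypothetical, and the `neighborhood` value stands for whatever cluster label a listing received.

```python
from collections import defaultdict
from statistics import mean


def add_market_features(listings):
    """Add per-listing differences from the neighborhood averages."""
    by_hood = defaultdict(list)
    for row in listings:
        by_hood[row["neighborhood"]].append(row)

    for rows in by_hood.values():
        avg_price = mean(r["price"] for r in rows)
        avg_ppr = mean(r["price_per_room"] for r in rows)
        for r in rows:
            # Negative values mean "below market" for that neighborhood.
            r["price_diff"] = r["price"] - avg_price
            r["price_per_room_diff"] = r["price_per_room"] - avg_ppr
    return listings


# Hypothetical listings in two neighborhoods.
market = add_market_features([
    {"neighborhood": 0, "price": 2000, "price_per_room": 1000},
    {"neighborhood": 0, "price": 3000, "price_per_room": 1000},
    {"neighborhood": 1, "price": 4000, "price_per_room": 2000},
])
```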

Apartment features and photos

Because the aforementioned columns features and photos of the training and test datasets were not strings but lists, i.e. multiple values for each row, we decided to omit both from our overall apartments dataset and created two separate data frames consisting only of aptID and feature or aptID and photo, respectively. We ended up with 267,906 features and 276,614 photos for our training set (test: 404,920, 419,598). From these 2 (or 4) datasets we created featureCount and photoCount columns for the main dataset in order to have some basic information about features and photos in there.

Dummy feature columns

From the abovementioned features we picked the top 20 most common and created so-called dummy variables, i.e. columns that are coded {0, 1}, depending on if a certain feature was present or not. This allowed us to separate features to see which are most predictive.
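A minimal sketch of how such dummy columns can be built is shown below. The lowercasing and whitespace stripping are assumptions added for the example (the post does not describe any normalization), and the function name and sample data are hypothetical.

```python
from collections import Counter


def top_feature_dummies(feature_lists, k=20):
    """Return the k most common features and one {0, 1} row per listing."""
    # Assumption: treat "Elevator", "elevator " etc. as the same feature.
    normalized = [{f.strip().lower() for f in feats} for feats in feature_lists]
    counts = Counter(f for feats in normalized for f in feats)
    top = [name for name, _ in counts.most_common(k)]
    rows = [{name: int(name in feats) for name in top} for feats in normalized]
    return top, rows


# Hypothetical feature lists for four listings; k=2 keeps the demo small.
top, dummies = top_feature_dummies(
    [
        ["Elevator", "Doorman"],
        ["elevator", "Laundry in Building", "doorman"],
        ["Elevator "],
        [],
    ],
    k=2,
)
```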

Photos

We analyzed all the photos that came in the 80 GB ZIP file and extracted basic information from them:

- Width, height in pixels

- RGB values

- Brightness (based on RGB values)

We then aggregated the above values for each apartment observation (i.e. listing_id), calculating mean and median for:

- Width, height

- Pixel ratio and size, and difference of ratio from golden ratio (1.618034)

- RGB values
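The per-photo values and their per-listing aggregation might look roughly like this. The brightness formula (Rec. 601 luma weights) is an assumption, since we only state that brightness was based on RGB values; the function names and the sample photos are hypothetical, and each photo is represented here by its width, height and mean RGB triple rather than by actual pixels.

```python
from statistics import mean, median

GOLDEN_RATIO = 1.618034


def brightness(r, g, b):
    # Assumption: Rec. 601 luma weights as the RGB-based brightness measure.
    return 0.299 * r + 0.587 * g + 0.114 * b


def photo_stats(width, height, mean_rgb):
    """Basic per-photo measurements."""
    ratio = width / height
    return {
        "width": width,
        "height": height,
        "ratio": ratio,
        "size": width * height,
        "golden_diff": abs(ratio - GOLDEN_RATIO),
        "brightness": brightness(*mean_rgb),
    }


def aggregate_listing(photos):
    """Mean and median of every per-photo measurement for one listing."""
    if not photos:
        return {}
    stats = [photo_stats(*p) for p in photos]
    out = {}
    for key in stats[0]:
        values = [s[key] for s in stats]
        out[key + "_mean"] = mean(values)
        out[key + "_median"] = median(values)
    return out


# Two hypothetical photos of one listing: (width, height, mean RGB).
agg = aggregate_listing([
    (640, 480, (100, 100, 100)),
    (1280, 960, (200, 200, 200)),
])
```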

The images were also clustered using k-means clustering. We later learned that there are better ways to do this kind of basic image analysis.

Text Feature Extraction: Tf-idf

In order to extract numerical features from the description content, we used Tf-idf and applied logistic regression models to predict interest levels based on the Tf-idf vectors for each apartment. The predicted probabilities of each interest level then became new features in our main model.

The above figure shows the workflow of our description feature extraction. After cleaning the text, we vectorized all nouns and adjectives, which generated an apartment-word matrix. We selected the top 1000 terms according to their weights as the input for our logistic regression models.
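The vectorization and term selection can be illustrated with a small pure-Python sketch. It simplifies our workflow in ways that should be read as assumptions: tokens come from a plain whitespace split instead of a noun/adjective filter, the idf variant is `ln(N / df) + 1`, and terms are ranked by their summed weight across all descriptions. All names and sample descriptions are hypothetical.

```python
import math
from collections import Counter


def tfidf_matrix(docs, top_k):
    """Return (vocab, matrix) with one row of Tf-idf weights per document."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)

    # Document frequency: in how many descriptions does each term occur?
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    idf = {t: math.log(n_docs / df) + 1.0 for t, df in doc_freq.items()}

    weights, totals = [], Counter()
    for tokens in tokenized:
        tf = Counter(tokens)
        row = {t: (c / len(tokens)) * idf[t] for t, c in tf.items()}
        weights.append(row)
        totals.update(row)  # accumulate each term's weight across documents

    # Keep the top_k terms by total weight (ties broken alphabetically).
    vocab = sorted(totals, key=lambda t: (-totals[t], t))[:top_k]
    matrix = [[row.get(t, 0.0) for t in vocab] for row in weights]
    return vocab, matrix


# Three made-up descriptions; we used the top 1000 terms, here only 3.
vocab, matrix = tfidf_matrix(
    ["sunny renovated kitchen", "renovated bathroom", "sunny sunny terrace"],
    top_k=3,
)
```

Each row of `matrix` is the kind of apartment-word vector that would be fed to the logistic regression models.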

In order to predict interest levels, we split the training dataset into two equal parts. We trained a logistic regression model on each of the two subsets and used each model to predict the opposite subset, so that no observation was predicted by a model that had seen it during training. For the test dataset, we trained a new logistic regression model on the entire training dataset and predicted interest levels with that model.
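The two-way scheme described above can be sketched generically. In this example a trivial class-frequency model stands in for logistic regression so the block stays self-contained; the function names, the positional (unshuffled) split and the toy data are all assumptions.

```python
CLASSES = ("high", "medium", "low")


def fit_prior(X, y):
    """Stand-in model: just remembers the class frequencies of its training set."""
    return {c: y.count(c) / len(y) for c in CLASSES}


def predict_prior(model, X):
    """Return the same class probabilities for every row."""
    return [dict(model) for _ in X]


def out_of_fold_predictions(X, y, fit, predict_proba):
    """Predict each half of the data with a model trained on the other half."""
    half = len(X) // 2
    first, second = range(0, half), range(half, len(X))
    oof = [None] * len(X)
    for train_idx, pred_idx in ((first, second), (second, first)):
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        preds = predict_proba(model, [X[i] for i in pred_idx])
        for i, p in zip(pred_idx, preds):
            oof[i] = p
    return oof


# Toy data: the halves have different labels, which makes leakage visible.
X = list(range(6))
y = ["low"] * 3 + ["high"] * 3
oof = out_of_fold_predictions(X, y, fit_prior, predict_prior)
```

Because the first half is predicted by a model that only saw the second half, its predictions here are all "high", which shows that no row's own label leaks into its feature.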

Now we can get the probabilities of our predictions, giving us three more columns of probabilities of high, medium and low interest levels for both training and test datasets. Finally, we used medium and high columns as new features in our main model.

Sentiment analysis

The text in the description column might yield interesting insights. However, as with free text entries everywhere, format, content etc. differ widely. We used sentiment analysis to try to get an idea of how the description might be perceived by users of the website. This resulted in 10 new variables conveying the strength of the following emotions for each description:

| anger | anticipation | disgust | fear | joy | sadness | surprise | trust | negative | positive |

These columns in our case contained values in the interval [0, 58], with higher values indicating a stronger presence of a particular emotion.
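One way such per-emotion scores can arise is by counting words of a description that a lexicon associates with each emotion. The sketch below uses a tiny made-up lexicon purely for illustration; it is not the lexicon or tool behind our numbers, and all names are hypothetical.

```python
import re

EMOTIONS = (
    "anger", "anticipation", "disgust", "fear", "joy",
    "sadness", "surprise", "trust", "negative", "positive",
)

# Hypothetical toy lexicon: word -> emotions it is associated with.
TOY_LEXICON = {
    "spacious": ("joy", "positive"),
    "luxury": ("joy", "positive", "anticipation"),
    "noisy": ("anger", "negative"),
    "trusted": ("trust", "positive"),
}


def emotion_counts(text):
    """Count, per emotion, how many words of the text are tagged with it."""
    counts = {e: 0 for e in EMOTIONS}
    for word in re.findall(r"[a-z']+", text.lower()):
        for emotion in TOY_LEXICON.get(word, ()):
            counts[emotion] += 1
    return counts


scores = emotion_counts("Spacious luxury apartment, spacious closets!")
```

Longer descriptions with many emotionally loaded words yield higher counts, which is consistent with an open-ended integer range rather than a {0, 1} coding.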

Putting it all together

Feature engineering was done by every member of our team. Some of us used R, while others explored options in Python. This led to a fragmented codebase with various features being added from different team members and sources over time. We created an R script to make sure that the final data frame we used for our modeling could easily be reproduced.

To achieve this, we integrated all the various R code chunks and the results from the computations done in Python (csv files). Once executed, the script creates the main data frame for either the train or the test input data and exports it to a file. It then does the same for the aforementioned separate photos and features data frames.

Model Selection & Tuning

Our model selection process began with an analysis of which models were possible to use and which would be best given the nature of the task. The project is a supervised classification problem. The training set contained nearly 50,000 observations, and over 100 columns. Many of those columns were engineered from other columns. While this produced novel and useful information, it also created multicollinearity.

One example of this was the relationship between the calculated cost per bed and the apartment price. Our final constraints were time and computing power. Our early results indicated that a tree-based model was our best option, but we tested a number of different approaches.

Models we experimented with include multiple logistic regression. Despite regularization, manual feature selection efforts and tuning, this model did not produce useful results. While we could deal with multicollinearity through regularization, this forced us to abandon useful information. Support Vector Machines may have been a better option, but these algorithms proved too computationally expensive to be useful given our time and computing power constraints.

We focused our efforts on trees when a simple random forest model outperformed our other models without serious tuning and feature selection efforts. Ultimately, XGBoost proved to be the most effective model. We attribute the advantage of gradient boosted trees over random forest to the way they are built: each new tree is fit to reduce a user-selected objective function, subject to user-selected regularization parameters, given the errors of the trees before it. A random forest, in contrast, grows its trees independently on bootstrap samples and considers a random subset of features at each split. XGBoost also outperformed GBM, which we attribute to its more flexible tuning parameters and its more efficient, parallelized processing.

We created a spreadsheet to organize our tuning process and a model to assist in parameter selection. Each team member submitted the parameters we tested to this spreadsheet. We then trained a random forest model on that spreadsheet to predict which parameter values might minimize logloss. This model quickly reached local minima, and did not prove to be very useful. Going forward, a similar model may be useful if we could introduce a randomness parameter.

Finally, we experimented with a neural network. We trained a simple neural network using TensorFlow and Keras. Training took 60 hours, which left no time for tuning. The resulting model produced a logloss of 0.6. We then ensembled this model with our XGBoost model using the geometric and harmonic mean. Had the results of the two models been uncorrelated, we might have seen a significant reduction in logloss. While the geometric mean did produce a logloss score very close to our XGBoost model (0.57), we were not able to produce superior results using these methods.
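For readers unfamiliar with these blending methods, here is a minimal sketch of geometric and harmonic mean ensembling of two probability vectors, together with the multi-class logloss used for scoring. Renormalizing the blended values so they sum to 1 is an assumption, as are the function names and the example numbers; the sketch also assumes strictly positive probabilities.

```python
import math


def _normalize(values):
    total = sum(values)
    return [v / total for v in values]


def geometric_blend(p, q):
    """Element-wise geometric mean of two probability vectors, renormalized."""
    return _normalize([math.sqrt(a * b) for a, b in zip(p, q)])


def harmonic_blend(p, q):
    """Element-wise harmonic mean of two probability vectors, renormalized."""
    return _normalize([2 * a * b / (a + b) for a, b in zip(p, q)])


def multiclass_logloss(y_true, probs, eps=1e-15):
    """Mean negative log of the probability assigned to the true class."""
    total = 0.0
    for label, p in zip(y_true, probs):
        clipped = min(max(p[label], eps), 1 - eps)
        total -= math.log(clipped)
    return total / len(y_true)


# Made-up predictions of two models for one listing (high, medium, low).
model_a = [0.6, 0.3, 0.1]
model_b = [0.2, 0.3, 0.5]
geo = geometric_blend(model_a, model_b)
har = harmonic_blend(model_a, model_b)
loss = multiclass_logloss([0], [[0.5, 0.25, 0.25]])
```

Both means pull the blend toward the lower of the two probabilities for each class, so they reward agreement between models more than a plain arithmetic average does.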

Once we had determined that XGBoost would give us the best predictive accuracy, we started tuning the model with a focus on decreasing overfitting. We decided to do the tuning manually while keeping a Google Spreadsheet containing the parameters for each model along with the training, validation and Kaggle logloss error. By tuning parameters manually, we were able to take advantage of multiple computers and adjust more quickly, something that would not have been possible when tuning parameters using grid search.

The parameters we changed from default values in the xgboost package were eta, gamma, max depth, column sampling per tree and subsampling.

Eta, or the learning rate, scales down the contribution of each newly added tree. A smaller eta reduces the amount of overfitting but increases the number of iterations that are required. We found our best results with an eta of 0.01.

The second parameter we tuned, lambda, controls the L2 regularization of the leaf weights in the XGBoost model.

Gamma sets the minimum loss reduction a new split must achieve before it is added to a tree. With the default value of 0, no such minimum is imposed. Increasing this value produces a model that overfits the training set to a lesser degree but does not necessarily minimize validation or test error. We found that a value of 0.175 for gamma was optimal for reducing the logloss of the test set.

Max depth limits how deep each tree may grow, and thereby the number of splits it can contain. A greater max depth value results in more complex trees that are better able to predict the training set but do not predict a test set well due to overfitting. A max depth of 7 gave us a model that was sufficiently complex without overfitting.

Our column sampling by tree was optimal at 0.8, which means that each tree sampled 80% of the features randomly, which serves to reduce overfitting and produce trees that do not require all of the features.

Subsampling was the last parameter that we modulated. We ended up going with a subsampling of 0.8, meaning that each iteration used a random 80% sample of the training set. Like the other parameters we adjusted, this served to reduce overfitting while still providing a model with accurate prediction.
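Collected in one place, the values reported above correspond to a parameter dictionary along the following lines. The five tuned values come from the text; the objective, number of classes and evaluation metric are assumptions that fit a three-class logloss problem, and lambda is left out because its value is not stated here.

```python
xgb_params = {
    # Assumptions for a three-class problem scored by logloss:
    "objective": "multi:softprob",
    "num_class": 3,
    "eval_metric": "mlogloss",
    # Values reported in the text above:
    "eta": 0.01,              # learning rate
    "gamma": 0.175,           # minimum loss reduction required for a split
    "max_depth": 7,           # maximum depth of each tree
    "colsample_bytree": 0.8,  # fraction of features sampled per tree
    "subsample": 0.8,         # fraction of rows sampled per iteration
}

# A dictionary like this would typically be passed to the xgboost training
# function together with the training data; the number of boosting rounds
# is not stated in this post and is therefore not shown.
```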

Conclusion

There are many avenues in image recognition that we could still explore to improve our model. Going further, we could use image recognition to compare the number of rooms listed with what is shown in the photos. We could also investigate whether the presence of floorplans has any impact on interest level.

The 20 apartment feature columns helped us get more accurate results; however, they made the XGBoost model considerably more expensive to train. One way to mitigate this might be to train a secondary model on these features to predict interest level, and then use its predictions in the XGBoost model instead of all the individual feature columns.