Renthop Kaggle Competition: Team Null

Contributed by:

Scott Edenbaum, Ray (Xu) Gao, Tommy (Yaxiong) Huang, and Dodge Coates. With educational backgrounds ranging from Mathematics and Computer Science to Financial Modeling, Team Null leveraged their analytical skills and programming proficiency to tackle the Kaggle challenge. Along the way, the team implemented several machine learning approaches, including stacking models, voting classifiers, and artificial neural networks, and finished in the top 50% of submissions.

Introduction:

Three-quarters of all NYC housing stock consists of rental units, and the NYC residential real estate market ranks as the second most expensive in the United States by cost per square foot. Finding suitable housing in a new city is challenging enough, but finding a good value can seem like finding the proverbial "needle in a haystack."

RentHop uses a wide array of data to sort rental listings by quality and help apartment-seekers find the right apartment. Two Sigma and RentHop, a portfolio company of Two Sigma Ventures, partnered with Kaggle to create a competition. Each team submits its model predictions and is ranked according to the log loss (aka "logloss") of those predictions. The models generate a classification prediction for the "Interest" variable, which has three possible values: "Low," "Medium," or "High."
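To make the ranking metric concrete, here is a minimal sketch of multi-class log loss in plain Python. The class order and the example probabilities are assumptions chosen for illustration; they are not competition data.

```python
import math

CLASSES = ("low", "medium", "high")  # assumed column order for the probabilities

def multiclass_log_loss(y_true, y_prob, eps=1e-15):
    """Mean negative log-probability assigned to the true class."""
    total = 0.0
    for label, probs in zip(y_true, y_prob):
        # Clip to avoid log(0), then renormalise so the row sums to 1.
        clipped = [min(max(p, eps), 1 - eps) for p in probs]
        p_true = clipped[CLASSES.index(label)] / sum(clipped)
        total += -math.log(p_true)
    return total / len(y_true)

# Hypothetical predictions for three listings.
y_true = ["low", "high", "medium"]
y_prob = [
    [0.7, 0.2, 0.1],
    [0.1, 0.3, 0.6],
    [0.5, 0.4, 0.1],
]

score = multiclass_log_loss(y_true, y_prob)
# A model that always predicts 1/3 for every class scores ln(3).
uniform = multiclass_log_loss(y_true, [[1 / 3, 1 / 3, 1 / 3]] * 3)
```

Lower is better: confident correct predictions push the score toward 0, while confident wrong predictions are penalised heavily.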

Approach:

- Inspect website - Getting a proper understanding of the dataset is one of the first steps towards completing our analysis, so we began by reviewing the RentHop website to get a better understanding of how the listing data is collected.

- RentHop's "Feature" variable is a collection of 18 checkboxes and an input text-box where users can enter a wide range of text inputs.

- Kernels - Kaggle offers access to a large community of users asking and answering data science questions pertaining to the challenge at hand. We found using the Kaggle "Kernels" increased our efficiency tremendously when doing our initial analysis of the project.

- Additional Tools

- Subplot - display multiple histograms/charts in a single image.

- EXIF.py - gathers image file metadata.

- PILLOW (aka PIL) - library for basic image processing.

- JSON file format - very well supported within Pandas.

- SSH, Screen - Unix terminal tools for remote connections and terminal multitasking (these let us run our models on a headless remote Linux server).

- Make - Unix tool used to process data files before loading into models.

- Git & GitHub - Version control for sharing project data.

- Emacs, Sublime, Text Wrangler, iPython Notebook, and Nano - various editors and development environments.

- Decide framework of tools for the project.

- Reasons for choosing Python programming language include:

- Consistent syntax among various libraries, and personal preference among team members.

- Portability - can easily run the same file on multiple computers with minimal tweaking.

- JSON (JavaScript Object Notation) - file structure that is both syntactically easy to understand and well supported in Python.

- Strong library of existing packages to assist in parsing ~83GB of directories and image files.

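As a small illustration of the JSON point above, the sketch below parses a tiny stand-in document with Python's standard `json` module and pivots it into one record per listing. The column-oriented layout and the field names are assumptions made for the example.

```python
import json

# Tiny stand-in for the competition file; the column-oriented layout and
# the field names here are assumptions for illustration.
raw = """
{
  "price":          {"10": 3000, "11": 5465},
  "bedrooms":       {"10": 3,    "11": 2},
  "features":       {"10": ["Doorman", "Elevator"], "11": []},
  "interest_level": {"10": "medium", "11": "low"}
}
"""

columns = json.loads(raw)

# Pivot {column: {row_id: value}} into one dict per listing.
row_ids = sorted(next(iter(columns.values())))
listings = [
    {"row_id": rid, **{col: values[rid] for col, values in columns.items()}}
    for rid in row_ids
]
```

With Pandas, `pandas.read_json` can load a file in this shape directly into a DataFrame, which is the route the tools list points to.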

Workflow:

- After the initial background research, the project followed this workflow:

- Manipulate Data - reformulate dataset with dummy variables

- generate derivative features and additional features from the given dataset

- EDA - Analyze the current variables used in the model

- visualize with appropriate charts

- Feature Selection - Choose quantity and select which features are used in the model

- Train/Tune Model - Run model with inputs derived from Feature Selection

- re-run the model with varying parameters specific to the underlying model

- Interpret Results - Determine the effect of the applied combination of features and model input parameters in order to optimize the model's performance.

- desirable results include increased model stability and decreasing model error


Execution:

Feature Pre-Process

The first step in our execution was to make some base assumptions about which of the included features are most and least important, in order to create a benchmark. We assumed (incorrectly) that building ID, manager ID, and listing ID would have little to no positive impact on our models. For our purposes, display address and street address were of no benefit to our model.

There are 124,011 listings, each with 0 - 13 images, totaling ~700k images at about 83 GB in size. We assumed that there would be a link between better quality images and higher interest property listings, so we collected the average brightness and average luminance, both on a 0 - 255 scale (0 - dark, 255 - bright). In addition, we used exif.py to gather metadata, and collected the average image file size and the number of images for each listing, to great success.

We took a look at the basic numeric variables (including: Latitude, Longitude, Price, Bathroom, and Bedroom) in order to understand the apartment interest level. We decided to use these features as the foundation for our model, and a benchmark for comparison of future models.

Initially, we assumed that apartment interest levels would have some correlation with the location features (latitude/longitude). We had a natural inclination to associate higher price with higher interest, and if that assumption held, the chart would show clusters of high interest in high income neighborhoods, such as the areas near Central Park. We were undeniably wrong. The chart below shows no clear clustering among the high and medium interest neighborhoods and an overall abundance of low interest properties.

Additional derivative features include manager count, building count, word count, word diversity, and description sentiment. The next step in our process is EDA.
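A rough sketch of how some of these derivative features might be computed is shown below. The field names, the sample listings, and the definition of word diversity (distinct words divided by total words) are assumptions for illustration; description sentiment is left out because it would need an external library.

```python
from collections import Counter

# Hypothetical listings; field names are assumptions.
listings = [
    {"manager_id": "m1", "building_id": "b1",
     "description": "Sunny sunny loft near the park"},
    {"manager_id": "m1", "building_id": "b2", "description": ""},
    {"manager_id": "m2", "building_id": "b1",
     "description": "Spacious two bedroom"},
]

# How many listings each manager and each building appears in.
manager_counts = Counter(item["manager_id"] for item in listings)
building_counts = Counter(item["building_id"] for item in listings)

def derive(listing):
    """Build the count-based and text-based features for one listing."""
    words = listing["description"].lower().split()
    return {
        "manager_count": manager_counts[listing["manager_id"]],
        "building_count": building_counts[listing["building_id"]],
        "word_count": len(words),
        # Share of distinct words; 0.0 for an empty description.
        "word_diversity": len(set(words)) / len(words) if words else 0.0,
    }

derived = [derive(item) for item in listings]
```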

EDA (Exploratory Data Analysis)

First, we conducted a brief analysis and visualization of the price, bathroom, bedroom, and word count variables.

This exercise was a great example of how one's biases can influence one's analysis. We also made a point of deciding what the interest level actually represents. Our team agreed that the interest level represents "buying pressure" and is a better proxy for value than price, contrary to what we originally thought.

In general, when someone looks for an apartment, price is one of the most important factors to determine if this rental is a good value. We'll continue by analyzing the prices in different interest levels.

Something unusual is happening to the low interest apartments in the price kernel density chart. This is a result of having a substantial quantity of high priced low interest properties, so we decided to do a logarithmic transformation of the data to normalize the price.
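The effect of the logarithmic transformation can be seen on a handful of made-up prices. In this sketch the price list is hypothetical, and the ratio of the maximum to the median is just one simple way to show how far an outlier sits from the bulk of the data.

```python
import math
import statistics

# Hypothetical monthly rents with one extreme outlier.
prices = [1800, 2400, 2950, 3200, 3600, 4100, 5200, 111111]

# Natural log compresses the long right tail.
log_prices = [math.log(p) for p in prices]

def max_to_median(values):
    """How many times larger the largest value is than the median."""
    return max(values) / statistics.median(values)

raw_ratio = max_to_median(prices)      # outlier is over 30x the median
log_ratio = max_to_median(log_prices)  # under 1.5x on the log scale
```

On the log scale the outlier no longer dominates the axis, which makes the density curves for the three interest levels easier to compare.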

Observing the various interest levels in the above chart, we noticed that the average price of the high interest properties is less than the average price of the medium interest properties. The average price of the low interest properties is the highest amongst the group, but that was due to the low interest high priced outliers.

Next we looked at the bathroom data.

One bathroom is the most common arrangement for rental properties at all interest levels, an expected result for the NYC housing market. However, a few very high priced listings have high bathroom counts, and these outliers are visible in the graph. The medium and high interest properties are very similar, so the bathroom values may be better suited as a binary indicator for low or not low interest properties.

Visualization of bedroom data is to follow.

An overview of the plot indicates that the bedroom count could be a useful indicator to differentiate high interest properties from low and medium interest property listings. The median number of bedrooms was the same for both the high and medium interest levels.

We looked into Manager_ID to determine if it could be a useful indicator to classify various interest levels.

The x axis denotes the frequency count for individual managers. We notice that there is a peak for each interest level, corresponding to a manager who lists more apartments than the others. We also notice that the high interest properties are spread among a rather small number of managers, and one manager in particular has orders of magnitude more listings than their competitors.

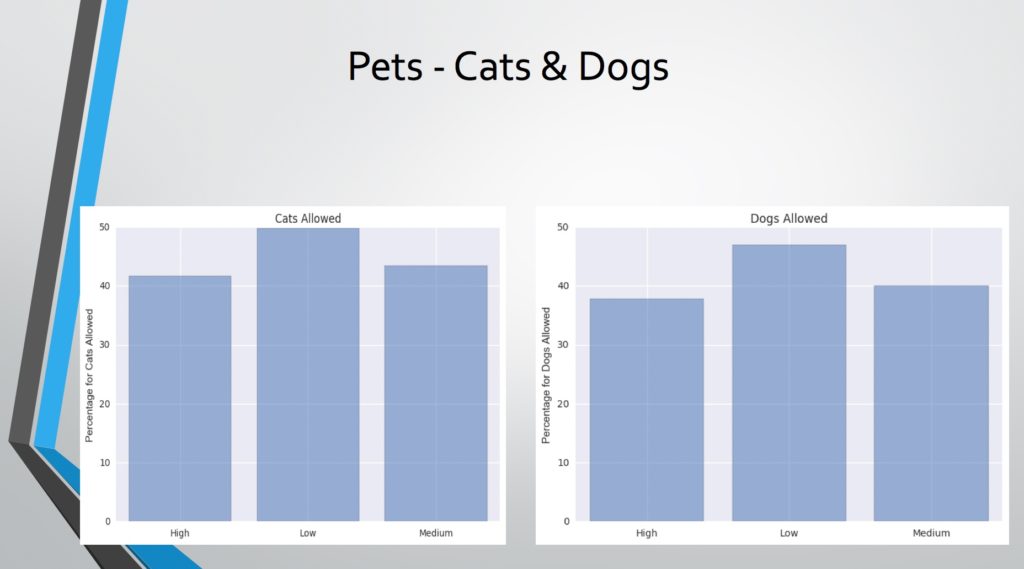

There are 18 user-selectable features (Parking Space, Elevator, Doorman, Cats Allowed, Dogs Allowed, etc.). Those features can be good indicators for classifying interest levels, but their effects are often counter-intuitive, as with cats allowed and dogs allowed.

It is interesting that low interest apartments have a higher tendency for allowing dogs and cats when compared to medium and high interest properties. This is a good indicator to classify the low interest properties, but it may be an artifact of the large cross-section of low interest properties relative to the high and medium interest properties.
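One way to check whether such a pattern is only an artifact of class sizes is to compare the share of listings with a feature within each interest level rather than raw counts. The sketch below does this on a few hypothetical listings; the feature strings and labels are made up for illustration.

```python
from collections import defaultdict

# Hypothetical listings; feature strings and labels are illustrative.
listings = [
    {"features": ["Dogs Allowed", "Cats Allowed", "Elevator"], "interest_level": "low"},
    {"features": ["Dogs Allowed"], "interest_level": "low"},
    {"features": ["Elevator"], "interest_level": "low"},
    {"features": ["Doorman"], "interest_level": "medium"},
    {"features": ["Dogs Allowed", "Doorman"], "interest_level": "medium"},
    {"features": [], "interest_level": "high"},
]

def has_feature(listing, name):
    """Dummy variable: 1 if the listing advertises the feature, else 0."""
    wanted = name.lower()
    return int(any(f.strip().lower() == wanted for f in listing["features"]))

def rate_by_interest(listings, name):
    """Share of listings with the feature, within each interest level."""
    hits, totals = defaultdict(int), defaultdict(int)
    for listing in listings:
        level = listing["interest_level"]
        totals[level] += 1
        hits[level] += has_feature(listing, name)
    return {level: hits[level] / totals[level] for level in totals}

dog_rates = rate_by_interest(listings, "Dogs Allowed")
```

Because each rate is normalised by the size of its own class, a large number of low interest listings does not by itself inflate the low interest rate.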

Next we analyzed the derivative features, starting with description length (word count).

There is a bump at the beginning of the low interest curve, right around x = 0. This corresponds to descriptions of length 0, that is, empty descriptions. Naturally, apartment listings that omit key information such as the description are destined to be categorized as low interest. Buyers are ultimately interested in getting a good value, regardless of price, and the lack of data makes it almost impossible to determine whether the property in question is a good value or not.

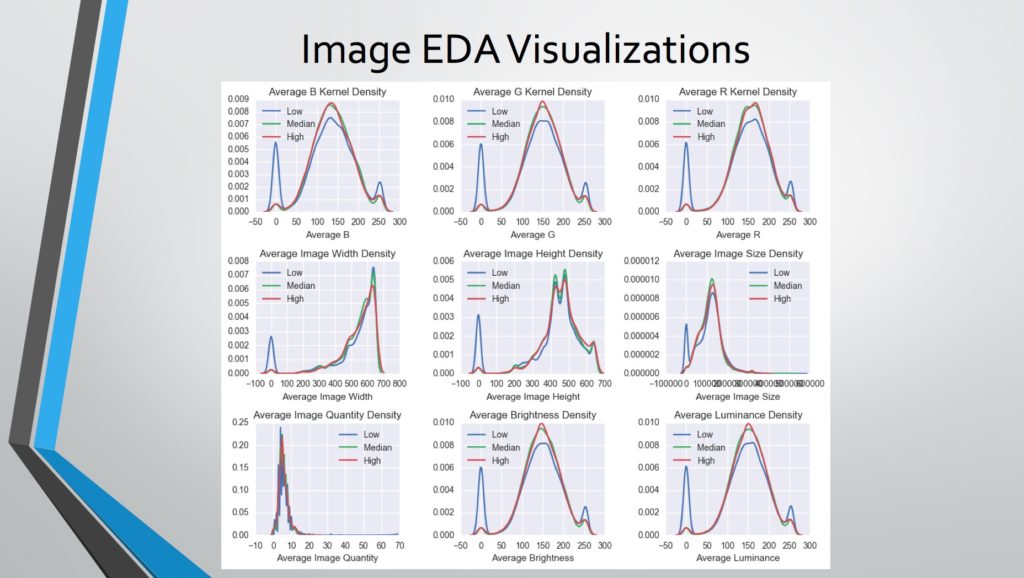

Lastly, we analyzed the image related features.

The chart depicts the derivative features generated from the image data. A common image format is RGB, where R (Red), G (Green), and B (Blue) values range from 0-255, and combine to form the color of each pixel. In this case, we were able to determine the brightness of each image by averaging the R, G, and B values, then we averaged the brightness values for each property listing. Image Width and Height refer to the average pixel count for the images for each property listing. Similar to the description length, a lack of images is a strong indicator of a low interest property.
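The brightness calculation described above can be sketched in a few lines. Here each image is represented as a flat list of (R, G, B) tuples, which is an assumption made to keep the example self-contained; in practice the pixels would come from an image library such as Pillow. Returning `None` for listings without photos is also an illustrative choice.

```python
from statistics import mean

def image_brightness(pixels):
    """Mean of the R, G, B channels over all pixels (0 = dark, 255 = bright)."""
    return mean((r + g + b) / 3 for r, g, b in pixels)

def listing_image_features(images):
    """Aggregate per-image brightness into per-listing features."""
    if not images:
        # No photos: keep the count and leave brightness missing.
        return {"num_images": 0, "avg_brightness": None}
    return {
        "num_images": len(images),
        "avg_brightness": mean(image_brightness(px) for px in images),
    }

# Two tiny hypothetical "images", each a flat list of (R, G, B) tuples.
dark = [(10, 20, 30), (0, 0, 0)]
bright = [(250, 240, 230), (255, 255, 255)]

features = listing_image_features([dark, bright])
empty = listing_image_features([])
```

Keeping `num_images` alongside the brightness lets a model pick up on missing photos, which the analysis above identifies as a strong low interest signal.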

Feature Engineering

We did a trial feature selection by using Price, Bathroom, Bedroom, Latitude, and Longitude to form our benchmark. We also generated two logistic regression models by using the 5 variables and using 3 variables (omitting Latitude and Longitude). Omitting Latitude and Longitude was of little consequence to our predictions.

We used the Extra Trees Classifier (extremely randomized trees), a variant of the random forest classifier, to measure feature importance. Unlike a random forest, at each step the entire sample is used and the decision boundaries are picked at random rather than by choosing the optimal boundary. By averaging the predictions of many such randomized trees, the Extra Trees Classifier improves prediction accuracy and counteracts overfitting.
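As a hedged illustration of this step, the sketch below fits scikit-learn's `ExtraTreesClassifier` on synthetic data and ranks the features by importance. The data, the feature names, and the hyperparameters are assumptions; only the "price" column carries signal here, so it should rank first.

```python
import numpy as np
from sklearn.ensemble import ExtraTreesClassifier

rng = np.random.default_rng(0)
n = 600

# Synthetic stand-in data: only "price" carries signal here.
price = rng.normal(size=n)
noise_a = rng.normal(size=n)
noise_b = rng.normal(size=n)
X = np.column_stack([price, noise_a, noise_b])
names = ["price", "noise_a", "noise_b"]

# Three classes (0, 1, 2) cut from the price column.
y = np.digitize(price, bins=[-0.5, 0.5])

# Illustrative settings, not the team's actual configuration.
model = ExtraTreesClassifier(n_estimators=200, random_state=0)
model.fit(X, y)

# Importances sum to 1; sort from most to least important.
ranked = sorted(zip(names, model.feature_importances_), key=lambda t: -t[1])
```

Plotting `ranked` as a bar chart gives a feature importance plot of the kind discussed in this section.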

Below is our initial feature importance plot:

Price turns out to be the most important feature, followed by average image size (for each listing). Word Count (description length) is the 3rd most important feature as we have seen throughout the EDA.

We then did more research on the image features. RGB values, average image height and width, existence of metadata, and similar features were added to the feature selection.

Here price drops to the second most important feature, and average image size is now top of the chart. Manager Count is the fourth most important feature according to the chart above. The inclusion of the additional image features adjusts the weighting on the feature importance.

Model Structure:

- Model Development

In this Kaggle project, we tried three types of 'high level' models: ensemble (aka stacking) models, voting classifiers, and artificial neural networks - each with separate tuning parameters. Each of these models comes with its own set of pros and cons, and ultimately provides different log-loss results on the test set.

Initially we used the stacking model due to its simplicity. Since we wrote the code to implement the algorithm ourselves and our primary objective was precision and consistency, it wasn't optimized for performance. This model performed rather poorly, even when compared to the benchmark - the top 5 most important factors in a simple logistic regression.

Our experience with the stacking model left us looking for better options, and we tried a voting classifier next. A voting classifier is another way to combine the results of different models. There are both hard and soft voting options. While hard voting follows the majority-wins rule, soft voting is much more flexible for multi-class problems. It assigns a weight to each model and calculates the final result from the base models' predicted probabilities and those weights. Voting classifiers may be one of the fastest ways to combine different models.
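The difference between hard and soft voting can be shown on a single listing. In this minimal sketch the three models' probabilities and the weights are hypothetical values chosen for illustration.

```python
from collections import Counter

CLASSES = ("low", "medium", "high")

# Hypothetical class probabilities from three models for one listing.
model_probs = [
    [0.50, 0.45, 0.05],  # model A leans "low"
    [0.45, 0.50, 0.05],  # model B leans "medium"
    [0.05, 0.90, 0.05],  # model C is confident in "medium"
]
weights = [1.0, 1.0, 2.0]  # illustrative; higher means more trusted

def hard_vote(model_probs):
    """Each model casts one vote for its most likely class."""
    votes = [CLASSES[p.index(max(p))] for p in model_probs]
    return Counter(votes).most_common(1)[0][0]

def soft_vote(model_probs, weights):
    """Weighted average of class probabilities across models."""
    total = sum(weights)
    return [
        sum(w * p[k] for w, p in zip(weights, model_probs)) / total
        for k in range(len(CLASSES))
    ]

hard = hard_vote(model_probs)          # a single label
soft = soft_vote(model_probs, weights) # a probability per class
```

Hard voting returns only a label, while soft voting returns a probability for each class, which is what a log-loss metric needs.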

However, a major downside to the voting classifier, as in our case, is unstable predictions. This means that running a voting classifier model with identical parameters will yield different results each time it is executed.

The last model we used in our project is the artificial neural network - a supervised machine learning algorithm that is growing in popularity in the data science field. This model is well supported by the Python "keras" package. By using this model and selecting the top features, we achieved our best score on the test set, a logloss of 0.585.

Unfortunately, the run time for the neural network is extremely long without the assistance of appropriate GPU hardware. When operating our model, each cross-validation fold with 5 bags needed approximately 7 hours to complete on a 12-core Intel Xeon server. In this project, we set 10 folds, so we were limited in the number of times we were able to run the model within the project timeframe.
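To show what a network of this kind computes, here is a minimal NumPy sketch of a forward pass with two sigmoid hidden layers and a softmax output. The layer sizes (300 and 50) follow the description in the Models list; the input width of 20 features and the random weights are assumptions, and this is not the Keras code the team ran.

```python
import numpy as np

rng = np.random.default_rng(0)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def softmax(z):
    # Subtract the row max for numerical stability.
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

n_features, n_classes = 20, 3  # 20 input features is an assumption
sizes = [n_features, 300, 50, n_classes]

# Random placeholder weights; a trained network would learn these.
weights = [rng.normal(scale=0.1, size=(a, b)) for a, b in zip(sizes, sizes[1:])]
biases = [np.zeros(b) for b in sizes[1:]]

def forward(X):
    """Map a batch of feature rows to class probabilities."""
    h = X
    for W, b in zip(weights[:-1], biases[:-1]):
        h = sigmoid(h @ W + b)                    # hidden layers
    return softmax(h @ weights[-1] + biases[-1])  # output layer

probs = forward(rng.normal(size=(4, n_features)))
```

Each row of `probs` holds three probabilities that sum to 1, one per interest level, which is the form the competition's log-loss metric expects.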

- Models

- Stacking is a useful technique for combining the results of multiple models to generate a better result. The basic process is to use each classifier's output as the next classifier's input. The base models we used are Logistic Regression, K-Nearest Neighbors, Gradient Boosting, Random Forest, and AdaBoost. Their results are then passed to XGBoost as the next step. Here's a brief overview of the stacking model in 5 steps:

- Voting Classifier is a much faster way to combine model results. Using either hard or soft voting, it summarizes the results of the base models. In this project, the base models for the voting classifier are Logistic Regression, Random Forest, Naive Bayes, Decision Tree, Gradient Boost, and AdaBoost. We also found a solution to the unstable results from the voting classifier: a stepwise process that generates an optimized linear combination of the underlying models. We set a baseline model that includes the models mentioned above.

- By using a loop to add each model to our voting classifier, we can compare the results and add new base models to the classifier. After this process, our model has 10 Gradient Boost, 2 AdaBoost, 1 Logistic Regression, 1 Random Forest, 1 Naive Bayes, and 1 Decision Tree model. This model generates much better results than the stacking model.

- Artificial Neural Network is a relatively complex training model which includes different layers, nodes, and activation functions. We use two hidden layers in this project. The first hidden layer includes 300 nodes, and the second layer includes 50 nodes. Both layers use sigmoid activation functions. Regarding the output layer, since we used the Keras package in Python, the softmax function is built-in and usable for multiple mutually exclusive classes.
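The stepwise process described for the voting classifier can be sketched as a greedy selection loop. This is one possible reading rather than the team's actual code: start from a baseline with one copy of each model, then repeatedly add whichever model (repeats allowed) most lowers the validation log loss of the averaged probabilities. The model names, probabilities, and round limit are hypothetical.

```python
import math

def log_loss(y_true, probs):
    """Mean negative log-probability of the true class index."""
    return -sum(math.log(max(p[y], 1e-15)) for y, p in zip(y_true, probs)) / len(y_true)

def average(prob_sets):
    """Element-wise mean of several models' probability tables."""
    n = len(prob_sets)
    return [
        [sum(ps[i][k] for ps in prob_sets) / n for k in range(len(prob_sets[0][i]))]
        for i in range(len(prob_sets[0]))
    ]

def greedy_ensemble(candidates, y_true, baseline, max_rounds=10):
    """Add models (repeats allowed) while validation log loss keeps falling."""
    chosen = list(baseline)
    best = log_loss(y_true, average([candidates[m] for m in chosen]))
    for _ in range(max_rounds):
        trials = {
            name: log_loss(y_true, average([candidates[m] for m in chosen + [name]]))
            for name in candidates
        }
        name = min(trials, key=trials.get)
        if trials[name] >= best:
            break  # no candidate improves the ensemble
        chosen.append(name)
        best = trials[name]
    return chosen, best

# Hypothetical validation-set probabilities (classes: 0=low, 1=medium, 2=high).
y_val = [0, 1, 2, 0]
candidates = {
    "gradient_boost": [[0.7, 0.2, 0.1], [0.2, 0.6, 0.2], [0.1, 0.3, 0.6], [0.6, 0.3, 0.1]],
    "logistic":       [[0.5, 0.3, 0.2], [0.4, 0.4, 0.2], [0.3, 0.3, 0.4], [0.5, 0.3, 0.2]],
    "naive_bayes":    [[0.4, 0.3, 0.3], [0.3, 0.3, 0.4], [0.4, 0.3, 0.3], [0.3, 0.4, 0.3]],
}
baseline = list(candidates)  # start with one copy of each model

start_loss = log_loss(y_val, average([candidates[m] for m in baseline]))
chosen, final_loss = greedy_ensemble(candidates, y_val, baseline)
```

The number of times a model appears in `chosen` acts as its weight in the vote, which is how an ensemble can end up with many copies of one strong model and a single copy of each weaker one.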

Results:

Initially we omitted seemingly useless and highly correlated features such as building ID, manager ID, description, latitude, longitude, and created. Our intention was to decrease dimensionality and prevent overfitting. After selecting and testing various models and parameters, though, we found it better to include those features, however counterintuitive that may seem. Here is a chart of our prediction scores. Over time we managed to improve the score dramatically and ended up in the top 50% of submissions.

Conclusion:

Successful group cohesion through proper use of GitHub, along with a preliminary discussion of individual strengths and weaknesses, was key in facilitating concurrent development throughout the assignment. Concurrent work on feature engineering, model development, EDA, and parameter tuning was necessary in order to complete the project within the deadline.

When working on models that take hours to run, it was very helpful to work in a more traditional development environment (such as Sublime, Emacs, or Text Wrangler) rather than an iPython notebook. This development path allows additional portability and facilitates execution of code on multiple computers or remote servers. We found access to a 12-core Xeon CPU server running Ubuntu Linux extremely useful throughout the development of our model.

The models were developed locally on our laptops, and the code was sent to the remote server through git. Then the code was executed in a terminal 'screen' which allows for the model to continue running even after the remote connection (ssh) to the server terminates.

We can draw many conclusions from this project. It is extremely important to fully understand and define the value that is being modeled, and to keep an objective view of the analysis. It was quite interesting and humbling to find so many seemingly contrarian indicators throughout this analysis - such as the surprisingly unimportant Latitude/Longitude features.

For more details and project code check our project GitHub repository.