Studying Data to Predict Rental Interest

The skills demonstrated in this post can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

RentHop Intro

Kaggle, a data science competition platform recently acquired by Google, hosts many machine learning competitions of varying types and difficulty. One of its more popular contests involves predicting the level of interest (Low, Medium, or High) that a particular rental listing will receive. For this competition, I built an XGBoost-based model that ultimately scored a log loss of .55724, good enough for first place in our cohort. Let's take a look at the process and the mistakes made along the way.
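The scores quoted throughout this post are multiclass log loss values, where lower is better. As a rough illustration of how that metric behaves, here is a minimal sketch in plain Python. The function name, the toy labels, and the constant probabilities are hypothetical, and the sketch assumes each row of probabilities already sums to 1.

```python
import math

def multiclass_log_loss(y_true, y_prob, eps=1e-15):
    """Mean negative log of the probability assigned to each true class."""
    total = 0.0
    for label, probs in zip(y_true, y_prob):
        # Clip so that a confident wrong answer gives a large but finite penalty.
        p = min(max(probs[label], eps), 1 - eps)
        total -= math.log(p)
    return total / len(y_true)

# Hypothetical labels and a constant prediction, for illustration only.
y_true = ["low"] * 7 + ["medium"] * 2 + ["high"]
constant = {"low": 0.7, "medium": 0.2, "high": 0.1}
baseline = multiclass_log_loss(y_true, [constant] * len(y_true))
print(round(baseline, 3))
```

A model that always predicts the same class mix lands at roughly 0.8 on this toy data, so any useful feature has to push the score below that kind of constant baseline.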

The Process

The listings contained the following information: Bathrooms, Bedrooms, Building ID, Date Created, Description (text), Address, Features, Lat/Long, Manager ID, Photos, and Price. Some basic EDA revealed that 70% of the listings received Low interest. As such, I focused on identifying or creating features that would suggest higher interest than the baseline. First, I created some naive features, such as price divided by the number of bedrooms, bathrooms, listed features, and photos. I then ran these features plus the original data set through XGBoost and got a log loss of .73. The chase was on.
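The naive ratio features described above could be sketched roughly as follows. The dictionary keys and helper names are assumptions for illustration, and treating a zero count as 1 (so that a studio with no bedrooms does not divide by zero) is one possible choice, not necessarily what the original model did.

```python
def safe_ratio(numerator, denominator):
    """Divide, falling back to a denominator of 1 when the count is zero."""
    return numerator / (denominator if denominator > 0 else 1)

def add_ratio_features(listing):
    """Return a copy of the listing with price-per-X features added."""
    out = dict(listing)
    price = listing["price"]
    out["price_per_bedroom"] = safe_ratio(price, listing["bedrooms"])
    out["price_per_bathroom"] = safe_ratio(price, listing["bathrooms"])
    # Features and photos are lists, so the ratio uses their lengths.
    out["price_per_feature"] = safe_ratio(price, len(listing["features"]))
    out["price_per_photo"] = safe_ratio(price, len(listing["photos"]))
    return out

# Hypothetical listing, for illustration only.
listing = {
    "price": 3000,
    "bedrooms": 2,
    "bathrooms": 1.0,
    "features": ["Elevator", "Doorman", "Laundry in Building"],
    "photos": [],
}
enriched = add_ratio_features(listing)
print(enriched["price_per_bedroom"], enriched["price_per_photo"])
```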

In order to achieve greater progress, I felt I had to analyze the problem beyond just the given dataset. Most data science problems represent the backend of a common problem from everyday life. Often, a key to creating a working model is to approach the problem from the end user's perspective.

In this case, we are essentially examining which attributes make a home more desirable. The lat/longs of the rental listings revealed them to be New York City apartments, primarily in Manhattan and nearby Brooklyn. In NYC, I suspected that neighborhood, features, price, distance to subway stops, distance to schools, and safety would all be key factors in a listing's interest. In the limited time available, I set out to encode these features.

Dataset

First, I one-hot encoded the top 50 features, performed PCA, and reduced down to the 30 or so features that were statistically significant. I then found the lat/longs for NYC neighborhoods and created a column to display the neighborhood as a feature. I one-hot encoded the addresses to show whether the listing was on an Avenue or a Street. By this time, the model was running at a sub-.60 log loss.
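As a rough sketch of the one-hot step, the snippet below counts feature strings across listings, keeps the most common ones, and turns them into 0/1 columns. The helper names, the lowercase normalization, and the toy data are assumptions. The post used the top 50, while the example uses 2 to stay readable.

```python
from collections import Counter

def top_features(listings, n):
    """Return the n most common feature strings, normalized to lowercase."""
    counts = Counter(
        feat.strip().lower()
        for listing in listings
        for feat in listing["features"]
    )
    return [name for name, _ in counts.most_common(n)]

def one_hot_features(listing, vocabulary):
    """Map each vocabulary entry to 1 if the listing has it, else 0."""
    present = {feat.strip().lower() for feat in listing["features"]}
    return {f"feat_{name}": int(name in present) for name in vocabulary}

# Hypothetical listings, for illustration only.
listings = [
    {"features": ["Elevator", "Doorman"]},
    {"features": ["elevator", "Dishwasher"]},
    {"features": ["Doorman", "Elevator "]},
]
vocabulary = top_features(listings, n=2)
encoded = [one_hot_features(listing, vocabulary) for listing in listings]
print(vocabulary)
print(encoded[1])
```

Normalizing case and whitespace before counting matters here, since free-text feature lists tend to contain the same amenity spelled several ways.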

Finally, I found an open data set provided by the New York government listing the locations of all 1000+ subway stops in NYC. I created a distance matrix to find the nearest stop to each listing, along with the distance to that stop. This improved the model, but not as much as I had hoped. I thought more about it and realized I was missing a key component. Living next to a subway stop is great, but the key is living next to a subway stop served by multiple lines that connect you with the rest of the city.
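One way to compute the nearest stop and its distance is the haversine great-circle formula, sketched below in plain Python. The function names and the two stops with approximate coordinates are illustrative assumptions. The post does not say which distance formula was actually used.

```python
import math

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two lat/long points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def nearest_stop(listing_latlon, stops):
    """Return (stop_name, distance_km) for the stop closest to a listing."""
    lat, lon = listing_latlon
    return min(
        ((name, haversine_km(lat, lon, s_lat, s_lon))
         for name, s_lat, s_lon in stops),
        key=lambda pair: pair[1],
    )

# Hypothetical stops with approximate coordinates, for illustration only.
stops = [
    ("Times Sq-42 St", 40.7553, -73.9870),
    ("14 St-Union Sq", 40.7347, -73.9906),
]
name, dist = nearest_stop((40.7360, -73.9900), stops)
print(name, round(dist, 3))
```

A brute-force scan like this is fine for a thousand or so stops. A spatial index would only be worth the effort on much larger point sets.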

Feature

I decided to create a new feature: distance to subway stop divided by the number of lines at that particular stop (to amplify the "less is more" aspect of the original distance-to-subway feature). The result was a model with a much improved .573 log loss.
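A minimal sketch of that ratio, assuming the distance to the nearest stop and the number of lines at that stop are already known. The function name and sample values are hypothetical, and the guard against a zero line count is an added assumption.

```python
def line_weighted_distance(distance_km, n_lines):
    """Distance to the nearest stop, shrunk by how many lines serve it."""
    # Guard against missing or zero line counts in the source data.
    return distance_km / max(n_lines, 1)

# Two hypothetical listings, both 0.3 km from their nearest stop.
single_line_stop = line_weighted_distance(0.3, n_lines=1)
hub_stop = line_weighted_distance(0.3, n_lines=6)
print(single_line_stop, hub_stop)
```

Both listings are equally close to a stop, but the one near a six-line hub ends up with a much smaller value, which is the "less is more" effect the feature is meant to amplify.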

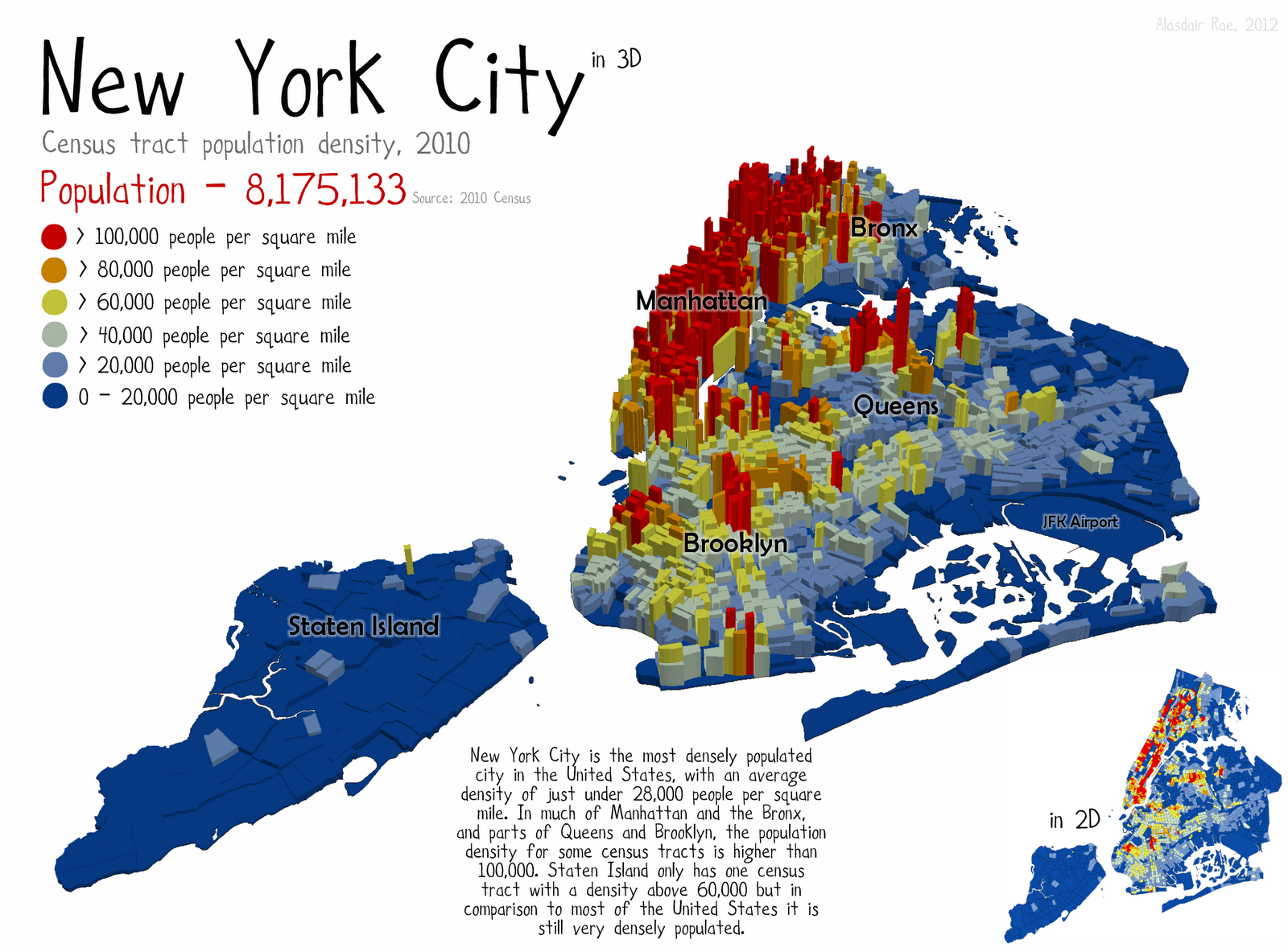

Density Maps

Next, I took a look at density maps of NYC in general. Like most cities, the density in NYC is not uniform. There are pockets of extreme density/demand that may be just a few blocks from a (comparatively) undesirable area.

It would be nearly impossible to encode all of the desirable areas into the model, and not time efficient considering our tight deadline. Instead, I decided to use a proxy. What is located in high-traffic areas? What depends on foot traffic and high density for its business model? While looking at pictures of high-density streets, the answer was obvious. Every fifth person was carrying their signature cup: Starbucks. There are 20,000 Starbucks locations on earth, and almost 500 in the NYC area alone. I created another feature showing the listing's distance to the nearest Starbucks and ran the model. There was improvement, but very little. Again, I had a good idea that needed more thorough follow-through.

Thinking about it conceptually, I imagined a listing on the western or eastern edge of Manhattan. There could be one Starbucks relatively close, but the next nearest Starbucks would probably be twice as far away, and likely in the same direction. In a popular area, however, the second closest Starbucks would theoretically be in a different direction, and maybe just slightly farther away. I decided that the average distance to the nearest four Starbucks would probably be a good proxy for the "centralness" of a listing. Encoding that feature pushed the model all the way to .561. With a little tuning (slower learning rate, more trees), our XGBoost model achieved its top score of .55724.
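The "centralness" proxy can be sketched as the mean distance to the k nearest stores, with k = 4 as in the post. The function names and all coordinates below are hypothetical, and haversine distance is an assumed choice of metric.

```python
import math

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two lat/long points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(a))

def mean_knn_distance(point, locations, k=4):
    """Average distance from a point to its k nearest locations."""
    if not locations:
        raise ValueError("need at least one location")
    lat, lon = point
    distances = sorted(
        haversine_km(lat, lon, s_lat, s_lon) for s_lat, s_lon in locations
    )
    nearest = distances[:k]  # fewer than k locations: average what exists
    return sum(nearest) / len(nearest)

# Hypothetical store coordinates clustered around one central block.
stores = [
    (40.752, -73.990), (40.748, -73.990),
    (40.750, -73.987), (40.750, -73.993),
    (40.760, -73.990),
]
central = mean_knn_distance((40.750, -73.990), stores)
edge = mean_knn_distance((40.750, -74.005), stores)
print(round(central, 3), round(edge, 3))
```

The central listing is surrounded on all sides, so its four nearest stores are all close. For the edge listing every store lies in the same direction, and the average is several times larger.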

The Mistakes

How much time do you have? There were many, MANY misguided features added (and subsequently removed) and attempted model blends that did not improve my score. Here are a few:

- Lasso, Ridge, and Elastic Net Regression - I attempted to use these models because they are strong where XGBoost is weak, possibly making for a good pairing. In this case, that theory was false.

- Sentiment Analysis - Analyzing the description for mood was a simple (and cool) bit of code that ultimately did nothing.

- Street or Avenue - The model improved when this feature was removed. Go figure.

- Image Brightness - I tried to run a basic scan of the images to see how bright they were, as a proxy for whether or not they were taken professionally. The model would have taken 30+ hours to run, crowding out all other analysis time, so I aborted.

- Junk Listings - There were several listings with incorrect or incomplete data. I tried to encode a binary "junk" feature, but it was useless. This was probably because these listings were nearly always Low interest, which was the baseline in any case.

- Schools - I also created a distance matrix from each listing to the nearest of the top 45 elementary, middle, and high schools in the area. As soon as I pulled and edited that data sheet, I should have known it wouldn't be successful. All the best schools were in the worst neighborhoods.

I also attempted to reduce the entire model using PCA, and blend it with linear/generalized linear models. None of these strategies provided any improvement. In the end, this competition reinforced the idea that thinking outside of the box is just as important as pure model engineering. After this project, I am greatly looking forward to business challenges that can be solved by advanced modeling in the future.