CTR Prediction in RTB Display Advertising

The skills the authors demonstrated here can be learned through taking Data Science with Machine Learning bootcamp with NYC Data Science Academy.

Authors: Quentin Picard, Yongguang Qin, Chung Meng Lim, Daniel Park

Introduction

For our Capstone Project at NYC Data Science Academy, we chose to work on a big data supervised learning problem provided by an adtech company that specializes in user behavior and location analysis. We chose to focus on the problem of CTR (Click-Thru Rate) estimation with the hope of improving current company metrics. Our client is an active player in Real Time Bidding (RTB) markets and gave us access to 2 weeks of data from 3 exchanges. The data was split in 3 categories:

- Bid Requests (36 billion rows, ~60 fields): ad space received from the exchange on which the company can bid.

- Impressions (302 million rows, ~30 fields): ads won by our client during the bidding

- Clicks (450k non-duplicate clicks, ~30 fields): impressions that were clicked by a user after display

The following graph[1] shows the overall workflow with some companies as illustration. Additional introductory information about the functioning of RTB markets is available here[2].

Our strategy was to explore which machine learning algorithms were used by the industry, implement them and compare the results. We downloaded the data to Spark in order to take advantage of parallel execution and collaborated using Databricks notebooks.

Modeling

Class imbalance

In our data only about 0.5% of impressions were clicked.This tells us that statistically speaking the clicks (‘1’) are more valuable than the not-clicked ads (‘0’) in order to learn the boundary between the 2 classes. As long as said boundary exist, we should be able to subsample the elements of the majority class with only a small impact on our final results.

The graph below shows that the coefficient variances obtained by fitting a model to the training data tapers off as the class ratio increases.

Diminishing returns in unbalanced binary data[3]

For our modeling, we used the workflow described below. We split into training and test according to the date so that it would correspond to a normal production setting in which the object is to predict the click through rate for the next day or next few days.

All the 1s were kept in the training set. We ended up with about 11 times more 0s than 1s. In the test set we kept the original inbalance to minimize bias in the prediction. We just applied a unique downsampling ratio to the whole dataset.

Subsampling process

Categorical variables

All the features in our dataset were categorical with the exception of age; we also made that categorical through binning, including the extreme version which considers each value as a group. Since most of the features were identifiers with relatively high cardinality (e.g. ad id, campaign id), the traditional treatment of these with one hot encoding impacted performance significantly. This was addressed in the Follow-The-Regularized-Leader model using the “hashing trick” and forcing sparsity through regularization.

Interactions features (conjunctions)

A few papers[4] on CTR prediction report good results with the use interaction features. It makes logical sense that an ad for Gucci would have a higher rate of success (as measured by the probability of a click event) on the Vogue website versus motortrend.com. We didn’t observe improvements justifying the additional performance drain in our dataset. One explanation is that we were dealing exclusively with ads displayed on apps (e.g. ‘Words with Friends’) and not on websites. The algorithm couldn’t find as good a content match between those apps and the creatives (ads).

Models

Predicting CTR in the ad industry has been studied extensively in recent years. Logistic Regression is a common algorithm to handle the volume of data efficiently[5]. We therefore started to implement the standard Logistic Regression available in the PySpark ML library. It gave decent results (see graph below) and performed better than the Decision Tree and Random Forest, which were also available.

In order to improve performance and predictive accuracy, we proceeded to research industry publications for non-standard algorithms. We tried the Follow-The-Regularized-Leader Proximal (FTRL) implementations presented by Google[6]. It uses a single weight layer to allow the training of large amounts of data. Because we implemented the algorithm from scratch, it didn’t take full advantage of parallelism in Spark. Still, we were able to train both the sub-sampled and the full dataset (42 million rows in the training set), even with a single thread. Fitting the 42 million rows took approximately 10 hours.

FTRL attempts to produce both sparsity and high predictive accuracy. Sparsity is measured by the proportion of non-zero factors in the weight matrix (here, a single column). Having lots of zeros enables low memory usage and fast vector operations.

Let’s dive into some of the details of the implementation.

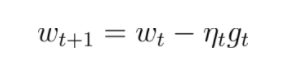

Stochastic Gradient Descent performs the update:

Where t is the round of update (one round for each training observation), ηt is the learning rate in round t and wt is the weight layer in round t.

FTRL instead tries to solve for the weight satisfying :

where:

and using the notation: ![]()

- Brendan McMahan[7] shows that the 2 equations above generate identical coefficients when the λ1 term is removed.

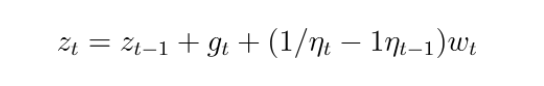

For memory efficiency we store:

and using:

we solve for wt in closed form and get the equations detailed in the algorithm summary below:

Notes:

- Learning rate

‘Per coordinate’ refers to the fact that the algorithm can learn at different speeds for different features. If a feature has never been seen, then the algorithm will have to learn fast to use this new information. Conversely, if a feature has already occured many times, the algorithm does not have to learn anew but just tweak the existing weight. - Feature hash trick

A hashing trick used in the industry forces all features values into a set maximum (e.g. 220 which is about 1 million). This is meant to improve performance but could lead to ‘collisions’ if the number of unique values across all features is above that number. In practice, because the term is good at introducing sparsity, our implementation only created about 50,000 non-zero values on the full dataset.

PySpark FTRL Implementation (model .fit method)

PySpark FTRL Implementation (model .fit method)

Evaluating Model Performance

Our main performance metric to compare algorithms was Area Under the Receiver Operating Characteristic Curve (AUROC). Area Under Precision-Recall and LogLoss are 2 other common metrics but we couldn’t interpret them as easily (note that for LogLoss probability estimates are affected by the downsampling and need to be transformed back).

A summary of the results is presented below:

ROC Curve - Top 5 algorithms

FTRL performed best, which is consistent with industry research, It was also able to squeeze additional information when given the full dataset (AUROC was still in the same range, though, as expected from our subsampling analysis). Simple logistic was the next best performing model.

Other Analysis

We plotted the decrease in performance when predicting CTR on impressions further into the future. Although we only had a total of 22 days of data, we were able to observe a decrease in predictive accuracy. We would expect this trend to be more significant if more days were available, meaning than the model would need to be refreshed at regular intervals in a production environment.

AUROC Decay (FTRL algorithm)

Conclusion

Analysing real world production data was an exciting challenge. The team experienced ‘classic’ big data struggles including inconsistencies/ noise in a massive dataset and cluster reboots during model runs. We also noticed that Spark is a relatively new technology with some outstanding bugs and missing functionality compared to Scikit-Learn. In the future we would love to tweak our algorithm on more data and maybe try to bring an improvement-- even if it is just a small one -- to the FTRL implementation.

References

[1] Weinan Zhang and Jian Xu. Learning, Prediction and Optimisation in RTB Display Advertising

[2] Wang Jun, Zhang, Weinan, Yuan, Shuai. Display Advertising with Real-Time Bidding (RTB) and Behavioural Targeting

[3] Trevor Hastie, Robert Tibshirani Jerome Friedman. Statistical Learning course

[4] Yuchin Juan, Yong Zhuang, Wei-Sheng Chin, Chih-Jen Lin. Field-aware Factorization Machines for CTR Prediction

[5] Olivier Chapelle, Eren Manavoglu, Romer Rosales. Simple and Scalable Response Prediction for Display Advertising

[6] H. Brendan McMahan, Gary Holt, D. Sculley, Michael Young, Dietmar Ebner, Julian Grady, Lan Nie, Todd Phillips, Eugene Davydov, Daniel Golovin, Sharat Chikkerur, Dan Liu, Martin Wattenberg, Arnar Mar Hrafnkelsson, Tom Boulos, Jeremy Kubica. Ad Click Prediction: a View from the Trenches

[7] H. Brendan McMahan. Follow-the-Regularized-Leader and Mirror Descent: Equivalence Theorems and L1 Regularization