Joining Data Without A Key

More detail on this project, including analysis and findings, can be found in my team’s capstone project write-up. This post goes deeper into one specific aspect of that story: the research done to join two tables that had no proper key field between them. Some of the content from that write-up is repeated here in order to tell this story.

Problem Statement

For this project we were presented with two data tables:

- Image Capture data for people who passed in front of a camera

- Beverage Dispense data reflecting volumes of different beverage choices

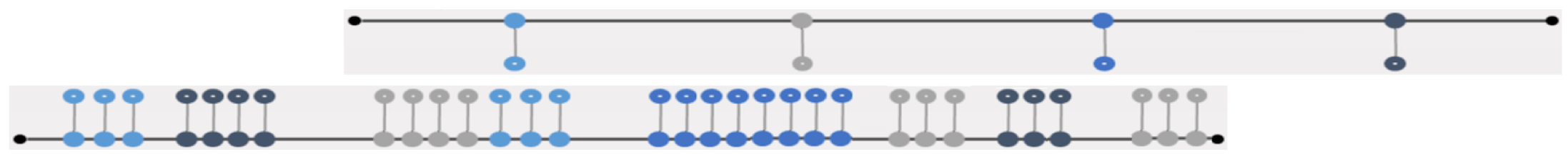

The Image Capture data started and ended about one day earlier than the Beverage Dispense data. Syncing the timestamps meant matching up two completely different timelines, with events occurring on completely different scales. In an ideal world, the before and after of the sync might look like this:

Before:

After:

In reality, the more you match up some of the date/times, the more you throw off others, and the risk of error in what you do match up is high. Further complicating the problem, one or more beverage dispense events might have to be mapped to 5, 10, or even 20 or more image capture frame records in the other table. I believe there was even one case where the number of candidate records was as high as 75.

Solving The Problem

Our team explored many different approaches to both understanding and solving these issues. These included:

- Brute Force: common sense guessing based on a spot check analysis of time fields and attempting to join different combinations of time fields with different time shifts.

- Code that shifted the date/time stamps in a loop, attempting to find the best sync.

- Code that performed the join based on whether a date/time in the beverage table fell within the min/max date/time interval of records in the Image Capture table. This assumed a “best date/time” for the beverage event would be found, so the join could then be performed without needing an exact 1-to-1 date/time match.

- Use of Gradient Descent in tests of both a probability maximization formula and error minimization formula.

- The final approach, described below, which is the one that ultimately got used.
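To make the shift loop and the interval-based join more concrete, here is a minimal sketch in Python. It is not the team's actual code: the record layouts, the sample timestamps, the whole-hour candidate shifts, and the scoring rule (count how many beverage events land inside some image interval) are all assumptions made for illustration.

```python
from datetime import datetime, timedelta

# Hypothetical image data: (person_id, first_seen, last_seen) per camera visitor.
image_intervals = [
    ("p1", datetime(2017, 1, 2, 9, 0, 0), datetime(2017, 1, 2, 9, 0, 40)),
    ("p2", datetime(2017, 1, 2, 9, 5, 0), datetime(2017, 1, 2, 9, 5, 30)),
]

# Hypothetical dispense events: (event_id, timestamp) on the beverage clock.
beverage_events = [
    ("b1", datetime(2017, 1, 3, 9, 0, 20)),
    ("b2", datetime(2017, 1, 3, 9, 5, 10)),
    ("b3", datetime(2017, 1, 3, 9, 30, 0)),
]


def interval_join(events, intervals, shift):
    """Pair each event with every interval that contains its shifted time."""
    pairs = []
    for event_id, ts in events:
        shifted = ts + shift
        for person_id, start, end in intervals:
            if start <= shifted <= end:
                pairs.append((event_id, person_id))
    return pairs


def best_shift(events, intervals, candidate_shifts):
    """Return the candidate shift that produces the most matched pairs.

    Scoring by match count is an assumption; ties go to the first candidate.
    """
    return max(
        candidate_shifts,
        key=lambda s: len(interval_join(events, intervals, s)),
    )


# Try moving the beverage clock back by 0 to 48 whole hours.
candidates = [timedelta(hours=-h) for h in range(0, 49)]
shift = best_shift(beverage_events, image_intervals, candidates)
matches = interval_join(beverage_events, image_intervals, shift)
```

On this toy data the loop settles on a one-day shift and joins `b1` and `b2`, while `b3` stays unmatched. Real data would need finer shift steps than whole hours, and one event can legitimately match several intervals.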

The Gradient Descent approach seemed the most promising initially, but analysis of the results showed that some records in the joined result set did not make sense. The time it takes to dispense a drink of a given volume did not fit the timings we saw after joining the Image Capture data to the Beverage Dispense data.

This led to the team brainstorming together, and a final approach emerged:

- The error for a potential matchup was defined as the sum, over all beverage events, of the seconds between each event and its nearest image event. When comparing potential matchups, the code sought the one with the lowest error.

- To link the data, we needed a time span within which the people in the image captures could reasonably be responsible for the dispense events. The data collection and sampling process described in the “stake out” section of our team blog post was invaluable to this process.

- We kept only the Image Capture frames that fell within two sigma before and two sigma after the mean (a 4 sigma window) of the distribution of candidate frames near the beverage event records. This resulted in only 59% of the frame capture records being targeted for inclusion in the join.

- We then defined a formula for “best guess” ranking to find the top 5 records to include in the join. The formula included these factors in its ranking:

  - Time distance from the beverage observation to the first Image Capture observation

  - User duration in front of the camera (how long were they there?)

  - Number of user frames in the time window (how often were they there?)

  - Face size bonus: a number recording closeness to the camera at the time of observation. Larger was assumed to be better for data capture in this context.
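The steps of the final approach can be sketched as follows. This is an illustration under stated assumptions, not our production code: times are plain seconds, the two-sigma filter is applied to frame-to-event offsets, and the field names and `WEIGHTS` values in the ranking are hypothetical, since the actual formula is not reproduced in this post.

```python
from bisect import bisect_left
from statistics import mean, pstdev


def nearest_gap(t, sorted_times):
    """Seconds from t to the closest entry in a non-empty sorted list."""
    i = bisect_left(sorted_times, t)
    neighbours = sorted_times[max(i - 1, 0):i + 1]
    return min(abs(t - n) for n in neighbours)


def total_error(beverage_times, image_times):
    """Sum, over all beverage events, of the gap to the nearest image event."""
    ordered = sorted(image_times)
    return sum(nearest_gap(t, ordered) for t in beverage_times)


def within_two_sigma(offsets):
    """Flag offsets lying within two standard deviations of their mean."""
    mu, sigma = mean(offsets), pstdev(offsets)
    return [abs(o - mu) <= 2 * sigma for o in offsets]


# Hypothetical weights: a long gap counts against a candidate, while
# duration, frame count, and face size count in its favour.
WEIGHTS = {"gap_s": -1.0, "duration_s": 0.5, "frames": 0.2, "face_size": 0.1}


def score(candidate):
    """Weighted sum of the four ranking factors."""
    return sum(w * candidate[name] for name, w in WEIGHTS.items())


def top_candidates(candidates, k=5):
    """Return the k highest-scoring candidates, best first."""
    return sorted(candidates, key=score, reverse=True)[:k]


# Made-up sample data, in seconds.
error = total_error([100, 205, 330], [90, 200, 210, 400])
keep = within_two_sigma([-4, -2, 0, 1, 2, 3, 60])
people = [
    {"person": "a", "gap_s": 3, "duration_s": 20, "frames": 15, "face_size": 80},
    {"person": "b", "gap_s": 40, "duration_s": 5, "frames": 2, "face_size": 30},
    {"person": "c", "gap_s": 10, "duration_s": 12, "frames": 8, "face_size": 60},
    {"person": "d", "gap_s": 1, "duration_s": 4, "frames": 3, "face_size": 40},
    {"person": "e", "gap_s": 25, "duration_s": 30, "frames": 20, "face_size": 90},
    {"person": "f", "gap_s": 15, "duration_s": 8, "frames": 5, "face_size": 50},
]
best = top_candidates(people)
```

In this sample the 60-second offset is the only one rejected by the two-sigma filter, and candidate `b` (large gap, short visit, few frames) is the one dropped from the top 5. The weights would need tuning against real observations, such as those gathered during the stake out.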

Conclusion

This approach produced the most useful results for this specific data set. The earlier approaches, though not used here, could still apply to other problems in other data sets. Ultimately, analysis of the data should always drive decisions of this nature.