Machine Learning on Bank Marketing Data

Introduction

Retail banking is the provision of services by banks to individual consumers. These services include savings and transaction accounts, mortgages, personal loans, and credit and debit cards. It is a highly competitive business.

One of the biggest challenges the business faces is identifying the consumers who are most likely to do business with it and targeting those whose needs suit its services. For this reason, marketing plays a vital role in winning customers over.

In this study, we have implemented multiple machine learning algorithms on a marketing data set from a European retail bank. The goal is to understand the factors that drive short-term deposit account sign-ups and to develop a strategy that helps banks focus on the most promising leads in order to win them over.

The Data

The data set used here is from the UCI Machine Learning Repository. It is derived from the direct marketing campaigns of a Portuguese banking institution, which were based on phone calls. Often, more than one contact with the same client was required to assess whether the product (a bank term deposit) would be subscribed ('yes') or not ('no').

The following categories of information are included in the data set:

Consumer data: Age, sex, job and marital status, education, loan status, and so on.

Campaign activities: how and when the client was contacted, the number of contacts, and the outcome of previous campaigns.

Social and economic environment data: Euribor 3-month rate, employment variation rate, consumer price index, and consumer confidence index.

Outcome: 'yes' if the client subscribed to a term deposit, 'no' otherwise.

Data Visualization and Pre-processing

The data set contains 12 categorical and 7 numerical features and a binary response variable. The following plots show the relationships among the economic numerical variables and the distribution of the response variable.

The plot of the response variable shows a highly unbalanced distribution between its two classes. The relationships among the economic variables are non-linear, and these variables are not normally distributed. To fit the machine learning algorithms, all categorical variables and the response variable are encoded as numerical levels.
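A minimal sketch of this encoding step, assuming pandas (the study does not state its tooling). The column names mirror those in the UCI file, but the rows are invented for illustration, and `encode_as_levels` is a hypothetical helper.

```python
import pandas as pd


def encode_as_levels(df: pd.DataFrame, columns: list[str]) -> pd.DataFrame:
    """Return a copy of df with each listed column replaced by integer level codes.

    pandas sorts the distinct values when building the categories, so the
    mapping is deterministic (e.g. 'no' -> 0, 'yes' -> 1).
    """
    encoded = df.copy()
    for col in columns:
        # astype("category") collects the sorted distinct values; .cat.codes maps them to 0..k-1
        encoded[col] = encoded[col].astype("category").cat.codes
    return encoded


# Hypothetical toy rows; numeric columns such as age are left untouched
toy = pd.DataFrame({
    "job": ["admin.", "technician", "admin.", "services"],
    "marital": ["married", "single", "married", "divorced"],
    "age": [41, 29, 35, 52],
    "y": ["no", "yes", "no", "no"],
})
encoded_toy = encode_as_levels(toy, ["job", "marital", "y"])
print(encoded_toy)
```

Plain integer levels impose an arbitrary order on nominal categories, which tree ensembles tolerate better than linear models; one-hot encoding is the usual alternative for the latter.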

This data set also contains missing data in one numerical feature, pdays, which records the number of days since the client was last contacted in a previous campaign; it is coded as '999' if the client was never contacted. Over 90% of the records have pdays coded as 999. To apply the machine learning algorithms, we need to handle these missing values in a way that maximizes prediction accuracy.

In this study, we tested the following approaches with the Logistic Regression algorithm:

- Leave it as it is (999)

- Impute the column mean

- Impute zero

- Remove the column from data set

Since the first approach gave the highest prediction accuracy, we keep the data as is for the rest of the study.
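The comparison of these four treatments can be sketched as follows, assuming scikit-learn and pandas. The helpers `pdays_variants` and `compare_pdays_strategies` are hypothetical, the data are synthetic, and two details are assumptions not stated in the study: the "column mean" is computed over genuine (non-999) values only, and features are standardized before the logistic regression.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def pdays_variants(X: pd.DataFrame) -> dict:
    """Build the four candidate treatments of the 999 'never contacted' code in pdays."""
    contacted = X["pdays"] != 999
    # Assumption: the "column mean" is taken over genuine (non-999) values only
    real_mean = X.loc[contacted, "pdays"].mean()
    return {
        "keep_999": X,
        "column_mean": X.assign(pdays=X["pdays"].where(contacted, real_mean)),
        "zero": X.assign(pdays=X["pdays"].where(contacted, 0)),
        "drop_column": X.drop(columns="pdays"),
    }


def compare_pdays_strategies(X: pd.DataFrame, y, cv: int = 5) -> dict:
    """Mean cross-validated accuracy of logistic regression for each pdays variant."""
    # Assumption: scaling is added so the 999 code does not hamper solver convergence
    model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    return {
        name: cross_val_score(model, X_variant, y, cv=cv, scoring="accuracy").mean()
        for name, X_variant in pdays_variants(X).items()
    }


# Synthetic stand-in data: about 90% of rows carry the 999 code, as in the bank data
rng = np.random.default_rng(0)
n = 600
never_contacted = rng.random(n) < 0.9
X_demo = pd.DataFrame({
    "pdays": np.where(never_contacted, 999, rng.integers(0, 27, n)),
    "age": rng.integers(18, 80, n),
})
# In this toy data, previously contacted clients are more likely to sign up
y_demo = (rng.random(n) < np.where(never_contacted, 0.08, 0.6)).astype(int)

scores = compare_pdays_strategies(X_demo, y_demo)
for name, score in scores.items():
    print(f"{name:12s} {score:.3f}")
```

The printed scores only demonstrate the procedure; they say nothing about which treatment wins on the bank data.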

The Models and Model Optimizations

To meet the goal set out above, we need a classification model that correctly predicts both customer sign-ups and non-sign-ups in a balanced way. Such a model is suitable because it helps marketing staff focus on promising leads and reduce or eliminate effort on clients who are unlikely to do business with the bank. To develop such a model, we took the following steps:

- Rebalance the training data: Oversample the minority class to overcome the unbalanced response variable

- Implement multiple classification algorithms: Explore different classification algorithms to seek the best fit

- Redefine model evaluation criteria: Focus on model sensitivity rather than overall accuracy

- Implement grid search cross-validation: Find optimal model parameters

- Develop validation curves: Keep overfitting in check

- Evaluate sensitivity: Compare sensitivity through Classification Reports
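The rebalancing, recall-focused grid search, validation curve, and classification report steps can be sketched with scikit-learn as below. This is an illustration on synthetic data, not the study's code: `oversample_minority` is a hypothetical helper based on random duplication with `sklearn.utils.resample`, and the Gradient Boosting parameter grid is an arbitrary choice.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import classification_report
from sklearn.model_selection import GridSearchCV, train_test_split, validation_curve
from sklearn.utils import resample


def oversample_minority(X, y, minority_label=1, random_state=0):
    """Keep all rows and add random duplicates of the minority class until both classes match."""
    is_minority = y == minority_label
    n_extra = int((~is_minority).sum() - is_minority.sum())
    X_extra, y_extra = resample(
        X[is_minority], y[is_minority],
        replace=True,          # sample with replacement, i.e. duplicate existing rows
        n_samples=n_extra,     # just enough extra rows to equal the majority count
        random_state=random_state,
    )
    return np.vstack([X, X_extra]), np.concatenate([y, y_extra])


# Synthetic stand-in for the bank data: about 11% positives, close to the test-set sign-up rate
X, y = make_classification(n_samples=2000, n_features=8, weights=[0.89], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Rebalance the training split only; the test split keeps its natural class ratio
X_bal, y_bal = oversample_minority(X_train, y_train)

# Grid search scored on class 1 recall (sensitivity to sign-ups) instead of accuracy
search = GridSearchCV(
    GradientBoostingClassifier(random_state=0),
    param_grid={"n_estimators": [50, 100], "max_depth": [2, 3]},
    scoring="recall",
    cv=3,
)
search.fit(X_bal, y_bal)
print("best parameters:", search.best_params_)

# Validation curve: a widening gap between training and validation recall signals overfitting
depths = [1, 2, 3, 4]
train_scores, valid_scores = validation_curve(
    GradientBoostingClassifier(n_estimators=50, random_state=0),
    X_bal, y_bal, param_name="max_depth", param_range=depths, scoring="recall", cv=3,
)
for depth, tr, va in zip(depths, train_scores.mean(axis=1), valid_scores.mean(axis=1)):
    print(f"max_depth={depth}: train recall {tr:.2f}, validation recall {va:.2f}")

# Per-class precision, recall and F1 on the untouched test split
print(classification_report(y_test, search.predict(X_test), digits=2))
```

One design caveat: because the training data are oversampled before `GridSearchCV`, duplicated minority rows can land in both the fitting and validation folds, which tends to inflate cross-validated recall. Resampling inside each fold, for example with imbalanced-learn's `RandomOverSampler` in an `imblearn.pipeline.Pipeline`, avoids this.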

The Results

The algorithms used in the study are Logistic Regression, Random Forest, Gradient Boosting, Support Vector Classifier, and Neural Network. Without oversampling and parameter optimization, all algorithms reach around 90% overall accuracy but only about 20% sensitivity on customer sign-ups, in both fitting and prediction. After rebalancing the training data and running grid search cross-validation, all algorithms except the Support Vector Classifier showed better-balanced sensitivities, with the two largest improvements coming from Gradient Boosting and Neural Network. The classification reports below come from Logistic Regression, Gradient Boosting, and Neural Network; Logistic Regression serves as the baseline against which the other algorithms are compared.

Logistic Regression (baseline):

| Set | Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|---|
| Train | class 0 | 0.91 | 0.99 | 0.95 | 29229 |
| Train | class 1 | 0.67 | 0.24 | 0.35 | 3721 |
| Train | avg/total | 0.88 | 0.90 | 0.88 | 32950 |
| Test | class 0 | 0.91 | 0.99 | 0.95 | 7319 |
| Test | class 1 | 0.66 | 0.23 | 0.35 | 919 |
| Test | avg/total | 0.88 | 0.90 | 0.88 | 8238 |

Gradient Boosting:

| Set | Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|---|
| Train | class 0 | 0.95 | 0.83 | 0.89 | 29229 |
| Train | class 1 | 0.34 | 0.67 | 0.45 | 3721 |
| Train | avg/total | 0.88 | 0.82 | 0.84 | 32950 |
| Test | class 0 | 0.95 | 0.84 | 0.89 | 7319 |
| Test | class 1 | 0.34 | 0.66 | 0.45 | 919 |
| Test | avg/total | 0.88 | 0.82 | 0.84 | 8238 |

Neural Network:

| Set | Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|---|
| Train | class 0 | 0.96 | 0.44 | 0.60 | 29229 |
| Train | class 1 | 0.16 | 0.84 | 0.27 | 3721 |
| Train | avg/total | 0.87 | 0.49 | 0.57 | 32950 |
| Test | class 0 | 0.96 | 0.43 | 0.60 | 7319 |
| Test | class 1 | 0.16 | 0.84 | 0.26 | 919 |
| Test | avg/total | 0.87 | 0.48 | 0.56 | 8238 |

The class 1 recall figures show that sensitivity to sign-ups on the training data rises from 24% for the baseline to 67% for Gradient Boosting and 84% for the Neural Network; on the test set, the corresponding figures rise from 23% to 66% and then to 84%. Raising the sensitivity on class 1 improves the models' ability to predict customer sign-ups. However, the sensitivity on class 0 falls from 99% for the baseline to 83% for Gradient Boosting and 44% for the Neural Network on the training data, and from 99% to 84% and then 43% on the test set. This reduction weakens the models' ability to correctly predict clients who will not sign up.
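To see why the study judges models by per-class recall rather than overall accuracy, consider a trivial model that always predicts "no". The plain-Python sketch below uses only the test-set class sizes from the reports above; `class_recalls` is a hypothetical helper. In this binary setting, class 1 recall is the sensitivity and class 0 recall is the specificity.

```python
def class_recalls(y_true, y_pred):
    """Per-class recall for binary labels: class 1 recall is sensitivity, class 0 recall is specificity."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    return {"sensitivity": tp / (tp + fn), "specificity": tn / (tn + fp)}


# Test-set class sizes from the classification reports: 7319 "no", 919 "yes"
y_true = [0] * 7319 + [1] * 919
always_no = [0] * len(y_true)  # a trivial model that never predicts a sign-up

accuracy = sum(t == p for t, p in zip(y_true, always_no)) / len(y_true)
print(f"accuracy of always predicting 'no': {accuracy:.3f}")
print(class_recalls(y_true, always_no))
```

The always-"no" model reaches about 88.8% accuracy (7319 / 8238) while finding no sign-ups at all, so accuracy near 90% says little about a model's value for this goal.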

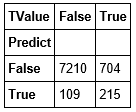

Next, consider the prediction distributions on the test set:

Figure: Test-set prediction distributions (panels: Baseline, Gradient Boosting, Neural Network).

Compared with the true values, the test-set predictions show:

1. The baseline model is accurate in general: it predicts correctly about 90% of the time. However, it predicts customer sign-ups correctly only 23% of the time, so it is not informative enough for our project goal.

2. Gradient Boosting improves the correct prediction of customer sign-ups to 66% of the time, and it correctly filters out those who will not sign up 84% of the time.

3. Neural Network improves the correct prediction of sign-ups further, to 84% of the time; however, its ability to filter out those who will not sign up drops to 43% of the time.

4. Compared with Gradient Boosting, the Neural Network's predictions are more expensive to act on: because it fails to identify most of the clients who will not sign up, marketing effort would be spent on many clients who do not convert.
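The cost argument can be made concrete with a back-of-the-envelope estimate from the test-set class 1 recall and precision reported above. This is a rough sketch: the reported figures are rounded, it assumes for simplicity one contact per client flagged as a likely sign-up, and `contact_effort` is a hypothetical helper.

```python
# Class 1 recall and precision on the test set, read from the classification reports
TEST_SIGNUPS = 919
reported = {
    "Gradient Boosting": {"recall": 0.66, "precision": 0.34},
    "Neural Network": {"recall": 0.84, "precision": 0.16},
}


def contact_effort(recall: float, precision: float, n_positive: int):
    """Estimate sign-ups found, clients flagged, and contacts per sign-up for one model.

    found   = recall * n_positive   (true positives)
    flagged = found / precision     (all clients predicted to sign up)
    Assumption: each flagged client is contacted exactly once.
    """
    found = recall * n_positive
    flagged = found / precision
    return found, flagged, flagged / found


for name, m in reported.items():
    found, flagged, per_signup = contact_effort(m["recall"], m["precision"], TEST_SIGNUPS)
    print(f"{name}: ~{found:.0f} sign-ups from ~{flagged:.0f} contacts "
          f"(~{per_signup:.1f} contacts per sign-up)")
```

With these rounded figures, Gradient Boosting finds about 600 sign-ups from roughly 1,800 contacts (about 3 per sign-up), while the Neural Network finds about 770 from roughly 4,800 contacts (about 6 per sign-up).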

Finally, as shown below, both Random Forest and Gradient Boosting are able to identify the features with the greatest impact on the response variable. These outputs provide valuable insight to guide future marketing campaigns.

Figure: Feature importances (panels: From Random Forest, From Gradient Boosting).

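The differences in feature importances reflect the different mechanisms of these models: Random Forest averages many independently grown trees, while Gradient Boosting builds trees sequentially, each one correcting the errors of the ensemble so far.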
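Both ensembles expose impurity-based importances through scikit-learn's `feature_importances_` attribute, assuming that library was used. The sketch below fits both models to synthetic data with placeholder feature names, so its rankings carry no information about the bank data; `ranked_importances` is a hypothetical helper.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier


def ranked_importances(model, feature_names) -> pd.Series:
    """Impurity-based importances of a fitted tree ensemble, largest first."""
    return pd.Series(model.feature_importances_, index=feature_names).sort_values(ascending=False)


# Synthetic stand-in data; in the study these would be the encoded bank features
X, y = make_classification(n_samples=1000, n_features=6, n_informative=3,
                           weights=[0.89], random_state=0)
feature_names = [f"feature_{i}" for i in range(X.shape[1])]

models = {
    "Random Forest": RandomForestClassifier(n_estimators=200, random_state=0),
    "Gradient Boosting": GradientBoostingClassifier(random_state=0),
}
# fit() returns the fitted estimator, so each model can be fitted and ranked in one step;
# the DataFrame aligns both Series on feature name
importances = pd.DataFrame(
    {name: ranked_importances(model.fit(X, y), feature_names) for name, model in models.items()}
)
print(importances.sort_values("Gradient Boosting", ascending=False).round(3))
```

Impurity-based importances can favor features with many distinct values, so `sklearn.inspection.permutation_importance` on held-out data is a common cross-check before acting on a ranking.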

Conclusion

In this study, we have applied machine learning techniques to retail bank marketing data and explored how these techniques can help the bank conduct its marketing campaigns:

- Multiple classification algorithms were evaluated.

- Oversampling/undersampling was applied to handle the unbalanced data.

- Cross-validation was used for parameter selection.

- Gradient Boosting is the best model for balanced sensitivities and provides interpretable feature importances.

- Neural Network has the best ability to predict sign-ups but gives no insight into feature influence.

- Both Gradient Boosting and Neural Network can add value to future marketing campaigns.