Predicting Success on Stack Overflow

Can machine learning models outcompete humans in outcomes prediction on the popular tech Q&A site?

Introduction

As the only database administrator for an organization with 1,500 employees, I regularly need to integrate data with a growing array of systems and technologies. Like millions of software engineers and IT professionals around the world, I find that my search for answers to technical questions often leads to Stack Overflow. Voting and other forms of user participation on Stack Overflow serve to identify useful questions and answers. Helpful users have contributed to the site’s becoming the leading web community for getting answers to computer programming and other technology questions.

While working with databases, ETL, and reporting by day and doing assignments for the NYC Data Science Academy’s online bootcamp at night and on weekends, I came across a series of blog posts by leading database expert and author Brent Ozar about the Stack Overflow dataset. Brent discussed the public availability of the sizable database that stores the multitude of questions, users, votes, badges, and comments over the site’s 9 years of operation, and why he finds it useful for teaching performance testing. For me, the fact that Stack Overflow publishes its data presented a different opportunity: Could I build predictive models that could compete with people in ascertaining outcomes on Stack Overflow, such as whether any user would mark a given question as a favorite?

As I set out to test this proposition, I determined that my response would take two major parts. First, I would need to build a pipeline that would acquire and clean Stack Overflow data, build and apply predictive models, and export selected data and associated predictions. Second, I would build a web application that would challenge users to a simple game that I dubbed Stack Overlord, which tests their ability to defeat machine learning models (“the machine”) in predicting how selected questions and users fared on the site. In the process, I made use of a range of technologies, from Google BigQuery, Apache Spark, and H2O in a pipeline running on Amazon Web Services (AWS) EC2 to a Flask web application using the AWS Lambda serverless computing platform.

Stack Overflow

Stack Overflow came to surpass existing programming question and answer sites after its launch in 2008 because of key decisions by its founders, Joel Spolsky and Jeff Atwood. Making the site open and free to use erased the barriers that had limited the efficacy of several established sites that served as walled gardens where answers were only available to paying members. In founding Stack Overflow, Spolsky and Atwood reasoned that the broadest possible participation would lead to the best answers and the fastest responses. This may have been possible because they initially did not regard Stack Overflow primarily as a revenue-generating business, but rather as a community and extension of their popular blogs. (It now generates income largely through paid job listings.)

A second key distinction that helped Stack Overflow to eclipse a series of more established Q&A sites can be found in its savvy use of gamification principles. As users submit questions, answers, comments, and votes, they are also participating in a game that rewards them for their contributions to the community. When others vote that a user’s answer is useful, that user gains in reputation. The metrics that the site uses are visible and stored in the site’s database.

As users seek to build their reputation on the site, they receive incentives to participate in ways that others find helpful. Gaining in reputation can also afford users more permissions on the site; for example, new users cannot make comments until they have acquired at least 50 points of reputation. Users can also spend some of their reputation points on bounties, which allow other users to gain extra points by answering a question of particular interest or importance to the person offering the bounty. These mechanisms encourage users to offer value in their answers and actions, especially to the extent that they are motivated to keep “playing” the site like a game. They also discourage antisocial behavior such as spamming.

User reputation is therefore a key element of Stack Overflow and forms a major focus of the machine learning models and web application I would develop. Users can build their reputation points through submitting questions and answers that other users vote up and by submitting an answer that the user who submitted a question accepts as the best answer. There are also several other means to gain reputation, such as suggesting edits that are accepted, submitting documentation that is accepted or voted useful, and accepting another user’s answer to one’s own question. Users lose reputation points when others vote that their questions are not useful, if others withdraw up votes on their questions or answers, or if they repeatedly spam the site or engage in other conduct that harms the community.

The site provides a variety of means by which users classify and evaluate content, which serves to organize the massive amount of content on the site and make it easier for users to find what is most relevant and useful. These include tags that identify the programming language, operating system, framework, library, and other technologies that a question addresses. The site also keeps track of and reports on the number of up and down votes and the number of views received, which indicate how valuable and visible the content was to the community. Many of the metrics that the site tracks promote community moderation through gamification.

Users may also mark a question as a favorite by clicking on a star, which suggests that they find it useful enough that they want to bookmark it and return to it in the future, perhaps to check for new answers. This process of favoriting a question is distinct from voting a question up or down, although users whose questions are favorited sufficiently may receive badges that Stack Overflow awards for a variety of achievements. Predicting whether a question would be marked as a favorite at all appeared to be an intriguing challenge, so I chose it as another focus of the models and game that I would build.

Stack Overflow has been releasing public versions of its database for several years, making them available with a Creative Commons ShareAlike 3.0 (CC BY-SA 3.0) license. This licensing allows others to make use of, transform, and share the content, even for commercial purposes, with attribution. While this project does not have a commercial objective, the flexible licensing of the dataset makes clear that my project to predict outcomes of data from the Stack Overflow database and present data and predictions in the form of a game is permitted.

As Brent Ozar points out, the Stack Overflow database consists of a small number of large tables (some as large as 90GB) with a variety of data types and real-world data. As such, some records contain unexpected values or formats, and require filtering or other forms of cleaning.

To Ozar, these factors make it superior to the small, clean databases that software vendors such as Microsoft typically provide for demonstrations, training, and testing on their server products. For me, the Stack Overflow dataset offered a better alternative to some of the well-worn datasets used in statistics and data science courses. The fact that its content comes from a site I use regularly was simply a bonus.

The Pipeline

A number of key decisions went into the development of the pipeline that extracts data, cleans it, builds machine learning models and makes predictions, and exports results in a format that can be used by a separate web application. Linux on Amazon Web Services (AWS) Elastic Compute Cloud (EC2) was selected as the platform on which the pipeline would run partly because of the likelihood that the volume of the data would exceed what my laptop, well-equipped though it is, could comfortably process.

Although a Virtual Private Server (VPS) running Linux could also provide the additional computing power that I was likely to need, AWS provides the advantage of great flexibility. It allowed me, for instance, to work with a virtual machine of a given computing power, then stop the machine, resize it to an instance with much more RAM and processing power at its disposal, and start it again to take on a larger task. Amazon’s Free Tier also enabled me to do some work at no cost, while I could readily switch to a machine with 8 virtual CPUs and 32GB of memory for just $0.376 per hour at any time, with the knowledge that even larger instances were always available if needed. Other services such as Microsoft Azure offer comparable capabilities, but one purpose of this project was to gain experience with tools that are widely used in industry, that interest me, and that are new to me. AWS dominates the public cloud computing market, with 4.7 times the market share of Azure as of the first quarter of 2017, according to this report, though Azure usage is growing substantially.

In acquiring data from the Stack Overflow public dataset, I chose to take advantage of the fact that it is hosted as a public dataset on Google BigQuery, part of the Google Cloud Platform. This was an alternative to using BitTorrent to download the entire dataset and then installing SQL Server in the cloud or locally so that I could extract the specific data I needed. I have been working with SQL Server for many years and use it every day, so I welcomed the chance to try out Google BigQuery. Using BigQuery to extract data will be an easy adjustment for anyone with good SQL skills. Google explains that it offers standard SQL, which follows the SQL 2011 standard, but offers some additional extensions, and also enables querying through BigQuery legacy SQL, which has some differences from the standard. Google recommends that users employ the standard SQL, rather than using legacy SQL, which differs in some of its syntax, functions, and handling of data types. I followed that recommendation and found few difficulties in writing queries to extract the data I needed.

The Google BigQuery pricing scheme was new to me. It depends in part on the amount of data that queries process. Google uses a columnar data store and charges based on the amount of data in the columns included in a query. As a result, it is possible to run a query that returns a small number of rows and find that Google states that it processed 36GB, for example, in running the query. Flat rate pricing is only available to large customers, so it is generally important to develop an understanding of how much data queries are likely to process in order to contain costs. This project benefitted from a pricing policy that makes the first 1TB of data processed free of charge, so there were no costs in extracting the necessary data from the Stack Overflow public dataset through BigQuery.

Google BigQuery provides a convenient web interface for running SQL queries, but I knew that I would ultimately need to acquire data as part of a pipeline. I wrote a Bash script that could accept arguments from the command line, such as a file containing a BigQuery SQL command to call, a file to store output from BigQuery on the AWS instance, and the date range to use in the query. By running the Bash script with different parameters in the pipeline, I was able to acquire the data I needed through a batch process.
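The batch extraction step could be sketched along the following lines. This is a Python illustration rather than the Bash script described above, and the function name, the `{start_date}` and `{end_date}` placeholders, and the example query are assumptions made for the sketch. It only assembles the arguments for Google's `bq` command-line tool and leaves running the command to the caller.

```python
def build_bq_command(sql_template, start_date, end_date, max_rows=100000):
    """Fill a date range into a SQL template and build a `bq query` call.

    The {start_date} and {end_date} placeholders are a convention assumed
    for this sketch, not something BigQuery itself defines.
    """
    sql = sql_template.format(start_date=start_date, end_date=end_date)
    return [
        "bq", "query",
        "--use_legacy_sql=false",   # standard SQL, as Google recommends
        "--format=csv",
        f"--max_rows={max_rows}",
        sql,
    ]


# Hypothetical query against the public Stack Overflow dataset.
TEMPLATE = (
    "SELECT id, title, tags, favorite_count "
    "FROM `bigquery-public-data.stackoverflow.posts_questions` "
    "WHERE creation_date >= TIMESTAMP('{start_date}') "
    "AND creation_date < TIMESTAMP('{end_date}')"
)

command = build_bq_command(TEMPLATE, "2017-01-01", "2017-02-01")
```

The resulting list can be handed to `subprocess.run` with standard output redirected to a file, which mirrors the way the pipeline stores each extract on the AWS instance.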

As Brent Ozar noted in his discussion of the utility of the Stack Overflow public dataset for testing and training purposes, as a source of real world data, it can be messy, containing some unexpected and questionable values. Some text entries are in double-byte characters. For example, a user may submit questions, answers, and comments in English, but write his or her profile in Korean. In addition, some fields contain data that is not valid, such as fanciful entries in the age field that some users submitted, and there are entries in the field that records when a user joined Stack Overflow that precede the date when the site was created. For these and other reasons, it was necessary to clean the data before it could be used to build machine learning models and produce predictions.

I chose to use Spark as a means to clean the data I extracted from Stack Overflow and also to create new features that I would later use in building predictive models. I used PySpark in particular, and, although this choice worked out well for the purposes I have described, it did have other consequences that affected subsequent steps in the pipeline, as I will explain later.

I created Python scripts to clean each data file that I extracted from the Stack Overflow public dataset on Google BigQuery, and created Bash scripts to submit the scripts to Spark. Spark Submit would be another option, which would probably be more appropriate in a true production environment.

These Python scripts use Spark’s capabilities to remove records that contain invalid data. They also create categorical features and do other preprocessing. For example, as discussed earlier, Stack Overflow assigns users reputation points based on their actions on the site. This means that new users start with a reputation of 1 point, top users may have a reputation in the hundreds of thousands of points, and some users may have a reputation that is negative due to the submission of spam or other actions that other users deemed unhelpful. Rather than use the raw reputation scores and ask players to predict them, I used Spark to compute the quintiles for these scores. I simplified this and other tasks by creating a Python class that provides utility functions for cleaning and preprocessing data in Spark dataframes in the ways that I required.
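To make the quintile idea concrete, here is a minimal sketch in plain Python. It is a stand-in for the Spark step, not the code from the pipeline, and the function names and sample reputation values are invented for illustration.

```python
from bisect import bisect_left
from statistics import quantiles


def quintile_cut_points(scores):
    """Return the four cut points that split the scores into five groups."""
    return quantiles(scores, n=5, method="inclusive")


def to_quintile(score, cut_points):
    """Map a raw score to a quintile label from 1 (lowest) to 5 (highest)."""
    return bisect_left(cut_points, score) + 1


# Hypothetical reputation scores, including a negative one.
reputations = [-3, 1, 1, 15, 120, 950, 4200, 18000, 250000, 600000]
cuts = quintile_cut_points(reputations)
labels = [to_quintile(r, cuts) for r in reputations]
```

Binning the scores this way turns a heavily skewed number into a five-level category, which is easier both for a classifier to predict and for a player to guess.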

Spark was especially useful in my work with tags. The Stack Overflow public dataset includes a tags field in its questions table. The tags field contains up to five tags that were assigned to a question that a user submitted on the site. The tags are stored separated by a pipe character (|). PySpark made it relatively easy for me to create functions to work with tags in my class for cleaning and preprocessing data. While splitting the contents of the tags field into separate tags would be straightforward in many platforms, Spark allowed me to accomplish more without difficulty. For example, I was able to calculate the total frequency of occurrence of the combined tags associated with each question. This information, I hypothesized, could be useful because it could indicate whether the technologies to which a question pertains are common topics of discussion or obscure ones, which might affect whether other users favorite the question.
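The tag feature described above can be illustrated with a short plain Python sketch. It is not the PySpark code from the pipeline; the function names and sample data are assumptions, and it simply counts how often each tag appears and sums those counts per question.

```python
from collections import Counter


def split_tags(tags_field):
    """Split a pipe-delimited tags field into a list of tag names."""
    return [tag for tag in (tags_field or "").split("|") if tag]


def total_tag_frequency(questions):
    """Return, for each question, the summed frequency of its tags."""
    counts = Counter(tag for q in questions for tag in split_tags(q))
    return [sum(counts[tag] for tag in split_tags(q)) for q in questions]


# Hypothetical tags fields for four questions.
sample = ["python|pandas", "python|flask", "java", "python"]
frequencies = total_tag_frequency(sample)
```

A question tagged only with a rarely used technology ends up with a low total, while one that combines several popular tags ends up with a high one.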

I initially planned to use PySpark for building predictive models as well and took preliminary steps to do so. While I was able to produce models and generate predictions using PySpark, I encountered limitations and complexities related to the divergence between two machine learning packages for Spark, the older MLlib package and the newer ML package. The MLlib package was created to work with Spark’s resilient distributed datasets (RDD) and offers a comprehensive implementation to use them. When Spark later introduced dataframes, the ML package emerged to work with the newer dataframes, rather than the RDDs that underlie them. The Spark 2.0 documentation advises:

“Using spark.ml is recommended because with DataFrames the API is more versatile and flexible. But we will keep supporting spark.mllib along with the development of spark.ml.”

I found that, in some cases, I could build a model and make predictions using the ML package, but could not evaluate the predictions as I would have liked with that package. Often, the MLlib package appeared to be more comprehensive, particularly as a means to generate metrics by which to evaluate predictions, but it would have required conversion of dataframes to RDDs and a different approach to get the results I wanted.

In addition to the differences between the ML and MLlib packages and the limitations involved, I found that I would sometimes encounter Spark features that I wanted to use, only to discover that they were available in Scala, the primary language in which Spark is written, but had not yet been ported to Python. This suggests that it may be preferable to work with Spark in Scala if one’s organization is adopting Spark for major projects, but for the purposes of this capstone project, I decided to adopt a different technology for building and applying machine learning models.

The alternative that I chose to pursue was the H2O machine learning platform. Written primarily in Java, H2O describes itself as a fast, scalable machine learning API, and it also offers Python and R APIs. A recommendation from Joseph Lee, a Chicago-based data scientist at Uptake and an NYC Data Science Academy graduate and consultant, encouraged me to investigate H2O, which proved to be a capable tool well suited to this project.

Just as I had created a data cleaner class to provide functions that would facilitate my cleaning and preprocessing of data in Spark, I similarly created a data predictor class to assist in building machine learning models and making predictions, this time with H2O. These included functions to initialize a connection to the H2O instance, import data from a CSV file into an H2OFrame, define fields as factors where appropriate, perform grid search, build machine learning models, such as logistic regression, random forest, and gradient boosted machine models, generate and evaluate predictions, and export results.
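A condensed version of that workflow might look like the sketch below. It is an illustration under assumptions rather than the data predictor class itself: the function name, the `favorited` target column, the 80/20 split, and the model settings are all hypothetical, and grid search is left out for brevity. The H2O imports sit inside the function so that the definition can be loaded on a machine without H2O installed.

```python
def train_favorite_gbm(csv_path, target="favorited", factor_columns=()):
    """Train a GBM classifier with H2O and return it with its validation AUC.

    The column names and the 80/20 split are assumptions for this sketch.
    """
    import h2o
    from h2o.estimators import H2OGradientBoostingEstimator

    h2o.init()                                     # connect to or start H2O
    frame = h2o.import_file(csv_path)              # CSV file -> H2OFrame
    for column in (target, *factor_columns):
        frame[column] = frame[column].asfactor()   # treat as categorical

    train, valid = frame.split_frame(ratios=[0.8], seed=42)
    predictors = [c for c in frame.columns if c != target]

    model = H2OGradientBoostingEstimator(ntrees=50, seed=42)
    model.train(x=predictors, y=target,
                training_frame=train, validation_frame=valid)
    return model, model.auc(valid=True)
```

Calling `train_favorite_gbm("questions_clean.csv")` (a hypothetical file name) would return the fitted model together with its AUC on the held-out rows.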

Results varied depending on a number of factors, including the amount of data used in training the models. For predictions of whether any user would mark a particular question as a favorite, models built with a small amount of data achieved AUC values ranging from 0.807 to 0.840 on validation datasets, with the Gradient Boosted Machine (GBM) model achieving the best results. Here AUC refers to the area under the ROC (Receiver Operating Characteristic) curve, which represents the tradeoff between the true positive rate (sensitivity) and the false positive rate (one minus specificity).
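For readers who have not worked with AUC before, the following sketch computes it directly from its pairwise interpretation: the probability that a randomly chosen favorited question receives a higher score than a randomly chosen question that was not favorited. The function name and the sample labels and scores are made up for illustration; H2O reports this metric itself, so the pipeline does not need code like this.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison of scores.

    Equals the probability that a randomly chosen positive example is
    scored above a randomly chosen negative one (ties count as half).
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative label")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


# Hypothetical outcomes (1 = favorited) and model scores.
favorited = [1, 0, 1, 0, 0]
predicted = [0.9, 0.4, 0.35, 0.2, 0.1]
auc = roc_auc(favorited, predicted)
```

A value of 0.5 corresponds to random guessing and 1.0 to a perfect ranking, which puts the 0.807 to 0.840 range in context.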

Although these AUC values are acceptable rather than outstanding, they show that it is possible to achieve reasonable predictions of whether a question will be favorited on Stack Overflow without any knowledge of the actual substance of the questions. The models consider factors such as the length of the title and the length of the body of a question, how long the user who submitted the question has been a member of the site, the total frequency of the question’s tags, and other data related to the question. However, they do not perform any natural language processing (NLP) to determine things such as the readability of the text of the question, nor do they use text analysis techniques such as term frequency–inverse document frequency (tf-idf). Text analysis through NLP techniques has the potential to produce new meta-features that could contribute to more effective predictions of whether a question is favorited. This might be a productive path to consider for future development of this or similar projects, but I determined that the large domain of text analysis exceeded the scope of this capstone project.

The H2O platform that this project employs for machine learning modeling could also be integrated with Spark through a Spark application library called Sparkling Water. Sparkling Water provides the means to convert between Spark and H2O data structures, including Spark’s RDD and DataFrame and H2O’s H2OFrame, and to call on H2O machine learning capabilities from Spark, including PySpark. Although I did not end up using Sparkling Water in this project, it could be an appropriate extension and could well prove useful to other projects that would benefit from integration between PySpark and H2O.

In addition to building models and making predictions using H2O, the data predictor class I created computes quantile metrics for the fields specified. This enables me to easily produce quantile metrics (such as the 25th percentile, median, and 75th percentile) for several features for each question and user. These metrics are not used directly in making predictions, but they are displayed in the web application, as I will discuss in a subsequent section.
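As an illustration of the kind of summary involved, the sketch below computes a set of percentiles for one field with Python's standard library. It is not the H2O-based code from the data predictor class; the function name and sample values are hypothetical, and the 2nd and 98th percentiles are included because the web application uses them as the extremes of its charts.

```python
from statistics import quantiles


def box_plot_summary(values):
    """Return the percentiles used to draw a box and whiskers chart."""
    cuts = quantiles(values, n=100, method="inclusive")  # 99 cut points
    return {
        "p2": cuts[1],        # lower whisker
        "p25": cuts[24],      # lower quartile
        "median": cuts[49],
        "p75": cuts[74],      # upper quartile
        "p98": cuts[97],      # upper whisker
    }


# Hypothetical question body lengths: 0, 10, 20, ..., 1000 characters.
body_lengths = list(range(0, 1001, 10))
summary = box_plot_summary(body_lengths)
```

Storing one such dictionary per field alongside the predictions gives the web application everything it needs to draw each chart without recomputing statistics.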

After using H2O to predict outcomes and calculate quantile metrics, my pipeline saves the results to JSON files. Finally, it needs to transfer these JSON files, which include selected fields from the Stack Overflow dataset that I cleaned and preprocessed along with predicted and actual outcomes, to a location where they will be accessible to a separate web application. I chose to use the Amazon S3 service for this purpose. This is accomplished through a straightforward Python script thanks to the boto3 module, which facilitates the upload to and download from an S3 bucket. A Python dictionary provides the mapping of local filenames on the AWS instance that runs the pipeline to the intended names of the data files that the web application will expect.
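The upload step can be sketched as follows. The function name, the file names in the mapping, and the bucket name in the usage note are assumptions for illustration; `upload_file` is the boto3 S3 client method that copies a local file to a bucket under a given key. The client is passed in as an argument so the function can be exercised without AWS credentials.

```python
def upload_results(s3_client, bucket, file_map):
    """Upload each local file to the bucket under its mapped key."""
    uploaded = []
    for local_path, key in file_map.items():
        s3_client.upload_file(local_path, bucket, key)
        uploaded.append(key)
    return uploaded


# Hypothetical local filenames mapped to the names the web app expects.
FILE_MAP = {
    "output/questions_predictions.json": "questions.json",
    "output/users_predictions.json": "users.json",
}
```

With boto3 installed and credentials configured, the call would be `upload_results(boto3.client("s3"), "example-bucket", FILE_MAP)`, where the bucket name is a placeholder.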

Readers can find setup notes for the pipeline on GitHub.

The Stack Overlord Online Game

I developed the Stack Overlord game to make it possible to match wits with the machine learning models that I trained on Stack Overflow question and user data. Even without analyzing the substance of questions or other user submissions, such as answers and comments, the models produced reasonable, though not outstanding, predictions. How well would human players do in making predictions without viewing the text of questions or other user content? I conceived of my goal as creating a Minimum Viable Product (MVP), which would enable people to take the basic steps of competing against machine learning models. I did not set out to build a refined, full-featured game, which would have required more resources, such as a graphic designer, than were available for what was a solo capstone project.

How to Play Stack Overlord

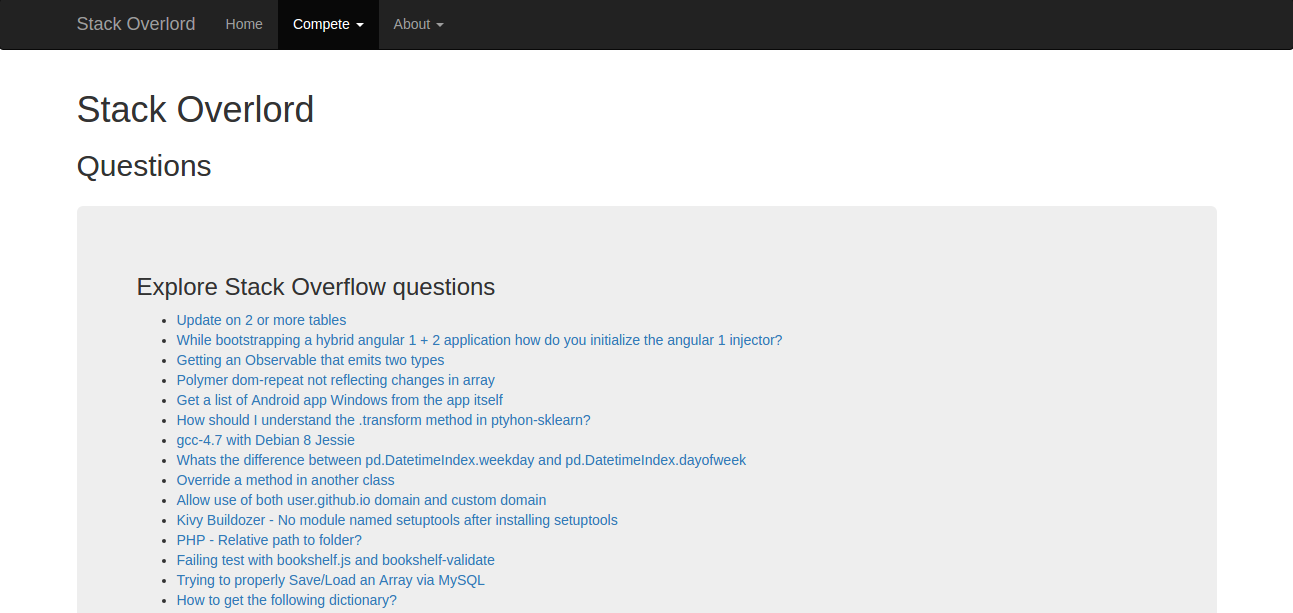

Playing Stack Overlord is designed to be a straightforward, but flexible process. Players may start with either of two types of challenges: Stack Overflow questions or users. If a player chooses questions, he or she is presented with a list of the titles of Stack Overflow questions. The player may start from the question at the top of the list or choose any question that is of interest.

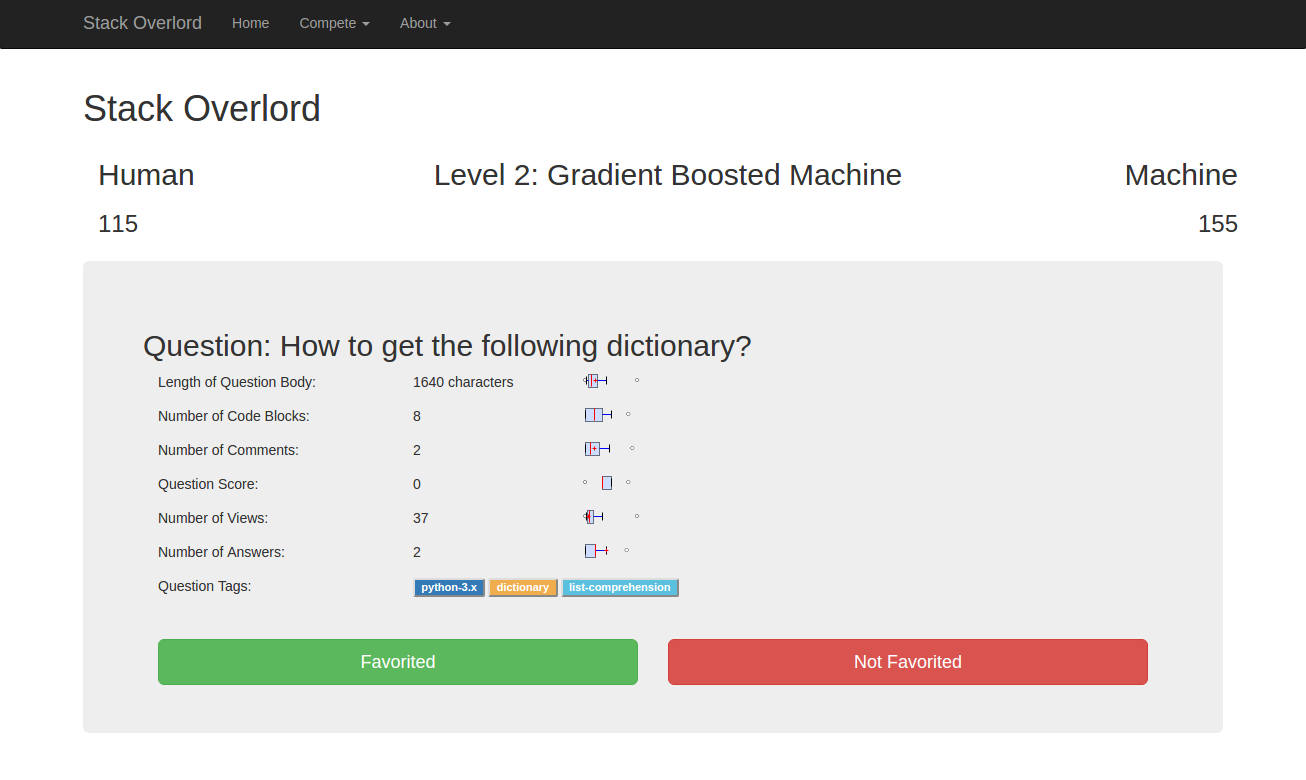

When the player selects a question, a page appears with selected measurements of that question. The challenge for the player is to correctly predict whether the question has been favorited (by any other Stack Overflow user). The player can use the box and whiskers charts of the measurements to understand how the question compares with other questions by each metric.

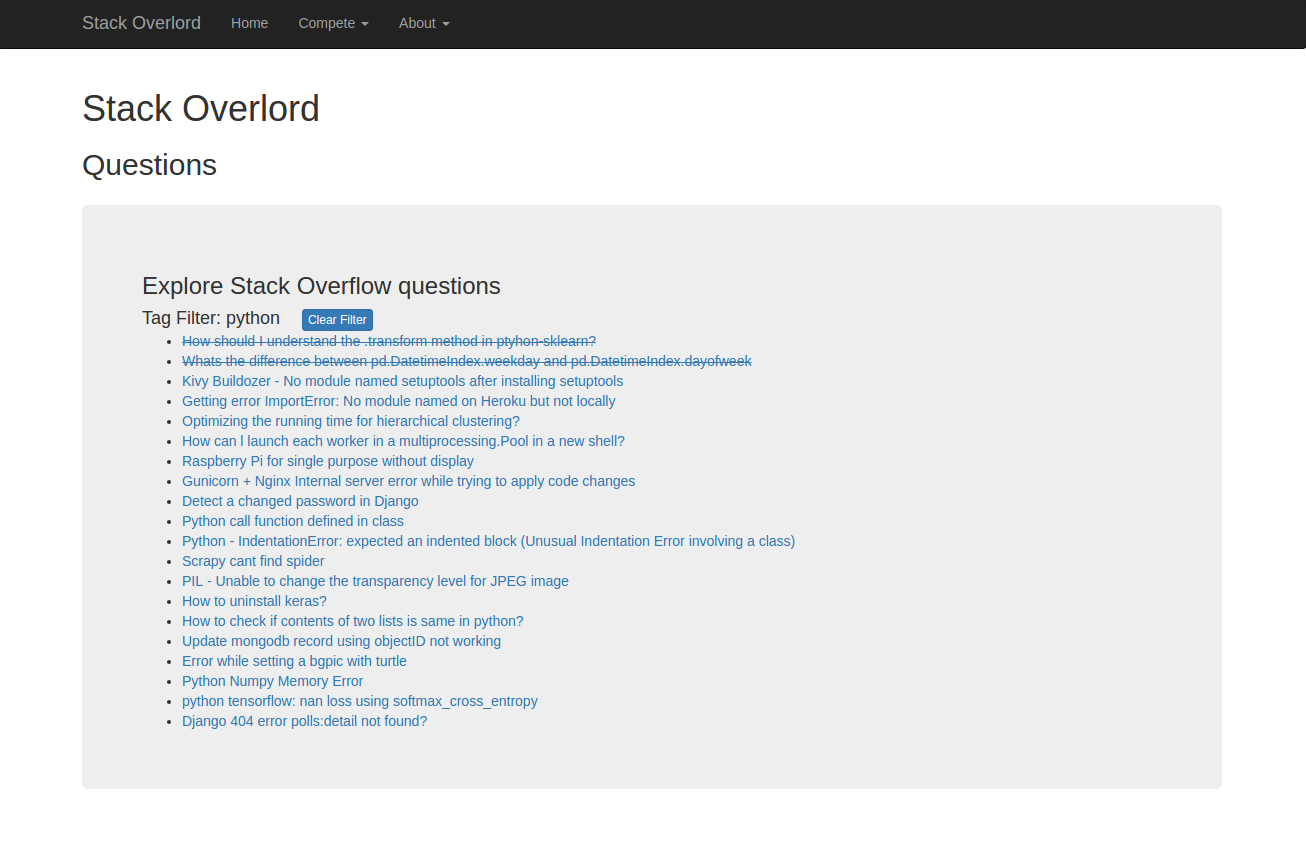

Prior to making a prediction, the player may wish to select one of the tags that appears on the question. Choosing a tag enables the player to specialize in questions associated with that tag. This means that, once the player completes the current question, the game will continue to present questions that share the same tag, as long as they are available. Once a player clicks to select a tag, a black border appears around that tag. The tag can be deselected by clicking the same tag again or by clicking a different tag to select it instead. The player can choose to answer questions related to one tag, such as Python, for a while, and then clear that selection or switch to a different topic of interest, such as Java, later.

To maximize the number of points earned, the player must predict correctly when the machine learning model (“the machine”) predicts incorrectly. If both the human player and the machine predict correctly, then each receives partial credit.
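These scoring rules can be expressed in a few lines. The point values and the function name below are hypothetical, and the treatment of rounds where the player is wrong (the machine scores in full if it is right, and nobody scores if both are wrong) is an assumption that mirrors the rules stated for the player.

```python
FULL_POINTS = 10     # hypothetical value
PARTIAL_POINTS = 5   # hypothetical value


def score_round(player_guess, machine_guess, actual):
    """Return (player_points, machine_points) for one favorited question."""
    player_right = player_guess == actual
    machine_right = machine_guess == actual
    if player_right and machine_right:
        return PARTIAL_POINTS, PARTIAL_POINTS   # both correct: shared credit
    if player_right:
        return FULL_POINTS, 0                   # player beats the machine
    if machine_right:
        return 0, FULL_POINTS                   # machine beats the player
    return 0, 0                                 # both wrong
```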

The player may choose to return to the list of questions, which will be filtered by the tag that he or she selected, if any. The list of questions will indicate which questions the user has already attempted.

After the player accumulates sufficient points predicting whether questions were favorited, he or she advances to a higher level. The player will then compete against a different machine learning model, which will be a more challenging adversary than that of the initial level, which is a logistic regression model.

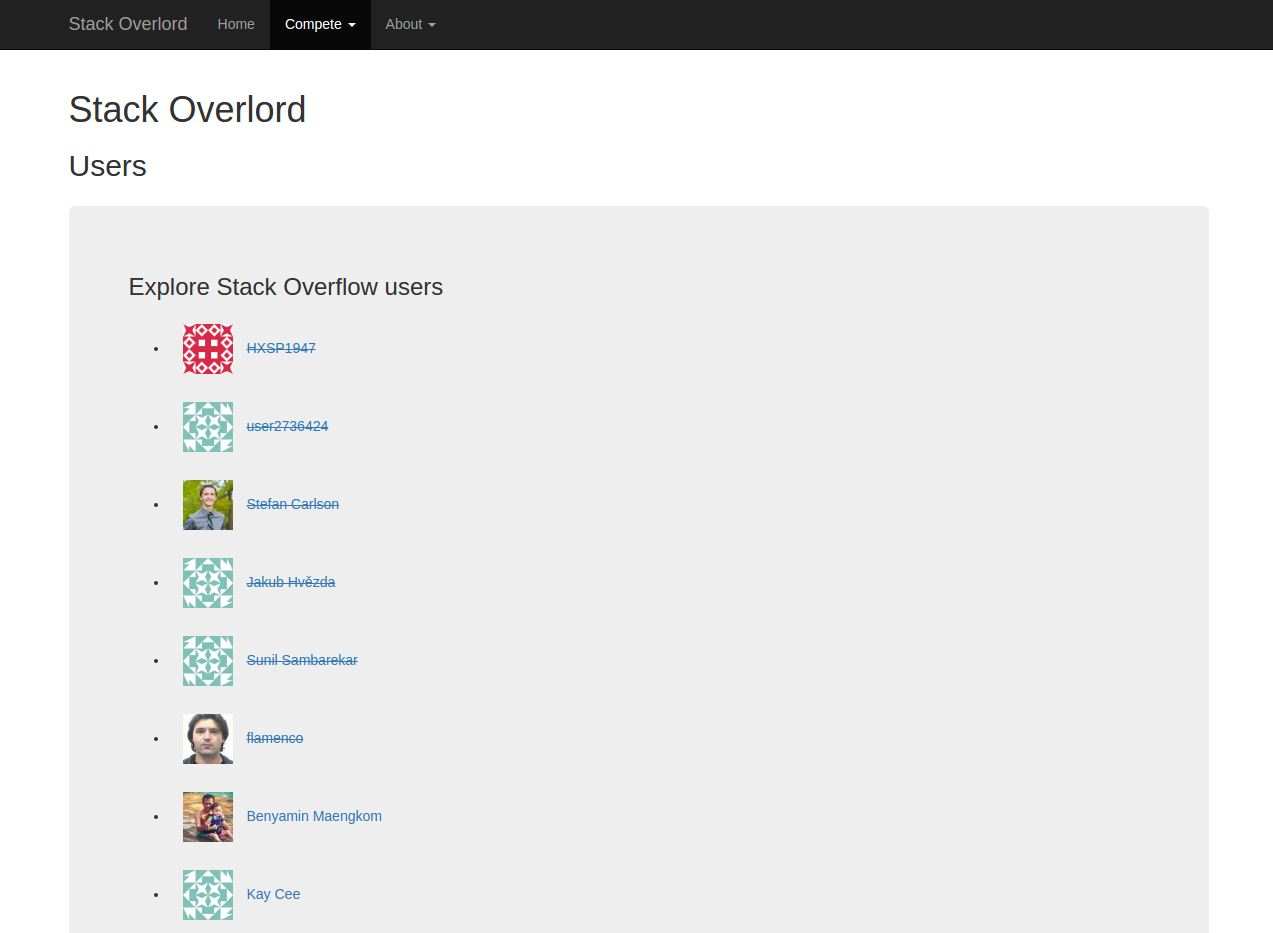

Players may start with or switch to the other type of challenge, predicting the reputation levels that users attain, at any time during the game. Choosing Users from the menu displays a list of user names and avatars, where available, from Stack Overflow. The player may then choose from the list to start competing.

Once the player selects a user from the list, the game shows the player measurements that describe the user. The measurements are accompanied by box and whisker charts that put the measurements in the context of other users, as is the case for questions. Players are expected to use a slider to show the reputation quintile that they expect the user to achieve.

The outcome of a player’s prediction of the Stack Overflow user’s reputation level is scored and displayed in a manner that is similar to the treatment of Stack Overflow questions. Players receive the most points when they choose the correct quintile, and the machine learning model does not. They can also earn some points for getting closer to the right answer than the machine.
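One way to score a quintile prediction along these lines is sketched below. The function name and point values are hypothetical, and the partial credit when both sides pick the correct quintile is an assumption carried over from the question challenge.

```python
def score_quintile_round(player_guess, machine_guess, actual,
                         full=10, partial=5, closer=3):
    """Return (player_points, machine_points) for a reputation quintile."""
    player_error = abs(player_guess - actual)
    machine_error = abs(machine_guess - actual)

    def points(own_error, other_error):
        if own_error == 0:
            # Exact hit: full credit unless the opponent also hit exactly.
            return partial if other_error == 0 else full
        # Missed, but still earn something for being closer than the opponent.
        return closer if own_error < other_error else 0

    return (points(player_error, machine_error),
            points(machine_error, player_error))
```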

Players may return to the list of users, where users for which the player has already made predictions will be indicated by strikethrough text.

The game keeps track of the scores achieved by the current player and the machine across the two types of challenges and displays them after a player makes a prediction for a question or user.

Building the Game

I chose to develop the game using Flask, a lightweight web framework written in Python. Flask depends on the Werkzeug WSGI (Web Server Gateway Interface) library and the Jinja2 template system but provides limited capabilities compared with many other web frameworks, such as Django. However, many extensions are available to add functionality to Flask, making it possible for a developer to rapidly build an application with the necessary capabilities but without the bloat associated with some heavier frameworks. It is also less opinionated than many other frameworks. Even the Jinja2 template engine can be replaced, if needed.

Flask is frequently used for rapid application development, including in data science and engineering, such as to display dashboards. It can also scale up to very large applications that meet the highest levels of demand, reportedly including Pinterest, which experiences about one billion visits per month.

Sticking with the lightweight theme, I selected Amazon’s AWS Lambda service to host the web application that I developed. Amazon states that Lambda enables “serverless” applications because it makes it possible to run code, including a web application, without the traditional responsibilities of setting up and managing a dedicated web server. Instead, Lambda provides a service that executes functions when it is called, such as when a user makes an HTTP request. It is highly scalable but very inexpensive for a low traffic web application.

The Lambda architecture has some noteworthy limitations. Requests cannot take more than 300 seconds to execute, and there are also limits on the size of the packages used to deploy functions and dependence on other tools within the AWS ecosystem. None of these were concerns for the web application I sought to build, so I proceeded with its development and deployment on AWS Lambda.

One key capability that the Stack Overlord game required was the ability to access the subset of data that the pipeline extracted from the Stack Overflow public dataset using Google BigQuery, cleaned and preprocessed using PySpark, and built and applied machine learning models to using H2O. As noted earlier, the pipeline concludes by uploading results to an S3 bucket. The web application is then able to acquire from the S3 bucket the data it needs to present challenges to players.

Because the Flask application, like the pipeline itself, is written in Python, it was possible to use the boto3 module again to access the S3 bucket. This time, boto3 is used to acquire JSON data files from the bucket. They can then be read into Python lists and used to display Stack Overflow questions and users.
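Reading a data file back from the bucket might look like the sketch below. The function name is an assumption, as are the bucket and key in the usage note; `get_object` is the boto3 S3 client method that returns the stored object with its contents available as a readable `Body` stream.

```python
import json


def load_json_from_s3(s3_client, bucket, key):
    """Fetch a JSON file from S3 and parse it into Python objects."""
    response = s3_client.get_object(Bucket=bucket, Key=key)
    return json.loads(response["Body"].read().decode("utf-8"))
```

In the Flask application this could be called once per data file, for example `load_json_from_s3(boto3.client("s3"), "example-bucket", "questions.json")`, with the resulting list passed to a template for display.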

If I were to go beyond a Minimum Viable Product, I would use either a SQL database or a NoSQL database such as MongoDB or Amazon’s own DynamoDB to store the questions and users. While that would be more robust, it was not necessary to create a working game using Flask.

Twitter Bootstrap was used as the front-end framework. A collection of customizable HTML and CSS templates, Bootstrap makes it easy for people who are not graphic designers to produce presentable user interfaces. It also makes it possible for a web designer to transform the look of a site dramatically without necessarily requiring changes to the application code. In the case of Stack Overlord, the result was clean, but unlikely to result in a call from Electronic Arts.

The game stores some information on the client side, using the capabilities of modern HTML5 compliant web browsers, specifically SessionStorage. This provides an alternative to using cookies, which have much more limited storage capacity. The JavaScript JSON.stringify() method makes it possible to convert a JavaScript object into a string, which can then be stored in SessionStorage. The complementary JSON.parse() function converts a string into a JavaScript object, and can be used to make session variables accessible after retrieving the session object from SessionStorage.

These capabilities give the application easy access to session information such as the scores of the human player and the machine, outcomes of each completed challenge (which need to be redisplayed if the player selects them again), and more. When the player completes a new challenge and his or her score and/or the machine’s score changes, the web application creates an updated session object and replaces the existing session object in SessionStorage. The use of SessionStorage means that the game can be played with recent versions of major web browsers, such as Google Chrome, Mozilla Firefox, or Microsoft Edge, but not with older browsers that do not support HTML5.

The web application also benefitted from other technologies related to Bootstrap. Among these is the Bootstrap Slider JavaScript library, which provides the slider component that players use to indicate their prediction of the reputation level of a Stack Overflow user. This library provided a clean and effective UI component for this purpose that integrated well with the application. It could also be easily modified to accommodate a different number of quantiles of reputation levels or other metrics.

The libraries Flask-Bootstrap and FlaskNav also aided in the construction of the web application. Flask-Bootstrap provides an extension that, once loaded, provides support for Jinja2 templates that are based on Bootstrap. FlaskNav is an extension that assisted in the creation of the site navigation in a manner that can be readily updated or extended.

Simply displaying measurements such as the length of the text of a question that a user submitted on Stack Overflow or the number of blocks of code that the user included in his or her submission can leave players with too much uncertainty. Is 1200 characters a lot or a little for a Stack Overflow question? How do three blocks of code compare to the typical question on the site? Fortunately, the jQuery Sparklines plugin helped to provide a solution to this problem. I used the Sparklines plugin to provide box and whiskers diagrams that show, where possible, how the measurements for particular questions or users compare with the median, lower and upper quartiles, and the extremes (2nd and 98th percentile). This provides crucial context for evaluating the numbers for each question or user, giving the human player a fair chance in making predictions.

The assistance that the box and whiskers charts provide could also be extended further to increase its value as an element of the game. For example, it would be possible to devise higher levels of gameplay that are more challenging simply by limiting the number of box and whisker charts that are presented or reducing the information that each presents. Alternatively, users could be required to purchase the hints that box and whisker diagrams can provide, using a few of their points to make the purchases if they wish to gain an information edge. Any of these options would serve to make the game more challenging.

The code for the web application can be found on GitHub.

Conclusion

In this project, I drew on a variety of technologies, platforms, and tools to develop a pipeline that extracts and preprocesses data from Stack Overflow, builds and applies predictive models, and exports results to a cloud-based platform. I also built a web application that consumes the predictions and associated data and presents them in the form of a game, in which players compete against machine learning models in predicting Stack Overflow outcomes. The result was a loosely coupled system that is currently capable of obtaining data and producing reasonable predictions that are presented interactively as a competition between human and machine.

Both open source technologies and cloud based platforms, especially Amazon Web Services, benefitted the development of this system. Open source technologies, including Apache Spark and the H2O machine learning platform, were critical to the cleaning and preparation of the data and the development and application of predictive models. The web application also relies heavily on open source technologies, such as Flask, Twitter Bootstrap, and jQuery. Google Cloud Platform’s BigQuery web service provided the means to extract source data from Stack Overflow, while Amazon Web Services platforms such as EC2, S3, and Lambda enabled both the pipeline and the web application.

There are many ways in which this project could be extended if more time and resources were available. A few of these include:

- Applying natural language processing to analyze the text content of Stack Overflow questions and using the findings to strengthen predictive models

- Developing more types of challenges for players to encounter in the game

- Creating more levels of game play, which could be achieved by developing a broader range of machine learning models and using increasingly competitive models as players advance to higher levels, which is currently done for a small number of levels

- Limiting the ability of players to see box and whiskers charts at higher levels of game play or requiring them to use some of their points to unlock them

- Creating a database back end for the game, both to store the processed data and predictions based on Stack Overflow and to enable storage and authentication of player accounts

- Tracking players’ scores in the database and providing the means to visualize how players compare with each other and how the machine learning models compare with the population of human players

These types of enhancements are achievable, given sufficient time, due to the capabilities of the underlying technologies and platforms and extensibility of the system.