Scraping comic book reviews: Critics vs Fans

Contributed by Ho Fai Wong. He is currently in the NYC Data Science Academy 12-week full-time Data Science Bootcamp program taking place from April 11th to July 1st, 2016. This post is based on his third class project - Web scraping (due in the 6th week of the program).

I. Introduction

There are so many US comic book publishers and series that, as a comic book fan, I sometimes find it challenging to decide which new series to try out. Luckily, multiple comic book review websites can easily be found online. Among them, comicbookroundup.com aggregates critic and fan reviews from different websites and calculates average critic and fan ratings. Do critics and fans agree though? What insights can we glean from differences in their ratings and opinions?

To answer these questions, I wrote a web scraping application using Scrapy in Python to extract the publisher, series and issue rating information from comicbookroundup.com, and analyzed it in Python using Jupyter Notebook. All the code is available here.

II. Approach

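The site is organized as a hierarchy: the main page lists publishers, each publisher page lists its series, and each series page lists its issues with their ratings. This hierarchy is summarized in the image below. To analyze critic vs user (i.e. fan) ratings, I needed to extract issue ratings from the website by crawling 3 levels down.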

Step 1: Crawl with Scrapy

- Scrape publisher urls from main page: 20 publishers total

- For each publisher, scrape series urls: 5,829 series total

- For each series, scrape series info, issues and ratings: 33,157 issues total

Below is a snapshot of the scraping "spider" Python code illustrating these 3 steps: scraping the publisher urls to a text file, scraping each publisher page for the series urls, and then scraping each series page for the issue ratings.
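As a rough, library-free illustration of the 3-level traversal described above (this is not the original spider), the sketch below walks main page, publisher pages and series pages in turn. The `get_children` callable and the in-memory `FAKE_SITE` are hypothetical stand-ins with made-up paths and ratings. In Scrapy, each level would typically be its own callback that yields requests for the next level.

```python
def crawl_issue_ratings(get_children, start_url):
    """Walk main page -> publisher pages -> series pages and collect issue rows.

    get_children(url) is a hypothetical callable returning what the page at
    `url` lists: publisher URLs for the main page, series URLs for a publisher
    page, and issue-rating dicts for a series page.
    """
    rows = []
    for publisher_url in get_children(start_url):        # level 1: publishers
        for series_url in get_children(publisher_url):   # level 2: series
            for issue in get_children(series_url):       # level 3: issues
                rows.append({
                    "publisher_url": publisher_url,
                    "series_url": series_url,
                    **issue,
                })
    return rows


# Tiny in-memory stand-in for the site (made-up paths and ratings).
FAKE_SITE = {
    "/": ["/pub-a", "/pub-b"],
    "/pub-a": ["/pub-a/series-1"],
    "/pub-b": ["/pub-b/series-2", "/pub-b/series-3"],
    "/pub-a/series-1": [{"issue": 1, "critic": 8.0, "user": 8.5}],
    "/pub-b/series-2": [
        {"issue": 1, "critic": 6.5, "user": 7.0},
        {"issue": 2, "critic": 7.0, "user": 7.5},
    ],
    "/pub-b/series-3": [],  # a series with no rated issues yet
}

rows = crawl_issue_ratings(FAKE_SITE.__getitem__, "/")
for row in rows:
    print(row)
```

Keeping the traversal separate from the page fetching makes it easy to test the crawl logic without hitting the website.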

Step 2: Pass to Jupyter Notebook

- Series data:

  - Save series data to MongoDB collection

  - Load into series dataframe in Jupyter Notebook

- Issues data:

  - Extract issues from nested dictionaries in series data into MongoDB collection

  - Load into issues dataframe in Jupyter Notebook
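The extraction of issues from nested dictionaries could look something like the sketch below. The field names (`publisher`, `name`, `issues`, `critic_rating`, `user_rating`) and the sample documents are assumptions made for illustration, not the actual schema of the scraped data.

```python
def flatten_issues(series_docs):
    """Turn series documents with nested issues into one flat row per issue."""
    rows = []
    for series in series_docs:
        # "issues" is assumed to map an issue number to its rating info.
        for number, issue in series.get("issues", {}).items():
            rows.append({
                "publisher": series["publisher"],
                "series": series["name"],
                "issue": number,
                # .get() leaves missing ratings as None instead of failing.
                "critic_rating": issue.get("critic_rating"),
                "user_rating": issue.get("user_rating"),
            })
    return rows


# Made-up series documents, shaped like what a scraper might store.
series_docs = [
    {
        "publisher": "Publisher A",
        "name": "Series 1",
        "issues": {
            "1": {"critic_rating": 8.0, "user_rating": 8.5},
            "2": {"critic_rating": 7.5},  # no user rating yet
        },
    },
    {"publisher": "Publisher B", "name": "Series 2", "issues": {}},
]

issue_rows = flatten_issues(series_docs)
for row in issue_rows:
    print(row)
```

A list of flat dictionaries like `issue_rows` can be inserted into a MongoDB collection or passed to `pandas.DataFrame` to build the issues dataframe.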

III. Analysis

Issues and average ratings by publisher

To start the data exploration, it would be helpful to see the relative size of each publisher and high-level ratings by critics and users.

DC and Marvel are by far the largest publishers.

Most ratings fall within the 7-9 range (out of 10). For most publishers, users tend to rate issues more generously than critics (i.e. publishers are located on the left of the y=x 'diagonal' line). DC and Marvel, the 2 largest publishers, are rated relatively low by critics.
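A per-publisher summary of this kind can be sketched with the standard library alone. The rows below are made up for illustration, and the field names are assumptions rather than the actual column names used in the analysis.

```python
from collections import defaultdict
from statistics import mean


def average_by_publisher(issue_rows):
    """Return issue count and average critic/user rating for each publisher."""
    grouped = defaultdict(lambda: {"critic": [], "user": []})
    for row in issue_rows:
        grouped[row["publisher"]]["critic"].append(row["critic_rating"])
        grouped[row["publisher"]]["user"].append(row["user_rating"])

    summary = {}
    for publisher, ratings in grouped.items():
        critic_avg = mean(ratings["critic"])
        user_avg = mean(ratings["user"])
        summary[publisher] = {
            "issues": len(ratings["critic"]),
            "critic_avg": critic_avg,
            "user_avg": user_avg,
            # True when users rate this publisher higher than critics do.
            "users_more_generous": user_avg > critic_avg,
        }
    return summary


# Made-up issue rows, not taken from the scraped data set.
issue_rows = [
    {"publisher": "A", "critic_rating": 7.0, "user_rating": 8.0},
    {"publisher": "A", "critic_rating": 8.0, "user_rating": 8.0},
    {"publisher": "B", "critic_rating": 9.0, "user_rating": 8.5},
]

summary = average_by_publisher(issue_rows)
for publisher, stats in summary.items():
    print(publisher, stats)
```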

Critic vs user ratings

Let's plot the critic vs user ratings for every issue in our data set. The green line represents the y=x diagonal. As seen previously, most ratings fall within the 7-9 range and critics tend to rate comics lower than users for a given issue. Also, users rarely rate a comic below 2, whereas critics are more willing to give a rating of 1.

Looking at the rating difference (critic - user), the differences are approximately normally distributed, with a mean slightly below 0, i.e. once again, critics tend to rate more harshly than users.

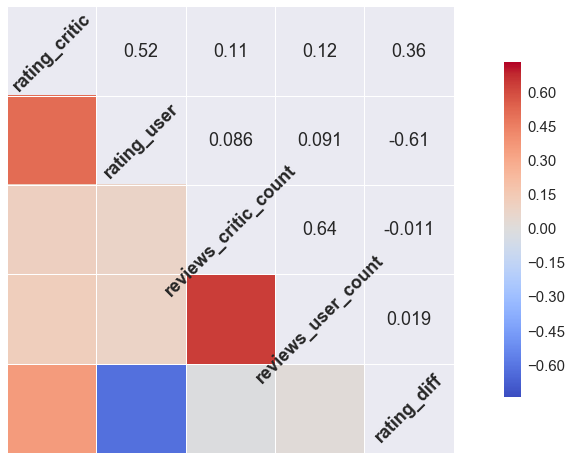
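To make the rating difference concrete, here is a minimal sketch of the computation on a handful of made-up (critic, user) pairs. The numbers are illustrative only and are not results from the scraped data.

```python
from statistics import mean, stdev

# Made-up (critic_rating, user_rating) pairs, one per issue.
ratings = [(7.0, 8.0), (8.5, 8.0), (6.0, 7.5), (9.0, 9.0), (7.5, 8.0)]

# A negative difference means the critics rated the issue lower than users.
differences = [critic - user for critic, user in ratings]

mean_diff = mean(differences)
spread = stdev(differences)
share_critic_lower = sum(d < 0 for d in differences) / len(differences)

print("differences:", differences)
print("mean difference:", mean_diff)
print("standard deviation:", round(spread, 3))
print("share of issues rated lower by critics:", share_critic_lower)
```

A mean below 0 together with a majority of negative differences is the pattern that the histogram describes.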

Correlation between ratings and reviews

I was curious whether there was any correlation between ratings and number of reviews. The correlation plot below shows that, as expected, critic and user ratings are correlated, as are the numbers of critic and user reviews. However, the number of reviews (critic or user) is not strongly correlated with ratings. In other words, a popular issue could be reviewed a large number of times by critics and/or users but have a low or high rating; its popularity doesn't provide insight into its perceived quality.
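For reference, the correlation in question is the Pearson coefficient, which can be computed by hand as in the sketch below. The toy columns are constructed so that the two ratings move together while the review count does not track the rating. They are not taken from the scraped data.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance_x = sum((x - mean_x) ** 2 for x in xs)
    variance_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(variance_x * variance_y)


# Made-up columns, one value per issue.
critic_rating = [6.0, 7.0, 8.0, 9.0]
user_rating = [6.5, 7.5, 8.5, 9.5]
critic_reviews = [10, 2, 2, 10]

rating_vs_rating = pearson(critic_rating, user_rating)
rating_vs_reviews = pearson(critic_rating, critic_reviews)

print("critic rating vs user rating:", round(rating_vs_rating, 3))
print("critic rating vs critic reviews:", round(rating_vs_reviews, 3))
```

A value near 1 or -1 indicates a strong linear relationship, while a value near 0 indicates little or none.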

Looking at correlations in a bit more detail, the scatterplot matrix below also shows that extreme rating differences are tied to issues reviewed by a small number of users (and therefore we probably shouldn't put too much weight on their ratings). Critics, however, tend to agree more on these issues.
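One way to check this kind of observation is to split issues by the size of the critic/user gap and compare how many user reviews each group received. In the sketch below, the `min_gap` threshold of 3 points, the field names and the rows are all hypothetical.

```python
from statistics import median


def split_by_gap(issue_rows, min_gap=3.0):
    """Separate issues with a large critic/user rating gap from the rest."""
    large, small = [], []
    for row in issue_rows:
        gap = abs(row["critic_rating"] - row["user_rating"])
        (large if gap >= min_gap else small).append(row)
    return large, small


# Made-up issue rows, not taken from the scraped data set.
issue_rows = [
    {"critic_rating": 8.0, "user_rating": 3.0, "user_reviews": 1},
    {"critic_rating": 7.5, "user_rating": 8.0, "user_reviews": 12},
    {"critic_rating": 6.0, "user_rating": 9.5, "user_reviews": 2},
    {"critic_rating": 8.5, "user_rating": 8.0, "user_reviews": 20},
]

large, small = split_by_gap(issue_rows)
median_reviews_large = median(r["user_reviews"] for r in large)
median_reviews_small = median(r["user_reviews"] for r in small)

print("large-gap issues:", len(large), "median user reviews:", median_reviews_large)
print("other issues:", len(small), "median user reviews:", median_reviews_small)
```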

Focus on top publishers

Lastly, let's look at critic vs user ratings by publisher. I selected the top 5 for simplicity. As observed before, users tend to rate issues higher than critics, for all publishers.

DC and Marvel, the 2 largest publishers, have more issues rated poorly by critics compared to Image, Dark Horse and IDW (larger left tail of the density curve). Conversely, the latter 3 publishers have a higher concentration of highly rated issues by critics compared to DC and Marvel. This could perhaps be related to the different types and genres of comics from these different publishers (e.g. adult-themed vs child-friendly).

IV. Conclusion

In conclusion:

- Overall, critic and user ratings are broadly in line, with most falling in the 7-9 range

- Critics rate slightly more harshly than users

- Critics hesitate less to rate a comic very low

- A comic's rating and number of reviews are not highly correlated

- Comics with large rating differences between critics and users were usually rated by very few users

Ultimately, to discover new comics to read, it may be preferable to identify reviewers who share your preferences and focus on their recommendations, rather than relying on average ratings from either critics or fans, since those averages mostly fall in the 7-9 range anyway.

Potential future steps include:

- Building a Shiny application to search for best reviewed titles, writers and artists, and explore based on individual preferences

- Finding outliers (e.g. series, writers, artists, issues with largest rating difference)

- Scraping individual reviews to build word clouds, identify users who repeatedly contribute to the largest rating differences, etc.

In the meantime, if you have any comments, suggestions or insights, please comment below!