Scraping of dice.com

Contributed by Arda Kosar. He is currently in the NYC Data Science Academy 12-week full-time Data Science Bootcamp program, which runs from April 11th to July 1st, 2016. This post is based on his third class project, web scraping (due in the 6th week of the program).

Part I. Introduction

My main motivation for scraping a job board was that I will start working as a data scientist after the bootcamp, and I wanted to see what job posts in my field look like: what the trends are, which skills companies look for, and so on.

My first plan was to scrape Indeed.com, but I ran into some difficulties, which I address throughout this post. This was a timed project with roughly one week allowed, and I really wanted to produce an output, so after three days of trying to overcome those difficulties I decided to switch to dice.com. I will also talk about what worked better for me with dice.com.

By the end of the project I was able to collect the data I wanted from dice.com, and I got some insights, which I share in the Data Analysis part.

Part II. Data Collection

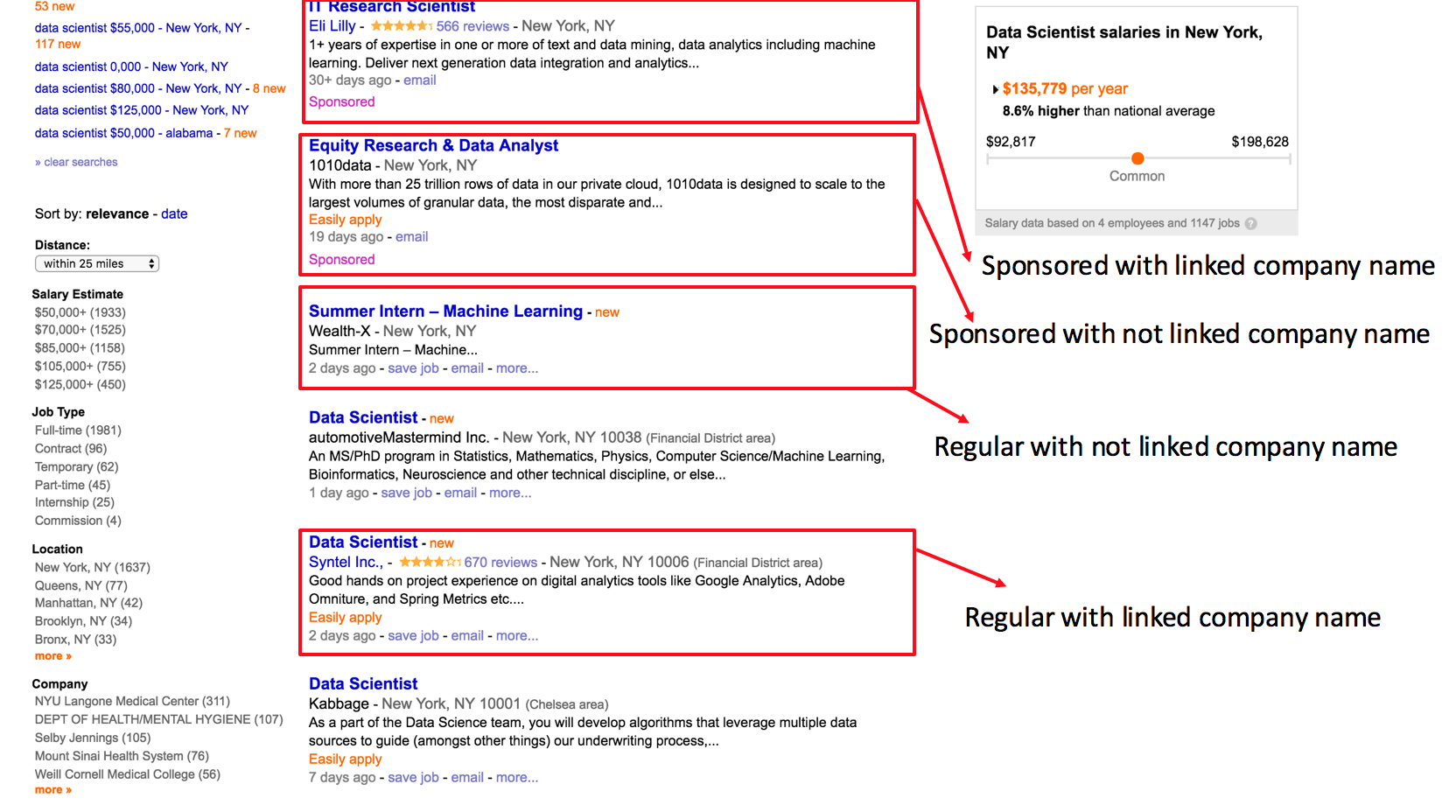

To collect my data I used Scrapy and Selenium. With indeed.com I had difficulties with the HTML structure. I was trying to scrape job posts, but I found that there were 4 different kinds of jobs, each under different tags in the page. An example page showing the 4 types of jobs can be seen in the image below:

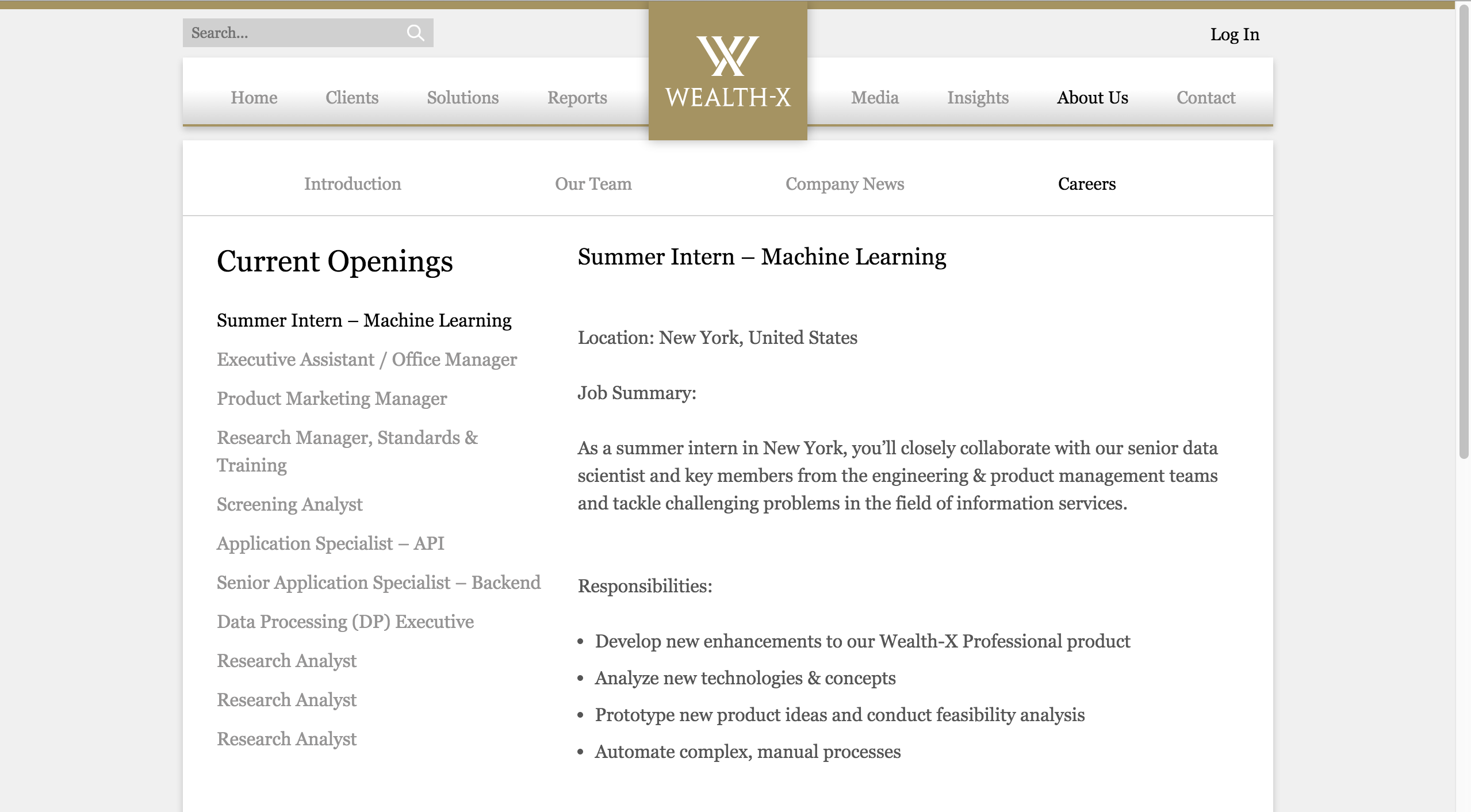

Since I could not get all the data I wanted from the first page, I thought about getting the information from the job description pages instead. Clicking a job link should lead to the job description page. However, clicking different job titles directs the user to pages with different structures, as follows:

Since the HTML structures of the job description pages also differed, extracting job information from them was a challenge as well.

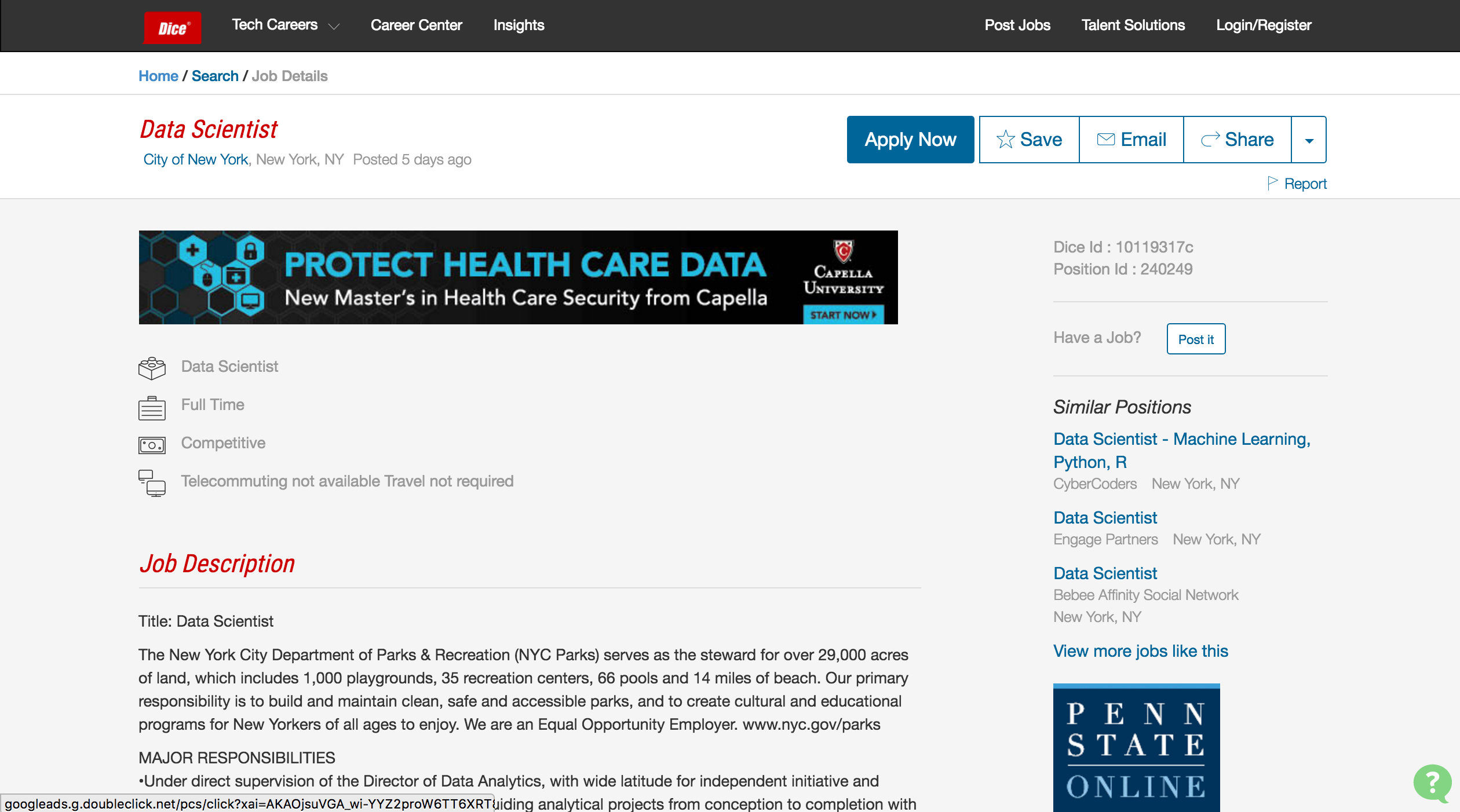

After considering all this and taking a look at its HTML structure, I decided to switch to dice.com. On its search results page all jobs were of the same type:

The job description pages also had the same structure for all of the jobs in the search results. I extracted the job title, company name, job type, salary range and location from the job description pages:

First I created a for loop to collect all the links to the individual job posts from the search results page:
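The original spider code is not reproduced here, so the following is only a minimal sketch of the link-collection idea. It uses Python's standard library instead of Scrapy and Selenium so that it runs on its own. The HTML snippet, the `job-link` class name, the `example.com` base URL and the `collect_job_links` helper are all hypothetical and do not reflect dice.com's real markup.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class JobLinkParser(HTMLParser):
    """Collects the href of every <a> tag that carries a given CSS class."""

    def __init__(self, link_class):
        super().__init__()
        self.link_class = link_class
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if self.link_class in classes and attrs.get("href"):
            self.links.append(attrs["href"])


def collect_job_links(html, base_url, link_class="job-link"):
    """Loop over the matching anchors and return absolute job URLs."""
    parser = JobLinkParser(link_class)
    parser.feed(html)
    links = []
    for href in parser.links:
        # Search results usually contain relative links; make them absolute.
        links.append(urljoin(base_url, href))
    return links


# Hypothetical search results page (assumption, not real dice.com HTML).
SAMPLE_RESULTS_PAGE = """
<div id="results">
  <a class="job-link" href="/jobs/detail/1">Data Scientist</a>
  <a class="nav" href="/about">About</a>
  <a class="job-link" href="/jobs/detail/2">Data Analyst</a>
</div>
"""

job_links = collect_job_links(SAMPLE_RESULTS_PAGE, "https://www.example.com")
for link in job_links:
    print(link)
```

In a Scrapy spider the same loop would normally run over the result of a `response.xpath(...)` call rather than a hand-written parser.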

After collecting all the links to the job posts, I followed each link and extracted the information I wanted by creating the response for the page and iterating over the XPaths of the fields I wanted:
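As a rough illustration of iterating over a set of XPaths, here is a sketch that uses the limited XPath support in the standard library's `xml.etree.ElementTree` instead of Scrapy selectors. The field names match the ones listed above, but the XPath expressions, the class names and the sample page are assumptions made up for this example.

```python
import xml.etree.ElementTree as ET

# Hypothetical XPaths, one per field (assumption: real pages use other markup).
FIELD_XPATHS = {
    "job_title": ".//h1[@class='title']",
    "company": ".//span[@class='company']",
    "job_type": ".//span[@class='type']",
    "salary": ".//span[@class='salary']",
    "location": ".//span[@class='location']",
}

# Hypothetical, well-formed job description page with no salary listed.
SAMPLE_JOB_PAGE = """
<html><body>
  <h1 class="title">Data Scientist</h1>
  <span class="company">Example Corp</span>
  <span class="type">Full Time</span>
  <span class="location">New York, NY</span>
</body></html>
"""


def extract_fields(page_source, field_xpaths):
    """Apply each XPath to the page and return one dict per job post."""
    root = ET.fromstring(page_source.strip())
    item = {}
    for field, xpath in field_xpaths.items():
        text = root.findtext(xpath)  # None when the element is missing
        item[field] = text.strip() if text else None
    return item


job = extract_fields(SAMPLE_JOB_PAGE, FIELD_XPATHS)
print(job)
```

`ElementTree` only parses well-formed markup, which real web pages often are not; this is one reason a scraping library's own selectors are the more practical choice for live pages. Missing fields come back as `None`, which is one source of the noise discussed in the analysis.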

I wrote the extracted information to a CSV file by running "scrapy crawl <spider_name> -o file.csv -t csv" from the terminal, where <spider_name> is the name of the spider.

Part III. Data Analysis

At the end of the whole scraping workflow, I obtained the following dataset:

As can be seen from the head of the dataset, the data contains a lot of noise. For this project, I carried out my data munging in Python, mainly using the Pandas, NumPy and Seaborn packages. I imported my CSV file into Python and converted it to a Pandas data frame.

Part III - I. Frequency of Unique Job Titles

As the first goal of my analysis, I wanted to find out how many unique job titles there are and how many of the posts actually state "Data Scientist" in their title. However, since the data was really noisy, I had to do some feature engineering first. I used "value_counts" to find the counts of the unique job titles and then rewrote the job titles that contain similar words. For example, if the job titles were "Chief Data Scientist", "Lead Data Scientist" and "Data Scientist", I changed them all to "Data Scientist" so that I could get an exact count:
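The title rewriting can be sketched roughly as below. This version uses only the standard library (`collections.Counter` in place of pandas `value_counts`) so that it runs anywhere. The list of canonical titles, the matching rule (case-insensitive substring, first match wins) and the sample titles beyond the three quoted above are assumptions for illustration, not the exact rules used in the project.

```python
import re
from collections import Counter

# Hypothetical canonical titles, checked in order; the first match wins.
CANONICAL_TITLES = ["Data Scientist", "Data Analyst", "Big Data Engineer"]


def normalize_title(raw_title):
    """Map a noisy title to a canonical one if it contains that phrase."""
    cleaned = re.sub(r"\s+", " ", raw_title).strip()  # collapse whitespace
    for canonical in CANONICAL_TITLES:
        if canonical.lower() in cleaned.lower():
            return canonical
    return cleaned  # leave unrecognized titles as they are


# Made-up sample titles (not the scraped data).
raw_titles = [
    "Chief Data Scientist",
    "Lead Data Scientist",
    "Data Scientist",
    "Sr.  Data Analyst - Marketing",
    "Big Data Engineer (Hadoop)",
    "Software Developer",
]

title_counts = Counter(normalize_title(t) for t in raw_titles)
for title, count in title_counts.most_common():
    print(title, count)
```

A substring rule like this is deliberately crude: a title such as "Data Scientist / Data Analyst" would be counted under whichever canonical title is listed first, so the order of the list matters.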

I calculated the frequency by dividing the value_counts by the length of my job_title vector. The bar graph that gives the frequencies of the unique job titles is as follows:
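The frequency calculation amounts to dividing each count by the total number of posts. A small standard-library sketch is shown here; the `title_frequencies` helper and the sample titles are made up for this example and are not the scraped data.

```python
from collections import Counter


def title_frequencies(titles):
    """Return {title: share of all posts}, ordered from most to least common."""
    counts = Counter(titles)
    total = len(titles)
    return {title: count / total for title, count in counts.most_common()}


# Made-up sample of already cleaned job titles (assumption).
job_titles = [
    "Data Analyst",
    "Data Analyst",
    "Data Scientist",
    "Big Data Engineer",
]

frequencies = title_frequencies(job_titles)
for title, share in frequencies.items():
    print(f"{title}: {share:.2f}")
```

With a pandas Series, `value_counts(normalize=True)` returns the same ratios in one call.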

As can be seen from the graph, "Data Analyst" is the most common job title returned when "Data Scientist" is typed into the search box. Before plotting the graph I expected to see "Data Scientist" as the top title; however, there were more job posts that specifically include "Data Analyst" and "Big Data Engineer".

Part III - II. Number of Jobs by Company Name

After analyzing the data by job title, and since I had the company name, I wanted to see which companies posted the most jobs:
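Counting posts per company can be sketched as follows, again with the standard library only. The rows mimic what `csv.DictReader` would yield for the exported file; the `company` column name, the `top_companies` helper and the placeholder company names are assumptions for this example.

```python
from collections import Counter


def top_companies(rows, n=3):
    """Count job posts per company, skipping rows with no company name."""
    counts = Counter(
        row["company"].strip() for row in rows if row.get("company")
    )
    return counts.most_common(n)


# Made-up rows with placeholder names (not the scraped data).
rows = [
    {"company": "Company A"},
    {"company": "Company B "},  # trailing space, a typical bit of noise
    {"company": "Company A"},
    {"company": ""},            # missing company name
    {"company": "Company C"},
    {"company": "Company A"},
    {"company": "Company B"},
]

ranking = top_companies(rows, n=2)
for company, count in ranking:
    print(company, count)
```

Stripping whitespace before counting matters here: without it, "Company B " and "Company B" would be counted as two different companies.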

The company with the highest number of job posts is CyberCoders, since it is a permanent placement recruiting firm. Deloitte has the second highest job post count, which suggests that the company places high importance on this field.

Part III - III. Number of Jobs by Location

I scraped data science jobs in New York City and within a 30 mile radius of it. Naturally I expected to see the highest number in New York City itself, but I was also curious about the rest of the 30 mile radius. I grouped the data by location and plotted the job counts for the unique locations:

Part IV. Conclusion

In conclusion, the web scraping project did not go the way I wanted. However, I learned a lot from the experience.

First of all, the fields to scrape should have been chosen after more analysis, so that I would not end up with a dataset that included so much noise. Before scraping, I could have checked the fields I wanted and seen that they follow no pattern and contain a lot of noise, which made producing output more difficult.

Secondly, I should have analyzed the website more thoroughly before scraping. That way I could have found out early that Indeed would be difficult to scrape, which would have led me to choose another website sooner.

A positive outcome of the project is that I learned a lot from dealing with a noisy dataset. Since I could not plot anything with my categorical variables directly, I had to create some numerical variables for the plots, and creating those required a lot of data munging, which was a fruitful process for my learning.