Studying Data to Predict Housing Prices in Ames


Kaggle Competition: Housing Dataset from Ames, IA

Advanced Regression Techniques

by The Bench Initiative

- Eric Adlard

- Ryan Essner

- Sabbir Mohammed

The code for this project can be found here.

INTRODUCTION:

The Ames Housing dataset was compiled by Dean De Cock and is commonly used in data science education. It has 1460 observations with 79 explanatory variables describing (almost) every aspect of residential homes in Ames, Iowa. This dataset is part of an ongoing Kaggle competition which challenges you to predict the final price of each home. The goal of our project was to use supervised machine learning techniques to predict the sale price of each home in the dataset. It became clear that the high number of features, most of them categorical, was going to be a challenge. Our steps towards creating a highly accurate model were as follows:

- Data exploration and cleaning

- Feature engineering

- Modeling

1. Data exploration and cleaning

The dataset is complex and detailed, so this step required careful attention and a thorough examination of the many feature variables. The following table summarizes our work for this initial step:

Another view of our analysis is the following correlation plot of all the quantitative variables we identified. Although it may not provide directly actionable or inferential information, it aided our exploration of the data.

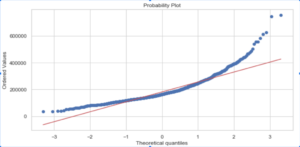

Sale price is the value we are looking to predict in our project, so it made sense to examine this variable first. The sale price exhibited a right-skewed distribution, which we corrected by taking the log. After the log transformation, the distribution was approximately normal, so we were no longer at odds with the normality assumption of linear regression (strictly, an assumption about the residuals rather than the response itself).
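As a rough illustration of the effect of the log transformation, the sketch below measures skewness before and after taking the log. It uses a synthetic log-normal sample as a stand-in for the real SalePrice column, so the numbers are illustrative only and are not the project's actual values.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-in for the SalePrice column: a right-skewed (log-normal) sample.
rng = np.random.default_rng(0)
sale_price = pd.Series(
    np.exp(rng.normal(loc=12.0, scale=0.4, size=1000)), name="SalePrice"
)

# Log-transform the target so that its distribution is closer to normal.
log_price = np.log(sale_price)

# Skewness near 0 indicates a roughly symmetric distribution.
skew_before = sale_price.skew()
skew_after = log_price.skew()
print(f"skewness before: {skew_before:.2f}, after: {skew_after:.2f}")

# Predictions made on the log scale are mapped back to prices with np.exp.
recovered = np.exp(log_price)
```

A model trained on the log of the price predicts log prices, so its output has to be exponentiated before it is read as a price.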

Sale Price without any transformations:

Sale Price with log transformation:

Missingness and imputation

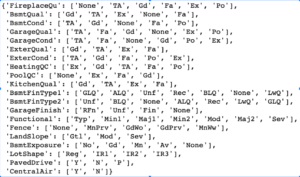

Next, we looked at missing values by feature in the train and test datasets. As the plot above shows, there was significant missingness across both. Most of the missing data corresponded to the absence of a feature. For example, the Garage features, mentioned in the above table, showed up as "NA" if the house did not have a garage. These were imputed as 0 or "None" depending on the feature type. Below is a breakdown of how we handled imputation across all the features.
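A minimal sketch of this kind of imputation, assuming pandas and a tiny made-up frame, is shown here. The column names follow the Kaggle data dictionary, but the rows and the choice of which columns to treat this way are hypothetical and not taken from the project code.

```python
import numpy as np
import pandas as pd

# Tiny hypothetical frame; NaN here means "the house does not have this feature".
df = pd.DataFrame({
    "GarageType": ["Attchd", np.nan, "Detchd"],
    "GarageArea": [548.0, np.nan, 240.0],
    "PoolQC": [np.nan, np.nan, "Gd"],
})

# Count missing values per feature, largest first.
missing_counts = df.isna().sum().sort_values(ascending=False)
print(missing_counts)

# Impute by feature type: "None" for categorical columns, 0 for numeric ones.
categorical_cols = ["GarageType", "PoolQC"]
numeric_cols = ["GarageArea"]
df[categorical_cols] = df[categorical_cols].fillna("None")
df[numeric_cols] = df[numeric_cols].fillna(0)
```

This only suits columns where a missing value really means absence; values that are missing for other reasons usually need a different strategy, such as a median or mode.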

2. Feature Engineering

The specific points that were addressed in our feature engineering are as follows:

- GarageYear → There was an observation with a GarageYear of 2207. After confirming with YearBuilt, we concluded that this should be changed to 2007.

- MSSubClass → Converted to string type, as this is really a categorical variable.

- Zero-inflated features (such as PoolArea, OpenPorchSF, EnclosedPorch, 3SsnPorch, ScreenPorch) → Converted to binary indicator features reflecting whether or not the feature exists for a given observation

- Ordinal categorical features were encoded as numerical values

- Nominal categorical features were dummified (one-hot encoded)

- Outliers → Removed observations with GrLivArea > 4500

- Standardized numerical features

- Log transformation applied to the response variable, "Sale Price"
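Several of the cleaning steps listed above can be sketched in pandas as follows. This is an illustration on made-up rows, not the project's code: the garage year column is assumed to be `GarageYrBlt` (its name in the Kaggle files), and `HasPool` is a hypothetical name for the indicator column.

```python
import pandas as pd

# Made-up rows that reproduce the situations described in the list above.
df = pd.DataFrame({
    "GarageYrBlt": [2003.0, 2207.0, 1976.0, 1999.0],
    "YearBuilt": [2003, 2006, 1976, 1999],
    "MSSubClass": [60, 20, 70, 60],
    "PoolArea": [0, 512, 0, 0],
    "GrLivArea": [1710, 1262, 5642, 1786],
})

# Correct the implausible garage year (2207 -> 2007).
df.loc[df["GarageYrBlt"] == 2207, "GarageYrBlt"] = 2007

# MSSubClass is a dwelling-type code, so store it as a string (categorical).
df["MSSubClass"] = df["MSSubClass"].astype(str)

# Zero-inflated feature -> 0/1 indicator (hypothetical column name).
df["HasPool"] = (df["PoolArea"] > 0).astype(int)

# Remove outliers with a very large above-ground living area.
df = df[df["GrLivArea"] <= 4500].reset_index(drop=True)
```

Standardization and the log transformation of the response are left out here because they are usually applied at modeling time.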

As a demonstration, the following is a snapshot of the encoding that was implemented on ordinal categorical features:
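One way such an encoding might look in pandas is sketched here. The quality scale is an assumption based on the dataset's data dictionary (Po, Fa, TA, Gd, Ex), with a hypothetical "None" level for absent features. The rows are made up and this is not the authors' code.

```python
import pandas as pd

# Made-up rows with one ordinal and one nominal feature.
df = pd.DataFrame({
    "ExterQual": ["Gd", "TA", "Ex", "Fa"],
    "Neighborhood": ["CollgCr", "Veenker", "CollgCr", "NoRidge"],
})

# Ordinal feature: map ordered quality labels to integers (assumed scale).
quality_scale = {"None": 0, "Po": 1, "Fa": 2, "TA": 3, "Gd": 4, "Ex": 5}
df["ExterQual"] = df["ExterQual"].map(quality_scale)

# Nominal feature: one 0/1 dummy column per category.
df = pd.get_dummies(df, columns=["Neighborhood"])
```

Mapping to integers preserves the ordering of the quality levels, while dummy columns avoid imposing an artificial order on neighborhoods.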

Newly Created Features:

As part of our feature engineering, we analyzed the set of features to craft new, additional features that combine information from existing variables, thus reducing model complexity. These include the following:

- PorchSF → Reflects the total square feet of all porch areas: OpenPorchSF + EnclosedPorch + 3SsnPorch + ScreenPorch

- TotalBath → Reflects the total number of baths the house has: BsmtFullBath + 0.5*BsmtHalfBath + FullBath + 0.5*HalfBath

- TotalSF → Total Square feet of house

- MultiFloor → New binary feature that reflects whether or not the house has multiple stories
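The new features above could be computed roughly as follows. PorchSF and TotalBath follow the formulas given in the list. The definitions of TotalSF (basement plus first and second floor) and MultiFloor (a non-zero second floor area) are assumptions made for this sketch, since the write-up does not spell them out. The rows are made up.

```python
import pandas as pd

# Two made-up houses using Kaggle column names.
df = pd.DataFrame({
    "OpenPorchSF": [61, 0], "EnclosedPorch": [0, 40],
    "3SsnPorch": [0, 0], "ScreenPorch": [0, 120],
    "BsmtFullBath": [1, 0], "BsmtHalfBath": [0, 1],
    "FullBath": [2, 1], "HalfBath": [1, 0],
    "TotalBsmtSF": [856, 1262], "1stFlrSF": [856, 1262], "2ndFlrSF": [854, 0],
})

# Total porch area across the four porch types.
porch_cols = ["OpenPorchSF", "EnclosedPorch", "3SsnPorch", "ScreenPorch"]
df["PorchSF"] = df[porch_cols].sum(axis=1)

# Total baths, counting each half bath as 0.5.
df["TotalBath"] = (
    df["BsmtFullBath"] + 0.5 * df["BsmtHalfBath"]
    + df["FullBath"] + 0.5 * df["HalfBath"]
)

# Assumed definition: basement + first floor + second floor area.
df["TotalSF"] = df["TotalBsmtSF"] + df["1stFlrSF"] + df["2ndFlrSF"]

# Assumed definition: a house is multi-floor if it has any second floor area.
df["MultiFloor"] = (df["2ndFlrSF"] > 0).astype(int)
```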

3. Data on Models

We approached the regression problem of predicting the final Sale Price of houses in Ames, IA using fundamental, supervised machine learning techniques. Namely, we used penalized regression techniques such as Ridge, Lasso, and their composite, ElasticNet. As an additional effort, we also attempted Stacking and Random Forest techniques in the hope of generating different results.

The general pipeline for building our models included:

- Standardization of numerical features

- Hyperparameter tuning using grid search with k-fold cross-validation

- Model selection with k-fold cross-validation, utilizing cross_val_score

- Retraining models on the entire training set, using the optimized hyperparameters
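The pipeline above can be sketched with scikit-learn as follows. This is a minimal example on synthetic data, assuming a Ridge model. The alpha grid, the number of folds and the scoring choice are illustrative assumptions rather than the settings used in the project.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic regression data standing in for the prepared housing features.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))
y = X @ rng.normal(size=10) + rng.normal(scale=0.1, size=200)

folds = KFold(n_splits=5, shuffle=True, random_state=0)

# Standardization lives inside the pipeline so it is refit on each training fold.
pipeline = make_pipeline(StandardScaler(), Ridge())

# Grid search over the penalty strength with k-fold cross-validation.
search = GridSearchCV(
    pipeline,
    param_grid={"ridge__alpha": np.logspace(-3, 3, 13)},
    scoring="neg_root_mean_squared_error",
    cv=folds,
)
search.fit(X, y)

# Cross-validated RMSE of the tuned model, for comparison against other models.
cv_rmse = -cross_val_score(
    search.best_estimator_, X, y,
    scoring="neg_root_mean_squared_error", cv=folds,
)
print(search.best_params_, cv_rmse.mean())

# With the default refit=True, best_estimator_ is already retrained on all of X, y.
final_model = search.best_estimator_
```

Keeping the scaler inside the pipeline prevents information from the validation folds leaking into the standardization step. The same structure applies to Lasso and ElasticNet by swapping the estimator and the parameter grid.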

The Ridge model performed best, with an RMSE of 0.12116 as reported by Kaggle, which computes the error on the logarithm of the sale price.
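To give a feel for the scale of this metric: the competition leaderboard scores submissions by RMSE between the logarithms of predicted and actual prices. The sketch below implements that calculation on made-up prices. The function name `rmse_log` is hypothetical.

```python
import numpy as np

def rmse_log(actual_prices, predicted_prices):
    """RMSE between the logs of predicted and actual prices."""
    log_error = np.log(predicted_prices) - np.log(actual_prices)
    return float(np.sqrt(np.mean(log_error ** 2)))

# Made-up example: every prediction is 10% too high.
actual = np.array([200_000.0, 150_000.0, 320_000.0])
predicted = actual * 1.10
score = rmse_log(actual, predicted)
print(round(score, 4))
```

Because the error is taken on the log scale, the score reflects relative rather than absolute error: being 10% off on an inexpensive house costs as much as being 10% off on an expensive one.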

"Reduced" Models

Model complexity was a real concern for a data set of this width. To make the results more interpretable and to address the potential for high variance caused by the large number of features, we built a reduced data set by removing features and keeping mainly the variables related to housing size and location. This modified data set included:

- 26 original features, plus new variables aggregating living area, number of bathrooms & porch areas (shown below)

- A final count of 88 feature variables including dummified variables

We built Ridge, Lasso and ElasticNet models on this reduced data set to test whether lowering the complexity of the data led to simpler results. We used k-fold cross-validation (k=3) to optimize our choice of hyperparameters. Visually, the number of features was still too high to reveal any insight into how the penalty shrinks the coefficients.
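When coefficient plots are too crowded to read, one numeric alternative is to count how many coefficients each penalty leaves non-zero. The sketch below does this with scikit-learn's `LassoCV` and `RidgeCV` using 3-fold cross-validation. The 88 synthetic features mirror the width of the reduced data set, but the data and the alpha grid are assumptions made for illustration.

```python
import numpy as np
from sklearn.linear_model import LassoCV, RidgeCV
from sklearn.preprocessing import StandardScaler

# Synthetic data: 88 features, of which only the first 5 carry signal.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 88))
true_coef = np.zeros(88)
true_coef[:5] = [3.0, -2.0, 1.5, 1.0, -1.0]
y = X @ true_coef + rng.normal(scale=0.5, size=300)

X_scaled = StandardScaler().fit_transform(X)

# Penalty strength chosen by 3-fold cross-validation for both models.
lasso = LassoCV(cv=3, random_state=0).fit(X_scaled, y)
ridge = RidgeCV(alphas=np.logspace(-3, 3, 13), cv=3).fit(X_scaled, y)

# Lasso sets some coefficients exactly to zero; Ridge only shrinks them.
lasso_kept = int(np.sum(lasso.coef_ != 0))
ridge_kept = int(np.sum(ridge.coef_ != 0))
print(f"non-zero coefficients: lasso={lasso_kept}, ridge={ridge_kept}")
```

Sorting the surviving Lasso coefficients by absolute value gives a compact list of the features the model relies on most.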

Ridge Regression Model:

Lasso Regression Model:

Predictably, however, in seeking the right balance in the bias-variance tradeoff by reducing complexity, we removed features that contained valuable predictive information. In essence, while we may have reduced variance, we increased bias and hurt model accuracy. As a result, the Root Mean Squared Error (RMSE) measured by Kaggle against the test data set increased significantly.

"Reduced" model performance:

CONCLUSIONS

This was a rewarding project. Participating in this Kaggle competition exposed us to the challenges of machine learning projects in general and, on a larger scope, to the mindset needed to approach data science problems.

Focusing on future efforts to improve our models in this project, or even to develop our own individual styles going forward, we have compiled a list of improvements. The lesson from the "reduced" data set is simply that we need to apply more refined feature engineering in order to work towards the optimal balance between bias and variance. The importance of domain expertise is something that also became very apparent.

Additionally, as we hone our craft and expand our skills, we would like to explore more models and different approaches to identify the best solution for this problem. We chose to keep our methods simple and robust in order to learn and to ensure our understanding, but applying newer methods and models may yield better results.

Another consideration, which would expand the scope of the problem and its solution, is to include and analyze external data on local policy changes and economic trends in the housing market specific to Ames, IA. Adding even more data, such as school zoning or transportation and commercial information, might produce models with more predictive power.

Thank you for viewing our project!

- We welcome all comments and suggestions -