Apple Podcasts Reviews of Politics/News-Oriented NPR Podcasts

Project GitHub | LinkedIn: Niki Moritz Hao-Wei Matthew Oren

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Apple Podcasts, the most popular podcast streaming platform on the web, used to provide very little analytics to the people hosting podcasts on it. As a result, a podcast’s user reviews and ratings became central to its marketing. Hosts and guests alike ask listeners to rate and review podcasts on iTunes, Stitcher, and wherever else they listen, in the hope that strong reviews will help sell ads.

That is no longer the case. In June 2017, Apple announced it would finally roll out a feature that lets podcast publishers track their own analytics. However, the information provided is still limited, and the practice of marketing based on user reviews and ratings is still in full effect.

National Public Radio doesn’t necessarily need to sell ads on its podcasts, but given how prolific the organization is in the digital audio industry, I thought it would be fun to scrape the user reviews for NPR’s news and politics podcasts. I then ran their contents through a little natural language processing to see whether the subjectivity and polarity of the reviews differed from show to show.

Python Library

I used a Python library called Selenium to scrape the website. I had to use Selenium because the page loaded as an infinite scroll: there were no page buttons to click. Instead, more reviews appeared the further down the page I scrolled.
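The post does not include the scraper itself, so the following is only a minimal sketch of how an infinite-scroll page is commonly handled with Selenium: scroll to the bottom, wait, and stop once the page height stops growing. The function names, the `pause` and `max_rounds` parameters, and the URL passed in are assumptions made for illustration, not the original code.

```python
import time


def scroll_to_bottom(driver, pause=1.0, max_rounds=50):
    """Scroll until the page height stops growing or max_rounds is reached.

    `driver` is any object with Selenium's `execute_script` method.
    Returns the final page height in pixels.
    """
    last_height = driver.execute_script("return document.body.scrollHeight")
    for _ in range(max_rounds):
        # Jump to the bottom so the page requests the next batch of reviews.
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        time.sleep(pause)  # give the new content time to load
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            break  # nothing new appeared, so assume the end was reached
        last_height = new_height
    return last_height


def fetch_fully_scrolled_html(url):
    """Hypothetical helper: open `url` in Chrome, scroll, return the HTML."""
    from selenium import webdriver  # imported here so the sketch loads without Selenium

    driver = webdriver.Chrome()
    try:
        driver.get(url)
        scroll_to_bottom(driver)
        return driver.page_source
    finally:
        driver.quit()
```

A fixed pause is the simplest option but can stop too early on a slow connection; raising `pause` or waiting for a specific element to appear would be more robust.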

For each review, I scraped the username, review title, review body, rating, and review date. I scraped reviews for NPR Politics, Here and Now, Fresh Air, Embedded, NPR News Now, 1A, Up First, and Latino USA.

I scraped a separate dataset for each podcast and then combined them into a single .csv file for analysis. I then preprocessed the data: I added common words from the reviews that had little to do with sentiment to a list of stop words, removed all punctuation, and lowercased every word.
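As a rough illustration of that preprocessing step, the sketch below lowercases a review, strips ASCII punctuation, and drops stop words using only the standard library. The stop-word lists are hypothetical placeholders, since the post does not list the words actually used, and the function name `preprocess` is likewise an assumption.

```python
import string

# Hypothetical stop words; the post does not list the ones actually used.
BASIC_STOP_WORDS = {"a", "an", "and", "the", "i", "is", "it", "this", "to", "of", "but"}
CUSTOM_STOP_WORDS = {"podcast", "npr", "show", "episode", "listen"}
STOP_WORDS = BASIC_STOP_WORDS | CUSTOM_STOP_WORDS

# Translation table that deletes every ASCII punctuation character.
_PUNCT_TABLE = str.maketrans("", "", string.punctuation)


def preprocess(text):
    """Lowercase, remove punctuation, and drop stop words from one review."""
    cleaned = text.lower().translate(_PUNCT_TABLE)
    return [word for word in cleaned.split() if word not in STOP_WORDS]


tokens = preprocess("I love this podcast, but the FEED is broken!")
print(tokens)  # ['love', 'feed', 'broken']
```

Note that `string.punctuation` covers ASCII characters only, so curly quotes and similar typographic marks in real reviews would need to be handled separately.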

I then created word clouds that showcased the most commonly used terms in the combined dataset and in each of the individual podcasts. I also determined the subjectivity and polarity of the combined dataset and of each individual podcast.

Also, just for fun, I did all the above for only the one-star reviews.
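The post does not say which tool produced the polarity and subjectivity scores. To make the two measures concrete, here is a deliberately simplified, lexicon-based sketch: each known word carries a polarity in [-1, 1] and a subjectivity in [0, 1], and a review's score is the average over the words found. The tiny lexicon, the record layout (`rating`, `body`), and the function names are all assumptions for illustration; a real analysis would rely on an established sentiment library rather than this toy scorer.

```python
from statistics import mean

# Hypothetical toy lexicon: word -> (polarity in [-1, 1], subjectivity in [0, 1]).
TOY_LEXICON = {
    "love": (0.5, 0.6),
    "great": (0.8, 0.75),
    "terrible": (-1.0, 1.0),
    "broken": (-0.4, 0.4),
}


def score_review(text, lexicon=TOY_LEXICON):
    """Return (polarity, subjectivity) averaged over the lexicon words found.

    Assumes `text` has already been stripped of punctuation.
    """
    hits = [lexicon[w] for w in text.lower().split() if w in lexicon]
    if not hits:
        return 0.0, 0.0  # no known words: treat as neutral and objective
    return mean(p for p, _ in hits), mean(s for _, s in hits)


def average_scores(reviews, lexicon=TOY_LEXICON):
    """Average the per-review scores over a list of review records."""
    scores = [score_review(r["body"], lexicon) for r in reviews]
    if not scores:
        return 0.0, 0.0
    return mean(p for p, _ in scores), mean(s for _, s in scores)


# Made-up records, only to show the one-star filter.
reviews = [
    {"rating": 1, "body": "love it but broken feed"},
    {"rating": 5, "body": "great"},
]
one_star = [r for r in reviews if r["rating"] == 1]
one_star_polarity, one_star_subjectivity = average_scores(one_star)
```

In this made-up example the single one-star review mixes "love" with "broken", so its averaged polarity lands slightly above zero, which shows how a low rating and mildly positive wording can coexist.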

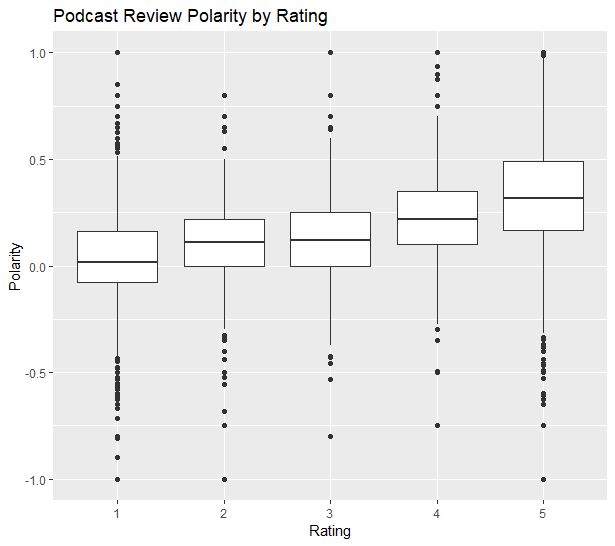

The most surprising insight I gleaned was that the one-star reviews for each of the podcasts were quite positive and objective. I attributed this to a few things. First, many of the one-star reviews gave such a low rating because of technical issues, such as podcasts being uploaded to the incorrect feeds. This would make sense given the average age of NPR’s audience, even its digital audience.

The other reason so many of the one-star reviews weren’t particularly negative is that they often included very positive language. For instance, the first one-star review on the day I scraped began with the words “I love this podcast”. Many of them would then go on to point out one very specific error that irked the reviewer.

All in all, if NPR wanted to advertise more on its podcasts, none of my findings suggest it would have a problem doing so. In fact, my findings might even bolster a potential ad sale.