The Ultimate Guide To Developing A Winning Trading Strategy With NLP Sentiment Analysis


With all the hype around generative AI sparked by the rapid adoption of ChatGPT, it’s no surprise that AI is starting to play a major role outside the tech sector. To stay ahead of the times, it’s crucial not only to understand the inner workings of neural networks, but also to find ways to apply them to gain insights, maintain reputations, and analyze trends.

In this blog post, we’ll explore the latter: we’ll use NLP sentiment analysis to devise a stock market trading strategy that aims to outperform traditional trading strategies. To broaden the scope, we’ll also apply the same methodology to look for correlations with other data, such as the housing market and GDP.

The Goal.

To create an accurate model, we first need a large corpus of reliably sentiment-labeled data on which to train the NLP sentiment classifier. For this, we used StockTwits. For those unfamiliar with it, StockTwits is a platform similar to Twitter, except that users can label their posts about companies as either bullish or bearish.

This data was obtained from Kaggle and initially contained 1.5M observations, which we reduced to 900K to balance the dataset.
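The post does not say how the 1.5M posts were cut down to 900K. One common way to balance a labeled corpus is random undersampling, where every class is downsampled to the size of the rarest one. The sketch below assumes a pandas DataFrame with a hypothetical `sentiment` column holding the StockTwits labels. It illustrates the idea and is not necessarily the procedure used here.

```python
import pandas as pd


def balance_by_undersampling(df: pd.DataFrame, label_col: str = "sentiment", seed: int = 0) -> pd.DataFrame:
    """Randomly downsample every class to the size of the rarest class."""
    n_min = df[label_col].value_counts().min()          # size of the smallest class
    parts = [group.sample(n=n_min, random_state=seed)    # n_min random rows per class
             for _, group in df.groupby(label_col)]
    # Concatenate and shuffle so the classes are interleaved.
    return pd.concat(parts).sample(frac=1, random_state=seed).reset_index(drop=True)


# Toy example: 6 bullish vs 2 bearish posts (hypothetical data).
posts = pd.DataFrame({
    "body": [f"post {i}" for i in range(8)],
    "sentiment": ["Bullish"] * 6 + ["Bearish"] * 2,
})
print(balance_by_undersampling(posts)["sentiment"].value_counts())
```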

The test data, 4.7M tweets about 19 companies from 2015 to 2023, was scraped from Twitter using Snscrape. To compare these tweets with real-world data, the companies’ stock prices were downloaded from Yahoo Finance using yfinance.

For non-stock comparisons, an additional 1.7M tweets were scraped on 9 different topics. Sentiment on these topics was then compared with real-world data from the US Bureau of Labor Statistics.

Preprocessing

As with any model, the data must be cleaned before it is passed through the model. The level of preprocessing depends on the model used.

For the traditional neural nets (RNN/CNN), each tweet was first converted to lowercase. Usernames, hashtags, websites, and special characters were also removed, as they carry little sentiment and would only have inflated the vocabulary (adding dimensionality) that the model has to handle. Stop words (e.g., or, and, are, you) were removed as well. Finally, the tweets were lemmatized, so that words such as studying, studies, and study were all reduced to the single word “study”.
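The cleaning steps above can be sketched with regular expressions. This is a minimal illustration, not the post’s code. The regex patterns and the tiny `STOP_WORDS` set are assumptions. Lemmatization (for example with NLTK’s `WordNetLemmatizer`) is left out so the sketch runs without extra downloads.

```python
import re

# Tiny illustrative stop-word list (assumption); real pipelines use a full list.
STOP_WORDS = {"or", "and", "are", "you", "the", "a", "an", "is", "to", "of"}

URL_RE = re.compile(r"https?://\S+|www\.\S+")
USERNAME_RE = re.compile(r"@\w+")
HASHTAG_RE = re.compile(r"#\w+")
NON_LETTER_RE = re.compile(r"[^a-z\s]")


def clean_tweet(text: str) -> list:
    """Lowercase, strip URLs/usernames/hashtags/special characters, drop stop words."""
    text = text.lower()
    text = URL_RE.sub(" ", text)
    text = USERNAME_RE.sub(" ", text)
    text = HASHTAG_RE.sub(" ", text)
    text = NON_LETTER_RE.sub(" ", text)  # removes digits, emoji, and any non a-z character
    return [tok for tok in text.split() if tok not in STOP_WORDS]


print(clean_tweet("@trader99 You are SO bullish on $TSLA!!! https://t.co/xyz #stocks"))
# prints ['so', 'bullish', 'on', 'tsla']
```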

The tweets were then passed through a spell-checking package to fix spelling errors. Next, the words were tokenized and vectorized into numerical form. So that every tweet entered the model with the same dimension, the vectorized tweets were pre-padded with 0’s and truncated at 31 tokens, since most tweets were shorter than 31 tokens after removing stop words, special characters, websites, and the like.
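Tokenizing, vectorizing, and pre-padding to a fixed length of 31 might look roughly like the following pure-Python sketch. Reserving id 0 for padding and 1 for out-of-vocabulary words is an assumption. So is keeping the first 31 tokens when a tweet is too long, since the post does not say which end was truncated. Keras’s `pad_sequences` pads at the front by default (`padding="pre"`) but also truncates from the front by default (`truncating="pre"`), so the two differ for long tweets.

```python
from collections import Counter

MAX_LEN = 31  # truncation length used in the post


def build_vocab(token_lists, min_count=1):
    """Map each word to an integer id, most frequent words first."""
    counts = Counter(tok for tokens in token_lists for tok in tokens)
    vocab = {"<PAD>": 0, "<OOV>": 1}  # assumed reserved ids
    for tok, count in counts.most_common():
        if count >= min_count:
            vocab[tok] = len(vocab)
    return vocab


def vectorize(tokens, vocab):
    """Replace each word by its id; unknown words map to <OOV>."""
    return [vocab.get(tok, vocab["<OOV>"]) for tok in tokens]


def pre_pad(ids, max_len=MAX_LEN, pad_id=0):
    """Keep the first max_len ids, then left-pad with pad_id up to max_len."""
    ids = ids[:max_len]
    return [pad_id] * (max_len - len(ids)) + ids


vocab = build_vocab([["bullish", "moon"], ["bearish", "moon"]])
print(pre_pad(vectorize(["bullish", "rocket"], vocab), max_len=5))  # prints [0, 0, 0, 3, 1]
```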

For the transformer models, usernames, hashtags, and websites also provided minimal value on their own, but their position in the tweet could add context. Therefore, instead of being removed, they were replaced with the placeholders <USERNAME>, <HASHTAG>, and <URL>. Special characters and uppercase words can signal a different context (e.g., emphasis), so they were kept.
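Here is a sketch of the placeholder substitution, assuming simple regex definitions of usernames, hashtags, and URLs (the post does not give its patterns). URLs are replaced first so that a `#` or `@` inside a link is not mistaken for a hashtag or username. Case and punctuation are left untouched.

```python
import re


def mask_entities(text: str) -> str:
    """Replace URLs, usernames, and hashtags with placeholder tokens."""
    text = re.sub(r"https?://\S+|www\.\S+", "<URL>", text)  # URLs first
    text = re.sub(r"@\w+", "<USERNAME>", text)
    text = re.sub(r"#\w+", "<HASHTAG>", text)
    return text


print(mask_entities("@jim_trader $AAPL to the moon!!! #bullish https://t.co/abc"))
# prints <USERNAME> $AAPL to the moon!!! <HASHTAG> <URL>
```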

The tweets then underwent subword tokenization, which is similar to the word tokenization used for the traditional nets but further breaks longer words down into subwords. For higher accuracy, <SOS> and <EOS> tokens were added to mark where each tweet starts and ends, respectively. An attention mask was also used to indicate which token positions hold real input and which hold padding, so that the model attends only to the former.
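The framing and masking step can be illustrated on an already subword-tokenized tweet. This sketch is hypothetical. It uses the post’s `<SOS>`/`<EOS>` names plus an assumed `<PAD>` token, and it pads at the end, as transformer inputs usually are. With Hugging Face tokenizers, you get the same result by calling the tokenizer with `padding="max_length"` and `truncation=True`, which returns `input_ids` and an `attention_mask`. RoBERTa’s own start and end tokens are `<s>` and `</s>`.

```python
SOS, EOS, PAD = "<SOS>", "<EOS>", "<PAD>"


def encode_with_mask(subwords, max_len):
    """Wrap subword tokens in <SOS>/<EOS>, pad to max_len, and build the attention mask.

    Mask value 1 = real token (attend), 0 = padding (ignore).
    """
    tokens = [SOS] + list(subwords[: max_len - 2]) + [EOS]  # leave room for SOS/EOS
    n_pad = max_len - len(tokens)
    mask = [1] * len(tokens) + [0] * n_pad
    return tokens + [PAD] * n_pad, mask


# A hypothetical WordPiece-style split of "bullish today".
tokens, mask = encode_with_mask(["bull", "##ish", "today"], max_len=6)
print(tokens)  # ['<SOS>', 'bull', '##ish', 'today', '<EOS>', '<PAD>']
print(mask)    # [1, 1, 1, 1, 1, 0]
```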

Models

We first approached the problem with traditional neural nets: a CNN, an LSTM, and a combination of the two, which reached validation accuracies of 0.71, 0.77, and 0.75, respectively. We then explored transformer models, specifically the BERT base model, FinBERT (BERT adapted to financial text), BERTweet (a BERT-style model pretrained on tweets), RoBERTa (a robustly optimized BERT), and a version of RoBERTa fine-tuned on StockTwits. These reached validation accuracies of 0.80, 0.84, 0.86, 0.85, and 0.88, respectively.

Convolutional Neural Networks (CNNs) are known for their ability to learn hierarchies of local patterns. While they’re traditionally used to classify or detect objects in images, they’re also effective at finding patterns in tasks such as speech recognition and sentiment analysis. They do this with three kinds of layers: convolutional layers that create feature maps, pooling layers that reduce their dimensionality, and fully connected layers that transform the pooled features into the output.
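To make the three layer types concrete, here is a forward pass of a tiny text CNN in NumPy. It applies a 1-D convolution that slides over word embeddings (acting like an n-gram detector), then global max pooling, then a fully connected layer with a sigmoid. The weights are random and every size is an assumption, since the post does not describe its architecture. In practice these layers would come from a framework, for example Keras’s `Conv1D`, `GlobalMaxPooling1D`, and `Dense`.

```python
import numpy as np


def conv1d_relu(embeddings, filters, bias):
    """Valid 1-D convolution over (seq_len, emb_dim) embeddings, followed by ReLU.

    filters: (n_filters, width, emb_dim) -> feature map (seq_len - width + 1, n_filters).
    """
    n_filters, width, _ = filters.shape
    n_windows = embeddings.shape[0] - width + 1
    fmap = np.empty((n_windows, n_filters))
    for t in range(n_windows):
        window = embeddings[t : t + width]  # (width, emb_dim): one n-gram
        fmap[t] = np.tensordot(filters, window, axes=([1, 2], [0, 1])) + bias
    return np.maximum(fmap, 0.0)


def cnn_forward(token_ids, emb_table, filters, conv_bias, w_out, b_out):
    """Embedding -> convolution -> global max pooling -> dense + sigmoid."""
    x = emb_table[token_ids]                    # (seq_len, emb_dim)
    fmap = conv1d_relu(x, filters, conv_bias)   # convolutional layer: feature maps
    pooled = fmap.max(axis=0)                   # pooling layer: (n_filters,)
    logit = pooled @ w_out + b_out              # fully connected layer
    return 1.0 / (1.0 + np.exp(-logit))         # probability of "bullish"


rng = np.random.default_rng(0)
vocab_size, emb_dim, n_filters, width, seq_len = 50, 8, 4, 3, 31
emb_table = rng.normal(size=(vocab_size, emb_dim))
filters = rng.normal(size=(n_filters, width, emb_dim))
p = cnn_forward(rng.integers(0, vocab_size, size=seq_len), emb_table, filters,
                np.zeros(n_filters), rng.normal(size=n_filters), 0.0)
print(f"P(bullish) with untrained weights: {p:.3f}")
```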

The model was trained for 5 epochs, but the gains diminished after the third. The CNN achieved an accuracy of 0.715 with a loss of 0.53.

We also approached the problem with Recurrent Neural Networks (RNNs). RNNs model sequences by recurrently updating a hidden state at each time step, which in principle lets them capture dependencies between words. However, backpropagating through many time steps repeatedly multiplies the gradient by the same recurrent weights, which can cause it to either explode or vanish. To combat this, we used a specific type of RNN.

Long Short-Term Memory (LSTM) networks keep the benefit of capturing long-term sequential dependencies while largely avoiding the vanishing-gradient problem. They do so using three gates (input, forget, and output), which control what information is stored, discarded, and output, respectively. The word vectors are still fed in sequentially, one time step at a time, so the model must process every word, including those of minimal or zero relevance.
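One LSTM time step can be written out in NumPy to show what the three gates do. This is a textbook formulation with an assumed gate ordering (input, forget, output, candidate) and random weights, not the trained model from the post. The additive cell update `c_t = f * c_prev + i * g` is what lets gradients flow across many steps without vanishing as quickly as in a plain RNN.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM step. W: (4H, D), U: (4H, H), b: (4H,), rows stacked as [i, f, o, g]."""
    H = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    i = sigmoid(z[0:H])          # input gate: what new information to store
    f = sigmoid(z[H:2 * H])      # forget gate: what old information to discard
    o = sigmoid(z[2 * H:3 * H])  # output gate: what to expose as the hidden state
    g = np.tanh(z[3 * H:4 * H])  # candidate cell content
    c_t = f * c_prev + i * g     # additive cell update
    h_t = o * np.tanh(c_t)
    return h_t, c_t


def lstm_encode(xs, W, U, b):
    """Run the cell over a sequence of word vectors and return the final hidden state."""
    H = U.shape[1]
    h, c = np.zeros(H), np.zeros(H)
    for x_t in xs:               # words are consumed one at a time
        h, c = lstm_step(x_t, h, c, W, U, b)
    return h


rng = np.random.default_rng(0)
D, H = 5, 3                      # hypothetical embedding and hidden sizes
h = lstm_encode(rng.normal(size=(7, D)), rng.normal(size=(4 * H, D)),
                rng.normal(size=(4 * H, H)), np.zeros(4 * H))
print(h)
```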

Training was stopped early at 5 epochs, after which further epochs provided no additional value. At that point, the highest validation accuracy achieved was 0.775, with a loss of 0.455.
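Early stopping can be expressed as a small rule on the validation loss. The sketch below assumes a `patience` of 2 epochs and uses made-up loss values, since the post does not state its stopping criterion. Keras implements the same idea as `tf.keras.callbacks.EarlyStopping(monitor="val_loss", patience=...)`.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return how many epochs run before validation loss stops improving for `patience` epochs."""
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best - min_delta:       # improvement: reset the counter
            best = loss
            epochs_without_improvement = 0
        else:                             # no improvement this epoch
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses)


# Illustrative (made-up) validation losses: best at epoch 3, stop after epoch 5.
print(early_stopping_epoch([0.62, 0.51, 0.47, 0.48, 0.49, 0.50]))  # prints 5
```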

Transformer models proved much more effective than the traditional neural nets, which we attribute to their self-attention mechanism. Unlike the traditional methods, where vectors are passed in sequentially, transformers process the whole sequence at once, and self-attention connects every input position directly to every output position. Each word is projected into a query, a key, and a value vector. The dot product of a word’s query with every word’s key, scaled by the square root of the key dimension, gives its attention scores, and a softmax normalizes these scores so they sum to 1. The output for that word is the resulting weighted sum of the value vectors. Together, these three vectors let the model compute attention weights for every word: words with little intrinsic value receive low weights, allowing the model to focus on the parts of the sequence with higher attention weights.
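In formula form this is softmax(Q Kᵀ / √d_k) V. The sketch below implements a single head of scaled dot-product self-attention in NumPy with random projection matrices `W_q`, `W_k`, and `W_v`. Real BERT-family models use several heads plus feed-forward and normalization layers, which are omitted here. The optional `mask` plays the role of the attention mask described in the preprocessing section: masked positions get a score of -1e9, so their weights after the softmax are effectively zero.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(X, W_q, W_k, W_v, mask=None):
    """Single-head scaled dot-product self-attention over X: (seq_len, d_model)."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)          # (seq_len, seq_len) query-key similarities
    if mask is not None:                     # 1 = real token, 0 = padding
        scores = np.where(mask[None, :] == 1, scores, -1e9)
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ V, weights


rng = np.random.default_rng(0)
X = rng.normal(size=(5, 8))                  # 5 tokens, model width 8 (hypothetical)
W_q, W_k, W_v = (rng.normal(size=(8, 4)) for _ in range(3))
out, weights = self_attention(X, W_q, W_k, W_v, mask=np.array([1, 1, 1, 0, 0]))
print(weights.round(3))
```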

With our final model, the StockTwits fine-tuned RoBERTa, accuracy increased substantially with each epoch. Unfortunately, the loss also increased with each epoch, which often indicates that a model is becoming overconfident on the examples it gets wrong. We achieved a validation accuracy of 0.8893 with a loss of 0.34.

Testing the Prediction

Once we had an accurate model, we wanted to make sure it responded sensibly to sentiment-bearing keywords. To do so, we passed in a simple phrase such as “Hell yeahhh ~ I'm feeling extra bullish today. Looks like them stock prices are shooting to the moon 😍”. This phrase received a score of 0.9984, i.e., a bullish sentiment. We then visualized the sentiment weight of each word along with the sentence’s composition weight, which combines the mean of the word weights and in this case is positive.

Similarly, we can generate predictions for a bearish phrase, such as “We're about to enter a recession 😭😭”. The model predicted a score of 0.0071, classifying the phrase as bearish. As before, the sentiment weight of each word can be calculated and their mean added to the sentence composition weight, which in this case is negative.
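The scores above are probabilities of the bullish class. The sketch below derives the score and label under two assumptions. First, the classifier’s head outputs two logits ordered as [bearish, bullish]; the actual label order depends on how the model was fine-tuned. Second, 0.5 is used as the decision threshold.

```python
import numpy as np


def bullish_probability(logits):
    """Softmax over two logits assumed to be ordered [bearish, bullish]."""
    z = np.asarray(logits, dtype=float)
    z = z - z.max()                  # numerical stability
    probs = np.exp(z) / np.exp(z).sum()
    return float(probs[1])


def label(score, threshold=0.5):
    """Map a bullish probability to a sentiment label."""
    return "Bullish" if score >= threshold else "Bearish"


print(label(0.9984), label(0.0071))  # prints Bullish Bearish
```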

Developing the trading strategy.

The next step was to use the model to classify the 4.7M scraped tweets about the 19 companies. To visualize the sentiment over time, we plot its long-term and short-term moving averages (MAs) over 200 days and 50 days, respectively. When the short-term MA crosses above the long-term MA (a golden cross), it indicates a bullish market, and the sentiment is colored green. When the opposite occurs, a death cross is observed, indicated in the chart in red.
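The crossover logic can be sketched with pandas. The sketch assumes `daily_sentiment` is a Series of daily mean sentiment scores, for example obtained with `scores.resample("D").mean()` on date-indexed per-tweet scores. The function name and column names are hypothetical. A cross is flagged only once the long-term MA exists on two consecutive days, so the warm-up period does not produce spurious signals.

```python
import pandas as pd


def sentiment_crosses(daily_sentiment: pd.Series, short_window: int = 50, long_window: int = 200) -> pd.DataFrame:
    """Flag golden and death crosses of the short- vs long-term sentiment MA."""
    ma_short = daily_sentiment.rolling(short_window).mean()
    ma_long = daily_sentiment.rolling(long_window).mean()
    bullish = ma_short > ma_long                     # green regime when True, red when False
    prev = bullish.shift(1, fill_value=False)
    # Only compare days where the long MA exists today and yesterday.
    valid = ma_long.notna() & ma_long.shift(1).notna()
    return pd.DataFrame({
        "ma_short": ma_short,
        "ma_long": ma_long,
        "bullish": bullish,
        "golden_cross": bullish & ~prev & valid,     # short MA crosses above long MA
        "death_cross": ~bullish & prev & valid,      # short MA crosses below long MA
    })


# Synthetic sentiment: flat, then rising, then falling (hypothetical data).
values = [0.5] * 250 + [0.9] * 100 + [0.1] * 100
signals = sentiment_crosses(pd.Series(values))
print(signals.index[signals["golden_cross"]].tolist(), signals.index[signals["death_cross"]].tolist())
```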

To measure the relationship between sentiment and stock price, we used Granger causality, which tests whether lagged values of one time series (here, sentiment) help predict another (the stock price) beyond what the latter’s own lagged values already explain. For most companies, we obtained a Granger causality coefficient between 0.75 and 0.90. We then used this relationship to devise a sentiment-based trading strategy following the same methodology as a traditional trading strategy.
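The post does not explain how its Granger causality coefficient was computed, so the sketch below shows the standard test statistic instead. It fits two least-squares regressions of `y` on its own `p` lags, with and without `p` lags of `x`, and returns the F-statistic. Larger values mean the lags of `x` add more predictive power. In practice the test should be run on stationary series (for example daily returns rather than raw prices). statsmodels provides a full implementation with p-values in `statsmodels.tsa.stattools.grangercausalitytests`.

```python
import numpy as np


def lagged(series, p):
    """Matrix whose columns are series[t-1], ..., series[t-p] for t = p .. n-1."""
    n = len(series)
    return np.column_stack([series[p - k : n - k] for k in range(1, p + 1)])


def residual_ss(X, target):
    """Residual sum of squares of an ordinary least-squares fit."""
    coef, *_ = np.linalg.lstsq(X, target, rcond=None)
    return float(np.sum((target - X @ coef) ** 2))


def granger_f_stat(y, x, p=1):
    """F-statistic for 'lags of x help predict y beyond y's own lags'."""
    y, x = np.asarray(y, dtype=float), np.asarray(x, dtype=float)
    target = y[p:]
    ones = np.ones((len(target), 1))
    X_restricted = np.hstack([ones, lagged(y, p)])            # y's own lags only
    X_unrestricted = np.hstack([X_restricted, lagged(x, p)])  # plus lags of x
    rss_r = residual_ss(X_restricted, target)
    rss_u = residual_ss(X_unrestricted, target)
    df_denom = len(target) - X_unrestricted.shape[1]
    return ((rss_r - rss_u) / p) / (rss_u / df_denom)


rng = np.random.default_rng(42)
x = rng.standard_normal(500)                                     # e.g. daily sentiment changes
y = np.r_[0.0, 0.8 * x[:-1] + 0.1 * rng.standard_normal(499)]  # driven by yesterday's x
print(granger_f_stat(y, x, p=1))                                 # a large F-statistic
```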

We then created buy and sell signals: buy at the start of a bullish market and sell at the start of a bearish market. From these signals, we computed the profit and win rate and compared them with the conventional RSI trading strategy. For more than half of the companies observed, the sentiment strategy outperformed the traditional method. Of the remaining companies, about a quarter performed roughly on par with the traditional strategy.
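A minimal backtest of the regime signals, together with one common version of the RSI baseline, might look like this. Everything here is an assumption for illustration, and the post does not specify its RSI parameters:

- trades are filled at the closing price of the signal day;
- an open position at the end is ignored;
- the RSI uses a 14-day period with Wilder-style smoothing, approximated by pandas’ `ewm(alpha=1/period)`;
- the baseline buys when RSI drops below 30 and exits when it rises above 70.

```python
import numpy as np
import pandas as pd


def backtest_regime(prices: pd.Series, bullish: pd.Series):
    """Buy when `bullish` flips False -> True, sell when it flips True -> False.

    Returns (list of per-trade returns, win rate). An open position at the end is ignored.
    """
    trades, entry, prev = [], None, False
    for price, is_bull in zip(prices, bullish):
        if is_bull and not prev:                           # start of bullish regime: buy
            entry = price
        elif not is_bull and prev and entry is not None:   # start of bearish regime: sell
            trades.append(price / entry - 1.0)
            entry = None
        prev = bool(is_bull)
    win_rate = float(np.mean([r > 0 for r in trades])) if trades else float("nan")
    return trades, win_rate


def rsi(prices: pd.Series, period: int = 14) -> pd.Series:
    """Relative Strength Index with Wilder-style (alpha = 1/period) smoothing."""
    delta = prices.diff()
    gain = delta.clip(lower=0.0)
    loss = (-delta).clip(lower=0.0)
    avg_gain = gain.ewm(alpha=1 / period, adjust=False, min_periods=period).mean()
    avg_loss = loss.ewm(alpha=1 / period, adjust=False, min_periods=period).mean()
    rs = avg_gain / avg_loss
    return 100 - 100 / (1 + rs)


def rsi_regime(prices: pd.Series, period: int = 14, low: float = 30, high: float = 70) -> pd.Series:
    """Hold after RSI falls below `low` (oversold); exit after it rises above `high`."""
    r = rsi(prices, period)
    state = pd.Series(np.nan, index=prices.index)
    state[r < low] = 1.0
    state[r > high] = 0.0
    return state.ffill().fillna(0.0).astype(bool)
```

Because `rsi_regime` returns a boolean Series of the same shape as the sentiment regime, both strategies can be scored with the same `backtest_regime` call.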

To conclude, rather than replacing traditional trading strategies outright, the sentiment strategy can be seen as a useful complement to them. In other words, it can boost our confidence in the buy and sell signals generated by conventional methods.

Correlation Outside of Stocks.

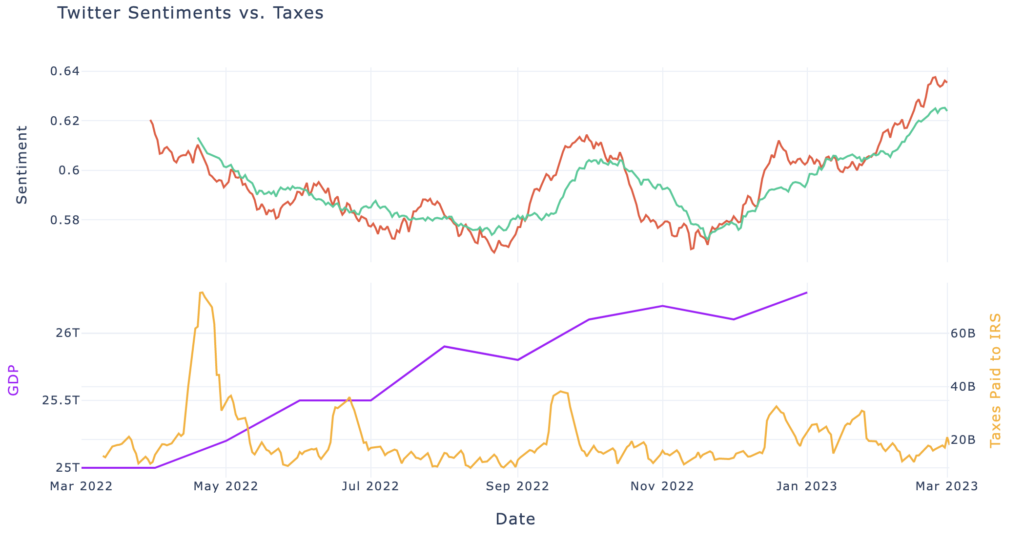

Aside from stock data, we wanted to test how well our sentiment predictions track other real-world data. To do this, we ran the model on the 1.7M tweets scraped on 9 topics and compared the results with data from the US Bureau of Labor Statistics.

The plots show a clear correlation between our predicted sentiment and the real-world data across time steps. As with the stocks, we computed a Granger causality coefficient for each topic and often found a strong relationship between the two series. For instance, the plot shown above has causality coefficients of 0.94 for GDP and 0.96 for taxes paid to the IRS.
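The post does not say how daily sentiment was aligned with the lower-frequency official statistics. One simple option, sketched below under that assumption, is to average sentiment over each period and join it with the official series by date before computing a Pearson correlation. This is a plain correlation, not the Granger causality coefficient reported above. The monthly frequency `"MS"` (month start) is an assumed default; quarterly data such as GDP would use `"QS"` instead.

```python
import pandas as pd


def align_and_correlate(daily_sentiment: pd.Series, official: pd.Series, freq: str = "MS"):
    """Average daily sentiment per period, join with an official series, and correlate.

    Both inputs need a DatetimeIndex; `official` is assumed to be dated at the
    start of each period (e.g. 2020-01-01 for January 2020).
    """
    sentiment = daily_sentiment.resample(freq).mean()
    joined = pd.concat({"sentiment": sentiment, "official": official}, axis=1, join="inner").dropna()
    return joined, joined["sentiment"].corr(joined["official"])


# Hypothetical example: sentiment that rises month by month alongside an official index.
days = pd.date_range("2020-01-01", "2020-12-31", freq="D")
daily = pd.Series(days.month / 12.0, index=days)
months = pd.date_range("2020-01-01", periods=12, freq="MS")
official = pd.Series(range(1, 13), index=months, dtype=float)
joined, corr = align_and_correlate(daily, official)
print(len(joined), round(corr, 3))
```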