Data Study on Home Depot Product Search Term Relevance


Introduction:

Machine learning technology has opened a world of possibilities that I find fascinating. Thanks to innovations in neural networks, some machine learning algorithms can now outperform humans at tasks like image classification (which will be a later project). I believe that a well-trained model may be able to understand and better serve humanity.

Natural Language Processing (NLP) struck me as a very interesting and challenging topic. It is also a very useful tool to have, since we will be trying to model human behavior most of the time. This project is actually a Kaggle challenge, Home Depot Product Search Relevance. Although the competition has already closed, I intentionally chose it to practice my NLP skills and try different methods.

Before starting, there is one more important thing to note: almost all of the top-tier competitors in this challenge cleaned words and extracted features manually, and I am not going to do so. Because I want to test how the algorithm performs in an NLP project, I want to let the algorithm pick up the features by itself. Thus, I will not do any of the dirty work to help the algorithm.

The entire project can be found on my Github repository at: https://github.com/Jian-Qiao/Home-Depot-Product-Search-Relevance-

Project Summary:

This challenge was held by Home Depot on Kaggle.com. Home Depot wants to build a more versatile search recommendation system to support its online sales. By matching customers' search terms against product attributes and descriptions, it becomes easier for a customer to find the right product. The selection process improves, and customers are more likely to make a purchase.

Data:

The project’s data contains three parts:

- product_description.csv contains text descriptions for 124,428 different product ids. It has 2 columns: product_uid, description.

- attributes.csv contains a total of 2,044,803 unstructured attributes for 86,263 different product ids. It has 3 columns: product_uid, name (attribute name), value (attribute value).

- train.csv contains 74,067 search_term and product matches scored manually by Home Depot employees. It has 5 columns: id, product_uid, product_title, search_term, relevance.

Validation:

Validation is based on another data file, test.csv, which holds another 166,693 matches with the same structure as train.csv, minus the relevance column.

Hands on:

Data Select:

For the data part, both attributes.csv and description.csv contain almost the same information. Initially, I chose attributes.csv, thinking that because it appears more structured, it would be easier to compare different attributes.

However, after doing some data cleaning, I discovered that using attributes.csv would be a mistake because the name column is very messy. It has 5,410 different attribute names, with typos, stray spaces, and duplicates. Even if I could clean up the attribute values, I would still need to clean the names and cluster them together, and that doesn't even take care of the duplicated attributes. So, I quickly abandoned attributes.csv and went with description.csv instead.

Data Cleaning:

Having decided to go with description.csv, there is more to be concerned about. As with any Natural Language Processing project, the first step is cleaning the data.

Basically what this piece of code does is:

- Transform words into a workable type.

- Stem the words.

- Remove the word if it's a stop word (common words that don't affect the content of the sentence, like: "the", "a" ...).

- Remove punctuation.

- Singularize all words.

- In the data set, 'x' is used as a multiplication sign and 'in' stands for 'inch.' They are not needed for the model to understand the sentence content, so I removed them.
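The steps in the list above can be sketched roughly as follows. This is a minimal illustration, not the author's code: the stop word list is a tiny hand-picked sample, and `singularize` is a crude suffix rule standing in for a proper stemmer and singularizer. The names `STOP_WORDS`, `UNIT_NOISE`, `singularize`, and `clean_text` are all hypothetical.

```python
import string

# Assumption: a tiny hand-picked stop word list; a real project would use a
# fuller list, for example one shipped with an NLP library.
STOP_WORDS = {"the", "a", "an", "is", "this", "it", "and", "of", "with", "to"}

# Tokens the article treats as noise: 'x' (multiplication sign), 'in' (inch).
UNIT_NOISE = {"x", "in"}

# Map every punctuation character to a space so words stay separated.
PUNCT_TO_SPACE = str.maketrans(string.punctuation, " " * len(string.punctuation))


def singularize(word):
    """Crude plural stripping; a stand-in for a real stemmer/singularizer."""
    if len(word) > 3 and word.endswith("ies"):
        return word[:-3] + "y"
    if len(word) > 3 and word.endswith("s") and not word.endswith("ss"):
        return word[:-1]
    return word


def clean_text(text):
    """Lowercase, strip punctuation, drop stop words and unit noise, singularize."""
    tokens = str(text).lower().translate(PUNCT_TO_SPACE).split()
    kept = [t for t in tokens if t not in STOP_WORDS and t not in UNIT_NOISE]
    return [singularize(t) for t in kept]


print(clean_text("The 2 in. x 4 in. wooden Chairs!"))
# ['2', '4', 'wooden', 'chair']
```

Returning a list of tokens rather than a joined string keeps the later word-matching steps simple.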

But there are still typos in the data set. A human may be able to recognize a typo, but a machine will treat it as a new word, which will bias the model.

Spelling Errors

So, later on in this project, I did some research on how to correct spelling errors. Luckily, I found a post explaining how Google's spelling correction algorithm works, and I implemented that code in my project.
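One widely read write-up of this kind of corrector is Peter Norvig's essay "How to Write a Spelling Corrector"; whether that is the post used here is an assumption. The sketch below follows the same general idea in a reduced form: generate every string one edit away from the unknown word and pick the candidate that occurs most often in a known vocabulary. The toy vocabulary and the function names are illustrative only.

```python
from collections import Counter


def edits1(word):
    """All strings one edit (delete, transpose, replace, insert) away from `word`."""
    letters = "abcdefghijklmnopqrstuvwxyz"
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [left + right[1:] for left, right in splits if right]
    transposes = [left + right[1] + right[0] + right[2:]
                  for left, right in splits if len(right) > 1]
    replaces = [left + c + right[1:] for left, right in splits if right for c in letters]
    inserts = [left + c + right for left, right in splits for c in letters]
    return set(deletes + transposes + replaces + inserts)


def correct(word, word_counts):
    """Return the most frequent known word within one edit of `word`."""
    if word in word_counts:
        return word
    candidates = [w for w in edits1(word) if w in word_counts]
    if not candidates:
        return word  # nothing known nearby; leave the word untouched
    # Break ties alphabetically so the result is deterministic.
    return max(candidates, key=lambda w: (word_counts[w], w))


# Hypothetical vocabulary; in practice it would be counted from the
# cleaned product descriptions.
word_counts = Counter("chair chair chair table lamp lamp hammer".split())
print(correct("chiar", word_counts))  # chair
print(correct("lamps", word_counts))  # lamp
```

A corrector like this can only map a typo onto words it has already seen, so the quality of the vocabulary matters as much as the edit logic.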

I applied these 2 functions to both my training data set and the description data set. Now I have a cleaner and better data set on hand for modelling.

Train_vec Data Set

Let's take a look at my train_vec data set:

ML Algorithm:

First Attempt (TF-IDF):

For Natural Language Processing, TF-IDF is a very common technique. Although it has some downsides, my mentor Luke suggested that I try it first. The experience of implementing TF-IDF will help me choose or improve on algorithms in the future.

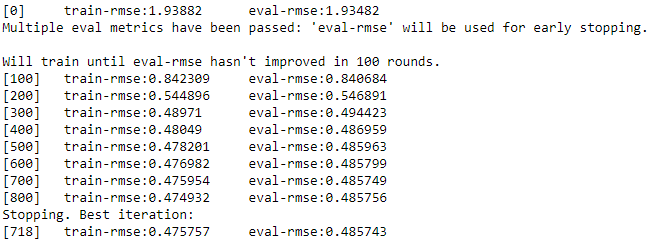

Then I fed the TF-IDF matrix into an xgboost model (the xgboost package for Python takes some effort to install):

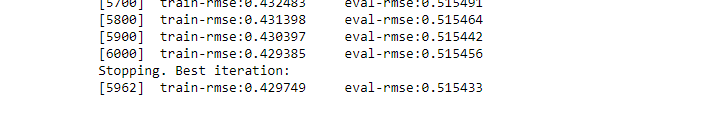

After 3 hours:

Problems

The result is not very satisfying. There are also a couple of problems that come from using TF-IDF in this project:

- TF-IDF weighs the frequency of each word. Sending the matched data set into the model is like saying, "If the search term is exactly like this, and the product description is exactly like this, the relevance will be about this." That ties the model closely to our training data set: the test set will contain new search_term-description combinations, and our model won't know what to do with them.

- TF-IDF keeps a limited number of words. In my example, I took 400 words from the descriptions and 200 words from search_term. We know there are far more words in the complete data set. What if the model encounters words that are not in the TF-IDF matrix? Again, our algorithm would just play dumb.

- It is extremely slow. Working with that gigantic matrix is not just costly but also painful.
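The limited-vocabulary problem from the list above can be shown with a small pure-Python TF-IDF. This is only a sketch under assumptions: the `fit_tfidf` and `transform` helpers are hypothetical, the IDF uses a common smoothed form, and the cap of 2 terms stands in for the 400 and 200 word limits mentioned above.

```python
import math
from collections import Counter


def fit_tfidf(docs, max_features):
    """Learn a capped vocabulary and IDF weights from tokenized documents."""
    doc_freq = Counter(token for doc in docs for token in set(doc))
    # Keep only the terms found in the most documents (ties broken alphabetically).
    ranked = sorted(doc_freq.items(), key=lambda kv: (-kv[1], kv[0]))
    vocab = [term for term, _ in ranked[:max_features]]
    n_docs = len(docs)
    # Smoothed inverse document frequency.
    idf = {t: math.log((1 + n_docs) / (1 + doc_freq[t])) + 1 for t in vocab}
    return vocab, idf


def transform(doc, vocab, idf):
    """Turn one tokenized document into a TF-IDF vector over `vocab`."""
    counts = Counter(doc)
    return [counts[t] * idf[t] for t in vocab]


docs = [["black", "chair"], ["wooden", "chair"], ["steel", "hammer"]]
vocab, idf = fit_tfidf(docs, max_features=2)
print(vocab)                               # ['chair', 'black']
print(transform(["hammer"], vocab, idf))   # every entry is zero
```

A search for "hammer" produces an all-zero vector because the word fell outside the capped vocabulary, which is the "play dumb" case: the model receives no signal at all for that query.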

So, I needed to find an alternative way to work on this project.

Second Attempt (My Own Relevance Index):

Given how the TF-IDF algorithm works, I need something that directly compares the search_term with the corresponding product description.

After thinking about this issue, I decided to write my own function to replace the TF-IDF algorithm:

It finds how many times each word in a product description appears in the corresponding search_term and takes the average of those counts.

As a search_term is highly unlikely to contain duplicated words, the count for each word is most likely to be either 1 or 0.

The reason I wrote this function is that I want to check how relevant the search_term is to a specific product description. For example:

search_term:"Chair"

Product Description 1 :"This is a wooden furniture set, including one dinner desk and 4 chairs"

Product Description 2 :"This is a black chair with only 2 legs. It's a very cool chair because it's so different from normal 4 leg chairs."

After cleaning the data:

Product Description 1 :"wooden furniture set includes one dinner desk 4 chairs." My function gives:0.11111

Product Description 2 :"black chair only 2 legs very cool chair because differ normal 4 leg chair." My function gives: 0.21428
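The two numbers above can be reproduced with a short function. This is a sketch of the index as described in the text, not the author's original code; `relevance_index` is a hypothetical name, and the inputs are assumed to be fully cleaned and singularized already (so "chairs" has become "chair").

```python
def relevance_index(search_tokens, description_tokens):
    """Average, over description words, of each word's count in the search term."""
    if not description_tokens:
        return 0.0
    hits = [search_tokens.count(word) for word in description_tokens]
    return sum(hits) / len(description_tokens)


search = ["chair"]
desc_1 = "wooden furniture set include one dinner desk 4 chair".split()
desc_2 = "black chair only 2 leg very cool chair because differ normal 4 leg chair".split()

print(relevance_index(search, desc_1))  # 1 match out of 9 words  = 1/9
print(relevance_index(search, desc_2))  # 3 matches out of 14 words = 3/14
```

Description 2 scores higher because a larger share of its words match the search term, which is the behaviour the example is meant to show.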

I also created another function for checking the relevance of the product description to the search_term.

This is basically the same idea as the last one, except that it adds another function called 'shrink'.

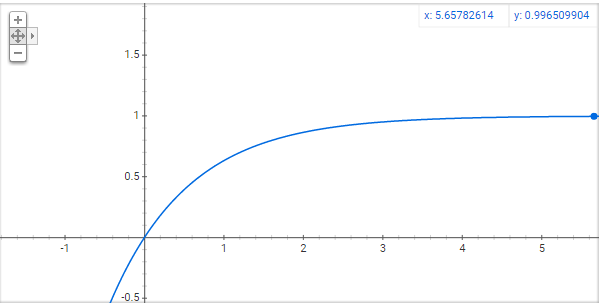

The reason for 'shrink' is that I don't want the frequency of one particular word to dominate the index for the entire sentence. So I need a transformation that passes through the origin and increases monotonically with diminishing increments. That would look like this:

And y = 1 - 1/exp(x) is exactly the transformation I wanted.

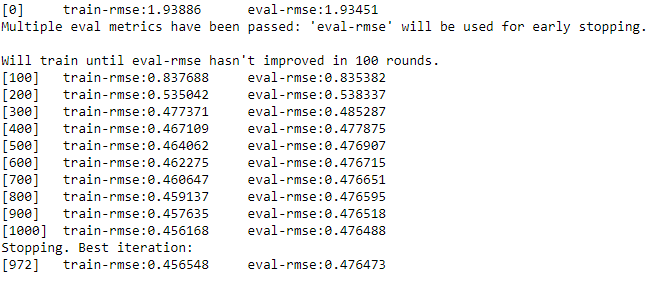
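As a rough sketch of how 'shrink' could plug into the second index: the text does not spell out exactly how the counts are combined, so `shrunk_relevance` below is one plausible reading (average the shrunk description counts over the search words), and both function names are assumptions.

```python
import math


def shrink(x):
    """y = 1 - 1/exp(x): 0 at x = 0, always increasing, flattening toward 1."""
    return 1.0 - math.exp(-x)


def shrunk_relevance(search_tokens, description_tokens):
    """Average, over search words, of the shrunk count of each word in the description."""
    if not search_tokens:
        return 0.0
    total = sum(shrink(description_tokens.count(word)) for word in search_tokens)
    return total / len(search_tokens)


# Each extra occurrence adds less than the one before it.
for count in range(4):
    print(count, round(shrink(count), 4))

desc = "black chair only 2 leg very cool chair because differ normal 4 leg chair".split()
print(shrunk_relevance(["chair"], desc))           # three matches, still below 1
print(shrunk_relevance(["chair", "hammer"], desc))  # unmatched word halves the score
```

Because the output is capped below 1, a description that repeats one search word many times cannot outweigh a description that matches every search word once.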

I used my functions to check both the product title and the product description (of course, if the title matches the search_term, that indicates high relevance), and then trained an xgboost model on the resulting features.

After replacing TF-IDF with my own functions, the result improved a lot.

Third Attempt (Cosine Distance):

Although TF-IDF by itself does not perform very well in this project, my mentor Luke showed me another way to use it here: Cosine Distance.

The idea is that, after the TF-IDF transformation, we treat each entry as a vector and calculate the Cosine Distance between the vector of the search_term and the vector of the product_description. The more relevant the two are, the smaller their Cosine Distance should be.
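A minimal sketch of that comparison is shown below. For brevity the vectors here hold raw word counts, where the project uses TF-IDF weights; the cosine calculation itself is the same either way. The helper names are hypothetical.

```python
import math
from collections import Counter


def cosine_similarity(vec_a, vec_b):
    """Cosine of the angle between two sparse vectors stored as dicts."""
    dot = sum(weight * vec_b.get(term, 0.0) for term, weight in vec_a.items())
    norm_a = math.sqrt(sum(w * w for w in vec_a.values()))
    norm_b = math.sqrt(sum(w * w for w in vec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty vector shares nothing with anything
    return dot / (norm_a * norm_b)


def cosine_distance(vec_a, vec_b):
    """Distance is 1 minus similarity: 0 means same direction, 1 means no overlap."""
    return 1.0 - cosine_similarity(vec_a, vec_b)


search = Counter(["black", "chair"])
close = Counter(["black", "chair", "leg"])
far = Counter(["steel", "hammer"])

print(cosine_distance(search, close))  # small: most words are shared
print(cosine_distance(search, far))    # 1.0: no words in common
```

The resulting distance is a single number per search_term and description pair, so it can be added as one more feature column for the xgboost model.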

With the Cosine Distance added to my algorithm, the RMSE dropped a little.

Fourth Attempt (Add product_uid):

Then I went back to Kaggle.com to read other people's work. Interestingly, they tend to include product_uid in their training models. Theoretically speaking, a product's id code shouldn't play a part in how relevant that item is to a customer's search_term. In practice, however, adding a product_uid column does help improve the result.

Conclusion:

The first thing I learnt in this project: TF-IDF cannot always be the go-to solution for Natural Language Processing projects. In this project, for example, TF-IDF is not just costly but also very limited. TF-IDF works best on something like an article with a large pool of words, so it is not very useful here, where a search_term has only a couple of words. What if a word has a typo? What if a word is not in the TF-IDF matrix? These 2 questions are not significant in an article, since typos and uncommon words make up only a small portion of the entire content. But in this particular project, 2 or 3 words control the meaning of the entire search_term.

Later on, after I added my own feature extraction index and other supporting methods, the algorithm ran much faster and performed much better. But a typo in the search_term can still distort its entire meaning. Although I added a typo correction algorithm to the data cleaning step, the machine still cannot operate like a human would.

Future

So we come back to the idea of how important manual data cleaning and feature extraction are in an NLP project. My conclusion is that they are still vital. The winners' methods in this competition have something in common: a big team doing manual data cleaning and feature extraction. They also stacked many different models together to improve accuracy. Although stacking improves the model a little, it is not an efficient approach and is only viable when working with a static data set like this one.

Furthermore, a neural network is said to be able to automatically extract and select features from the training data set and to outperform other algorithms in a wide variety of tasks. Thus, I will not spend more time on manual data cleaning. Instead, I am moving on to my next project, which is a very interesting one: building a Facial Expression Recognition model using a CNN in TensorFlow.