Higgs Boson Kaggle Case Studies

Contributed by Ruonan Ding, Frank Wang and Joseph Wang. They are currently in the NYC Data Science Academy 12-week full-time Data Science Bootcamp program taking place from January 11th to April 1st, 2016. This post is based on their fourth class project, Machine Learning (due in the 8th week of the program).

Introduction

Particle physicists explore the fundamental forces and the origin of mass in the universe. They use particle accelerators to collide energetic particles and detect the subatomic particles (particles smaller than an atom) that are produced. The ATLAS detector at CERN's Large Hadron Collider was built to search for the mysterious Higgs boson, which is responsible for generating the masses of particles. The Higgs boson is named after particle physicist Peter Higgs, who, along with five other physicists, predicted the existence of such a particle in 1964.

On 4 July 2012, the ATLAS and CMS teams announced that they had each observed a new particle in the mass region around 126 GeV. This particle is consistent with the Higgs boson predicted by the Standard Model. The 2013 Nobel Prize in Physics was awarded jointly to François Englert and Peter Higgs for their theoretical discovery of the Higgs mechanism, the origin of mass of subatomic particles.

The production of a Higgs particle is an extremely rare event: one is produced only once every few trillion collisions, so it takes years to find this particle. It is a challenge for physicists to analyze such massive data with such an extremely weak signal.

Machine learning was proposed for this competition to explore how much the significance of the signal can be improved. This could speed up the discovery of true signals and save particle physicists an enormous number of hours.

Data Engineering

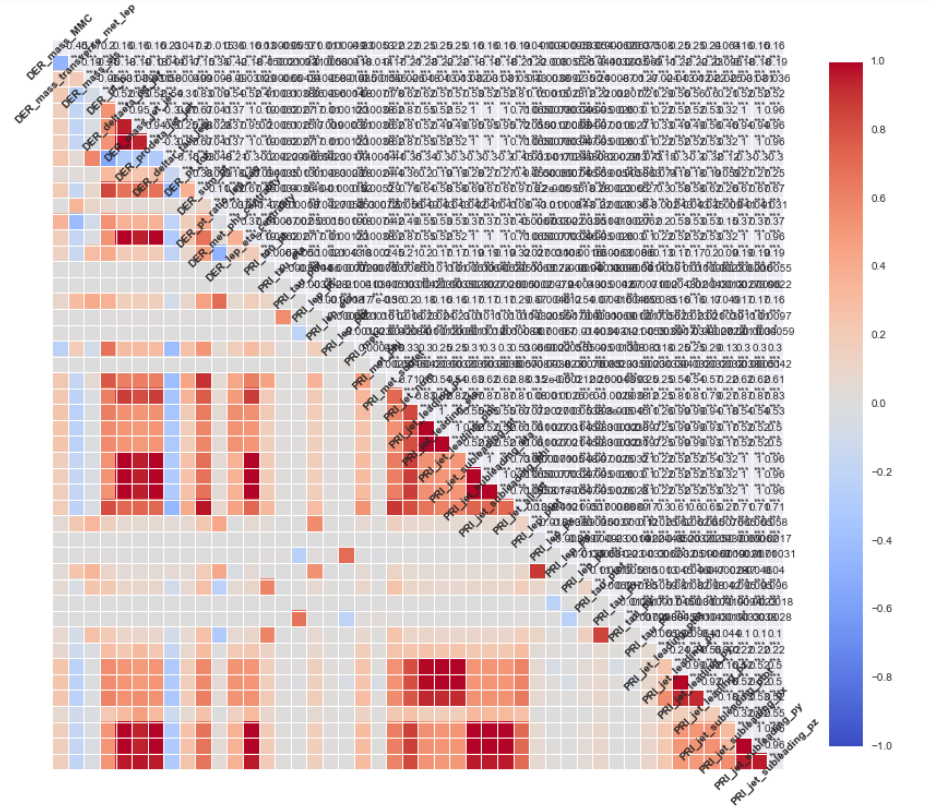

Variables are indicated as “may be undefined” when they can be meaningless or impossible to compute. In this case, their value is −999.0, which is outside the normal range of all variables. Both background and signal events have a large share of missing values: seven features are missing in up to 70% of events, and three features are missing in up to 40%. We treated the missing values as NA in the tree-based models and imputed them for the dimension-reduction models.
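As a minimal sketch of the two treatments described above, the snippet below first turns the −999.0 sentinel into NaN (the NA treatment for tree-based models) and then fills the gaps for models that cannot handle them. Mean imputation is an assumption made for illustration, since the imputation method we actually used is not stated here, and the function names and toy rows are hypothetical.

```python
import math

MISSING = -999.0  # sentinel the dataset uses for undefined values


def to_nan(rows):
    """Replace the -999.0 sentinel with NaN so it is treated as missing."""
    return [[math.nan if v == MISSING else v for v in row] for row in rows]


def missing_fraction(rows):
    """Fraction of NaN entries in each column."""
    return [sum(math.isnan(v) for v in col) / len(col) for col in zip(*rows)]


def impute_mean(rows):
    """Fill NaN entries with the column mean (an assumed, simple strategy)."""
    means = []
    for col in zip(*rows):
        present = [v for v in col if not math.isnan(v)]
        means.append(sum(present) / len(present) if present else 0.0)
    return [[means[j] if math.isnan(v) else v for j, v in enumerate(row)]
            for row in rows]


# Toy rows standing in for three feature columns.
raw = [
    [125.0, -999.0, 2.0],
    [-999.0, -999.0, 4.0],
    [115.0, 1.5, 6.0],
    [120.0, -999.0, 8.0],
]
with_nan = to_nan(raw)        # input for the tree-based models
filled = impute_mean(with_nan)  # input for PCA-style models
print(missing_fraction(with_nan))
print(filled[1])
```

Checking the missing fraction per column first is a cheap way to see which features are dominated by imputed values before feeding them to PCA.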

The number of jets (PRI_jet_num) takes only 4 values (0, 1, 2, 3) and can therefore be treated as a categorical variable with 4 categories.
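One common way to treat such a variable as categorical is one-hot encoding. The sketch below is an illustration only; the helper name `one_hot_jet_num` is hypothetical, and a factor column in the modeling library would work equally well.

```python
JET_LEVELS = (0, 1, 2, 3)  # the four values PRI_jet_num can take


def one_hot_jet_num(jet_num):
    """Encode a jet count as four 0/1 indicator columns, one per level."""
    if jet_num not in JET_LEVELS:
        raise ValueError(f"unexpected PRI_jet_num value: {jet_num!r}")
    return [1 if jet_num == level else 0 for level in JET_LEVELS]


encoded = [one_hot_jet_num(n) for n in [0, 2, 3, 1]]
print(encoded)
```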

From a physics point of view, the cross-section for Higgs production depends strongly on the energy, as it does for other particles. It is therefore important to keep all the energy-related features, such as momentum. We added 16 momentum features to our model to leverage this sensitivity to the energy. The azimuth angles carry less information, but we kept them in our study.
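The exact definition of the 16 momentum features is not spelled out here. One plausible reading, shown below purely as an assumption, is to convert the transverse momentum, pseudorapidity and azimuth angle of each of four objects (tau, lepton, leading jet, subleading jet) into Cartesian components plus the total momentum, which gives 4 × 4 = 16 values. The function name `cartesian_momentum` is hypothetical.

```python
import math

MISSING = -999.0  # sentinel for undefined values (e.g. no jet in the event)


def cartesian_momentum(pt, eta, phi):
    """Return (px, py, pz, |p|) from transverse momentum, pseudorapidity, azimuth.

    Standard collider kinematics: px = pt*cos(phi), py = pt*sin(phi),
    pz = pt*sinh(eta), |p| = pt*cosh(eta).
    """
    if MISSING in (pt, eta, phi):
        # Propagate missingness instead of computing on the sentinel.
        return (math.nan, math.nan, math.nan, math.nan)
    px = pt * math.cos(phi)
    py = pt * math.sin(phi)
    pz = pt * math.sinh(eta)
    p = pt * math.cosh(eta)
    return (px, py, pz, p)


# Example values in the style of a (pt, eta, phi) triple for one object.
px, py, pz, p = cartesian_momentum(30.0, 1.2, -0.7)
print(px, py, pz, p)
```

Applying the function to each object's `(pt, eta, phi)` triple in a row yields the new feature columns; rows where the object is undefined stay missing.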

Logistic Regression with PCA

We used principal component analysis (PCA) to reduce the dimensionality, so that we retain most of the information in the features while eliminating correlations between variables. From the 42 variables we have, we reduced the dimension to 15 synthetic variables. The next step was to express the given variables in terms of the newly created principal components and train a logistic regression on them. We used 5-fold cross-validation, repeated 5 times, on the sample data. Note that the metric optimized in this model is accuracy; we did not tune the model in terms of AMS (approximate median significance, the competition metric). The AMS of the submission on the final test data is about 1.61.
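The pipeline described above can be sketched as follows. This is an illustration using scikit-learn on synthetic data, not the code behind the reported score: the library choice, the mean imputation, the synthetic `X` and `y`, and the random seeds are all assumptions. Only the 42 inputs, the 15 components, and the 5-fold cross-validation repeated 5 times come from the text.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the 42 engineered features (assumption).
rng = np.random.default_rng(0)
X = rng.normal(size=(400, 42))
y = (X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.5, size=400) > 0).astype(int)
X[rng.random(X.shape) < 0.1] = np.nan  # mimic undefined values

# Impute and scale inside the pipeline so each CV fold is fitted
# only on its own training part, then project onto 15 components.
model = make_pipeline(
    SimpleImputer(strategy="mean"),
    StandardScaler(),
    PCA(n_components=15),
    LogisticRegression(max_iter=1000),
)

# 5-fold cross-validation repeated 5 times, scored on accuracy.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=5, random_state=0)
scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
print(len(scores), scores.mean())
```

Keeping imputation and scaling inside the pipeline avoids leaking information from the validation fold into the PCA fit.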

Takeaways from this model:

- For PCA, data imputation and scaling are very important. We need to validate our imputation and scaling.

- In terms of model tuning, we should have used AMS. Accuracy is not the same as AMS.

- Cross-validation could have been done on a larger scale for this model, but the running time was fairly slow.
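For reference, the competition metric is the approximate median significance, AMS = sqrt(2((s + b + b_r) ln(1 + s / (b + b_r)) − s)), where s and b are the weighted sums of true signal and true background events that the model labels as signal, and b_r = 10 is a regularization term. The sketch below computes it; the helper names and the toy labels and weights are hypothetical.

```python
import math


def ams(s, b, b_reg=10.0):
    """Approximate median significance for weighted signal s and background b."""
    total_b = b + b_reg
    inner = (s + total_b) * math.log1p(s / total_b) - s
    return math.sqrt(2.0 * max(0.0, inner))  # guard against tiny negative rounding


def ams_from_predictions(y_true, y_pred, weights):
    """AMS of hard 0/1 predictions; only events predicted as signal count."""
    s = sum(w for t, p, w in zip(y_true, y_pred, weights) if p == 1 and t == 1)
    b = sum(w for t, p, w in zip(y_true, y_pred, weights) if p == 1 and t == 0)
    return ams(s, b)


# Toy example: accuracy is 50%, but AMS depends on the event weights.
y_true = [1, 1, 0, 0]
y_pred = [1, 0, 1, 0]
weights = [2.0, 2.0, 5.0, 5.0]
print(ams_from_predictions(y_true, y_pred, weights))
```

Because only events predicted as signal enter s and b, and each event carries its own weight, a model can gain accuracy while losing AMS, which is why tuning on accuracy is not enough.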

Random Forest

We used a random forest as our second model. In this attempt we tried to fix the mistakes we made with the previous model. The threshold is the key in this dataset: because the dataset is not a perfect 50-50 balance of signal and background, the probability threshold used to assign events to signal or background must be tuned to obtain a higher AMS. Below is the importance of features ranked by the random forest model.

After tuning to 500 trees and an mtry of 7, and tuning the threshold manually, we achieved an AMS of 2.68. Note that because of the running time, we only ran the model on 10% of the training data. To get a better result, we should expand the training size and the cross-validation.

Gradient Boosting Model

The third model we trained was a gradient boosting model with 500 trees, a learning rate of 0.1, and an interaction depth of 10. We performed 2-fold cross-validation twice on the entire training data. After doing some research, we applied a cutoff to the predicted probabilities and labeled the upper 14% of events as signal. We optimized this threshold to maximize the AMS using a testing grid; after running several tests, the best cutoff was the top 14% (0.86).
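The cutoff search can be sketched as below: rank events by predicted score, label the top fraction as signal, and scan a grid of fractions for the highest AMS. The synthetic scores, labels, weights, grid and function names are all assumptions for illustration, so the fraction this toy data prefers need not be 14%.

```python
import math
import random


def ams(s, b, b_reg=10.0):
    """Approximate median significance with regularization term b_reg."""
    total_b = b + b_reg
    return math.sqrt(2.0 * max(0.0, (s + total_b) * math.log1p(s / total_b) - s))


def top_fraction_threshold(scores, fraction):
    """Score such that about `fraction` of events score at or above it."""
    ranked = sorted(scores, reverse=True)
    k = max(1, round(fraction * len(ranked)))
    return ranked[k - 1]


def ams_at_fraction(scores, labels, weights, fraction):
    """AMS when the top `fraction` of events (by score) is called signal."""
    thr = top_fraction_threshold(scores, fraction)
    s = sum(w for sc, t, w in zip(scores, labels, weights) if sc >= thr and t == 1)
    b = sum(w for sc, t, w in zip(scores, labels, weights) if sc >= thr and t == 0)
    return ams(s, b)


def best_fraction(scores, labels, weights, grid):
    """Grid search over candidate fractions, maximizing AMS."""
    return max(grid, key=lambda f: ams_at_fraction(scores, labels, weights, f))


# Synthetic validation set (assumption): signal tends to score higher
# and carries a much smaller weight than background.
random.seed(0)
labels = [1 if random.random() < 0.3 else 0 for _ in range(2000)]
scores = [random.gauss(0.7 if t else 0.3, 0.2) for t in labels]
weights = [0.01 if t else 1.0 for t in labels]

grid = [i / 100 for i in range(5, 41)]  # fractions from 5% to 40%
best = best_fraction(scores, labels, weights, grid)
print(best, ams_at_fraction(scores, labels, weights, best))
```

Searching over a fraction of events rather than a raw probability makes the cutoff transfer to the test set even if the score distribution shifts slightly.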

Using GBM, we achieved an AMS of 3.51, ranked 732 on Kaggle. We could have used more cross-validation folds and a finer grid to achieve a better score.

Neural Networks

Neural networks have been widely applied in many industries, including stock market prediction, credit rating, fraud detection, property appraisal, and medical diagnosis. In the Higgs boson data, actual Higgs boson events are much rarer than background events. The data shares this characteristic with fraud detection, where multilayer neural networks have been applied successfully. Given our limited computational resources, we considered only a single hidden layer and used the number of neurons as the tuning variable. As inputs, we used the 20 principal components selected from the scree plot of the PCA. We split our training data into 80 percent for training and 20 percent for validation. The single-layer network reaches about 80 percent prediction accuracy on the training set, nearly the same as on the validation set, which indicates no overfitting. The prediction accuracy is almost unchanged once the number of neurons is above 4. For the AMS measure, however, the validation result (around 1.2) is much worse than the training result (2.3). To improve the learning substantially, adaptive boosting could be applied to the single-layer neural network to increase the weight given to the rare events. This could be beneficial when one faces limited computational resources.

XGBoost

XGBoost is an implementation of gradient boosting: the training error of the previous step is used to train the next tree. This mechanism makes it very powerful at finding a good solution. Cross-validation was used to tune the parameters. The optimized parameters are a maximum tree depth of 4, 170 iterations, and a learning rate of 0.1. The AUC is 0.94 and the AMS is 3.60, with a Kaggle rank of 584. The best AMS from cross-validation is 3.66.
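To illustrate the idea that each tree is trained on the error left by the previous steps, here is a toy boosting loop in plain Python. It is a simplified sketch, not XGBoost itself: it uses one-split regression stumps, squared loss and a single feature, and omits the regularization and second-order terms that XGBoost adds. The function names and toy data are hypothetical; the 170 rounds and 0.1 learning rate echo the tuned values above only for flavor.

```python
import math


def fit_stump(xs, residuals):
    """Least-squares regression stump (single split) on a 1-D feature."""
    best = None
    for split in sorted(set(xs))[1:]:  # every split leaves both sides non-empty
        left = [r for x, r in zip(xs, residuals) if x < split]
        right = [r for x, r in zip(xs, residuals) if x >= split]
        lmean = sum(left) / len(left)
        rmean = sum(right) / len(right)
        sse = (sum((r - lmean) ** 2 for r in left)
               + sum((r - rmean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, split, lmean, rmean)
    _, split, lmean, rmean = best
    return lambda x: lmean if x < split else rmean


def boost(xs, ys, n_rounds=170, learning_rate=0.1):
    """Fit stumps sequentially, each one to the current residuals."""
    base = sum(ys) / len(ys)
    stumps, history = [], []
    preds = [base] * len(xs)
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]  # error of the last step
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for p, x in zip(preds, xs)]
        history.append(sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys))

    def predict(x):
        return base + learning_rate * sum(s(x) for s in stumps)

    return predict, history


xs = [i / 10 for i in range(20)]
ys = [math.sin(x) for x in xs]
model, history = boost(xs, ys)
print(history[0], history[-1])  # training error before and after boosting
```

The learning rate shrinks each stump's contribution, which is why many small steps (here 170) are needed and why the number of rounds and the learning rate are tuned together.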

Conclusion

Why do we care about Higgs bosons? Familiar bosonic particles such as photons and phonons are massless. The interesting aspect of bosons is their exchange symmetry, which enhances the probability of observing another bosonic particle when a boson already exists. The Higgs boson is special because it is a boson with mass, originating from the Higgs mechanism of the Standard Model. The Higgs mechanism provides an explanation for the origin of the mass of fundamental particles.

Our attempt to predict the signal using the given features and various models gave us a chance to examine the pros and cons of different models. As a last step, we would like to use stacking and ensembling to combine the results of several models.