How Many Data Science Jobs Are There? A Scrape of Glassdoor

Abstract

Once we all graduate from the NYC Data Science Immersive Program, we will have become data scientists. We will have knowledge of advanced machine learning algorithms, natural language processing, and how to analyze and visualize large data sets. But then what? We should probably get a job in that field. Although people say that the data science field is really ‘hot’ right now, what does this mean in terms of job prospects? How many jobs are offered to data scientists and how much do they earn? With these questions in mind, I decided to scrape data science job postings from Glassdoor.

The Questions

When I first started this project, there were three big overarching questions:

- How many jobs are there for data scientists?

- How much do data scientists make?

- What programs do data scientists need to know in order to get hired?

Since these three questions are so broad, many sub-questions arise that could affect my analysis. Some of the factors that I wanted to control for were:

- Education level of the candidate

- Skills companies were looking for when hiring

- The industry the company was in

- The size of the company

- The location of the job postings, based on state as well as city

- The cost of living for that city in comparison to the estimated salary of the posting

- The rating of the company

- The type of company

After writing down a checklist of questions and potential avenues to explore, I was ready to scrape.

The Scraping Process

The Planning

Using Scrapy, my plan was to look up every Data Science job posting on Glassdoor. After viewing the posting, I would be able to retrieve information such as the job title, the company’s name, the estimated salary of the posting, and the description of the job (which includes the programs needed and educational requirements). I would also be able to get information about the company, such as the industry it was in and its size.

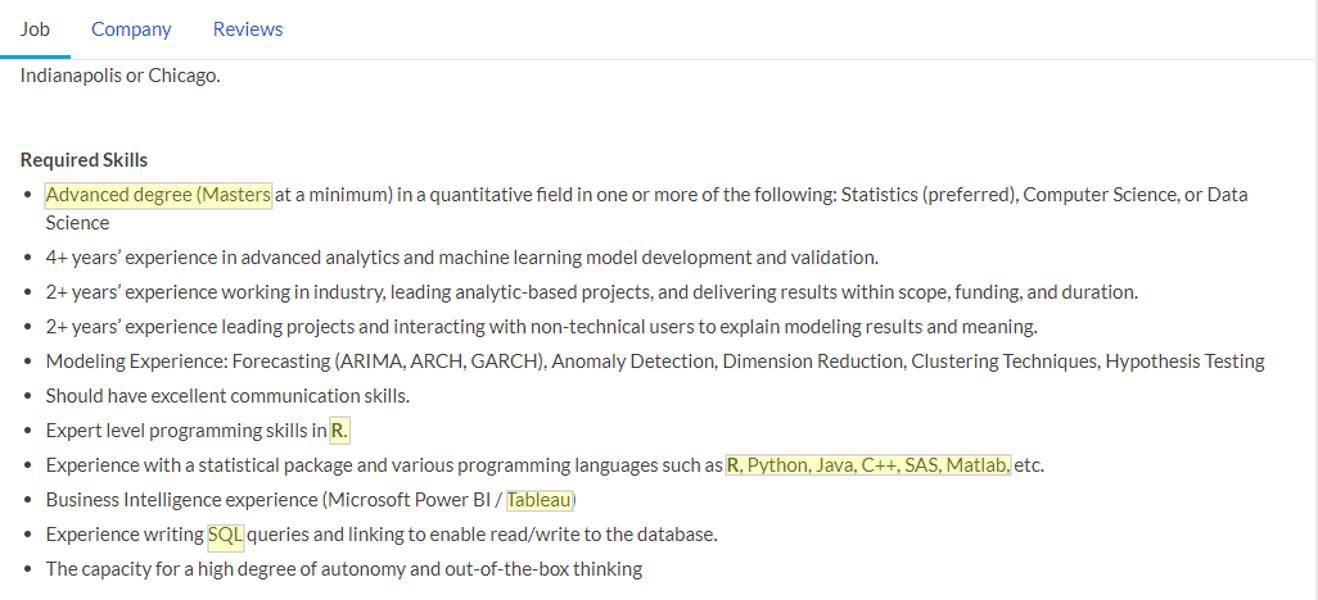

Images showing the relevant information that I would scrape (highlighted) from the job posting and the company description pages respectively.

The data collection seemed like it was going to be relatively straightforward, but there were a few unforeseen problems that I encountered.

The Hiccups With Scraping

Encountering JavaScript

One issue arose when I attempted to retrieve information about the company. Even though the information appeared in the page’s source code, which is what Scrapy reads to gather data, my spider was unable to scrape it. While debugging, I found that the website uses JavaScript, which Scrapy does not execute, to load the company information from another link.

A picture of what was being “seen” by Scrapy, due to the data being called by JavaScript. The company information is missing.

After learning this, I searched through the network requests being transmitted in hopes of finding the source of the JavaScript data. I ended up finding a promising request titled “companyOverview”, which contained a request URL. That URL, which was the same for every posting except for a string of numbers (the company ID), returned all the information needed for that company.

The network file that Glassdoor uses to see which website to call to present company information.


The URL and the page containing the company’s information.


Once I found this separate page which hosted the missing data, I was able to scrape the company information successfully.
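The idea of reusing one URL with a different company ID each time can be sketched as follows. This is a minimal illustration only: the `example.com` address, the `employerId` parameter name, and both helper functions are assumptions, since the actual request URL is not reproduced in this post.

```python
import re

# Hypothetical URL template. The real endpoint is not given in this post;
# only the idea (same URL, different numeric company ID) comes from the text.
OVERVIEW_TEMPLATE = "https://example.com/companyOverview?employerId={employer_id}"


def extract_employer_id(request_url):
    """Return the numeric company ID found in a request URL, or None."""
    match = re.search(r"employerId=(\d+)", request_url)
    return match.group(1) if match else None


def overview_url(employer_id):
    """Build the overview URL for one company from its ID."""
    return OVERVIEW_TEMPLATE.format(employer_id=employer_id)


# A made-up request URL of the kind seen in the browser's network tab.
sample = "https://example.com/companyOverview?employerId=12345&foo=bar"
company_id = extract_employer_id(sample)
print(overview_url(company_id))
```

In a spider, the resulting URL would be requested as a second page for each posting, so the company details can be parsed from plain HTML rather than from JavaScript.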

“Page 31”

Another problem I encountered was a strange error where my code would break whenever it reached the 31st page of Glassdoor results. My initial thought was that Glassdoor had recognized that I was scraping its website and banned me. I soon concluded that this was not the case, since I was still able to access the site. As I investigated the problem, I learned that Glassdoor only provides the first 30 pages of results to its viewers. Even though it states “30 out of 731 pages”, there is no 31st page (or any page past 30).

Despite Glassdoor stating that it had 791 pages of listings…

...I would get an error for any page past 30.

In order to gather enough data, I decided to search 16 different cities across the US and collect all the data science job postings located in those areas. I also discovered a way for my spider to move to the next page programmatically, by decoding what each part of the URL meant.

The formula for the URL to search through all the listings for all of my cities.
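Generating the search URLs for every city and page might look roughly like the sketch below. The URL template and the city slugs are hypothetical placeholders, not Glassdoor's real format; only the 30-page limit and the per-city approach come from the text above.

```python
MAX_PAGES = 30  # no results were served past page 30

# Hypothetical template and city slugs, for illustration only.
SEARCH_TEMPLATE = "https://example.com/jobs/{city}-data-scientist-jobs_page{page}.htm"
CITIES = ["new-york", "san-francisco", "chicago"]


def search_urls(cities, max_pages=MAX_PAGES):
    """Yield one search URL per (city, page) pair, pages 1 through max_pages."""
    for city in cities:
        for page in range(1, max_pages + 1):
            yield SEARCH_TEMPLATE.format(city=city, page=page)


urls = list(search_urls(CITIES))
print(len(urls))   # number of pages the spider would visit
print(urls[0])
```

A Scrapy spider could use a generator like this to populate its start URLs, which avoids ever requesting the nonexistent 31st page.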

After overcoming these two obstacles, I was able to scrape all the necessary information from Glassdoor.

Extracting Description Data

Once my scrape was finished, I still had one final task to do before I could run my analysis. I designed my spider to capture all of the information listed in the description and convert it into one long string for every job posting. I still needed to extract the important pieces of information, such as the necessary skills and minimum education levels, from the job description.

The parts of the description that we still need to extract

In order to get all the relevant information, I used regular expressions. I couldn’t simply tokenize the words in the description because there were multiple ways a skill or requirement could have been listed. For example, a job stating a minimum of a master’s degree could say “MA”, “Masters Degree”, or “Graduate degree”. If I only tokenized the words in the description, I would severely underestimate the number of jobs that require a master’s degree. I also had to make sure I wasn’t getting false positives from sentences containing the word “master” (e.g.: candidates need a mastery in financial modeling). I did this for the various programs that a data scientist might use as well as for the different educational levels required for data science jobs.

Once all my data was extracted and cleaned, I started my analysis and visualization.
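As a rough illustration of this kind of matching, here is a minimal sketch for the master’s degree case. The patterns and the `requires_masters` helper are my own assumptions for this example, not the exact expressions used in the project, and they would still produce some false positives (for instance “MS Excel”).

```python
import re

# Case-insensitive phrases: "Masters Degree", "Master's", "Graduate degree".
# The \b anchors keep "mastery" and "undergraduate degree" from matching.
MASTERS_WORDS = re.compile(
    r"\bmaster(?:['’]?s)?\s+degree\b|\bmaster['’]?s\b|\bgraduate\s+degree\b",
    re.IGNORECASE,
)

# Case-sensitive abbreviations: "MA", "M.A.", "MS", "M.S.", "MSc".
# The lookahead stops "MATLAB" from being read as "MA".
MASTERS_ABBREV = re.compile(r"\b(?:M\.?A\.?|M\.?S\.?|M\.?Sc\.?)(?!\w)")


def requires_masters(description):
    """Return True if the description appears to mention a master's degree."""
    return bool(
        MASTERS_WORDS.search(description) or MASTERS_ABBREV.search(description)
    )


for text in [
    "Masters Degree in Statistics required",
    "MA or PhD preferred",
    "Candidates need a mastery in financial modeling",
]:
    print(text, "->", requires_masters(text))
```

Keeping the abbreviations in a separate, case-sensitive pattern is a deliberate choice here: lowercase “ma” or “ms” inside ordinary words should not count as a degree.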

The Visualizations

The first visualization I wanted to see was a correlation plot between the variables I scraped from Glassdoor and the posted salary.

Figure 1: A Variable Correlation Matrix

Most skills appeared to be associated with a mild to moderate increase in estimated salary, with Python showing the strongest association. Job postings requesting Excel appeared to be lower paying, having a negative correlation with the salary estimate. Salary_high and salary_low are almost perfectly correlated with estimated salary because the estimated salary on Glassdoor was generally the mean of those two figures. This does not mean that all Excel jobs are lower paying, only that the linear relationship between these two variables is negative. Further analysis would be needed to uncover the true relationship between all of the variables.

The interactive graphs below were created with the Python library Plotly.
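To make the correlation idea concrete, here is a small sketch that computes a Pearson correlation by hand between a 0/1 skill indicator and the estimated salary. All of the numbers are made up for illustration and are not taken from the scraped data; the `pearson` helper is likewise just a plain-Python stand-in for whatever library call was actually used.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical postings (salaries in thousands of dollars).
salary_low = [80, 90, 100, 70, 110]
salary_high = [120, 130, 150, 100, 160]
# Estimated salary taken as the midpoint of the posted range.
salary_est = [(lo + hi) / 2 for lo, hi in zip(salary_low, salary_high)]
mentions_excel = [1, 0, 0, 1, 0]  # 1 if the posting asks for Excel

print(pearson(mentions_excel, salary_est))  # negative in this toy data
print(pearson(salary_low, salary_est))      # close to 1
```

Because the estimate is built from the low and high figures, its strong correlation with them is expected and says nothing about what drives pay.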

Figure 2: Number of Job Postings in Comparison to Required Educational Level.

In this graph, I compared the number of job postings against the required educational level for each posting. Note that jobs which state two different educational requirements (e.g.: “this position is open to people with a bachelors or a masters”) are counted twice. I believe the reason MBA listings are so low is that most jobs state that applicants need a master’s or graduate degree in a relevant field, such as economics or finance.

Figure 3: Salary Range of Job Postings in Comparison to Required Educational Level.

I measured the salary range for each of the job postings and compared it to the required educational level for the job postings. The further the applicant’s education goes, the higher the median and mean salaries are. That said, even though the salary for a graduate degree is significantly higher than for an undergraduate degree, we must factor in the opportunity cost of the years spent furthering the applicant’s education instead of earning an income. I also tested the difference in pay between educational levels with an ANOVA and found the results to be significant.
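For readers unfamiliar with ANOVA, the F statistic behind that test can be sketched in a few lines. The salary figures below are invented for illustration only, and `one_way_anova_f` is a hand-written helper rather than the code used for the actual test; it returns the F statistic but not the p-value.

```python
def one_way_anova_f(groups):
    """Return the one-way ANOVA F statistic for a list of numeric groups."""
    all_values = [v for g in groups for v in g]
    n = len(all_values)          # total number of observations
    k = len(groups)              # number of groups
    grand_mean = sum(all_values) / n
    group_means = [sum(g) / len(g) for g in groups]

    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variation of the observations around their own group mean.
    ss_within = sum(
        (v - m) ** 2 for g, m in zip(groups, group_means) for v in g
    )
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical salaries (in thousands) by required education level.
bachelors = [85, 90, 95]
masters = [100, 105, 110]
phd = [120, 125, 130]
print(one_way_anova_f([bachelors, masters, phd]))
```

A large F value means the differences between group means are large relative to the spread within each group. In practice, a library routine such as `scipy.stats.f_oneway` also reports the p-value needed to judge significance.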

Figure 4: Number of Job Postings in Comparison to Required Skills.

Another thing I wanted to know was what skills were needed in order to get a job as a data scientist. In our program we talked about the importance of SQL, and it shows in this graph. It is the most desired skill for data scientists, followed very closely by Python. Other languages, such as R and Java, are popular as well, but not to the same extent. This graph shows the number of job postings that ask for a specific skill or program.

Figure 5: Number of Job Opportunities and Median Salary by Industry

As I continued exploring the data, I was curious to see how many jobs were being offered in certain industries and how much they were willing to pay. In the graph above, you can see the median salary for each industry and compare it to the number of jobs being offered in that industry. The average of the industry medians was around $102,000. The highest paying industry was Retail ($115,000), while Information Technology offered the largest number of jobs (with a median of $112,000).

Figure 6: Data Science Salaries Compared to Industry

Although certain industries such as retail and information technology paid well, I was curious to see if they had a large number of outliers, causing the data to be skewed to the right. As you can see, both information technology and retail have similar IQRs (interquartile ranges) and not too many outliers. The mean for retail was also similar to the median, which is consistent with a roughly symmetric, approximately normal salary distribution. This was also true for industries such as finance, insurance, government, food services, and aerospace and defense.

Figure 7: Data Science Salaries Compared to Company’s Size

When analyzing my data, I was curious to see how big the companies hiring data scientists were. I wanted to see how well small startups were paying in comparison to multinational corporations. From my findings, size did not seem to make a major difference in the amount companies are willing to pay for their data science positions.

Figure 8: Data Science Salaries Compared to Company Ratings

After looking at other factors regarding salary, I was curious to see if companies that paid more were more highly rated. The black line is a simple OLS regression estimating the data science salary based on the company’s overall rating. This regression does not have much explanatory power and is mainly used here as a visual reference showing which companies pay more or less than their rating would predict.
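A simple OLS fit of this kind can be sketched without any libraries. The ratings and salaries below are made-up values, and `fit_ols` is a hypothetical helper written for this example; the low R² it prints mirrors the point that rating alone explains little of the variation in pay.

```python
def fit_ols(xs, ys):
    """Fit y = intercept + slope * x by least squares.

    Returns (slope, intercept, r_squared).
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    return slope, intercept, 1 - ss_res / ss_tot


# Hypothetical company ratings (1 to 5) and salaries (in thousands).
ratings = [3.1, 3.5, 3.8, 4.0, 4.2, 4.6]
salaries = [105, 95, 120, 100, 115, 110]

slope, intercept, r_squared = fit_ols(ratings, salaries)
print(slope, intercept, r_squared)

# Positive residual: the company pays more than its rating would predict.
residuals = [y - (intercept + slope * x) for x, y in zip(ratings, salaries)]
```

Points above the fitted line (positive residuals) are the companies paying more than the line predicts for their rating.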

Figure 9: Number of Job Postings in Comparison to States

Although everyone is talking about how Silicon Valley is hiring tons of data scientists, I was curious how many data science positions were offered in other locations in the US. This graph provides a count of the number of jobs posted by state (or district, for Washington, DC). This bar chart should be taken with a grain of salt: because Glassdoor only provided 30 pages of listings, cities had to be specified to retrieve enough data. I will address how I would improve this, given more time, in the Next Steps section of this blog post.

Figure 10: Data Science Salaries Compared to Location

For this graph, I selected the 25 cities that offered the largest number of data science positions. Even though certain locations might offer a lot of data science positions, are they well paying? I plotted the salary ranges for each of the cities in the graph above. As you can see, the two cities with the highest median incomes for data scientists are San Mateo, CA and San Francisco, CA.

Figure 11: The Ratio of Data Science Salaries to Yearly Rent by Location

Although California appeared to pay its data scientists the most, I also knew that these cities could be rather expensive places to live. In order to control for this, I found rent information for each city from Apartmentlist.com. From the website, I collected the median rent for a one-bedroom apartment in each of those cities and divided the estimated salary of each posting by the yearly rent. When you control for the cost of yearly rent, places such as San Mateo, San Francisco, and Palo Alto do not pay the best. Instead, jobs located in cheaper places to live, such as Houston and Chicago, offer better pay relative to rent. Although this is a simplistic control for cost of living, it does illustrate that higher pay does not always translate to more money in your pocket.
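The salary-to-rent ratio described here is simple arithmetic, sketched below. The city names, salaries, and rents are placeholder values chosen for illustration and are not the figures from Apartmentlist.com or Glassdoor.

```python
def salary_to_rent_ratio(annual_salary, monthly_rent):
    """Return annual salary divided by yearly rent (12 months of rent)."""
    return annual_salary / (monthly_rent * 12)


# Hypothetical (annual salary, median monthly one-bedroom rent) per city.
cities = {
    "Expensive City": (130_000, 3_000),
    "Cheaper City": (100_000, 1_200),
}

ratios = {
    name: salary_to_rent_ratio(salary, rent)
    for name, (salary, rent) in cities.items()
}

# Rank cities from the best ratio to the worst.
ranked = sorted(ratios, key=ratios.get, reverse=True)
for name in ranked:
    print(name, round(ratios[name], 2))
```

In this toy data the city with the lower salary comes out ahead once rent is taken into account, which is the same pattern the figure shows.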

Further Exploration and Next Steps

This project is rather interesting to me and I plan on improving it after I graduate from the immersive program. One way I would want to improve my project is to get a larger number of cities represented in my scraping of Glassdoor. I would like to include at least one major city in every state to get a more accurate representation of the number of companies that are hiring data scientists. I would also like my scrape to be updated on a regular basis, since the number of postings changes so frequently in today’s market. I would like to set up a way to automatically scrape Glassdoor (and other job sites such as Indeed and Monster) every two weeks to keep up with the ever-changing demand for data scientists.

I would also improve this project by finding a more accurate way to represent cost of living in each city. Although rent for an apartment is a start, there are many other indicators I would like to use, such as the price of food, transportation, and entertainment. If I can more accurately control for cost of living factors, then I can see which cities offer the highest paying jobs while simultaneously being the cheapest places to live.

Another way I want to improve my project is to create a model predicting the monetary value of each skill a candidate has. For example, the question I would like to answer is how much more valuable a candidate is to a company if they gain mastery over a certain program such as Python or Spark. This would also be very useful to people who are looking for jobs but are unsure of what they should learn to stay competitive in the field. I can envision this project becoming an application, where a user would put in information about themselves (e.g.: their education, the skills that they know, the industry they want to work in), and they would receive output on what their estimated salary should be, along with jobs that require skills they already have. It could also recommend skills that they do not yet have to increase their value to a company, thus increasing their salary.