Big Data in a Small Package - Building a Raspberry Pi Cluster for Hadoop and Spark

Before we go ahead with our installation of Spark, let's confirm that Hadoop is running by checking its web interface.

Since SME1 is set as the cluster NameNode, the Hadoop web interface is available at two URLs: http://SME1:8088 (the YARN ResourceManager) and http://SME1:50070 (the HDFS NameNode).

We can tell that all 5 nodes in our cluster registered correctly as DataNodes. Now, on to Spark!

First, we need to download Spark from the Apache website: http://www-us.apache.org/dist/spark/spark-2.1.1/spark-2.1.1-bin-hadoop2.7.tgz

From our master node (SME5), download the compressed file from the link above with wget:

wget http://www-us.apache.org/dist/spark/spark-2.1.1/spark-2.1.1-bin-hadoop2.7.tgz

Decompress the package with tar:

tar -xvf spark-2.1.1-bin-hadoop2.7.tgz

Then move the newly unpacked directory to /usr/local/spark:

mv spark-2.1.1-bin-hadoop2.7 /usr/local/spark
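The version string shows up in the download URL, the archive name, and the unpacked directory name, so it is easy to mistype in one of them. A minimal sketch that builds all three from a single variable is shown here. The variable names and the `install_spark` helper are my own for illustration, and the function is only defined, not run; the final `mv` may need `sudo` depending on who owns /usr/local.

```shell
# Build every name from one version string so they cannot drift apart.
SPARK_VERSION="2.1.1"
HADOOP_BUILD="hadoop2.7"
SPARK_DIR="spark-${SPARK_VERSION}-bin-${HADOOP_BUILD}"
SPARK_TGZ="${SPARK_DIR}.tgz"
SPARK_URL="http://www-us.apache.org/dist/spark/spark-${SPARK_VERSION}/${SPARK_TGZ}"

# Hypothetical helper: download, unpack, and move Spark into place.
# Each step only runs if the previous one succeeded.
install_spark() {
  wget "$SPARK_URL" &&
  tar -xvf "$SPARK_TGZ" &&
  mv "$SPARK_DIR" /usr/local/spark
}

# Show what would be fetched; call install_spark on the master node to run it.
echo "$SPARK_URL"
```

Running `install_spark` on SME5 would then perform the same three steps as the commands above.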

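Now let's edit /usr/local/spark/conf/spark-env.sh and make some adjustments for our cluster resources. If the file does not exist yet, copy it from the spark-env.sh.template that ships in the same directory.

Also include the following two lines in spark-env.sh: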

export PYSPARK_PYTHON=/usr/bin/python3

export PYSPARK_DRIVER_PYTHON=/usr/bin/ipython
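As a rough sketch of what the finished file might contain, the snippet below appends the two Python settings plus two resource limits. `SPARK_WORKER_MEMORY` and `SPARK_WORKER_CORES` are standard standalone-mode variables, but the values here are placeholders I picked for a small Pi and are not the settings used on this cluster. `CONF_DIR` is also a name of my own; it defaults to a scratch directory so the snippet can be tried safely, and on the cluster it would be set to /usr/local/spark/conf.

```shell
# Scratch directory by default; set CONF_DIR=/usr/local/spark/conf on the cluster.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# Start from the shipped template if it is there and no spark-env.sh exists yet.
if [ -f "$CONF_DIR/spark-env.sh.template" ] && [ ! -f "$CONF_DIR/spark-env.sh" ]; then
  cp "$CONF_DIR/spark-env.sh.template" "$CONF_DIR/spark-env.sh"
fi

# Append the settings. The memory and core values are assumed placeholders.
cat >> "$CONF_DIR/spark-env.sh" <<'EOF'
export PYSPARK_PYTHON=/usr/bin/python3
export PYSPARK_DRIVER_PYTHON=/usr/bin/ipython
# Assumed values, tune to each node's RAM and CPU count
export SPARK_WORKER_MEMORY=512m
export SPARK_WORKER_CORES=2
EOF

cat "$CONF_DIR/spark-env.sh"
```

Leaving some memory free for the operating system and the Hadoop daemons is probably wise on nodes this small.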

Now create a master file in /usr/local/spark/conf/.

Either use nano:

nano /usr/local/spark/conf/master

and add the cluster master node:

SME5

Or use echo:

echo SME5 > /usr/local/spark/conf/master

Now that Spark is configured (aside from the slaves file on the master node), we can copy our Spark installation to the other nodes with the scp command in a bash for loop. For my cluster, I used this command:

for i in 1 2 3 4 5; do scp -r /usr/local/spark/ 'sme'$i:/usr/local/; done;

Note that this may take a while, since it copies the files to each node one at a time. Also, if there are any errors, you may need to use the chown or chmod commands to adjust file ownership or permissions on the target nodes.
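One possible variation on the loop, sketched under the assumption that the nodes are named sme1 through sme5 and that sme5 is the master: it skips the master (which already has the files) and reports any node where the copy fails instead of silently moving on. The `copy_spark` helper is a hypothetical name and is only defined here, not called.

```shell
MASTER="sme5"

# Collect every node except the master.
targets=""
for i in 1 2 3 4 5; do
  host="sme$i"
  if [ "$host" != "$MASTER" ]; then
    targets="$targets $host"
  fi
done
echo "Would copy to:$targets"

# Hypothetical helper: copy the installation to each target and flag failures.
copy_spark() {
  for host in $targets; do
    scp -r /usr/local/spark/ "$host":/usr/local/ ||
      echo "copy to $host failed (check ownership of /usr/local)" >&2
  done
}
```

Calling `copy_spark` from the master then runs the same scp as above for the four remaining nodes.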

Alternatively, you could just download, unpack, move, and edit the Spark configuration files on each node, but who has time for that?

At this point each node in the cluster should have an identical installation of Spark in the /usr/local/spark directory.

On your master node, open the slaves file with nano and list the worker nodes, one hostname per line:

nano /usr/local/spark/conf/slaves

Note: since this is a very low-memory environment, I kept the Spark master node out of the slaves list so that it doesn't double as a worker node, the way the Hadoop NameNode on SME1 doubles as a DataNode.

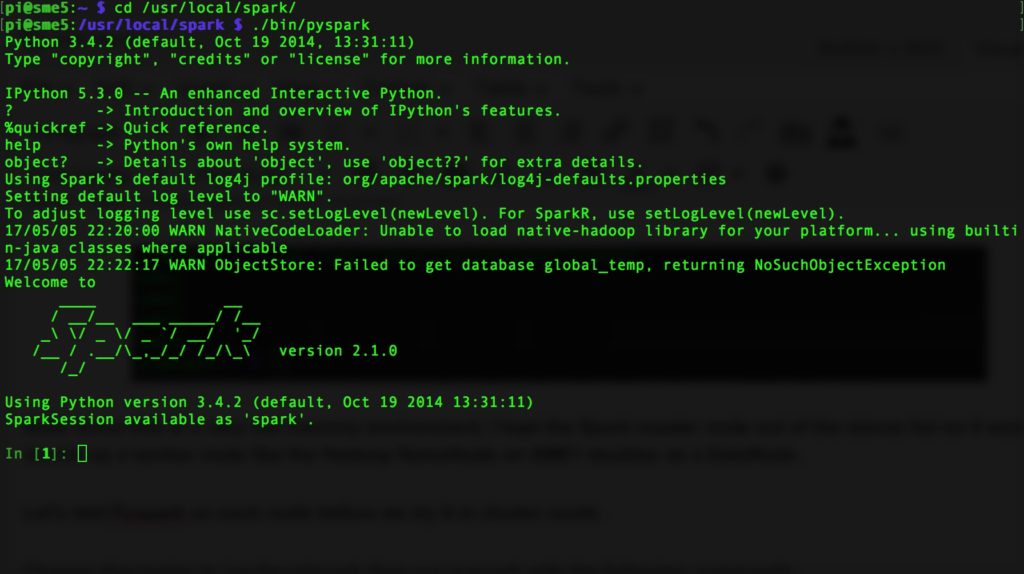
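If you would rather generate the slaves file than type it, a small sketch along these lines should work. It assumes the workers are SME1 through SME4 and the master is SME5, matching the note above. `CONF_DIR` is a name of my own that defaults to a scratch directory; on the cluster it would be /usr/local/spark/conf.

```shell
# Scratch directory by default; set CONF_DIR=/usr/local/spark/conf on the cluster.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"
SLAVES_FILE="$CONF_DIR/slaves"
MASTER="SME5"

# Start with an empty file, then add every node except the master.
: > "$SLAVES_FILE"
for i in 1 2 3 4 5; do
  host="SME$i"
  if [ "$host" != "$MASTER" ]; then
    echo "$host" >> "$SLAVES_FILE"
  fi
done

cat "$SLAVES_FILE"
```

To let the master double as a worker on a cluster with more memory, you would simply drop the hostname check.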

Let's test PySpark on each node before we try it in cluster mode.

Change directories to /usr/local/spark, then run PySpark with the following commands:

cd /usr/local/spark

./bin/pyspark

If everything works, the PySpark shell will start and we'll see the nice Spark logo.

Time to start our Spark cluster! From the master node run:

cd /usr/local/spark

./sbin/start-all.sh

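The Spark web UI should load on http://<master>:8080 (the default port) if everything is working. Port 7077 is not a web page: it is the address workers and applications use to reach the master, written as spark://<master>:7077.

Spark works! Continue to the next page for the final setup of Jupyter notebooks.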
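To check the running cluster from the command line, something like the following sketch could be used. It assumes SME5 is the master and that Spark kept its default ports: 8080 for the master's web UI and 7077 for the spark:// address that shells and jobs connect to. The `check_ui` helper is a hypothetical name and is only defined here, not called.

```shell
MASTER_HOST="SME5"
MASTER_URL="spark://$MASTER_HOST:7077"   # address applications connect to
WEB_UI="http://$MASTER_HOST:8080"        # default standalone master web UI

# Hypothetical helper: succeeds if the given URL answers within 5 seconds.
check_ui() {
  curl -s -o /dev/null --max-time 5 "$1"
}

echo "Master URL: $MASTER_URL"
echo "Web UI:     $WEB_UI"

# On the cluster, from /usr/local/spark:
#   check_ui "$WEB_UI" && ./bin/pyspark --master "$MASTER_URL"
```

Starting the shell with `--master` pointed at the spark:// address is what makes it use the cluster rather than running locally on one node.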

Leave a Comment

Jair June 13, 2017

Hi Scott,

Thanks for posting. As a heads up, "sudo pip3 install ipython3" didn't work for me. However, "sudo pip3 install ipython" seems to work fine.

Jair