Top 9% Open Kaggle Competition - Santander Products Recommendation

Introduction

To support clients across a range of financial decisions, Santander Bank offers its customers personalized product recommendations from time to time. Under the current system, not all customers receive the product recommendations that are right for them. To better meet individual needs and ensure customer satisfaction, this challenge seeks to improve the recommendation system by predicting which products existing customers will use in the next month based on their past behavior. With a precise and robust recommendation system, the bank can maximize its sales. At the same time, the right products can help customers carry out their financial plans.

Data Size

The training data set is about 2.3 GB and has 13,647,409 observations. The test data set has 929,615 observations.

Input Features

Columns 1 to 24 are the input features: 21 categorical features and 3 continuous features. They describe each customer’s demographics and status with the bank. In addition, the observations are in time series format: the data contains each customer’s information from January 2015 to May 2016.

Output Features

Columns 25 to 48 are the output features, which contain the product purchase information for each customer from January 2015 to May 2016. Each column stands for one product, and there are 24 products in total. The goal is to predict which products customers are going to purchase in June 2016, so the task is a multi-class classification problem.

Evaluation

To measure the result, the competition uses Mean Average Precision @ 7 (MAP@7):

$$\mathrm{MAP@7} = \frac{1}{|U|} \sum_{u=1}^{|U|} \frac{1}{\min(m, 7)} \sum_{k=1}^{\min(n, 7)} P(k)$$

Here |U| is the number of users in two time points, P(k) is the precision at cutoff k, n is the number of predicted products, and m is the number of added products for the given user at that time point. The @7 means the evaluation only takes the top 7 products into account, no matter how many products are in the prediction. If the customer does not purchase any product, the precision is defined to be 0.
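As a rough illustration of the metric, the sketch below computes MAP@7 in plain Python. The function names are our own, and the code follows the common convention of adding P(k) only at ranks where the k-th predicted product was actually added, which is an assumption rather than something stated above.

```python
def average_precision_at_k(actual, predicted, k=7):
    """Average precision for one customer.

    actual: products the customer actually added.
    predicted: recommended products, best first.
    """
    actual_set = set(actual)
    if not actual_set:
        # No added products: precision is defined to be 0.
        return 0.0
    hits = 0
    score = 0.0
    seen = set()
    for rank, product in enumerate(predicted[:k], start=1):
        # Count each correct product once, at the rank where it first appears.
        if product in actual_set and product not in seen:
            hits += 1
            score += hits / rank  # P(k) at this cutoff
        seen.add(product)
    return score / min(len(actual_set), k)


def map_at_k(actual_lists, predicted_lists, k=7):
    """Mean of the per-customer average precision."""
    if not actual_lists:
        return 0.0
    total = sum(
        average_precision_at_k(a, p, k)
        for a, p in zip(actual_lists, predicted_lists)
    )
    return total / len(actual_lists)


# Three hypothetical customers: the second one added nothing.
actual = [["a", "b"], [], ["c"]]
predicted = [["a", "x", "b"], ["a"], ["x", "c"]]
score = map_at_k(actual, predicted)  # (5/6 + 0 + 1/2) / 3
```

Because most customers add no product in a given month, their zero contribution keeps the overall score small, which is why differences far down the decimal places matter.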

Workflow

To build and implement a strong model on this complex data within two and a half weeks as a team, we followed the steps below.

- Data Cleaning and Exploratory Data Analysis (EDA): Because of the many missing values and the time series format of the data, data cleaning and EDA were carried out simultaneously. The insights gained from data cleaning and EDA also became the foundation and inspiration for the later feature engineering.

- Feature Engineering and Model Training: Because of the evaluation method, even the seventh decimal place matters for the score. Feature engineering is the part that can have the most impact on the model, so it plays a main role in this project. Instead of building all the features first and then moving on to model training, the two stages also ran simultaneously. The more models we trained, the more insights we found, and the more engineered features we added to improve the result.

- Ensemble and Model Stacking: After getting results from different single models, we tried different combinations for ensembling and stacking to see whether there was any chance to improve the result.

Data Cleaning

Through numeric EDA, we discovered that 24 features in the data set contained missing values. Besides missing values within columns, the data also had missing observations: some customers were missing data for certain months within the overall time range.

After deeper investigation, 5 features were dropped before imputation, either because over 95% of their values were missing or because they repeated information in other features.

Imputation

About 4 kinds of imputation strategy were implemented for this data set. For the features in the ‘Unknown’ column, the missing values were all labeled as ‘unknown’. These features are mostly customers’ demographic information, so to avoid making any assumption, labeling them ‘unknown’ was the only option. For the features in the Common Type column, missing values were imputed with the median of each feature, because those features either describe the customer’s relationship with the bank or are continuous variables.

The remaining features were imputed by a couple of different methods. Apart from the ‘age’ feature, missing values were filled in by treating those observations as new customers. From the EDA, we discovered that these missing values all occurred in the same observations, and within those observations all the account activity was under 6 months old, which is also the benchmark for being a new customer. For the ‘age’ feature, the mean after scaling was used for imputation in order to avoid skewness in the data.

Last but not least, two products had missing values. Because of how the evaluation penalizes false negatives, we assumed that these products had not been purchased yet.
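The imputation rules above can be sketched with pandas roughly as follows. This is a minimal illustration, not our actual cleaning script: the column names (`sex`, `income`, `is_new_customer`, `age`, `payroll`) are hypothetical stand-ins, and the scaling step applied before taking the mean of ‘age’ is not reproduced.

```python
import pandas as pd


def impute(df: pd.DataFrame) -> pd.DataFrame:
    """Apply one example of each imputation rule (hypothetical columns)."""
    out = df.copy()
    # Demographic feature: keep the missingness explicit instead of guessing.
    out["sex"] = out["sex"].fillna("unknown")
    # Continuous feature: fill with the column median.
    out["income"] = out["income"].fillna(out["income"].median())
    # Rows missing the tenure information are treated as new customers.
    out["is_new_customer"] = out["is_new_customer"].fillna(1)
    # Age: fill with the mean (scaling step omitted in this sketch).
    out["age"] = out["age"].fillna(out["age"].mean())
    # Product flag: assume a missing value means "not purchased yet".
    out["payroll"] = out["payroll"].fillna(0).astype(int)
    return out


sample = pd.DataFrame({
    "sex": ["H", None, "V"],
    "income": [50000.0, None, 70000.0],
    "is_new_customer": [0.0, None, 0.0],
    "age": [30.0, None, 50.0],
    "payroll": [1.0, None, 0.0],
})
clean = impute(sample)
```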

EDA

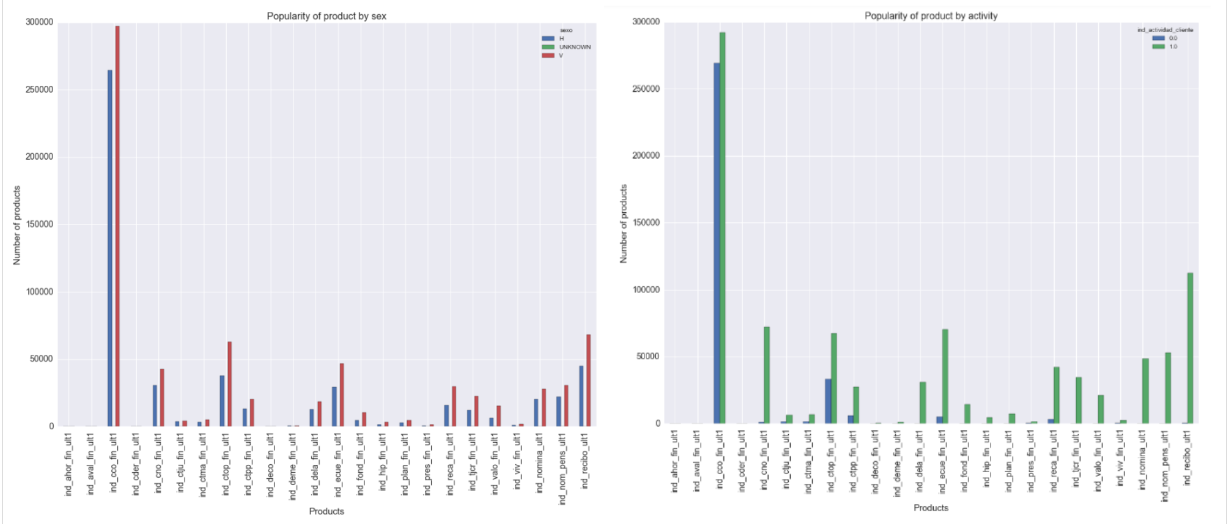

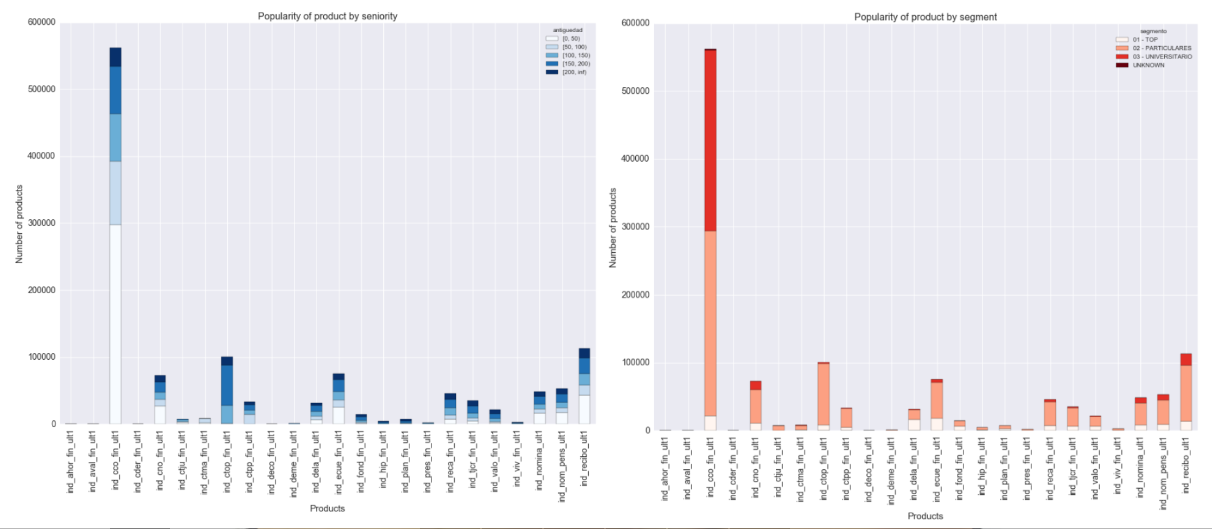

First, we looked at how product ownership related to customers’ demographic information in May 2016. Across all customer segments, the ‘current cash account’ was the dominant product.

Since the data set is in time series format, it was important to look at the trend in the number of customers. As the graph indicates, a large number of new customers appeared in July 2015, and the number kept growing for 4 to 5 months.

When we looked at how many products each customer owned in May 2016, we discovered that some customers no longer owned any product. Most customers owned 1 to 2 products.

The following graphs show which products are most popular among customers who own 1, 2, or 3 products.

Beyond the relationship between products and customers, we also investigated how product sales changed over time. Taking these two products as examples, the first graph indicates almost no sales activity over the last 6 months, while the second shows a product that sold steadily over time.

In addition, we looked at the income distribution by city. The graph shows that income varies across cities, and we later used this information as part of feature engineering.

Feature Engineering

Feature engineering is key in this project. In our model training results, the scores improved each time new useful features were added to the models. The following paragraphs discuss our feature engineering process.

We went through several rounds of feature engineering, which can be divided into 4 stages. In the first stage, the input and output features were encoded from letters to numbers, and only the original features in the data set were used for model training, 22 features in total. Because the data set is too large to fit in a laptop’s memory, a single month’s data was used as the training set. To find the month that gives the best prediction, we tried three approaches to month selection: using the previous month to predict the current month, using a month from the previous year to predict the same month of the current year, and using one month to predict the situation three months later. After trying all the combinations, the pattern between months was not clear, and the MAP@7 scores were poor.

Then, using the same month combinations and adding the previous month’s product information as input features (46 input features in total), model performance improved. We also found that, for this data set, the best way to recommend new products is to train on the same month of the previous year. From then on, the June 2015 data was used as the training set to produce recommendations for June 2016.
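Adding the previous month’s products as input features amounts to a self-join on the customer. The sketch below shows one way to do this with pandas; the column names (`customer_id`, `month`, and the two product columns) are hypothetical, and treating customers with no record in the previous month as owning nothing is our assumption for the example.

```python
import pandas as pd

# Hypothetical product columns; the real data set has 24 of them.
PRODUCTS = ["current_account", "payroll"]


def add_previous_month_products(df, target_month, previous_month):
    """Return the target month's rows with the previous month's products attached."""
    current = df[df["month"] == target_month]
    previous = df[df["month"] == previous_month][["customer_id"] + PRODUCTS]
    previous = previous.rename(columns={p: f"{p}_prev" for p in PRODUCTS})
    merged = current.merge(previous, on="customer_id", how="left")
    prev_cols = [f"{p}_prev" for p in PRODUCTS]
    # Assumption: no record last month means no products last month.
    merged[prev_cols] = merged[prev_cols].fillna(0).astype(int)
    return merged


history = pd.DataFrame({
    "customer_id": [1, 2, 1, 2, 3],
    "month": ["2015-05", "2015-05", "2015-06", "2015-06", "2015-06"],
    "current_account": [1, 0, 1, 1, 1],
    "payroll": [0, 0, 1, 0, 0],
})
train = add_previous_month_products(history, "2015-06", "2015-05")
```

Restricting the frame to one target month before the merge also keeps memory use close to a single month of data.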

In the following steps, we added or dropped features based on time series analysis, k-means clustering, and EDA.

The purpose of our model training is to make the model sensitive to newly added products. A machine learning model cannot be expected to infer what we want it to do, so we need to provide that information directly. Therefore, we created a change feature: the current month’s product information minus the previous month’s product information. This change feature has two levels: “1” represents a newly added product, and “0” represents any other status. The new features were selected based on the results of the time series analysis.
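A minimal sketch of the two-level change feature for a single customer, assuming ownership is stored as 0/1 flags per product (the dictionary layout and product names are illustrative only):

```python
def newly_added(previous, current):
    """Binary change feature: 1 = newly added product, 0 = any other status."""
    return {
        product: 1 if current[product] - previous.get(product, 0) == 1 else 0
        for product in current
    }


# Hypothetical ownership flags for one customer in two consecutive months.
may = {"current_account": 1, "payroll": 0, "pension": 1}
june = {"current_account": 1, "payroll": 1, "pension": 0}

change = newly_added(may, june)
# {'current_account': 0, 'payroll': 1, 'pension': 0}
```

Note that the closed pension account and the unchanged current account both map to 0, so this version only tells the model about additions.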

The change features had a positive effect on the model. To improve it further, we separated the change features back into ‘-1’, ‘0’, and ‘1’, representing closing an account, no change, and opening a new account, respectively. At the same time, 5 products were dropped from the prediction list because the bank no longer sells them. Because the Kaggle scoring penalizes false negatives more heavily, and to avoid missing any prediction, class weights were added to the output features based on product popularity, giving more weight to popular products.
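The three-level change feature and popularity-based class weights could look roughly like the sketch below. The exact weighting formula is not stated above, so scaling each weight by the product’s share of additions (normalized so the weights average to 1) is purely an assumption, as are the product names.

```python
from collections import Counter


def change_level(previous_flag, current_flag):
    """Three-level change: -1 = account closed, 0 = no change, 1 = account opened."""
    return current_flag - previous_flag


def popularity_weights(added_products):
    """Weight each product class by how often it is added.

    Assumed scheme: weight = n_classes * count / total, so the weights
    average to 1 and popular products get the larger values.
    """
    counts = Counter(added_products)
    total = sum(counts.values())
    n_classes = len(counts)
    return {product: n_classes * count / total for product, count in counts.items()}


# Hypothetical list of products added across all training customers.
added = ["current_account"] * 6 + ["payroll"] * 3 + ["pension"] * 1
weights = popularity_weights(added)
```

Weights like these can then be passed per training row to any learner that accepts sample weights.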

The month selection methods used in the first two rounds of feature engineering amount to a manual search for time effects between months. Combining the results of this manual search with the time series results based on the three-level change features, we added product information from more months as new features. Here is an example of how a product’s information for a given month was chosen based on the time series.

This is the change in pension accounts over time. The ADF test shows that this time series is stationary, the result is statistically significant, and the lag number is 4. Accordingly, since the June 2015 data is the training set, the product change information from February 2015 was added as a new feature. However, this data set has irregularities: in months 13 and 14, the chart shows sharp swings. At that time, the bank had about 50,000 pension accounts; in month 13, over 10,000 pension accounts were closed, and in the following month almost 20,000 were newly opened. This also shows that adding change features to the model arbitrarily is not appropriate, because erratic changes in the time series are hard to predict.

More feature engineering took place during model training, and more details are discussed in the following paragraphs.

Models Training

Our baseline model recommends products based on their popularity at the end of May 2016. Multiple modeling algorithms were tested across the rounds of our feature selection process, including XGBoost, Naive Bayes, Random Forest, Neural Networks, AdaBoost, and collaborative filtering. In the last two rounds of feature selection, XGBoost and Random Forest stood out and outperformed the other models.
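A popularity baseline of this kind can be sketched in a few lines. The data layout (a dictionary of customer id to owned products) is hypothetical, and skipping products a customer already owns is our assumption, made because only newly added products count toward the score.

```python
from collections import Counter


def popularity_baseline(ownership, k=7):
    """Recommend the k most widely owned products each customer does not own yet.

    ownership: dict mapping customer id -> set of products owned
    at the end of the last observed month.
    """
    counts = Counter(p for owned in ownership.values() for p in owned)
    ranked = [product for product, _ in counts.most_common()]
    return {
        customer: [p for p in ranked if p not in owned][:k]
        for customer, owned in ownership.items()
    }


# Hypothetical ownership snapshot for three customers.
snapshot = {
    1: {"current_account"},
    2: {"current_account", "payroll"},
    3: {"current_account", "payroll", "pension"},
}
recommendations = popularity_baseline(snapshot)
```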

With the following changes: adding 5 previous months’ account history, adding a marriage index (a combination of age, sex, and income), and removing city and 5 rare products, our XGBoost model scored 0.02996 on the Kaggle leaderboard. The Random Forest model, which scored 0.02946, was the second best among our single models.

Ensemble Models

Multiple ensemble strategies were tested in our modeling process. Voting helped improve the quality of our model in earlier rounds, when we had diverse models of similar quality. Later, when we had only two high-quality but correlated models, voting stopped helping. Stacking strategies were also attempted at later stages using the XGBoost and Random Forest models. However, because our models were highly correlated, neither ensemble strategy produced a higher-scoring model.
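One simple form of voting is to average each model’s per-product scores and recommend the products with the highest averages. The sketch below shows this soft-voting variant for a single customer; it is only an illustration under that assumption, not necessarily the voting scheme we ran, and the scores are made up.

```python
def soft_vote(model_scores, k=7):
    """Average per-product scores across models and return the top k products.

    model_scores: list of dicts (one per model) mapping product -> score
    for a single customer.
    """
    products = set().union(*model_scores)
    averaged = {
        p: sum(scores.get(p, 0.0) for scores in model_scores) / len(model_scores)
        for p in products
    }
    # Sort by descending average score, breaking ties by product name.
    return sorted(averaged, key=lambda p: (-averaged[p], p))[:k]


# Hypothetical scores from two models for one customer.
xgb_scores = {"payroll": 0.6, "pension": 0.3, "card": 0.1}
rf_scores = {"payroll": 0.2, "pension": 0.6, "card": 0.3}

top_two = soft_vote([xgb_scores, rf_scores], k=2)
```

When two models rank products almost identically, the averaged ranking barely differs from either input, which is consistent with voting no longer helping once only correlated models remained.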

Ensemble Models - Stacking Strategy 1

Ensemble Models - Stacking Strategy 2

Insights and Findings

The key to building a good model in this competition is to use June 2015 as the training set, because 5 correlated main account types (nom_pens, nomina, recibo, reca and cno) show seasonal changes. Using June 2015 as the training month and account history from January 2015 to May 2015 enabled us to capture the time series aspect of the data set. Removing 5 rare products (aval, ahor, viv, deme, deco) also helped improve our models: this change alone gave a 4% increase in our Kaggle leaderboard score.

At the end of the competition, we were working on a new strategy to improve our model. The new customers who joined after June 2015 showed different product purchasing behavior from the old customers. We could use their data from July 2015, which was not in our training set, to build separate models for them. Although the “new-customer only” model did not improve the predictions for new customers (Kaggle LB score ~ 0.0297), combining its predictions with those from our XGBoost model trained on old customers could provide better predictions.

Strategy to Improve New Customer Prediction

Final Result

Our best model at the end of the Santander Product Recommendation Kaggle competition was the XGBoost model with the engineered features described above. It scored 0.0299626 on the public leaderboard and 0.0302852 on the private leaderboard, which put us in the top 9% of all participating teams in this competition.

Kaggle Leader Board Standing, top 9% of 1806 participating teams